How SMBs Can Use Gemini Personal Intelligence to Automate Meeting Prep, Email Triage, and Research in 2026

Large Language Models (LLMs)

The Hidden Cost of Upgrading to Claude Opus 4.7: Why Your Token Bill May Jump 35%

Anthropic’s release of Claude Opus 4.7 on April 16, 2026, has been met with both excitement for its performance gains…

AI Tools & Frameworks

How to Enable and Use Gemini Personal Intelligence: A Step-by-Step Setup Guide (2026)

MLOps & AI Engineering

From Raspberry Pi to Data Center: A Technical Guide to Deploying Gemma 4’s Agentic Models

Deploying sophisticated AI agents locally rather than relying on cloud APIs has become the dominant architectural pattern for privacy-conscious and…

All Articles

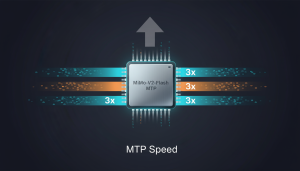

How to 3x Inference Speed with MiMo-V2-Flash’s MTP Module

Deploying large Mixture-of-Experts (MoE) models often leads to high inference costs and latency, creating bottlenecks in production environments. MiMo-V2-Flash’s open-source Multi-Token Prediction (MTP) module addresses...

AI in 2025: From Tools to Teammates – What’s Changed?

As of November 2025, artificial intelligence has undergone a seismic shift—from static tools requiring manual input to dynamic, autonomous agents capable of orchestrating entire workflows....

How to Edit AI Images Without Re-Prompting Using Manus Design View

Are you tired of endlessly re-prompting AI image generators to fix minor details? Manus AI’s revolutionary Design View offers a solution that transforms AI image...

Gemini 3 Flash: Your Guide to Low-Cost, High-Reasoning AI

Developers face a persistent challenge: balancing advanced reasoning capabilities with low-latency performance in AI applications. Gemini 3 Flash, powered by Distillation Pretraining, emerges as a...

MiMo-V2-Flash vs. Mixtral: Which MoE Model Offers Better ROI?

Enterprises face a critical decision when selecting cost-effective Mixture-of-Experts (MoE) models for large-scale AI deployments. Xiaomi’s MiMo-V2-Flash, released in Q4 2024, claims groundbreaking efficiency by...

How to Build Your First Reusable AI Agent Skill

AI agents often struggle with domain-specific tasks due to lack of contextual understanding. The Agent Skills open standard solves this by enabling developers to create...

How to Write an agents.md to Get the Best AI Agent Results

Struggling to get consistent, high-quality results from your AI coding agents? The root cause might be hiding in plain sight: your agents.md file. This critical...

How to Create the Perfect CLAUDE.md for Top Results

Struggling to get consistent, high-quality outputs from Claude Code? The difference between mediocre and exceptional results often comes down to one critical file: your CLAUDE.md...

How to Start Vibe Coding: A Developer’s Guide to AI-Powered Dev

In November 2025, developers face unprecedented demands for speed and quality. Vibe coding—a paradigm where AI handles boilerplate while humans focus on architecture—has emerged as...

How to Train ChatGPT on Your Brand Voice with New Tone Controls

Struggling to maintain brand consistency in AI-generated content? As of November 2025, OpenAI’s ChatGPT introduces powerful tone controls that let marketers and content creators embed...