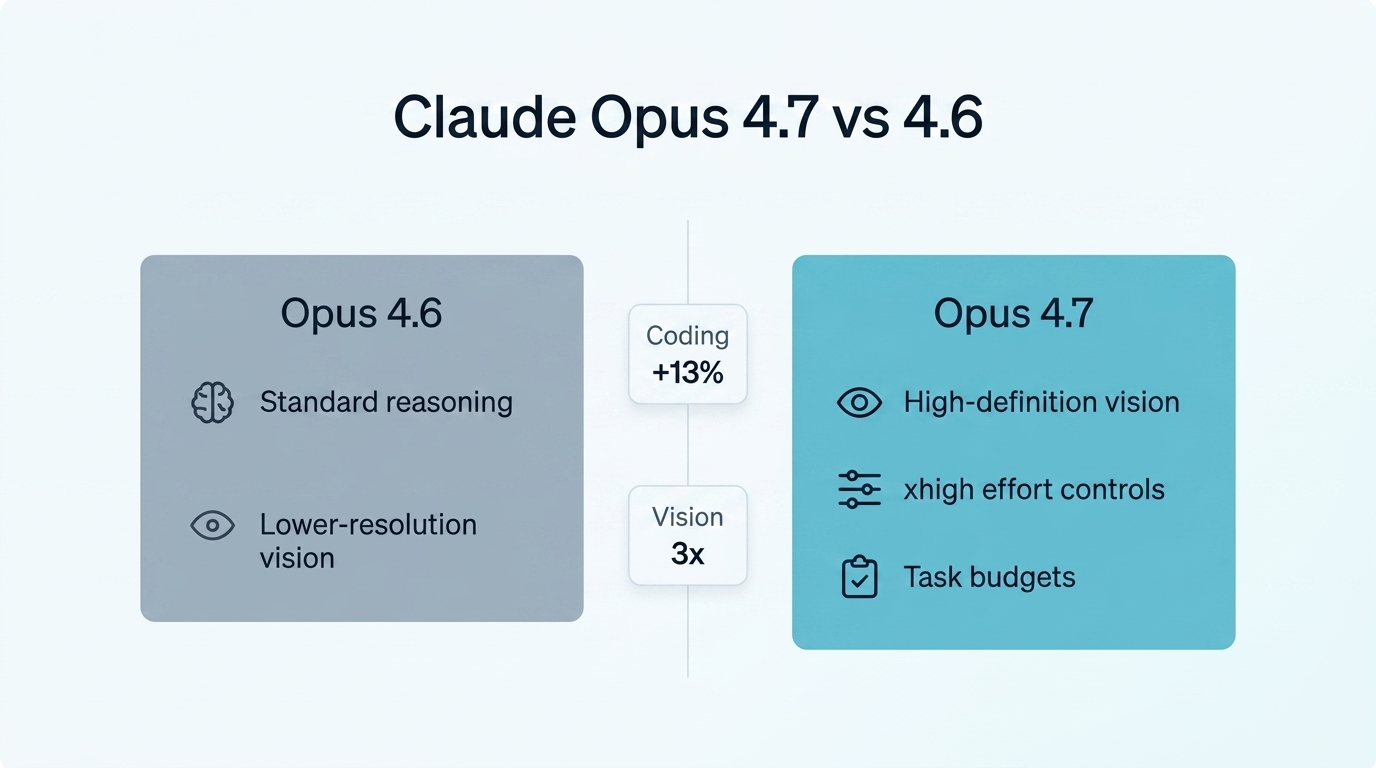

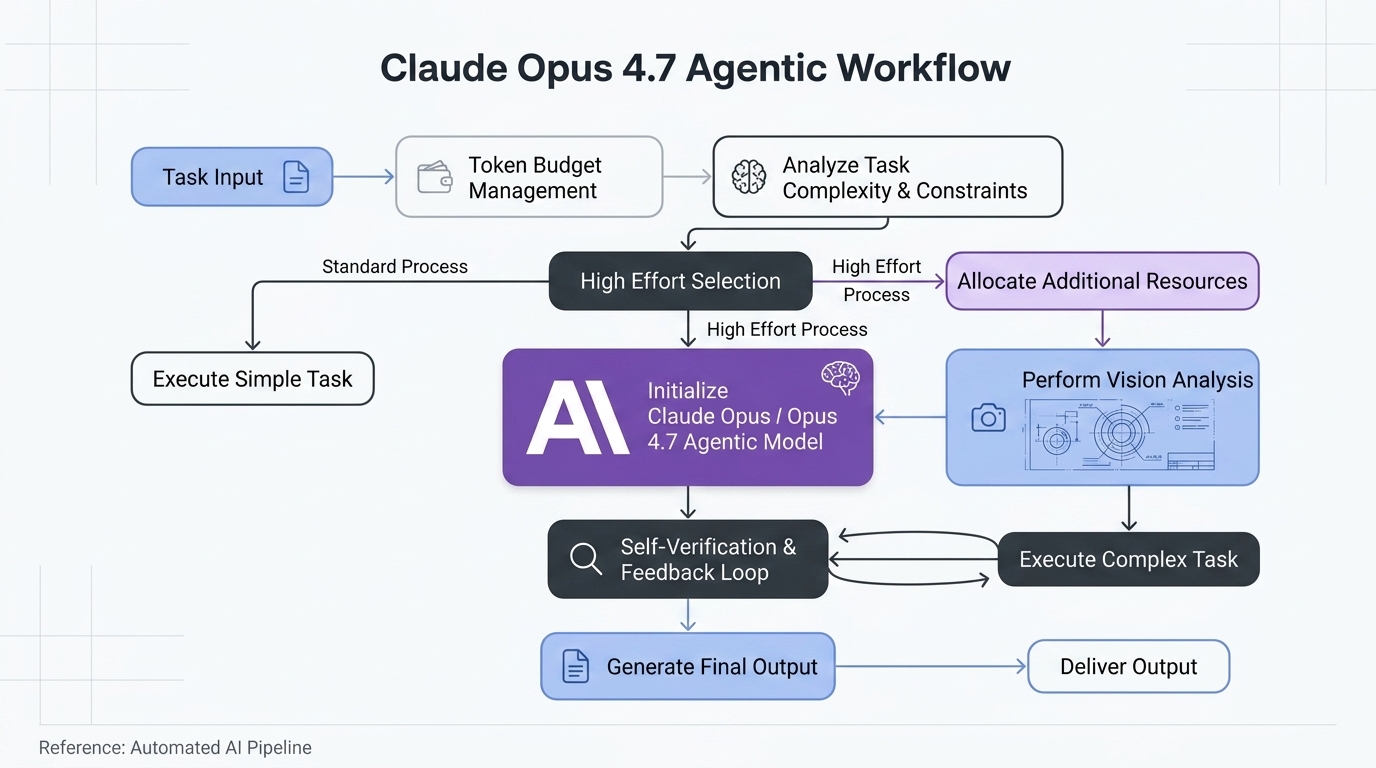

Anthropic officially released Claude Opus 4.7 on April 16, 2026, marking a significant evolutionary step in the Claude 4 architecture. While Opus 4.6 already held a dominant position in long-context reasoning, the 4.7 update focuses on “horizons of autonomy”—the ability for AI to handle multi-hour, multi-step tasks without human intervention. By refining the vision pipeline and introducing granular compute controls like “xhigh” effort and task budgets, Anthropic has optimized Opus 4.7 for professional workflows in coding and the hard sciences. However, this precision comes with architectural shifts that require immediate attention from developers migrating legacy pipelines.

The performance delta: benchmarking the upgrade

The most immediate change in Opus 4.7 is its capability lift in technical domains. Anthropic reported a 13% lift in task resolution on a proprietary 93-task coding benchmark compared with Opus 4.6. The gain is most visible in “long-horizon” tasks, where the model must not only generate code but also devise its own ways to verify it. In early testing by companies such as Replit and Cursor, the model took a more “opinionated” stance, often pushing back on suboptimal user requests rather than simply agreeing. This behavior shift is aimed at reducing technical debt in AI-generated codebases.

| Metric | Claude Opus 4.6 | Claude Opus 4.7 |
|---|---|---|
| Coding Benchmark Lift | Baseline | +13% over 4.6 |
| Max Vision Resolution | ~1.1 MP | 3.75 MP (3x improvement) |
| XBOW Visual Acuity | 54.5% | 98.5% |
| Effort Levels | low, medium, high | low, medium, high, xhigh |
| GDPval-AA Score (Finance) | 0.767 | 0.813 |

1. Threefold vision resolution upgrade

Vision has been the “Achilles’ heel” for many frontier models when dealing with fine-grained technical data. Opus 4.7 addresses this with a massive infrastructure upgrade to its vision pipeline. The model now supports images up to 2,576 pixels on the long edge, roughly 3.75 megapixels. This is more than triple the resolution of Opus 4.6. The impact is most evident in “computer use” benchmarks like XBOW, where the model’s visual acuity jumped from 54.5% to a near-perfect 98.5%. For life sciences and engineering, this enables the model to accurately read chemical structures, complex circuit diagrams, and dense patent filings that were previously too blurry for reliable extraction.
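If you preprocess images client-side, a small helper can compute dimensions that stay within the limits quoted above while preserving aspect ratio. This is a minimal sketch: the 2,576 px long-edge and ~3.75 MP caps are taken from this article, and whether the API enforces both caps (or resizes oversized images itself) is an assumption to check against Anthropic's vision documentation. The function name `fit_within_limits` is hypothetical.

```python
import math

# Limits as reported in this article for Opus 4.7 (assumptions; verify
# against the official vision documentation before relying on them).
MAX_LONG_EDGE_PX = 2576
MAX_PIXELS = 3_750_000


def fit_within_limits(width: int, height: int,
                      max_long_edge: int = MAX_LONG_EDGE_PX,
                      max_pixels: int = MAX_PIXELS) -> tuple[int, int]:
    """Return (width, height) scaled down, preserving aspect ratio, so the
    image satisfies both the long-edge cap and the total-pixel cap.
    Images already within both limits are returned unchanged."""
    if width <= 0 or height <= 0:
        raise ValueError("dimensions must be positive")
    # Scale factor required by the long-edge cap.
    edge_scale = max_long_edge / max(width, height)
    # Scale factor required by the pixel-count cap (area scales with s**2).
    area_scale = math.sqrt(max_pixels / (width * height))
    # Never upscale; take the stricter of the two caps.
    scale = min(1.0, edge_scale, area_scale)
    # Floor so rounding can never push the result over either cap.
    return max(1, math.floor(width * scale)), max(1, math.floor(height * scale))
```

For example, a 5152×2898 scan is limited by its long edge and comes back as 2576×1449, while a 4000×4000 square is limited by the pixel cap instead. The resulting size can then be passed to an image library such as Pillow's `Image.resize` before encoding the file for the request.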

2. New ‘xhigh’ effort and reasoning depth

Anthropic has expanded its “Effort API” by introducing the xhigh (extra high) level. Positioned between high and max, this level allows the model to spend more time “thinking” and planning before committing to an output. In Claude Code, xhigh is now the default for complex PR reviews. This is not just a latency trade-off; it changes the model’s behavior. Early testers at Harvey (legal tech) noted that at xhigh effort, Opus 4.7 correctly distinguishes between complex legal clauses—such as assignment vs. change-of-control provisions—that previously required manual human review.
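Because xhigh trades latency and tokens for depth, one reasonable pattern is to start at a cheaper level and escalate only when a result fails review. The sketch below assumes the level ordering described here (with xhigh between high and max) and a top-level `effort` request key that mirrors this article's own example; both the key name and the helpers `build_request` and `escalate` are hypothetical, not a confirmed SDK signature.

```python
# Effort levels named in this article, ordered from cheapest to deepest.
# "max" is included because xhigh is described as sitting between high and max;
# confirm the exact set your model and SDK version accept.
EFFORT_LEVELS = ("low", "medium", "high", "xhigh", "max")


def build_request(prompt: str, effort: str = "high",
                  model: str = "claude-opus-4-7",
                  max_tokens: int = 4096) -> dict:
    """Assemble keyword arguments for a Messages API call.

    The top-level "effort" key follows this article's example and is an
    assumption, not a verified SDK parameter.
    """
    if effort not in EFFORT_LEVELS:
        raise ValueError(f"unknown effort level {effort!r}; expected one of {EFFORT_LEVELS}")
    return {
        "model": model,
        "max_tokens": max_tokens,
        "effort": effort,
        "messages": [{"role": "user", "content": prompt}],
    }


def escalate(effort: str) -> str:
    """Return the next-deeper effort level, staying at the top level."""
    i = EFFORT_LEVELS.index(effort)
    return EFFORT_LEVELS[min(i + 1, len(EFFORT_LEVELS) - 1)]
```

A review loop might call the model with `build_request(diff, "high")` and, if the output fails its checks, retry with `escalate("high")`, which yields `"xhigh"`. Keeping the ordering in one tuple makes the escalation rule explicit and easy to audit.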

3. Task budgets and token management

Managing costs in agentic workflows has historically been a guessing game. Alongside Opus 4.7, Anthropic launched “task budgets” in public beta. This feature lets developers set a hard cap on token spend for a specific run. Rather than being cut off abruptly when the limit is reached, the model is aware of its budget and can prioritize critical steps or condense its reasoning to stay within it. This matters for autonomous agents running in environments like n8n or LangChain, where a runaway loop could otherwise lead to unexpected API bills.
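Until a server-side budget is available in your stack, a client-side guard gives a cruder version of the same safety net. This is a minimal sketch and is not Anthropic's task budget feature: it only counts the input and output tokens reported on each response (for example from a response's `usage` field) and stops issuing calls once the cap is reached. `BudgetGuard`, `run_agent`, and the `step` callable are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    """Client-side token cap for an agent loop (hypothetical helper).

    Unlike a server-side task budget, the model never sees this limit;
    the loop simply stops making calls once cumulative usage reaches it.
    """
    limit: int
    used: int = 0

    def record(self, input_tokens: int, output_tokens: int) -> None:
        # Accumulate the usage reported for one model call.
        self.used += input_tokens + output_tokens

    @property
    def remaining(self) -> int:
        return max(0, self.limit - self.used)

    def exhausted(self) -> bool:
        return self.used >= self.limit


def run_agent(step, guard: BudgetGuard, max_steps: int = 50) -> int:
    """Call step() until it reports completion or the budget runs out.

    step is a hypothetical callable returning (done, input_tokens, output_tokens).
    Returns the number of steps executed.
    """
    steps = 0
    while steps < max_steps and not guard.exhausted():
        done, tokens_in, tokens_out = step()
        guard.record(tokens_in, tokens_out)
        steps += 1
        if done:
            break
    return steps
```

Because the check happens between calls, a run can overshoot the cap by up to one call's worth of tokens: with a 5,000-token limit and 1,500 tokens per step, the loop stops after four steps at 6,000 tokens. A budget the model itself is aware of, as described above, is meant to close exactly that gap.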

The trade-offs: what got worse?

Despite the capability gains, the transition to Opus 4.7 is not a “drop-in” replacement without consequences. Anthropic has highlighted two specific areas where users might see regressions or increased friction:

1. Token inflation via the updated tokenizer

Opus 4.7 uses an updated tokenizer designed to improve text processing efficiency. The immediate side effect, however, is that the same string of text may now map to roughly 1.0 to 1.35 times as many tokens as it did in Opus 4.6. For high-volume pipelines, this translates into an input cost increase of up to 35% for the same data. Anthropic argues that net efficiency still improves because the model solves tasks in fewer turns, but the upfront input cost for long documents will be measurably higher.
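The effect on a bill is simple arithmetic: multiply the token counts you measured on Opus 4.6 by the inflation factor and apply the per-million-token prices quoted in the migration section below ($5 input, $25 output). This sketch assumes those prices and, for simplicity, scales only input tokens; the new tokenizer may change output counts as well. `estimate_cost` is a hypothetical helper.

```python
# Prices quoted in this article, in USD per million tokens.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00


def estimate_cost(input_tokens_46: int, output_tokens: int,
                  inflation: float = 1.0) -> float:
    """Estimate a run's USD cost on Opus 4.7 from token counts measured on Opus 4.6.

    inflation is the tokenizer multiplier (this article quotes 1.0 to 1.35).
    Output tokens are left unscaled, which is a simplifying assumption.
    """
    input_tokens_47 = input_tokens_46 * inflation
    return (input_tokens_47 * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000
```

For a pipeline that sends 10 million input tokens (as counted under Opus 4.6), the input cost is $50 at a 1.0× multiplier and $67.50 at 1.35×. To measure the real multiplier on your own data, recent versions of the Anthropic Python SDK expose a token-counting call (`client.messages.count_tokens`); counting the same messages under both model IDs gives an empirical factor to plug in here.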

2. Literal instruction following (prompt breakage)

In Opus 4.6, the model often used “common sense” to interpret loosely phrased instructions. Opus 4.7 has been tuned for “strict fidelity,” meaning it follows instructions literally. If your legacy prompts contain contradictory commands or “fluff” that 4.6 ignored, 4.7 may attempt to execute them precisely, leading to unexpected results. Teams integrating the new model must plan for a prompt re-tuning phase to ensure that the increased literalism doesn’t break existing automation logic.
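One way to structure the re-tuning phase is a small prompt regression suite: a list of prompts, each paired with a check on the reply, run against both models so the failures can be compared. This is a minimal sketch; `call_model` is a hypothetical wrapper you would write around your own API client, and the checks shown in the usage note are illustrative only.

```python
from typing import Callable

# Each case pairs a prompt with a predicate on the model's reply.
Case = tuple[str, Callable[[str], bool]]


def run_regression(call_model: Callable[[str], str], cases: list[Case]) -> list[str]:
    """Send every prompt through call_model and return the prompts whose
    replies fail their check.

    call_model is a hypothetical wrapper (for example one bound to Opus 4.6
    and one bound to Opus 4.7), so the same suite runs against both models.
    """
    failures = []
    for prompt, check in cases:
        reply = call_model(prompt)
        if not check(reply):
            failures.append(prompt)
    return failures
```

Running the suite once per model and diffing the two failure lists isolates the prompts whose behavior changed. Prompts that pass on 4.6 but fail on 4.7 are the natural candidates for removing contradictory or loosely worded instructions.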

Implementation guide: migrating your API calls

To start using Opus 4.7, developers need to update their model string to claude-opus-4-7. Pricing remains consistent with the previous version: $5 per million input tokens and $25 per million output tokens. Below is a standard implementation example using the new effort and task_budget parameters available in the latest SDKs.

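```python
import anthropic

# Reads the API key from the ANTHROPIC_API_KEY environment variable.
client = anthropic.Anthropic()

# Optimized call for an autonomous agent.
# The effort and task_budget argument names follow this article; if your
# installed SDK version does not accept them as keyword arguments, they can
# be sent through the SDK's extra_body option instead.
message = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=4096,
    effort="xhigh",      # use the new deeper effort level
    task_budget=50000,   # public beta: cap total tokens for the run
    messages=[{
        "role": "user",
        # No image blocks are attached in this minimal example; add image
        # content blocks alongside this text to send actual blueprints.
        "content": "Analyze these high-res architectural blueprints and identify structural risks.",
    }],
)

# Print only text blocks; other block types may appear in the response.
for block in message.content:
    if block.type == "text":
        print(block.text)
```

Conclusion: should you upgrade?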

Claude Opus 4.7 represents a shift from “conversational AI” to “agentic infrastructure.” The 3x vision resolution and +13% coding lift make it the definitive choice for engineering and scientific teams, even with the token inflation caused by the new tokenizer. For teams using automated pipelines in n8n or Make.com, the addition of task budgets provides a much-needed safety net for autonomous loops. However, because of the model’s new literalism, the upgrade requires more than a simple API string change; it demands a thorough audit of legacy prompts. As we move further into 2026, Opus 4.7 sets the new baseline for what developers should expect from a frontier reasoning model.
