AI coding agents are transforming software development, but they share a critical weakness: they start every session blind. When Claude Code, Codex, or Gemini CLI needs to understand the structure of your codebase, it explores file by file: grep here, read there, grep again. As of April 2026, developers report that this approach burns through about 45,000 tokens for a simple question like “what calls ProcessOrder?” In production environments, this inefficiency translates into unsustainable API costs and slower iteration cycles.

The breakthrough solution lies in persistent code knowledge graphs. Using the Model Context Protocol (MCP) specification revision 2025-06-18, published in June 2025, developers can index a repository once into a queryable graph and cut token consumption by roughly 120× across a typical mix of structural queries. This guide walks through building such a graph with open-source MCP tools, so that small-to-medium businesses can embed automated code reviews in n8n workflows without runaway API costs.

Understanding the token burn problem

Modern AI coding agents explore codebases through an inefficient pattern of file-by-file discovery. When asked to trace callers of a function, agents typically execute this sequence:

- Grep for the function name across all files (~15,000 tokens)

- Read each matching file to understand context (~25,000 tokens)

- Follow imports to find indirect callers (~20,000 tokens)

- Often give up after hitting context limits, delivering incomplete results

Multiply this inefficiency across every question in a coding session, and teams burn hundreds of thousands of tokens per hour—most spent reading files irrelevant to the actual query. The 2026 benchmarks from real‑world implementations show a 45,000‑token cost for simple structural questions that should require fewer than 400 tokens.

How code knowledge graphs transform the paradigm

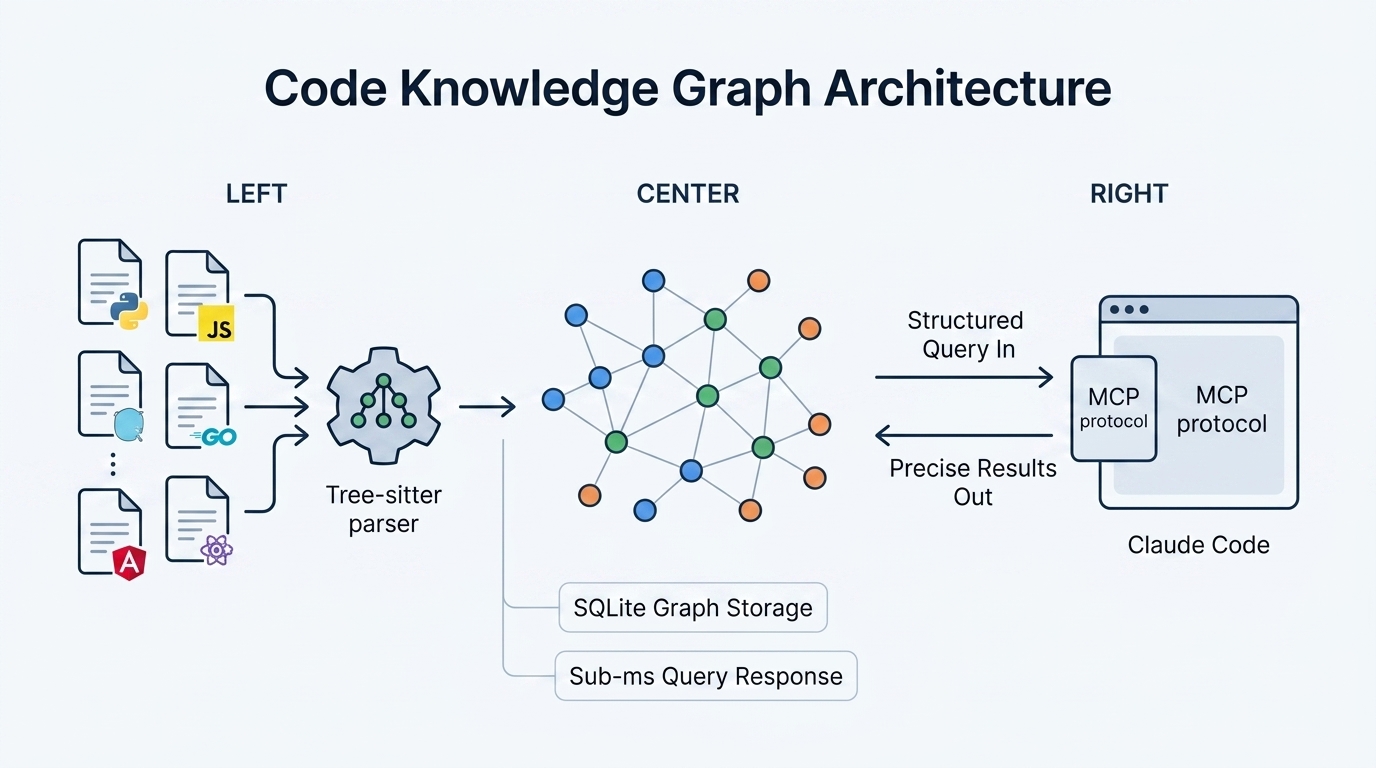

A code knowledge graph flips the exploration model. Instead of real‑time file scanning, the repository is parsed once into a persistent graph database containing functions, classes, method calls, imports, HTTP routes, and cross‑service relationships. Once indexed, queries execute in under 1ms and return structured results requiring minimal tokens.
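To make the idea concrete, here is a minimal sketch of a code graph stored in SQLite: one table of symbol nodes, one table of typed edges, and a single indexed query that answers “what calls ProcessOrder?”. The schema, table names, and sample symbols are hypothetical illustrations, not codebase-memory-mcp's actual storage format.

```python
import sqlite3

def build_demo_graph() -> sqlite3.Connection:
    """Create a tiny in-memory code graph (hypothetical schema and data)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE nodes (
            id INTEGER PRIMARY KEY,
            qualified_name TEXT UNIQUE NOT NULL,
            label TEXT NOT NULL,   -- e.g. 'Function', 'Class', 'Route'
            file TEXT NOT NULL
        );
        CREATE TABLE edges (
            src INTEGER NOT NULL REFERENCES nodes(id),
            dst INTEGER NOT NULL REFERENCES nodes(id),
            kind TEXT NOT NULL     -- e.g. 'CALLS', 'IMPORTS'
        );
        -- Index on (dst, kind) so "who points at X" is a lookup, not a scan.
        CREATE INDEX idx_edges_dst ON edges(dst, kind);
        """
    )
    nodes = [
        (1, "orders.ProcessOrder", "Function", "orders.py"),
        (2, "api.checkout_handler", "Function", "api.py"),
        (3, "jobs.retry_failed_orders", "Function", "jobs.py"),
        (4, "billing.charge_card", "Function", "billing.py"),
    ]
    edges = [(2, 1, "CALLS"), (3, 1, "CALLS"), (1, 4, "CALLS")]
    conn.executemany("INSERT INTO nodes VALUES (?, ?, ?, ?)", nodes)
    conn.executemany("INSERT INTO edges VALUES (?, ?, ?)", edges)
    return conn

def callers_of(conn: sqlite3.Connection, qualified_name: str) -> list[tuple[str, str]]:
    """Return (caller, file) pairs for every CALLS edge pointing at qualified_name."""
    rows = conn.execute(
        """
        SELECT caller.qualified_name, caller.file
        FROM nodes AS target
        JOIN edges ON edges.dst = target.id AND edges.kind = 'CALLS'
        JOIN nodes AS caller ON caller.id = edges.src
        WHERE target.qualified_name = ?
        ORDER BY caller.qualified_name
        """,
        (qualified_name,),
    )
    return rows.fetchall()

if __name__ == "__main__":
    graph = build_demo_graph()
    print(callers_of(graph, "orders.ProcessOrder"))
```

The answer comes back as two short rows, `('api.checkout_handler', 'api.py')` and `('jobs.retry_failed_orders', 'jobs.py')`, rather than the contents of every file that mentions the name, which is where the token savings come from.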

codebase-memory-mcp, an open-source MCP server released in March 2026, implements this approach using Tree-sitter AST parsing and SQLite-backed graph storage. It exposes 14 MCP tools that AI agents use to query code structure directly. The same “what calls ProcessOrder?” query now costs approximately 200 tokens, about 225× less than the 45,000-token file-by-file search; across the mixed query set benchmarked below, the documented reduction is roughly 120×.
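codebase-memory-mcp relies on Tree-sitter so that one parser framework covers many languages. For a single-language illustration of the same parse-once step, Python's built-in `ast` module is enough. This sketch swaps Tree-sitter for `ast`, records callees by bare name only, and does no import or type resolution, all of which a real indexer handles far more carefully.

```python
import ast

def extract_call_edges(source: str, module: str) -> list[tuple[str, str]]:
    """Return (caller, callee) pairs for every call inside each function.

    Simplifications: callees are recorded by bare name (f() and obj.f() both
    give "f"), nothing is resolved through imports or types, and calls inside
    nested functions are also credited to the enclosing function.
    """
    tree = ast.parse(source)
    edges: list[tuple[str, str]] = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            caller = f"{module}.{node.name}"
            for inner in ast.walk(node):
                if isinstance(inner, ast.Call):
                    if isinstance(inner.func, ast.Name):
                        edges.append((caller, inner.func.id))
                    elif isinstance(inner.func, ast.Attribute):
                        edges.append((caller, inner.func.attr))
    return edges

# Hypothetical sample module used to exercise the extractor.
SAMPLE = '''
def checkout_handler(req):
    order = build_order(req)
    return ProcessOrder(order)

def retry_failed_orders(queue):
    for o in queue.pending():
        ProcessOrder(o)
'''

if __name__ == "__main__":
    for caller, callee in sorted(extract_call_edges(SAMPLE, "app")):
        print(caller, "->", callee)
```

Running the extractor once per file and loading the pairs into a graph store is the expensive step that happens only at index time; every later structural question becomes a query instead of a re-read.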

For more details, see the codebase-memory-mcp GitHub repository.

Technical implementation with MCP 2025-06-18

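The Model Context Protocol specification revision 2025-06-18 introduced structured tool output and resource links in tool call results, and it classified MCP servers as OAuth Resource Servers to tighten authorization. Structured output matters most here: a graph server can return typed JSON that an agent or workflow parses directly instead of scraping free text, which makes code knowledge graphs more practical for production workflows.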
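As a rough illustration of those two features, the sketch below builds a JSON-RPC response to a `tools/call` request using the 2025-06-18 result fields: `structuredContent` for machine-readable data, a serialized text copy for clients that ignore it, and `resource_link` items pointing at files. The payload shape inside `structuredContent`, the `file:///repo/` URIs, and the caller records are hypothetical; only the outer envelope follows the specification.

```python
import json

def make_tool_result(request_id: int, target: str, callers: list[dict]) -> dict:
    """Build a JSON-RPC response to tools/call using MCP 2025-06-18 result fields."""
    # Hypothetical payload shape; a real server would also declare it as the tool's outputSchema.
    structured = {"target": target, "callers": callers}
    content = [
        # Serialized copy for clients that do not read structuredContent.
        {"type": "text", "text": json.dumps(structured)},
    ]
    for caller in callers:
        # A resource link lets the client open the file only if it actually needs it.
        content.append({
            "type": "resource_link",
            "uri": f"file:///repo/{caller['file']}",
            "name": caller["file"],
        })
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "result": {
            "content": content,
            "structuredContent": structured,
            "isError": False,
        },
    }

if __name__ == "__main__":
    resp = make_tool_result(7, "orders.ProcessOrder",
                            [{"name": "api.checkout_handler", "file": "api.py"}])
    print(json.dumps(resp, indent=2))
```

Because the agent receives a small typed object plus optional links, it never has to read a whole file just to learn who the callers are.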

At its core, codebase-memory-mcp operates as a structural analysis backend. It builds and queries the knowledge graph while relying on the connected AI agent—Claude Code, Codex, or any MCP‑compatible agent—to serve as the intelligence layer. This design eliminates the need for an intermediate LLM to translate natural language into graph queries, removing additional API costs and configuration complexity.

Performance benchmarks

Independent testing across 31 languages with 372 questions reveals dramatic improvements:

| Query Type | Knowledge Graph | File-by-File Search | Savings |
|---|---|---|---|
| Find function by pattern | ~200 tokens | ~45,000 tokens | 225× |
| Trace call chain (depth 3) | ~800 tokens | ~120,000 tokens | 150× |
| Dead code detection | ~500 tokens | ~85,000 tokens | 170× |
| List all HTTP routes | ~400 tokens | ~62,000 tokens | 155× |
| Architecture overview | ~1,500 tokens | ~100,000 tokens | 67× |
| Total (5 queries) | ~3,400 tokens | ~412,000 tokens | 121× |

This represents a 99.2% token reduction—translating directly to API cost savings. The Linux kernel benchmark—28 million lines across 75,000 files—indexes in 3 minutes on an Apple M3 Pro, generating 2.1 million nodes and 4.9 million edges.
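The totals, the 121× ratio, and the 99.2% figure follow directly from the table above. A few lines reproduce them, which also serves as a quick sanity check when you substitute your own measurements.

```python
# Token figures copied from the benchmark table: (knowledge graph, file-by-file).
BENCHMARK = {
    "Find function by pattern": (200, 45_000),
    "Trace call chain (depth 3)": (800, 120_000),
    "Dead code detection": (500, 85_000),
    "List all HTTP routes": (400, 62_000),
    "Architecture overview": (1_500, 100_000),
}

def summarize(rows: dict[str, tuple[int, int]]) -> tuple[int, int, float, float]:
    """Return (graph total, file-search total, aggregate ratio, percent reduction)."""
    graph_total = sum(g for g, _ in rows.values())
    files_total = sum(f for _, f in rows.values())
    ratio = files_total / graph_total                       # ratio of totals, not mean of ratios
    reduction_pct = 100 * (1 - graph_total / files_total)
    return graph_total, files_total, ratio, reduction_pct

if __name__ == "__main__":
    for name, (g, f) in BENCHMARK.items():
        print(f"{name}: {f / g:.0f}x")
    g, f, ratio, pct = summarize(BENCHMARK)
    print(f"total: {g} vs {f} tokens, {ratio:.0f}x, {pct:.1f}% reduction")
```

Note that the aggregate is a ratio of totals, so it sits below the mean of the five per-row ratios (about 153×): the architecture overview, with the lowest ratio, accounts for a large share of the graph-side tokens.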

Building your code knowledge graph: step-by-step

Implementation requires three commands and supports 66 languages through vendored Tree-sitter grammars compiled into a single static binary. There are no Docker containers, no runtime dependencies, and no API keys.

Step 1: Install the MCP server

```bash
# macOS / Linux one-liner
curl -fsSL https://raw.githubusercontent.com/DeusData/codebase-memory-mcp/main/install.sh | bash

# With graph visualization UI
curl -fsSL https://raw.githubusercontent.com/DeusData/codebase-memory-mcp/main/install.sh | bash -s -- --ui
```

Windows users can run the PowerShell equivalent. The install command auto-detects and configures Claude Code, Codex CLI, Gemini CLI, Zed, OpenCode, Antigravity, Aider, KiloCode, VS Code, and OpenClaw through MCP config entries, instruction files, and pre-tool hooks.

Step 2: Index your repository

```bash
codebase-memory-mcp install
# Restart your agent, then ask it:
# "Index this project"
```

The indexing pipeline uses a RAM-first approach: LZ4 compression, in-memory SQLite, and fused Aho-Corasick pattern matching. Memory is released back to the OS once indexing completes, and background watchers auto-sync the graph as files change.

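The RAM-first idea can be sketched with Python's `sqlite3` module: build the database in memory, where bulk inserts touch no disk, then copy it to a file in one pass with the standard `Connection.backup` API. This is an illustration only; it omits LZ4 compression and Aho-Corasick matching, and the single-table schema is hypothetical rather than codebase-memory-mcp's own.

```python
import sqlite3

def index_in_memory_then_persist(edges: list[tuple[str, str]], db_path: str) -> int:
    """Build a (hypothetical) edge table in RAM, then copy it to disk in one pass."""
    mem = sqlite3.connect(":memory:")
    try:
        mem.execute("CREATE TABLE edges (caller TEXT NOT NULL, callee TEXT NOT NULL)")
        # Bulk inserts into an in-memory database avoid per-row disk I/O.
        mem.executemany("INSERT INTO edges VALUES (?, ?)", edges)
        mem.commit()
        disk = sqlite3.connect(db_path)
        try:
            # Connection.backup (Python 3.7+) copies the whole database to the target.
            mem.backup(disk)
        finally:
            disk.close()
    finally:
        mem.close()  # frees the in-memory database
    return len(edges)
```

Calling `index_in_memory_then_persist([("api.checkout_handler", "orders.ProcessOrder")], "graph.db")` leaves a normal SQLite file on disk that later queries can open directly.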
Step 3: Query through MCP tools

The 14 available tools enable precise structural queries:

- search_graph: Find functions/classes by name pattern, label, or degree

- trace_call_path: Follow callers/callees at configurable depth

- detect_changes: Map git diff to affected symbols with risk classification

- query_graph: Execute Cypher‑like graph queries

- get_architecture: Return languages, packages, entry points, routes, hotspots

Example workflow: an agent asks “what calls ProcessOrder?” → calls trace_call_path → receives a structured call chain in ~200 tokens → presents the results. No file opening is required.
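Following callers “at configurable depth” amounts to a breadth-first search over reverse call edges. This is a minimal sketch of that idea over an in-memory edge list, not codebase-memory-mcp's implementation; the edge data is invented for illustration.

```python
from collections import deque

def trace_callers(edges: list[tuple[str, str]], target: str, max_depth: int) -> dict[str, int]:
    """Breadth-first walk of reverse call edges up to max_depth.

    Returns {caller: depth}, where depth 1 means a direct caller.
    """
    callers_of: dict[str, list[str]] = {}
    for caller, callee in edges:
        callers_of.setdefault(callee, []).append(caller)

    found: dict[str, int] = {}
    queue = deque([(target, 0)])
    while queue:
        name, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the requested depth
        for caller in callers_of.get(name, []):
            # Skip already-seen callers and cycles that lead back to the target.
            if caller not in found and caller != target:
                found[caller] = depth + 1
                queue.append((caller, depth + 1))
    return found

# Hypothetical call edges as (caller, callee) pairs.
EDGES = [
    ("api.checkout_handler", "orders.ProcessOrder"),
    ("jobs.retry_failed_orders", "orders.ProcessOrder"),
    ("api.router", "api.checkout_handler"),
    ("main", "api.router"),
    ("jobs.retry_failed_orders", "jobs.retry_failed_orders"),  # recursion
]

if __name__ == "__main__":
    print(trace_callers(EDGES, "orders.ProcessOrder", max_depth=3))
```

Breadth-first order means each caller is reported at its shortest distance from the target, and the depth cap bounds the size of the answer regardless of how large the codebase is.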

Integrating with n8n for automated code reviews

Small-to-medium businesses can leverage this architecture in n8n workflows to automate code reviews without the token costs that traditionally make such automation impractical.

The n8n integration pattern

n8n workflows trigger on GitHub/GitLab pull requests, pass the changed files to codebase-memory-mcp’s detect_changes tool for impact analysis, then query the graph for relevant context before sending a compact, structured prompt to an LLM for review generation.
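Mapping a diff to affected symbols can be sketched as two steps: read the new-side line numbers from unified-diff hunk headers, then intersect them with each symbol's line span. This is a simplified stand-in, not codebase-memory-mcp's detect_changes: the symbol table is hypothetical (a graph would supply it), risk classification is omitted, and pure deletions are ignored.

```python
import re

# New-side start line from a hunk header such as "@@ -10,3 +10,4 @@".
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")

def changed_lines(diff: str) -> dict[str, set[int]]:
    """Map each file in a unified diff to the new-side line numbers it adds or modifies.

    Simplified: pure deletions are ignored, and an added line whose text
    starts with "++ " would be misread as a file header.
    """
    touched: dict[str, set[int]] = {}
    current = None
    new_line = 0
    for line in diff.splitlines():
        if line.startswith("+++ "):
            path = line[4:]
            current = path[2:] if path.startswith("b/") else path
            touched.setdefault(current, set())
        elif line.startswith("--- "):
            continue  # old-file header
        elif (match := HUNK_HEADER.match(line)):
            new_line = int(match.group(1))
        elif current is None:
            continue  # preamble such as "diff --git" or "index" lines
        elif line.startswith("+"):
            touched[current].add(new_line)
            new_line += 1
        elif line.startswith(" "):
            new_line += 1  # context line exists on both sides
        # "-" lines exist only in the old file, so they do not advance new_line.
    return touched

def affected_symbols(diff: str, symbols: list[tuple[str, str, int, int]]) -> list[str]:
    """Return names whose (file, start_line, end_line) span contains a changed line."""
    touched = changed_lines(diff)
    return [
        name
        for name, path, start, end in symbols
        if any(start <= n <= end for n in touched.get(path, ()))
    ]

# Hypothetical inputs for illustration.
DIFF = (
    "diff --git a/orders.py b/orders.py\n"
    "--- a/orders.py\n"
    "+++ b/orders.py\n"
    "@@ -10,3 +10,4 @@ def ProcessOrder(order):\n"
    "     validate(order)\n"
    "+    audit(order)\n"
    "     charge(order)\n"
    "     ship(order)\n"
)
SYMBOLS = [
    ("orders.ProcessOrder", "orders.py", 8, 20),
    ("orders.refund", "orders.py", 22, 30),
    ("billing.charge", "billing.py", 1, 15),
]

if __name__ == "__main__":
    print(affected_symbols(DIFF, SYMBOLS))
```

The output is a short list of qualified names, which is exactly the kind of compact input the later call-chain and snippet lookups need.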

Without the knowledge graph, a workflow processing a multi-file PR might consume 50,000+ tokens per review just gathering context. With the graph:

- Webhook receives PR event (negligible tokens)

- detect_changes identifies affected symbols (~500 tokens)

- trace_call_path fetches related call chains (~800 tokens)

- get_code_snippet retrieves specific function bodies (~1,200 tokens)

- LLM receives focused context (~2,500 tokens total vs. 50,000+)
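The steps above can be sketched as a prompt builder that adds context sections in priority order (affected symbols, then call chains, then code bodies) and stops before exceeding a token budget such as the ~2,500 tokens quoted. The four-characters-per-token estimate is a rough heuristic rather than a real tokenizer, and the section titles and sample contents are illustrative.

```python
def estimate_tokens(text: str) -> int:
    """Rough heuristic: about 4 characters per token (not a real tokenizer)."""
    return -(-len(text) // 4)  # ceiling division

def build_review_prompt(sections: list[tuple[str, str]], budget: int) -> tuple[str, int]:
    """Add (title, body) sections in priority order while they fit the token budget."""
    parts: list[str] = []
    used = 0
    for title, body in sections:
        block = f"## {title}\n{body}\n"
        cost = estimate_tokens(block)
        if used + cost > budget:
            break  # drop this and all lower-priority sections instead of cutting mid-section
        parts.append(block)
        used += cost
    return "".join(parts), used

if __name__ == "__main__":
    sections = [
        ("Affected symbols", "orders.ProcessOrder (orders.py:8-20)"),
        ("Call chains", "api.checkout_handler -> orders.ProcessOrder"),
        ("Code", "def ProcessOrder(order): ..."),
    ]
    prompt, used = build_review_prompt(sections, budget=2_500)
    print(f"{used} estimated tokens")
    print(prompt)
```

Stopping at whole sections keeps the highest-value context intact; swapping in the target model's own tokenizer would make the budget exact.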

Example n8n workflow configuration

```jsonc
// HTTP Request node calling codebase-memory-mcp
{
  "method": "POST",
  "url": "http://localhost:9749/mcp/detect_changes",
  "body": {
    "repo_path": "/repos/{{ $json.repository.name }}",
    // Assumes an earlier node stored the diff text here; GitHub's
    // pull_request webhook payload itself only carries pull_request.diff_url
    "diff": "{{ $json.pull_request.diff }}"
  }
}

// Follow-up: get affected function details
{
  "method": "POST",
  "url": "http://localhost:9749/mcp/get_code_snippet",
  "body": {
    "qualified_name": "{{ $json.affected_symbols[0].qualified_name }}"
  }
}
```

Typical SMB implementations report reducing automated review costs from $200‑500/month to under $15/month while improving review quality through precise, context-aware analysis.
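The HTTP Request configuration above posts to per-tool URL paths. If your server is instead reached through MCP's standard Streamable HTTP transport, each tool invocation is a JSON-RPC 2.0 `tools/call` message POSTed to a single endpoint. The sketch below only builds such a request without sending it; the endpoint URL and tool arguments are hypothetical (check the real argument schema with `tools/list`), and a compliant client must first complete the `initialize` handshake and then echo any `Mcp-Session-Id` the server returned.

```python
import json
import urllib.request
from typing import Optional

def build_tools_call(endpoint: str, request_id: int, tool: str, arguments: dict,
                     session_id: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) an MCP tools/call request for Streamable HTTP."""
    payload = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    headers = {
        "Content-Type": "application/json",
        # Streamable HTTP clients must accept both plain JSON and SSE responses.
        "Accept": "application/json, text/event-stream",
        "MCP-Protocol-Version": "2025-06-18",
    }
    if session_id:
        # Echo the session ID returned during initialize, if the server issued one.
        headers["Mcp-Session-Id"] = session_id
    return urllib.request.Request(endpoint, data=json.dumps(payload).encode("utf-8"),
                                  headers=headers, method="POST")

if __name__ == "__main__":
    # Hypothetical endpoint and arguments.
    req = build_tools_call("http://localhost:8080/mcp", 3, "detect_changes",
                           {"repo_path": "/repos/shop"})
    print(req.get_method(), req.full_url, req.data.decode())
```

In n8n, the same JSON body can go into an HTTP Request node; the point of the sketch is that only `params.name` and `params.arguments` change between tools.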

Supported languages and graph features

As of April 2026, codebase-memory-mcp supports 66 languages compiled into a single static binary, with no dependency management or grammar updates required. Supported languages include:

Programming languages (39): Python, Go, JavaScript, TypeScript, TSX, Rust, Java, C++, C#, C, PHP, Ruby, Kotlin, Scala, Swift, Dart, Zig, Elixir, Haskell, OCaml, Objective‑C, Lua, Bash, Perl, Groovy, Erlang, R, Clojure, F#, Julia, Vim Script, Nix, Common Lisp, Elm, Fortran, CUDA, COBOL, Verilog, Emacs Lisp

Config/markup (20): HTML, CSS, SCSS, YAML, TOML, HCL, SQL, Dockerfile, JSON, XML, Markdown, Makefile, CMake, Protobuf, GraphQL, Vue, Svelte, Meson, GLSL, INI

Scientific (5): MATLAB, Lean 4, FORM, Magma, Wolfram

Recent updates include an LSP‑style hybrid type resolution for Go, C, and C++—combining Tree‑sitter structural parsing with language server protocol semantic analysis for more accurate cross‑reference resolution.

Production considerations and next steps

For production deployments, consider these configuration options:

- Auto-indexing: enable with `codebase-memory-mcp config set auto_index true` for new projects and ongoing change detection.

- Custom cache location: set the `CBM_CACHE_DIR` environment variable for centralized storage.

- File limits: configure `auto_index_limit` for large repositories (default: 50,000 files).

- Custom extensions: add framework-specific mappings via `.codebase-memory.json`.
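Before enabling auto-indexing on a large monorepo, a quick pre-flight count can tell you whether the 50,000-file default for `auto_index_limit` is likely to be hit. This sketch is only an approximation: how codebase-memory-mcp actually counts files and which directories it skips are not documented here, so `SKIP_DIRS` is a hypothetical stand-in.

```python
import os
from pathlib import Path

DEFAULT_AUTO_INDEX_LIMIT = 50_000  # default quoted for auto_index_limit
SKIP_DIRS = {".git", "node_modules", "vendor", "__pycache__"}  # hypothetical exclusions

def count_candidate_files(repo: Path) -> int:
    """Count files under repo, pruning directories that are unlikely to be indexed."""
    total = 0
    for _root, dirs, files in os.walk(repo):
        dirs[:] = [d for d in dirs if d not in SKIP_DIRS]  # prune in place so walk skips them
        total += len(files)
    return total

def preflight(repo: Path, limit: int = DEFAULT_AUTO_INDEX_LIMIT) -> dict:
    """Report the file count, whether it fits the limit, and any CBM_CACHE_DIR override."""
    count = count_candidate_files(repo)
    return {
        "files": count,
        "within_limit": count <= limit,
        "cache_dir": os.environ.get("CBM_CACHE_DIR"),  # None if the variable is unset
    }
```

Running `preflight(Path("."))` from a repository root gives a quick signal on whether to raise the limit before turning auto-indexing on.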

The graph visualizer UI variant runs at localhost:9749, providing 3D interactive exploration of your codebase structure—useful for onboarding and architecture reviews.

Conclusion

Code knowledge graphs represent a fundamental shift in AI coding agent efficiency. By parsing repositories once into persistent graph structures and exposing them through MCP tools, developers achieve roughly a 120× aggregate token reduction, transforming token-limited workflows into economically viable automation.

For small-to-medium businesses, the n8n integration makes automated code reviews affordable where API fees were previously prohibitive. The single-binary, zero-dependency architecture makes adoption straightforward across development teams.

The next frontier includes expanded LSP hybrid support for additional languages and deeper cross‑service HTTP linking with confidence scoring. As of April 2026, the MIT‑licensed codebase-memory-mcp remains the most performant open‑source option for code intelligence—indexing the Linux kernel in under 3 minutes while serving sub‑millisecond queries that save real money on every development session.
