AI coding agents entered 2026 with a fundamental constraint. Asked a question like “what calls ProcessOrder?”, an agent could burn through roughly 45,000 tokens just opening files and scanning for matches, reading broadly and hoping for the best. Early 2026 brought a marked shift: persistent code knowledge graphs cut these costs to a small fraction of previous usage while giving agents a structural view of the codebase up front.

The problem with file-by-file exploration

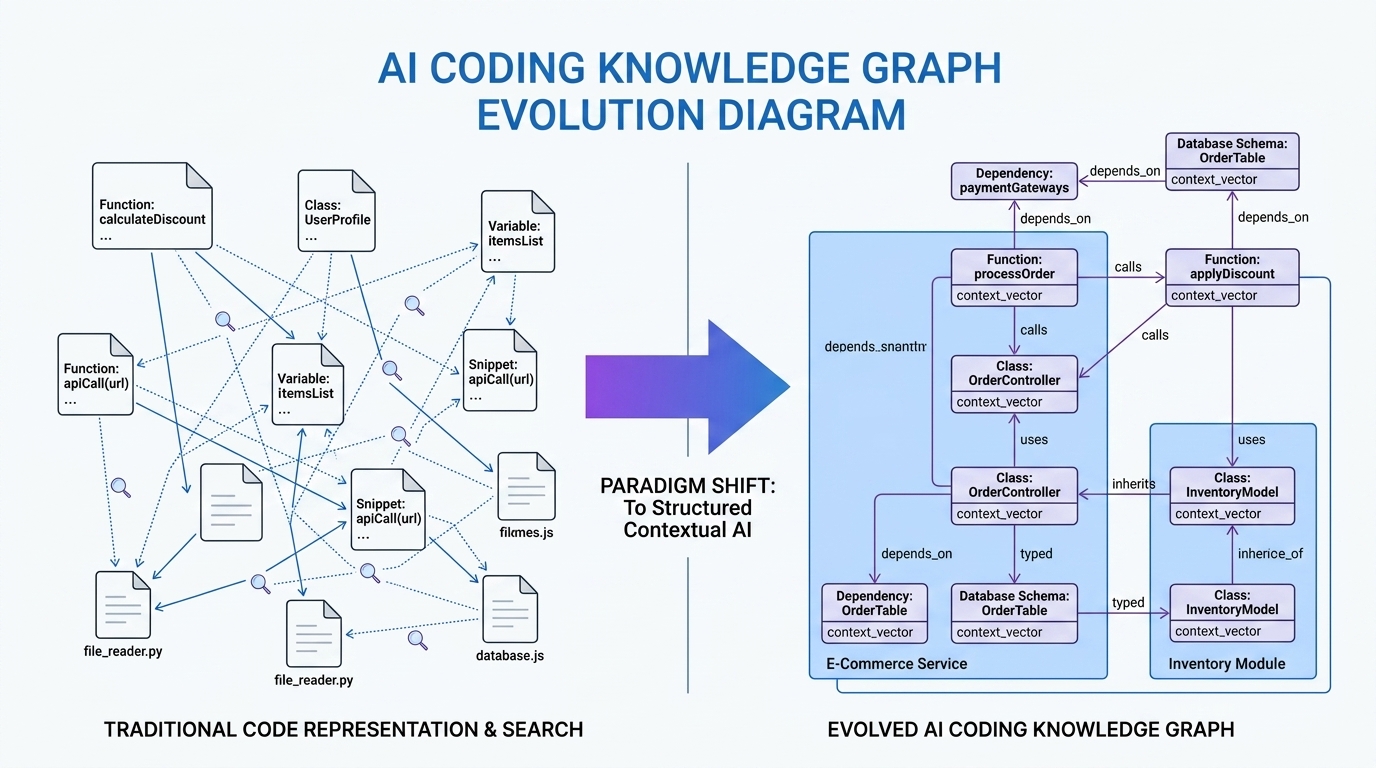

When Claude Code, Cursor, or Gemini CLI encounters a codebase for the first time, it has no structural understanding of what calls what, which files depend on which, or where the boundaries between concerns lie. The fallback is laborious: grep for function names, read each matching file to gather context, follow imports to find indirect callers, and then possibly give up after hitting context limits with half the call chain still missing.

Answered this way, a single question can cost approximately 12,000 tokens when the answer itself needs perhaps 800. In practice, 60-80% of consumed tokens go toward figuring out where things are rather than answering the actual question. The industry’s initial response was to expand context windows (Gemini offers 1 million tokens, Claude provides 200K), but this is like fixing a bad filing system by renting a larger office. The core issue is precision, not capacity.

The knowledge graph paradigm

By mid-2026, tools like codebase-memory-mcp and CartoGopher demonstrated a superior approach: parse once, query forever. These systems run a one-time indexing pass using tree-sitter AST parsing, extracting every function, class, method, import, call relationship, and HTTP route into persistent graph storage. After initialization, the graph stays fresh automatically via background watchers that detect file changes.
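To make “parse once, query forever” concrete, here is a minimal sketch of an indexing pass. It is not how codebase-memory-mcp or CartoGopher are implemented: it swaps tree-sitter for Python’s standard-library `ast` module, handles only direct calls to plainly named functions, and keeps the graph in an in-memory dict rather than persistent storage. The sample source and all function names are hypothetical.

```python
import ast
from collections import defaultdict

# Hypothetical sample code standing in for a repository.
SOURCE = '''
def validate(order):
    return order is not None

def process_order(order):
    if validate(order):
        save(order)

def save(order):
    pass

def handle_request(req):
    process_order(req)
'''


def build_call_graph(source: str) -> dict[str, set[str]]:
    """Map each top-level function to the names it calls directly.

    Assumption: stdlib `ast` stands in for tree-sitter, and only calls of
    the form `name(...)` are recorded (no methods, no attribute access).
    """
    tree = ast.parse(source)
    graph: dict[str, set[str]] = defaultdict(set)
    for node in tree.body:
        if isinstance(node, ast.FunctionDef):
            graph[node.name]  # register the function even if it calls nothing
            for child in ast.walk(node):
                if isinstance(child, ast.Call) and isinstance(child.func, ast.Name):
                    graph[node.name].add(child.func.id)
    return dict(graph)


# The one-time indexing pass; later questions read GRAPH, not the files.
GRAPH = build_call_graph(SOURCE)
```

Once `GRAPH` exists, a question such as “what does `process_order` call?” becomes a dictionary lookup instead of a file scan. A real indexer would also persist the result and re-run the pass for files that a watcher reports as changed.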

The performance metrics speak clearly. Real-world benchmarks across 31 languages and 372 questions reveal striking efficiency gains:

| Query Type | Knowledge Graph | File-by-File Search | Savings |
|---|---|---|---|
| Find function by pattern | ~200 tokens | ~45,000 tokens | 225x |
| Trace call chain (depth 3) | ~800 tokens | ~120,000 tokens | 150x |
| Dead code detection | ~500 tokens | ~85,000 tokens | 170x |
| List all HTTP routes | ~400 tokens | ~62,000 tokens | 155x |
| Architecture overview | ~1,500 tokens | ~100,000 tokens | 67x |
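The savings column can be checked with a few lines of arithmetic. The sketch below uses only the approximate token counts from the table; the variable names are illustrative, and the aggregate figure assumes each query type is run exactly once.

```python
# Approximate token counts from the table: (knowledge graph, file-by-file).
ROWS = {
    "Find function by pattern": (200, 45_000),
    "Trace call chain (depth 3)": (800, 120_000),
    "Dead code detection": (500, 85_000),
    "List all HTTP routes": (400, 62_000),
    "Architecture overview": (1_500, 100_000),
}

# Per-query reduction factor, rounded to the nearest whole multiple.
RATIOS = {name: round(files / graph) for name, (graph, files) in ROWS.items()}

# Aggregate reduction, assuming one query of each type.
TOTAL_GRAPH = sum(graph for graph, _ in ROWS.values())
TOTAL_FILES = sum(files for _, files in ROWS.values())
AGGREGATE = TOTAL_FILES / TOTAL_GRAPH

for name, factor in RATIOS.items():
    print(f"{name}: {factor}x")
print(f"Aggregate: {AGGREGATE:.0f}x ({TOTAL_FILES:,} vs {TOTAL_GRAPH:,} tokens)")
```

Summed over the five rows, file-by-file search costs about 412,000 tokens against about 3,400 for the graph, a reduction of roughly 121x.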

Why structured context outperforms embeddings

Prior solutions relied on vector embeddings and semantic similarity, but these approaches share a fundamental structural blind spot. Ask a vector-based tool “how does authentication work?” and it retrieves chunks mentioning “auth,” “login,” and “token.” What it misses is that middleware.ts calls refresh.ts, which depends on jwt-config.ts—the call chain, the actual architecture, remains invisible because embeddings capture similarity, not structure.

Knowledge graphs store relationships as structured data, not vector similarity. Nodes represent code entities (functions, types, files, packages) while edges encode relationships (calls, imports, implements, depends-on). This allows agents to traverse the codebase following function calls to implementations and dependencies rather than guessing at relevance. Systems utilizing this approach report 40-95% token savings without losing answer accuracy.
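As an illustration of traversal over such a graph, the sketch below answers “what calls ProcessOrder?” with a breadth-first walk over reversed call edges. The edge data and every function name other than ProcessOrder are hypothetical, and real tools expose this through their own query interfaces rather than a Python function.

```python
from collections import deque

# Hypothetical "calls" edges: caller -> set of callees.
CALLS = {
    "Main": {"HandleCheckout"},
    "HandleCheckout": {"ProcessOrder"},
    "RetryJob": {"ProcessOrder"},
    "ProcessOrder": {"ValidateOrder", "SaveOrder"},
    "ValidateOrder": set(),
    "SaveOrder": set(),
}


def callers_of(target: str, calls: dict[str, set[str]], max_depth: int = 3) -> dict[str, int]:
    """Return every direct or indirect caller of `target` with its distance."""
    # Invert the edges once so each step looks up "who calls X".
    reverse: dict[str, set[str]] = {}
    for caller, callees in calls.items():
        for callee in callees:
            reverse.setdefault(callee, set()).add(caller)

    found: dict[str, int] = {}
    queue = deque([(target, 0)])
    while queue:
        name, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the requested depth
        for caller in sorted(reverse.get(name, ())):
            if caller != target and caller not in found:
                found[caller] = depth + 1
                queue.append((caller, depth + 1))
    return found


CALLERS = callers_of("ProcessOrder", CALLS)
```

The result lists `HandleCheckout` and `RetryJob` at distance 1 and `Main` at distance 2. The agent receives only those names and distances, which is why a structural query stays in the hundreds of tokens.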

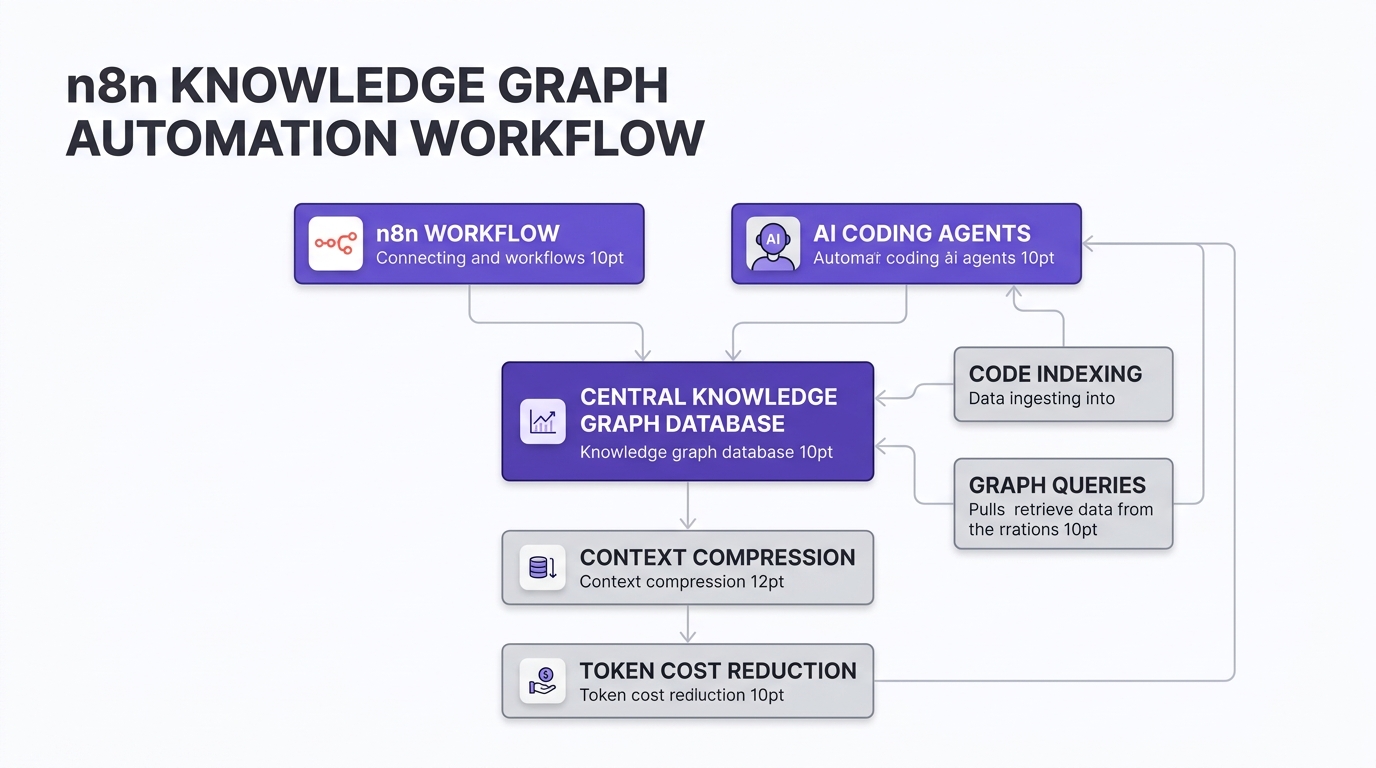

Implementing with n8n orchestration

For small-to-medium businesses, implementing these capabilities need not require custom development. The 2026 n8n ecosystem supports multi-agent orchestration patterns that integrate seamlessly with knowledge graph systems, enabling sophisticated automation pipelines.

Supervisor/delegator patterns work particularly well: a primary agent receives requests and delegates to specialist agents based on content. n8n’s AI Agent Tool node supports this configuration, in which each specialist agent has a focused tool set and a domain-specific prompt. Alternatively, sub-workflow orchestration isolates each specialist in its own workflow with independent triggers, error handling, and deployment lifecycles.

State management becomes critical across agent handoffs. External storage using Supabase, PostgreSQL, or Redis maintains conversation context and agent decisions persistently. Implement session IDs consistently and consider idempotency patterns to prevent duplicate actions during workflow restarts. Role-appropriate model selection further reduces cost: orchestrators use capable models such as Claude 3, while data retrieval agents run on faster, cheaper alternatives.
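The session-ID and idempotency advice can be sketched in a few lines. This is an assumption-laden illustration, not n8n code: `SessionStore` is a hypothetical in-memory stand-in for Supabase, PostgreSQL, or Redis, and `open_ticket` is a made-up side-effecting action.

```python
import hashlib
import json


class SessionStore:
    """Hypothetical in-memory stand-in for an external store such as Redis."""

    def __init__(self) -> None:
        self._history: dict[str, list[dict]] = {}  # session_id -> agent decisions
        self._completed: dict[str, str] = {}       # idempotency key -> stored result

    @staticmethod
    def idempotency_key(session_id: str, action: str, payload: dict) -> str:
        # Same session, action, and payload always hash to the same key.
        body = json.dumps(payload, sort_keys=True)
        return hashlib.sha256(f"{session_id}:{action}:{body}".encode()).hexdigest()

    def run_once(self, session_id: str, action: str, payload: dict, handler) -> str:
        key = self.idempotency_key(session_id, action, payload)
        if key in self._completed:
            return self._completed[key]  # replay: reuse the stored result
        result = handler(payload)
        self._completed[key] = result
        self._history.setdefault(session_id, []).append({"action": action, "result": result})
        return result

    def history(self, session_id: str) -> list[dict]:
        return list(self._history.get(session_id, []))


STORE = SessionStore()
SIDE_EFFECTS: list[str] = []


def open_ticket(payload: dict) -> str:
    """Made-up action with a side effect we do not want to repeat."""
    SIDE_EFFECTS.append(payload["title"])
    return f"ticket-{len(SIDE_EFFECTS)}"


FIRST = STORE.run_once("sess-1", "open_ticket", {"title": "Fix login"}, open_ticket)
# Simulated workflow restart: the same step is submitted again.
SECOND = STORE.run_once("sess-1", "open_ticket", {"title": "Fix login"}, open_ticket)
```

Both calls return the same ticket ID and the side effect happens once. With a real external store, the check and the write would need to be atomic (for example, a unique constraint on the key) so that concurrent restarts cannot both pass the check.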

The ecosystem reality

Enterprise tools have converged on this approach. Augment’s Context Engine builds real-time knowledge graphs mapping dependency paths across services—capturing relationships as structured data. Supermodel provides graph-powered tools with MCP server integrations for Claude Code. Google’s Conductor, introduced in early 2026, addresses similar problems through persistent Markdown with structured spec-plan-implement workflows. GitLab’s integration with their GKG (GitLab Knowledge Graph) and Bosync’s codebase graphs represent further industry recognition.

“When Claude Code auto-compacts, your agent loses its understanding of your codebase. Graph reloads restore that structural summary after every compaction so agents keep working like nothing happened.”

Solutions like Supermodel demonstrating persistent graph utility

Practical implementation for SMBs

Organizations do not need to rebuild what already exists. CartoGopher and codebase-memory-mcp expose their capabilities through 14 MCP (Model Context Protocol) tools supporting Claude Code, Codex CLI, Gemini CLI, Zed, OpenCode, and other agents. The setup requires minimal infrastructure—a static binary averaging 15MB with zero runtime dependencies.

For n8n orchestration, implement these core components:

- Graph indexing workflow: Trigger repository analysis when code changes are pushed

- Query routing: Classify requests as needing file content versus structural queries

- Context compression node: Convert graph query results into minimal token representations

- Agent delegation: Route to appropriate specialists based on graph-derived classifications

- Cost monitoring: Track token savings per workflow to validate ROI
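Two of the components above, query routing and context compression, are simple enough to sketch. The keyword patterns, the result fields (`name`, `file`, `line`), and the sample hits are all assumptions for illustration; a production router might use a small classifier model instead, and the actual shape of graph results depends on the tool in use.

```python
import re

# Hypothetical phrases that suggest a structural (graph) query.
STRUCTURAL_PATTERNS = [
    r"\bwhat calls\b",
    r"\bcallers? of\b",
    r"\bdepends on\b",
    r"\bdead code\b",
    r"\broutes?\b",
    r"\barchitecture\b",
]


def classify(request: str) -> str:
    """Route a request to the graph ('structural') or to file reads ('file_content')."""
    text = request.lower()
    if any(re.search(pattern, text) for pattern in STRUCTURAL_PATTERNS):
        return "structural"
    return "file_content"


def compress(results: list[dict]) -> str:
    """Reduce graph hits to one short line each: name, then file:line."""
    return "\n".join(f"{r['name']} {r['file']}:{r['line']}" for r in results)


# Made-up graph hits with extra fields the agent does not need.
HITS = [
    {"name": "HandleCheckout", "file": "api/checkout.go", "line": 42, "doc": "long docstring"},
    {"name": "RetryJob", "file": "jobs/retry.go", "line": 17, "doc": "long docstring"},
]

ROUTE = classify("What calls ProcessOrder?")
SUMMARY = compress(HITS)
```

Here `ROUTE` is `"structural"`, so the request goes to the graph, and `SUMMARY` carries two short lines instead of the full hit records. Logging the size of `SUMMARY` against the size of the files it replaces gives the cost-monitoring step something concrete to track.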

Conclusion

The 2026 evolution from file-by-file grep to persistent knowledge graphs is more than an optimization: it changes how AI agents comprehend codebases. Systems that parse once and query forever achieve roughly 120x token reduction in aggregate across the five benchmarked query types (about 412,000 tokens versus 3,400, with individual queries ranging from 67x to 225x), turning token spend from an opaque cost into a measurable one.

For SMBs, this shift enables n8n-orchestrated pipelines that deliver enterprise-grade context management without re-engineering core infrastructure. The architecture patterns covered here (supervisor delegation, sub-workflow orchestration, and state management) work alongside graph-based context providers. As token budgets increasingly determine operational viability, persistent knowledge graphs have become not a luxury but a requirement for cost-effective AI coding workflows.
