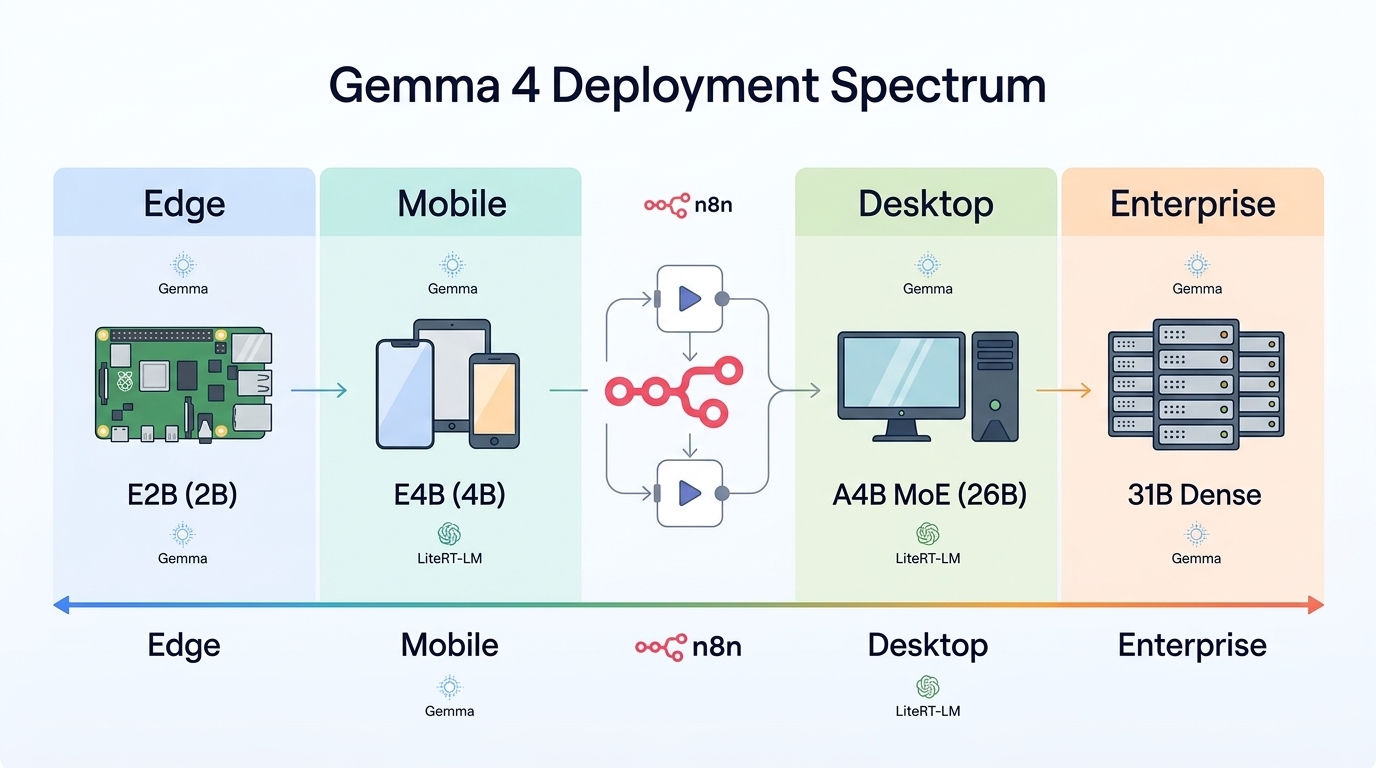

Deploying AI agents locally rather than through cloud APIs has become a leading architectural pattern for privacy-conscious and latency-sensitive applications. Google’s Gemma 4 family, released in April 2026 under Apache 2.0, is a major step for on-device agentic AI, delivering frontier-level reasoning, coding, and multimodal capabilities across a wide hardware range. From the efficient 2B-class edge model running on a Raspberry Pi to the dense 31B variant deployed in enterprise data centers, Gemma 4 lets developers build autonomous agents that keep all data on their own hardware.

Understanding the Gemma 4 model architecture

Gemma 4 ships four distinct model variants, each optimized for specific deployment scenarios. The edge-focused E2B and E4B models employ Per-Layer Embeddings (PLE) to maximize parameter efficiency, while the server-grade variants utilize dense and Mixture-of-Experts (MoE) architectures.

| Model | Effective Parameters | Total Parameters | Context Length | Modalities | Target Platform |
|---|---|---|---|---|---|
| E2B | 2.3B | 5.1B | 128K tokens | Text, Image, Audio | Raspberry Pi, Mobile |
| E4B | 4.5B | 8B | 128K tokens | Text, Image, Audio | Mid-range Phones, Edge |
| 26B A4B | 3.8B | 25.2B | 256K tokens | Text, Image | Workstation, Consumer GPU |
| 31B | 31B | 30.7B | 256K tokens | Text, Image | Data Center, DGX |

The E2B model is efficient enough for constrained environments, reaching 7.6 decode tokens per second on a Raspberry Pi 5 (8GB) with Q4_K_M quantization. The E4B variant offers higher benchmark scores while remaining viable on edge hardware. The 26B A4B MoE architecture activates only 3.8B parameters during inference, giving it speed close to a 4B model with the knowledge capacity of a 26B model, which suits workstations with 16GB of VRAM. The 31B dense model requires 24GB or more of VRAM but provides the greatest reasoning depth for complex agentic workflows.

Hardware deployment spectrum: from Pi to data center

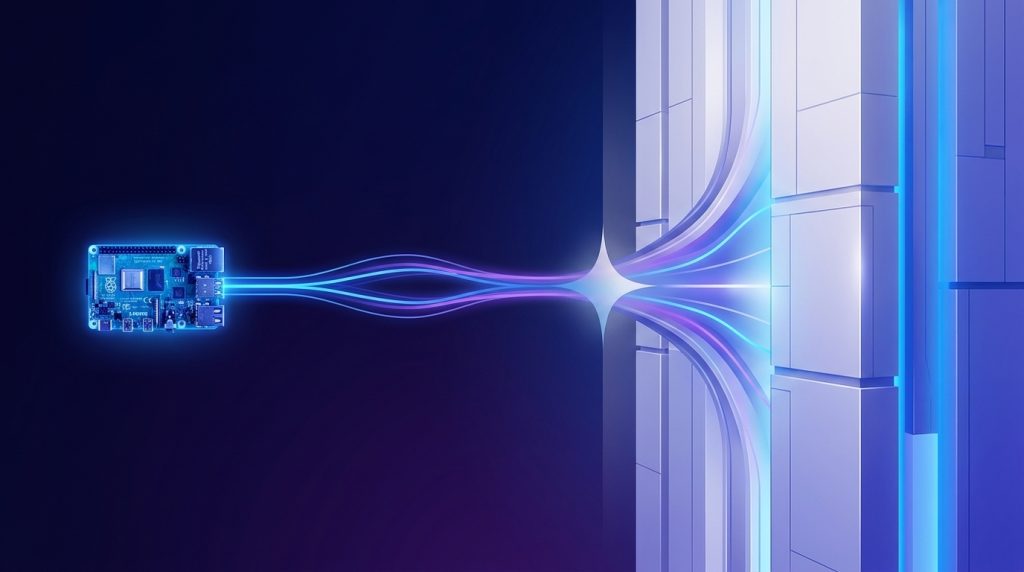

Edge deployment: Raspberry Pi and IoT

Google’s LiteRT-LM framework enables Gemma 4 E2B and E4B deployment on Raspberry Pi 5 with minimal overhead. The framework supports 2-bit and 4-bit quantization along with memory-mapped per-layer embeddings, allowing E2B to run using under 1.5GB memory. LiteRT-LM also supports constrained decoding for structured outputs—critical for reliable tool-calling implementations.
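The memory figure above can be sanity-checked with simple arithmetic. The sketch below is a rough estimate only: it assumes that just the 2.3B effective parameters are resident (with the per-layer embeddings memory-mapped), uses nominal bit widths, and ignores the KV cache and runtime overhead. Real quantization formats typically use somewhat more than their nominal bits per weight, and the function name is illustrative.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes) for a quantized model."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# E2B effective parameters (2.3B, from the table above) at nominal 4-bit and 2-bit widths.
for bits in (4, 2):
    print(f"E2B at {bits}-bit: roughly {weight_memory_gb(2.3, bits):.2f} GB of weights")
```

At 4 bits this lands a little over 1.1 GB, which is in line with the "under 1.5GB" figure once some runtime overhead is added.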

On a Raspberry Pi 5 with 8GB RAM, Q4_K_M quantization of E2B achieves approximately 7.6 decode tokens per second on CPU. The 16GB configuration can comfortably run E4B at roughly 4-5 tokens per second while retaining audio processing capabilities. The framework also supports the Arduino VENTUNO Q, powered by Qualcomm Dragonwing IQ8, where NPU acceleration delivers 3,700 prefill and 31 decode tokens per second.
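To see what these throughput numbers mean for an agent's response time, here is a back-of-the-envelope sketch. The prompt and output lengths (1,000 and 200 tokens) are illustrative assumptions, and because the source gives no prefill rate for the Raspberry Pi 5, that case counts decode time only.

```python
from typing import Optional


def response_latency_s(
    output_tokens: int,
    decode_tps: float,
    prompt_tokens: int = 0,
    prefill_tps: Optional[float] = None,
) -> float:
    """Estimated seconds to process the prompt and generate the output."""
    prefill_time = prompt_tokens / prefill_tps if prefill_tps else 0.0
    return prefill_time + output_tokens / decode_tps


# Dragonwing IQ8 NPU: 3,700 prefill and 31 decode tokens per second.
npu = response_latency_s(200, 31, prompt_tokens=1000, prefill_tps=3700)
# Raspberry Pi 5 CPU with E2B Q4_K_M: 7.6 decode tokens per second.
pi = response_latency_s(200, 7.6)

print(f"Dragonwing IQ8 NPU: {npu:.1f} s")            # about 6.7 s
print(f"Raspberry Pi 5 (decode only): {pi:.1f} s")   # about 26.3 s
```

For multi-step agents the decode rate dominates, since every tool-calling turn adds another generation pass.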

Consumer hardware: desktop and mobile

The 26B A4B MoE model is the sweet spot for consumer GPU deployment. Running on consumer hardware such as an RTX 4070 (12GB) or RTX 4090 (24GB), it activates only 3.8B parameters per token while drawing on the full 25.2B-parameter pool for knowledge. This architecture scores 77.1% on LiveCodeBench v6 and 88.3% on AIME 2026, close to the 31B dense model's 80.0% and 89.2%, while requiring significantly less VRAM.

Mobile deployment benefits from the Google AI Edge Gallery application, available on iOS and Android, which manages on-device agent skills including Wikipedia querying, data visualization generation, and end-to-end application building through conversation. Android developers can access Android AICore Developer Preview for system-wide Gemma 4 integration.

Enterprise deployment: workstations and data centers

The 31B dense model targets enterprise environments with dedicated GPU resources. NVIDIA A100 or H100 deployments can leverage full precision for maximum accuracy, while desktop workstations with RTX 4090 cards can run quantized variants using frameworks like vLLM or Ollama. The 256K token context window enables processing extensive documents, codebases, or multi-turn agentic workflows without truncation.

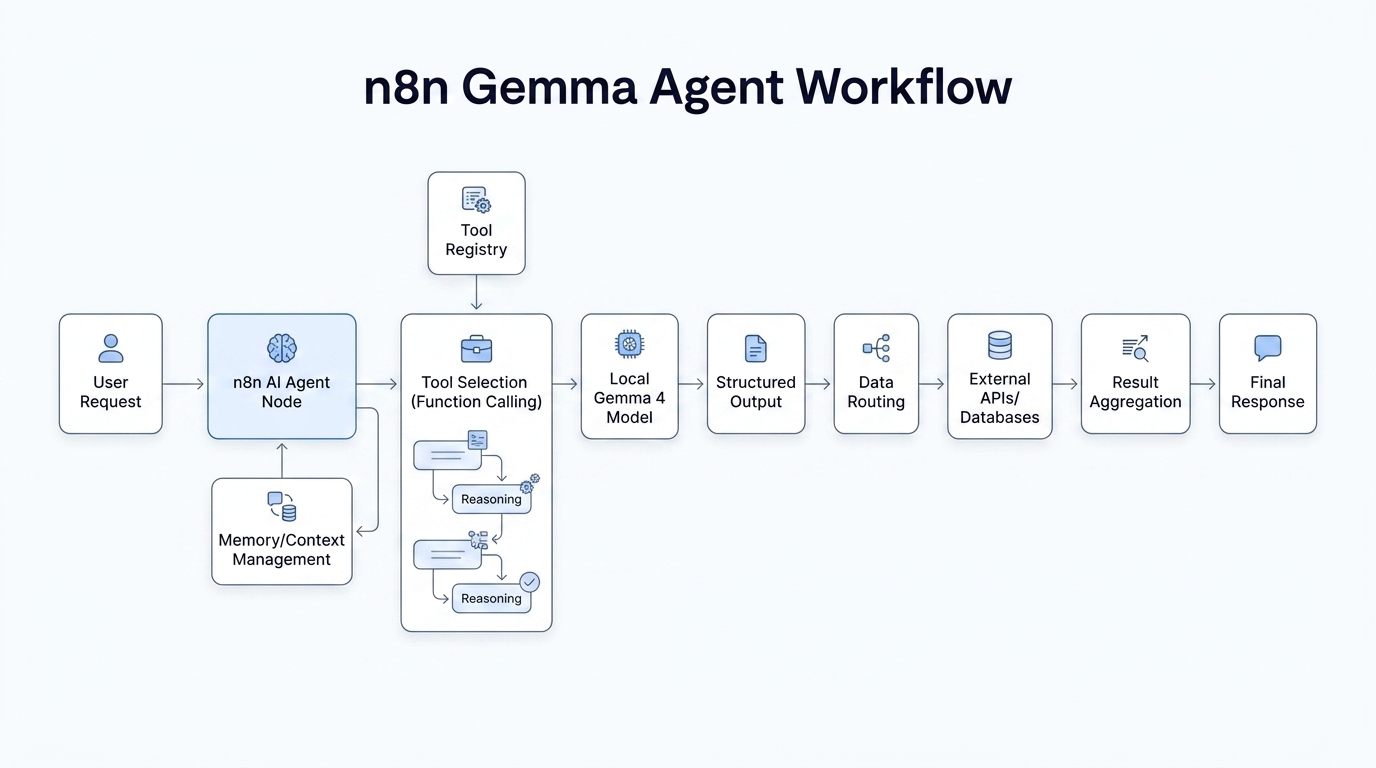

Building agentic workflows with n8n

Implementing Gemma 4 as a local tool-calling agent requires a robust orchestration layer to manage function calling, data routing, and memory persistence. n8n provides an ideal framework for this orchestration through its AI Agent node, which handles complex multi-step reasoning while maintaining connection to your local Gemma instance.

Setting up the n8n AI agent node

The n8n AI Agent node natively supports function calling, allowing you to define tool schemas that Gemma 4 can invoke during reasoning. When connecting to a local Gemma instance via Ollama or an OpenAI-compatible API wrapper, configure the model to use the system role with the <|think|> token to enable reasoning mode.
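Before wiring up n8n, it can help to confirm that the local endpoint responds. The following is a minimal sketch that assumes Ollama is serving its OpenAI-compatible API on the default port 11434. The model tag `gemma4:26b` is a hypothetical placeholder (check `ollama list` for the real tag), and whether the `<|think|>` token passes through the server's chat template unchanged is also an assumption.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/v1/chat/completions"  # Ollama's default port
MODEL_TAG = "gemma4:26b"  # hypothetical tag; substitute the one you pulled


def build_chat_request(
    user_text: str,
    system_text: str = "<|think|>You are a helpful agent.",
) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": MODEL_TAG,
        "messages": [
            {"role": "system", "content": system_text},
            {"role": "user", "content": user_text},
        ],
        "temperature": 1.0,
        "top_p": 0.95,
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the assistant's text reply."""
    with urllib.request.urlopen(request, timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# Usage, with a local server running:
#   print(send(build_chat_request("Summarize what a Mixture-of-Experts model is.")))
```

If this call succeeds, the same base URL and model tag can be entered in n8n's model credentials.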

Example n8n AI Agent node configuration for Gemma 4:

```json
{
  "options": {
    "systemMessage": "<|think|>You are an autonomous agent with access to Wikipedia search, database queries, and file system operations. Think step by step before taking actions.",
    "temperature": 1.0,
    "topP": 0.95,
    "topK": 64
  }
}
```

Gemma 4’s native function calling capability returns structured JSON that n8n can parse and route to appropriate downstream nodes. This eliminates the need for complex prompt engineering to extract tool invocations: the model understands when to invoke tools and formats responses accordingly.
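n8n performs this parsing and routing internally, but it is useful to see what the step amounts to. The sketch below assumes the model's reply follows the OpenAI-style `tool_calls` shape that OpenAI-compatible servers commonly return. The `search_wikipedia` tool is a hypothetical stub, and the exact response format of your local server should be verified.

```python
import json


def search_wikipedia(query: str) -> str:
    """Hypothetical stand-in; a real tool would call the Wikipedia API."""
    return f"(stub) top result for {query!r}"


TOOLS = {"search_wikipedia": search_wikipedia}


def dispatch_tool_calls(message: dict) -> list[dict]:
    """Run each requested tool and return tool-role messages for the next turn."""
    results = []
    for call in message.get("tool_calls") or []:
        name = call["function"]["name"]
        raw_args = call["function"]["arguments"]
        # Some servers send arguments as a JSON string, others as an object.
        args = json.loads(raw_args) if isinstance(raw_args, str) else raw_args
        handler = TOOLS.get(name)
        if handler is None:
            content = f"error: unknown tool {name!r}"
        else:
            content = handler(**args)
        results.append(
            {"role": "tool", "tool_call_id": call.get("id"), "content": content}
        )
    return results


example_message = {
    "role": "assistant",
    "tool_calls": [
        {
            "id": "call_1",
            "type": "function",
            "function": {
                "name": "search_wikipedia",
                "arguments": "{\"query\": \"Raspberry Pi\"}",
            },
        }
    ],
}
print(dispatch_tool_calls(example_message))
```

Returning an error message for unknown tool names, rather than raising, lets the model see the failure and recover on the next turn.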

Implementing tool registry and data routing

A production-grade Gemma 4 agent requires a structured tool registry within n8n. Define tools as HTTP Request nodes, Function nodes, or integrations with external services like PostgreSQL, REST APIs, or file systems. The AI Agent node evaluates the user’s intent, selects appropriate tools from the registry, and manages the conversation flow.
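Conceptually, a tool registry pairs each tool's schema (what the model sees) with a handler (what actually runs). The sketch below illustrates that idea outside n8n, using the OpenAI-style `tools` schema layout as an assumption. The class names and the `count_orders` stub are hypothetical, not part of n8n or Gemma 4.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    description: str
    parameters: dict  # JSON Schema describing the arguments
    handler: Callable[..., str]


class ToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        if tool.name in self._tools:
            raise ValueError(f"duplicate tool name: {tool.name}")
        self._tools[tool.name] = tool

    def schemas(self) -> list[dict]:
        """Tool definitions in the shape sent to the model with each request."""
        return [
            {
                "type": "function",
                "function": {
                    "name": t.name,
                    "description": t.description,
                    "parameters": t.parameters,
                },
            }
            for t in self._tools.values()
        ]

    def call(self, name: str, **kwargs) -> str:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].handler(**kwargs)


def count_orders(customer_id: int) -> str:
    """Hypothetical stub; a real handler would query PostgreSQL."""
    return f"customer {customer_id} has 0 orders (stub)"


registry = ToolRegistry()
registry.register(
    Tool(
        name="count_orders",
        description="Count the orders placed by a customer.",
        parameters={
            "type": "object",
            "properties": {"customer_id": {"type": "integer"}},
            "required": ["customer_id"],
        },
        handler=count_orders,
    )
)
```

In n8n, each tool node attached to the AI Agent plays the role of one `Tool` entry, with its name and description guiding the model's selection.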

For memory management across multi-turn conversations, use n8n’s built-in data persistence or connect to external vector databases like ChromaDB or Pinecone for long-term context. Gemma 4’s 256K context window (for larger models) allows substantial conversation history, but for truly persistent agent memory, store conversation summaries in a vector database and inject relevant context into each prompt.
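The store-then-inject pattern can be sketched without any external service. The example below is a toy stand-in, not ChromaDB or Pinecone: it scores stored summaries by bag-of-words cosine similarity instead of learned embeddings, and all class and function names are illustrative.

```python
import math
import re
from collections import Counter


def _vectorize(text: str) -> Counter:
    """Lowercased word counts; a crude substitute for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SummaryMemory:
    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, summary: str) -> None:
        self._items.append((summary, _vectorize(summary)))

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return up to k stored summaries that share vocabulary with the query."""
        q = _vectorize(query)
        scored = [(_cosine(q, vec), text) for text, vec in self._items]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for score, text in scored[:k] if score > 0]


def build_prompt(memory: SummaryMemory, user_message: str) -> str:
    """Prepend any relevant summaries to the user's message."""
    recalled = memory.recall(user_message)
    if not recalled:
        return f"User: {user_message}"
    context = "\n".join(f"- {s}" for s in recalled)
    return f"Relevant history:\n{context}\n\nUser: {user_message}"


memory = SummaryMemory()
memory.add("User prefers PostgreSQL for the orders database")
memory.add("The Raspberry Pi runs a weather station")
print(build_prompt(memory, "Which database holds orders?"))
```

Swapping the two helper functions for a real embedding model and vector store keeps the same add, recall, and inject flow.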

Handling multimodal inputs

For edge deployments using E2B or E4B, n8n workflows can integrate audio and vision capabilities. Configure the AI Agent node to process Base64-encoded images or audio files, passing them to Gemma 4’s multimodal endpoints. The E2B and E4B models natively handle audio tokens without requiring separate ASR preprocessing, simplifying pipeline complexity.
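As an illustration of the Base64 step, the sketch below packs image bytes into an OpenAI-style multimodal chat message using a data URI. Whether a given local server accepts this exact content-part layout for Gemma 4 is an assumption to verify, and audio payload formats vary between servers, so they are not shown.

```python
import base64


def image_message(image_bytes: bytes, prompt: str, mime_type: str = "image/png") -> dict:
    """Build a user message carrying text plus one Base64-encoded image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {
                "type": "image_url",
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }


# Usage: read a file from disk and attach it to the conversation.
#   with open("photo.png", "rb") as f:
#       msg = image_message(f.read(), "Describe this image.")
```

In an n8n workflow the same encoding would usually happen in a node upstream of the AI Agent, with the resulting string passed along as a field.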

Performance benchmarks and optimization

Choosing the appropriate Gemma 4 variant requires balancing capability against latency constraints. The benchmarks below illustrate performance trade-offs across model sizes:

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 4 E2B |
|---|---|---|---|---|
| MMLU Pro | 85.2% | 82.6% | 69.4% | 60.0% |
| AIME 2026 | 89.2% | 88.3% | 42.5% | 37.5% |
| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 44.0% |
| GPQA Diamond | 84.3% | 82.3% | 58.6% | 43.4% |

For agentic workflows requiring complex reasoning and tool chaining, the 26B A4B model offers the best balance of capability and hardware cost for local deployment. If your application primarily handles conversational agents with occasional tool use, the E4B model running on consumer hardware delivers 69.4% MMLU Pro accuracy, surpassing many previous-generation 27B models.
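One simple way to apply the table is to pick the smallest variant that clears the scores your workload needs. The helper below is a sketch of that idea: the scores are copied from the table above, while the thresholds in the usage lines are illustrative and the function name is hypothetical.

```python
# Scores (percent) from the benchmark table, ordered from smallest to largest model.
BENCHMARKS = {
    "E2B": {"MMLU Pro": 60.0, "AIME 2026": 37.5, "LiveCodeBench v6": 44.0, "GPQA Diamond": 43.4},
    "E4B": {"MMLU Pro": 69.4, "AIME 2026": 42.5, "LiveCodeBench v6": 52.0, "GPQA Diamond": 58.6},
    "26B A4B": {"MMLU Pro": 82.6, "AIME 2026": 88.3, "LiveCodeBench v6": 77.1, "GPQA Diamond": 82.3},
    "31B": {"MMLU Pro": 85.2, "AIME 2026": 89.2, "LiveCodeBench v6": 80.0, "GPQA Diamond": 84.3},
}


def smallest_model_meeting(requirements: dict):
    """Return the first (smallest) model whose scores meet every threshold, or None."""
    for name, scores in BENCHMARKS.items():
        if all(scores[bench] >= minimum for bench, minimum in requirements.items()):
            return name
    return None


print(smallest_model_meeting({"MMLU Pro": 65.0}))          # E4B
print(smallest_model_meeting({"LiveCodeBench v6": 75.0}))  # 26B A4B
```

Benchmark thresholds are only a proxy, so it is worth validating the chosen variant on a sample of your own agent tasks before committing to hardware.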

Conclusion and next steps

Gemma 4 democratizes agentic AI by delivering frontier capabilities across the entire hardware spectrum. Whether deploying a privacy-preserving assistant on Raspberry Pi, a coding agent on a consumer workstation, or an enterprise knowledge worker in a data center, the unified architecture ensures consistent behavior and tool compatibility.

To begin your deployment, prototype with the Google AI Edge Gallery on mobile, then move to LiteRT-LM for production edge applications such as Raspberry Pi. For n8n orchestration, configure the AI Agent node with your local Ollama or vLLM endpoint, define your tool registry, and enable thinking mode for complex multi-step workflows. Local, sovereign AI agents are now practical with no cloud dependency required.
