The business case for Claude Code in 2026 has shifted from experimental curiosity to measurable return on investment (ROI). As of April 2026, enterprises such as Rakuten, Ramp, and Wiz have published data-backed results that redefine developer productivity. From cutting feature delivery cycles by 79% to migrating a 50,000-line library in about 20 hours, these organizations show that Claude Code is not just an assistant but an autonomous force multiplier. This article breaks down these landmark case studies and provides a blueprint for small and medium-sized businesses (SMBs) to replicate these enterprise-grade efficiencies using automated pipelines and n8n.

Rakuten: Shrinking feature delivery from 24 days to 5

Rakuten, the global e-commerce and fintech leader, faced a common enterprise bottleneck: the “long tail” of feature delivery. Before integrating Anthropic’s Claude Code v2.1.101, the average time to move a feature from concept to production was 24 working days. By running parallel Claude Code sessions on the latest Claude Opus 4.7 model (released April 18, 2026), Rakuten reduced that cycle to just 5 days.
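The 79% figure follows directly from the two cycle times. It measures the reduction in elapsed time. Expressed as a speedup, the same change means features reach production about 4.8 times faster:

$$
\text{time reduction} = \frac{24 - 5}{24} \approx 0.79, \qquad \text{speedup} = \frac{24}{5} = 4.8
$$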

The transformation was driven by “Parallel Agentic Workflows.” Instead of a single developer working on a single task, Rakuten engineers now deploy up to 24 parallel Claude sessions within their monorepo. Each agent simultaneously handles a specific component: writing unit tests, mocking APIs, or refactoring legacy modules. In one standout test, Claude Code worked autonomously for seven hours on a complex refactor of the vLLM library, achieving 99.9% numerical accuracy without manual intervention. For Rakuten, this equates to a 79% reduction in time-to-market, allowing them to ship major releases every two weeks rather than quarterly.
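The article does not describe Rakuten's orchestration code. The following is a minimal sketch of the fan-out pattern under two assumptions: Claude Code is installed, and each task already has its own git worktree (created with something like `git worktree add -b tests ../wt-tests`) so that sessions never edit the same checkout. Under those assumptions, the Python below launches several `claude -p` print-mode sessions concurrently and caps how many run at once. `AgentTask`, `run_parallel`, the worktree paths, and the prompts are all hypothetical.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentTask:
    name: str      # hypothetical label for the component, e.g. "unit-tests"
    worktree: str  # path to a git worktree reserved for this task
    prompt: str    # instruction handed to the Claude Code session


def build_command(task: AgentTask) -> list[str]:
    """Claude Code's print mode (-p) runs one prompt non-interactively and exits."""
    return ["claude", "-p", task.prompt, "--output-format", "json"]


async def subprocess_runner(cmd: list[str], cwd: str) -> tuple[int, str]:
    """Run one CLI session inside its own worktree and capture stdout."""
    proc = await asyncio.create_subprocess_exec(
        *cmd,
        cwd=cwd,
        stdout=asyncio.subprocess.PIPE,
        stderr=asyncio.subprocess.PIPE,
    )
    out, _err = await proc.communicate()
    return proc.returncode, out.decode("utf-8", errors="replace")


async def run_parallel(tasks, max_parallel=4, runner=subprocess_runner):
    """Fan tasks out to concurrent sessions; the semaphore caps how many run at once."""
    sem = asyncio.Semaphore(max_parallel)

    async def run_one(task: AgentTask):
        async with sem:
            return task.name, await runner(build_command(task), task.worktree)

    results = await asyncio.gather(*(run_one(t) for t in tasks))
    return dict(results)


if __name__ == "__main__":
    tasks = [
        AgentTask("unit-tests", "../wt-tests", "Write unit tests for the payments module."),
        AgentTask("api-mocks", "../wt-mocks", "Add mocks for the inventory API client."),
    ]
    for name, (code, output) in asyncio.run(run_parallel(tasks)).items():
        print(name, code, output[:200])
```

Passing `runner` in as a parameter keeps the orchestration testable without the CLI. The semaphore turns "up to 24 sessions" into a configurable ceiling rather than an unbounded burst of processes.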

Wiz: The 20-hour migration of a 50,000-line library

Legacy debt often paralyzes product roadmaps. Wiz, a leading cloud security platform, had a critical bottleneck: a 50,000-line Python library for PDF parsing that needed to be migrated to Go for performance and security reasons. Manual estimates put the project at 2–3 months of specialized engineering effort. Liron Levin, a software engineer at Wiz, completed the migration using Claude Code in roughly 20 hours—with only 10 hours of active development time.

The result was a 2x increase in PDF processing speed in production and a library roughly 63% smaller (18,413 lines of Go versus the original 50,000 lines of Python). Wiz achieved this through an iterative “Zero-Knowledge Debugging” loop. When 1% of files failed during production testing, Levin used Claude to generate diagnostic tools that reported only non-sensitive structural information, allowing the AI to fix edge cases without ever seeing proprietary customer data. This capability has now driven a 1.5x increase in merged pull requests across Wiz’s top 100 contributors.
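Wiz's actual diagnostic tools are not published here. The following is a minimal sketch of the idea, assuming the failing inputs are PDFs. The function reads raw bytes and reports only structural facts: the version header, the object count, the `%%EOF` markers, whether a classic cross-reference table is present, and counts of an allow-listed set of PDF name tokens. Because nothing else is reported, the output can be shared with Claude without exposing document text. `structural_fingerprint` and `ALLOWED_NAMES` are hypothetical. Regex scanning is a coarse stand-in for a real PDF parser and misses anything inside compressed object streams.

```python
import json
import re
from collections import Counter

# Only these PDF name tokens are reported; everything else (text, metadata
# values, custom names) is dropped, so document content never appears in the output.
ALLOWED_NAMES = {
    b"Type", b"Catalog", b"Pages", b"Page", b"Font", b"XObject", b"Annots",
    b"AcroForm", b"Filter", b"FlateDecode", b"DCTDecode", b"LZWDecode",
    b"ObjStm", b"XRef", b"Encrypt",
}

_HEADER = re.compile(rb"%PDF-(\d\.\d)")
_OBJ = re.compile(rb"\b\d+\s+\d+\s+obj\b")        # "N G obj" object headers
_NAME = re.compile(rb"/([A-Za-z][A-Za-z0-9]*)")   # PDF name tokens like /Type
_XREF_TABLE = re.compile(rb"(?:^|[\r\n])xref\s")  # classic table, not "startxref"


def structural_fingerprint(data: bytes) -> dict:
    """Summarize a PDF's structure without copying any of its text."""
    header = _HEADER.search(data[:1024])  # many readers tolerate bytes before the header
    names = Counter(
        m.group(1).decode("ascii")
        for m in _NAME.finditer(data)
        if m.group(1) in ALLOWED_NAMES
    )
    return {
        "version": header.group(1).decode("ascii") if header else None,
        "object_count": len(_OBJ.findall(data)),
        "eof_markers": data.count(b"%%EOF"),  # more than one often means incremental updates
        "has_xref_table": bool(_XREF_TABLE.search(data)),
        "name_counts": dict(sorted(names.items())),
    }


if __name__ == "__main__":
    import sys

    with open(sys.argv[1], "rb") as fh:
        print(json.dumps(structural_fingerprint(fh.read()), indent=2))
```

Run the script on the machine that holds the customer files and share only the JSON with the session. The allow-list is the privacy boundary, so widen it deliberately.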

Ramp: Slashing incident investigation by 80%

Financial operations platform Ramp has integrated Claude Code directly into its observability stack, connecting the AI agent to Datadog and Sentry via Model Context Protocol (MCP) servers. This integration allows Claude to autonomously aggregate logs, error reports, and system metrics the moment an incident is triggered. Early data shows that Ramp has reduced initial incident triage time by 80%, as on-call engineers no longer need to manually piece together data from fragmented sources.
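In Ramp's setup, the MCP servers handle the fetching. The step worth illustrating is what happens once the data arrives. This Python sketch assumes each source has already been pulled into a list of timestamped events; the `Event` type and the fetch step are hypothetical and do not reflect Datadog's or Sentry's APIs. It keeps only the events within a window around the incident and merges them into one chronological timeline, the single view an on-call engineer would otherwise assemble by hand.

```python
import heapq
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass(frozen=True, order=True)
class Event:
    ts: datetime                        # events are ordered by timestamp only
    source: str = field(compare=False)  # e.g. "logs", "errors", "metrics"
    summary: str = field(compare=False)


def build_incident_timeline(
    sources: dict[str, list[Event]],
    incident_at: datetime,
    window: timedelta = timedelta(minutes=15),
) -> list[Event]:
    """Keep events near the incident and merge every source into one ordered list."""
    start, end = incident_at - window, incident_at + window
    streams = [
        sorted(e for e in events if start <= e.ts <= end)
        for events in sources.values()
    ]
    return list(heapq.merge(*streams))


def render(timeline: list[Event]) -> str:
    """Plain-text digest that an agent or an on-call engineer can read top to bottom."""
    return "\n".join(f"{e.ts:%H:%M:%S} [{e.source}] {e.summary}" for e in timeline)
```

`heapq.merge` assumes each stream is already sorted, which is why each source is filtered and sorted first. Merging sorted streams avoids re-sorting the combined list.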

Furthermore, Ramp has democratized data access using Claude’s natural language reasoning. Non-engineering teams in sales, risk, and finance can now query their Snowflake data warehouse using natural language. Instead of waiting for a data engineer to write a complex SQL query, these teams get immediate insights, effectively turning every employee into a “builder.” In a single 30-day period, Ramp accepted over 1 million lines of AI-suggested code, maintaining a 50% weekly active usage rate across their entire engineering organization.
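The article does not detail how Ramp guards these queries. The sketch below assumes a hypothetical flow in which Claude drafts SQL from a plain-English question and a check runs before the statement reaches Snowflake. A keyword filter like this is only defense in depth. The real control should be a Snowflake role that is granted read-only (SELECT) privileges. `is_read_only` and its keyword list are illustrative, not part of any Snowflake API.

```python
import re

# Statement keywords that should never appear in an analyst's ad-hoc query.
_WRITE_KEYWORDS = re.compile(
    r"\b(insert|update|delete|merge|drop|alter|create|truncate|grant|revoke|copy|put|remove|call)\b",
    re.IGNORECASE,
)
_READ_START = re.compile(r"^\s*(select|with)\b", re.IGNORECASE)


def is_read_only(sql: str) -> bool:
    """Conservatively accept a single SELECT/WITH statement and nothing else."""
    statement = sql.strip().rstrip(";").strip()
    if ";" in statement:  # reject stacked statements
        return False
    if not _READ_START.match(statement):
        return False
    return _WRITE_KEYWORDS.search(statement) is None
```

The check errs on the side of rejection. A string literal that happens to contain "delete" is refused, which is an acceptable cost when the alternative is letting a write statement through.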

Claude enterprise ROI comparison: 2026 benchmarks

| Company | Primary Use Case | Previous Timeline | New Timeline / Result | Key Metric |
|---|---|---|---|---|
| Rakuten | Feature delivery | 24 working days | 5 days | 79% shorter time-to-market |
| Wiz | Codebase migration (Python to Go) | 2–3 months (estimate) | ~20 hours | 2x PDF processing speed |
| Ramp | Incident triage | Manual triage | 80% less triage time | 1M+ AI-suggested lines accepted in 30 days |

Translating enterprise success to SMB environments

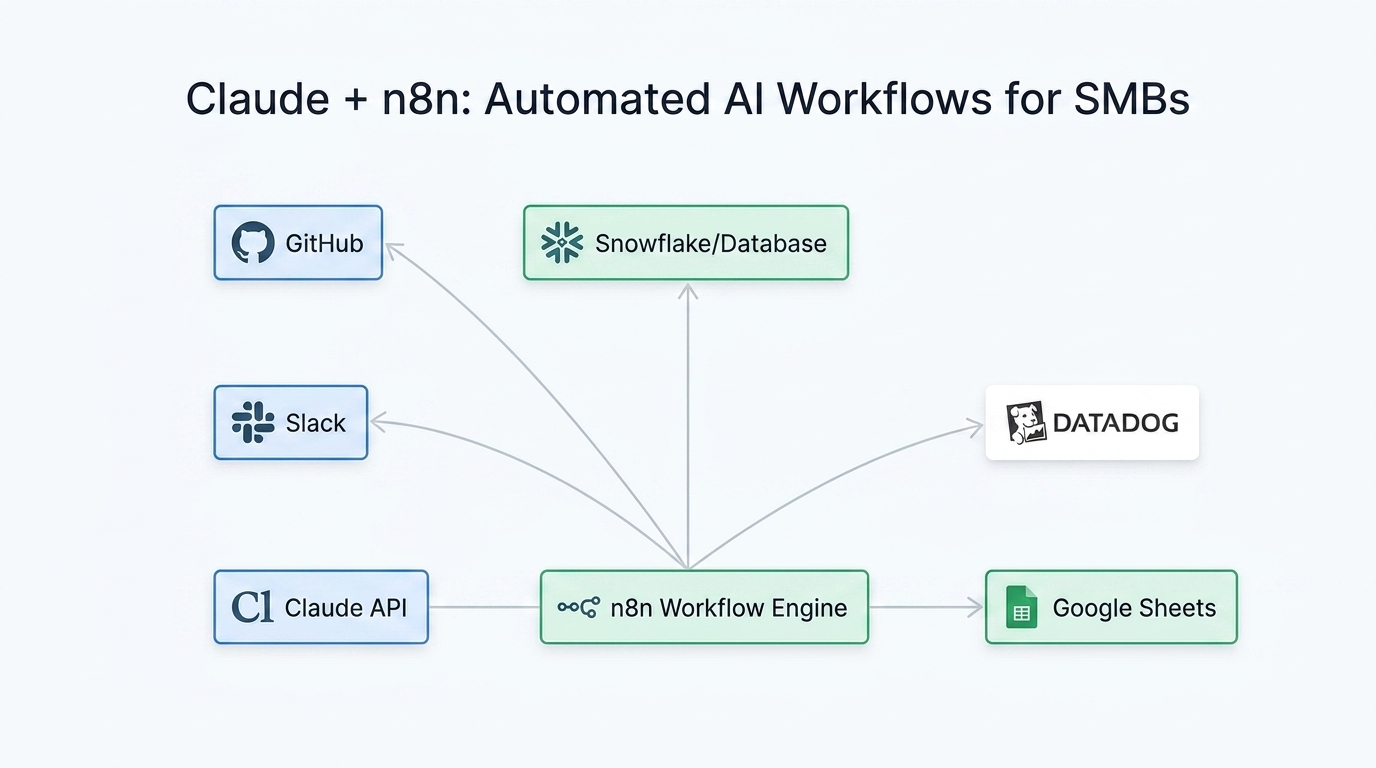

While SMBs may not have the massive monorepos of Rakuten or the engineering headcount of Ramp, the underlying mechanics of their ROI are accessible through n8n automation pipelines. SMBs often struggle with a lack of dedicated DevOps or data staff. By embedding Claude-powered workflows into n8n, smaller teams can achieve “Enterprise-Grade Autonomy” without building custom infrastructure.

- Automated Data Querying: Use n8n’s “AI Agent” node with a Claude Sonnet 4.7 model to let your sales team query HubSpot or Postgres databases via Slack.

- Autonomous Ticket Triage: Connect GitHub or Jira to n8n. When a bug report arrives, Claude can analyze the description, search the codebase, and suggest a fix—all before a human developer even opens the ticket.

- Document Modernization: Replicate the Wiz migration strategy by using n8n to batch-process legacy documentation or smaller scripts, modernizing them for 2026 standards in minutes.
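The ticket-triage step can be sketched as a single call to Anthropic's Messages API. n8n's HTTP Request node, or any webhook handler, could issue this call when a GitHub or Jira issue arrives. This Python sketch covers only the classification step, not the codebase search. `MODEL_ID`, the system prompt, and the JSON reply format are hypothetical placeholders.

```python
import json
import os
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
MODEL_ID = "your-claude-model-id"  # hypothetical placeholder; use a model your account offers

# Hypothetical triage instructions; adjust to your tracker's fields.
TRIAGE_SYSTEM = (
    "You triage incoming bug reports. Reply with JSON containing "
    "'severity' (low, medium, or high), 'component', and 'suggested_next_step'."
)


def build_triage_payload(title: str, body: str, max_tokens: int = 512) -> dict:
    """Request body for the Messages API: one user turn holding the bug report."""
    return {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "system": TRIAGE_SYSTEM,
        "messages": [{"role": "user", "content": f"Title: {title}\n\n{body}"}],
    }


def extract_text(response: dict) -> str:
    """The reply arrives as a list of content blocks; keep only the text ones."""
    return "".join(
        b["text"] for b in response.get("content", []) if b.get("type") == "text"
    )


def send(payload: dict, api_key: str) -> str:
    """POST the payload and return the model's text reply."""
    req = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return extract_text(json.load(resp))


if __name__ == "__main__":
    payload = build_triage_payload("Checkout returns 500", "Stack trace: KeyError 'sku' in cart.py")
    print(send(payload, os.environ["ANTHROPIC_API_KEY"]))
```

In n8n, the same request could map onto an HTTP Request node (the URL, the three headers, and the JSON body) fed by a GitHub or Jira trigger. Asking for a JSON reply keeps the result easy to route to a label or an assignee in the next node.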

Conclusion: The forward-looking path for AI ROI

The evidence from 2026 is clear: the most successful companies no longer treat Claude as a chatbot but as a core infrastructure component. Rakuten’s 5-day delivery cycle, Wiz’s 20-hour migration, and Ramp’s 80% cut in incident triage time are not outliers; they are the new standard for AI-native engineering. For SMBs, the path forward involves moving beyond manual prompting toward integrated, automated workflows. By adopting Parallel Agentic Workflows and leveraging platforms like n8n, smaller teams can bridge the resource gap and outpace larger, less agile competitors. The era of “measuring AI” is over; the era of “executing with AI” has begun. Start by identifying your longest “days-to-delivery” bottleneck and apply a Claude-powered automation loop to it today.
