Anthropic’s release of Claude Opus 4.7 on April 16, 2026, has been met with both excitement for its performance gains and caution regarding its operational costs. While the official per-token pricing remains unchanged from Opus 4.6—at $5 per million input tokens and $25 per million output tokens—developers are discovering that their actual bills are climbing. As of April 2026, early technical analyses indicate that the same tasks can cost up to 35% more on the new model. This discrepancy arises from a fundamental change in how the model processes text and a new “thinking” paradigm that significantly impacts token consumption.

The updated tokenizer: why the same text uses more tokens

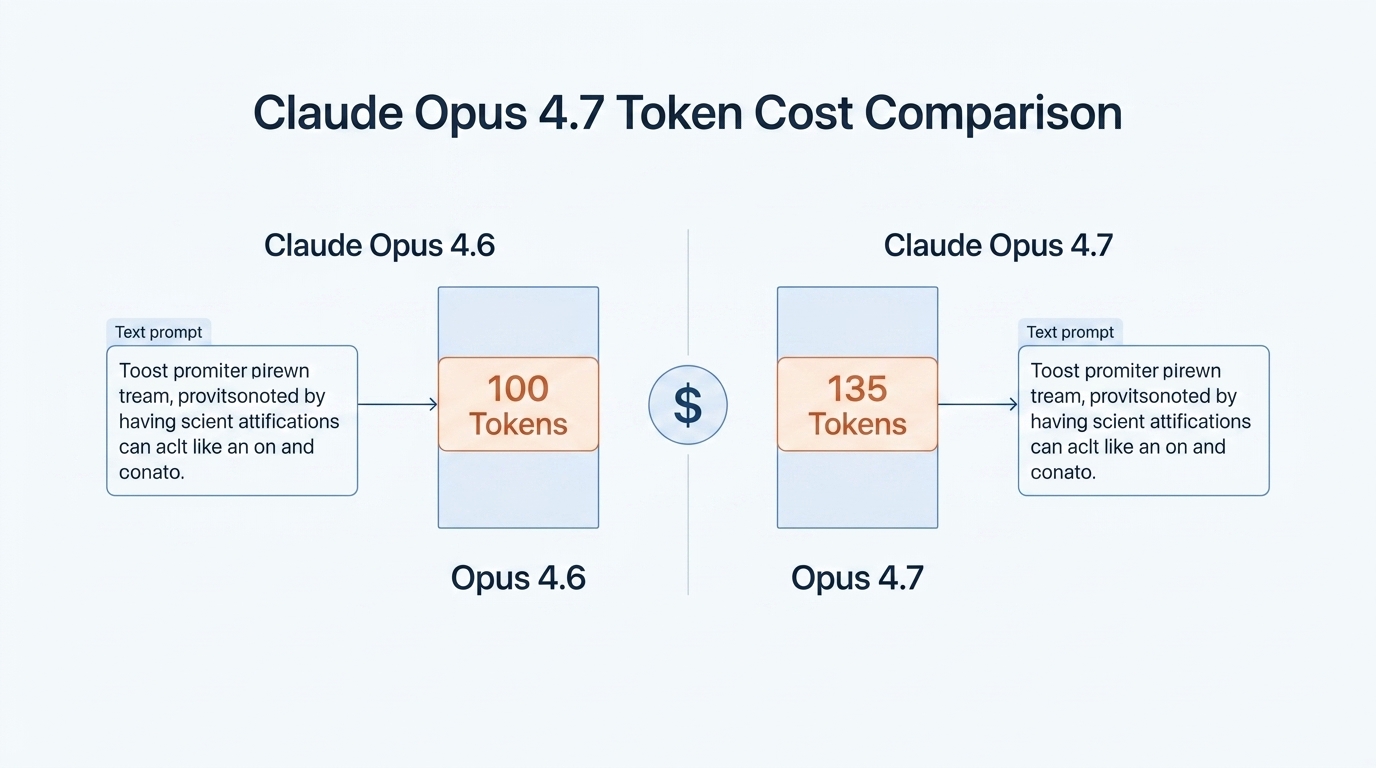

The primary driver of the “hidden” cost increase in Claude Opus 4.7 is the updated tokenizer. A tokenizer is the component that breaks down raw text into the numerical units LLMs understand. While the $5/$25 price tag is tied to the number of tokens, the ratio of text-to-tokens has shifted. According to Anthropic’s documentation, the new tokenizer is designed to improve performance in coding and structured data tasks, but it does so by creating more tokens for the same input strings.

For standard English prose, the impact is minimal. However, for codebases and structured data like JSON or XML, the token count can be 1.0 to 1.35 times what it was under Opus 4.6. In practical terms, a prompt that mapped to 1,000 tokens in Opus 4.6 might now require as many as 1,350 tokens in Opus 4.7. Because you are billed per token, the worst case translates to a direct 35% cost increase for the exact same input. Anthropic notes that 1 million tokens now cover roughly 555,000 words in Opus 4.7, compared to 750,000 words in previous versions.
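The arithmetic behind that figure can be checked with a short sketch. It uses only numbers quoted in this article (the $5 input price, the 1.35 worst-case multiplier, and the words-per-million-tokens figures); the function name `input_cost` is made up for illustration.

```python
# Price from the article: USD per million input tokens (same for Opus 4.6 and 4.7).
INPUT_PRICE_PER_MTOK = 5.00


def input_cost(tokens: int, price_per_mtok: float = INPUT_PRICE_PER_MTOK) -> float:
    """Return the USD cost of `tokens` input tokens."""
    return tokens / 1_000_000 * price_per_mtok


old_tokens = 1_000                     # prompt size under the Opus 4.6 tokenizer
new_tokens = round(old_tokens * 1.35)  # worst-case multiplier quoted above

old_cost = input_cost(old_tokens)
new_cost = input_cost(new_tokens)
increase = new_cost / old_cost - 1

# Cross-check: the words-per-million-tokens figures imply roughly the same ratio.
implied_ratio = 750_000 / 555_000

print(f"4.6: ${old_cost:.5f}  4.7: ${new_cost:.5f}  increase: {increase:.0%}")
print(f"implied tokenizer ratio: {implied_ratio:.2f}")
```

The per-request difference is tiny, but it scales linearly with traffic, so the same percentage applies to a monthly bill.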

Inference effort and the cost of ‘thinking harder’

Beyond input costs, Opus 4.7 introduces a new xhigh effort level and shifts how the model handles internal reasoning. The model is now more autonomous and exhibits a tendency to “think harder” at higher effort levels. While this leads to a 13% improvement in coding tasks and an 87.6% score on SWE-bench Verified, it results in a higher volume of generated tokens.

Claude Opus 4.7 calibrates its response length based on perceived task complexity rather than a fixed verbosity. For complex agentic workflows, the model will often produce more internal “thinking” tokens to visually verify its own outputs and double-check logic. While these thinking blocks are essential for the model’s increased accuracy, they directly contribute to the output token count. Teams migrating legacy applications where prompts were “fragile” or highly optimized for 4.6 should expect higher variability in output costs and are encouraged to re-baseline their spend.
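One way to re-baseline is to compare the distribution of output tokens per request before and after the migration, not just the average. The sketch below is a minimal illustration: the sample values are synthetic placeholders rather than measurements, and `percentile` and `summarize` are hypothetical helper names. In practice the numbers would come from the output token counts your own logs record for each response.

```python
import math

OUTPUT_PRICE_PER_MTOK = 25.00  # USD per million output tokens, from the article


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(output_tokens):
    """Summarize per-request output token counts and their mean cost in USD."""
    mean = sum(output_tokens) / len(output_tokens)
    return {
        "mean_tokens": mean,
        "p50_tokens": percentile(output_tokens, 50),
        "p99_tokens": percentile(output_tokens, 99),
        "mean_cost_usd": mean / 1_000_000 * OUTPUT_PRICE_PER_MTOK,
    }


# Synthetic placeholder samples (NOT real measurements), one entry per request.
baseline_run = [800, 950, 1_000, 1_100, 1_200]     # e.g. logged on the old model
candidate_run = [900, 1_200, 1_400, 1_900, 4_600]  # e.g. logged on the new model

for name, run in (("baseline", baseline_run), ("candidate", candidate_run)):
    print(name, summarize(run))
```

Looking at the tail (p99) alongside the median makes higher variability visible even when typical requests change little.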

Managing runaway costs with task budgets (public beta)

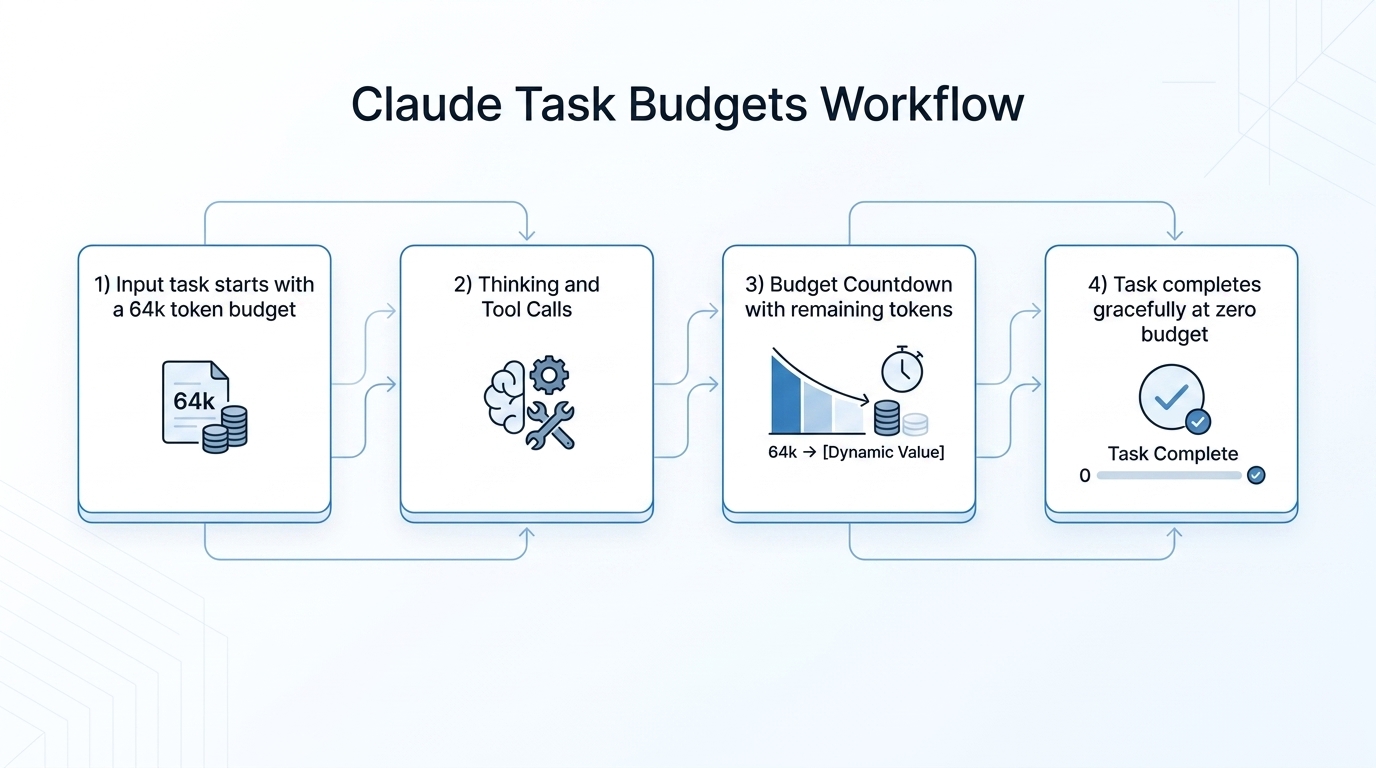

To help developers manage the potential for runaway costs in long-running agent pipelines, Anthropic has introduced a feature called “Task Budgets,” currently in public beta. A task budget is an advisory cap that spans a full agentic loop—potentially multiple requests and tool calls. Unlike the max_tokens parameter, which is a hard cap unknown to the model, the task budget provides Claude with a running countdown of its remaining allowance. This allows the model to prioritize its work and finish gracefully (e.g., summarizing results) as the budget depletes, rather than being cut off mid-sentence.
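To make the "running countdown" idea concrete, here is a purely conceptual, client-side sketch of a budget that spans several steps of a loop. It is not how Anthropic implements task budgets, and `BudgetTracker`, `record`, and `should_wrap_up` are hypothetical names. What exactly counts toward the real budget is not specified here, so the sketch simply takes a token count per step.

```python
from dataclasses import dataclass


@dataclass
class BudgetTracker:
    """Tracks a token allowance across multiple steps of an agentic loop."""

    total: int
    spent: int = 0

    def record(self, tokens_used: int) -> None:
        """Add the tokens consumed by one request or tool call."""
        self.spent += tokens_used

    @property
    def remaining(self) -> int:
        """Tokens left in the allowance, never negative."""
        return max(0, self.total - self.spent)

    def should_wrap_up(self, reserve: int) -> bool:
        """True once the remaining allowance is at or below the reserve kept for a summary."""
        return self.remaining <= reserve


tracker = BudgetTracker(total=64_000)
for step_tokens in (20_000, 25_000, 15_000):  # illustrative per-step usage
    tracker.record(step_tokens)
    if tracker.should_wrap_up(reserve=8_000):
        print(f"wrap up now, {tracker.remaining} tokens left")
        break
    print(f"continue, {tracker.remaining} tokens left")
```

The contrast with a hard cap is that the loop learns it is running low while there is still room to finish cleanly, instead of being truncated at the limit.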

For SMBs and developers using tools like n8n or LangChain for budget-sensitive automation, configuring these budgets is no longer optional. To implement a task budget, you must set the task-budgets-2026-03-13 beta header. The following example demonstrates how to set a budget using the Python SDK:

```python
# Claude Opus 4.7 Task Budget Configuration
import anthropic

# Reads the API key from the ANTHROPIC_API_KEY environment variable.
client = anthropic.Anthropic()

response = client.beta.messages.create(
    model="claude-opus-4-7",
    max_tokens=128000,
    output_config={
        "effort": "high",
        "task_budget": {"type": "tokens", "total": 64000},
    },
    messages=[
        {"role": "user", "content": "Review the codebase and propose a refactor plan."}
    ],
    betas=["task-budgets-2026-03-13"],
)
```

Strategic migration: a comparison of Claude models

As of April 2026, the Claude lineup offers a clear trade-off between intelligence and cost efficiency. While Opus 4.7 is the flagship for reasoning, Sonnet 4.6 and Haiku 4.5 remain viable for less complex tasks. Below is a comparison of the current model family to help teams determine where the upgrade cost is justified.

| Model | Input Price (per 1M tokens) | Output Price (per 1M tokens) | Token Density (Relative) | Primary Use Case |
|---|---|---|---|---|
| Claude Opus 4.7 | $5.00 | $25.00 | 1.0–1.35x | Complex agentic work, high-res vision |
| Claude Opus 4.6 | $5.00 | $25.00 | 1.0x (Baseline) | Legacy logic, balanced reasoning |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 1.0x | Scaled automation, knowledge work |
| Claude Haiku 4.5 | $1.00 | $5.00 | 1.0x | Near-instant responses, data extraction |
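The table can be turned into a rough per-workload estimate. The sketch below assumes, for illustration only, that the density multiplier applies equally to input and output tokens and that Opus 4.7 sits at its worst case of 1.35x. The workload size and the `workload_cost` helper are hypothetical.

```python
# (input USD per 1M tokens, output USD per 1M tokens, density multiplier) from the table.
# Opus 4.7 uses the worst-case 1.35x figure; real workloads may land anywhere from 1.0x.
MODELS = {
    "Claude Opus 4.7": (5.00, 25.00, 1.35),
    "Claude Opus 4.6": (5.00, 25.00, 1.00),
    "Claude Sonnet 4.6": (3.00, 15.00, 1.00),
    "Claude Haiku 4.5": (1.00, 5.00, 1.00),
}


def workload_cost(input_tokens, output_tokens, in_price, out_price, density=1.0):
    """USD cost of a workload measured in baseline (Opus 4.6) tokens.

    Assumption: the density multiplier scales input and output tokens alike.
    """
    scaled_in = input_tokens * density
    scaled_out = output_tokens * density
    return (scaled_in * in_price + scaled_out * out_price) / 1_000_000


# Hypothetical workload: 1M input tokens and 200K output tokens at baseline density.
costs = {
    name: workload_cost(1_000_000, 200_000, in_price, out_price, density)
    for name, (in_price, out_price, density) in MODELS.items()
}

for name, usd in costs.items():
    print(f"{name:<18} ${usd:.2f}")
```

This only captures the tokenizer effect. Extra reasoning tokens at higher effort levels would add to the output side and are not modeled here.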

Recommendations for cost optimization

Transitioning to Claude Opus 4.7 requires more than just a model-ID change. To keep bills predictable, engineering teams should adopt the following strategies:

- Measure token density: Use the `/v1/messages/count_tokens` endpoint to compare token usage between 4.6 and 4.7 on your actual production traffic. Do not rely on word counts.
- Leverage prompt caching: Opus 4.7 maintains support for prompt caching, which can offer up to 90% savings on repeat inputs. Ensure large context blocks are cached to offset the tokenizer increase.
- Tune effort levels: Do not default all tasks to `xhigh`. Start with `high` for most sensitive cases and reserve `xhigh` only for the most challenging software engineering or vision tasks.
- Implement task budgets: For autonomous agents, always set a `task_budget` that aligns with your p99 token spend distribution to prevent outliers from inflating your bill.
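The first recommendation can be sketched with the Python SDK's `client.messages.count_tokens` method. The helper name `token_density_ratio` is hypothetical, and the model ID `claude-opus-4-6` is an assumption (only `claude-opus-4-7` appears in this article), so check both IDs against your account before relying on the result.

```python
def token_density_ratio(
    client,
    text: str,
    old_model: str = "claude-opus-4-6",  # assumed ID, not confirmed by the article
    new_model: str = "claude-opus-4-7",
) -> float:
    """Return new-model tokens divided by old-model tokens for the same text.

    `client` is expected to be an `anthropic.Anthropic()` instance (or any
    object exposing the same `messages.count_tokens` method).
    """

    def count(model: str) -> int:
        result = client.messages.count_tokens(
            model=model,
            messages=[{"role": "user", "content": text}],
        )
        return result.input_tokens

    old_tokens = count(old_model)
    new_tokens = count(new_model)
    if old_tokens == 0:
        raise ValueError("old model reported zero tokens")
    return new_tokens / old_tokens
```

Called as `token_density_ratio(anthropic.Anthropic(), sample_prompt)` over a representative sample of production prompts, this gives a per-prompt ratio you can average or inspect by content type (prose, code, JSON).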

Conclusion

The upgrade to Claude Opus 4.7 represents a significant leap in AI capability, but it comes with financial implications that go beyond the sticker price. An effective cost increase of up to 35%, driven by the new tokenizer and compounded by the model’s more intensive reasoning steps, can catch unprepared teams by surprise. However, by understanding the mechanics of token density and utilizing the new task budgets feature, developers can harness Opus 4.7’s power while maintaining strict fiscal control. As we move further into 2026, the “budget-aware agent” is becoming the industry standard, and Opus 4.7 provides the necessary tools for this new era of AI deployment.
