As of late April 2026, the artificial intelligence landscape has reached a fever pitch. With Anthropic’s recent release of Claude Opus 4.7 on April 16, the “throne” of frontier models has shifted once again. For small and medium businesses (SMBs), this is more than a technical curiosity—it is a critical decision point for operational efficiency. The choice between Claude Opus 4.7, OpenAI’s GPT-5.4, and Google’s Gemini 3.1 Pro now involves balancing extreme reasoning capabilities against significant cost variations and specialized features like massive context windows or multilingual expertise. This guide breaks down the performance metrics and strategic considerations necessary to navigate these flagship models in 2026.

Claude Opus 4.7: The new king of agentic reasoning

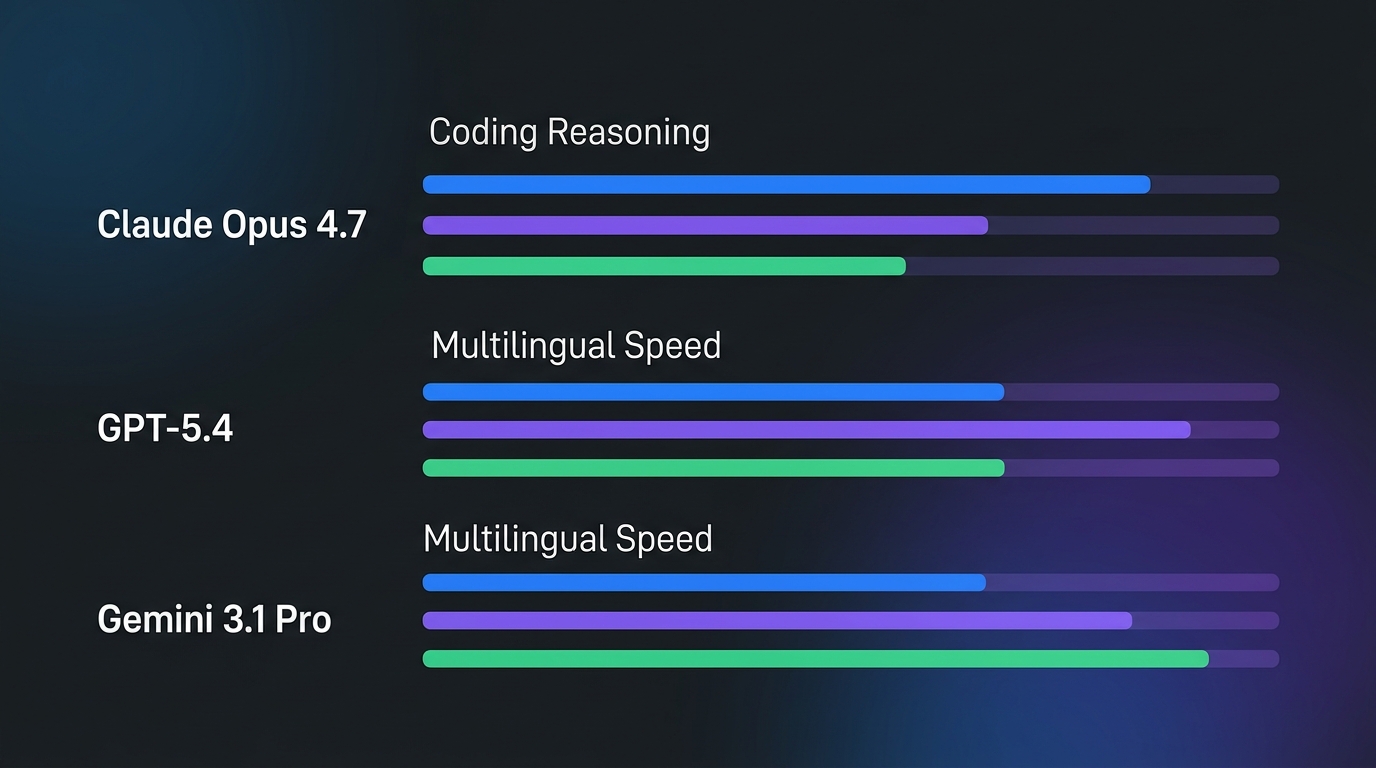

Anthropic has reclaimed the lead in technical benchmarks with Claude Opus 4.7, specifically targeting “agentic” tasks—scenarios where the AI doesn’t just answer a question but executes a multi-step workflow. For SMBs focused on software development, automated QA, or complex data reconciliation, the numbers are hard to ignore. Opus 4.7 currently dominates the 2026 SWE-bench leaderboards, a gold standard for evaluating how well an AI can solve real-world GitHub issues.

- SWE-bench Verified: 87.6% (Top of field)

- SWE-bench Pro: 64.3% (Defining the current state-of-the-art)

- Agentic Focus: Improved tool-use stability and “self-correction” loops that reduce human intervention by 30% compared to Opus 4.0.
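The article does not describe how Opus 4.7's self-correction works internally. At the application level, the same idea is a simple loop: generate, check, feed the error back, retry, and hand off to a person only when the retries run out. The sketch below uses a hypothetical `generate` callable that wraps a model call and a hypothetical `validate` callable (for example, a test runner) that returns an error message or `None`.

```python
from typing import Callable, Optional


def self_correcting_run(
    generate: Callable[[str, Optional[str]], str],
    validate: Callable[[str], Optional[str]],
    task: str,
    max_attempts: int = 3,
) -> tuple[Optional[str], int]:
    """Generate, validate, and retry with the error fed back to the generator.

    Returns (result, attempts_used). A result of None means every attempt
    failed validation, which is where a human would need to step in.
    """
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        candidate = generate(task, feedback)
        error = validate(candidate)
        if error is None:
            return candidate, attempt
        feedback = error  # the next attempt sees what went wrong
    return None, max_attempts


# Hypothetical stand-ins: a "model" that is wrong until it gets feedback,
# and a validator playing the role of a test suite.
def fake_model(task: str, feedback: Optional[str]) -> str:
    return "4" if feedback else "5"


def check_sum(answer: str) -> Optional[str]:
    return None if answer == "4" else f"expected 4, got {answer}"


result, attempts = self_correcting_run(fake_model, check_sum, "What is 2 + 2?")
print(result, attempts)  # 4 2
```

Each retry that succeeds without escalation is one less human intervention. Capping `max_attempts` keeps a stuck task from burning tokens indefinitely.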

While these benchmarks suggest Opus 4.7 is the most capable “brain” for technical logic, that power comes at a premium. Anthropic has kept Opus in its top pricing tier, positioning it for high-value reasoning rather than high-volume chat.

GPT-5.4 vs. Gemini 3.1 Pro: Versatility vs. context

OpenAI’s GPT-5.4 remains the “all-rounder” of the group. Although it narrowly trails Claude on coding logic, it excels at multimodal processing, specifically vision-to-action tasks. For an SMB, this means GPT-5.4 is often better at interpreting messy document scans or navigating complex web UIs to pull data. Its latency is also notably lower, making it better suited to customer-facing applications where a five-second wait for a response is unacceptable.

Gemini 3.1 Pro, on the other hand, has carved out a niche in “Infinite Context” and multilingualism. With a context window that now comfortably handles up to 5 million tokens, Gemini lets businesses load their entire company wiki, several years of financial reports, and their codebase into a single prompt. For international SMBs, Gemini 3.1 Pro’s performance in non-English reasoning remains 15–20% higher than that of Claude or GPT, making it the natural default for global operations.
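Before loading a whole wiki into one prompt, it helps to estimate whether it will fit. The sketch below assumes the common rule of thumb of roughly four characters per token for English text (non-English text often produces more tokens per character, so the estimate can run low) and uses the 5-million-token figure cited above. The `reserve_for_output` headroom is an arbitrary placeholder, not a documented limit.

```python
# Rough rule of thumb for English prose; actual tokenizers differ by model
# and language, so treat the result as an estimate, not a guarantee.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(
    documents: list[str],
    context_limit: int,
    reserve_for_output: int = 50_000,  # hypothetical headroom for the answer
) -> tuple[bool, int]:
    """Return (fits, estimated_input_tokens) for a batch of documents."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total + reserve_for_output <= context_limit, total


# Stand-ins for a wiki export and a codebase dump (8M and 4M characters).
docs = ["a" * 8_000_000, "b" * 4_000_000]
print(fits_in_context(docs, context_limit=5_000_000))  # (True, 3000000)
print(fits_in_context(docs, context_limit=1_000_000))  # (False, 3000000)
```

For a real budget, count tokens with the provider's own tokenizer or token-counting endpoint rather than a character heuristic.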

The pricing trade-off: Input vs. output costs

In 2026, token efficiency is the primary driver of AI return on investment. Claude Opus 4.7 is significantly more expensive: at the list prices below, it costs two to two-and-a-half times as much per token as GPT-5.4 and four to five times as much as Gemini 3.1 Pro. For an SMB running millions of queries a month, this price gap can be the difference between a profitable automation and unsustainable overhead.

| Model | Input Cost (USD per 1M tokens) | Output Cost (USD per 1M tokens) | Primary Strength |
|---|---|---|---|
| Claude Opus 4.7 | $5.00 | $25.00 | Coding & Logic |
| GPT-5.4 | $2.50 | $10.00 | Vision & Speed |
| Gemini 3.1 Pro | $1.25 | $5.00 | Context & Multilingual |

The pricing suggests a “tiered” strategy: Use Opus 4.7 for your most difficult 5% of tasks (like writing new feature code), GPT-5.4 for general reasoning, and Gemini for data extraction from massive document sets where token volume is high but reasoning complexity is moderate.
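To see how the price gap compounds at volume, the sketch below multiplies the list prices from the table above by a hypothetical workload and compares single-model costs with the tiered split just described. The query volume, tokens per query, and the 35/60 split between GPT-5.4 and Gemini 3.1 Pro are assumptions for illustration; only the 5% Opus share comes from the text.

```python
# List prices from the table above, in USD per 1M tokens: (input, output).
PRICES = {
    "Claude Opus 4.7": (5.00, 25.00),
    "GPT-5.4": (2.50, 10.00),
    "Gemini 3.1 Pro": (1.25, 5.00),
}


def monthly_cost(model: str, queries: int, in_tokens: int, out_tokens: int) -> float:
    """Cost of `queries` requests that each use in_tokens input and out_tokens output."""
    in_price, out_price = PRICES[model]
    return queries * (in_tokens * in_price + out_tokens * out_price) / 1_000_000


def tiered_cost(mix_percent: dict[str, int], queries: int, in_tokens: int, out_tokens: int) -> float:
    """Blended cost when each model handles a whole-number percentage of the queries."""
    if sum(mix_percent.values()) != 100:
        raise ValueError("mix percentages must add up to 100")
    return sum(
        monthly_cost(model, queries, in_tokens, out_tokens) * pct / 100
        for model, pct in mix_percent.items()
    )


# Hypothetical workload: 1M queries a month, 1,500 input + 500 output tokens each.
volume = dict(queries=1_000_000, in_tokens=1_500, out_tokens=500)
for model in PRICES:
    print(f"{model}: ${monthly_cost(model, **volume):,.2f}")
# Claude Opus 4.7: $20,000.00
# GPT-5.4: $8,750.00
# Gemini 3.1 Pro: $4,375.00

# Hypothetical split: 5% hardest tasks to Opus, 35% general, 60% bulk extraction.
mix = {"Claude Opus 4.7": 5, "GPT-5.4": 35, "Gemini 3.1 Pro": 60}
print(f"Tiered mix: ${tiered_cost(mix, **volume):,.2f}")  # Tiered mix: $6,687.50
```

Under these assumptions, sending only the hardest 5% of traffic to Opus 4.7 costs about a third of running everything on it, and less than running everything on GPT-5.4.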

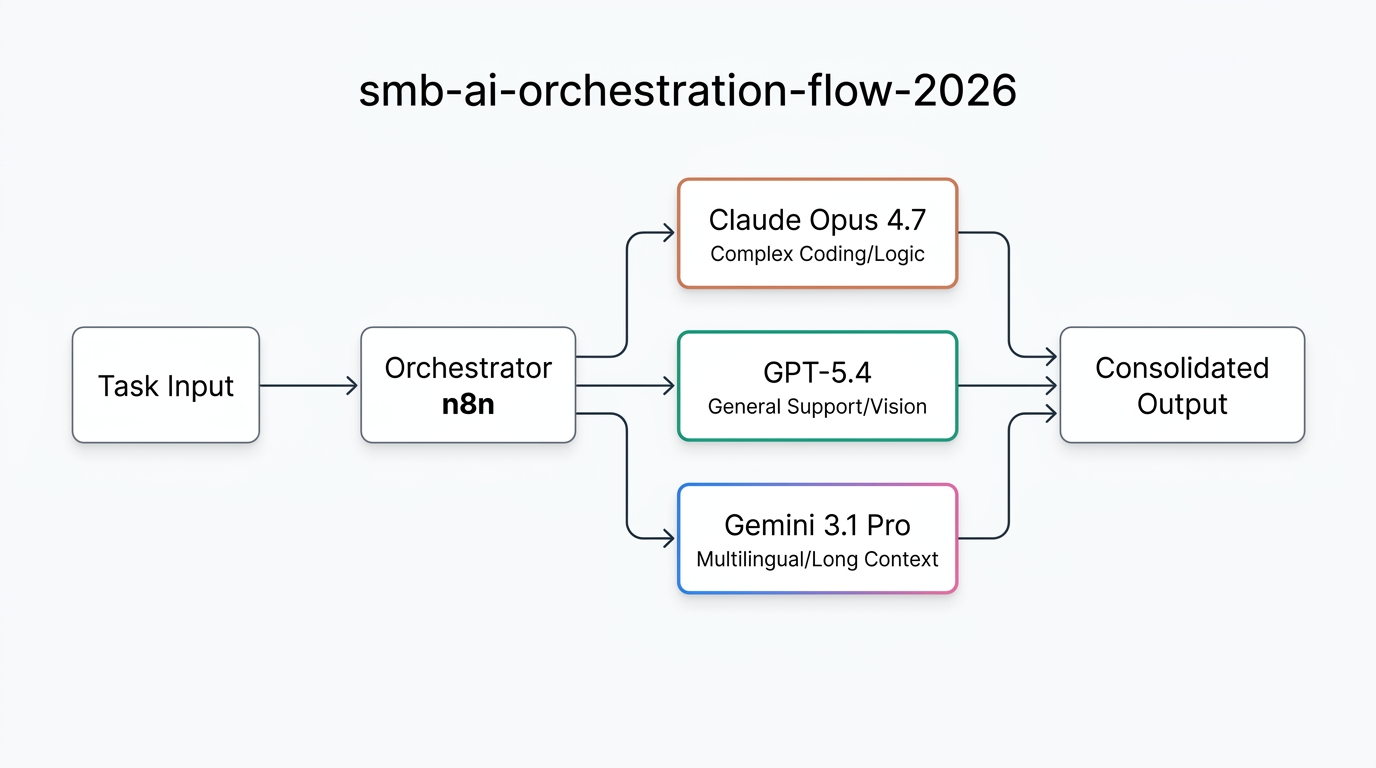

Orchestrating the multi-model SMB workflow

Because no single model is the most cost-effective at everything, 2026 has become the year of the “Orchestrator.” Rather than choosing one model and sticking with it, savvy SMBs are using automation platforms like n8n to route tasks dynamically. This approach, often managed by specialized n8n automation partners, lets a business reap the benefits of Opus 4.7’s reasoning without paying its premium on simple tasks.

In a typical n8n workflow, a cheaper model (like GPT-5.4 mini or Gemini Flash) first analyzes the task. If it identifies the task as “highly complex logic,” the workflow routes it to Claude Opus 4.7; if it is high-volume data extraction, it goes to Gemini 3.1 Pro. This routing logic can reduce AI costs by up to 60% while reserving the most capable model for the tasks that actually need it.
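The routing step described above can be sketched as a small function. In n8n itself this decision would more likely be expressed with nodes (for example, a Switch node fed by the triage model's output) than as a standalone script. Here a keyword-and-size heuristic stands in for the cheap triage model so the example runs offline; the trigger words, the 200,000-token threshold, and the choice of GPT-5.4 as the default route are all hypothetical.

```python
import re

# Display names stand in for real API model identifiers, which will differ.
ROUTES = {
    "complex_logic": "Claude Opus 4.7",
    "bulk_extraction": "Gemini 3.1 Pro",
    "general": "GPT-5.4",  # assumed default for everything else
}

# Hypothetical triggers and threshold; tune them against your own task logs.
COMPLEX_HINTS = {"implement", "refactor", "debug", "prove"}
BULK_TOKEN_THRESHOLD = 200_000


def classify(task: str, input_tokens: int) -> str:
    """Stand-in for the cheap triage model (e.g. GPT-5.4 mini or Gemini Flash).

    A real workflow would ask the small model for a label; a keyword and
    size heuristic plays that role here so the example runs offline.
    """
    words = set(re.findall(r"[a-z]+", task.lower()))
    if words & COMPLEX_HINTS:
        return "complex_logic"
    if input_tokens > BULK_TOKEN_THRESHOLD or "extract" in words:
        return "bulk_extraction"
    return "general"


def route(task: str, input_tokens: int) -> str:
    """Pick the model that should handle the task."""
    return ROUTES[classify(task, input_tokens)]


print(route("Implement OAuth login for the billing service", 3_000))  # Claude Opus 4.7
print(route("Extract invoice totals from these 400 PDFs", 900_000))  # Gemini 3.1 Pro
print(route("Draft a friendly reply to this customer email", 800))  # GPT-5.4
```

Matching whole words rather than substrings avoids misrouting phrases like "improve" or "approve" to the most expensive model.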

Decision matrix: Which model should you choose?

To choose the right model for your SMB in April 2026, you must categorize your primary AI workloads. The “best” model is no longer a single answer but a situational one:

- Choose Claude Opus 4.7 if: You are building autonomous software agents, need to solve complex mathematical proofs, or require the absolute highest reasoning reliability for code generation.

- Choose GPT-5.4 if: You need a robust API for vision-based tasks, customer-facing chatbots with low latency, or a reliable generalist that integrates deeply with the Microsoft/OpenAI ecosystem.

- Choose Gemini 3.1 Pro if: You are processing massive PDFs/codebases, need the best multilingual support for international markets, or are looking for the lowest cost-per-token for high-volume data analysis.
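To apply the matrix consistently across several workloads, it can be encoded as data. This is a sketch only: the need labels are made-up shorthand for the bullets above, and ranking by a simple overlap count ignores that some needs (such as reasoning reliability for code) may matter far more than others.

```python
# The decision matrix above, encoded as workload needs per model.
# The need labels are hypothetical shorthand for the bullet points.
MATRIX = {
    "Claude Opus 4.7": {"autonomous_agents", "math_proofs", "code_generation"},
    "GPT-5.4": {"vision", "low_latency_chat", "microsoft_ecosystem"},
    "Gemini 3.1 Pro": {"large_documents", "multilingual", "lowest_cost"},
}


def recommend(needs: set[str]) -> list[tuple[str, int]]:
    """Rank models by how many of the given needs each one covers."""
    unknown = needs - set().union(*MATRIX.values())
    if unknown:
        raise ValueError(f"unknown needs: {sorted(unknown)}")
    scores = [(model, len(needs & strengths)) for model, strengths in MATRIX.items()]
    matched = [s for s in scores if s[1] > 0]
    # Highest coverage first; ties broken alphabetically for stable output.
    return sorted(matched, key=lambda s: (-s[1], s[0]))


print(recommend({"vision", "multilingual", "large_documents"}))
# [('Gemini 3.1 Pro', 2), ('GPT-5.4', 1)]
```

A result with two or more models scoring above zero is a hint that the workload is a candidate for the multi-model routing described earlier rather than a single flagship.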

Conclusion: The path forward for SMBs

The release of Claude Opus 4.7 has clarified the AI market: we are no longer in a race for just “better” AI, but for more specialized AI. For the average SMB, the most significant risk in 2026 is model lock-in. By relying on a single flagship, you either overpay for simple tasks or underperform on complex ones. The most successful businesses in this era are those adopting a modular approach, using tools like n8n to stitch together a custom AI stack. Whether you need the surgical precision of Opus 4.7 or the broad vision of GPT-5.4, the key is orchestration. Start by auditing your token usage today, and consider partnering with an automation expert to build the multi-model infrastructure your business needs to scale.
