Coordinating hundreds of AI agents without cascading failures is one of the hardest unsolved problems in production AI. A single stuck sub-agent can poison an entire multi-step pipeline. Moonshot AI’s Kimi K2.6, released April 20, 2026, takes a direct swing at this problem with its Agent Swarm architecture — a system that scales to 300 parallel sub-agents executing up to 4,000 coordinated steps in a single run. The model runs on a 1-trillion-parameter Mixture-of-Experts (MoE) backbone that activates only 32 billion parameters per token, keeping inference costs comparable to a dense 32B model. This technical breakdown covers how the MoE routing works, how the orchestration layer prevents failures, and how developers can trigger swarms via API or self-host with vLLM and SGLang.

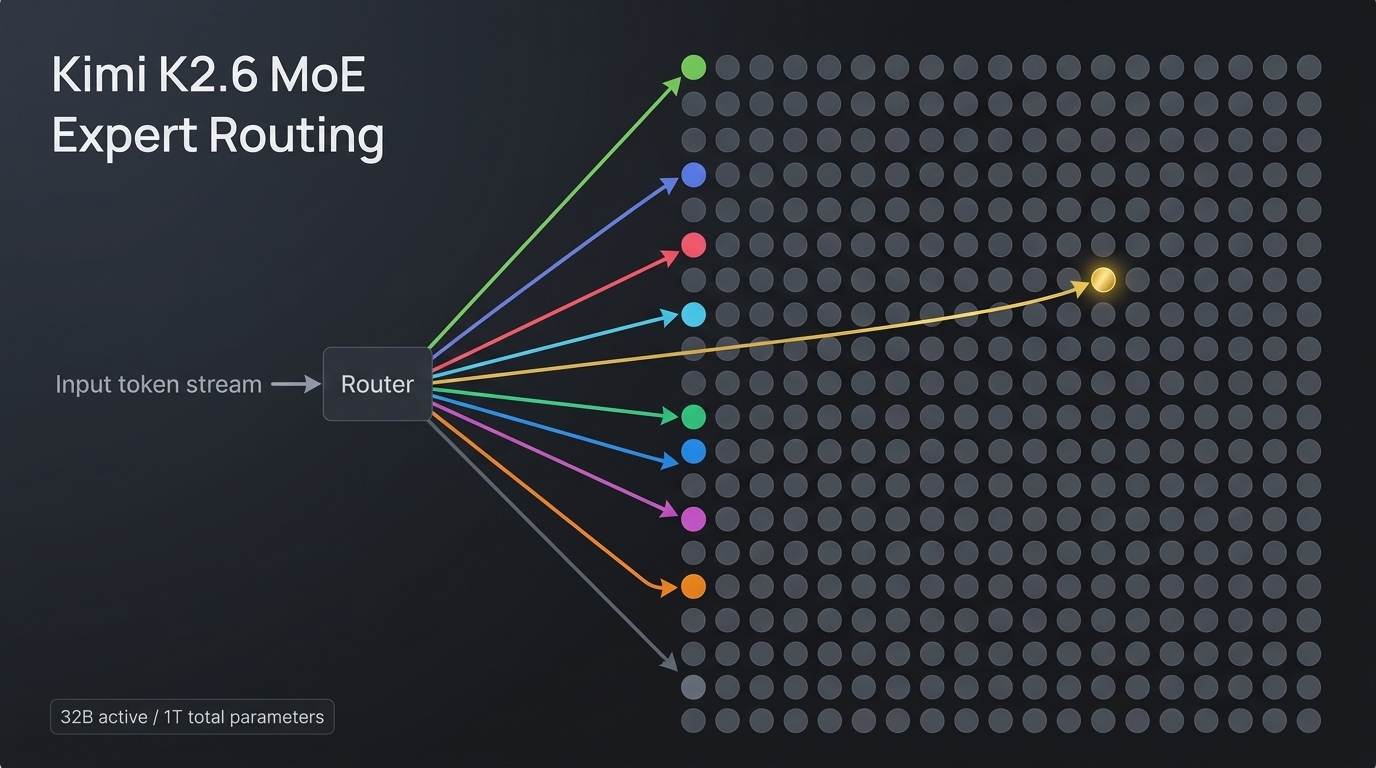

The MoE backbone: 384 experts, 32B active parameters

K2.6 inherits the same MoE architecture Moonshot introduced with the original Kimi K2. The transformer has 384 expert modules distributed across 61 layers. For every token, a learned gating network selects 8 routed experts plus 1 shared expert that fires on every pass. That gives you the reasoning capacity of a trillion-parameter model at the inference cost of roughly a 32B dense model — a practical trade-off that makes large-scale agent swarms economically viable.
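The routing step described above can be sketched in a few lines of plain Python. This is a toy illustration, not Moonshot's implementation: the softmax over the selected scores, the scalar stand-in "experts", and the names `route_token` and `moe_layer` are all assumptions made for the example. Only the counts (384 experts, 8 routed plus 1 shared) come from the article.

```python
import math
import random

NUM_ROUTED_EXPERTS = 384  # expert count quoted in the article
TOP_K = 8                 # routed experts selected per token


def route_token(gate_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
    # Rank experts by gate score and keep the best top_k.
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:top_k]
    # Assumed normalization: softmax over the selected scores so weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_layer(token, gate_logits, routed_experts, shared_expert):
    """Add the shared expert's output to the weighted outputs of the routed experts."""
    output = shared_expert(token)  # the shared expert runs for every token
    for index, weight in route_token(gate_logits):
        output += weight * routed_experts[index](token)
    return output


# Toy usage: each "expert" is a scalar function standing in for a feed-forward block.
rng = random.Random(0)
experts = [lambda x, s=i: x * (1 + s / NUM_ROUTED_EXPERTS) for i in range(NUM_ROUTED_EXPERTS)]
logits = [rng.gauss(0, 1) for _ in range(NUM_ROUTED_EXPERTS)]

selected = route_token(logits)
result = moe_layer(1.0, logits, experts, shared_expert=lambda x: x)
print(len(selected), "experts ran out of", NUM_ROUTED_EXPERTS)
```

Only 9 of the expert functions are evaluated for the token, which is the source of the cost gap between total and active parameters.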

The architecture uses Multi-head Latent Attention (MLA), which compresses key-value pairs into a lower-dimensional latent space to reduce KV cache memory. Combined with SwiGLU activation and MuonClip training (Moonshot’s own optimizer), the model handles long context windows up to 256K tokens — a requirement for agent swarms that need to maintain state across thousands of steps. A 400M-parameter MoonViT vision encoder provides native image and video understanding without external preprocessing.

| Spec | Value |
|---|---|
| Total parameters | 1 trillion |
| Active parameters per token | 32 billion |
| Expert count | 384 (8 routed + 1 shared per token) |
| Context length | 256K tokens |
| Attention mechanism | Multi-head Latent Attention (MLA) |
| Vision encoder | MoonViT (400M params) |
| Native quantization | INT4 (QAT) |
| License | Modified MIT |

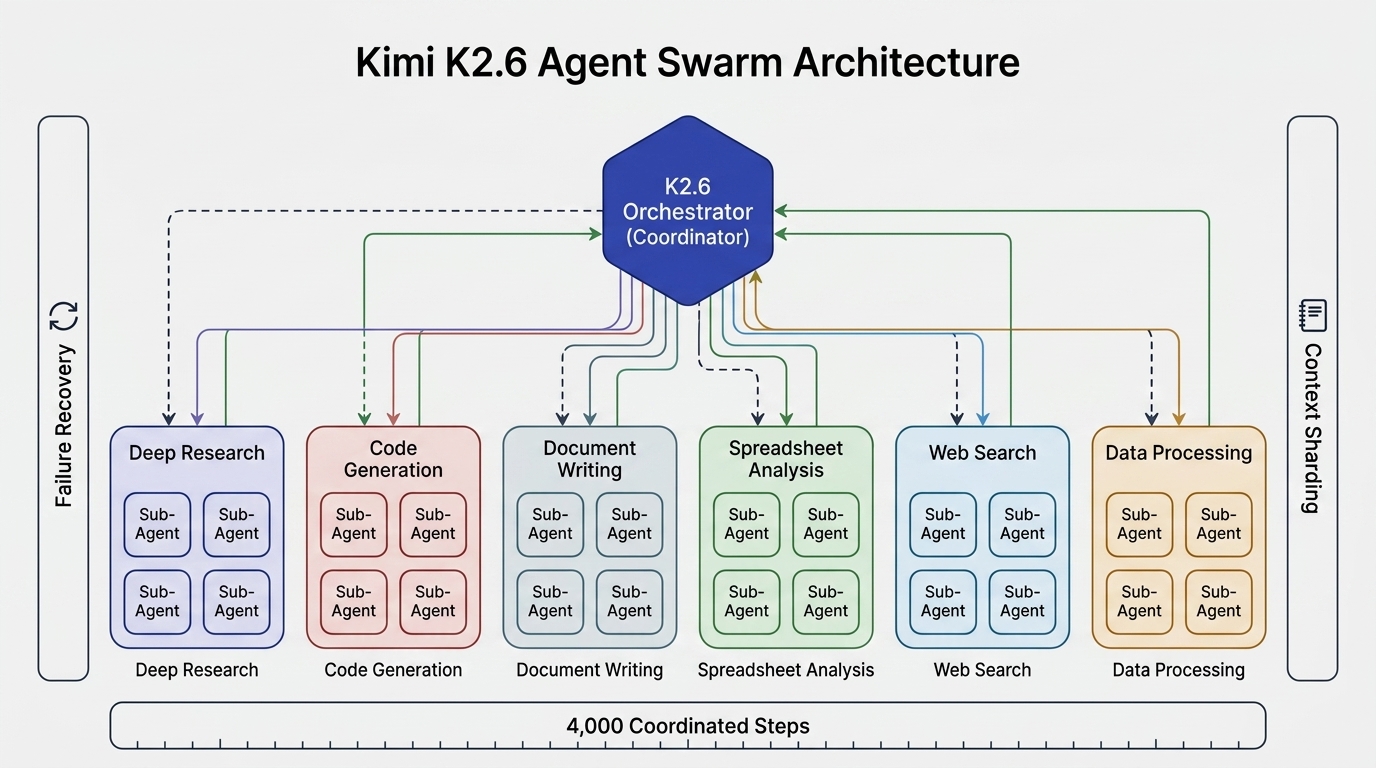

How the Agent Swarm actually works

The 300 sub-agent headline is real, but the architecture behind it is what makes it usable. The system follows a Commander + Specialists pattern. The K2.6 model itself acts as the orchestrator (the “coach”). It receives a complex task, decomposes it into heterogeneous subtasks, and spawns domain-specialized sub-agents dynamically. Some handle deep web research. Others write code, generate documents, build slides, or process spreadsheets. Each sub-agent carries its own context and toolset.

PARL training: freeze the players, train the coach

Moonshot trained the orchestrator using a method called PARL (Parallel-Agent Reinforcement Learning). The key design decision: all sub-agents retain their existing capabilities unchanged. Only the orchestrator improves through reinforcement learning. This provides clear accountability and training stability — the coach learns how to delegate, the players stay good at what they do.

To prevent common failure modes — the orchestrator handing everything to one agent (“serial collapse”) or creating meaningless sub-tasks to game parallelism metrics (“fake parallelism”) — Moonshot uses a three-dimensional reward mechanism during training:

- Quality of final result — the output has to actually be correct

- True parallelism achieved — sub-agents must genuinely work in parallel, not sequentially

- Sub-task completion rate — every spawned agent must deliver, not silently fail
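One way to picture how three such signals could combine is a weighted sum. The sketch below is hypothetical: the article does not give Moonshot's formula, so the weights, the parallelism proxy (share of work off the critical path), and the names `EpisodeStats` and `swarm_reward` are assumptions chosen only to show why serial collapse and undelivered sub-tasks score lower.

```python
from dataclasses import dataclass


@dataclass
class EpisodeStats:
    quality: float           # 0..1 score for the final result
    critical_steps: int      # steps on the longest path through the run
    total_steps: int         # steps summed over every agent
    subtasks_spawned: int
    subtasks_completed: int


def swarm_reward(stats, w_quality=1.0, w_parallel=0.3, w_completion=0.3):
    """Hypothetical weighted sum of the three reward dimensions."""
    # Parallelism proxy: share of the work that did not sit on the critical path.
    parallelism = 0.0
    if stats.total_steps > 0:
        parallelism = 1.0 - stats.critical_steps / stats.total_steps
    # Completion rate: spawned sub-tasks that actually delivered.
    completion = 0.0
    if stats.subtasks_spawned > 0:
        completion = stats.subtasks_completed / stats.subtasks_spawned
    return w_quality * stats.quality + w_parallel * parallelism + w_completion * completion


# Same answer quality, three different delegation behaviours.
serial = EpisodeStats(quality=0.9, critical_steps=100, total_steps=100,
                      subtasks_spawned=1, subtasks_completed=1)
parallel = EpisodeStats(quality=0.9, critical_steps=25, total_steps=100,
                        subtasks_spawned=8, subtasks_completed=8)
padded = EpisodeStats(quality=0.9, critical_steps=25, total_steps=100,
                      subtasks_spawned=8, subtasks_completed=4)

for name, stats in [("serial", serial), ("parallel", parallel), ("padded", padded)]:
    print(name, round(swarm_reward(stats), 3))
```

With these made-up numbers the genuinely parallel run scores highest, the run with silently failed sub-tasks scores lower, and the serial run scores lowest, which is the ordering the reward design is meant to produce.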

Context sharding and failure recovery

Two engineering decisions keep 300 agents from stepping on each other. First, context sharding: each sub-agent operates from its own isolated “notebook,” recording relevant details independently. Only key conclusions bubble up to the orchestrator. This prevents memory bloat and cross-contamination between agents working on unrelated subtasks.
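A minimal sketch of the sharding idea, assuming a simple in-process model: each sub-agent keeps a private list of notes and returns only a one-line conclusion to the orchestrator. The class names and the note format are illustrative, not part of any Kimi API.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    name: str
    notebook: list = field(default_factory=list)  # private working notes

    def work(self, subtask):
        # Detailed notes stay inside this agent's own notebook.
        self.notebook.append(f"raw notes for {subtask}")
        self.notebook.append(f"intermediate result for {subtask}")
        # Only a short conclusion is returned to the caller.
        return f"{self.name}: conclusion for {subtask}"


@dataclass
class Orchestrator:
    conclusions: list = field(default_factory=list)

    def run(self, subtasks):
        agents = [SubAgent(f"agent-{i}") for i in range(len(subtasks))]
        for agent, subtask in zip(agents, subtasks):
            self.conclusions.append(agent.work(subtask))
        return agents


orchestrator = Orchestrator()
agents = orchestrator.run(["pricing research", "competitor scan", "slide draft"])

print(len(orchestrator.conclusions), "conclusions in orchestrator context")
print(sum(len(a.notebook) for a in agents), "notes kept in isolated notebooks")
```

The orchestrator's context grows by one entry per sub-agent regardless of how much each agent writes to its own notebook.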

Second, the orchestrator includes a failure recovery layer. When a sub-agent stalls or crashes, K2.6 detects the interruption and either reassigns the task to another agent or regenerates the subtask entirely. The orchestrator calculates performance using a “critical steps” metric: it measures the slowest sub-agent’s time at each stage, which forces genuine process optimization rather than blind task splitting. In practice, the swarm completes tasks up to roughly 4.5 times faster than single-agent sequential execution, with critical steps reduced by 3x to 4.5x in large-scale search scenarios.
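The "critical steps" idea can be made concrete with a small calculation. The exact formula below is an assumption consistent with the description (orchestrator steps plus the slowest sub-agent at each stage); the stage data is made up for illustration.

```python
def critical_steps(stages):
    """Sum, over stages, of orchestrator steps plus the slowest sub-agent's steps.

    stages: list of (orchestrator_steps, [steps for each sub-agent in that stage]).
    """
    return sum(main + max(subs, default=0) for main, subs in stages)


def sequential_steps(stages):
    """Steps needed if a single agent ran every sub-task one after another."""
    return sum(main + sum(subs) for main, subs in stages)


# Hypothetical run: three stages, the last one handled by the orchestrator alone.
stages = [
    (1, [4, 6, 5]),  # three sub-agents in parallel, slowest takes 6 steps
    (1, [3, 3]),     # two sub-agents in parallel
    (2, []),         # final synthesis, no sub-agents
]

critical = critical_steps(stages)
sequential = sequential_steps(stages)
print(f"critical={critical} sequential={sequential} speedup={sequential / critical:.2f}x")
```

Splitting a stage into more sub-agents lowers this metric only if it shortens the slowest branch, which is why it discourages task splitting that does not reduce wall-clock time.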

Claw Groups: collaborative agent clusters

Beyond the swarm, Moonshot introduced Claw Groups as a research preview — a new instantiation of the swarm architecture that extends proactive agents into collaborative clusters. Claw Groups allow agents from different devices, running different models, with different toolkits and memory contexts, to collaborate in a shared workspace. Human team members can join the same workspace alongside AI agents. The K2.6 coordinator assigns tasks based on each agent’s capability, manages dependencies between agents, and reassigns work when something breaks.
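Capability-based assignment with reassignment on failure can be sketched as follows. This is a hypothetical, single-process model: the agent names, the capability labels, and the `execute` callback are invented for the example, and a real coordinator would also handle dependencies between tasks, which this sketch leaves out.

```python
def run_with_reassignment(tasks, agents, execute):
    """Give each task to a capable agent, moving to the next one if an agent fails.

    tasks:   {task_name: required_capability}
    agents:  {agent_name: set of capabilities}
    execute: callable(agent_name, task_name) that raises RuntimeError on failure
    """
    results = {}
    for task, needed in tasks.items():
        results[task] = None  # stays None if no capable agent succeeds
        for name, capabilities in agents.items():
            if needed not in capabilities:
                continue
            try:
                results[task] = (name, execute(name, task))
                break
            except RuntimeError:
                continue  # this agent failed, try the next capable one
    return results


agents = {
    "laptop-coder": {"code"},
    "server-coder": {"code", "search"},
    "researcher": {"search"},
}
tasks = {
    "fix parser bug": "code",
    "survey prior art": "search",
    "render slides": "slides",
}


def flaky_execute(agent, task):
    # Simulate one agent that always stalls.
    if agent == "laptop-coder":
        raise RuntimeError("agent stalled")
    return f"{task} done"


results = run_with_reassignment(tasks, agents, flaky_execute)
for task, outcome in results.items():
    print(task, "->", outcome)
```

The task that no agent can handle is reported as unassigned rather than silently dropped, so a human in the shared workspace could pick it up.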

Early benchmark data shows Claw Groups scoring 62.3% on Claw Eval (pass^3) and 80.9% on pass@3 — competitive with but trailing Claude Opus 4.6’s 70.4% and 82.4% respectively. This is a research preview, not production-ready, but it signals where multi-agent orchestration is heading: cross-model, cross-device collaboration with a shared coordinator.

Triggering swarms via the API

K2.6’s API is OpenAI and Anthropic-compatible, available at platform.moonshot.ai with the kimi-k2.6 model ID. Here is a basic setup using the OpenAI Python client:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://api.moonshot.ai/v1",
)

# Thinking mode (default): the response includes the reasoning chain
response = client.chat.completions.create(
    model="kimi-k2.6",
    messages=[
        {"role": "system", "content": "You are Kimi, an AI assistant."},
        {"role": "user", "content": "Analyze 50 semiconductor stocks and build a comparison report."},
    ],
    max_tokens=4096,
    temperature=1.0,
    top_p=0.95,
)

# Access reasoning content. Depending on the server, the field may be exposed
# as reasoning_content or reasoning, so check both.
message = response.choices[0].message
reasoning = getattr(message, "reasoning_content", None) or getattr(message, "reasoning", None)
print(reasoning)
print(message.content)
```

For multi-turn agent loops, K2.6 supports a preserve_thinking mode that retains full reasoning content across conversation turns. This matters for long-running swarm tasks where the model needs to reference its prior reasoning:

```python
# Enable preserve_thinking for multi-turn agent loops
response = client.chat.completions.create(
    model="kimi-k2.6",
    messages=[
        {"role": "user", "content": "List three approaches to fix this bug."},
        {
            "role": "assistant",
            "reasoning_content": "I see five possible approaches...",
            "content": "Here are three approaches: ...",
        },
        {"role": "user", "content": "What were the other two?"},
    ],
    extra_body={"thinking": {"type": "enabled", "keep": "all"}},
)
```

Pricing

| Model | Input (USD per 1M tokens) | Output (USD per 1M tokens) |
|---|---|---|
| Kimi K2.6 | $0.60 | $2.50 |
| Kimi K2.6 (cached input) | $0.10–$0.15 | $2.50 |
| GPT-5.4 | $2.50 | $15.00 |
| Claude Opus 4.6 | $5.00 | $15.00 |

Automatic prompt caching cuts the price of cached input tokens by 75–83% (from $0.60 to $0.10–$0.15 per 1M tokens); output pricing is unchanged. That makes the swarm architecture cost-effective for agentic workflows where system prompts and tool definitions are reused across many calls.
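The effect of caching on a swarm run is easy to estimate from the listed prices. The sketch below uses the table's K2.6 figures; the workload (300 calls, 20,000 input tokens each, 90% of them cached, 1,000 output tokens) is a made-up example, and the conservative $0.15 cached price is an assumption.

```python
PRICE_INPUT = 0.60    # USD per 1M uncached input tokens (from the table)
PRICE_CACHED = 0.15   # USD per 1M cached input tokens (upper end of the listed range)
PRICE_OUTPUT = 2.50   # USD per 1M output tokens (from the table)


def call_cost(input_tokens, output_tokens, cached_fraction=0.0, cached_price=PRICE_CACHED):
    """Cost in USD of one call, given the share of input tokens served from cache."""
    cached = input_tokens * cached_fraction
    fresh = input_tokens - cached
    total = fresh * PRICE_INPUT + cached * cached_price + output_tokens * PRICE_OUTPUT
    return total / 1_000_000


# Hypothetical swarm run: 300 sub-agent calls sharing a large system prompt.
calls = 300
uncached_total = calls * call_cost(20_000, 1_000)
cached_total = calls * call_cost(20_000, 1_000, cached_fraction=0.9)

print(f"no cache:  ${uncached_total:.2f}")
print(f"90% cache: ${cached_total:.2f}")
```

Because output tokens are billed at the same rate either way, the overall saving on a run is smaller than the 75–83% discount on the cached input tokens themselves.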

Self-hosting with vLLM and SGLang

K2.6 shares the same architecture as K2.5, so existing deployment configurations work directly. Weights are available on Hugging Face at moonshotai/Kimi-K2.6 (approximately 595GB for the full model, ~594GB for INT4 quantized). Three inference engines are supported:

- vLLM: production-grade serving with tensor parallelism, continuous batching, and PagedAttention. Best for high-throughput API deployments.

- SGLang: optimized for structured generation and multi-turn conversations. Strong for agent frameworks needing constrained output.

- KTransformers: an open-source engine from the kvcache-ai project, aimed at CPU+GPU heterogeneous inference. It runs K2.6’s native INT4 weights, produced with Quantization-Aware Training (QAT), which are reported to give about 2x faster inference with 50% less GPU memory.

vLLM deployment (TP8 on H200)

```bash
# Install (this pulls from the nightly wheel index, not a stable release)
uv pip install -U vllm \
    --torch-backend=auto \
    --extra-index-url https://wheels.vllm.ai/nightly

# Serve with 8-way tensor parallelism
vllm serve $MODEL_PATH \
    -tp 8 \
    --mm-encoder-tp-mode data \
    --trust-remote-code \
    --tool-call-parser kimi_k2 \
    --reasoning-parser kimi_k2
```

SGLang deployment (TP8 on H200)

```bash
# Install
pip install "sglang @ git+https://github.com/sgl-project/sglang.git#subdirectory=python"
pip install nvidia-cudnn-cu12==9.16.0.29

# Serve
sglang serve \
    --model-path $MODEL_PATH \
    --tp 8 \
    --trust-remote-code \
    --tool-call-parser kimi_k2 \
    --reasoning-parser kimi_k2
```

Two flags are critical in both engines: --tool-call-parser kimi_k2 enables tool calling support (required for agent swarm functionality), and --reasoning-parser kimi_k2 ensures the thinking mode output is correctly parsed. Without these, the swarm cannot invoke tools or maintain its reasoning chain.
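To check that the tool-call parser is working on a self-hosted server, a request with a `tools` array in the standard OpenAI format is enough. The sketch below only builds the request; the `get_stock_price` tool, the model name, and the local URL in the comments are assumptions for illustration. Depending on the vLLM version, automatic tool choice may also need the `--enable-auto-tool-choice` flag at serve time.

```python
import json


def build_tool_request(model, question):
    """Build chat-completions keyword arguments that offer one tool to the model."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "tools": [
            {
                "type": "function",
                "function": {
                    # Hypothetical tool, defined only for this example.
                    "name": "get_stock_price",
                    "description": "Look up the latest price for a stock ticker.",
                    "parameters": {
                        "type": "object",
                        "properties": {"ticker": {"type": "string"}},
                        "required": ["ticker"],
                    },
                },
            }
        ],
        "tool_choice": "auto",
    }


request = build_tool_request("kimi-k2.6", "What is NVDA trading at?")
print(json.dumps(request, indent=2))

# To send it to a local server (assumed here to listen on http://localhost:8000/v1,
# with the served model name matching the one passed above):
#   from openai import OpenAI
#   client = OpenAI(api_key="EMPTY", base_url="http://localhost:8000/v1")
#   response = client.chat.completions.create(**request)
#   print(response.choices[0].message.tool_calls)
```

If the parser flag is set correctly, the response should carry structured `tool_calls` rather than raw tool-call text inside `content`.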

For CPU+GPU heterogeneous setups, KTransformers+SGLang achieves 640 tokens/s prefill and 24.5 tokens/s decode on 8x NVIDIA L20 + 2x Intel 6454S servers — a viable option for teams without access to H200 clusters.
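Those throughput figures translate into a rough single-request latency estimate. This is back-of-the-envelope arithmetic, assuming the quoted rates hold constant for one request with no batching or queueing; the prompt and output sizes are made up.

```python
PREFILL_TOKENS_PER_S = 640.0  # quoted prefill throughput
DECODE_TOKENS_PER_S = 24.5    # quoted decode throughput


def estimated_latency_s(prompt_tokens, output_tokens):
    """Rough time in seconds to read the prompt and then generate the output."""
    prefill_time = prompt_tokens / PREFILL_TOKENS_PER_S
    decode_time = output_tokens / DECODE_TOKENS_PER_S
    return prefill_time + decode_time


# Hypothetical agent step: 8,000-token prompt, 500-token reply.
print(f"{estimated_latency_s(8_000, 500):.1f} s")
```

At these rates decode time dominates for anything beyond short replies, so this setup suits low-concurrency agent work better than high-throughput serving.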

Orchestrating swarms from n8n workflows

For teams building multi-step business processes without writing agent coordination code from scratch, n8n provides a practical integration path. K2.6’s OpenAI-compatible API means it plugs into n8n’s HTTP Request node or the community n8n-nodes-kimi2 package, which adds streaming support, batch processing, and webhook triggers.

The typical pattern: an n8n workflow receives a trigger (webhook, schedule, or form submission), constructs a prompt, sends it to K2.6 via the Moonshot API or an OpenRouter proxy, and processes the structured response downstream. For agent swarm tasks, the workflow can poll for completion and fan out results to subsequent nodes — generating documents, updating spreadsheets, or posting to Slack — all without custom agent orchestration code. The n8n workflow library already includes Kimi K2 templates for document RAG systems, and K2.6 is a drop-in upgrade given the shared architecture.

Key takeaways

- The MoE routing is the cost enabler. Activating 32B of 1T parameters per token makes 300-agent swarms economically viable. Without sparse routing, the inference cost of coordinating hundreds of agents would be prohibitive.

- The orchestrator is the moat, not the agents. PARL training focuses on the coordinator’s delegation ability, not on making individual agents smarter. The three-dimensional reward mechanism prevents serial collapse and fake parallelism.

- Context sharding prevents memory explosions. Each sub-agent works from its own notebook. Only conclusions propagate upstream. This is what makes 300 concurrent agents feasible without running out of context.

- Failure recovery is built in, not bolted on. The critical steps metric and automatic task reassignment mean one stuck agent does not break the entire pipeline.

- Self-hosting is production-ready. K2.6 shares its architecture with K2.5, so vLLM 0.19.1, SGLang, and KTransformers all support it with day-zero compatibility. The INT4 quantized model fits in ~600GB VRAM.

Kimi K2.6’s Agent Swarm is not a benchmark trick. It is a shipping system — available via API, CLI, and self-hosted deployment — that coordinates hundreds of agents through a trained orchestrator with failure recovery baked into the architecture. Whether the execution matches the promise at full production scale across arbitrary enterprise workflows is still an open question, but the technical foundation is solid and the integration paths are immediate. For teams evaluating multi-agent orchestration in 2026, K2.6 deserves a serious look.
