Eight days. That’s the gap between Moonshot AI quietly emailing beta testers about Kimi K2.6 Code Preview on April 13, 2026, and shipping the model as generally available across Kimi.com, the official API, the Kimi App, and the Kimi Code CLI on April 21. For teams already running K2.5 in production, the speed of that transition raises a practical question: what actually changed, and does it justify integrating now rather than waiting for the inevitable K2.7?

This piece traces the specific deltas between K2.5 and K2.6 — the architectural constants, the capability upgrades, and the new features that mark the shift from “impressive demo” to “production infrastructure.” If your business is evaluating whether to wire these capabilities into custom n8n workflows or hold off, these are the details that should drive that decision.

What stayed the same: the 1T MoE backbone

K2.6 does not reinvent the architecture. It carries forward the same Mixture-of-Experts design that Moonshot has refined across five major releases since July 2025: 1 trillion total parameters, 32 billion active per token, 384 routed experts (8 activated per token plus 1 shared), 61 layers, Multi-head Latent Attention (MLA), and SwiGLU activation. The context window remains 256K tokens. The vocabulary stays at 160K. The MoonViT 400M-parameter vision encoder introduced in K2.5 is unchanged. The Modified MIT License — free for commercial use with a credit requirement above 100 million MAU or $20 million monthly revenue — carries over as well.
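To make the "8 activated per token plus 1 shared" figure concrete, here is a minimal sketch of generic top-k expert routing. It is not Moonshot’s implementation: the softmax over the selected logits is an assumed normalization, and the random logits stand in for a real router’s output.

```python
import math
import random

NUM_ROUTED_EXPERTS = 384  # from the article
TOP_K = 8                 # routed experts activated per token (from the article)


def route_token(router_logits, top_k=TOP_K):
    """Return {expert_index: weight} for the top_k highest-scoring experts.

    Weights are a softmax over the selected logits only. That normalization
    is an assumption for illustration; real models differ in the details.
    """
    if len(router_logits) < top_k:
        raise ValueError("need at least top_k router logits")
    # Indices of the top_k largest logits.
    chosen = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Numerically stable softmax restricted to the chosen experts.
    peak = max(router_logits[i] for i in chosen)
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


rng = random.Random(0)  # stand-in for a learned router
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_ROUTED_EXPERTS)]
weights = route_token(logits)

# The shared expert runs for every token, so 8 routed + 1 shared = 9 expert
# blocks execute in an MoE layer, out of 385 available.
experts_run_per_token = len(weights) + 1
active_param_fraction = 32e9 / 1e12  # 32B active of 1T total
print(experts_run_per_token, f"{active_param_fraction:.1%}")  # prints: 9 3.2%
```

The point of the design is the last line: only about 3% of the parameters do work on any given token, which is why a 1T model can be served at 32B-class compute per token.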

This continuity matters operationally. Because K2.6 shares the same architecture as K2.5, existing deployment configurations on vLLM, SGLang, or KTransformers can be reused directly. The model swap is closer to a version bump than a platform migration. API endpoints are OpenAI-compatible on both versions, and the same tool-calling constraints apply: tool_choice stays on auto or none when thinking is enabled, and reasoning_content must be preserved across multi-step tool calls.
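The two tool-calling constraints are easy to violate in client code, so a small guard can help. The sketch below assumes OpenAI-style chat messages; the helper names and the `list_files` tool are hypothetical, and nothing here calls a real API.

```python
ALLOWED_WITH_THINKING = {"auto", "none"}  # constraint quoted in the article


def check_tool_choice(thinking_enabled, tool_choice):
    """Reject tool_choice values that are unsupported while thinking is on."""
    if thinking_enabled and tool_choice not in ALLOWED_WITH_THINKING:
        raise ValueError(f"tool_choice={tool_choice!r} requires thinking to be off")
    return tool_choice


def append_assistant_turn(history, assistant_message, thinking_enabled):
    """Return a new history with the assistant turn appended, reasoning intact."""
    if (thinking_enabled and assistant_message.get("tool_calls")
            and not assistant_message.get("reasoning_content")):
        raise ValueError("tool-call turn lost its reasoning_content")
    # Copy every field as-is; stripping reasoning_content here is the bug to avoid.
    return history + [dict(assistant_message)]


def append_tool_result(history, tool_call_id, content):
    """Return a new history with a tool result in OpenAI chat format."""
    return history + [{"role": "tool", "tool_call_id": tool_call_id, "content": content}]


# Hypothetical exchange: `list_files` is an illustrative tool name.
history = [{"role": "user", "content": "List the files in the repo."}]
assistant = {
    "role": "assistant",
    "content": "",
    "reasoning_content": "I should call the list_files tool first.",
    "tool_calls": [{"id": "call_1", "type": "function",
                    "function": {"name": "list_files", "arguments": "{}"}}],
}
check_tool_choice(thinking_enabled=True, tool_choice="auto")
history = append_assistant_turn(history, assistant, thinking_enabled=True)
history = append_tool_result(history, "call_1", "README.md\nmain.py")
print([m["role"] for m in history])  # prints: ['user', 'assistant', 'tool']
```

The full `history` list, reasoning included, is what gets sent back on the next request in a multi-step tool loop.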

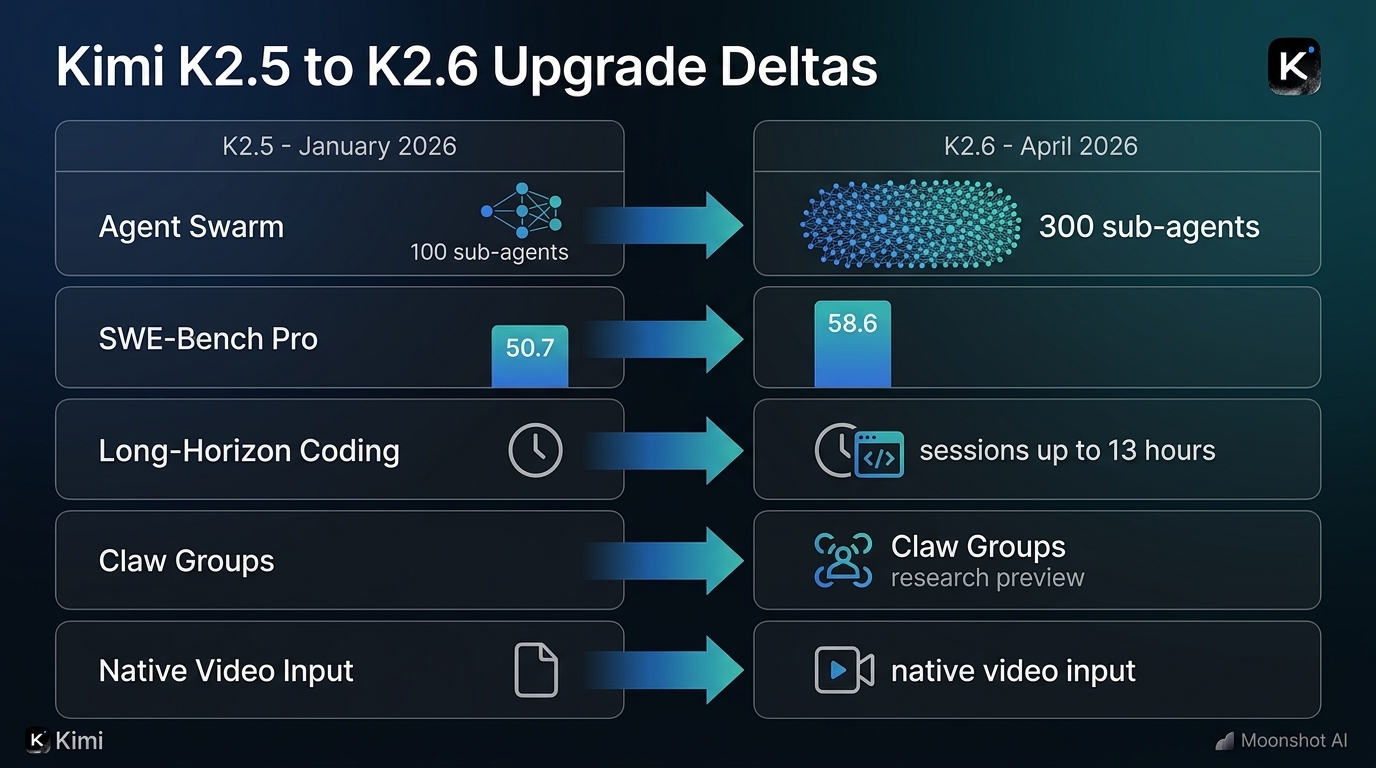

Long-horizon coding: where K2.6 earns its version number

The headline improvement is stamina. K2.5 could handle multi-step coding tasks, but it drifted as sessions stretched past a few hundred tool calls. K2.6 was specifically tuned to hold coherence over extended autonomous runs, and the benchmark deltas are sharp.

| Benchmark | K2.5 | K2.6 | Delta |
|---|---|---|---|
| SWE-Bench Pro | 50.7 | 58.6 | +7.9 |
| SWE-Bench Verified | ~75% | 80.2% | ~+5 pp |
| Terminal-Bench 2.0 | 50.8% | 66.7% | +15.9 pp |
| LiveCodeBench (v6) | n/a | 89.6 | New |
| HLE-Full (w/ tools) | 50.2 | 54.0 | +3.8 |
| BrowseComp (Swarm) | 78.4 | 86.3 | +7.9 |

The SWE-Bench Pro jump from 50.7 to 58.6 is substantial: it puts K2.6 ahead of GPT-5.4 (57.7) and well ahead of Claude Opus 4.6 (53.4) on real-world GitHub issue resolution. The Terminal-Bench 2.0 leap from 50.8 to 66.7 is even more dramatic, which suggests that K2.6’s improvements are concentrated in the agentic, terminal-based workflows where K2.5 was weakest.
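For readers who want to sanity-check the deltas, the short script below recomputes them from the scores quoted above and adds the relative gain over K2.5. The relative figures are derived here, not reported by Moonshot, and only rows with exact scores on both sides are included.

```python
# (K2.5 score, K2.6 score) as quoted in the table above.
scores = {
    "SWE-Bench Pro": (50.7, 58.6),
    "Terminal-Bench 2.0": (50.8, 66.7),
    "HLE-Full (w/ tools)": (50.2, 54.0),
    "BrowseComp (Swarm)": (78.4, 86.3),
}

# Absolute gain in points, and gain relative to the K2.5 baseline in percent.
deltas = {name: round(new - old, 1) for name, (old, new) in scores.items()}
relative = {name: round(100 * (new - old) / old, 1)
            for name, (old, new) in scores.items()}

for name in scores:
    print(f"{name:22s} +{deltas[name]:<5} points  ({relative[name]}% relative)")
```

Seen this way, the Terminal-Bench 2.0 gain is roughly twice the SWE-Bench Pro gain in relative terms, not just in absolute points.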

Moonshot’s own case studies reinforce the benchmark story. In one documented run, K2.6 autonomously optimized Qwen3.5-0.8B inference in Zig — a language most AI models have barely seen — across 4,000+ tool calls and 12+ hours of continuous execution, ultimately outperforming LM Studio’s throughput by roughly 20%. In another, it overhauled an 8-year-old financial matching engine (exchange-core) over 13 hours, modifying 4,000+ lines of code across 12 optimization passes to deliver a 185% improvement in median throughput. These are not toy problems. They represent the kind of work a senior systems engineer would spend a long weekend on, and K2.6 did each autonomously in a single session.

For SMBs considering integration: this is the delta that matters most. If your use case involves pointing an agent at a codebase and letting it work for hours — automated refactoring, dependency upgrades, performance optimization — K2.6 is a fundamentally different tool than K2.5. Vercel reported more than 50% improvement on its Next.js benchmark when switching from K2.5 to K2.6, which is the kind of partner validation that suggests the benchmarks aren’t cherry-picked.

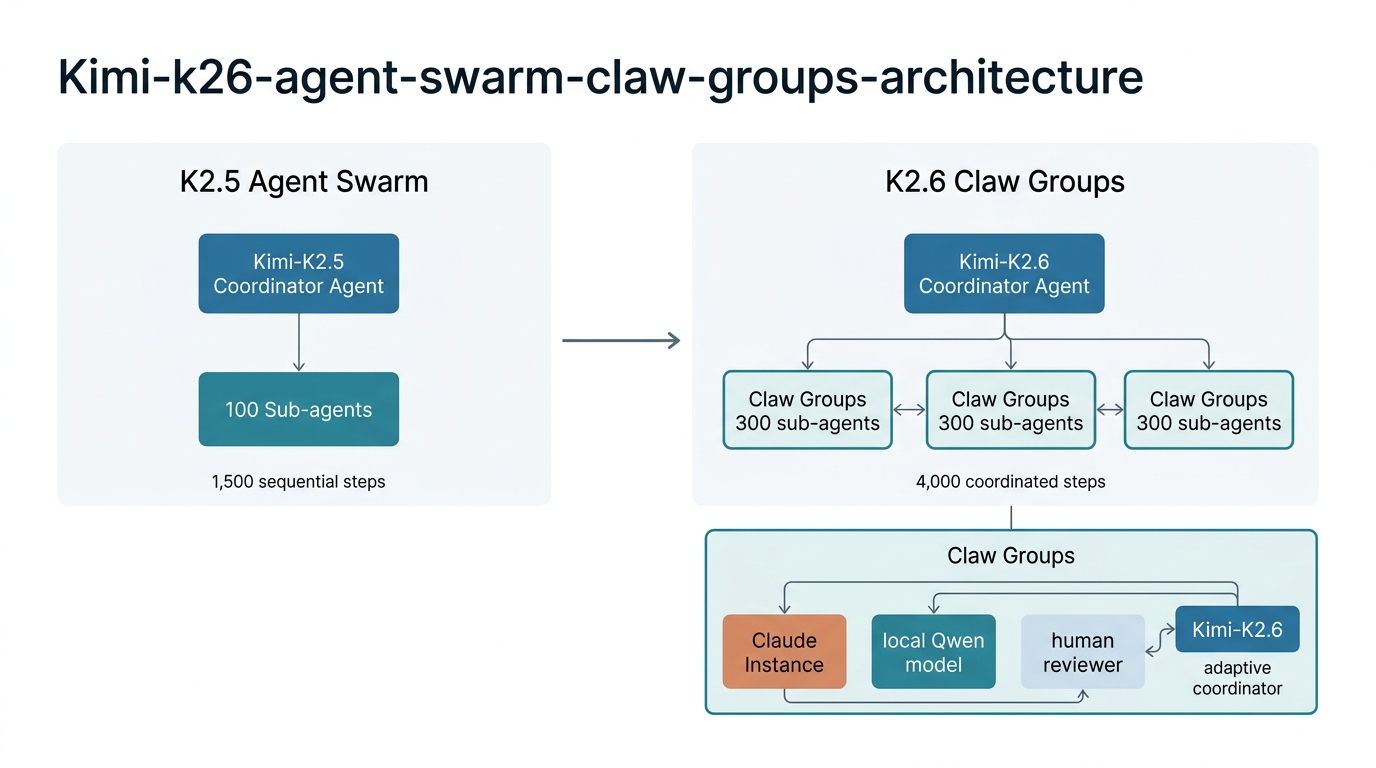

Agent Swarm: from 100 to 300 sub-agents

K2.5 introduced the Agent Swarm concept: decomposing complex tasks across parallel, domain-specialized sub-agents. K2.6 scales it aggressively, from 100 sub-agents and 1,500 coordinated steps up to 300 sub-agents and 4,000 coordinated steps. That’s a 3x increase in horizontal parallelism and roughly a 2.7x increase in total coordinated steps.
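The scaling ratios can be checked directly from the quoted limits. The per-agent figure below assumes steps are spread evenly across sub-agents, which is an illustrative simplification and not something Moonshot states.

```python
k25 = {"sub_agents": 100, "steps": 1500}
k26 = {"sub_agents": 300, "steps": 4000}

agent_ratio = k26["sub_agents"] / k25["sub_agents"]  # horizontal parallelism
step_ratio = k26["steps"] / k25["steps"]             # total coordinated steps

# ASSUMPTION: steps divided evenly across sub-agents.
steps_per_agent_k25 = k25["steps"] / k25["sub_agents"]
steps_per_agent_k26 = k26["steps"] / k26["sub_agents"]

print(f"agents: {agent_ratio:.1f}x, steps: {step_ratio:.2f}x")
print(f"steps per agent: {steps_per_agent_k25:.1f} -> {steps_per_agent_k26:.1f}")
```

Under that even-split assumption, the step budget per sub-agent stays roughly flat (about 15 versus about 13), so the growth is in breadth rather than in how long each sub-agent runs.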

In practice, this means a single prompt can now produce substantially more complex deliverables. K2.5 could generate a coordinated set of documents or a small website. K2.6 can spin up 100 sub-agents to match a single uploaded resume against 100 job openings and produce 100 customized cover letters — or identify 30 retail stores without websites from Google Maps data and generate landing pages for each. The swarm also introduces a concrete Skills capability: it can convert any high-quality PDF, spreadsheet, or slide deck into a reusable skill that preserves structural and stylistic DNA for future tasks. Think of it as teaching the swarm by example rather than prompt.

The economics matter here. At roughly $0.60 per million input tokens and $3.00 per million output tokens (with cache hits at $0.10-$0.15), K2.6 is 4-17x cheaper than GPT-5.4 or Claude Opus 4.6. Cheap inference doesn’t just save money — it unlocks different product designs. Multi-step agent loops that burn thousands of tool calls become financially viable. Background jobs that would be cost-prohibitive on closed-source models become routine. For SMBs building n8n workflows, this price gap means you can afford to experiment with 300-agent swarm patterns without watching your API bill spiral.
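A rough cost model shows why long agent loops become affordable at these prices. The prices come from the paragraph above; the workload (4,000 calls, 20,000 input tokens and 500 output tokens per call, 90% cache hits) is entirely hypothetical and should be replaced with your own measurements.

```python
INPUT_PRICE = 0.60      # USD per million uncached input tokens (article)
OUTPUT_PRICE = 3.00     # USD per million output tokens (article)
CACHE_HIT_PRICE = 0.15  # USD per million cached input tokens (upper end of quoted range)


def run_cost(calls, input_tokens_per_call, output_tokens_per_call, cache_hit_rate):
    """Estimate the USD cost of an agent run under the prices above."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    input_millions = calls * input_tokens_per_call / 1e6
    output_millions = calls * output_tokens_per_call / 1e6
    cached = input_millions * cache_hit_rate
    uncached = input_millions - cached
    return (cached * CACHE_HIT_PRICE
            + uncached * INPUT_PRICE
            + output_millions * OUTPUT_PRICE)


# HYPOTHETICAL workload, sized to resemble a 4,000-tool-call session.
with_cache = run_cost(4000, 20_000, 500, cache_hit_rate=0.9)
no_cache = run_cost(4000, 20_000, 500, cache_hit_rate=0.0)
print(f"{with_cache:.2f} {no_cache:.2f}")  # prints: 21.60 54.00
```

In this made-up scenario most of the bill is re-sent context, so the cache-hit rate moves the total more than the output price does.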

Claw Groups: the heterogeneous multi-model future

The most forward-looking feature in K2.6 is Claw Groups, shipping as a research preview. Where K2.5’s Agent Swarm was a homogeneous system — all agents running the same model — Claw Groups opens the architecture to external, heterogeneous participants. You can bring agents from any device, running any model, into a shared operational space. K2.6 acts as the adaptive coordinator: it routes tasks based on agent skill profiles, detects failures, automatically reassigns work, and manages the full delivery lifecycle.
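The coordinator behavior described here (route by skill profile, detect failure, reassign) can be illustrated with a toy scheduler. This is a conceptual sketch only: the class, function, and agent names are hypothetical and do not reflect the Claw Groups interface, which the article does not describe.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    skills: frozenset
    healthy: bool = True


def pick_agent(skill, agents, tried):
    """First healthy, untried agent whose profile lists the skill, else None."""
    for agent in agents:
        if agent.healthy and skill in agent.skills and agent.name not in tried:
            return agent
    return None


def run_with_reassignment(skill, payload, agents, execute):
    """Try matching agents in order; mark failures unhealthy and reassign."""
    tried = []
    while True:
        agent = pick_agent(skill, agents, tried)
        if agent is None:
            raise RuntimeError(f"no healthy agent left for skill {skill!r}")
        tried.append(agent.name)
        try:
            return agent.name, execute(agent, payload)
        except Exception:
            agent.healthy = False  # failure detected; the loop picks the next agent


def fake_execute(agent, payload):
    """Stand-in for real work: one agent always times out."""
    if agent.name == "laptop-qwen":
        raise TimeoutError("agent did not respond")
    return f"{agent.name} handled {payload}"


agents = [
    Agent("laptop-qwen", frozenset({"data"})),
    Agent("cloud-claude", frozenset({"writing", "data"})),
]
winner, result = run_with_reassignment("data", "sales.csv", agents, fake_execute)
print(winner, "|", result)  # prints: cloud-claude | cloud-claude handled sales.csv
```

A real coordinator would also need retries, timeouts, and partial-result handling; the sketch only shows the routing and reassignment loop.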

The practical implication is significant. A team could run a Claude instance for creative writing, a local Qwen model for data processing, a custom fine-tune for domain-specific analysis, and a human reviewer for quality control — all coordinated by K2.6 in a single workflow. Moonshot’s own marketing team reportedly uses Claw Groups internally for end-to-end content production: specialized agents for demo creation, benchmarking, social media scheduling, and video editing, all orchestrated in parallel.

For SMBs, Claw Groups is worth watching even if it’s not production-ready today. It represents the “bring your own models” architecture that enterprise buyers have been requesting — the ability to mix open-source and closed-source models in a single pipeline without building custom orchestration. If your n8n workflows currently route tasks to different models based on complexity, Claw Groups could eventually collapse that routing logic into a single coordinated system.

What didn’t change (and when to stay on K2.5)

K2.6 is not a universal upgrade. The context window is the same 256K tokens — if your pain point was “I need a larger window,” K2.6 won’t help. Batch API support is still listed as K2.5-only in current pricing docs. Video input remains experimental and only available through the official Moonshot API; third-party deployments via vLLM or SGLang support image input but not video yet. And K2.6 does not close the gap on pure reasoning benchmarks — GPT-5.4 still leads on AIME 2026 (99.2 vs 96.4) and GPQA Diamond (92.8 vs 90.5).

Stay on K2.5 if your existing workflows are tuned and stable, if you depend on the Batch API for asynchronous high-volume processing, or if your team closely follows Moonshot’s documentation (which still defaults to K2.5 examples in many places). Move to K2.6 if you’re building new coding agent products, if long-session reliability is your primary pain point, or if the Agent Swarm scaling and Claw Groups capabilities map to workflows you’re actually planning to build.

The SMB integration calculus

For teams evaluating K2.6 through the lens of n8n workflow integration, the upgrade timeline matters. K2.6’s API is OpenAI-compatible, which means wiring it into n8n’s HTTP Request node or using the existing OpenAI node with a custom base URL is straightforward. The thinking mode (which exposes reasoning via the reasoning_content field) and instant mode (which disables it for lower latency) both work through standard API parameters, so you can toggle between deep analysis and fast responses within the same workflow.
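As a starting point for an HTTP Request node, the sketch below builds the JSON body for an OpenAI-compatible `/chat/completions` call in each mode. Two things are assumptions to verify against the provider docs: the model id `kimi-k2.6` and the `thinking` field used to switch reasoning off. The script only prints the body; it makes no network call.

```python
import json


def build_chat_body(prompt, model, thinking):
    """Build the JSON body for an OpenAI-compatible /chat/completions call."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if not thinking:
        # ASSUMPTION: the field that switches thinking off; check the provider docs.
        body["thinking"] = {"type": "disabled"}
    return body


def split_reply(message):
    """Separate the reasoning trace (if any) from the final answer."""
    return message.get("reasoning_content"), message.get("content", "")


# "kimi-k2.6" is a placeholder model id, not confirmed by the article.
deep = build_chat_body("Review this diff for race conditions.", "kimi-k2.6", thinking=True)
fast = build_chat_body("Classify this ticket: billing or bug?", "kimi-k2.6", thinking=False)
print(json.dumps(fast, indent=2))
```

In a workflow, an upstream node can set a boolean per item and pass it as `thinking`, so routine classification steps use instant mode while review steps keep the reasoning trace.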

The real question is whether to integrate now or wait. Moonshot has shipped five major model updates in nine months — a cadence that suggests K2.7 could land by mid-2026. But K2.6 represents a qualitative shift, not just an incremental improvement. The long-horizon coding stability, the 3x swarm scaling, and the Claw Groups preview collectively mark the point where agentic coding stops being a demo and starts being infrastructure. If you’re building workflows that depend on autonomous agent execution — code review pipelines, automated documentation generation, multi-step data processing — K2.6 is the first version where those workflows are likely to survive contact with reality.

The pricing reinforces the case. At $0.60 input / $3.00 output per million tokens, with automatic caching reducing repeated prompt costs by 75-83%, K2.6 makes long-running agent loops economically viable in a way that closed-source models simply don’t. For an SMB running n8n workflows that trigger 50-200 agent calls per day, the monthly cost difference between K2.6 and Claude Opus 4.6 could be the difference between “experiment” and “production.”
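The 75-83% range follows from the cache-hit prices quoted earlier ($0.10 to $0.15 per million tokens) against the $0.60 uncached input price. A quick check:

```python
input_price = 0.60  # USD per million uncached input tokens
cache_prices = (0.15, 0.10)  # quoted range for cache hits

# Fraction of the input price saved when a prompt prefix is served from cache.
savings = [1 - cache / input_price for cache in cache_prices]
print(", ".join(f"{s:.0%}" for s in savings))  # prints: 75%, 83%
```

The saving applies only to the cached portion of the input; output tokens are billed at the full $3.00 rate either way.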

Bottom line

K2.6 retains the same 1T-parameter MoE architecture as K2.5 but adds three capabilities that change its operational profile: long-horizon coding that holds coherence past 12 hours and 4,000 tool calls, Agent Swarm scaling from 100 to 300 sub-agents with a new Skills system, and Claw Groups as a heterogeneous multi-model coordination layer. The benchmark improvements are concentrated exactly where K2.5 was weakest — SWE-Bench Pro (+7.9), Terminal-Bench 2.0 (+15.9 percentage points), and BrowseComp Swarm (+7.9). If you’re already running K2.5 in production with no pain, there’s no urgency. If you’re building new agent infrastructure or hitting the limits of K2.5’s session stability, K2.6 is the version where the math starts working in your favor.
