The landscape of artificial intelligence shifted on April 20, 2026, when Moonshot AI released Kimi K2.6. For years, the “frontier” of AI performance was a walled garden occupied exclusively by proprietary models from giants like OpenAI and Anthropic. Kimi K2.6, a trillion-parameter, open-weight Mixture-of-Experts (MoE) model, challenges that hierarchy. By posting state-of-the-art results on agentic coding and terminal benchmarks, K2.6 shows that an open-weight model can match and, on those benchmarks, exceed premium models like GPT-5.4 and Claude Opus 4.6. For Small and Medium Businesses (SMBs), this isn’t just a technical curiosity; it is an opportunity to deploy enterprise-grade intelligence at a fraction of previous costs.

Benchmark showdown: The 2026 frontier leaderboard

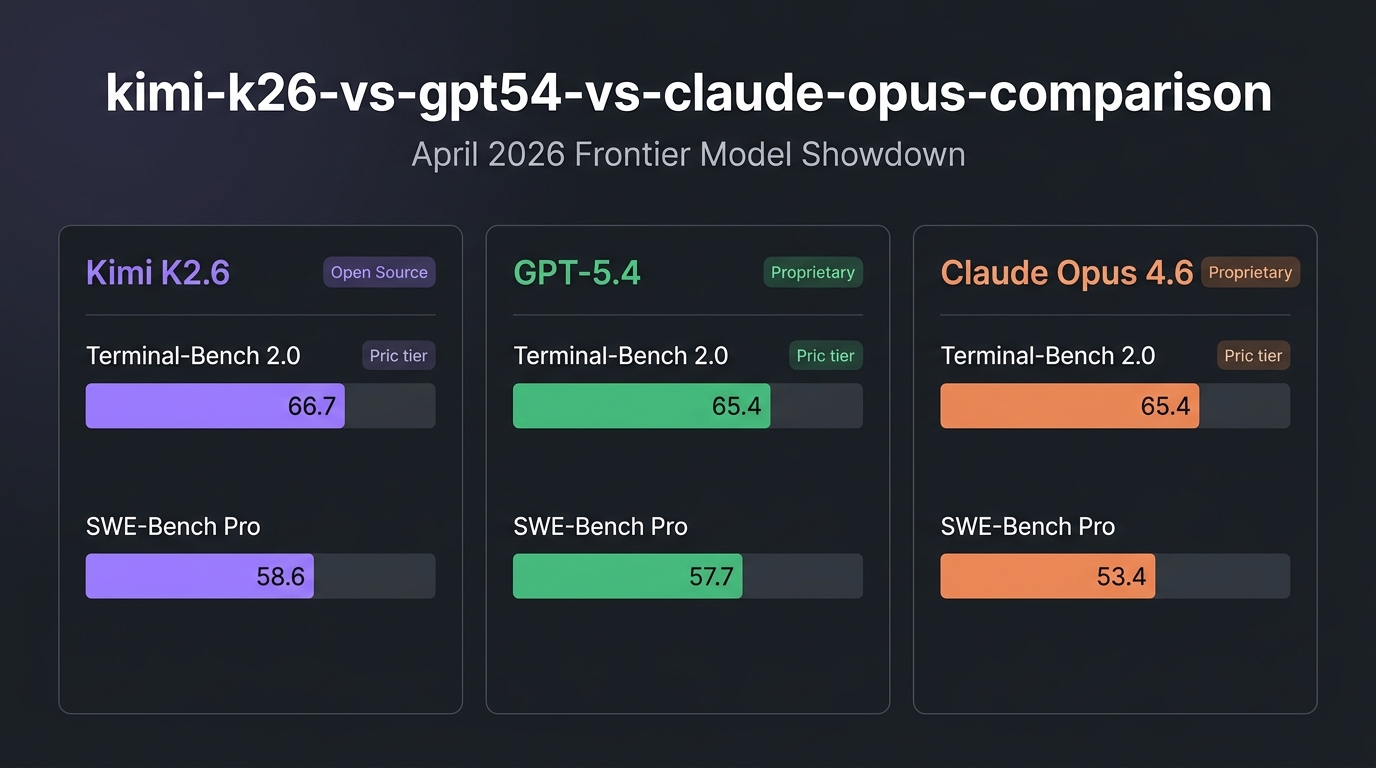

The most revealing data comes from Terminal-Bench 2.0, a benchmark specifically designed to measure an AI’s ability to navigate complex, sandboxed terminal environments. Running on the Terminus-2 agent framework, Kimi K2.6 scored 66.7, ahead of GPT-5.4 and Claude Opus 4.6, which both landed at 65.4. This benchmark matters because it reflects real-world software engineering tasks: debugging asynchronous code, managing system architectures, and executing multi-step deployment scripts.

| Benchmark / Metric | Kimi K2.6 (Open) | GPT-5.4 (Proprietary) | Claude Opus 4.6 (Proprietary) |
|---|---|---|---|
| Terminal-Bench 2.0 | 66.7 | 65.4 | 65.4 |
| SWE-Bench Pro | 58.6% | 57.7% | 53.4% |
| GPQA Diamond | 90.5% | 92.8% | 91.3% |
| AIME 2026 (Math) | 96.4% | 99.2% | 96.7% |
| Context Window | 256K tokens | 1.1M tokens | 1.0M tokens |

GPT-5.4 keeps the lead in competition-level mathematics (AIME 2026, 99.2% vs. 96.4%) and scientific reasoning (GPQA Diamond, 92.8% vs. 90.5%), but Kimi K2.6 leads in agentic coding. On the SWE-Bench Pro leaderboard, which tests a model’s ability to resolve real GitHub issues involving multiple files and long-horizon execution, K2.6 tops the field with a 58.6% resolution rate, 0.9 points ahead of GPT-5.4 and 5.2 points ahead of Claude Opus 4.6. This suggests that while proprietary models are excellent “thinkers,” K2.6 currently has the edge as a “doer” for autonomous engineering tasks. For a deeper comparison of how proprietary coding models stack up against each other, our earlier showdown provides additional context on the 2026 frontier landscape.
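To make the size of each lead concrete, here is a minimal sketch that recomputes the leader and its margin over the runner-up for every benchmark in the table. The scores are copied from the table; the function names are illustrative only.

```python
# Scores copied from the comparison table above (higher is better).
SCORES = {
    "Terminal-Bench 2.0": {"Kimi K2.6": 66.7, "GPT-5.4": 65.4, "Claude Opus 4.6": 65.4},
    "SWE-Bench Pro": {"Kimi K2.6": 58.6, "GPT-5.4": 57.7, "Claude Opus 4.6": 53.4},
    "GPQA Diamond": {"Kimi K2.6": 90.5, "GPT-5.4": 92.8, "Claude Opus 4.6": 91.3},
    "AIME 2026": {"Kimi K2.6": 96.4, "GPT-5.4": 99.2, "Claude Opus 4.6": 96.7},
}


def leader_and_margin(results):
    """Return (leader, margin over the runner-up) for one benchmark."""
    ranked = sorted(results.items(), key=lambda kv: kv[1], reverse=True)
    (leader, best), (_, second) = ranked[0], ranked[1]
    return leader, round(best - second, 1)


def summarize(scores):
    """Map each benchmark name to its (leader, margin) pair."""
    return {bench: leader_and_margin(res) for bench, res in scores.items()}


if __name__ == "__main__":
    for bench, (leader, margin) in summarize(SCORES).items():
        print(f"{bench}: {leader} leads by {margin} points")
```

The margins are small in every row, so it is worth rerunning a comparison like this whenever new scores are published.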

Swarm intelligence: Orchestrating 300 sub-agents

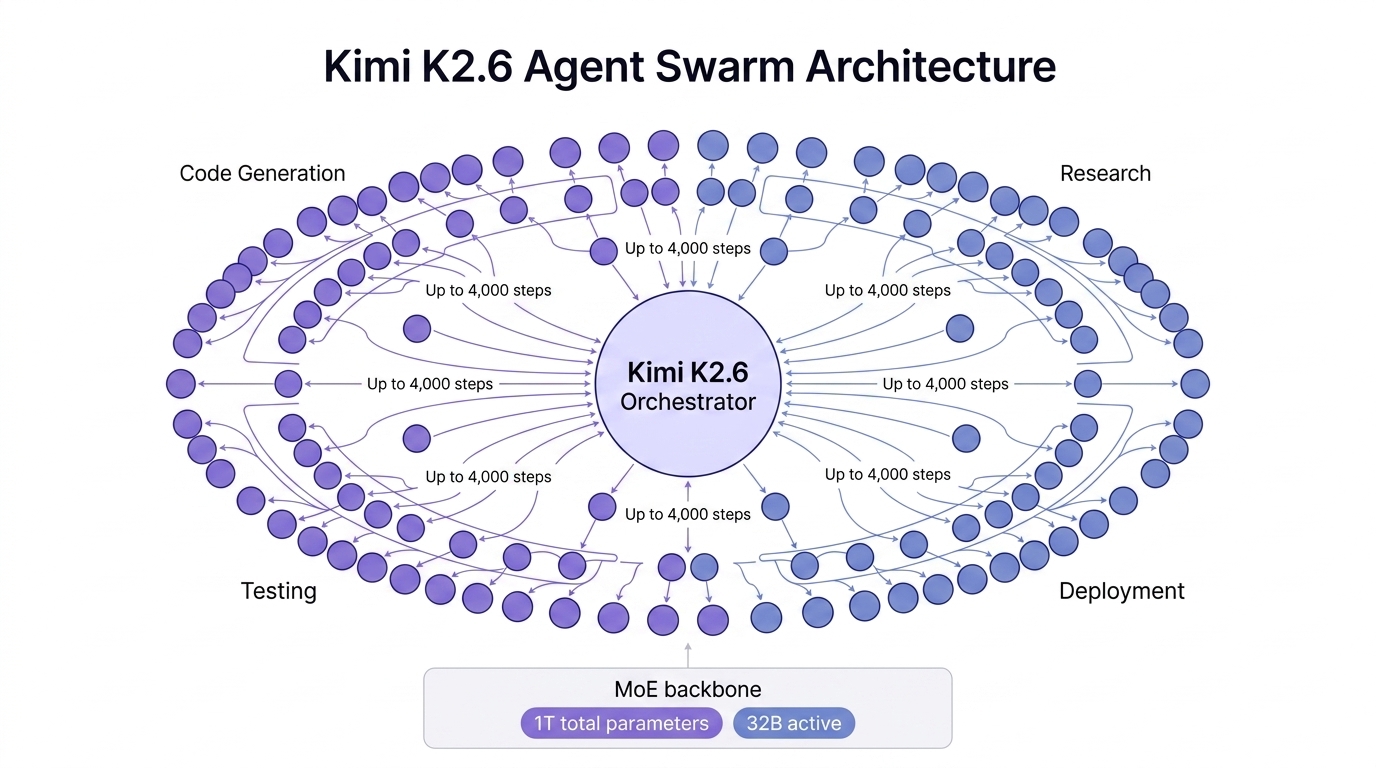

The technical “secret sauce” behind Kimi K2.6’s success is its native Agent Swarm orchestration. Unlike previous generations that relied on a single large model to handle a task sequentially, K2.6 is designed to horizontally scale to 300 sub-agents. This swarm can execute up to 4,000 coordinated steps, dynamically decomposing a massive project—such as building a full-stack application from scratch—into parallel, domain-specialized subtasks.
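The fan-out pattern described here can be sketched with plain `asyncio`. This is a generic illustration of bounded parallel decomposition, not Moonshot’s orchestration API: `run_sub_agent` is a hypothetical stand-in for a real model call, and only the 300-agent ceiling comes from the article.

```python
import asyncio

MAX_SUB_AGENTS = 300  # concurrency ceiling quoted in the article


async def run_sub_agent(subtask: str) -> str:
    """Hypothetical stand-in for one sub-agent; a real one would call a model."""
    await asyncio.sleep(0)  # yield control, simulating I/O-bound work
    return f"done: {subtask}"


async def run_swarm(subtasks: list[str], limit: int = MAX_SUB_AGENTS) -> list[str]:
    """Fan subtasks out to at most `limit` concurrent workers, then gather results."""
    gate = asyncio.Semaphore(limit)

    async def guarded(subtask: str) -> str:
        async with gate:  # blocks once `limit` sub-agents are in flight
            return await run_sub_agent(subtask)

    # gather preserves input order, so results line up with subtasks
    return await asyncio.gather(*(guarded(s) for s in subtasks))


if __name__ == "__main__":
    plan = ["design schema", "write API handlers", "build UI", "write tests"]
    for line in asyncio.run(run_swarm(plan)):
        print(line)
```

The semaphore is the design choice that matters: it lets the planner emit as many subtasks as it likes while keeping the number of live sub-agents under a fixed cap.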

Architecturally, K2.6 uses a Mixture-of-Experts (MoE) backbone with 1 trillion total parameters, of which only roughly 32 billion (about 3.2%) are active per forward pass. Because each token touches only that active subset, per-token compute is closer to that of a 32-billion-parameter dense model, which keeps inference latency low even though the full set of weights must still be loaded. For developers, this means the model can “think” through a problem in the background while sub-agents verify code, write tests, and document progress simultaneously. This native swarm capability is currently missing from both GPT-5.4 and Claude Opus 4.6, which still primarily rely on single-stream reasoning or external, higher-latency frameworks to achieve similar results.
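The sparse-activation idea can be illustrated with a toy top-k router. This is a generic MoE sketch, not K2.6’s actual routing code: the four toy experts, the gate logits, and `k=2` are all made up for illustration, and only the two parameter counts come from the article.

```python
import math

TOTAL_PARAMS = 1_000_000_000_000  # 1 trillion total parameters (from the article)
ACTIVE_PARAMS = 32_000_000_000    # roughly 32 billion active per forward pass


def active_fraction(active: int, total: int) -> float:
    """Share of the weights that participate in a single forward pass."""
    return active / total


def top_k_route(gate_logits: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    order = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = order[:k]
    peak = max(gate_logits[i] for i in chosen)  # subtract max for numerical stability
    exps = {i: math.exp(gate_logits[i] - peak) for i in chosen}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}


def moe_forward(x: float, experts, gate_logits: list[float], k: int) -> float:
    """Weighted sum over only the selected experts; unselected experts never run."""
    weights = top_k_route(gate_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())


if __name__ == "__main__":
    # Four toy "experts"; a real MoE layer uses feed-forward sub-networks.
    experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
    print(f"active share: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
    print(moe_forward(3.0, experts, gate_logits=[0.1, 2.0, 2.0, -1.0], k=2))
```

In the demo, experts 1 and 2 tie on gate score and split the weight evenly, while experts 0 and 3 are skipped entirely. That skipping is where the compute saving comes from.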

Budget implications: The cost of the frontier

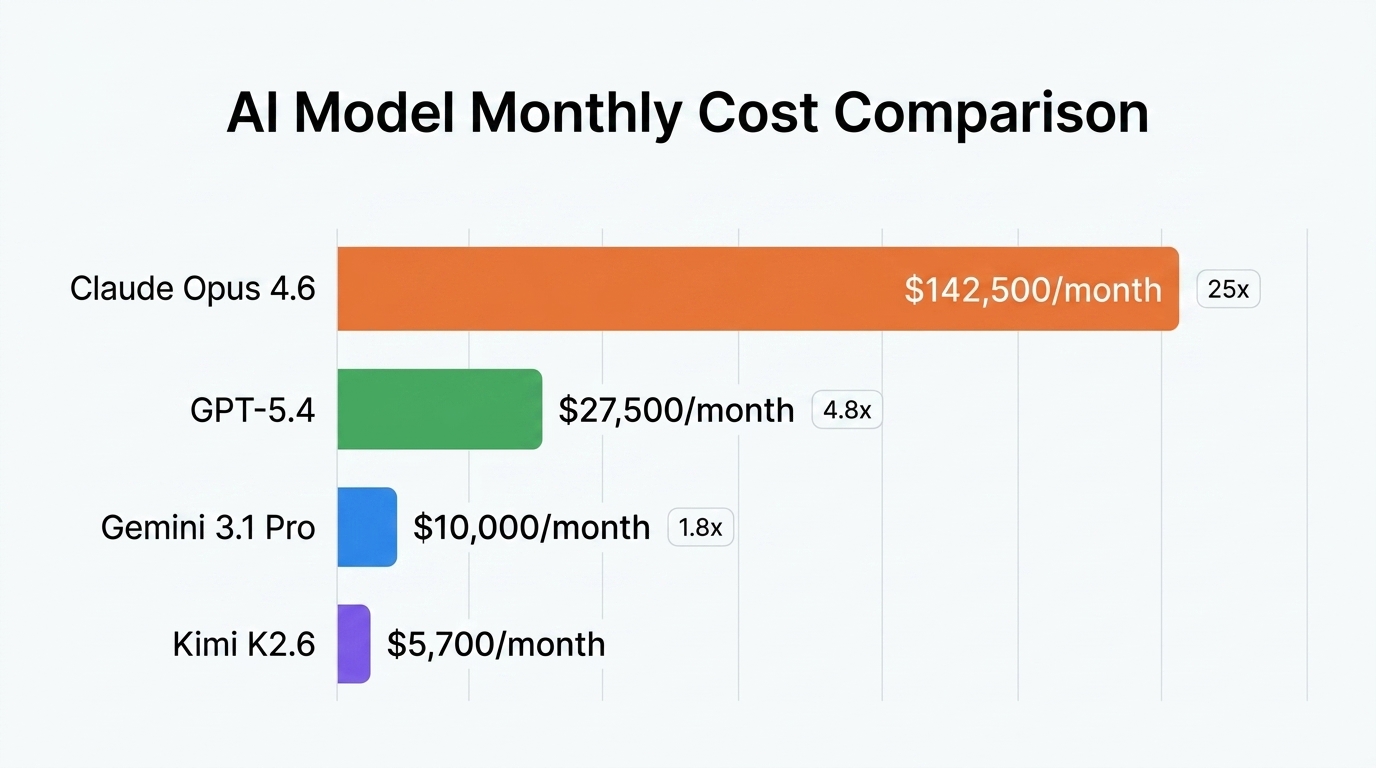

For SMBs and startups, the most compelling argument for Kimi K2.6 isn’t just the performance; it’s the shift in economics. As of April 2026, the price gap between open-weight models and proprietary APIs is hard to ignore. While Claude Opus 4.6 remains one of the strongest models for nuanced creative work, its API pricing ($5.00 per million input tokens, $25.00 per million output tokens) makes high-volume agentic work prohibitively expensive for many companies.

In contrast, Kimi K2.6 is available at approximately $0.75 per million input tokens and $3.50 per million output tokens on Moonshot’s platform, roughly one-seventh of the Claude Opus 4.6 rates. Furthermore, because it is open-weight under a Modified MIT License, companies can choose to self-host the model on their own GPU clusters (such as an 8xH100 node). Once the hardware is paid for, the marginal cost per token falls to power and operations rather than per-token API fees. The license is permissive, requiring attribution only for organizations exceeding 100 million monthly active users, which makes K2.6 an attractive default for commercial AI development in 2026.
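A quick way to see what these list prices mean for a budget is to price a fixed monthly workload on both APIs. The per-million-token prices below are the ones quoted in this section; the workload of 500 million input and 100 million output tokens is a hypothetical example, not a measured figure.

```python
# USD per one million tokens, as quoted in the article.
PRICES_PER_MILLION = {
    "Claude Opus 4.6": {"input": 5.00, "output": 25.00},
    "Kimi K2.6": {"input": 0.75, "output": 3.50},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """API cost in USD for a given token volume at list price."""
    price = PRICES_PER_MILLION[model]
    return (input_tokens / 1_000_000) * price["input"] + (
        output_tokens / 1_000_000
    ) * price["output"]


if __name__ == "__main__":
    # Hypothetical agentic workload: 500M input and 100M output tokens per month.
    workload = {"input_tokens": 500_000_000, "output_tokens": 100_000_000}
    costs = {model: monthly_cost(model, **workload) for model in PRICES_PER_MILLION}
    for model, usd in costs.items():
        print(f"{model}: ${usd:,.2f} per month")
    print(f"ratio: {costs['Claude Opus 4.6'] / costs['Kimi K2.6']:.1f}x")
```

For this assumed workload the bill is $5,000 on Claude Opus 4.6 versus $725 on Kimi K2.6. The exact ratio depends on the input-to-output mix, since the two price gaps differ slightly.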

Productionizing the swarm with n8n and automation

Building a trillion-parameter agent swarm is one thing; wiring it into a production business workflow is another. This is where the engineering overhead often halts SMB adoption. To bridge this gap, many companies are bypassing complex custom Python deployments in favor of n8n automation. By using n8n’s LangChain nodes or the new native Kimi Orchestrator nodes, businesses can wire K2.6’s 300-agent swarms directly into their CRM, Slack, or GitHub repositories.

Working with a specialized n8n automation partner allows teams to implement “Agentic Operations” (AgOps) without hiring a dedicated AI engineering team. A typical workflow might involve K2.6 monitoring a support inbox, spawning a research sub-agent to check documentation, a coding sub-agent to verify a bug, and a summary sub-agent to draft a response—all triggered by a single incoming email. With K2.6’s native video input and 256K context, these agents can even “watch” user-submitted screen recordings to identify UI bugs before a human developer ever opens the ticket.
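The support-inbox workflow above maps onto a simple dependency graph: research and bug verification can run in parallel, and the summary waits for both. The sketch below shows that shape in plain Python. Every agent function is a hypothetical stub, and in an n8n deployment the trigger and each step would be workflow nodes rather than Python functions.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Email:
    sender: str
    body: str


async def research_agent(email: Email) -> str:
    """Hypothetical stub: would search product documentation for the issue."""
    await asyncio.sleep(0)
    return f"docs checked for: {email.body}"


async def coding_agent(email: Email) -> str:
    """Hypothetical stub: would try to reproduce and verify the reported bug."""
    await asyncio.sleep(0)
    return f"reproduction attempted for: {email.body}"


async def summary_agent(email: Email, findings: list[str]) -> str:
    """Hypothetical stub: would draft a reply from the other agents' findings."""
    await asyncio.sleep(0)
    return f"Draft reply to {email.sender}: " + "; ".join(findings)


async def handle_email(email: Email) -> str:
    """One incoming email triggers two parallel sub-agents, then a summary step."""
    findings = await asyncio.gather(research_agent(email), coding_agent(email))
    return await summary_agent(email, list(findings))


if __name__ == "__main__":
    incoming = Email(sender="user@example.com", body="export button crashes")
    print(asyncio.run(handle_email(incoming)))
```

Keeping the summary step separate from the fan-out makes it easy to add further sub-agents later without touching the reply logic.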

Conclusion: The open-source victory

As of April 2026, the question is no longer whether open-source models can compete with the frontier—they are currently leading it in the categories that drive business value. Kimi K2.6’s victory on Terminal-Bench 2.0 and SWE-Bench Pro signifies a new era where the most capable “workhorse” models are accessible to everyone, not just those with “big tech” budgets. For SMBs, the path forward is clear: leverage the cost-efficiency of open-weight infrastructure like K2.6, use swarm orchestration to handle complex tasks, and productionize these workflows through low-code platforms like n8n. The frontier has been democratized, and the competitive advantage now belongs to those who can automate the fastest.
