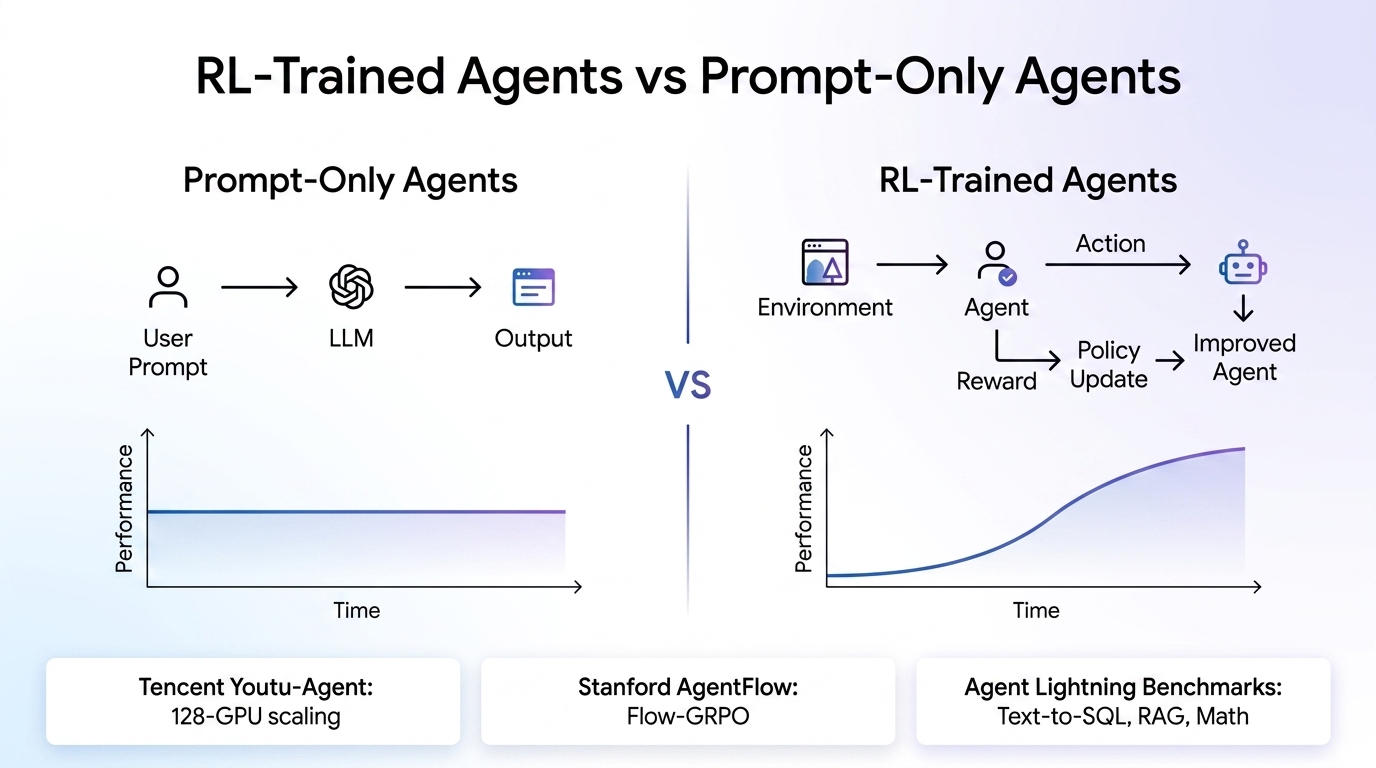

Prompt engineering has been the default strategy for getting AI agents to behave in production. You craft the perfect system prompt, add a few-shot examples, maybe layer in some retrieval-augmented generation (RAG), and ship it. But as agents tackle longer, multi-step workflows with real tools and real consequences, that approach is running into a wall. Early mistakes compound. Tool calls go off the rails. The agent can’t recover from its own errors because it was never trained to.

A growing body of evidence from open-source RL frameworks, particularly Microsoft’s Agent Lightning, shows that agents fine-tuned with reinforcement learning (RL) are delivering measurable, sometimes dramatic improvements over prompt-only baselines. Three efforts built on or around Agent Lightning (Tencent’s Youtu-Agent, Stanford’s AgentFlow, and Agent Lightning’s own benchmark suite) offer concrete data points showing that this shift is already happening.

The prompt-only ceiling

Prompt engineering is fundamentally static. You write instructions, test them against a handful of examples, and hope they generalize. For single-turn tasks—summarization, classification, simple question answering—this works well enough. But agents in production are different. They run multi-turn workflows. They call tools. They hit edge cases the prompt author never anticipated. A 2025 analysis from the NeurIPS Efficient Reasoning Workshop highlighted that multi-step agent trajectories often span five to ten or more turns, where an error at turn three silently corrupts everything downstream.

Reinforcement learning addresses this by letting the agent learn from experience. Instead of relying entirely on a hand-crafted prompt, the agent tries tasks, receives reward signals based on outcomes, and adjusts its policy. The key insight driving adoption in 2025 and 2026 is that RL training now works with hundreds or thousands of examples, not millions, making it viable for teams that don’t have the resources of a major AI lab.
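The try-reward-adjust loop can be illustrated with a toy policy-gradient (REINFORCE) sketch. It is a deliberate simplification that assumes a made-up task: the “agent” picks one of two hypothetical strategies and receives a reward of 1 on success. The success rates are invented. Real agent training updates an LLM’s weights with algorithms such as PPO or GRPO, not two logits.

```python
import math
import random

# Hypothetical task: each episode the agent picks one of two strategies.
# These success probabilities are invented for illustration.
SUCCESS_PROB = {"answer_directly": 0.3, "call_tool_then_answer": 0.8}
ACTIONS = list(SUCCESS_PROB)


def softmax(logits):
    m = max(logits)                       # subtract max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(episodes=2000, lr=0.1, seed=0):
    rng = random.Random(seed)
    logits = [0.0] * len(ACTIONS)         # the "policy": one logit per strategy
    baseline = 0.0                        # running mean reward, reduces variance
    for _ in range(episodes):
        probs = softmax(logits)
        a = rng.choices(range(len(ACTIONS)), weights=probs)[0]   # try the task
        success = rng.random() < SUCCESS_PROB[ACTIONS[a]]
        reward = 1.0 if success else 0.0                          # outcome signal
        advantage = reward - baseline
        baseline += 0.01 * (reward - baseline)
        # REINFORCE: d log softmax(a) / d logit_i = 1[i == a] - p_i
        for i in range(len(logits)):
            grad = (1.0 if i == a else 0.0) - probs[i]
            logits[i] += lr * advantage * grad                    # adjust policy
    return dict(zip(ACTIONS, softmax(logits)))


if __name__ == "__main__":
    print(train())
```

Over training, the policy should move most of its probability onto the strategy that succeeds more often, and no one has to rewrite a prompt for that to happen.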

Tencent Youtu-Agent: Stable 128-GPU scaling for real tasks

Tencent’s Youtu Lab open-sourced its Youtu-Agent framework in September 2025, and it has since become one of the most compelling case studies in production-grade agent RL training. The team built a modified branch of Agent Lightning and verified stable RL training across 128 GPUs on two distinct task categories: math and code reasoning (using the ReTool recipe) and agentic search (using the SearchR1 recipe).

The numbers are striking. On the AIME 2024 math benchmark, RL training with Youtu-Agent improved Qwen2.5-7B accuracy from 10% to 45%, a 4.5x relative improvement (35 percentage points absolute). The framework also achieved a 40% reduction in training iteration time through integration with distributed training infrastructure. Perhaps most importantly, the team demonstrated consistent convergence at 128-GPU scale, a configuration where many RL implementations become unstable or inefficient due to communication overhead.

| Metric | Before RL Training | After RL Training |
|---|---|---|
| AIME 2024 accuracy (Qwen2.5-7B) | 10% | 45% |
| Training iteration time | Baseline | 40% shorter |
| Verified GPU scale | – | 128 GPUs, stable |
| Task coverage | – | Math, code, search |
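The headline figures combine two different kinds of change. The short Python check below uses only the numbers quoted above. The accuracy jump is 4.5x in relative terms but 35 percentage points in absolute terms. A 40% reduction in iteration time corresponds to about 1.67x as many iterations per unit of wall-clock time.

```python
def relative_gain(before: float, after: float) -> float:
    """Ratio of new to old value, e.g. 10% -> 45% is 4.5x."""
    return after / before


def absolute_gain_pp(before: float, after: float) -> float:
    """Difference in percentage points."""
    return after - before


def speedup_from_time_reduction(reduction: float) -> float:
    """Convert a fractional cut in per-iteration time into a throughput multiplier."""
    return 1.0 / (1.0 - reduction)


print(relative_gain(10, 45))                          # 4.5
print(absolute_gain_pp(10, 45))                       # 35
print(round(speedup_from_time_reduction(0.40), 2))    # 1.67
```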

What makes this relevant for smaller organizations is the infrastructure implication. If Tencent can achieve stable 128-GPU distributed RL training with an open-source framework, it signals that the tooling is maturing fast enough for teams with far fewer resources to run meaningful RL experiments on smaller GPU clusters or even cloud-based training instances.

Stanford AgentFlow: Tackling the hardest RL problem in agents

If Youtu-Agent shows that RL training scales, AgentFlow shows that it works on the hardest class of agent problems: long-horizon tasks with sparse rewards. AgentFlow was developed by researchers at Stanford, accepted at ICLR 2026, and is listed as an Agent Lightning community project. It introduces the Flow-GRPO (Flow-based Group Refined Policy Optimization) algorithm.

The core problem Flow-GRPO solves is credit assignment. Consider a multi-turn agent trajectory, such as a search task where the agent must plan a query, execute it, verify results, and synthesize an answer. The reward (success or failure) arrives only at the end. Traditional RL struggles to work out which intermediate decisions were good and which were bad. Flow-GRPO addresses this by decomposing multi-turn optimization into a sequence of tractable single-turn policy updates. It broadcasts the trajectory-level outcome to every turn so that local decisions align with global success.
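Here is a minimal Python sketch of the broadcasting idea. It assumes GRPO-style group normalization, in which each rollout’s final reward is compared with the mean and standard deviation of a group of rollouts for the same query. It also assumes a binary success reward. The trajectories are hypothetical, and the sketch illustrates the concept only; it is not AgentFlow’s implementation.

```python
from statistics import mean, pstdev


def group_advantages(final_rewards, eps=1e-6):
    """GRPO-style advantage: each rollout's outcome relative to its group."""
    mu = mean(final_rewards)
    sigma = pstdev(final_rewards)
    return [(r - mu) / (sigma + eps) for r in final_rewards]


def broadcast_to_turns(trajectories, final_rewards):
    """Give every turn of a rollout that rollout's trajectory-level advantage,
    turning one multi-turn problem into many single-turn (turn, advantage) samples."""
    samples = []
    for turns, adv in zip(trajectories, group_advantages(final_rewards)):
        for turn in turns:
            samples.append((turn, adv))
    return samples


# Hypothetical group of four rollouts for the same search question.
trajectories = [
    ["plan query", "search", "verify", "answer"],
    ["plan query", "search", "answer"],
    ["search", "answer"],
    ["plan query", "search", "search again", "verify", "answer"],
]
final_rewards = [1.0, 0.0, 0.0, 1.0]  # success/failure known only at the end

for turn, adv in broadcast_to_turns(trajectories, final_rewards):
    print(f"{adv:+.2f}  {turn}")
```

Every turn in a successful rollout receives the same positive advantage, and every turn in a failed rollout receives the same negative one. Each turn can then be trained as an ordinary single-turn sample. The actual policy update, a clipped policy-gradient step per turn in GRPO-style methods, is omitted here.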

The reported results are strong. With a 7B-parameter backbone (Qwen2.5-7B-Instruct), AgentFlow outperforms top baselines across ten benchmarks, with the following average accuracy gains:

- +14.9% on search tasks (Bamboogle, 2Wiki, HotpotQA, Musique)

- +14.0% on agentic reasoning (GAIA benchmark)

- +14.5% on math

- +4.1% on science

Critically, on these benchmarks the 7B model also outperforms GPT-4o. OpenAI has not disclosed GPT-4o’s parameter count. If the commonly cited outside estimate of roughly 200 billion parameters is accurate, a model about 30x smaller is beating a frontier proprietary system after RL training. For any business evaluating the ROI of agent fine-tuning, this kind of result shifts the calculus from “nice to have” to “strategic advantage.”

Agent Lightning’s own benchmarks: Continuous improvement across three domains

The Agent Lightning framework itself (v0.3.1 as of December 2025, with active development through February 2026) was evaluated on three real-world scenarios using a Llama 3.2 3B Instruct backbone—a deliberately small model to demonstrate what RL can achieve without massive compute:

- Text-to-SQL (Spider benchmark, LangChain implementation): A three-agent system handling SQL generation, checking, and rewriting. RL training simultaneously optimized two agents, significantly improving the accuracy of generating executable SQL from natural language queries.

- RAG on multi-hop QA (MuSiQue benchmark, OpenAI Agents SDK): The agent learned to generate more effective search queries and reason better from retrieved content across a large Wikipedia database.

- Math tool use (CalcX benchmark, AutoGen implementation): RL training taught the model when and how to call a calculator tool and integrate results into reasoning, directly increasing accuracy.
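The bullets describe what RL optimized but not how each outcome was scored. For text-to-SQL, one common choice is an execution-match reward: run the generated query and compare its result rows with those of a reference query. The sketch below assumes that reward and uses a toy in-memory SQLite table. `sql_reward` is a hypothetical helper, and Agent Lightning’s Spider example may compute its reward differently.

```python
import sqlite3
from collections import Counter


def sql_reward(conn, predicted_sql, gold_sql):
    """Hypothetical execution-match reward: 1.0 if the predicted query runs and
    returns the same rows as the gold query (order-insensitive, duplicates
    counted), 0.0 otherwise, including when the predicted SQL fails to run."""
    try:
        predicted = conn.execute(predicted_sql).fetchall()
    except sqlite3.Error:
        return 0.0
    gold = conn.execute(gold_sql).fetchall()
    return 1.0 if Counter(predicted) == Counter(gold) else 0.0


# Toy database standing in for a benchmark schema.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE singer (name TEXT, age INTEGER)")
conn.executemany("INSERT INTO singer VALUES (?, ?)",
                 [("Ana", 31), ("Bo", 25), ("Cy", 40)])

gold = "SELECT name FROM singer WHERE age > 30"
print(sql_reward(conn, "SELECT name FROM singer WHERE age >= 31", gold))  # 1.0
print(sql_reward(conn, "SELECT name FROM singer WHERE age > 20", gold))   # 0.0
print(sql_reward(conn, "SELEC name FROM singer", gold))                    # 0.0
```

Comparing results instead of SQL strings lets different but equivalent queries earn full reward. The trade-off is that a wrong query can still score 1.0 if it happens to return the right rows on this particular data.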

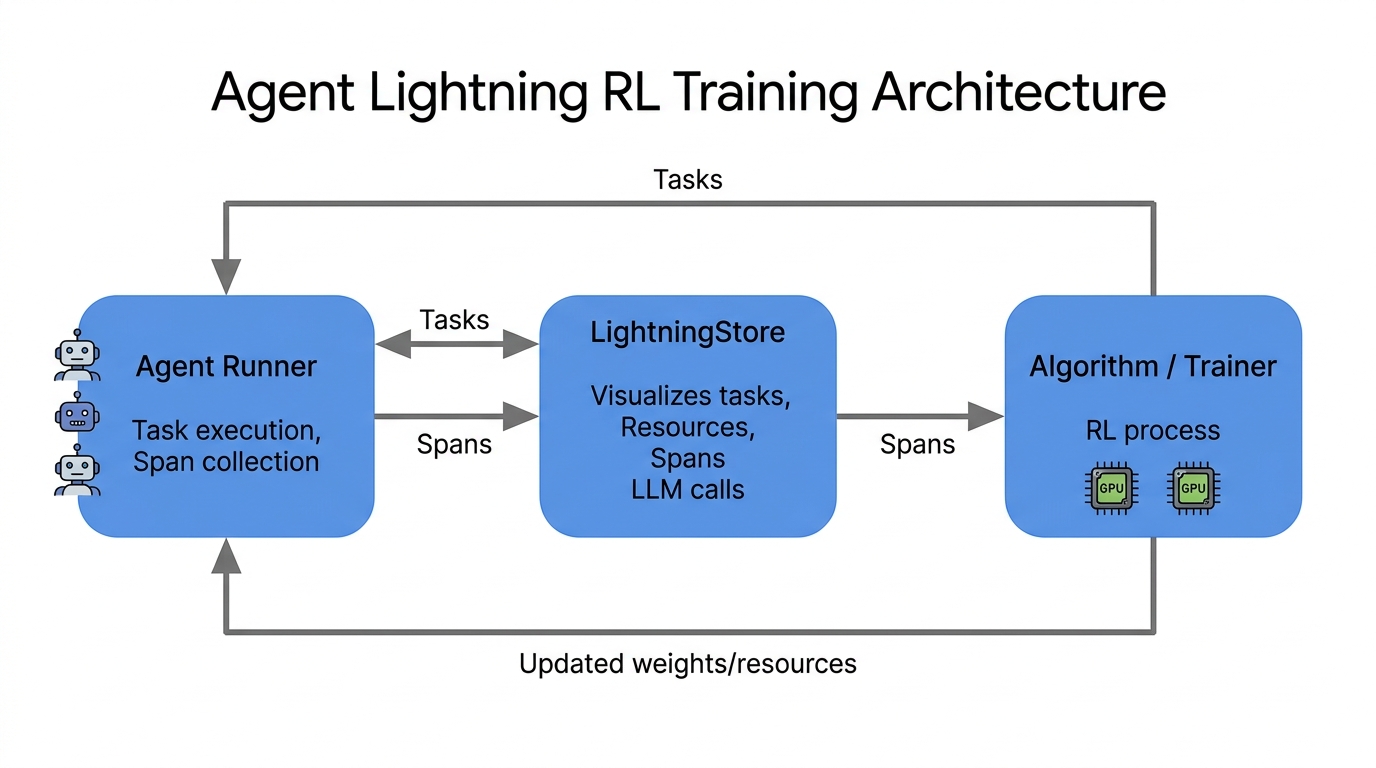

All three scenarios showed stable, continuous improvement curves on both training and test splits, with no signs of reward hacking or training instability. The framework’s core technical innovation—treating agent execution as a sequence of independent LLM transitions, each paired with its own reward via a credit assignment module—means that existing single-step RL algorithms like GRPO and PPO work out of the box. No special multi-step RL machinery required.
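The transition view can be sketched as a data structure. In this hypothetical Python version, a multi-call episode is flattened into independent (prompt, response, reward) records, and a pluggable credit function decides how the episode’s final reward is spread across calls. Names such as `LLMCall`, `Transition`, and `to_transitions` are illustrative and are not Agent Lightning’s API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class LLMCall:
    prompt: str
    response: str


@dataclass
class Transition:
    prompt: str
    response: str
    reward: float


# (call index, number of calls, final reward) -> reward for that call
CreditFn = Callable[[int, int, float], float]


def uniform_credit(index: int, num_calls: int, final_reward: float) -> float:
    """Simplest credit assignment: every call receives the episode's outcome."""
    return final_reward


def discounted_credit(gamma: float) -> CreditFn:
    """Calls closer to the outcome receive more credit."""
    def fn(index: int, num_calls: int, final_reward: float) -> float:
        return final_reward * gamma ** (num_calls - 1 - index)
    return fn


def to_transitions(calls: List[LLMCall], final_reward: float,
                   credit: CreditFn = uniform_credit) -> List[Transition]:
    """Flatten one episode into independent single-step training records."""
    n = len(calls)
    return [Transition(c.prompt, c.response, credit(i, n, final_reward))
            for i, c in enumerate(calls)]


# Hypothetical two-call episode (generate SQL, then check it).
episode = [
    LLMCall("Which singers are over 30? Write SQL.",
            "SELECT name FROM singer WHERE age > 30"),
    LLMCall("Check this SQL: SELECT name FROM singer WHERE age > 30",
            "Looks correct."),
]
for t in to_transitions(episode, final_reward=1.0, credit=discounted_credit(0.9)):
    print(t.reward, "|", t.response)
```

Each record is a plain single-step sample, so a trainer built for GRPO or PPO can consume it without knowing that the episode had several calls. Swapping the credit function changes how reward is attributed without touching the agent or the trainer.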

Why this matters for business AI automation

The practical takeaway from these case studies is straightforward: if your AI agent touches tools, runs multi-step workflows, or handles tasks where accuracy directly affects revenue, prompt-only approaches likely leave significant performance on the table. In these case studies the gap was not marginal. Youtu-Agent lifted the same 7B base model from 10% to 45% on AIME 2024, and AgentFlow’s RL-trained 7B model outperformed GPT-4o on its benchmarks.

For small and medium businesses investing in AI automation, the challenge isn’t whether RL fine-tuning works. The evidence is clear. The challenge is operational: setting up training infrastructure, defining reward functions, managing the training loop, and integrating the resulting model back into production workflows. This is where many organizations benefit from working with automation partners who can handle the end-to-end pipeline—from selecting the right base model and designing reward signals to deploying the trained agent inside business workflow tools like n8n.

Frameworks like Agent Lightning lower the technical barrier significantly. Because it decouples agent execution from model training, you can add RL capabilities to existing agents built with LangChain, OpenAI Agents SDK, AutoGen, CrewAI, or even plain Python OpenAI calls with virtually no code modification. The open-source ecosystem around it—including Youtu-Agent for scaling and AgentFlow for long-horizon tasks—provides battle-tested recipes rather than theoretical approaches.
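The decoupling pattern can be illustrated with a wrapper that records every model call while the agent’s own logic stays untouched. This is a hypothetical sketch of the idea, not Agent Lightning’s API. `fake_llm` stands in for a real model client, and the reward rule is invented for the example.

```python
import functools
from typing import Callable, List, Tuple

Trace = List[Tuple[str, str]]


def traced(llm_fn: Callable[[str], str], trace: Trace) -> Callable[[str], str]:
    """Wrap an LLM call so every (prompt, response) pair is recorded.
    The agent code that calls it does not change."""
    @functools.wraps(llm_fn)
    def wrapper(prompt: str) -> str:
        response = llm_fn(prompt)
        trace.append((prompt, response))
        return response
    return wrapper


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model client."""
    if prompt.startswith("Plan:"):
        return "multiply 6 by 7"
    return "42"


def agent(question: str, llm: Callable[[str], str]) -> str:
    """Existing agent logic: it only receives an llm callable."""
    plan = llm(f"Plan: {question}")
    return llm(f"Answer using plan '{plan}': {question}")


trace: Trace = []
answer = agent("What is 6 * 7?", traced(fake_llm, trace))
reward = 1.0 if answer == "42" else 0.0   # invented outcome check
print(answer, reward, len(trace))
```

The recorded trace and the outcome reward together are the kind of data a separate training process would consume. The agent function itself never imports anything training-related.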

Key takeaways

- RL training delivers improvements that prompt engineering alone cannot achieve, especially for multi-step agent workflows. Tencent’s Youtu-Agent raised AIME 2024 accuracy from 10% to 45% with the same base model (Qwen2.5-7B).

- A 7B model can outperform GPT-4o after domain-specific RL training. Stanford’s AgentFlow reported this in an evaluation spanning ten benchmarks in search, reasoning, math, and science.

- Tooling is no longer the main barrier. Agent Lightning (17k GitHub stars, MIT license) enables RL training with near-zero code changes, and Youtu-Agent has demonstrated stable training at 128-GPU scale.

- The credit assignment problem has practical solutions. Flow-GRPO and Agent Lightning’s hierarchical reward decomposition make long-horizon, sparse-reward training tractable.

- Integration matters as much as training. For businesses, the ROI of RL-fine-tuned agents depends on connecting them to real workflows—something automation platforms and partners can accelerate.

The trajectory is clear. Prompt engineering is the starting point, not the ceiling. The teams seeing the best results from AI agents in 2026 are the ones investing in RL fine-tuning—and the tooling to make it practical is now open, documented, and production-proven.
