As of April 2026, AI agent development has reached an inflection point. Early prototypes built on static Large Language Model (LLM) prompts proved the “agentic” concept, but production-grade performance requires more than clever engineering; it requires continuous learning. Reinforcement Learning (RL), the family of techniques behind AlphaGo and the RLHF stage of ChatGPT’s training, is a natural fit for this optimization. Until recently, however, applying RL to complex, multi-turn AI agents required massive code rewrites and specialized ML infrastructure. Agent Lightning (v0.3.1), an open-source framework from Microsoft Research, addresses this with a “Training-Agent Disaggregation” architecture. This article provides a 2026 technical breakdown of how Agent Lightning trains AI agents with near-zero code changes and why it is becoming a foundational layer for high-ROI automated workflows.

The challenge of multi-turn agent training

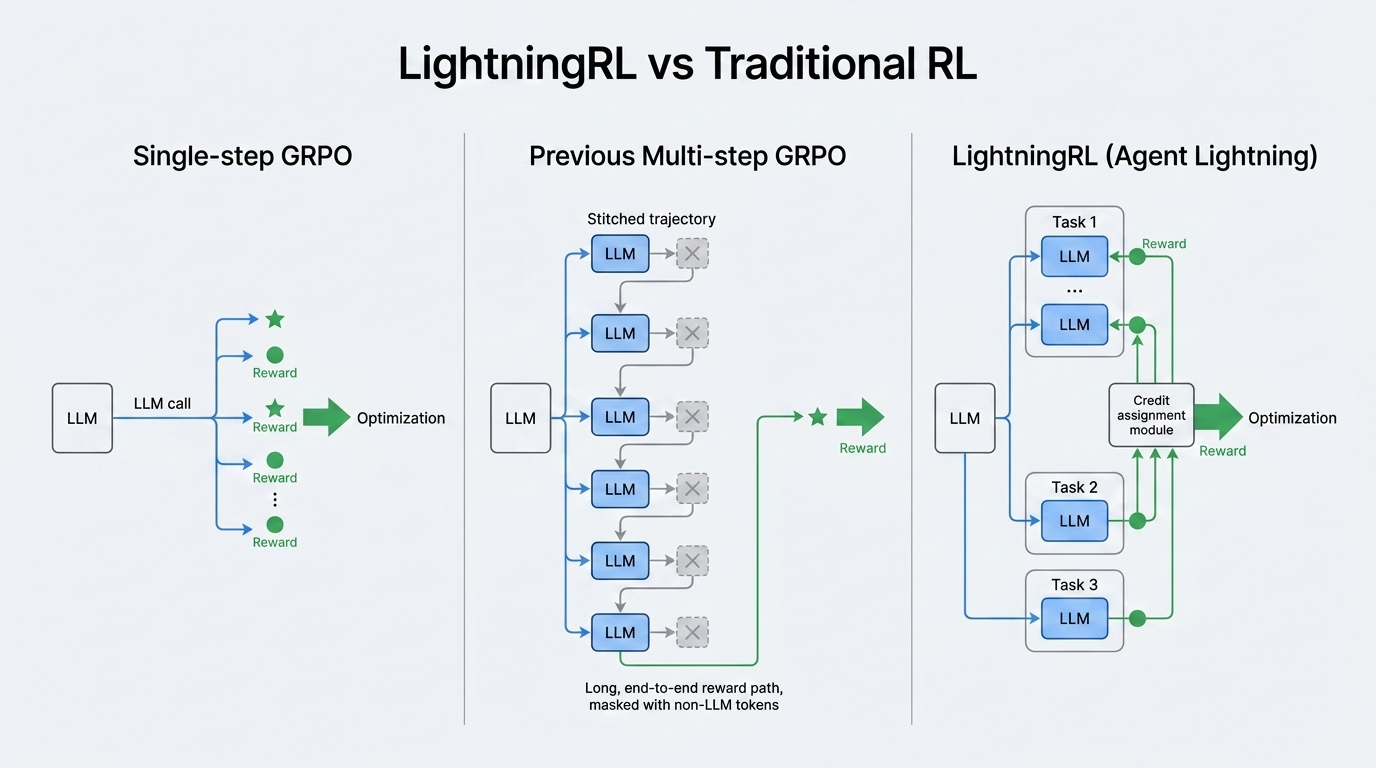

Traditional reinforcement learning for LLMs typically operates on single-turn interactions: the model receives a prompt, generates a response, and receives a reward. Agents, however, are inherently multi-turn. A single task might involve multiple LLM calls for reasoning, tool usage, error correction, and final synthesis. Training these agents using standard RL approaches (like PPO or GRPO) traditionally presents three technical hurdles:

- Code coupling: Developers must manually instrument every LLM call to track states, actions, and rewards, essentially rewriting their agent logic for the training framework.

- Trajectory stitching: Existing methods often stitch multiple turns into one long sequence with masking, which can exceed context windows and degrade model performance.

- Credit assignment: If an agent succeeds after 10 steps, which specific LLM call contributed most to the success? Assigning a single final reward to an entire complex sequence is often too “noisy” for the model to learn effectively.

Microsoft Agent Lightning addresses these by decoupling the “brain” (the training algorithm) from the “body” (the agent execution) through a middleware layer. This allows developers to use any framework (LangChain, AutoGen, CrewAI, or the OpenAI Agents SDK) without modifying their core logic.

Training-Agent Disaggregation: The architectural breakthrough

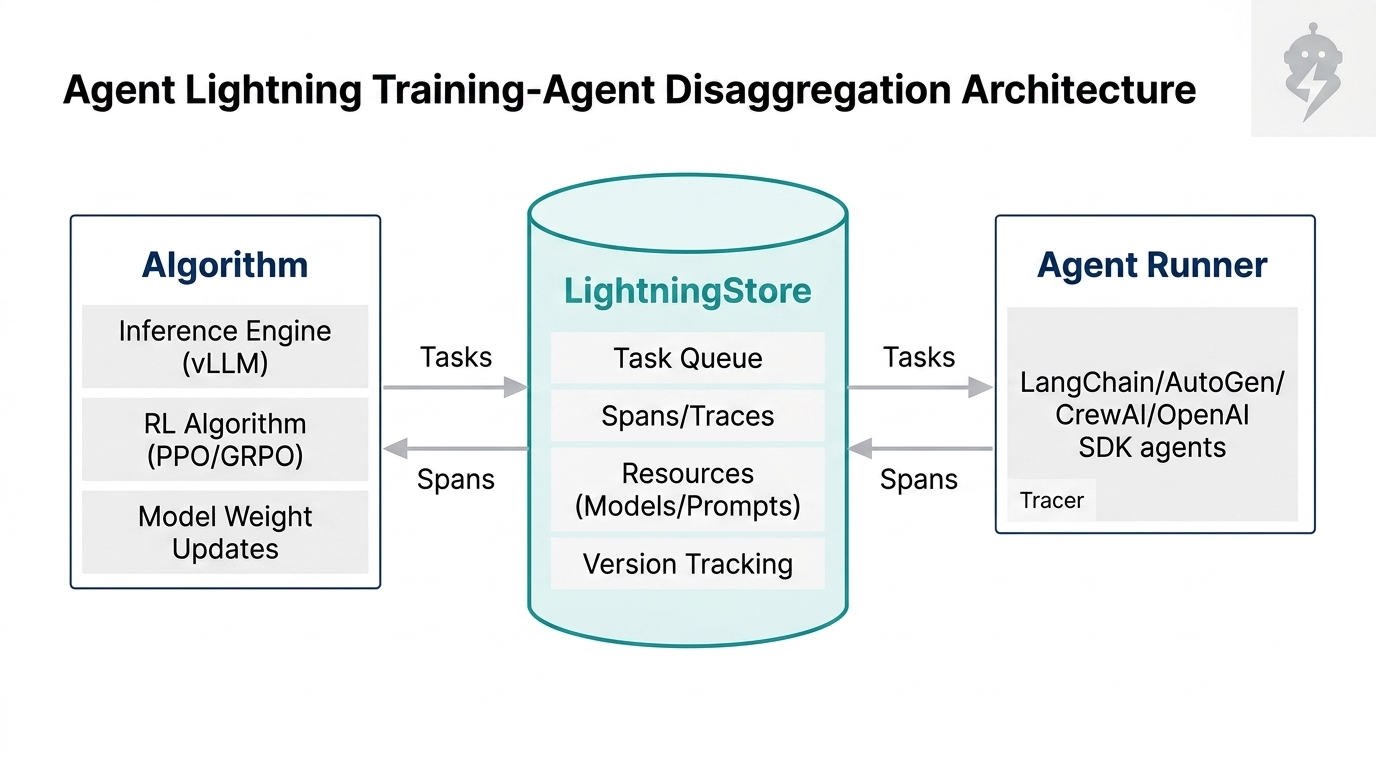

At the heart of Agent Lightning v0.3 is the Training-Agent Disaggregation (TA Disaggregation) architecture. This design separates the agent’s runtime execution from the compute-intensive training process. By doing so, it allows each component to scale independently: agent runners can run on lightweight CPUs, while the training algorithm leverages high-performance GPU clusters.

The architecture has three primary components:

1. The LightningStore

The LightningStore is the central repository for all data exchanges. It manages task queues, stores execution traces (spans), and handles versioning for models and prompts. When an agent runs, its telemetry is automatically captured and streamed to the store as a series of “spans” that represent LLM calls, tool uses, and rewards.

2. The Agent Runner & Tracer

The Runner executes the agent rollouts. Because Agent Lightning uses automatic instrumentation (a “Tracer”), it can intercept API calls from popular frameworks without requiring the developer to pass special objects or change function signatures. It captures the exact prompt, response, and even token IDs to prevent “retokenization drift”—a common cause of training instability where slightly different tokenization between inference and training leads to gradient errors.
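Retokenization drift is easy to reproduce with a toy example. The sketch below assumes an invented five-token vocabulary and a greedy longest-match tokenizer; neither comes from Agent Lightning or any real model, and the names `VOCAB`, `encode`, and `decode` are hypothetical. It shows how the token IDs sampled at inference time can differ from the IDs obtained by decoding to text and encoding again, which is why storing the original IDs matters.

```python
# Toy vocabulary (hypothetical): token string -> token ID.
VOCAB = {"a": 0, "b": 1, "c": 2, "ab": 3, "bc": 4}
ID_TO_TOKEN = {i: t for t, i in VOCAB.items()}


def decode(token_ids):
    """Join token strings back into text."""
    return "".join(ID_TO_TOKEN[i] for i in token_ids)


def encode(text):
    """Greedy longest-match tokenizer over VOCAB."""
    ids, pos = [], 0
    max_len = max(len(t) for t in VOCAB)
    while pos < len(text):
        for size in range(min(max_len, len(text) - pos), 0, -1):
            piece = text[pos:pos + size]
            if piece in VOCAB:
                ids.append(VOCAB[piece])
                pos += size
                break
        else:
            raise ValueError(f"cannot tokenize {text[pos:]!r}")
    return ids


# Token IDs the model actually sampled at inference time: "a" + "bc".
sampled_ids = [0, 4]
text = decode(sampled_ids)        # "abc"
retokenized_ids = encode(text)    # greedy match picks "ab" + "c"

drift = sampled_ids != retokenized_ids
print(text, sampled_ids, retokenized_ids, drift)
# prints: abc [0, 4] [3, 2] True
```

Both ID sequences decode to the same string, yet a trainer that re-encodes the text would compute log-probabilities for tokens the model never sampled.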

3. The Algorithm

The Algorithm side (often using backends such as the open-source verl library or Tinker) consumes the collected traces from the LightningStore. It implements the reinforcement learning loop, calculating gradients and updating the model weights. Once a model is updated, the new version is pushed back to the LightningStore, which the Runner then pulls for the next set of tasks.
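The interaction between the three components can be sketched as a small in-memory loop. This is a simplified illustration, not Agent Lightning's actual API: `ToyStore`, `Span`, `run_rollout`, and `train_step` are hypothetical names, the "LLM call" is a string operation, and the "gradient update" is just a version bump.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Span:
    """One recorded event from a rollout (hypothetical schema)."""
    rollout_id: int
    kind: str  # "llm_call" or "reward"
    payload: dict


@dataclass
class ToyStore:
    """In-memory stand-in for the store: tasks, spans, and a model version."""
    tasks: deque = field(default_factory=deque)
    spans: list = field(default_factory=list)
    model_version: int = 0

    def enqueue(self, task):
        self.tasks.append(task)

    def next_task(self):
        return self.tasks.popleft() if self.tasks else None

    def add_span(self, span):
        self.spans.append(span)

    def drain_spans(self):
        drained, self.spans = self.spans, []
        return drained


def run_rollout(store, rollout_id, task):
    """Runner side: execute the agent and stream spans to the store."""
    version = store.model_version          # pull the latest model version
    answer = task["question"].upper()      # placeholder for a real LLM call
    store.add_span(Span(rollout_id, "llm_call", {
        "prompt": task["question"], "response": answer,
        "model_version": version}))
    reward = 1.0 if answer == task["expected"] else 0.0
    store.add_span(Span(rollout_id, "reward", {"value": reward}))


def train_step(store):
    """Algorithm side: consume spans, then publish a new model version."""
    spans = store.drain_spans()
    rewards = [s.payload["value"] for s in spans if s.kind == "reward"]
    store.model_version += 1               # placeholder for a gradient update
    return sum(rewards) / len(rewards) if rewards else 0.0


store = ToyStore()
store.enqueue({"question": "ok", "expected": "OK"})
store.enqueue({"question": "hi", "expected": "HELLO"})

rollout_id = 0
while (task := store.next_task()) is not None:
    run_rollout(store, rollout_id, task)
    rollout_id += 1

mean_reward = train_step(store)
print(mean_reward, store.model_version)
# prints: 0.5 1
```

Because the runner and the algorithm only communicate through the store, either side could be moved to a separate process or machine without changing the other.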

How LightningRL handles multi-step credit assignment

Standard RL algorithms struggle with multi-step “trajectories.” Agent Lightning solves this with a hierarchical algorithm called LightningRL. Instead of treating a multi-step agent run as a single long sequence, LightningRL decomposes the trajectory into individual transitions. Each LLM call within the agent’s workflow is treated as an independent training sample, paired with its specific context and an assigned reward.

A “Credit Assignment Module” determines how the final outcome (success or failure) should be propagated back to individual steps. For example, in a SQL-generation agent that writes code, checks it, and then rewrites it, LightningRL can determine if the first generation was good and only the checker failed, or if the initial generation was the root cause of failure. This granular feedback loop allows agents to improve significantly faster than “black-box” training methods.
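As a rough sketch of the decomposition step, the code below splits one trajectory into per-call training samples and propagates the final reward back to each of them. It is not the LightningRL implementation: `LLMCall`, `Transition`, and `assign_credit` are hypothetical names, and the two strategies shown (identical credit for every call, or credit discounted by distance from the outcome) are deliberately the simplest baselines, far less discerning than the module described above.

```python
from dataclasses import dataclass


@dataclass
class LLMCall:
    prompt: str
    response: str


@dataclass
class Transition:
    prompt: str
    response: str
    reward: float


def assign_credit(calls, final_reward, discount=1.0):
    """Split one trajectory into per-call training samples.

    With discount=1.0 every call receives the full final reward; with
    discount<1.0, calls further from the outcome receive less.
    """
    last = len(calls) - 1
    return [
        Transition(c.prompt, c.response, final_reward * discount ** (last - i))
        for i, c in enumerate(calls)
    ]


trajectory = [
    LLMCall("Write SQL for: total sales by region", "SELECT ..."),
    LLMCall("Check this query for errors", "Looks wrong: missing GROUP BY"),
    LLMCall("Rewrite the query", "SELECT ... GROUP BY region"),
]

uniform = assign_credit(trajectory, final_reward=1.0)
discounted = assign_credit(trajectory, final_reward=1.0, discount=0.5)

print([t.reward for t in uniform])      # prints: [1.0, 1.0, 1.0]
print([t.reward for t in discounted])   # prints: [0.25, 0.5, 1.0]
```

Each `Transition` is an independent sample, so its prompt only needs to fit the context of that one call rather than the whole conversation.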

Technical specifications and framework support

Agent Lightning v0.3.1 (released late 2025/early 2026) has solidified its position as a framework-agnostic middleware. By providing a unified data interface, it supports a wide variety of agentic structures.

| Feature | Description / Capability |
|---|---|
| Latest Version | v0.3.1 (as of April 2026) |
| Supported Frameworks | LangChain, AutoGen, CrewAI, OpenAI Agents SDK, LangGraph, Raw Python |
| Core Algorithms | LightningRL, PPO, GRPO (via VERL), APO (Automatic Prompt Optimization) |
| Data Store Backends | InMemory, SQLite, MongoDB (for distributed deployments) |
| Inference Backend | vLLM, LiteLLM, OpenAI-compatible APIs |
| Scaling Capacity | Verified up to 128 GPU clusters (production benchmarks) |

One critical feature introduced in v0.3 is Trajectory Level Aggregation. For agents with very long interactions, this feature merges the entire conversation into a single training sample but uses advanced masking to reduce GPU compute time by up to 10x compared to turn-by-turn processing. This makes RL training economically viable for SMBs who previously couldn’t afford the massive compute overhead of agentic fine-tuning.
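The general idea of merging a conversation into one masked sample can be sketched as follows. This is an assumption-laden illustration of loss masking in general, not Agent Lightning's implementation: `aggregate_trajectory` is a hypothetical helper, the token IDs are made up, and the token counts only hint at where savings come from rather than reproducing the 10x figure.

```python
def aggregate_trajectory(turns):
    """Concatenate all turns into one sequence plus a loss mask.

    `turns` is a list of (role, token_ids) pairs. Mask value 1 marks tokens
    the model generated (trained on); 0 marks context tokens (skipped).
    """
    input_ids, loss_mask = [], []
    for role, token_ids in turns:
        input_ids.extend(token_ids)
        loss_mask.extend([1 if role == "assistant" else 0] * len(token_ids))
    return input_ids, loss_mask


turns = [
    ("user", [11, 12, 13]),
    ("assistant", [21, 22]),
    ("tool", [31, 32, 33, 34]),
    ("assistant", [41, 42, 43]),
]

input_ids, loss_mask = aggregate_trajectory(turns)

# Turn-by-turn processing builds one sample per assistant turn, and each
# sample re-encodes the whole prefix up to and including that turn.
per_turn_tokens, prefix = 0, 0
for role, token_ids in turns:
    prefix += len(token_ids)
    if role == "assistant":
        per_turn_tokens += prefix

print(len(input_ids), per_turn_tokens)   # prints: 12 17
print(loss_mask)
# prints: [0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 1, 1]
```

The gap between the two token counts grows with the number of turns, since every additional assistant turn repeats the full prefix in the turn-by-turn layout.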

Implementation example: RL-trained SQL agent

To illustrate the “near-zero code change” promise, consider a standard LangChain agent used for Text-to-SQL. Historically, to train this via RL, you would need to manually log every step. With Agent Lightning, you hand the agent to the trainer (for example by wrapping it in a LitAgent class) and call the agl.emit_reward() helper to signal success. The simplified snippet below passes the agent function directly.

```python
import agentlightning as agl
# Current LangChain releases ship create_sql_agent in langchain_community.
from langchain_community.agent_toolkits import create_sql_agent

# 1. Define your standard agent logic
def my_sql_agent(task_input):
    agent_executor = create_sql_agent(...)
    result = agent_executor.invoke(task_input)

    # 2. Assign a reward based on execution success.
    # "output_is_correct" is an illustrative key; set it with your own check.
    reward = 1.0 if result["output_is_correct"] else 0.0
    agl.emit_reward(reward)
    return result

# 3. Train with one line
# Simplified call; check the Trainer signature for your installed version.
trainer = agl.Trainer(algorithm="verl_grpo", agent=my_sql_agent)
trainer.fit(dataset="spider_sql_tasks")
```

Behind the scenes, Agent Lightning intercepts the invoke() call, traces the internal LLM reasoning steps of the LangChain agent, stores them in the LightningStore, and uses the verl_grpo algorithm to update the model so it becomes better at writing SQL in future rollouts. The agent logic remains 95% identical to a standard non-trainable script.
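The reward in the example hinges on knowing whether the generated SQL is correct. One common way to decide that is execution matching: run the predicted query and a reference query against the same database and compare the rows. The sketch below uses only Python's standard `sqlite3` module; `execution_reward`, the `sales` table, and the sample queries are hypothetical and not part of Agent Lightning or the Spider dataset tooling.

```python
import sqlite3


def execution_reward(predicted_sql, gold_sql, setup_sql):
    """Return 1.0 if both queries yield the same rows, else 0.0."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(setup_sql)
        gold_rows = conn.execute(gold_sql).fetchall()
        try:
            predicted_rows = conn.execute(predicted_sql).fetchall()
        except sqlite3.Error:
            return 0.0  # invalid SQL earns no reward
        # Compare as sorted lists so row order does not matter.
        return 1.0 if sorted(predicted_rows) == sorted(gold_rows) else 0.0
    finally:
        conn.close()


SETUP = """
CREATE TABLE sales (region TEXT, amount INTEGER);
INSERT INTO sales VALUES ('east', 10), ('east', 5), ('west', 7);
"""
GOLD = "SELECT region, SUM(amount) FROM sales GROUP BY region"

good = execution_reward(
    "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region DESC",
    GOLD, SETUP)
bad = execution_reward("SELECT region, amount FROM sales", GOLD, SETUP)
broken = execution_reward("SELEC region FROM sales", GOLD, SETUP)

print(good, bad, broken)
# prints: 1.0 0.0 0.0
```

A binary signal like this is simple to compute but sparse; the value returned here is what would be passed to `agl.emit_reward()` at the end of a rollout.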

Strategic implications for SMBs and automation

For small to medium businesses, the value of Agent Lightning isn’t just in “training models”—it’s in the compounding ROI of self-improving workflows. While building a basic agent in n8n or LangChain is relatively simple, ensuring that agent handles 99% of edge cases is where most projects stall. Training-Agent Disaggregation changes the math by allowing agents to “practice” on historical company data and learn from their mistakes through RL.

By partnering with automation specialists who can wire these trained agents into production n8n pipelines, SMBs can deploy autonomous systems that actually get smarter over time. The “trained agent” becomes a high-value asset that performs better than a raw GPT-4 or Claude 3.5 Sonnet instance, specifically optimized for the company’s unique data schemas and business rules. This delivers a competitive advantage that isn’t just about speed, but about a proprietary intelligence layer that competitors cannot easily replicate with off-the-shelf prompts.

Conclusion

Microsoft Agent Lightning v0.3 has fundamentally lowered the barrier to entry for reinforcement learning in the agentic AI space. By decoupling execution from training and providing a framework-agnostic tracer, it allows developers to focus on building complex multi-agent workflows while the underlying infrastructure handles the rigorous math of optimization. Key takeaways include the efficiency of the TA Disaggregation architecture, the granular credit assignment of LightningRL, and the massive scaling potential of the LightningStore. For organizations looking to move beyond simple chatbots and into autonomous, high-accuracy workflows, Agent Lightning provides the essential bridge from static code to learning systems. The next logical step for forward-thinking teams is to begin instrumenting current agentic workflows with Agent Lightning to collect the high-quality spans necessary for the next generation of self-optimizing business automation.
