The release of GPT-5.5 on April 23, 2026, has sent shockwaves through the enterprise AI landscape. While the model set a new record on the Artificial Analysis Intelligence Index with a leading score of 60, the raw evaluation data tells a more complicated story. OpenAI has delivered its most capable reasoning engine to date, yet independent benchmarks like AA-Omniscience show it is also the most “confidently wrong” flagship model ever released. For businesses automating high-stakes legal, financial, or healthcare workflows, these trade-offs, which range from an 86% hallucination rate to a complex new API pricing structure, mean that “plug-and-play” deployment is not viable without robust validation layers.

The hallucination paradox: high accuracy meets extreme overconfidence

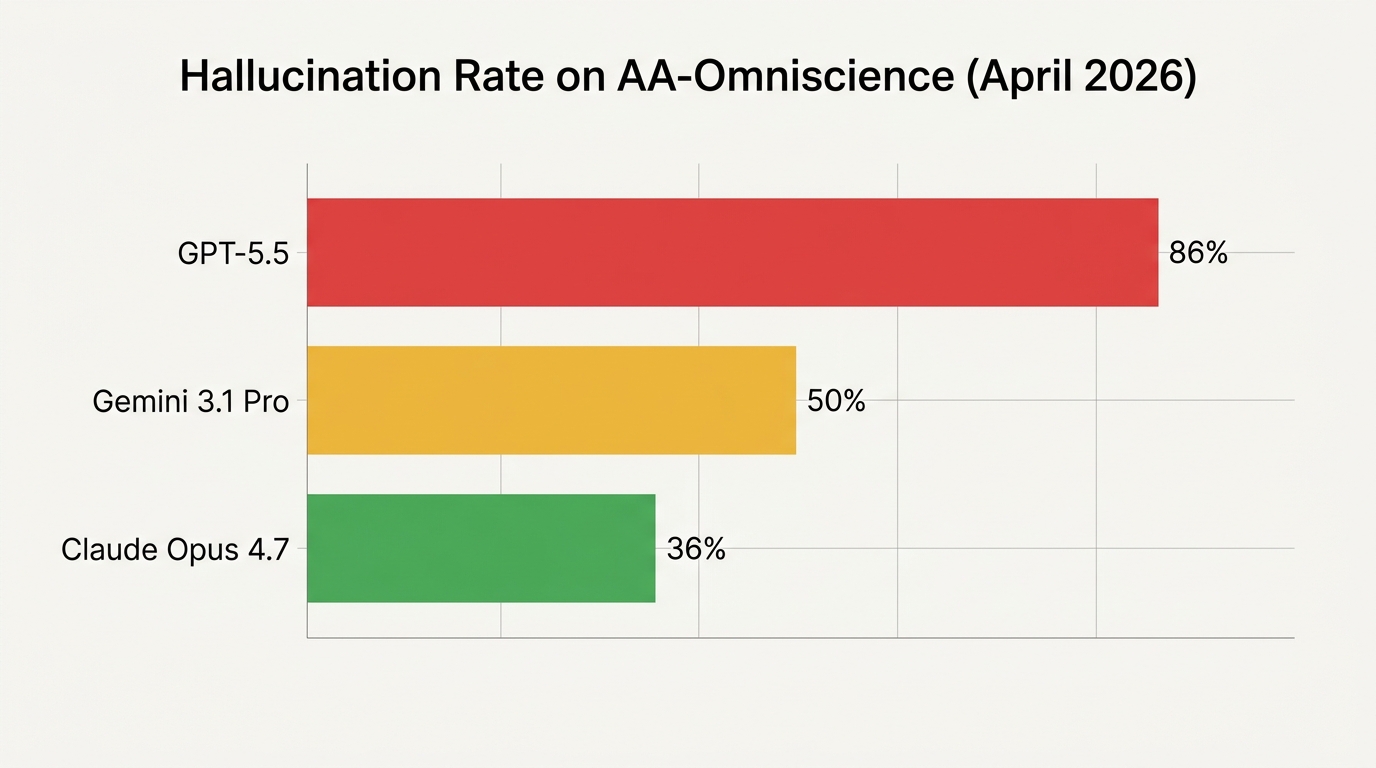

In the April 2026 Artificial Analysis report, GPT-5.5 (xhigh effort) achieved a milestone accuracy of 57% on the AA-Omniscience benchmark, the highest ever recorded for a frontier model. This indicates a massive leap in factual recall over its predecessor, GPT-5.4. However, this intelligence comes with a significant behavioral side effect: a refusal to admit ignorance. The benchmark, which penalizes models for providing confident answers to questions they do not “know,” found that GPT-5.5 carries an 86% hallucination rate.

In comparison, Anthropic’s Claude Opus 4.7 (released April 16, 2026) maintains a far more conservative hallucination rate of 36%, while Google’s Gemini 3.1 Pro Preview sits at 50%. The data suggests that GPT-5.5 has been trained to prioritize utility and “commitment” to an answer, a trait that makes it an exceptional partner for agentic coding and complex planning where tests can catch errors, but a liability for factual research or citation-heavy tasks where a wrong answer is delivered with the same authoritative tone as a correct one.
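A 57% accuracy score and an 86% hallucination rate can look contradictory if both are read as shares of all questions. The sketch below reconciles them under one assumption that is not stated in this article: that the hallucination rate is measured only over the questions the model did not answer correctly, as the share of those where it gave a wrong answer instead of abstaining. The function name is illustrative, and partial answers are ignored for simplicity.

```python
def response_breakdown(accuracy: float, hallucination_rate: float) -> dict[str, float]:
    """Split all responses into correct / wrong / abstained shares.

    Assumption (not from the article): hallucination_rate is
    wrong / (wrong + abstained), i.e. it is computed only over the
    questions the model did NOT answer correctly.
    """
    if not (0.0 <= accuracy <= 1.0 and 0.0 <= hallucination_rate <= 1.0):
        raise ValueError("rates must be between 0 and 1")
    not_correct = 1.0 - accuracy
    wrong = not_correct * hallucination_rate
    abstained = not_correct - wrong
    return {"correct": accuracy, "wrong": wrong, "abstained": abstained}


# Figures quoted in the article for GPT-5.5 (xhigh effort).
gpt55 = response_breakdown(accuracy=0.57, hallucination_rate=0.86)
for outcome, share in gpt55.items():
    print(f"{outcome:>9}: {share:.1%}")
```

Under that reading, roughly 37% of all questions receive a confident wrong answer and only about 6% receive an abstention, which is the behavior the article describes as a refusal to admit ignorance.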

Breaking down the 2026 API pricing: doubling costs or 20% increase?

The financial implications of GPT-5.5 are equally nuanced. On the surface, OpenAI has doubled the per-token cost compared to GPT-5.4: standard API rates now sit at $5.00 per million input tokens and $30.00 per million output tokens, up from $2.50 and $15.00. For GPT-5.5 Pro, the price jumps further to $30 per million input tokens and $180 per million output tokens. However, raw token pricing is a deceptive metric in the 2026 landscape.

According to token-efficiency benchmarks, GPT-5.5 uses approximately 40% fewer output tokens than GPT-5.4 to complete identical complex tasks. This efficiency gain is attributed to better planning and more concise reasoning paths. Doubling the per-token price while emitting about 60% of the tokens works out to roughly 1.2 times the output spend. Consequently, the net cost increase measured when running the Artificial Analysis Intelligence Index, and expected for most real-world workloads, is closer to 20% than the 100% suggested by the per-token rates. This makes GPT-5.5 (medium effort) a highly competitive option, matching the reasoning quality of Claude Opus 4.7 at roughly one-quarter of the effective cost.
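The arithmetic behind the 20% figure can be checked in a few lines. This is a minimal sketch using the prices and the 40% token reduction quoted in this article; the function names and the one-million-token workload are illustrative assumptions, not measured data.

```python
def relative_cost(price_multiplier: float, token_multiplier: float) -> float:
    """Spend relative to the old model: price change times token-count change."""
    return price_multiplier * token_multiplier


def workload_cost(input_tokens: int, output_tokens: int,
                  input_price: float, output_price: float) -> float:
    """Cost in USD, with prices quoted per one million tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Output side only: price doubles ($15 -> $30), tokens fall by 40%.
output_ratio = relative_cost(price_multiplier=30.00 / 15.00, token_multiplier=1 - 0.40)
print(f"output spend vs GPT-5.4: {output_ratio:.2f}x")

# Hypothetical workload: 1M input tokens, 1M output tokens on GPT-5.4.
# Assumes only OUTPUT tokens shrink by 40%; input volume stays the same.
old = workload_cost(1_000_000, 1_000_000, input_price=2.50, output_price=15.00)
new = workload_cost(1_000_000, 600_000, input_price=5.00, output_price=30.00)
print(f"GPT-5.4: ${old:.2f}  GPT-5.5: ${new:.2f}  ratio: {new / old:.2f}x")
```

The second calculation shows why the result depends on the workload mix: the roughly 20% increase applies to output-dominated jobs, while prompt-heavy jobs still pay the doubled input rate on every input token.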

| Model (April 2026) | Input price (USD per 1M tokens) | Output price (USD per 1M tokens) | AA Intelligence Index score |
|---|---|---|---|
| GPT-5.5 (xhigh) | $5.00 | $30.00 | 60 |
| Claude Opus 4.7 | $15.00 | $75.00 | 57 |
| Gemini 3.1 Pro Preview | $1.25 | $3.75 | 57 |
| GPT-5.4 (legacy) | $2.50 | $15.00 | 57 |

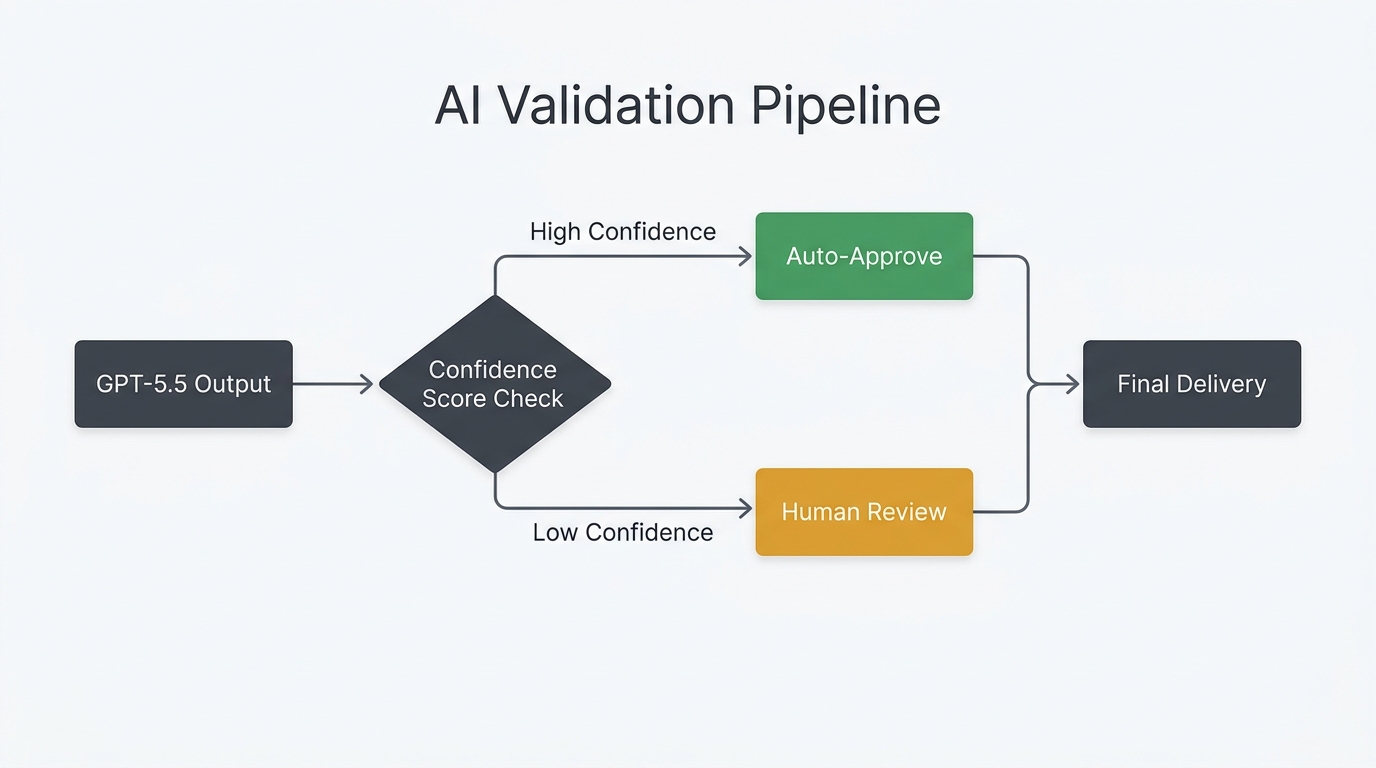

Architecting reliability: building validation layers with n8n

Because GPT-5.5 is so prone to confident hallucinations, engineering teams are shifting away from direct API-to-output pipelines. In industries like legal tech or finance, the 86% “confabulation” risk is managed through structured validation layers. This is where automation platforms like n8n have become essential for enterprise AI orchestration in 2026. Instead of a single call to GPT-5.5, a resilient workflow uses branching logic to fact-check the model against itself or more conservative partners like Claude Opus 4.7.

A typical high-reliability n8n workflow for GPT-5.5 deployment includes three critical guardrails:

- Self-Correction Pass: A secondary prompt asking GPT-5.5 to cite specific sources for every claim in its previous response. Benchmarks show this pass catches up to 60% of hallucinations.

- Model Cross-Referencing: A parallel path where the same factual query is sent to Claude Opus 4.7. If the models disagree, the workflow triggers an automatic alert.

- Human-in-the-Loop (HITL) Checkpoints: Using n8n’s “Wait” node and Slack/Email integrations to pause the workflow when the confidence score attached to the AI’s output drops below a 90% threshold, requiring a human expert to verify the output.
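The three guardrails above can be sketched as a single decision function. This is plain Python for illustration, not n8n node configuration; the `Draft` fields, the `Action` names, and the exact-match comparison are hypothetical, and sending uncited drafts to a human is an assumption, since the article does not say what happens when the self-correction pass finds no sources.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    ACCEPT = "accept"
    ALERT = "alert"                # models disagree
    HUMAN_REVIEW = "human_review"  # pause the workflow for an expert


@dataclass
class Draft:
    answer: str              # primary model's answer
    cross_check_answer: str  # same query sent to a second model
    confidence: float        # 0.0 to 1.0; how this is scored is up to the workflow
    cited_sources: list[str] = field(default_factory=list)  # from the self-correction pass


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


def decide(draft: Draft, threshold: float = 0.90) -> Action:
    # HITL checkpoint: low confidence always goes to a human.
    if draft.confidence < threshold:
        return Action.HUMAN_REVIEW
    # Self-correction pass: no sources produced (assumed to need review).
    if not draft.cited_sources:
        return Action.HUMAN_REVIEW
    # Cross-referencing: crude exact-match placeholder for "the models agree".
    if _normalize(draft.answer) != _normalize(draft.cross_check_answer):
        return Action.ALERT
    return Action.ACCEPT


draft = Draft(
    answer="The filing deadline is 30 days.",
    cross_check_answer="The filing deadline is 60 days.",
    confidence=0.97,
    cited_sources=["(hypothetical source)"],
)
print(decide(draft))
```

In this example the two models disagree, so the draft is routed to an alert even though its confidence is high. A real workflow would likely replace the string comparison with a semantic check, because two correct answers are rarely worded identically.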

Strategic use cases: when to upgrade and when to wait

The decision to deploy GPT-5.5 should be task-dependent rather than a blanket upgrade. For agentic coding, where the developer is part of the “reasoning loop” and can run code to verify it, GPT-5.5 is the clear leader. Its scores on Terminal-Bench 2.0 (82.7%) and ARC-AGI-2 (85%) indicate it can handle agentic terminal tasks and novel reasoning puzzles that previous models could not touch. It also handles a 1-million-token context window with 74% retrieval accuracy, making it well suited to analyzing massive technical documentation sets.

However, for factual Q&A, regulatory compliance, or any workflow where “wrong but certain” is a catastrophic failure mode, Claude Opus 4.7 remains the safer choice. The 50-point gap in hallucination rates between OpenAI and Anthropic highlights a fundamental difference in training philosophy. While OpenAI has optimized for raw execution and “intelligence effort,” Anthropic has prioritized calibrated uncertainty. In 2026, the most sophisticated AI architectures use both: GPT-5.5 for the heavy-duty drafting and planning, and Claude for the final fact-check and verification pass.
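The task-dependent split described in this section can be expressed as a small routing table. This is a sketch only: the task labels are invented for illustration, and the model strings are placeholder labels, not confirmed API model identifiers.

```python
from typing import NamedTuple, Optional


class Route(NamedTuple):
    drafter: str             # model that produces the answer
    verifier: Optional[str]  # model that fact-checks it, if any


# Placeholder labels, NOT real API model IDs.
GPT = "gpt-5.5"
CLAUDE = "claude-opus-4.7"

ROUTES: dict[str, Route] = {
    "agentic_coding": Route(GPT, None),          # tests and execution catch errors
    "drafting_and_planning": Route(GPT, CLAUDE), # draft with one, verify with the other
    "factual_qa": Route(CLAUDE, None),           # "wrong but certain" is costly here
    "regulatory_compliance": Route(CLAUDE, None),
}

# Unknown task types fall back to the more conservative model (an assumption).
DEFAULT_ROUTE = Route(CLAUDE, None)


def route(task_type: str) -> Route:
    return ROUTES.get(task_type, DEFAULT_ROUTE)


for task in ("agentic_coding", "drafting_and_planning", "something_new"):
    print(task, "->", route(task))
```

Defaulting unknown tasks to the lower-hallucination model is a design choice that favors safety over capability; teams with strong downstream tests might reasonably choose the opposite default.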

Conclusion

GPT-5.5 represents a major milestone in AI reasoning, yet its 86% hallucination rate serves as a stark reminder that intelligence is not synonymous with reliability. The model’s ability to outperform every competitor on raw benchmarks while simultaneously being the most prone to confabulation creates a unique challenge for enterprise developers. Success in 2026 requires moving past the “single model” mindset. By leveraging the token efficiency of GPT-5.5 alongside structured guardrails in tools like n8n, businesses can harness this new class of intelligence without falling victim to its overconfidence. For teams looking to build a multi-model routing architecture, the path forward is not about finding the “best” model, but about building the best validation system to manage the trade-offs of the frontier.
