Microsoft’s Agent Lightning promises to make any AI agent trainable through reinforcement learning with “almost zero code changes.” On paper, that pitch is compelling: plug in your LangChain or AutoGen agent, flip a switch, and watch it learn from experience. But behind the marketing sits a GPU-hungry training pipeline, a finicky vLLM configuration requirement, and a framework that — as of v0.3.0 (released December 24, 2025) — still carries 140 open issues on GitHub. For small and mid-sized businesses evaluating whether to build agent RL training in-house or deploy proven automated agent workflows, the infrastructure math tells a story Microsoft’s README does not.

The architecture Agent Lightning actually requires

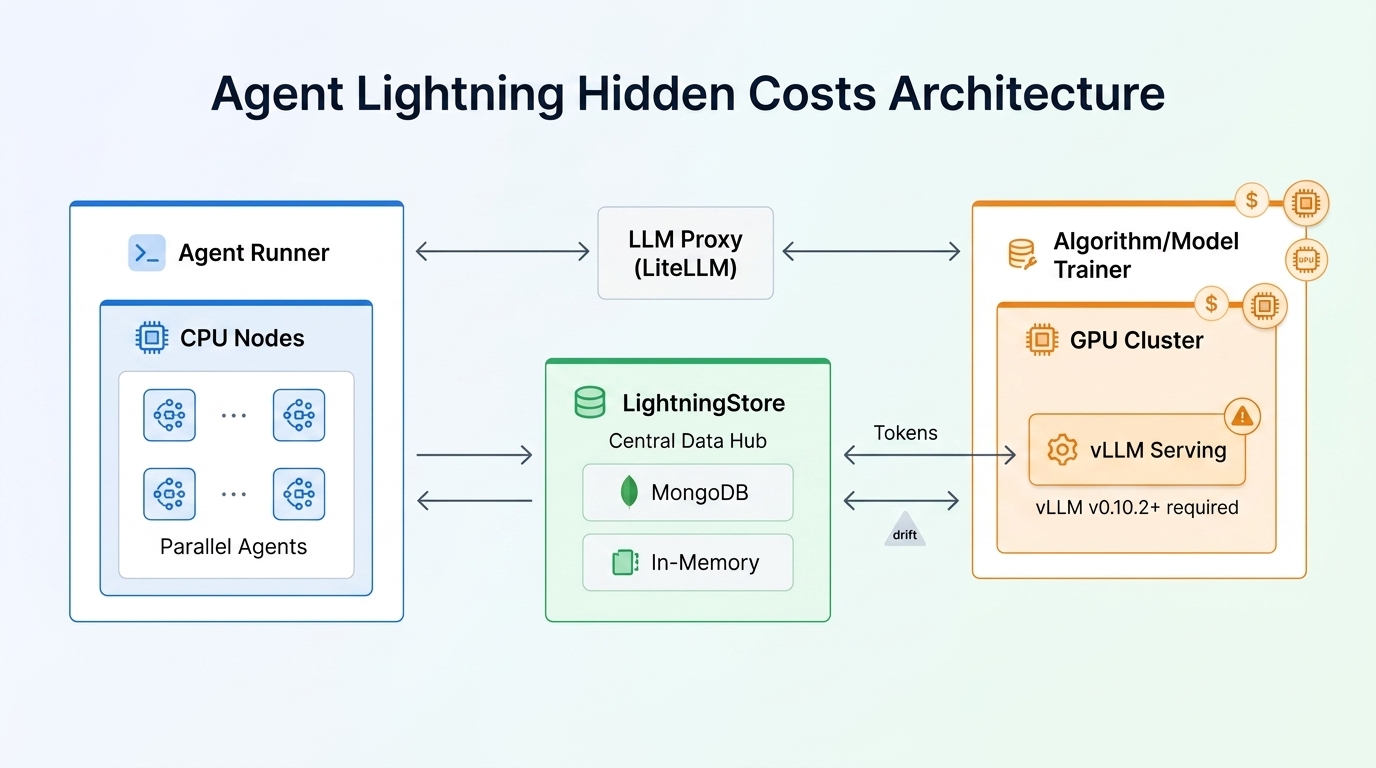

Agent Lightning (AGL) is built on a decoupled, three-component architecture. The Agent Runner executes your agents and collects interaction traces. The LightningStore serves as a central data hub, mediating all communication between components. The Algorithm/Model Trainer runs the actual RL optimization on GPUs. An LLM Proxy built on LiteLLM sits between runners and your inference engine, handling request routing and telemetry.

This is where the first hidden cost appears. The Algorithm/Model Trainer is not lightweight. It hosts LLMs for both inference and training, orchestrates the RL loop, manages rollout sampling, and performs model updates. Microsoft’s own LightningStore scalability benchmarks were run on Azure Standard_D32as_v4 and Standard_D64ads_v5 VMs, machines that cost several dollars per hour. The v0.3.0 release notes reference verified scaling up to 128 GPUs through the Youtu-Agent project. That is enterprise-grade infrastructure, not something you casually spin up on a weekend.
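To make the decoupling concrete, here is a toy sketch of a central store mediating between a runner and a trainer. Every name in it (`Trace`, `ToyStore`, `run_agent`, `train_step`) is hypothetical and chosen for illustration. It is not Agent Lightning's API, and the "agent" and "training step" are placeholders.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Trace:
    """One recorded agent interaction (hypothetical shape, not AGL's schema)."""
    task_id: str
    prompt: str
    response: str
    reward: float


@dataclass
class ToyStore:
    """In-memory stand-in for a central hub: runners push, the trainer pulls."""
    _queue: deque = field(default_factory=deque)

    def put(self, trace: Trace) -> None:
        self._queue.append(trace)

    def take_batch(self, max_size: int) -> list[Trace]:
        batch = []
        while self._queue and len(batch) < max_size:
            batch.append(self._queue.popleft())
        return batch


def run_agent(store: ToyStore, task_id: str, prompt: str) -> None:
    """Runner side: execute a fake agent and record the trace in the store."""
    response = prompt.upper()  # placeholder for a real LLM call
    reward = 1.0 if "OK" in response else 0.0  # placeholder reward rule
    store.put(Trace(task_id, prompt, response, reward))


def train_step(store: ToyStore, batch_size: int = 2) -> float:
    """Trainer side: consume a batch and return its mean reward."""
    batch = store.take_batch(batch_size)
    if not batch:
        return 0.0
    return sum(t.reward for t in batch) / len(batch)


store = ToyStore()
run_agent(store, "t1", "ok then")
run_agent(store, "t2", "nope")
mean_reward = train_step(store)
```

The runner and the trainer never call each other here. Both touch only the store, which is the property that lets the real components scale independently and also the reason the store becomes a piece of infrastructure you have to operate.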

Retokenization drift: the silent training killer

If you skip one configuration detail, your entire training run can produce garbage results. That detail is vLLM version 0.10.2 or later with the return_token_ids parameter enabled.

Here is why it matters. Most agents communicate with LLMs through OpenAI-compatible Chat Completion APIs, which return text strings. To use those strings for RL training, you have to retokenize them — convert the text back into token IDs. The problem is that retokenization does not always produce the same token IDs the model originally generated. A word like “HAVING” might be produced as tokens H + AVING during inference, but retokenized as HAV + ING during training. The text looks identical, but the token sequences differ.

This phenomenon, called retokenization drift, has three root causes documented in the vLLM blog post co-authored with the Agent Lightning team:

- Non-unique tokenization: The same text can correspond to more than one valid token sequence, so re-encoding the text may not reproduce the sequence the model actually generated.

- Tool-call serialization: Generated tool calls get parsed into objects and re-rendered, potentially altering whitespace, formatting, or even auto-correcting JSON errors the model actually made.

- Chat template differences: Different frameworks implement slightly different chat templates for the same model. A single LLaMA model can use multiple templates across vLLM and HuggingFace.

The symptom is unstable learning curves. The vLLM blog shows comparison charts where the retokenization approach produces erratic reward trajectories even with identical settings, while the token-ID-preserving approach shows smooth, stable convergence. The Agent Lightning team calls this an off-policy RL effect — your trainer thinks it is optimizing one sequence, but it is actually working against a different one.
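The HAVING example can be reproduced with a toy tokenizer. This is a minimal sketch under stated assumptions: the four-entry vocabulary and its IDs are invented, and the greedy longest-match encoder stands in for a real BPE tokenizer, which works differently in detail but shows the same mismatch.

```python
# Toy vocabulary; tokens and IDs are made up for illustration.
VOCAB = {"H": 1, "AVING": 2, "HAV": 3, "ING": 4}
ID_TO_TOKEN = {token_id: token for token, token_id in VOCAB.items()}


def decode(token_ids):
    """Join token strings back into text."""
    return "".join(ID_TO_TOKEN[i] for i in token_ids)


def encode(text):
    """Greedy longest-match tokenizer over VOCAB (a stand-in for real BPE)."""
    ids, pos = [], 0
    while pos < len(text):
        match = max(
            (t for t in VOCAB if text.startswith(t, pos)),
            key=len,
            default=None,
        )
        if match is None:
            raise ValueError(f"cannot tokenize at position {pos}")
        ids.append(VOCAB[match])
        pos += len(match)
    return ids


generated_ids = [1, 2]            # H + AVING, as sampled during inference
text = decode(generated_ids)      # what a text-only API hands back
retokenized_ids = encode(text)    # what the trainer reconstructs: HAV + ING
drifted = retokenized_ids != generated_ids
```

Both sequences decode to the same string, so comparing text will never catch the mismatch. Only comparing token IDs does, which is why the engine needs to return the IDs it actually sampled.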

What the component stack actually looks like in production

Running Agent Lightning at scale means operating a multi-service distributed system. Here is what your infrastructure checklist looks like:

| Component | Purpose | Resource profile |
|---|---|---|
| Agent Runner(s) | Execute agents, collect traces | CPU-heavy, horizontally scaled |
| LightningStore | Central data exchange hub | In-memory (dev) or MongoDB (prod) |
| Algorithm/Model Trainer | RL loop, model inference + updates | GPU-intensive (multi-GPU common) |
| LLM Proxy (LiteLLM) | Request routing, tracing | Lightweight, co-located with trainer |
| vLLM/SGLang engine | Model serving | GPU, requires v0.10.2+ for token IDs |
| Tracer (OTel/AgentOps/Weave) | Span collection | Embedded in runner |

The v0.3.0 release added MongoDB as a LightningStore backend, which improved throughput by up to 15x over v0.2.2 at high concurrency. The trade-off is one more service to run: a MongoDB instance alongside everything else. For distributed training, the framework supports client-server and shared-memory execution strategies, plus Ray-based parallelization through the VERL integration.
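The vLLM version requirement in the checklist is cheap to verify before a run starts. The sketch below is a hypothetical pre-flight helper, not part of Agent Lightning or vLLM. The function names are invented, and placing `return_token_ids` as a top-level field of the chat request body is an assumption to confirm against the vLLM documentation for your version.

```python
import re

MIN_VLLM = (0, 10, 2)  # minimum version cited in this article


def parse_version(version: str) -> tuple[int, ...]:
    """Read the leading major.minor.patch numbers, ignoring any suffix."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.groups())


def supports_token_ids(version: str) -> bool:
    """True if the version meets the minimum for returning token IDs."""
    return parse_version(version) >= MIN_VLLM


def build_chat_request(model: str, messages: list[dict]) -> dict:
    """Build a chat request body that asks the engine to return token IDs.

    Assumption: the flag is accepted as a top-level request field.
    """
    return {"model": model, "messages": messages, "return_token_ids": True}
```

In a real environment the installed version string could come from `importlib.metadata.version("vllm")`. Note that this simple parser treats a pre-release such as `0.10.2rc1` the same as `0.10.2`, so a stricter comparison is worth adding if you run release candidates.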

The maturity question: 140 open issues and counting

As of April 2026, Agent Lightning has over 15,400 GitHub stars — impressive for a project first released in August 2025. But stars do not equal production readiness. The repository currently tracks 140 open issues and 47 open pull requests. The latest release is v0.3.0 from December 24, 2025, with no subsequent patch in the four months since. The documentation itself carries a warning: “You are viewing development documentation.”

The release history tells a story of rapid iteration with real growing pains. Version 0.2.0 alone included 78 pull requests. Version 0.3.0 introduced significant LightningStore redesign, Tinker integration, Azure OpenAI support, MongoDB backends, and a preview dashboard. Each release has brought compatibility fixes — VERL 0.6.0 compatibility, OpenAI Agents SDK 0.6 compatibility, LiteLLM proxy restart issues, store port conflicts. These are the kinds of bugs you expect in an early-stage framework, but they are also the kinds of bugs that cost engineering time when you hit them during a training run.

The build-versus-buy math for SMBs

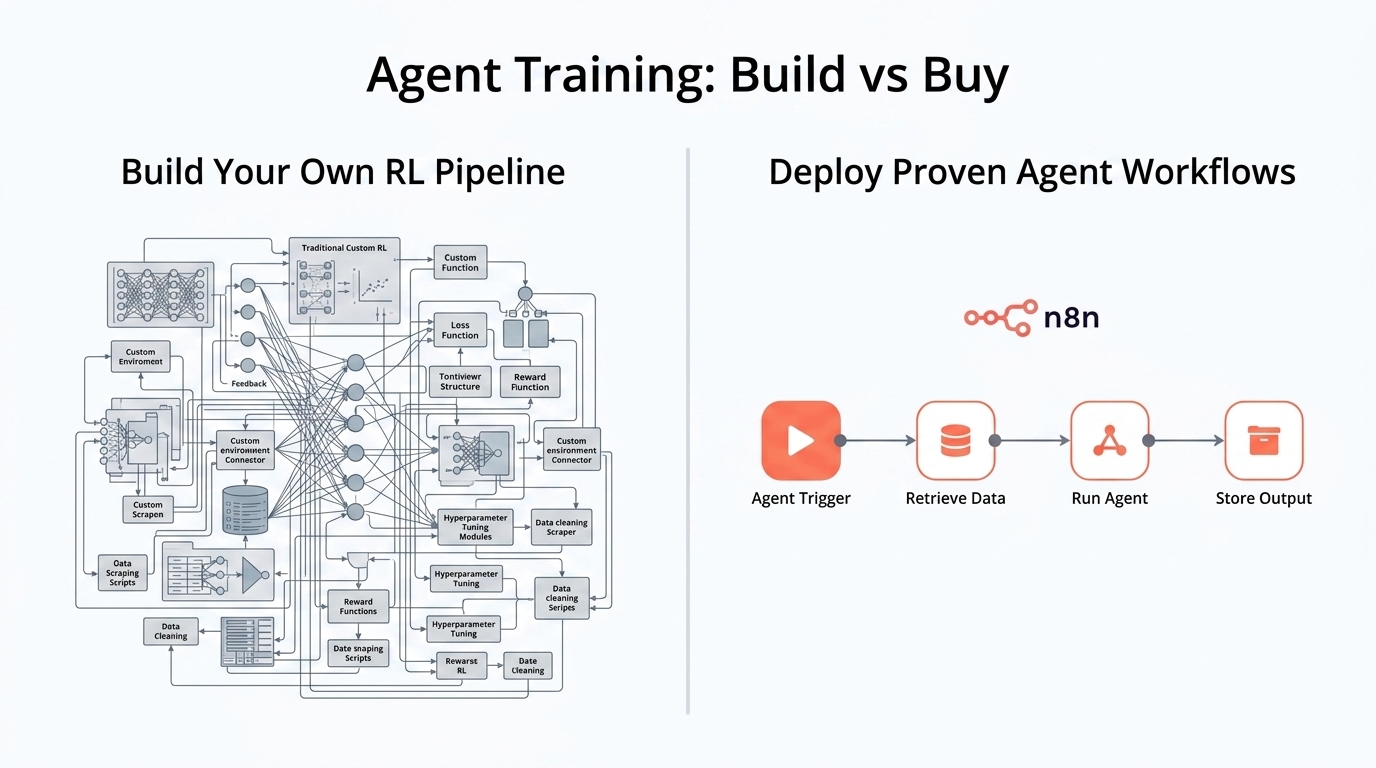

For organizations weighing whether to invest in building agent RL training pipelines, the cost breakdown is revealing. Building with Agent Lightning means provisioning GPU clusters (or paying cloud GPU rates), configuring vLLM with the exact version requirements, operating LightningStore and potentially MongoDB, designing reward signals specific to your use case, managing the training loop, and debugging retokenization drift when it silently corrupts your training data. You also need ML engineering expertise to tune hyperparameters, interpret reward curves, and determine when an agent has actually improved versus when it has overfit to its training reward.
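The build-side costs listed above can be put into a rough formula: GPU spend plus engineering time plus ongoing operations. The sketch below only shows the arithmetic. Every number in it is a placeholder invented for illustration, not a quote, a benchmark, or a measurement, and the field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TrainingCostInputs:
    gpu_count: int               # GPUs per training run
    gpu_hourly_rate: float       # cost per GPU-hour
    hours_per_run: float         # wall-clock hours per run
    runs: int                    # includes failed and repeated runs
    engineer_hours: float        # reward design, tuning, debugging
    engineer_hourly_rate: float  # loaded cost per engineer-hour
    monthly_ops_cost: float      # e.g. store and database hosting
    months: int                  # project duration


def total_cost(c: TrainingCostInputs) -> float:
    """Sum GPU, people, and operations costs for the whole project."""
    gpu = c.gpu_count * c.gpu_hourly_rate * c.hours_per_run * c.runs
    people = c.engineer_hours * c.engineer_hourly_rate
    ops = c.monthly_ops_cost * c.months
    return gpu + people + ops


# Placeholder inputs only; substitute your own quotes and estimates.
example = TrainingCostInputs(
    gpu_count=8,
    gpu_hourly_rate=3.0,
    hours_per_run=10.0,
    runs=5,
    engineer_hours=200.0,
    engineer_hourly_rate=100.0,
    monthly_ops_cost=500.0,
    months=3,
)
estimate = total_cost(example)
```

With these placeholder inputs the engineering term is far larger than the GPU term. Whether that holds for a real project depends entirely on the numbers you plug in, which is the reason to run the calculation rather than assume the GPU bill is the main cost.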

The alternative path, deploying proven agent workflows through platforms like n8n, trades training flexibility for operational simplicity. Agent workflows built on n8n can be pre-built, tested, and maintained by experienced partners. They do not require GPU infrastructure, reward signal engineering, or distributed systems operations. For many business use cases (document processing, customer support routing, data pipeline automation), the marginal improvement from RL-trained agents may not justify the infrastructure investment.

The decision ultimately comes down to whether your competitive advantage lives in having a custom-trained model or in deploying working agent automations quickly. If you are building a novel AI product where model performance directly drives revenue, Agent Lightning is worth the infrastructure investment. If you are automating business processes and need reliable agent behavior now, the infrastructure math favors proven workflow deployments.

Key takeaways

- “Zero code change” is marketing: You need agl.emit calls, reward definitions, and algorithm configuration. The integration is simpler than a full rewrite, but it is not zero.

- vLLM v0.10.2+ is non-negotiable: Without return_token_ids, retokenization drift causes unstable learning curves that can waste entire training runs.

- The GPU bill is real: The Algorithm/Model Trainer component requires significant GPU resources. Verified scaling goes up to 128 GPUs.

- 140 open issues signal early-stage maturity: The framework is actively developed but has not yet published production case studies or specific real-world performance metrics.

- SMBs should evaluate the build-buy tradeoff honestly: Agent workflows automated with n8n and maintained by experienced partners avoid the infrastructure overhead entirely for use cases that do not require custom model training.

Agent Lightning represents a genuine technical contribution — a framework-agnostic middleware layer for agent RL that did not exist before. But like many powerful open-source tools, its capabilities are inversely proportional to its ease of adoption. Before committing engineering resources to building an RL training pipeline, organizations should run the actual infrastructure cost calculations: GPU hours, engineering time for reward design and debugging, MongoDB operations, and the opportunity cost of delayed agent deployments. Sometimes the most expensive path is the one you build yourself.
