Are your LLM outputs stuck in repetitive loops, churning out the same predictable responses no matter the prompt? This is mode collapse, a byproduct of RLHF alignment, and it stifles creativity in tasks like story generation and brainstorming. Verbalized Sampling (VS), introduced by Stanford researchers in an October 2025 arXiv paper (arXiv:2510.01171v3, updated October 10, 2025), offers a training-free fix. As of November 2025, VS boosts diversity by 1.6-2.1x in creative writing while preserving quality, factual accuracy, and safety. This guide explains the research behind VS, provides copy-paste prompts for GPT-4.1, Claude-4-Sonnet, Gemini 2.5 Pro, and Llama 3.1-70B-Instruct, and shows real-world applications that restore your LLMs' pre-alignment potential.

What causes mode collapse in aligned LLMs

Post-training alignment via RLHF sharpens LLMs toward "helpful" outputs, but human preference data embeds typicality bias: annotators favor familiar, stereotypical text because of cognitive heuristics such as the mere-exposure effect and processing fluency. The Stanford paper formalizes this by modeling the reward as r(x,y) = r_true(x,y) + α log π_ref(y|x), where α > 0 (estimated empirically at 0.57-0.65 on the HelpSteer2 dataset, NVIDIA 2024). Plugging this reward into the standard KL-regularized RLHF optimum, π*(y|x) ∝ π_ref(y|x) exp(r(x,y)/β), where β is the KL-penalty coefficient, gives π*(y|x) ∝ π_ref(y|x)^γ exp(r_true(x,y)/β) with γ = 1 + α/β > 1. Raising π_ref to a power above 1 sharpens it, compressing probability mass into its modes.
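To see why γ > 1 compresses the distribution, here is a minimal numerical sketch. It assumes r_true is identical for every candidate response (so the exp(r_true/β) factor cancels after normalization) and uses illustrative values: α = 0.6 sits in the reported range, while β = 1.0 and the four-response π_ref are made up, not taken from the paper.

```python
import math


def sharpen(pi_ref, alpha, beta):
    """Return pi* proportional to pi_ref**gamma with gamma = 1 + alpha/beta.

    Assumes r_true is identical for every candidate, so the
    exp(r_true / beta) factor cancels during normalization.
    """
    gamma = 1.0 + alpha / beta
    weights = [p ** gamma for p in pi_ref]
    total = sum(weights)
    return [w / total for w in weights]


def entropy(dist):
    """Shannon entropy in nats; lower means more concentrated."""
    return -sum(p * math.log(p) for p in dist if p > 0)


# Illustrative reference policy over four candidate responses (made-up numbers).
pi_ref = [0.40, 0.30, 0.20, 0.10]
# alpha sits in the reported 0.57-0.65 range; beta = 1.0 is an arbitrary choice.
pi_star = sharpen(pi_ref, alpha=0.6, beta=1.0)

print("pi_ref :", pi_ref, "entropy", round(entropy(pi_ref), 3))
print("pi_star:", [round(p, 3) for p in pi_star], "entropy", round(entropy(pi_star), 3))
```

With γ = 1.6 the top response rises from 0.40 to about 0.48 while the tail shrinks and entropy falls. A smaller β (weaker KL penalty) raises γ and sharpens the distribution further.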

Typicality bias has been verified empirically on preference datasets such as UltraFeedback (Cui et al., 2023) and Skywork Preference (Liu et al., 2024a), where it exceeds a 50% agreement rate across base models (Llama 3.1-70B, Qwen3-235B). The result: GPT-4.1 or Claude-4-Sonnet repeats "Why did the scarecrow win an award? He was outstanding in his field!" across varied joke prompts, and semantic diversity drops 76% after alignment (Lu et al., 2025b).

“VS prompts the model to verbalize a probability distribution over responses, circumventing mode collapse by shifting the prompt’s mode to approximate pretraining distributions.”

Zhang et al., arXiv:2510.01171v3 (Oct 2025)

How Verbalized Sampling works

VS is a distribution-level prompt. Instead of "Tell me a joke about coffee," use "Generate 5 responses to the query, each in a <response> tag with a <text> and a numeric <probability>. Sample from tails (<0.10)." This elicits k candidates with self-assigned probabilities, drawn from the low-probability tails, which approximately recovers the diversity of π_ref.
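Once the model replies in this tag format, you still have to turn the k candidates into one output. The sketch below parses the <response> blocks with a regular expression and picks one candidate weighted by its verbalized probability. The reply text is made up, the helper names are hypothetical, and probability-weighted selection is one reasonable post-processing choice rather than a step the paper prescribes.

```python
import random
import re

# Made-up reply in the tag format the VS prompt asks for (not real model output).
RAW = """
<response><text>What do you call a sad cup of coffee? A depresso.</text><probability>0.08</probability></response>
<response><text>Decaf is just coffee with stage fright.</text><probability>0.05</probability></response>
<response><text>My espresso machine quit. It said the pressure was too high.</text><probability>0.04</probability></response>
"""

# One <response> block: capture the <text> body and the numeric <probability>.
PATTERN = re.compile(
    r"<response>\s*<text>(.*?)</text>\s*<probability>([0-9.]+)</probability>\s*</response>",
    re.DOTALL,
)


def parse_vs(raw):
    """Return a list of (text, probability) pairs from a VS-formatted reply."""
    return [(text.strip(), float(prob)) for text, prob in PATTERN.findall(raw)]


def sample_vs(candidates, rng):
    """Pick one candidate, weighting by its verbalized probability.

    random.choices treats weights as relative, so they need not sum to 1.
    """
    texts = [text for text, _ in candidates]
    weights = [prob for _, prob in candidates]
    return rng.choices(texts, weights=weights, k=1)[0]


candidates = parse_vs(RAW)
print(sample_vs(candidates, random.Random(42)))
```

Passing a seeded `random.Random` keeps the selection reproducible in tests; uniform sampling over the candidates is an equally simple alternative.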

Key variants (from CHATS-lab GitHub, Oct 2025):

- VS-Standard: a single call that returns k=5 responses with their probabilities.

- VS-CoT: prefixes the request with "Think step-by-step, then generate…".

- VS-Multi: a multi-turn variant that follows up with "Generate 5 more…".
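The three variants differ only in how the request is assembled. The sketch below builds chat messages for each one, assuming an OpenAI-style list of `{"role", "content"}` dicts. The prompt wording is copied from the examples in this article; `build_messages`, the exact CoT prefix, and the follow-up phrasing are hypothetical and may not match the CHATS-lab repo's templates.

```python
# Hypothetical helper; the prompt wording is copied from the examples in this article.
VS_STANDARD = (
    "Generate {k} responses to the user query, each within a separate <response> tag. "
    "Each <response> must include a <text> and a numeric <probability>. "
    "Sample at random from tails (<{tau:.2f})."
)
COT_PREFIX = "Think step-by-step, then generate. "


def build_messages(query, variant="standard", k=5, tau=0.10, prior_turns=None):
    """Build OpenAI-style chat messages for VS-Standard, VS-CoT, or VS-Multi."""
    if variant not in {"standard", "cot", "multi"}:
        raise ValueError(f"unknown variant: {variant!r}")
    system = VS_STANDARD.format(k=k, tau=tau)
    if variant == "cot":
        system = COT_PREFIX + system  # VS-CoT: reason first, then verbalize the distribution
    messages = [
        {"role": "system", "content": system},
        {"role": "user", "content": query},
    ]
    if variant == "multi":
        # VS-Multi: replay earlier replies and ask for k more in each new turn.
        for reply in prior_turns or []:
            messages.append({"role": "assistant", "content": reply})
            messages.append({"role": "user", "content": f"Generate {k} more responses."})
    return messages


for m in build_messages("Tell me a joke about coffee", variant="cot"):
    print(m["role"], "->", m["content"][:60])
```

For VS-Multi, pass each earlier model reply in `prior_turns`; the last message is then always a request for k more candidates.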

```text
<instructions>
Generate 5 responses to the user query, each within a separate <response> tag. Each <response> must include a <text> and a numeric <probability>. Sample at random from tails (<0.10).
</instructions>

Tell me a short story about a bear.
```

Python implementation (pip install verbalized-sampling, v0.1.0, Nov 2025):

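```python
from verbalized_sampling import verbalize

# Request k=5 candidates, each with a verbalized probability below tau=0.10.
# Assumes credentials for the chosen provider are already configured.
dist = verbalize("Tell me a joke", k=5, tau=0.10, temperature=0.9, model="gpt-4.1")

# Draw one response from the returned distribution (seed=42 fixes the draw).
joke = dist.sample(seed=42)

print(joke.text)  # one of the diverse candidates
```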

Implementing VS: Copy-paste prompts

Creative writing

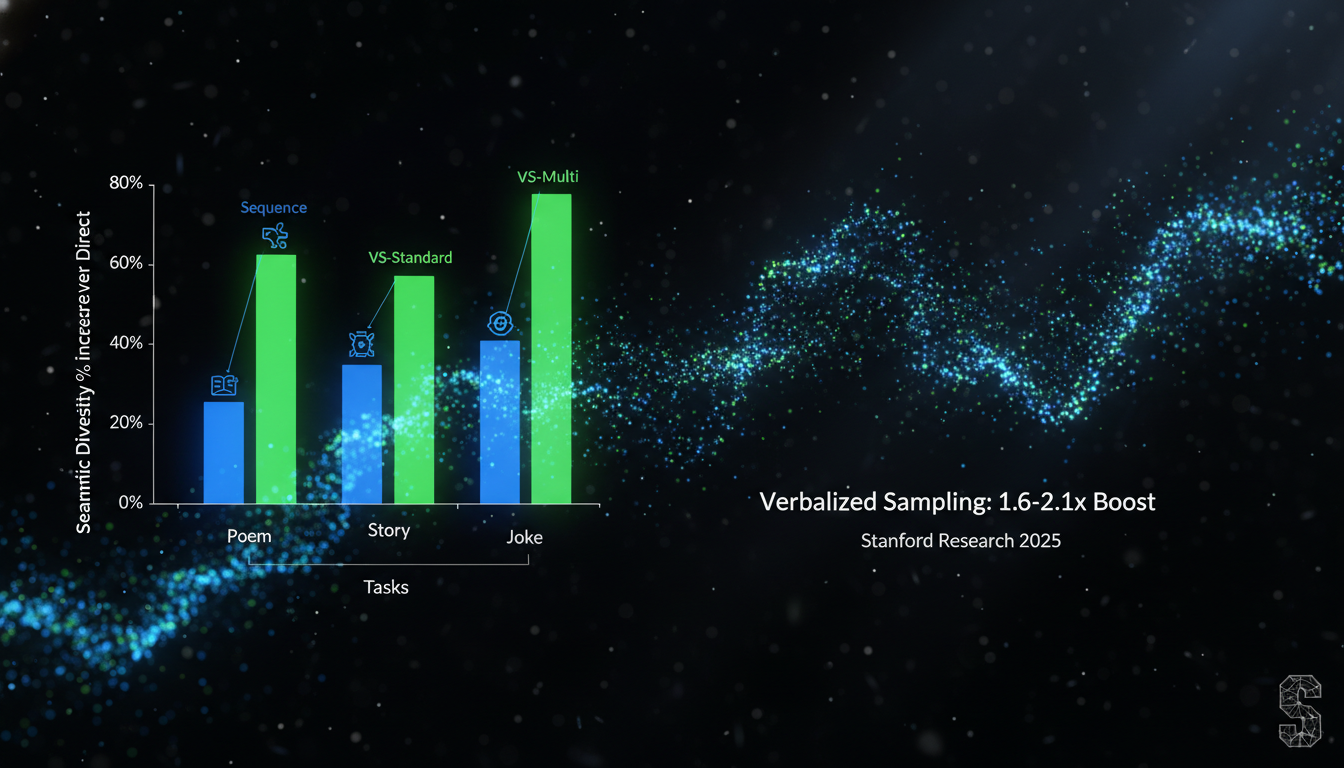

For poems and stories (BookMIA dataset, Shi et al. 2024), substitute your query into the VS-Standard prompt. VS boosts semantic diversity by 60-80%, measured as 1 minus the average pairwise cosine similarity of text-embedding-3-small embeddings.

| Task | Direct Prompt | VS Prompt | Diversity Gain |
|---|---|---|---|
| Poem | "Continue this poem…" | VS-Standard + poem starter | +65% |
| Story | "Write story: Without goodbye" | VS-Multi | +72% |
| Joke | "Joke about coffee" | VS-CoT | +82% |
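The diversity numbers above come from embedding similarity, so you can measure your own outputs the same way. This is a minimal sketch of "1 minus average pairwise cosine similarity" in plain Python; the 3-d vectors are toy stand-ins for real text-embedding-3-small outputs, which you would fetch from the embeddings API yourself.

```python
import itertools
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def semantic_diversity(embeddings):
    """1 minus the mean pairwise cosine similarity across a set of embeddings."""
    pairs = list(itertools.combinations(embeddings, 2))
    mean_sim = sum(cosine(u, v) for u, v in pairs) / len(pairs)
    return 1.0 - mean_sim


# Toy 3-d vectors standing in for real embeddings of model outputs.
similar = [[1.0, 0.1, 0.0], [0.9, 0.2, 0.0], [1.0, 0.0, 0.1]]  # near-duplicate outputs
varied = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]   # orthogonal outputs

print(round(semantic_diversity(similar), 3), round(semantic_diversity(varied), 3))
```

Collapsed outputs score near 0 and fully dissimilar ones near 1; compare direct-prompt and VS batches on the same query to get a gain figure.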

Dialogue simulation

On PersuasionForGood (Wang et al., 2019), VS simulates human-like donation distributions (KS test, p<0.01 vs. direct prompting), and Claude-4-Sonnet with VS matches a fine-tuned Llama-3.1-8B.
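The comparison against human donations uses the two-sample Kolmogorov-Smirnov (KS) statistic: the largest vertical gap between two empirical CDFs, where lower means the simulated distribution is closer to the human one. A minimal stdlib sketch follows; the donation amounts are made up for illustration and are not PersuasionForGood data.

```python
import bisect


def ecdf(sorted_sample, x):
    """Fraction of the sample that is <= x (empirical CDF)."""
    return bisect.bisect_right(sorted_sample, x) / len(sorted_sample)


def ks_statistic(a, b):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between ECDFs."""
    a, b = sorted(a), sorted(b)
    points = sorted(set(a) | set(b))
    return max(abs(ecdf(a, x) - ecdf(b, x)) for x in points)


# Made-up donation amounts (in dollars), not data from PersuasionForGood.
human = [0, 0, 0.5, 1, 1, 2]
direct = [2, 2, 2, 2, 2, 2]     # collapsed: every simulated persona donates the same
vs_like = [0, 0.5, 1, 1, 2, 2]  # spread out, closer to the human shape

print(round(ks_statistic(human, direct), 3), round(ks_statistic(human, vs_like), 3))
```

Evaluating only at the pooled sample points is enough because both ECDFs are step functions. If you also need a p-value, `scipy.stats.ks_2samp` returns the statistic together with one.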

```text
<instructions>...</instructions>

You are persuadee [persona]. Respond to: [persuader message]
```

Open-ended QA & synthetic data

On CoverageQA (Wong et al., 2024), VS reaches a KL divergence of 0.12 from the pretraining distribution (RedPajama). For synthetic math, supervised fine-tuning on VS-generated data lifts Qwen3-4B by 5-8% on MATH500 and OlympiadBench (Ye et al., 2025).
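KL divergence measures how far the model's answer distribution sits from a reference distribution, with 0 meaning identical. The sketch below computes KL(reference || model) over discrete answers sampled for one question. The question, reference probabilities, and samples are made up, the eps smoothing is a crude assumption to avoid log(0), and the KL direction the paper uses is not stated here.

```python
import math
from collections import Counter


def to_dist(samples):
    """Turn a list of sampled answers into an empirical distribution."""
    counts = Counter(samples)
    return {answer: c / len(samples) for answer, c in counts.items()}


def kl_divergence(p, q, eps=1e-9):
    """KL(p || q) over discrete answers; eps is a crude guard against log(0)."""
    return sum(
        p_a * math.log((p_a + eps) / (q.get(a, 0.0) + eps))
        for a, p_a in p.items()
        if p_a > 0
    )


# Toy reference distribution for a question like "Name a US state" (made-up).
reference = {"California": 0.25, "Texas": 0.25, "Ohio": 0.25, "Maine": 0.25}
direct = to_dist(["California"] * 8)                   # mode-collapsed answers
vs = to_dist(["California", "Texas", "Ohio", "Maine"] * 2)

print(round(kl_divergence(reference, direct), 2), round(kl_divergence(reference, vs), 4))
```

The collapsed model gets a large KL because it assigns (near) zero mass to answers the reference considers likely.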

Results across latest LLMs (Nov 2025)

VS was tested on GPT-4.1 (OpenAI, Apr 2025), Claude-4-Sonnet (Anthropic, May 2025), Gemini 2.5 Pro (Google, Mar 2025), Llama 3.1-70B-Instruct (Meta, Jul 2024), and Qwen3-235B (Qwen, Apr 2025). VS is orthogonal to temperature and top-p sampling, so it can be combined with them, and larger models gain more (about a 2x larger diversity improvement).

| Model | Poem Diversity, VS (%) | Story Quality (/5) | Donation KS (lower is better) |
|---|---|---|---|
| GPT-4.1 | 68 (Direct: 32) | 4.2 | 0.12 |
| Claude-4-Sonnet | 72 | 4.3 | 0.11 |
| Gemini 2.5 Pro | 70 | 4.1 | 0.14 |

In a human study (Prolific, n=90; inter-rater agreement Gwet's AC1 = 0.86 on stories), VS raised diversity ratings by 25.7%. Safety is preserved, with a 97.8% refusal rate on StrongREJECT (Souly et al., 2024). Factual accuracy: Pass@5 = 0.49 on SimpleQA.
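Pass@5 is a pass@k metric: the chance that at least one of k sampled answers is correct. A common way to compute it is the unbiased estimator from OpenAI's HumanEval/Codex evaluation, 1 - C(n-c, k) / C(n, k) for n generations of which c are correct. Whether the SimpleQA figure above was computed exactly this way is an assumption; the sketch only shows the formula.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k: probability that at least one of k samples drawn
    without replacement from n generations (c of them correct) is correct."""
    if n - c < k:
        return 1.0  # every size-k subset must contain a correct answer
    return 1.0 - comb(n - c, k) / comb(n, k)


# Example: 10 generations per question, 3 judged correct, k = 5.
print(round(pass_at_k(10, 3, 5), 4))
```

Average this value over all questions to get a dataset-level Pass@k score.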

Tips for VS in production

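- Start with k=5 and tau=0.10, then tune per task (a lower tau samples deeper into the tail, giving more diversity).

- Combine with temperature 0.9 and top-p 0.95.

- Use VS-Multi for long contexts and VS-CoT for reasoning-heavy tasks.

- API: serve Llama and Qwen through OpenRouter or Groq; costs run about 2-3x those of direct prompting, but the diversity pays off.

- Avoid k>20, where quality drops; gains scale with model size and are largest for models above 70B.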
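The tips above can be pinned down in a small settings object so they are validated in one place. This is a hypothetical sketch: `VSConfig` is not part of the verbalized-sampling package, and the `tail_only` filter is an extra safeguard of our own for replies that ignore the tau instruction, not a step from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VSConfig:
    """Hypothetical settings holder using the starting values from the tips above."""

    k: int = 5
    tau: float = 0.10
    temperature: float = 0.9
    top_p: float = 0.95

    def __post_init__(self):
        # The tips advise against k > 20 because quality drops.
        if not 1 <= self.k <= 20:
            raise ValueError("k should be between 1 and 20")
        if not 0.0 < self.tau <= 1.0:
            raise ValueError("tau must be a probability in (0, 1]")

    def tail_only(self, candidates):
        """Drop (text, probability) pairs whose verbalized probability is not below tau."""
        return [(text, p) for text, p in candidates if p < self.tau]


candidates = [("a", 0.08), ("b", 0.25), ("c", 0.04)]
print(VSConfig().tail_only(candidates))          # default tau = 0.10
print(VSConfig(tau=0.05).tail_only(candidates))  # lower tau keeps only the deeper tail
```

Freezing the dataclass keeps one validated configuration shared across requests; create a new instance to try a different tau.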

Limitations: inference cost rises by up to 3x, and gains are weaker on models under 7B parameters. Future work: integrating VS with DPO for pluralistic alignment.

Conclusion

Verbalized Sampling unlocks LLM creativity lost to RLHF mode collapse, delivering 1.6-2.1x more diversity in creative writing along with more realistic dialogue simulation and better synthetic data, all without retraining. Key takeaways: (1) typicality bias in preference data drives collapse (Stanford 2025); (2) VS prompts recover pretraining-like distributions; (3) the copy-paste prompts and code above work on GPT-4.1, Claude-4-Sonnet, and the other tested models; (4) test on your own data via the CHATS-lab GitHub repo; (5) tune tau to balance diversity and quality. Start prompting with VS today to supercharge apps in writing, simulation, and QA. Track updates at verbalized-sampling.com.
