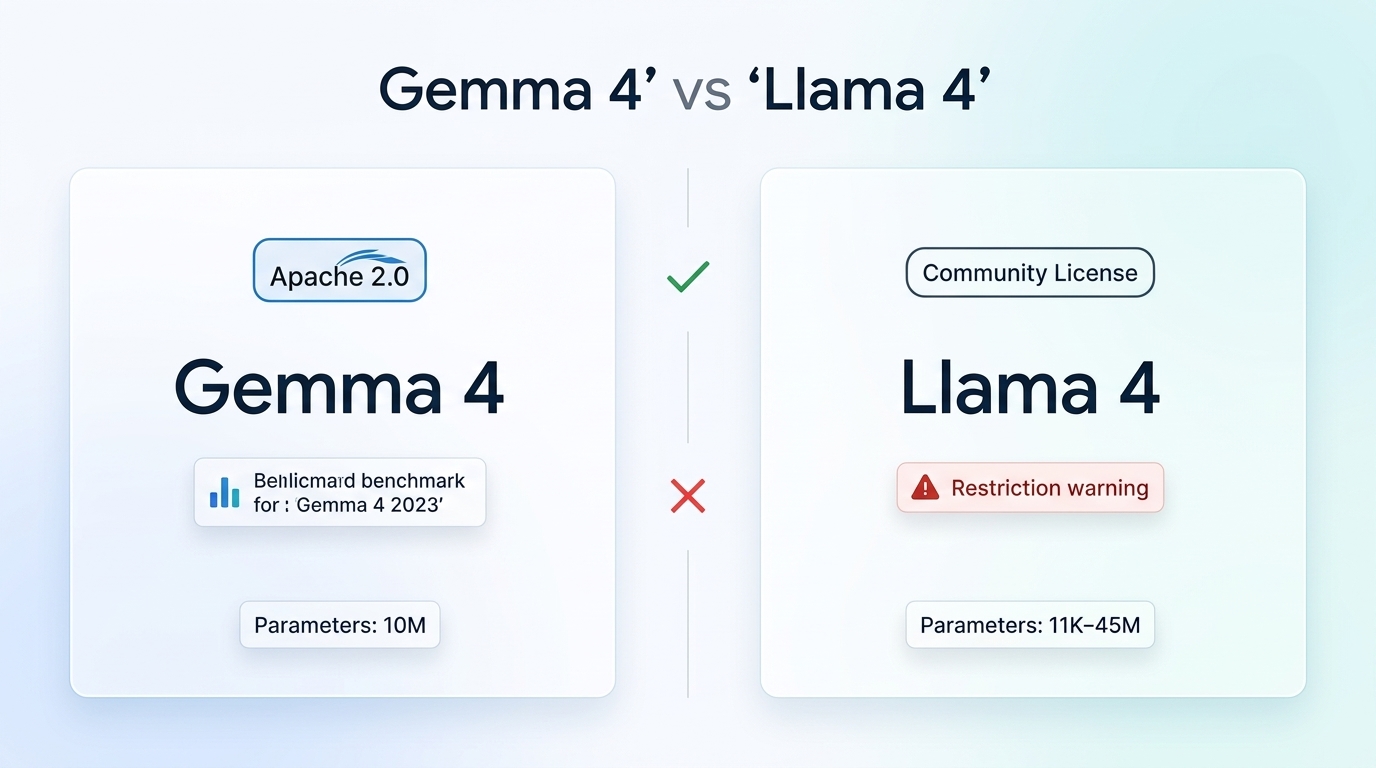

The open-source AI landscape shifted in April 2026 when Google DeepMind released Gemma 4 under the Apache 2.0 license, the first fully permissive, industry-standard open license in the Gemma family’s history. This release challenges Meta’s Llama 4, which arrived a year earlier in April 2025 but remains under a custom Community License with significant restrictions.

For developers, startups, and SMBs building AI-powered applications, the choice between these two flagship open-weight models now hinges on more than just benchmark scores. Licensing freedom, commercial use rights, and deployment flexibility have become equally critical factors in 2026’s competitive AI ecosystem. Small and medium businesses increasingly turn to orchestration tools like n8n to deploy these models locally, avoiding vendor lock-in and maintaining complete data sovereignty.

Understanding Gemma 4: Google’s Apache 2.0 gambit

Released on April 2, 2026, Gemma 4 represents a pivotal shift in Google’s approach to open AI. Unlike previous Gemma releases—which used custom licenses with certain restrictions—Gemma 4 models ship under Apache 2.0, the same permissive license governing Kubernetes, Android, and Go. This provides three foundational freedoms: autonomy to modify and build upon the models, control over private local execution, and clarity about developers’ rights without navigating prescriptive terms of service.

The Gemma 4 family includes four variants covering the full spectrum of deployment scenarios: E2B (2.3B effective parameters), E4B (4.5B effective), 26B A4B (Mixture-of-Experts with 4B active parameters), and the flagship 31B dense model. All variants handle text, images, video, and audio inputs natively, with the flagship 31B model ranking third among all open models globally as of April 2026. With a score above 89% on the AIME mathematics benchmark and 80% on the LiveCodeBench coding evaluation, these models match or exceed proprietary model performance on specialized reasoning tasks.

Understanding Llama 4: Meta’s community approach

Meta released Llama 4 in April 2025 with significant architectural improvements over Llama 3, but retained the Llama Community License, which places conditions on commercial use. The family includes three variants: Llama 4 Scout (17 billion active parameters, 109 billion total), Maverick (17 billion active parameters, 400 billion total), and the unreleased Behemoth flagship. The defining feature is Scout’s 10 million token context window, roughly equivalent to processing 80 novels simultaneously.

However, the Community License comes with significant strings attached. If, on Llama 4’s release date (April 5, 2025), the products or services of a licensee and its affiliates had more than 700 million monthly active users, the licensee must request a commercial license from Meta, a grant Meta may provide in its “sole discretion.” Because the clause is tied to the release date, it mainly affects companies that were already very large. Even so, the custom terms create legal uncertainty for developers building fast-scaling applications, who must confirm how the MAU threshold and Meta’s discretion apply to them rather than rely on a standard license.

License freedom: Apache 2.0 vs. Community restrictions

The licensing distinction creates fundamentally different risk profiles for production deployment. Apache 2.0—a license approved by the Open Source Initiative for over two decades—grants broad permissions including patent rights, explicit commercial use authorization, and unambiguous modification rights. Developers know exactly where they stand from day one, with no usage thresholds that could trigger unexpected compliance requirements.

Meta’s Community License, while more permissive than some proprietary alternatives, introduces legal complexity that Apache 2.0 avoids. The 700 million MAU threshold creates an uncertain cliff for successful applications—developers cannot predict their compliance obligations at conception, and the requirement to request Meta’s “sole discretion” approval introduces approval risk for the largest commercial successes.

| Feature | Gemma 4 (April 2026) | Llama 4 (April 2025) |
|---|---|---|
| License Type | Apache 2.0 (OSI-approved) | Llama Community License |
| Commercial Restrictions | None | 700M+ MAU requires approval |
| Patent Grant | Explicit | Limited |
| Modification Rights | Unrestricted | Subject to usage limits |
| Distribution | Free | Free with attribution |
| Model Sizes | E2B (2.3B), E4B (4.5B), 26B-A4B MoE (4B active), 31B dense | Scout (17B active), Maverick (17B active), Behemoth (unreleased) |
| AIME 2025 Benchmark | ~89% | Competitive but lower |
| LiveCodeBench | 80% | Strong coding performance |
| Context Window | Standard lengths | 10M tokens (Scout) |

Local deployment for SMBs: Enter n8n

Small and medium businesses increasingly seek alternatives to cloud-hosted AI services—not just for cost control, but for data sovereignty. By running open-source LLMs locally, SMBs ensure sensitive business data never leaves their infrastructure, enable completely private AI automation workflows, and eliminate per-token API costs that scale unpredictably with usage.

n8n has emerged as the leading workflow automation platform for this use case. As a fair-code, self-hostable alternative to Zapier and Make, n8n provides native integration with local LLMs through Ollama, Hugging Face, and custom HTTP endpoints. With 400+ integrations and dedicated AI automation nodes, n8n lets SMBs build sophisticated agentic workflows orchestrating models like Gemma 4 and Llama 4 without exposing proprietary data to third-party APIs.
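As a rough illustration of the local-endpoint path, the sketch below sends a prompt to an Ollama server over its HTTP API using only the Python standard library. The URL is Ollama's default local address, and `/api/generate` with `"stream": false` returns a single JSON object with a `response` field. The model tag `gemma-local` is a placeholder, not a confirmed tag for Gemma 4 or Llama 4, and the same request could plausibly be issued from an n8n HTTP request step instead of Python.

```python
import json
import urllib.request

# Default address of a locally running Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]


# Example call (requires a running Ollama server and a pulled model;
# "gemma-local" is a hypothetical tag used for illustration):
# print(generate("gemma-local", "Summarize this invoice in one sentence."))
```

Keeping request construction separate from the network call makes the payload easy to inspect and test without a model loaded, and nothing in it leaves the local machine.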

The combination works particularly well because the smaller variants run efficiently on consumer hardware when quantized. The Gemma 4 E2B (2.3B effective parameters) can operate on GPU-equipped laptops and modest servers, which keeps it accessible to businesses without enterprise infrastructure budgets; Llama 4 Scout is considerably larger and needs a correspondingly larger GPU memory budget. SMBs use n8n to route between models, with smaller variants for quick responses and larger ones for complex reasoning, creating cost-efficient hybrid AI systems.
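The routing idea can be sketched as a small decision function. This is a minimal illustration, not how n8n implements routing: the model tags, the keyword list, and the 150-word cutoff are all hypothetical choices that a real workflow would tune or replace with a classifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


# Hypothetical model tags; substitute whatever names your local runtime uses.
SMALL_MODEL = "small-local-model"
LARGE_MODEL = "large-local-model"

# Illustrative keywords that suggest a prompt needs multi-step reasoning.
REASONING_HINTS = ("prove", "derive", "step by step", "debug", "refactor", "analyze")


def choose_model(prompt: str, max_small_words: int = 150) -> Route:
    """Pick the small model for short, simple prompts and the large one otherwise."""
    text = prompt.lower()
    if any(hint in text for hint in REASONING_HINTS):
        return Route(LARGE_MODEL, "reasoning keyword")
    if len(text.split()) > max_small_words:
        return Route(LARGE_MODEL, "long prompt")
    return Route(SMALL_MODEL, "short, simple prompt")
```

Returning the reason alongside the model makes it easier to log why a request went to the more expensive path and to adjust the thresholds later.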

Performance at the frontier

Benchmark comparisons favor Gemma 4 on mathematical reasoning tasks, with its 89% AIME 2025 score representing a significant leap from previous open-source generations. The 26B A4B Mixture-of-Experts variant offers particular value—delivering 31B-quality outputs using only 4 billion active parameters per forward pass, dramatically reducing inference costs.
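The cost argument can be made concrete with a back-of-the-envelope estimate. The sketch below assumes the common rule of thumb that a forward pass costs roughly 2 FLOPs per active parameter per token, that "26B" in the variant name is the total parameter count, and that weights are stored at 4 bits; none of these figures come from Google's documentation.

```python
def flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs per token as 2 x active parameters."""
    return 2.0 * active_params


def weight_memory_gb(total_params: float, bits_per_weight: int) -> float:
    """Approximate memory needed to hold the weights, in gigabytes."""
    return total_params * bits_per_weight / 8 / 1e9


# Compute per token depends on the parameters that are active.
dense_flops = flops_per_token(31e9)   # 31B dense: every parameter is active
moe_flops = flops_per_token(4e9)      # 26B A4B: about 4B active per token
compute_ratio = dense_flops / moe_flops

# Memory depends on the total parameter count, since all experts must be loaded.
dense_memory = weight_memory_gb(31e9, bits_per_weight=4)
moe_memory = weight_memory_gb(26e9, bits_per_weight=4)

print(f"compute ratio (dense / MoE): {compute_ratio:.2f}x")
print(f"4-bit weights: dense {dense_memory:.1f} GB, MoE {moe_memory:.1f} GB")
```

Under these assumptions the MoE variant needs far less compute per token but only modestly less memory, so the saving shows up mainly in throughput and latency rather than in the size of GPU required.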

Llama 4 counters with breakthrough context window capabilities. The Scout model’s 10 million token context enables entirely new application categories: long-form document analysis, multi-novel fiction understanding, and large codebase comprehension that smaller-context models cannot match. For developers building RAG applications where context size determines application scope, this feature sometimes trumps raw benchmark performance.
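To judge whether a document set would fit in such a window, a rough token estimate is usually enough. The sketch below assumes about 4 characters per token, a common heuristic for English text rather than a property of any Llama 4 tokenizer, and reserves a hypothetical 4,096 tokens for the model's reply. The per-novel figure of 125,000 tokens is simply the article's "10 million tokens, about 80 novels" divided out.

```python
from typing import Iterable


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character count (heuristic, not a tokenizer)."""
    return int(len(text) / chars_per_token)


def fits_in_context(
    token_counts: Iterable[int],
    context_window: int,
    reserve_for_output: int = 4096,
) -> bool:
    """Check whether the documents plus reserved output space fit in the window."""
    return sum(token_counts) + reserve_for_output <= context_window


SCOUT_CONTEXT = 10_000_000   # context window cited for Llama 4 Scout
TOKENS_PER_NOVEL = 125_000   # 10M tokens / 80 novels, per the figure above

print(fits_in_context([TOKENS_PER_NOVEL] * 79, SCOUT_CONTEXT))
print(fits_in_context([TOKENS_PER_NOVEL] * 80, SCOUT_CONTEXT))
```

For production use, counting tokens with the model's actual tokenizer would replace `estimate_tokens`, since real counts vary with language and formatting.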

Which open-source model wins?

Choose Gemma 4 when: You need Apache 2.0’s legal certainty for commercial products, prioritize mathematical reasoning and coding accuracy, require flexible modification rights for model adaptation, or want cutting-edge performance in smaller distillation-friendly architectures. The licensing freedom eliminates compliance anxiety as your application scales.

Choose Llama 4 when: Your application specifically benefits from enormous context windows (document analysis, legal research, fiction understanding), you stay comfortably below the 700M MAU threshold, or you require Meta AI integration for consumer-facing applications. The license terms call for legal review before production deployment.

For the majority of SMBs building AI-powered products in 2026, Gemma 4’s combination of Apache 2.0 licensing and benchmark-leading performance removes the friction that previously made open-source models risky for commercial products. Paired with n8n’s orchestration capabilities, teams gain complete control over their AI infrastructure without sacrificing the performance previously available only through proprietary APIs. The result is vendor independence that scales responsibly—a strategic advantage as the AI landscape continues shifting.
