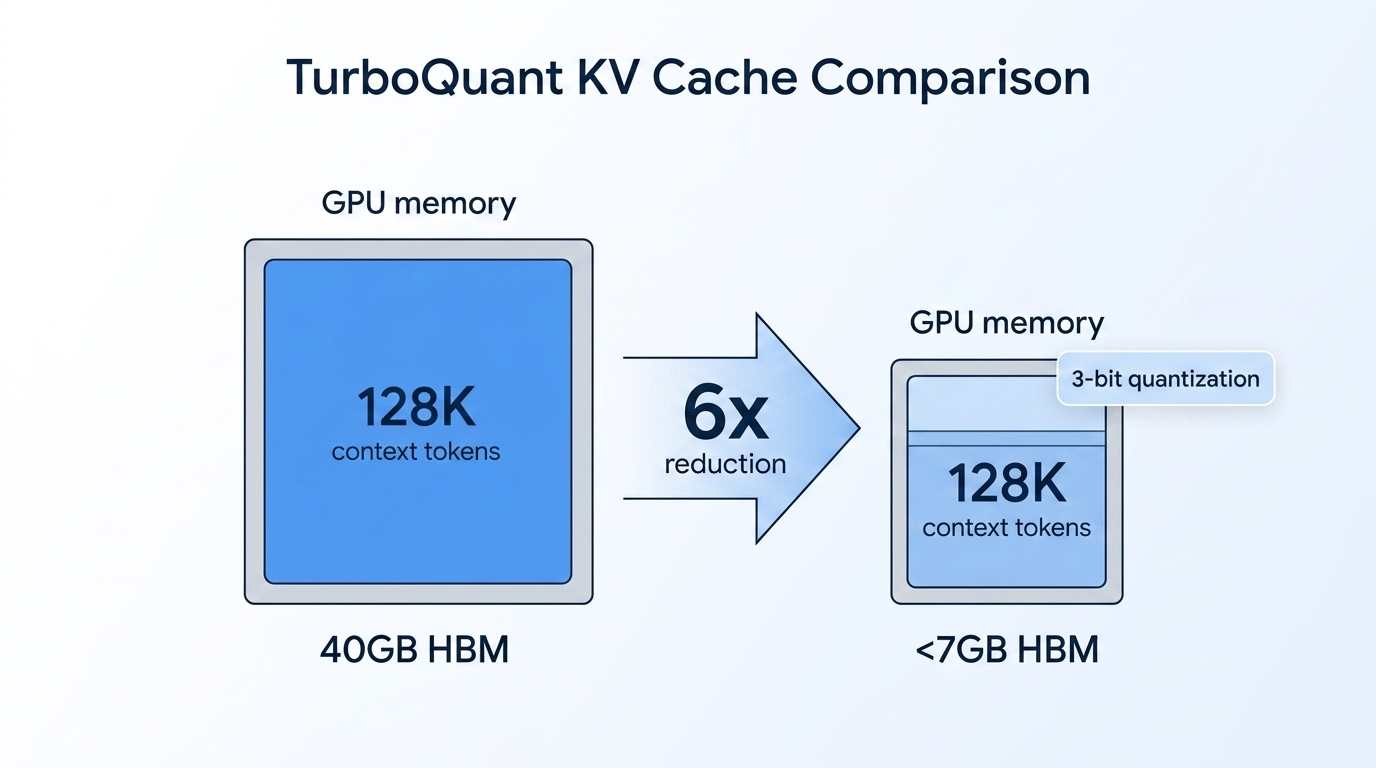

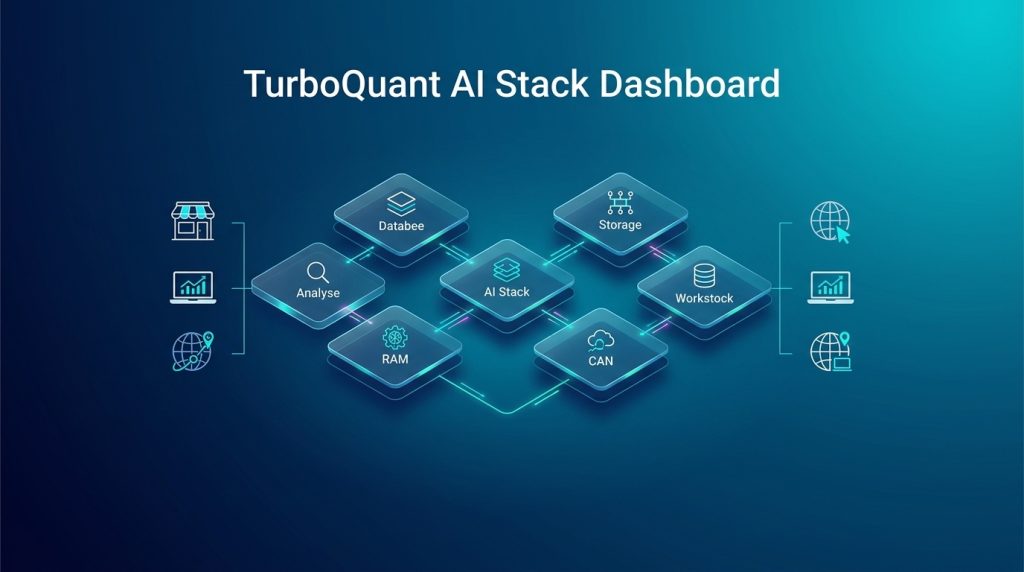

Long-context large language models have long been the exclusive domain of enterprises with deep pockets and racks of H100 GPUs. But Google’s TurboQuant, introduced in March 2026 and presented at ICLR 2026, fundamentally shifts that equation. By compressing KV caches to just 3 bits with zero accuracy loss, TurboQuant delivers a 6x memory reduction and up to 8x inference speedup — enabling SMBs to run 128K context models on a single high-end consumer GPU.

For businesses that rely on n8n automation workflows and custom AI agents, this breakthrough changes everything. Previously, a single 128K context prompt consumed approximately 40GB of HBM memory. With TurboQuant, that drops below 7GB. Here’s what this means for your AI stack and how to integrate these efficiency gains into production-ready solutions.

Why the KV cache matters for SMB AI deployment

Every time an LLM processes a conversation, document, or workflow, it caches intermediate computations in what’s called a key-value (KV) cache. This cache grows linearly with context length. As modern models push context windows beyond 128K tokens — with GPT-5.4 and Gemini 3.1 Pro now supporting up to 1 million tokens — this cache becomes the primary memory bottleneck during inference.
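The linear growth is easy to estimate by hand. The following is a minimal sketch, assuming a standard Transformer with grouped-query attention that stores one key vector and one value vector per layer, per KV head, per token. The function name is hypothetical, and the shape used (80 layers, 8 KV heads, head dimension 128) is an illustrative 70B-class configuration rather than a figure from this article.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_tokens, bits_per_value=16):
    """Estimate the KV cache size in bytes for a single sequence.

    Each token stores one key and one value vector (hence the factor 2)
    for every layer and every KV head.
    """
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    total_bits = values_per_token * context_tokens * bits_per_value
    return total_bits // 8


GIB = 2**30

# Illustrative shape (assumption): 80 layers, 8 KV heads, head dimension 128.
fp16_cache = kv_cache_bytes(80, 8, 128, 128 * 1024, bits_per_value=16)
three_bit_cache = kv_cache_bytes(80, 8, 128, 128 * 1024, bits_per_value=3)

print(f"16-bit cache at 128K tokens: {fp16_cache / GIB:.1f} GiB")
print(f"3-bit cache at 128K tokens:  {three_bit_cache / GIB:.1f} GiB")
```

With this shape the 16-bit estimate comes out at 40 GiB for a 128K-token context. The 3-bit line reflects only the raw bit-width ratio, so it should be read as a rough bound and not as a reproduction of any reported compression figure.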

Before TurboQuant, the economics were brutal. A document processing AI agent handling legal contracts or multi-page financial reports might require multiple A100 GPUs just to store one user’s context. Cloud inference costs scaled accordingly, with pricing for long-context models often exceeding $0.03 per 1K input tokens. For SMBs running high-volume automation workflows, these costs compound quickly.

How TurboQuant achieves 6x compression with zero loss

TurboQuant isn’t another quantization hack that sacrifices quality for efficiency. It’s a theoretically grounded compression algorithm combining two complementary techniques:

- PolarQuant converts vectors from Cartesian to polar coordinates, allowing direct quantization on a fixed circular grid without normalization overhead. This eliminates the 1-2 bits of memory overhead that traditional methods require.

- Quantized Johnson-Lindenstrauss (QJL) applies a 1-bit correction layer to residual error, using the Johnson-Lindenstrauss transform to preserve distance relationships while adding zero memory overhead.
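To make the sign-based Johnson-Lindenstrauss idea concrete, here is a minimal sketch of a 1-bit inner-product estimator. It is not TurboQuant's implementation: it assumes Gaussian random projections, keeps the key's norm as one extra scalar, and uses plain Python lists. All function names are hypothetical. The sketch also uses far more projection rows than a memory-efficient scheme would, purely so the estimate is visibly close to the exact value.

```python
import math
import random


def make_projection(num_rows, dim, seed=0):
    """Random Gaussian projection matrix, shared by keys and queries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_rows)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def encode_key(projection, key):
    """Keep only the sign of each projection (1 bit each) plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    norm = math.sqrt(dot(key, key))
    return signs, norm


def estimate_inner_product(projection, query, encoded_key):
    """Estimate <query, key> from the 1-bit sketch of the key.

    For Gaussian g, E[(g . q) * sign(g . k)] = sqrt(2 / pi) * <q, k> / |k|,
    so rescaling the average by sqrt(pi / 2) * |k| gives an unbiased estimate.
    """
    signs, norm = encoded_key
    acc = sum(dot(row, query) * s for row, s in zip(projection, signs))
    return math.sqrt(math.pi / 2) * norm * acc / len(projection)


dim = 16
rng = random.Random(42)
key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
query = [rng.gauss(0.0, 1.0) for _ in range(dim)]
projection = make_projection(num_rows=2000, dim=dim, seed=7)

exact = dot(query, key)
approx = estimate_inner_product(projection, query, encode_key(projection, key))
print(f"exact={exact:.3f} estimate={approx:.3f}")
```

The query is left unquantized here; only the stored key is reduced to signs, which is what matters for cache size.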

Crucially, TurboQuant is data-oblivious. It requires no training, no calibration data, and no fine-tuning. You apply it at inference time as a drop-in optimization. On the comprehensive LongBench evaluation covering question answering, code generation, and summarization, TurboQuant matched or exceeded baseline accuracy while using 6x less memory.

On “needle-in-haystack” tests, which check whether a model can find specific information buried in massive context, TurboQuant achieved perfect recall scores identical to uncompressed models. This isn’t merely “good enough” compression; it’s lossless for practical purposes.

Practical deployment scenarios for SMBs

The 6x memory reduction opens several deployment paths that were previously out of reach for SMBs:

| Hardware | Max Context (Pre-TurboQuant) | Max Context (With TurboQuant) | Use Case |
|---|---|---|---|
| RTX 4070 (12GB) | ~16K tokens | ~64K tokens | Document summarization, chatbots |
| RTX 3090 (24GB) | ~32K tokens | ~100K tokens | RAG pipelines, contract analysis |
| Single A100 (40GB) | ~64K tokens | ~256K tokens | Enterprise document processing |
| Single H100 (80GB) | ~128K tokens | ~512K tokens | Multi-document workflows |

For businesses running n8n workflows, this means you can now host your AI agent infrastructure on-premise or in a cost-effective cloud VM rather than paying per-token API fees. One client running legal document analysis previously paid ~$0.08 per document for GPT-4 Turbo long-context processing. With a local Llama 3.1 70B model running TurboQuant on a used RTX 3090, their per-inference cost dropped to effectively zero at the margin, with the hardware cost amortized over 2-3 years at enterprise usage levels.

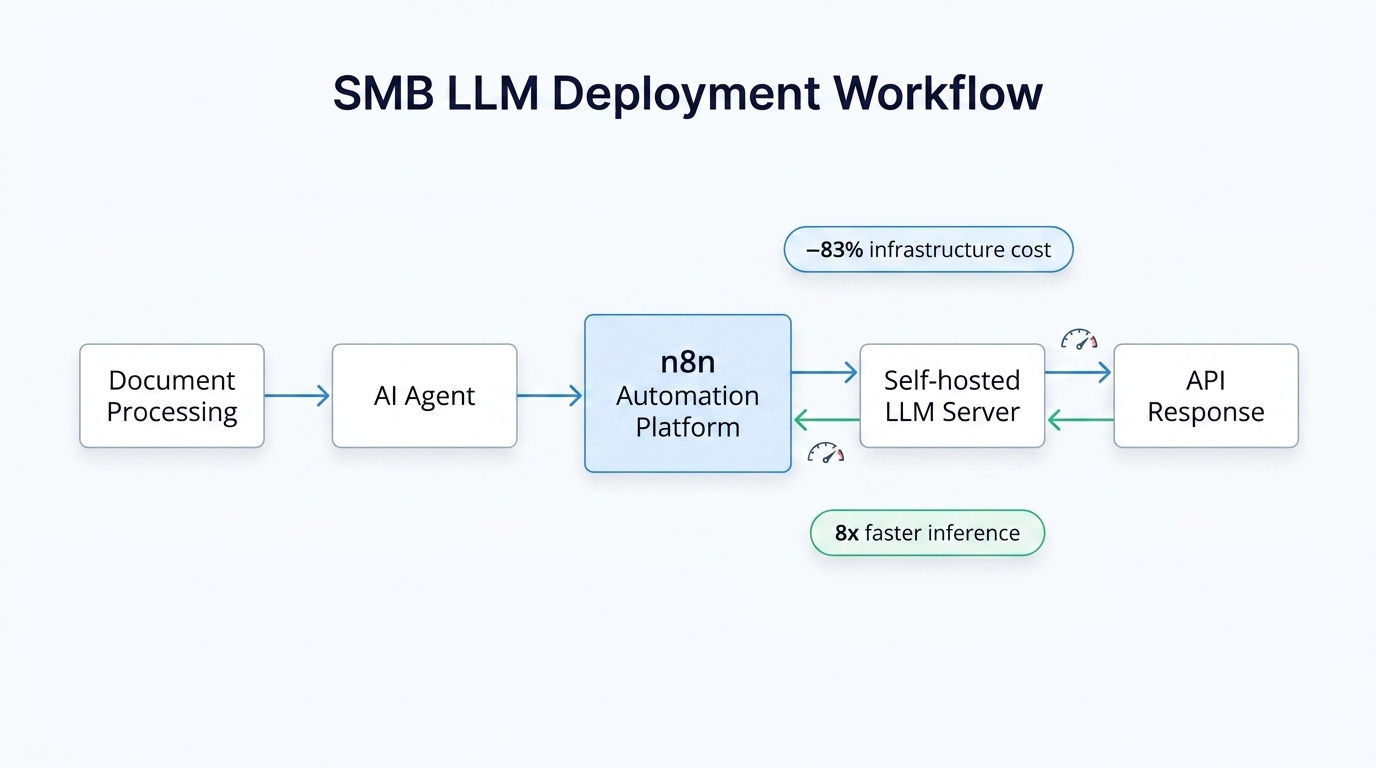

Integrating TurboQuant into n8n and automation workflows

The 8x inference speedup on H100 GPUs (achieved through optimized attention kernel computation) also benefits consumer hardware. On an RTX 4070 running Llama 3.1 8B Instruct with TurboQuant, you can expect generation speeds of 15 to 25 tokens per second at 64K context, which is entirely usable for real-time chat applications.

For n8n workflows specifically, the integration pattern is straightforward:

- Deploy a local LLM server using vLLM or llama.cpp with TurboQuant enabled (available in upstream versions as of April 2026)

- Configure n8n’s HTTP Request node to call your local endpoint at http://localhost:8000/v1/chat/completions

- Set max_tokens and context_length parameters appropriate for your compressed memory budget

- Use response caching in n8n to avoid redundant inference for repeated queries
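The request the HTTP Request node sends can be prototyped outside n8n first. The sketch below assumes the local server exposes an OpenAI-style chat completions API at the endpoint above (vLLM's server does); the model name passed in is a placeholder, the in-memory dictionary is only a stand-in for n8n-side caching, and every function name is hypothetical. Context length does not appear in the request body because it is typically configured when the server starts.

```python
import hashlib
import json
import urllib.request

ENDPOINT = "http://localhost:8000/v1/chat/completions"  # local endpoint from the steps above

_cache = {}  # in-memory stand-in for response caching (assumption)


def build_payload(model, prompt, max_tokens=512):
    """Build an OpenAI-style chat completion request body."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def cache_key(payload):
    """Stable hash of the request so identical queries share one cache entry."""
    canonical = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def post_json(payload):
    """POST the payload to the local server and decode the JSON reply."""
    request = urllib.request.Request(
        ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as reply:
        return json.loads(reply.read().decode("utf-8"))


def chat(payload, send=post_json):
    """Return the cached response if present, otherwise call the server once."""
    key = cache_key(payload)
    if key not in _cache:
        _cache[key] = send(payload)
    return _cache[key]
```

Calling `chat(build_payload("your-model-name", "Summarize this contract clause ..."))` sends one request and serves identical follow-up queries from the cache. The `send` parameter exists so the network call can be swapped for a stub in tests.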

For RAG (Retrieval-Augmented Generation) workflows, TurboQuant changes the constraint math. Instead of retrieving 3-5 chunks to stay within token limits, you can now retrieve 20-30 document chunks, improving answer quality while maintaining sub-second response times.
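The constraint math is a simple token budget. As a rough sketch, assuming fixed-size chunks and a fixed reservation for the prompt template and the generated answer (all numbers below are illustrative, and the function name is hypothetical):

```python
def max_chunks(context_tokens, chunk_tokens, prompt_tokens=1000, answer_tokens=1000):
    """Number of retrieved chunks that fit after reserving prompt and answer space."""
    available = context_tokens - prompt_tokens - answer_tokens
    if available <= 0:
        return 0
    return available // chunk_tokens


# Illustrative comparison: an 8K budget versus a 64K budget, 2,000-token chunks.
for budget in (8 * 1024, 64 * 1024):
    print(budget, "tokens ->", max_chunks(budget, chunk_tokens=2000), "chunks")
```

Retrieving more chunks also lengthens prefill, so the right number is worth confirming against your own latency target.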

Cost implications and when to self-host

TurboQuant tilts the economic balance toward self-hosting for sustained workloads. At 2026 cloud API pricing, running 1 million tokens/day through a long-context model costs approximately $180-240/month in API fees. The same workload on a local RTX 3090 ($800 used market) pays for itself in roughly 3.5 to 4.5 months on hardware cost alone, and somewhat later once electricity and maintenance are included.
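The break-even point follows directly from those figures. A minimal sketch, where the optional monthly running cost (electricity, maintenance) is an assumption you would replace with your own number:

```python
def breakeven_months(hardware_cost, monthly_api_cost, monthly_running_cost=0.0):
    """Months until the hardware spend is recovered by avoided API fees."""
    monthly_saving = monthly_api_cost - monthly_running_cost
    if monthly_saving <= 0:
        raise ValueError("self-hosting never breaks even at these costs")
    return hardware_cost / monthly_saving


# Hardware cost and API fee range taken from the paragraph above.
for api_fee in (180, 240):
    print(f"${api_fee}/month in API fees: {breakeven_months(800, api_fee):.1f} months")

# Same calculation with a hypothetical $20/month running cost.
print(f"with running costs: {breakeven_months(800, 180, monthly_running_cost=20):.1f} months")
```

Any running cost pushes the break-even later, which is why the low end of the API fee range is the more conservative planning number.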

Beyond raw cost, self-hosting eliminates rate limits, enables air-gapped deployments for sensitive data, and removes vendor lock-in — critical considerations for SMBs building core IP on LLM infrastructure.

Implementation considerations

Before deploying TurboQuant in production, consider these practical factors:

- Model compatibility: TurboQuant works with any Transformer-based architecture. Tested models include Llama 2/3, Mistral, Gemma, and Qwen. The technique is model-agnostic, but verify that your inference framework supports KV cache quantization (support was added to vLLM, llama.cpp, and SGLang as of April 2026).

- Context length trade-offs: While TurboQuant reduces KV cache memory 6x, total VRAM requirements still include model weights. For a 70B parameter model at 4-bit quantization (~35GB weights), you need ~48GB total VRAM for 128K context once the compressed cache and runtime overhead are added, which is achievable on dual RTX 3090s or a single 80GB A100.

- Warmup and initialization: TurboQuant’s random rotation step adds a small one-time latency on the first inference and negligible overhead thereafter. Benchmark with your specific workload before committing.

- Monitoring and observability: Unlike API providers, self-hosted deployments require you to track token throughput, latency percentiles, and error rates. Implement this before going live.
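The context length trade-off above can be checked with a quick budget calculation before buying hardware. This is a rough sketch: the 4GB allowance for activations and runtime overhead is an assumption, the 40GB uncompressed cache and 6x factor are the figures quoted earlier in this article, and the function names are hypothetical.

```python
def weights_gb(params_billion, bits_per_weight):
    """Model weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def total_vram_gb(params_billion, bits_per_weight, kv_cache_gb_uncompressed,
                  kv_compression=6.0, overhead_gb=4.0):
    """Weights plus compressed KV cache plus a fixed allowance for runtime overhead."""
    kv_gb = kv_cache_gb_uncompressed / kv_compression
    return weights_gb(params_billion, bits_per_weight) + kv_gb + overhead_gb


# 70B parameters at 4-bit weights, 128K context with a 40GB uncompressed cache.
needed = total_vram_gb(70, 4, kv_cache_gb_uncompressed=40)
for name, capacity in (("dual RTX 3090", 48), ("single RTX 3090", 24)):
    verdict = "fits" if needed <= capacity else "does not fit"
    print(f"{name} ({capacity}GB): needs {needed:.1f}GB, {verdict}")
```

Real deployments also lose some memory to fragmentation and framework buffers, so treat a narrow fit as a reason to benchmark, not as a guarantee.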

The future of efficient long-context AI

Google’s TurboQuant joins a broader trend of 2026 advances in inference efficiency, including NVIDIA’s NVFP4 format and Apple’s CommVQ. Together, these techniques suggest that memory-efficient long-context inference will become the default rather than the exception within 12-18 months.

For SMBs, this means the window for competitive advantage through efficient deployment is now. Organizations that integrate TurboQuant into their n8n workflows and AI agent architectures today will operate at cost structures their competitors won’t match until late 2026 or beyond.

Whether you’re building document processing pipelines, customer support agents, or internal research tools, TurboQuant removes the primary blocker that kept long-context LLMs in the enterprise domain. The technology is production-ready, open-source, and accessible today. The remaining question is whether your infrastructure strategy will capture these gains before your competitors do.
