On March 18, 2026, Google pushed an update to AI Studio that did something no other browser-based coding tool has managed at scale: it turned a simple natural-language prompt into a production-ready application with authentication, a database, API key management, and real-time multiplayer support, all without the user writing a single line of infrastructure code. The upgrade, powered by the Antigravity coding agent and native Firebase integration, marks a fundamental shift in how “vibe coding” moves from weekend hackathon territory into something businesses can actually ship.

If you have been watching the AI coding tool race from the sidelines, the headlines blur together quickly. Another agent launch, another model upgrade, another demo that looks impressive but falls apart when you need a real backend. What makes Google’s full-stack vibe coding announcement different is that it specifically targets the gap between a flashy prototype and something that survives contact with real users. Here is how it works, what it can do today, and where the rough edges still are.

What Antigravity actually does inside AI Studio

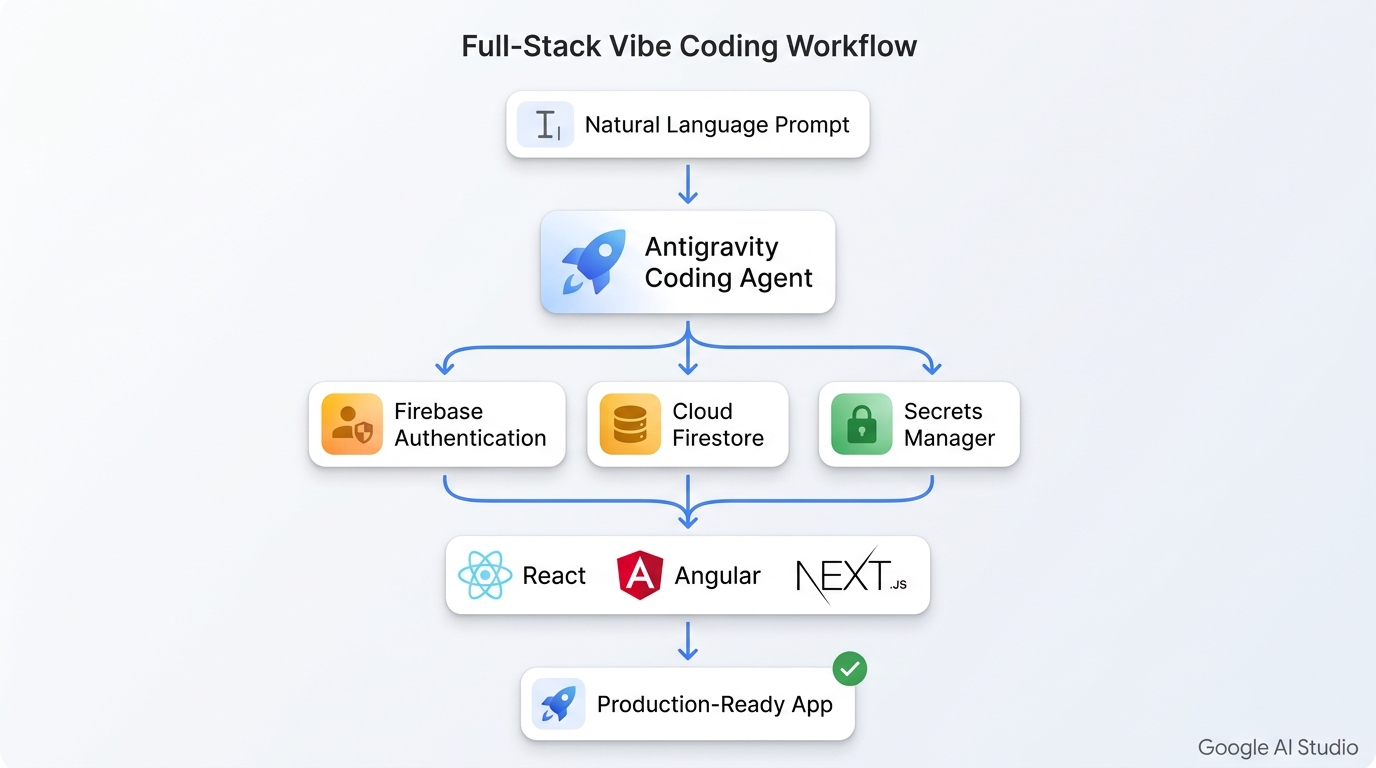

Antigravity started life as a standalone agent-first IDE, announced alongside Gemini 3 on November 18, 2025. That standalone product is built on a heavily modified fork of Visual Studio Code; inside the browser-based AI Studio, Google has repositioned the agent as the intelligence layer. It does more than generate code snippets or suggest completions: it keeps track of your entire project structure, your chat history, and the dependencies your application needs, and it carries that context across sessions.

When you describe an app in plain English, Antigravity scaffolds the project, selects the appropriate framework (React, Angular, or Next.js), and automatically pulls in external libraries like Framer Motion for animations, Shadcn for UI components, or Three.js for 3D rendering. The agent handles multi-step code edits, tracks context across conversational turns, and can pick up where you left off after closing the browser tab. This persistent session capability works across devices, which means you can start building on a laptop and continue on a desktop without losing state.

How Firebase integration eliminates backend configuration

The real innovation in this update is not code generation. Plenty of tools do that well. The differentiator is how Antigravity handles backend infrastructure. The agent proactively detects when your app needs persistence, authentication, or external service connections. When it identifies that a database or sign-in flow is required, it proposes a Firebase integration. Once you approve, it provisions the backend automatically.

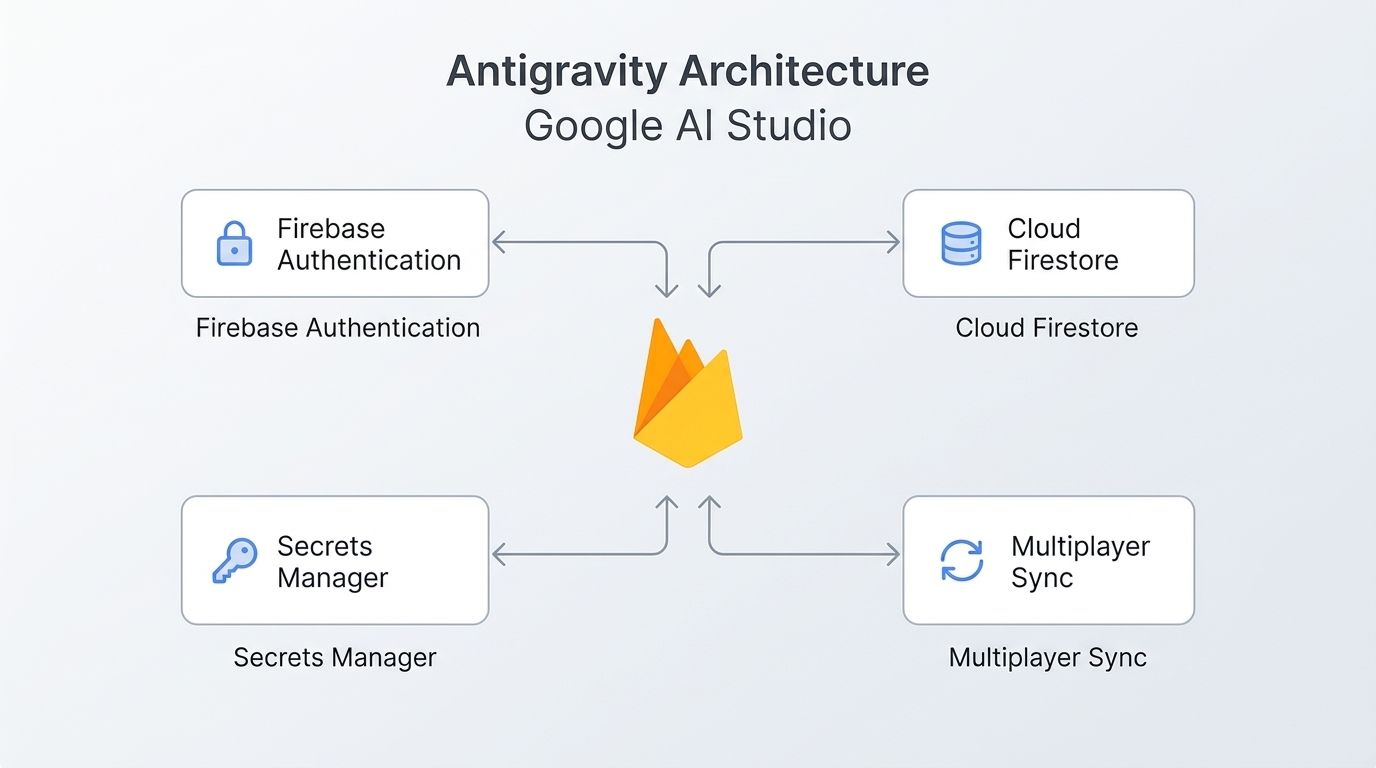

Specifically, three Firebase services wire up without manual configuration:

- Cloud Firestore provisions a NoSQL database for storing application data, user preferences, game state, or any structured content your app generates.

- Firebase Authentication sets up secure sign-in with Google, handling the entire OAuth flow, session management, and user identity without you writing auth logic.

- Secrets Manager provides a secure vault inside the Settings tab for storing API credentials. When the agent detects your app needs to connect to Google Maps, Stripe, or any third-party service, it prompts you to add the relevant key to the Secrets Manager rather than hard-coding it into the source.

This is the piece that previous vibe coding tools consistently missed. You could generate a beautiful front-end in Bolt, Replit, or Lovable, but the moment your app needed a login page, a persistent database, or a secure API connection, you were on your own. Google’s approach bakes those services into the development loop itself, removing the most common reason vibe-coded prototypes never make it past the demo stage.
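To make the Secrets Manager point concrete, here is a minimal TypeScript sketch of the pattern it encourages: look the key up at runtime and fail loudly if it is missing, rather than pasting it into source. How AI Studio actually exposes stored secrets to generated code is not covered here, so the `SecretStore` map, `requireSecret`, `maskSecret`, and the `MAPS_API_KEY` name are all illustrative assumptions, not the platform's API.

```typescript
// Hypothetical stand-in for wherever the runtime surfaces stored secrets.
// The real mechanism inside AI Studio is an assumption here, not a known API.
type SecretStore = Readonly<Record<string, string | undefined>>;

// Returns the named secret, or throws so that a missing key fails loudly at
// startup instead of surfacing later as a confusing third-party API error.
function requireSecret(store: SecretStore, name: string): string {
  const value = store[name];
  if (value === undefined || value.trim() === "") {
    throw new Error(`Missing secret: ${name}`);
  }
  return value;
}

// Masks a secret for logging so the full key never reaches log output.
function maskSecret(value: string): string {
  if (value.length <= 4) return "****";
  return "****" + value.slice(-4);
}

// Example store with a made-up key; a real app would receive this from the
// runtime rather than from a literal in source.
const exampleStore: SecretStore = { MAPS_API_KEY: "demo-key-1234" };
const mapsKey = requireSecret(exampleStore, "MAPS_API_KEY");
console.log(maskSecret(mapsKey)); // prints "****1234"
```

The design choice worth copying is the early throw: a missing key stops the app at startup with a clear message, which is easier to debug than a rejected request deep inside a maps or payments call.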

Real apps built with the system

Google showcased several production-quality examples built entirely through prompts inside the upgraded AI Studio. These are not toy demos. They represent the range of what the platform can handle:

| App | What it demonstrates |
|---|---|
| Neon Arena | Real-time multiplayer first-person laser tag game with live leaderboards, AI bots, and retro aesthetics |
| Cosmic Flow | Collaborative 3D particle visualization using Three.js with real-time syncing across users |
| Neon Claw | 3D claw machine with physics engine, timers, and competitive leaderboard |
| GeoSeeker | App connecting to Google Maps API for live geospatial data, using Secrets Manager for API keys |
| Heirloom Recipes | Recipe catalog with Gemini-powered content generation and multi-user family collaboration |

Each of these apps can be remixed directly inside Google AI Studio, which means you can fork them, modify the prompt, and generate a derivative version in minutes. Google reports that internal teams have built hundreds of thousands of applications using this system over the past several months, suggesting the platform has been stress-tested well beyond what the public demos show.

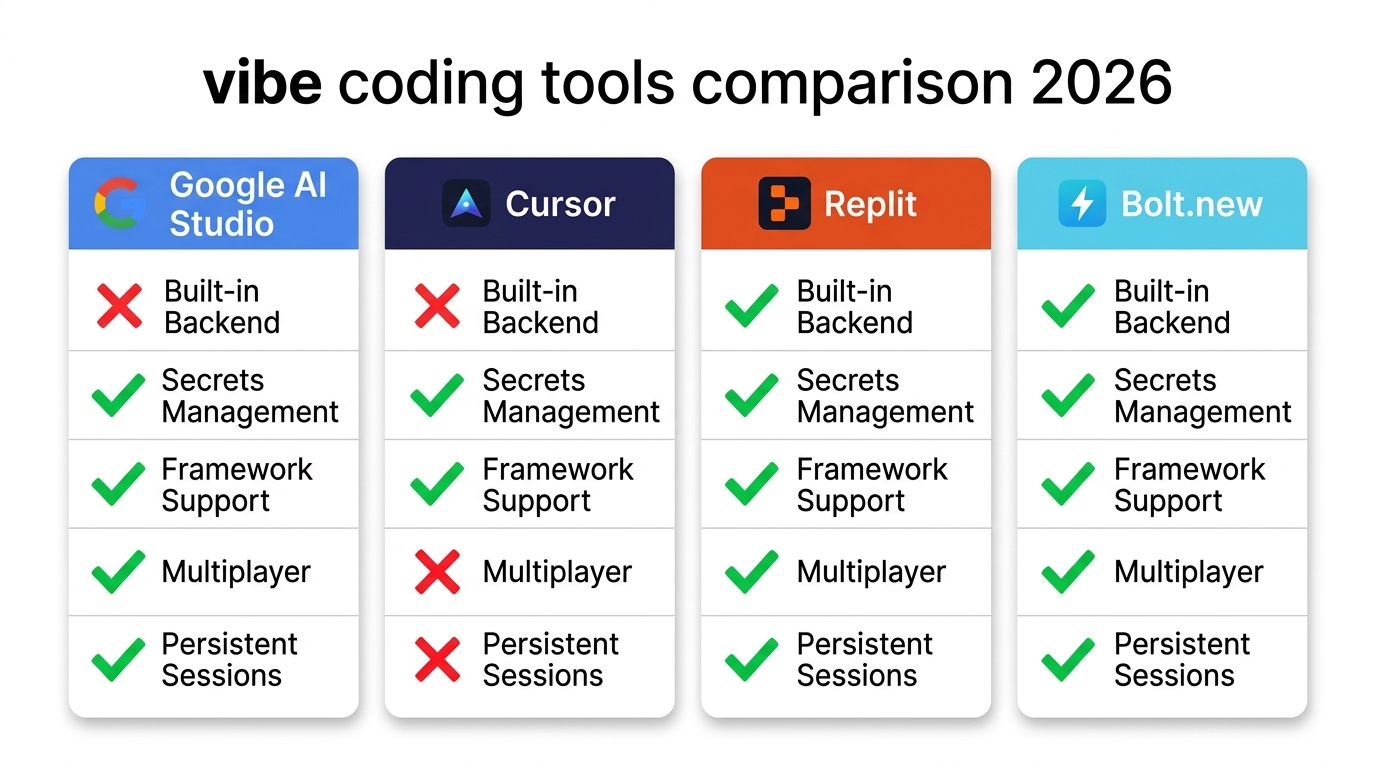

How it compares to other vibe coding tools

The 2026 vibe coding landscape has split into two categories: AI app builders optimized for speed (Lovable, Bolt, Replit, v0) and AI-native editors designed for professional developers (Cursor, Claude Code, Windsurf). Google AI Studio is attempting to straddle both, and the differences show up in a handful of key capabilities.

Google’s unique edge right now is the combination of model access through Gemini 3.1 Pro, automatic Firebase backend provisioning, and framework flexibility (React, Angular, Next.js) in a single workflow. No other tool offers this exact combination. Cursor excels at deep codebase understanding and multi-file refactors but requires you to set up your own backend. Replit handles full-stack hosting but with less intelligent agent behavior. Bolt and Lovable are fastest for non-technical founders but lack the infrastructure layer Google has built in.

Pricing, limitations, and what to watch for

AI Studio remains free for prototyping and experimentation. The business model kicks in when applications move to production and start consuming Gemini API tokens, Firebase read/write operations, and Vertex AI services. This is a classic land-and-expand strategy: reduce friction at the prototype stage, then monetize gradually as usage scales.

There are legitimate concerns worth flagging before going all-in:

- Vendor lock-in: When your app is deeply tied to Firebase, Gemini models, and future Workspace connectors, extracting it to run on a different provider becomes technically complex and expensive.

- Cost unpredictability: Token consumption, real-time database operations, and authentication flows accumulate costs as usage grows. Budget carefully before committing to production.

- Architecture control: Antigravity suggests sensible defaults, but you still need to verify that security settings, data flows, and infrastructure decisions match your requirements.

- Code quality over iterations: It remains unclear whether generated code maintains quality through dozens of iterative edits or whether drift becomes a problem.
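The cost concern above is easier to reason about with numbers in hand. The following is a rough TypeScript sketch of a back-of-the-envelope estimator, not a pricing calculator: every rate in `placeholderRates` is an invented round number, the three usage categories are a simplification, and usage is assumed to scale linearly with user count. Substitute figures from the current Gemini API and Firebase pricing pages before drawing any conclusions.

```typescript
// Monthly usage for one app (a simplified, hypothetical breakdown).
interface MonthlyUsage {
  modelTokens: number;    // tokens sent to and received from the model
  databaseReads: number;  // document reads
  databaseWrites: number; // document writes
}

// Per-unit prices in US dollars. These are inputs to fill in, not real prices.
interface UnitRates {
  perMillionTokens: number;
  perHundredThousandReads: number;
  perHundredThousandWrites: number;
}

// Sums the three cost components and rounds to the nearest cent.
function estimateMonthlyCost(usage: MonthlyUsage, rates: UnitRates): number {
  const tokenCost = (usage.modelTokens / 1000000) * rates.perMillionTokens;
  const readCost = (usage.databaseReads / 100000) * rates.perHundredThousandReads;
  const writeCost = (usage.databaseWrites / 100000) * rates.perHundredThousandWrites;
  return Math.round((tokenCost + readCost + writeCost) * 100) / 100;
}

// Assumes every user generates the same usage, which real traffic rarely does.
function scaleUsage(perUser: MonthlyUsage, users: number): MonthlyUsage {
  return {
    modelTokens: perUser.modelTokens * users,
    databaseReads: perUser.databaseReads * users,
    databaseWrites: perUser.databaseWrites * users,
  };
}

// PLACEHOLDER rates: invented for illustration, not Google's actual pricing.
const placeholderRates: UnitRates = {
  perMillionTokens: 2,
  perHundredThousandReads: 0.05,
  perHundredThousandWrites: 0.1,
};

// Made-up per-user monthly usage.
const perUser: MonthlyUsage = { modelTokens: 50000, databaseReads: 2000, databaseWrites: 500 };

for (const users of [100, 10000]) {
  const cost = estimateMonthlyCost(scaleUsage(perUser, users), placeholderRates);
  console.log(`${users} users: $${cost.toFixed(2)}`);
}
// prints "100 users: $10.15" then "10000 users: $1015.00"
```

Even with made-up rates, the exercise shows which line item dominates as the user count grows; in this example it is model tokens, by a wide margin.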

Google has also signaled that tighter Workspace integrations (connecting Drive, Sheets, and other Google services) and one-click deployment from AI Studio to Antigravity-managed environments are coming. For businesses already in the Google ecosystem, this could make AI Studio a compelling rapid-application-development environment for internal tools.

Bridging generated apps into business systems

For small and midsize businesses, the appeal of full-stack vibe coding is obvious: describe what you need and get a working application in hours instead of weeks. But most businesses do not operate in isolation. A vibe-coded dashboard, inventory tracker, or customer portal needs to connect to existing systems, whether that is a CRM, an ERP, an email marketing platform, or a custom database.

This is where the gap between “AI generated a working app” and “the app is integrated into our business” becomes visible. The Firebase backend handles data persistence and authentication inside the generated application, but orchestrating data flows between that application and your existing tools requires a separate automation layer. Platforms like n8n, which combine AI capabilities with business process automation, are increasingly the bridge that connects these generated applications to the systems businesses already run on. Specialized n8n automation partners can help SMBs design those integration workflows, connecting a vibe-coded app to Salesforce, syncing Firestore data with an existing PostgreSQL database, or triggering notifications through Slack when specific events occur in the generated application.
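As one illustration of what that automation layer has to do, consider the Firestore-to-PostgreSQL sync mentioned above. The TypeScript sketch below turns a flat document into a parameterized upsert statement. It is a hypothetical helper, not part of n8n, Firebase, or any Google tooling: `FlatDoc`, `buildUpsert`, and the `orders` table are invented for the example, and it assumes the target table has a unique `id` column and lowercase snake_case column names matching the document's fields.

```typescript
// A flat record as it might be exported from a document database.
// Nested objects and arrays are out of scope for this sketch.
type FlatDoc = Record<string, string | number | boolean | null>;

interface SqlStatement {
  text: string;      // parameterized SQL using $1, $2, ... placeholders
  values: unknown[]; // values bound to the placeholders, in order
}

// Identifiers cannot be bound as parameters, so they are validated instead.
const IDENTIFIER = /^[a-z_][a-z0-9_]*$/;

function assertIdentifier(name: string): string {
  if (!IDENTIFIER.test(name)) {
    throw new Error(`Unsafe SQL identifier: ${name}`);
  }
  return name;
}

// Builds an INSERT ... ON CONFLICT (id) DO UPDATE statement for one document.
// Assumes the target table has a primary key or unique constraint on "id".
function buildUpsert(table: string, id: string, doc: FlatDoc): SqlStatement {
  const safeTable = assertIdentifier(table);
  // Sort field names so the generated SQL is stable across runs.
  const columns = Object.keys(doc).sort().map((c) => assertIdentifier(c));
  if (columns.length === 0) {
    throw new Error("Document has no fields to sync");
  }
  if (columns.indexOf("id") !== -1) {
    throw new Error('Field name "id" is reserved for the document ID');
  }
  const allColumns = ["id", ...columns];
  const placeholders = allColumns.map((_, i) => `$${i + 1}`);
  const updates = columns.map((c) => `${c} = EXCLUDED.${c}`);
  return {
    text:
      `INSERT INTO ${safeTable} (${allColumns.join(", ")}) ` +
      `VALUES (${placeholders.join(", ")}) ` +
      `ON CONFLICT (id) DO UPDATE SET ${updates.join(", ")}`,
    values: [id, ...columns.map((c) => doc[c])],
  };
}

const statement = buildUpsert("orders", "abc123", { total: 42, status: "paid" });
console.log(statement.text);
console.log(statement.values);
```

The resulting `text` and `values` pair is shaped for a PostgreSQL client that accepts parameterized queries. Values travel as bound parameters, and table and column names are checked against a strict pattern, so a malformed field name is rejected rather than spliced into the SQL.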

Who should try it now

The full-stack vibe coding experience in Google AI Studio is worth exploring right now if you fall into one of these categories:

- An indie founder wanting to validate an idea with real authentication and a database in a day or two.

- A small team needing an MVP without hiring backend infrastructure help.

- An internal tools team building dashboards or admin panels.

- Anyone already invested in the Google ecosystem (Firebase, Maps, Cloud).

You should probably wait if any of these apply:

- You have a professional workflow with Cursor or VS Code plus a custom backend already dialed in.

- Your project requires complex architecture like microservices or custom database schemas.

- You need full control over the deployment pipeline and long-term cost structure.

The strategic signal from Google’s March 18 update is clear. AI Studio is no longer a prompt playground. It is becoming the entry point for an entire application lifecycle: prompt, generate, provision backend, iterate, and eventually deploy, all within Google’s infrastructure. Whether that convenience is worth the lock-in trade-off depends entirely on what you are building and how long you plan to maintain it.

You can try the full-stack vibe coding experience today at Google AI Studio. The example apps (Neon Arena, Cosmic Flow, Neon Claw, GeoSeeker, and Heirloom Recipes) are available to play or remix directly in the platform.
