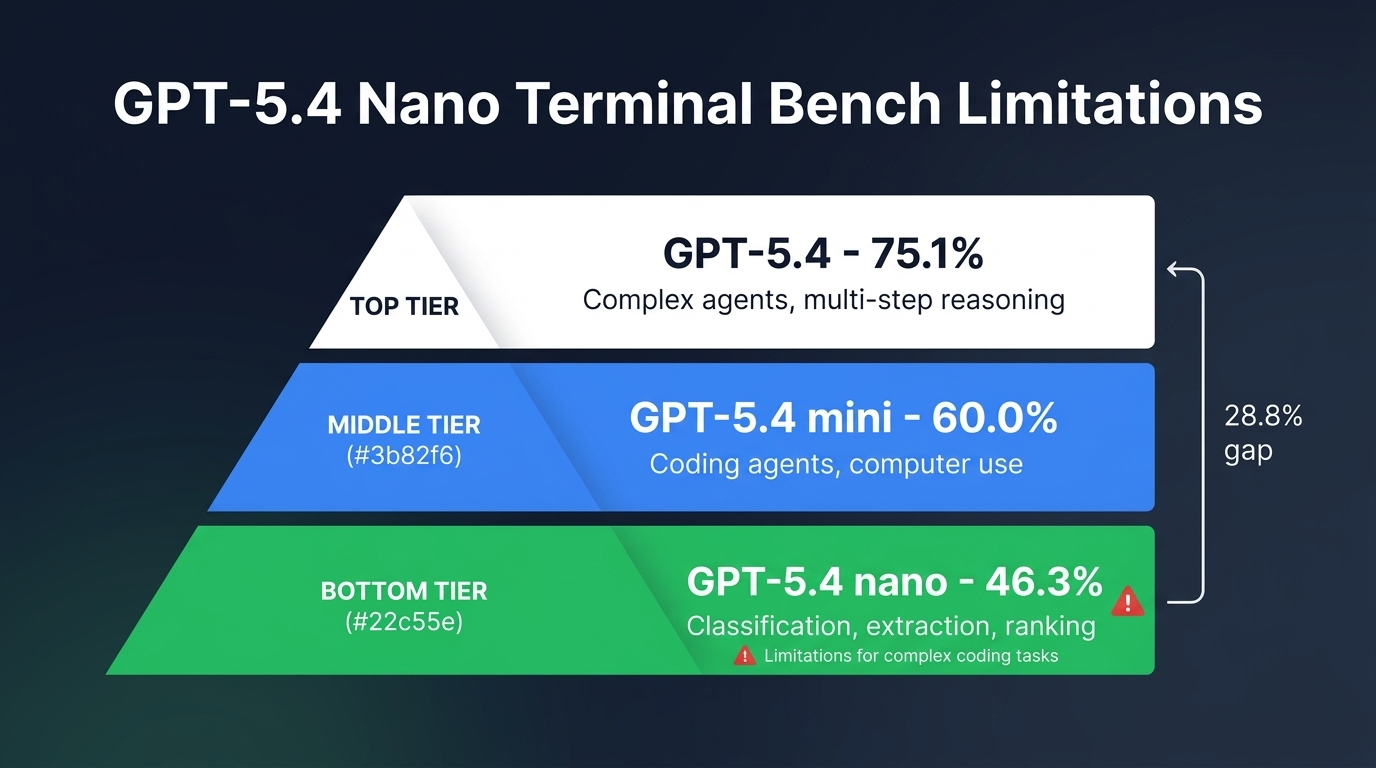

At $0.20 per million input tokens, GPT-5.4 nano looks like an obvious choice for teams trying to keep AI costs down. But that price tag hides a steep capability gap. Adam Holter’s benchmark analysis, published alongside OpenAI’s March 17, 2026 release of the mini and nano variants, reveals that GPT-5.4 nano scores just 46.3% on Terminal-Bench 2.0—the benchmark that most closely mirrors how coding agents actually work in production. That is nearly 29 percentage points behind the full GPT-5.4 model and almost 14 points behind GPT-5.4 mini. If your team is running complex coding workflows, debugging sessions, or multi-step agent loops through nano to save on API costs, the hidden expense of failed tasks and repeated errors will eat those savings quickly.

What Terminal-Bench 2.0 actually measures

Terminal-Bench 2.0 is not another function-completion benchmark. It tests whether an AI model can work inside a terminal environment: inspect files, run commands, debug failures, manage state across steps, and deliver a working end-to-end result. As BenchLM’s March 2026 explainer notes, “models that look identical on HumanEval often separate sharply here.” That separation is exactly what makes it the right benchmark for evaluating whether a model can serve as a real coding agent rather than just a code generator.

Strong Terminal-Bench 2.0 scores correlate with four capabilities that matter in production: solid coding fundamentals, step-by-step reasoning under uncertainty, effective recovery from errors, and reliable tool-use discipline. A model that aces single-turn completions but collapses when it needs to recover from a failed build is not a model you want running unsupervised in your codebase. Terminal-Bench 2.0 exposes that gap by design.

The benchmark numbers tell a clear story

OpenAI’s official benchmark tables, confirmed by Holter’s independent analysis, show a clean performance ladder from GPT-5.4 down through mini and nano. But the gaps are not uniform, and reading them carefully reveals exactly where nano falls short.

| Benchmark | GPT-5.4 | GPT-5.4 mini | GPT-5.4 nano | GPT-5 mini |
|---|---|---|---|---|
| Terminal-Bench 2.0 | 75.1% | 60.0% | 46.3% | 38.2% |
| SWE-Bench Pro | 57.7% | 54.4% | 52.4% | 45.7% |
| OSWorld-Verified | 75.0% | 72.1% | 39.0% | 42.0% |
| Toolathlon | 54.6% | 42.9% | 35.5% | 26.9% |
| GPQA Diamond | 93.0% | 88.0% | 82.8% | 81.6% |
| MRCR v2 (64K-128K) | 86.0% | 47.7% | 44.2% | 35.1% |

Notice what happens across these benchmarks. On knowledge-intensive tests like GPQA Diamond, nano’s 82.8% sits respectably close to mini’s 88.0%. But on the agentic execution benchmarks—Terminal-Bench 2.0, OSWorld-Verified, and Toolathlon—the gap between nano and the models above it balloons. Nano loses nearly 14 points to mini on Terminal-Bench and drops a staggering 33 points on OSWorld-Verified. This is not a model that struggles slightly with complex tasks. It is a model that struggles profoundly with the kind of multi-step, stateful workflows that define real coding agent work.
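To check these gaps yourself, a few lines of Python are enough. This is a minimal sketch: the scores are copied from the table above, while the `SCORES` layout and the `gaps` helper are illustrative names, not part of any published tooling.

```python
# Scores (percent) copied from the benchmark table above.
SCORES = {
    "Terminal-Bench 2.0": {"full": 75.1, "mini": 60.0, "nano": 46.3},
    "SWE-Bench Pro": {"full": 57.7, "mini": 54.4, "nano": 52.4},
    "OSWorld-Verified": {"full": 75.0, "mini": 72.1, "nano": 39.0},
    "Toolathlon": {"full": 54.6, "mini": 42.9, "nano": 35.5},
    "GPQA Diamond": {"full": 93.0, "mini": 88.0, "nano": 82.8},
    "MRCR v2 (64K-128K)": {"full": 86.0, "mini": 47.7, "nano": 44.2},
}


def gaps(scores):
    """Return {benchmark: (mini - nano, full - nano)} in percentage points."""
    return {
        name: (round(s["mini"] - s["nano"], 1), round(s["full"] - s["nano"], 1))
        for name, s in scores.items()
    }


if __name__ == "__main__":
    # Sort so the largest nano-vs-mini gap comes first.
    ranked = sorted(gaps(SCORES).items(), key=lambda kv: kv[1][0], reverse=True)
    for name, (vs_mini, vs_full) in ranked:
        print(f"{name:<20} trails mini by {vs_mini:4.1f}, full by {vs_full:4.1f}")
```

Sorted this way, OSWorld-Verified (33.1 points behind mini) and Terminal-Bench 2.0 (13.7 points) top the list, while the largest gap to the full model is on MRCR v2 (41.8 points).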

Where nano fails hardest

Multi-step agentic coding

Terminal-Bench 2.0 requires models to inspect environments, read and edit files, run commands, and recover from errors across multiple steps. At 46.3%, nano fails more than half of these tasks. For a typical coding agent workflow (diagnosing a build failure, reading stack traces, patching source files, and re-running the suite), nano is more likely to fail the job than to finish it. The savings from nano’s $0.20 per million input tokens evaporate quickly when roughly every second task has to be re-run on a more capable model or fixed manually by a developer.

Computer use and visual interface navigation

Nano’s OSWorld-Verified score of 39.0% is not just weak: it is below the older GPT-5 mini at 42.0%. This is the only benchmark in the table where nano fails to beat the previous generation’s mini model. OSWorld tests computer use: reading screenshots, navigating desktop interfaces, completing visual workflows. If your product involves interpreting UI screenshots or navigating applications autonomously, nano is the wrong model regardless of cost. GPT-5.4 mini at 72.1% handles this workload competently and approaches the full model’s 75.0%.

Long-context reasoning

The long-context picture is particularly stark. On OpenAI’s MRCR v2 benchmark at 64K-128K context, nano scores 44.2%. The full GPT-5.4 model hits 86.0%. That 42-point gap means nano cannot reliably track information across long documents or large codebases. If your workflow requires the model to maintain context while reviewing a 50-page API specification or tracing through hundreds of lines of interconnected code, nano will lose track of critical details. Even mini struggles here at 47.7%, but nano’s deficit is worse.

What nano is actually built for

OpenAI’s own positioning is explicit. The official announcement recommends GPT-5.4 nano for “classification, data extraction, ranking, and coding subagents that handle simpler supporting tasks.” Microsoft’s Azure AI team echoes this, describing nano as designed for “ultra-low latency automation at scale” and short-scoped tasks where extended multi-step reasoning is not required. The word “subagent” appears deliberately throughout OpenAI’s documentation. Nano is not meant to be the brain of your system. It is meant to be the fast, cheap worker that handles well-defined, narrow tasks delegated by a more capable model.

This framing makes nano genuinely useful in the right context. Classification pipelines that label incoming support tickets, data extraction jobs that pull structured fields from thousands of invoices, ranking tasks that sort search results—these are narrow, well-defined operations where nano’s speed and cost advantage matter and its reasoning limitations are irrelevant. Perplexity Deputy CTO Jerry Ma summarized the split concisely: “Mini delivers strong reasoning, while Nano is responsive and efficient for live conversational workflows.”

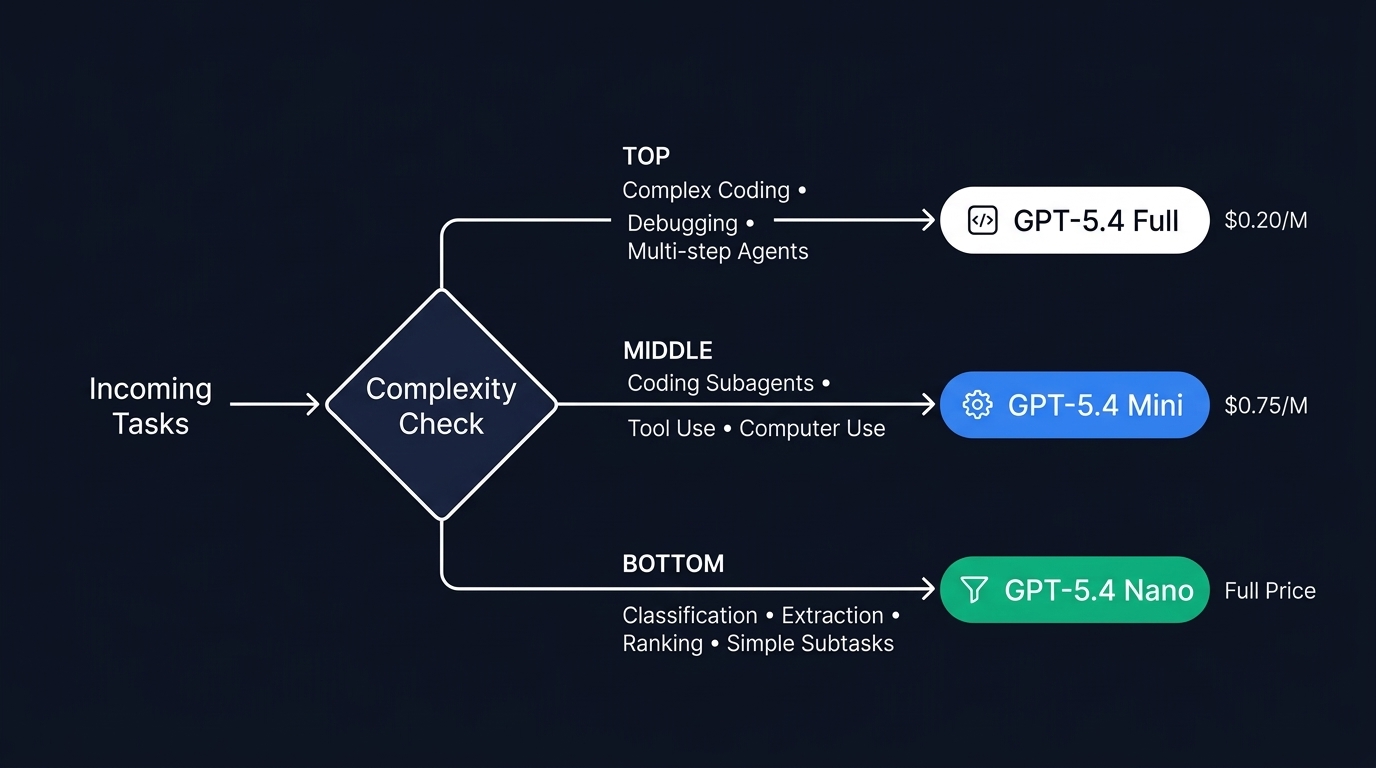

Building a model routing strategy

The practical answer for teams running production AI systems is not to pick a single model. It is to implement a routing layer that matches task complexity to model capability. OpenAI’s Codex integration demonstrates this pattern concretely: GPT-5.4 handles planning and coordination while GPT-5.4 mini subagents execute narrower tasks in parallel at 30% of the quota cost. The same principle extends to nano.

A well-designed routing strategy follows a simple decision tree. If the task involves multi-step terminal execution, debugging complex systems, or navigating visual interfaces, it goes to GPT-5.4 or GPT-5.4 mini. If the task is a well-scoped classification, extraction, or ranking operation with clear inputs and outputs, nano gets the call. The complexity check can be as simple as task-type tagging in your orchestration layer, or as sophisticated as using nano itself as the router—classify incoming requests, then escalate to mini or full GPT-5.4 when the classification flags complexity beyond nano’s capability.
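The decision tree above can be sketched in a few lines. Everything named here is an assumption for illustration: the tag vocabulary, the `Task` and `route` names, and the plain `"nano"` / `"mini"` / `"full"` labels, which stand in for whatever model identifiers your provider exposes. The `classify` callable is a placeholder for the optional "nano as router" stage and makes no real API call.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical tag vocabulary; adapt to your orchestration layer.
AGENTIC_TAGS = {"terminal_execution", "debugging", "visual_navigation"}
NARROW_TAGS = {"classification", "extraction", "ranking"}


@dataclass
class Task:
    prompt: str
    tag: Optional[str] = None  # set upstream when the task type is known


def route(
    task: Task,
    classify: Optional[Callable[[str], str]] = None,
    agentic_model: str = "mini",
) -> str:
    """Return a model label for the task, following the decision tree."""
    tag = task.tag
    if tag is None and classify is not None:
        # Optional second stage: a cheap classifier (for example, nano
        # itself) assigns a tag to requests that arrive untagged.
        tag = classify(task.prompt)
    if tag in NARROW_TAGS:
        return "nano"
    # Agentic, unknown, or missing tags all escalate, so an unrecognized
    # task never lands on the weakest model by default.
    return agentic_model


print(route(Task("Label this support ticket", tag="classification")))  # nano
print(route(Task("Fix the failing build", tag="debugging")))           # mini
print(route(Task("Something untagged")))                               # mini
```

Escalating by default is a deliberate choice in this sketch: sending a simple task to mini costs a little extra, while sending a complex task to nano risks a silent failure.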

Hidden costs of using the wrong model

The danger of defaulting to nano for cost reasons is not that it fails obviously. It is that it fails subtly. A model that scores 46.3% on Terminal-Bench 2.0 does not refuse tasks—it attempts them and gets them wrong more than half the time. In practice, this shows up as bugs that pass initial review but break in production, incomplete code fixes that require multiple re-attempts, and agent loops that spiral into incorrect state. Each failed attempt costs tokens, developer time for manual review, and latency in your delivery pipeline.

Consider a concrete scenario: your team runs an automated debugging agent that processes 500 issue reports per day. Using nano at $0.20 per million input tokens saves roughly $275 per day compared to mini at $0.75 per million, a figure that implies about one million input tokens per report (500 million tokens per day at a $0.55 price difference). But if nano resolves only 46% of tasks correctly versus mini’s 60%, engineers must manually handle roughly 70 additional failed resolutions every day. At even a modest engineering cost per intervention, that manual work outweighs the daily token savings.
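The arithmetic behind this scenario can be reproduced as a rough sketch. Two inputs are assumptions rather than figures from the benchmarks: one million input tokens per report (the volume at which the $275 saving holds) and a hypothetical $25 per manual fix. The calculation also treats benchmark pass rates as production resolution rates, which is a simplification.

```python
def daily_tradeoff(tasks_per_day, tokens_per_task, nano_price, mini_price,
                   nano_success, mini_success, cost_per_manual_fix):
    """Compare daily token savings with the cost of extra manual fixes.

    Prices are USD per million input tokens; success rates are fractions.
    Returns (token_savings, extra_failures, manual_cost) per day.
    """
    million_tokens = tasks_per_day * tokens_per_task / 1_000_000
    token_savings = million_tokens * (mini_price - nano_price)
    extra_failures = tasks_per_day * (mini_success - nano_success)
    manual_cost = extra_failures * cost_per_manual_fix
    return token_savings, extra_failures, manual_cost


savings, failures, manual = daily_tradeoff(
    tasks_per_day=500,
    tokens_per_task=1_000_000,   # assumption: volume implied by the $275 figure
    nano_price=0.20,
    mini_price=0.75,
    nano_success=0.46,
    mini_success=0.60,
    cost_per_manual_fix=25.0,    # hypothetical engineering cost per fix
)
print(f"token savings per day:  ${savings:,.2f}")
print(f"extra failures per day: {failures:.0f}")
print(f"manual cost per day:    ${manual:,.2f}")
print(f"break-even cost per fix: ${savings / failures:.2f}")
```

Under these assumptions the token savings are $275 per day against about $1,750 in manual work, and the break-even point is roughly $4 per fix. Any realistic engineering cost per intervention sits well above that.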

Key takeaways

- GPT-5.4 nano’s 46.3% Terminal-Bench 2.0 score means it fails more agentic coding tasks than it completes—reserve it for classification, extraction, and ranking, not complex workflows.

- The 33-point OSWorld-Verified gap between nano (39.0%) and mini (72.1%) makes nano unsuitable for any task involving visual interface navigation or computer use.

- Nano actually scores below the older GPT-5 mini on OSWorld-Verified, confirming it is not designed for tasks requiring deep multimodal reasoning.

- Implement a model routing layer that sends simple, well-scoped tasks to nano but escalates complex coding, debugging, and multi-step agent work to mini or full GPT-5.4.

- The hidden cost of failed automation—repeated retries, manual fixes, broken deployments—will exceed nano’s token savings if you route the wrong tasks to it.

GPT-5.4 nano is a genuinely useful tool for high-volume, low-complexity workloads. At $0.20 per million input tokens, it undercuts Google’s Gemini Flash-Lite and makes bulk classification pipelines economically viable. But treating it as a general-purpose coding agent because of its price is a strategic mistake. The benchmarks are clear, OpenAI’s own documentation is explicit, and the production evidence from teams running hierarchical agent systems confirms the same conclusion: use nano for what it is built to do, and route everything else up the capability ladder. The cost of getting that routing wrong is not measured in tokens—it is measured in broken deployments and wasted engineering time.
