The release of Cursor Composer 2 on March 19, 2026, has sparked a significant debate in the developer community: is the aggressively low price point a sustainable shift in AI economics, or is it a calculated move to lock teams into the Cursor ecosystem? As of late March 2026, Cursor’s in-house model series has reached its third generation, promising “frontier-level” coding intelligence at a fraction of the cost of third-party giants like Anthropic’s Claude Opus 4.6 and OpenAI’s GPT-5.4. For SMB engineering teams, the decision of where to invest their automation budget has never been more complex, as the difference between “standard” and “fast” variants can swing a monthly bill by hundreds of dollars.

The economics of Composer 2 pricing

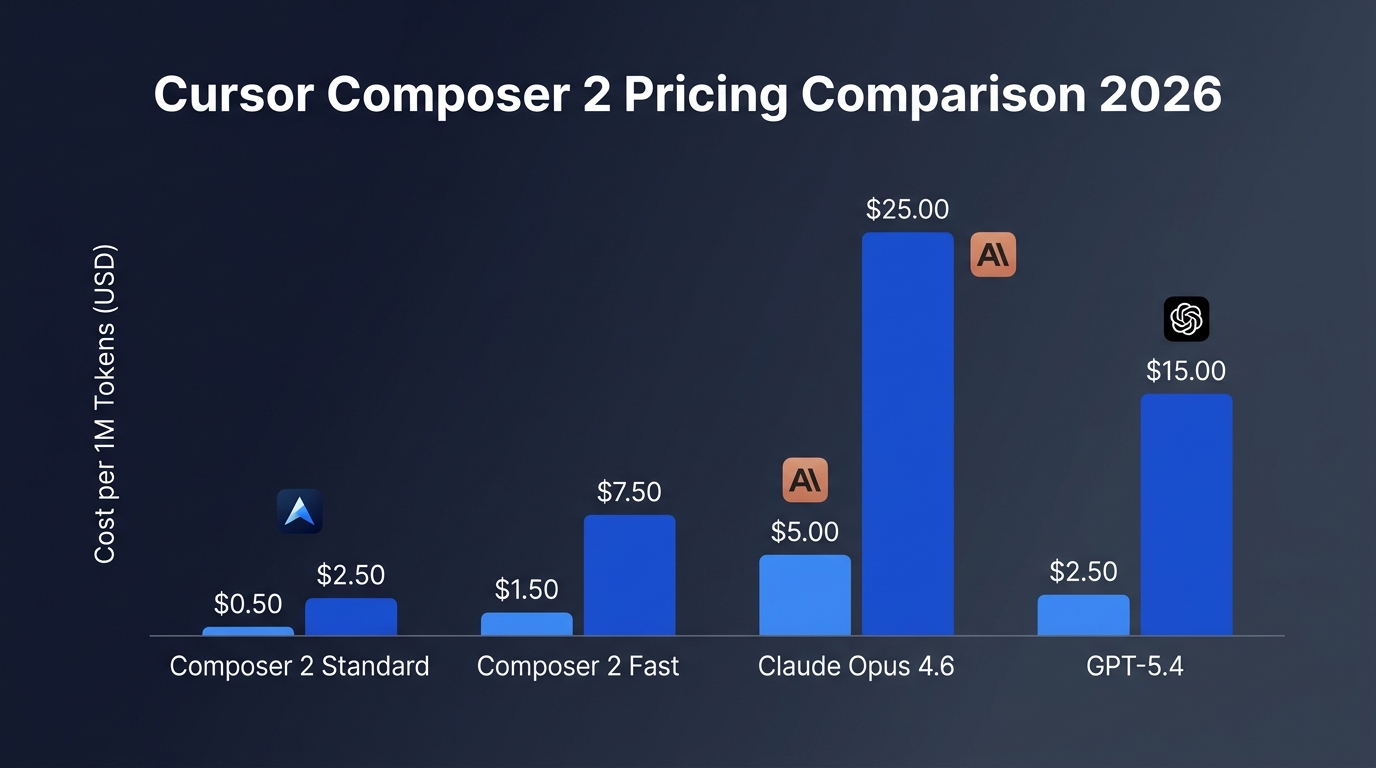

Cursor Composer 2 represents a dramatic price drop from its predecessor, Composer 1.5, which launched just a month earlier in February 2026: 86% for the Standard variant and about 57% for the Fast variant. The new pricing structure is split into two distinct tiers: a Standard variant for cost-conscious development and a Fast variant designed for real-time interactivity. The Fast variant has become the default setting within the Cursor IDE, a move that signals Cursor’s confidence in the model’s balance of speed and intelligence.

| Model Variant | Input Cost (USD per 1M tokens) | Output Cost (USD per 1M tokens) | Default Status |
|---|---|---|---|
| Composer 2 Standard | $0.50 | $2.50 | Optional |
| Composer 2 Fast | $1.50 | $7.50 | Default |
| Composer 1.5 (Feb 2026) | $3.50 | $17.50 | Deprecated |
| Claude Opus 4.6 | $5.00 | $25.00 | External |
| GPT-5.4 | $2.50 | $15.00 | External |

For an SMB team generating 10 million output tokens per month (a plausible volume for 20-30 developers using agentic workflows), switching from Claude Opus 4.6 to Composer 2 Standard cuts the output bill from $250 to $25, a monthly saving of $225 on output tokens alone. Even the Fast variant, at three times the price of Standard, remains 70% cheaper than Anthropic’s flagship model. This aggressive pricing is possible largely because Composer 2 is restricted to the Cursor IDE: it cannot be accessed through a standalone API, which lets Cursor optimize inference specifically for code-related tasks.
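The arithmetic behind these figures is easy to reproduce. The sketch below hard-codes the per-million-token prices from the table and recomputes the output-only saving and the Fast-versus-Opus discount. The function names are illustrative, and the 10 million token volume is the working assumption from the paragraph above, not measured usage.

```python
# Prices in USD per 1M tokens, copied from the pricing table in this article.
PRICES = {
    "Composer 2 Standard": {"input": 0.50, "output": 2.50},
    "Composer 2 Fast": {"input": 1.50, "output": 7.50},
    "Composer 1.5": {"input": 3.50, "output": 17.50},
    "Claude Opus 4.6": {"input": 5.00, "output": 25.00},
    "GPT-5.4": {"input": 2.50, "output": 15.00},
}


def monthly_cost(model, input_tokens, output_tokens):
    """Return the USD cost of one month's token volume for `model`."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def percent_cheaper(model, baseline, kind="output"):
    """Return how much cheaper `model` is than `baseline`, in percent."""
    return 100 * (1 - PRICES[model][kind] / PRICES[baseline][kind])


# Assumed volume: 10M output tokens per month, input tokens ignored.
OUTPUT_TOKENS = 10_000_000
opus = monthly_cost("Claude Opus 4.6", 0, OUTPUT_TOKENS)
standard = monthly_cost("Composer 2 Standard", 0, OUTPUT_TOKENS)
fast_discount = percent_cheaper("Composer 2 Fast", "Claude Opus 4.6")

print(f"Output-only saving, Opus -> Standard: ${opus - standard:.2f}")
print(f"Fast vs Opus on output: {fast_discount:.0f}% cheaper")
```

Passing your own input and output volumes to `monthly_cost` gives a fuller picture, since agentic sessions tend to be input-heavy.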

Intelligence vs practical value: The benchmark gap

Pricing is only half the story; value requires performance. Cursor claims that Composer 2 matches or exceeds the coding intelligence of many third-party frontier models. According to official data released on March 19, 2026, Composer 2 scores 61.7 on Terminal-Bench 2.0, slightly edging out Claude Opus 4.6’s score of 58.0. However, it still trails OpenAI’s GPT-5.4, which leads the benchmark at 75.1.

The “same intelligence” claim for both Standard and Fast variants is a critical part of Cursor’s value proposition. Both run the same underlying architecture: a Mixture-of-Experts (MoE) system built on a Kimi K2.5 foundation with Cursor’s proprietary continued pretraining and reinforcement learning. The difference lies solely in the compute resources allocated for inference speed. For teams where developer flow is paramount, the Fast surcharge ($1.00/M on input and $5.00/M on output) is best understood as a productivity tax rather than a quality upgrade.

Self-summarization and long-horizon tasks

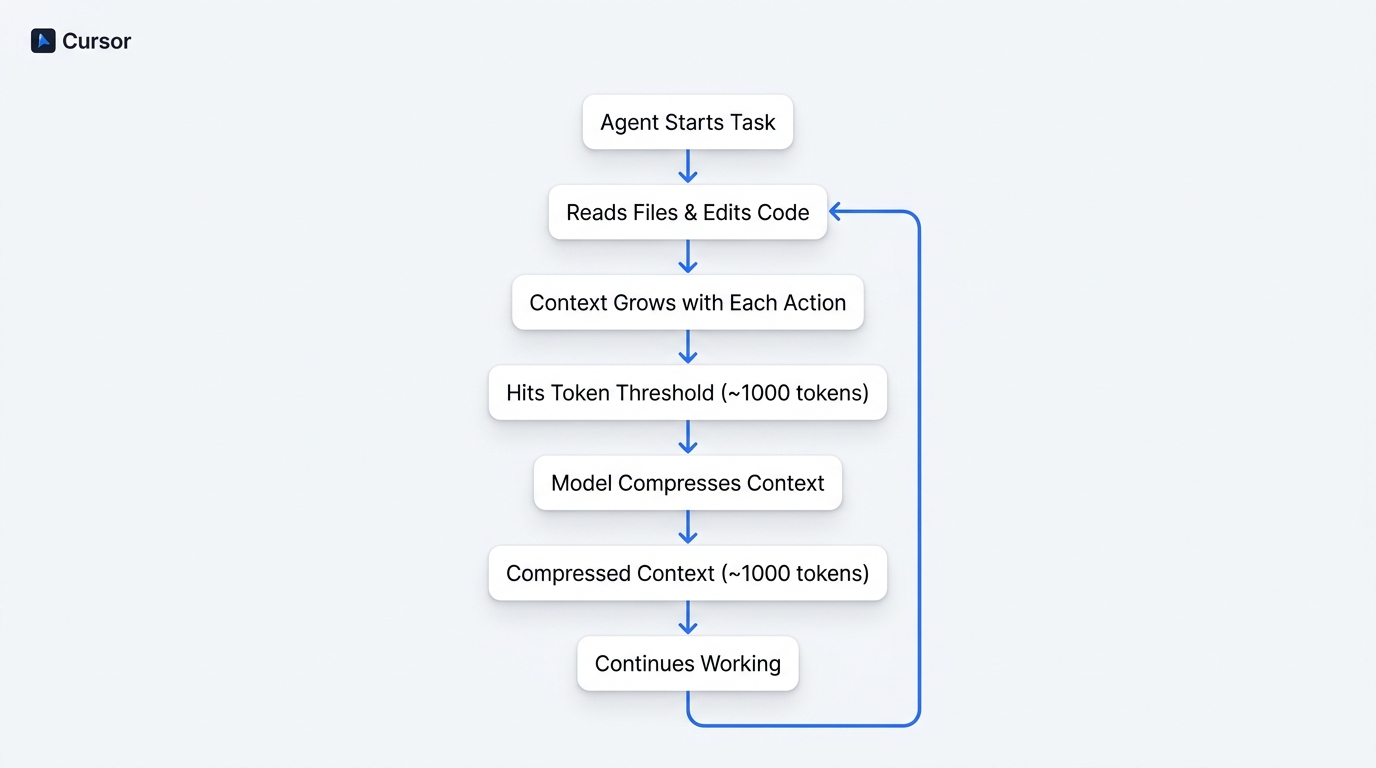

One of the most innovative technical features driving Composer 2’s value is “self-summarization.” In agentic coding, a session can quickly balloon to thousands of tokens as the AI reads files, executes terminal commands, and tracks its own history. Traditional models eventually “forget” earlier context or use crude summarization that loses critical details. Cursor has integrated summarization directly into the reinforcement learning loop.

When a session hits a specific token threshold, Composer 2 compresses its own context into a highly dense 1,000-token summary. Because the model was trained to perform this compression, it knows exactly which technical details are vital for the next steps. Cursor reports a 50% reduction in “compaction errors” compared to external summarization methods. For SMBs, this means the agent can handle sessions involving hundreds of sequential actions without the “hallucination spiral” that often occurs when cheaper models lose context, effectively saving money by reducing the need for manual corrections.
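To make the mechanism concrete, here is a minimal sketch of threshold-triggered context compaction. It is not Cursor’s implementation: the `AgentContext` class, the word-count tokenizer, the 8,000-token default threshold, and the `truncate_summarizer` placeholder are all assumptions for illustration. Only the roughly 1,000-token summary size comes from the description above. In Composer 2 the summarizer would be the model itself, trained to decide what to keep.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def count_tokens(text: str) -> int:
    """Crude stand-in tokenizer: one token per whitespace-separated word."""
    return len(text.split())


@dataclass
class AgentContext:
    # Hypothetical callback: (history, token_budget) -> summary text.
    summarize: Callable[[str, int], str]
    threshold: int = 8000        # assumed trigger point; no figure is published here
    summary_budget: int = 1000   # summary size described in the article
    messages: List[str] = field(default_factory=list)

    def total_tokens(self) -> int:
        return sum(count_tokens(m) for m in self.messages)

    def append(self, message: str) -> None:
        """Record a step, compacting the history if it grows past the threshold."""
        self.messages.append(message)
        if self.total_tokens() > self.threshold:
            self.compact()

    def compact(self) -> None:
        """Replace the full history with a single summary message."""
        history = "\n".join(self.messages)
        self.messages = [self.summarize(history, self.summary_budget)]


def truncate_summarizer(history: str, budget: int) -> str:
    """Placeholder summarizer: keeps only the most recent `budget` words."""
    return " ".join(history.split()[-budget:])


# Small numbers so the compaction is easy to observe.
ctx = AgentContext(summarize=truncate_summarizer, threshold=50, summary_budget=10)
for step in range(20):
    ctx.append(f"step {step} ran command and read file")
print(ctx.total_tokens(), len(ctx.messages))
```

The naive truncation used here is exactly the kind of “crude summarization” that loses earlier details. The point of the sketch is the control flow, which stays the same when a trained summarizer is swapped in.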

The contrarian view: Is the savings a mirage?

While the per-token costs are undeniably lower, some critics argue the “practical value” is more nuanced. First, the dependency on Kimi K2.5 (developed by Moonshot AI) was not initially disclosed, raising sovereignty and compliance concerns for some enterprise teams. Although Cursor reportedly attributes about 75% of the model’s performance to its own training, the foundational origin remains a factor for sensitive industries.

Second, Cursor’s “Auto” mode, which dynamically selects the “best” model, will increasingly default to Composer 2 Fast. While this ensures a smooth experience, it can obscure the actual token spend. Unlike a transparent API routed through a tool like OpenRouter, Cursor’s internal credit system makes it hard for teams to tell whether they are actually saving money. If the model is “chattier” in agentic mode, it may consume more tokens than a more concise but more expensive model like GPT-5.4 would for the same task.
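The “chattiness” concern can be quantified as a break-even ratio: how many times more output tokens the cheaper model can emit before it costs as much as the pricier one. The sketch below uses the output prices from the pricing table and considers output tokens only, which is a simplifying assumption. The function name is illustrative.

```python
def break_even_verbosity(cheap_price, expensive_price):
    """Return the output-token multiple at which the cheaper model costs the same.

    Prices are USD per 1M output tokens. Input tokens are ignored.
    """
    return expensive_price / cheap_price


# Output prices per 1M tokens, from the article's pricing table.
fast_vs_gpt = break_even_verbosity(7.50, 15.00)
standard_vs_gpt = break_even_verbosity(2.50, 15.00)

print(f"Composer 2 Fast breaks even with GPT-5.4 at {fast_vs_gpt:.1f}x the output")
print(f"Composer 2 Standard breaks even with GPT-5.4 at {standard_vs_gpt:.1f}x the output")
```

In other words, Composer 2 Fast would need to emit twice as many output tokens as GPT-5.4 for the same task before the saving disappears, and Standard six times as many. Whether real sessions approach those ratios is something only a team’s own usage data can answer.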

SMB team recommendations

For small to medium-sized engineering teams, the current 2026 landscape suggests a tiered approach to utilizing Composer 2:

- Use Standard for background tasks: If you are running long refactors or test generation where you aren’t waiting for the AI, switch manually to the Standard variant to save about 67% on output costs ($2.50 versus $7.50 per million tokens).

- Audit your “Auto” mode: Periodically check your usage dashboard to see how many tokens are being routed to third-party models versus Composer. If your team is hitting credit limits, Composer 2 is your primary lever for cost reduction.

- Reserve GPT-5.4 for system architecture: While Composer 2 beats Opus 4.6 in terminal tasks, it still lacks the deep reasoning capabilities of GPT-5.4 for high-level system design. Use the cheaper model for the “labor” and the frontier model for the “blueprints.”
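A team could capture this tiered approach as an explicit routing policy in internal tooling or onboarding docs. The sketch below is purely illustrative: the task categories and the `choose_model` helper are hypothetical and do not correspond to any Cursor setting or API. The mapping simply encodes the recommendations above.

```python
from enum import Enum


class Task(Enum):
    """Hypothetical task categories for a team's model-selection policy."""
    INTERACTIVE_EDIT = "interactive_edit"
    BACKGROUND_REFACTOR = "background_refactor"
    TEST_GENERATION = "test_generation"
    SYSTEM_DESIGN = "system_design"


# Policy table derived from the recommendations in this section.
ROUTES = {
    Task.INTERACTIVE_EDIT: "Composer 2 Fast",         # developer is waiting on the result
    Task.BACKGROUND_REFACTOR: "Composer 2 Standard",  # latency does not matter
    Task.TEST_GENERATION: "Composer 2 Standard",      # latency does not matter
    Task.SYSTEM_DESIGN: "GPT-5.4",                    # reserved for high-level design
}


def choose_model(task: Task) -> str:
    """Return the model name this policy assigns to `task`."""
    return ROUTES[task]


for task in Task:
    print(f"{task.value}: {choose_model(task)}")
```

Writing the policy down this way makes it easy to review against the usage dashboard and adjust when prices or benchmarks change.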

Conclusion

In 2026, Cursor Composer 2 is cheaper per token than the third-party models compared here, but its true value lies at the intersection of deep IDE integration and self-summarizing context management. The 86% price drop is not just a marketing tactic; it reflects a shift toward specialized “vertical” AI models that out-compete generalist models within their specific domain. For SMB teams, the primary takeaway is clear: while you should remain skeptical of vendor lock-in, the efficiency gains of a model trained specifically for the IDE environment are now significant enough that sticking exclusively to general-purpose APIs like Claude or GPT is becoming a luxury few teams can afford. The next step for most teams is to audit their current credit burn and experiment with the Standard variant for non-interactive automation tasks.
