The AI coding model landscape shifted dramatically in March 2026. Within a two-week span, OpenAI shipped GPT-5.4 on March 5 and Cursor launched Composer 2 on March 19, joining Anthropic’s Claude Opus 4.6 (released February 5) in what amounts to a three-way standoff at the frontier of agentic coding. For developers and engineering leaders choosing where to invest their tooling budget, the question is no longer “which model is best?” but “which model is best for the task I’m actually doing?” The answer depends heavily on what you’re building, how much you’re willing to spend, and whether you need a general-purpose model or a specialized one.

The three contenders at a glance

Each of these models comes from a different strategic position. Cursor’s Composer 2 is a code-only model purpose-built for agentic software engineering inside the Cursor IDE. Claude Opus 4.6 is Anthropic’s flagship general-purpose model that also happens to be very strong at coding and multi-agent orchestration. GPT-5.4 is OpenAI’s newest all-in-one frontier model, combining the coding prowess of GPT-5.3-Codex with native computer use and professional knowledge work capabilities.

Their release dates tell part of the story: Opus 4.6 arrived February 5, GPT-5.4 on March 5, and Composer 2 on March 19. That’s three major coding model releases in six weeks, an acceleration that has become the new normal.

Benchmark comparison: where each model wins

The most direct comparison comes from Terminal-Bench 2.0, a public agent evaluation benchmark maintained by the Laude Institute that tests how well AI agents handle real-world software engineering tasks in a terminal environment. This benchmark is the most transparent of the major coding evaluations because it uses a public framework with documented methodology.

| Benchmark | Composer 2 | Claude Opus 4.6 | GPT-5.4 |
|---|---|---|---|
| CursorBench | 61.3 | ~58.2 | ~63.9 |
| Terminal-Bench 2.0 | 61.7 | ~58.0* | 75.1 |
| SWE-bench Multilingual | 73.7 | Not reported | Not reported |
| SWE-bench Verified | Not reported | ~80.8% | ~80.0% |
| OSWorld-Verified | N/A | 72.7% | 75.0% |

*Measured on the Claude Code harness. Anthropic reports 65.4% on Terminal-Bench 2.0 using its own methodology.

The picture is nuanced. GPT-5.4 holds a commanding lead on Terminal-Bench 2.0 at 75.1%, roughly 13 points ahead of Composer 2 and 17 points ahead of Opus 4.6 (measured on the Claude Code harness). On CursorBench, which is Cursor’s own proprietary benchmark sourced from real Cursor sessions, GPT-5.4 also leads (~63.9), with Composer 2 close behind (61.3) and Opus 4.6 trailing (~58.2).

But there’s an important caveat about Terminal-Bench 2.0 scores: they measure model-plus-agent pairs, not raw models. Cursor used the official Harbor evaluation framework for its score, while GPT-5.4’s 75.1 corresponds to the Simple Codex harness on the official leaderboard. Different harnesses can produce meaningfully different results for the same underlying model. Anthropic, for instance, reports Opus 4.6 at 65.4% on Terminal-Bench 2.0 when using its own measurement methodology.
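Because Terminal-Bench 2.0 scores belong to model-plus-harness pairs, it helps to key them that way when comparing. The Python sketch below stores only the figures quoted in this article. The Opus 4.6 Claude Code figure is approximate. The sketch shows that the Composer 2 versus Opus 4.6 ordering flips depending on which Opus measurement you pick. `SCORES`, `setups_for`, and `gap` are illustrative names, not part of any benchmark tooling.

```python
from typing import Dict, List, Tuple

# Terminal-Bench 2.0 scores quoted in this article, keyed by (model, harness or methodology).
# Opus 4.6's Claude Code figure is approximate (~58.0).
SCORES: Dict[Tuple[str, str], float] = {
    ("GPT-5.4", "Simple Codex"): 75.1,
    ("Composer 2", "Harbor"): 61.7,
    ("Claude Opus 4.6", "Claude Code"): 58.0,
    ("Claude Opus 4.6", "Anthropic methodology"): 65.4,
}


def setups_for(model: str) -> List[str]:
    """List every harness or methodology a model has been scored under."""
    return sorted(setup for (m, setup) in SCORES if m == model)


def gap(a: Tuple[str, str], b: Tuple[str, str]) -> float:
    """Point difference between two (model, setup) entries; positive means `a` scored higher."""
    return round(SCORES[a] - SCORES[b], 1)


if __name__ == "__main__":
    composer = ("Composer 2", "Harbor")
    for setup in setups_for("Claude Opus 4.6"):
        diff = gap(composer, ("Claude Opus 4.6", setup))
        print(f"Composer 2 vs Opus 4.6 ({setup}): {diff:+.1f} points")
```

Composer 2 comes out 3.7 points ahead of one Opus 4.6 measurement and 3.7 points behind the other. That difference is the harness effect the caveat describes. A fair comparison holds the harness fixed.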

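The real story here is Composer 2 punching well above its weight. It edges out Opus 4.6 on CursorBench and on the Claude Code-harness Terminal-Bench 2.0 score while costing roughly 90% less per token. Anthropic’s self-reported 65.4% would, however, put Opus 4.6 ahead on Terminal-Bench. Composer 2 still trails GPT-5.4, by about 13 points on Terminal-Bench 2.0 and 2.6 points on CursorBench, but the gap narrows when you factor in cost-efficiency.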

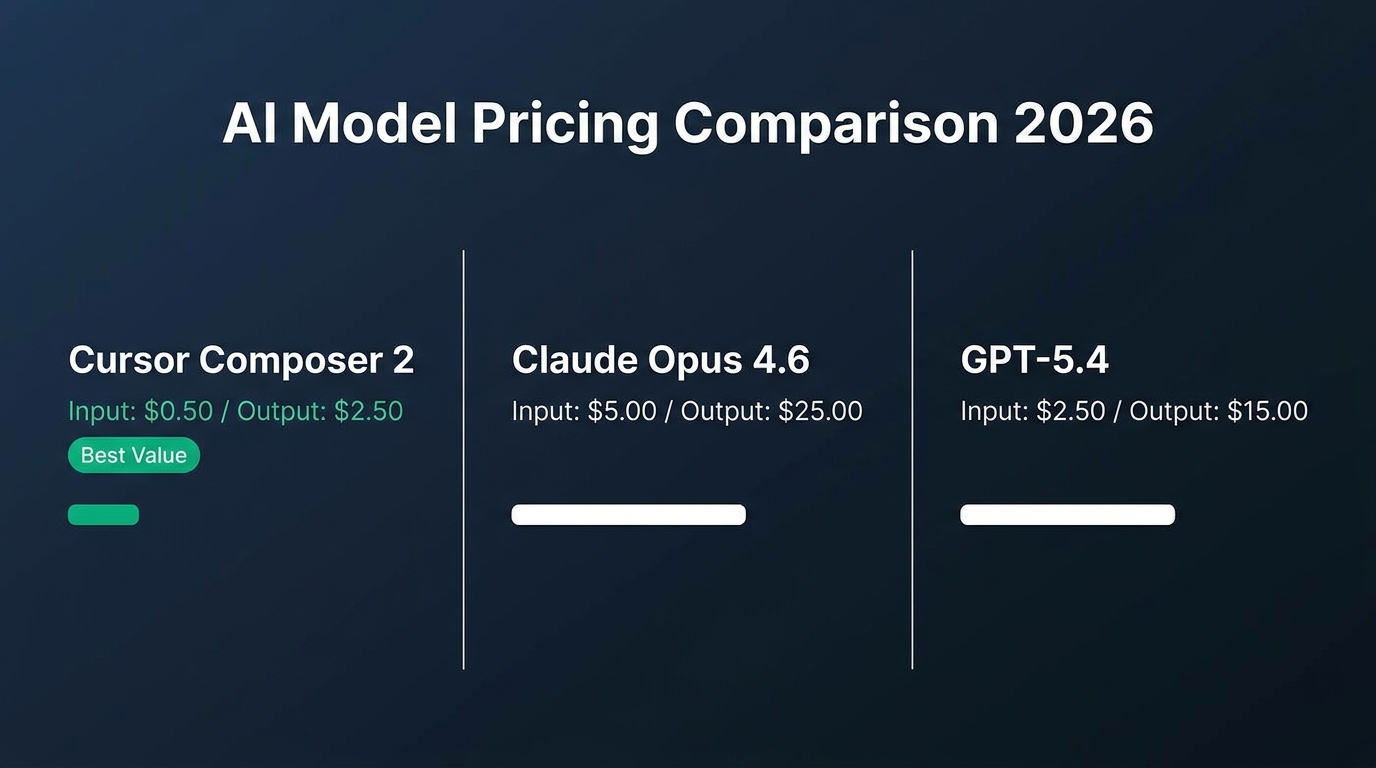

Pricing: the cost chasm

Where these models diverge most sharply is price. Composer 2 Standard comes in at $0.50 per million input tokens and $2.50 per million output tokens. That is an order of magnitude cheaper than Opus 4.6 ($5.00/$25.00) and roughly 80% cheaper than GPT-5.4 ($2.50/$15.00) on a per-token basis.

Cursor also offers a Fast variant at $1.50/$7.50 that delivers the same intelligence with lower latency. For teams running thousands of agentic requests daily, the cost difference compounds quickly. A team generating 50 million input tokens per month would pay $25 with Composer 2 Standard versus $125 with GPT-5.4 or $250 with Opus 4.6, for input alone.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context window |
|---|---|---|---|
| Composer 2 Standard | $0.50 | $2.50 | 200K |
| Composer 2 Fast | $1.50 | $7.50 | 200K |
| Claude Opus 4.6 | $5.00 | $25.00 | 200K (1M beta) |
| GPT-5.4 | $2.50 | $15.00 | 1.05M (experimental) |
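The 50-million-token example above can be reproduced with a minimal Python sketch that encodes the list prices from this table. It deliberately ignores caching, batching, and per-task token efficiency. `Pricing`, `PRICES`, and `list_price_cost` are illustrative names, not part of any vendor SDK.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Pricing:
    """List prices in USD per 1M tokens."""
    input_per_m: float
    output_per_m: float


# Prices copied from the pricing table (March 2026 list prices).
PRICES: Dict[str, Pricing] = {
    "Composer 2 Standard": Pricing(0.50, 2.50),
    "Composer 2 Fast": Pricing(1.50, 7.50),
    "Claude Opus 4.6": Pricing(5.00, 25.00),
    "GPT-5.4": Pricing(2.50, 15.00),
}


def list_price_cost(model: str, input_tokens: int, output_tokens: int = 0) -> float:
    """Cost in USD at list price; ignores caching, batching and per-task token efficiency."""
    p = PRICES[model]
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


if __name__ == "__main__":
    # The 50M-input-tokens-per-month example from the text (input only).
    for name in ("Composer 2 Standard", "GPT-5.4", "Claude Opus 4.6"):
        print(f"{name}: ${list_price_cost(name, 50_000_000):,.2f}")
```

Run as a script, it prints $25.00, $125.00 and $250.00 for the three models.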

However, per-token pricing does not tell the full story. GPT-5.4 is significantly more token-efficient than its predecessors, using fewer output tokens per task, which reduces effective cost. Opus 4.6 offers up to 90% cost savings with prompt caching and 50% savings with batch processing. The real cost calculus depends on your specific workload, how many tokens each model consumes per task, and which caching or batching optimizations you can leverage.
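To see how these effects interact, the following sketch estimates the cost of a single task with caching and batching. It rests on simplifying assumptions, each labeled in the code:

- Cached input tokens are billed at 10% of the base input price, which is our reading of the "up to 90%" caching figure.
- Cache-write surcharges are ignored.
- The 50% batch discount stacks multiplicatively on top of caching.

The token counts in the example are hypothetical, so substitute counts measured from your own workload.

```python
def effective_task_cost(
    input_per_m: float,
    output_per_m: float,
    input_tokens: int,
    output_tokens: int,
    cached_fraction: float = 0.0,
    cache_read_multiplier: float = 0.10,  # assumption: cached input billed at 10% of list price
    batch: bool = False,
    batch_multiplier: float = 0.50,  # assumption: batch requests billed at 50% of list price
) -> float:
    """Rough USD cost of one task; ignores cache-write surcharges and any minimums."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    cached = input_tokens * cached_fraction
    uncached = input_tokens - cached
    cost = (
        uncached * input_per_m
        + cached * input_per_m * cache_read_multiplier
        + output_tokens * output_per_m
    ) / 1_000_000
    # Assumption: the batch discount stacks multiplicatively with caching.
    return cost * batch_multiplier if batch else cost


if __name__ == "__main__":
    # Hypothetical task: 200K input tokens (80% cached), 10K output tokens, Opus 4.6 list prices.
    plain = effective_task_cost(5.00, 25.00, 200_000, 10_000)
    cached = effective_task_cost(5.00, 25.00, 200_000, 10_000, cached_fraction=0.8)
    both = effective_task_cost(5.00, 25.00, 200_000, 10_000, cached_fraction=0.8, batch=True)
    print(f"no discounts: ${plain:.3f}  cached: ${cached:.3f}  cached + batch: ${both:.3f}")
```

Comparing models fairly means feeding each one's own measured tokens per task into a function like this. Per-task token counts are where GPT-5.4's token efficiency would show up.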

What each model does best

Cursor Composer 2: the specialist

Composer 2 is a code-only model locked to the Cursor IDE. It cannot write emails, answer trivia, or handle non-code tasks. Its singular focus is its strength. The model is built on Moonshot AI’s Kimi K2.5 as a base, with roughly three-quarters of total compute coming from Cursor’s own continued pretraining and reinforcement learning pipeline, including a technique called “compaction-in-the-loop RL” or self-summarization.

Self-summarization works by training the model to compress its own context when the context window fills up, reducing roughly 100K tokens of history down to about 1,000 tokens while preserving critical architectural decisions, variable states, and error logs. Cursor reports this reduces compaction errors by 50% compared to traditional prompt-based summarization. In practice, it lets Composer 2 handle tasks requiring hundreds of sequential actions, like solving complex build problems that span dozens of files.
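Cursor trains the model itself to write the summary, and its actual pipeline is not public in this detail. The hypothetical Python sketch below shows only the surrounding control flow. The agent history is compacted into one summary entry once it exceeds a token budget, and the most recent step is kept verbatim. Token counting is a crude word count, and `keep_key_facts` is a toy rule-based stand-in for the learned summarizer that keeps lines tagged as decisions, state, or errors.

```python
from dataclasses import dataclass, field
from typing import Callable, List


def count_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def keep_key_facts(entries: List[str]) -> str:
    """Toy summarizer: keep tagged decisions, state and errors; drop everything else."""
    facts: List[str] = []
    for entry in entries:
        if entry.startswith("SUMMARY: "):
            # Carry facts from an earlier compaction forward instead of nesting summaries.
            facts.extend(f for f in entry[len("SUMMARY: "):].split(" | ") if f)
        elif entry.startswith(("DECISION:", "STATE:", "ERROR:")):
            facts.append(entry)
    return "SUMMARY: " + " | ".join(facts)


@dataclass
class CompactingContext:
    """Agent history that is compacted into one summary entry when it exceeds a token budget."""
    budget: int
    summarize: Callable[[List[str]], str]
    keep_recent: int = 1  # most recent entries kept verbatim after compaction
    entries: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.keep_recent < 1:
            raise ValueError("keep_recent must be at least 1")

    def total_tokens(self) -> int:
        return sum(count_tokens(e) for e in self.entries)

    def append(self, entry: str) -> None:
        self.entries.append(entry)
        if self.total_tokens() > self.budget and len(self.entries) > self.keep_recent:
            older, recent = self.entries[:-self.keep_recent], self.entries[-self.keep_recent:]
            # In Composer 2 the model writes this summary itself; here it is a pluggable callable.
            self.entries = [self.summarize(older)] + recent


if __name__ == "__main__":
    ctx = CompactingContext(budget=20, summarize=keep_key_facts)
    for step in [
        "DECISION: use a monorepo build",
        "ran npm install and printed a very long dependency tree listing",
        "ERROR: module not found in packages/api",
        "edited packages/api/tsconfig.json to add a path alias",
    ]:
        ctx.append(step)
    print(ctx.entries)
```

After the third step the budget is exceeded. The noisy `npm install` output is dropped, while the tagged decision survives inside the summary entry.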

The trade-off is obvious: Composer 2 only works inside Cursor. You cannot call it via a standalone API for custom agent pipelines or integrate it into other IDEs. If your workflow lives entirely in Cursor, that is fine. If you need a model accessible from your own infrastructure, Composer 2 is not an option.

Claude Opus 4.6: the orchestrator

Opus 4.6, released February 5, 2026, is Anthropic’s most capable model across coding, agents, and enterprise workflows. It features a standard 200K context window with a 1M token beta, hybrid reasoning that allows developers to toggle between instant responses and extended thinking, and Agent Teams for multi-agent orchestration where one model coordinates sub-agents working in parallel.
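The switch between instant responses and extended thinking is made per request. The hedged Python sketch below only builds a Messages API request body and makes no network call. It uses the `thinking` parameter with `type` and `budget_tokens`, which Anthropic has documented for extended thinking on Claude models. The model ID string is an assumption, and Anthropic may recommend a different thinking mode for Opus 4.6, so check the current API docs before relying on this shape.

```python
import json
from typing import Any, Dict, Optional

# Assumption: verify the exact Opus 4.6 model ID against Anthropic's current model list.
MODEL_ID = "claude-opus-4-6"


def build_request(prompt: str, max_tokens: int = 4096,
                  thinking_budget: Optional[int] = None) -> Dict[str, Any]:
    """Build a Messages API request body; pass thinking_budget to switch on extended thinking."""
    body: Dict[str, Any] = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    if thinking_budget is not None:
        # Documented constraint for extended thinking: at least 1,024 tokens and below max_tokens.
        if not 1024 <= thinking_budget < max_tokens:
            raise ValueError("thinking_budget must be >= 1024 and < max_tokens")
        body["thinking"] = {"type": "enabled", "budget_tokens": thinking_budget}
    return body


if __name__ == "__main__":
    quick = build_request("Rename `userId` to `accountId` in this file.")
    deep = build_request("Plan a migration from REST to gRPC for these services.",
                         max_tokens=16_000, thinking_budget=8_000)
    print(json.dumps(deep, indent=2))
```

With the official `anthropic` Python SDK installed, the same dictionary could be sent as `client.messages.create(**body)`. A quick file edit would use the first form, and a planning task would use the second.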

Where Opus 4.6 excels is architectural reasoning on large codebases. In one reported head-to-head test on very large React codebases, it maintained a 94% success rate in identifying cross-component state bugs. Anthropic’s benchmark page reports 65.4% on Terminal-Bench 2.0 and 72.7% on OSWorld for computer use. Customer testimonials from companies like Replit, Cognition, and Factory highlight its ability to break complex tasks into independent subtasks and run them in parallel.
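Agent Teams is Anthropic’s own feature, and its internals are not described here. The generic pattern it names can still be sketched with `asyncio`: an orchestrator splits work into independent subtasks and runs sub-agents in parallel. `run_team` and `fake_worker` are hypothetical names, and the worker is a stub that sleeps instead of calling a model.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

# A sub-agent takes one subtask description and returns its result.
SubAgent = Callable[[str], Awaitable[str]]


async def run_team(subtasks: List[str], worker: SubAgent) -> Dict[str, str]:
    """Run independent subtasks concurrently and map each subtask to its result.

    Assumes subtask descriptions are unique, since they are used as dictionary keys.
    """
    # gather() preserves input order, so results line up with subtasks.
    results = await asyncio.gather(*(worker(task) for task in subtasks))
    return dict(zip(subtasks, results))


async def fake_worker(task: str) -> str:
    """Stand-in for a sub-agent; a real one would call a model with its own context window."""
    await asyncio.sleep(0.01)  # simulate I/O-bound model latency
    return f"done: {task}"


if __name__ == "__main__":
    plan = ["audit the auth module", "update the API client", "migrate the test suite"]
    for task, result in asyncio.run(run_team(plan, fake_worker)).items():
        print(task, "->", result)
```

The pattern only pays off when subtasks really are independent. Work with cross-task dependencies needs the orchestrator to sequence steps rather than fan them all out at once.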

The downside is cost. At $5/$25 per million tokens, Opus 4.6 is the most expensive of the three models for routine coding work. It makes sense when you need the most capable general-purpose model for complex multi-step reasoning or multi-agent coordination, not for everyday file edits.

GPT-5.4: the all-rounder

GPT-5.4, released March 5, 2026, unifies OpenAI’s Codex coding capabilities with its general-purpose reasoning into a single model. It leads on the hardest coding benchmarks (75.1% on Terminal-Bench 2.0, 57.7% on SWE-bench Pro). It is also the first OpenAI model with native computer-use capabilities, scoring 75.0% on OSWorld-Verified, above the reported human baseline of 72.4%.

One of GPT-5.4’s most practical features is tool search, which allows the model to work efficiently with large tool ecosystems by looking up tool definitions on demand rather than loading everything into context. In testing with Scale’s MCP Atlas benchmark, this reduced total token usage by 47% while maintaining accuracy. The model also supports a 1.05M token context window, making it viable for very large codebase analysis.
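OpenAI implements tool search inside its own platform, and its API surface is not covered here. The hypothetical Python registry below only illustrates the underlying idea: keep a cheap index of tool names in context and pull in full definitions when a query needs them. `ToolRegistry`, `ToolDef`, and the keyword-overlap scoring are illustrative assumptions, not OpenAI’s implementation.

```python
import re
from dataclasses import dataclass, field
from typing import Dict, List, Set


def _words(text: str) -> Set[str]:
    """Lowercase alphanumeric words; underscores and punctuation act as separators."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass(frozen=True)
class ToolDef:
    name: str
    description: str
    schema: Dict = field(default_factory=dict)  # full JSON schema: the costly part to keep in context


class ToolRegistry:
    """Keeps only a name index in the prompt and returns full definitions on demand."""

    def __init__(self, tools: List[ToolDef]) -> None:
        self._tools: Dict[str, ToolDef] = {t.name: t for t in tools}

    def catalog(self) -> List[str]:
        """Cheap index to place in context instead of every full definition."""
        return sorted(self._tools)

    def search(self, query: str, limit: int = 3) -> List[ToolDef]:
        """Rank tools by naive keyword overlap (a production system might use embeddings)."""
        q = _words(query)
        scored = []
        for tool in self._tools.values():
            score = len(q & _words(f"{tool.name} {tool.description}"))
            if score:
                scored.append((-score, tool.name))  # negative score sorts best first
        scored.sort()
        return [self._tools[name] for _, name in scored[:limit]]


if __name__ == "__main__":
    registry = ToolRegistry([
        ToolDef("create_issue", "Create a GitHub issue in a repository"),
        ToolDef("send_email", "Send an email message"),
        ToolDef("query_db", "Run a SQL query against the analytics database"),
    ])
    print(registry.catalog())
    print([t.name for t in registry.search("open a GitHub issue for this bug")])
```

Only the names returned by `catalog()` would sit in the prompt. A tool's schema enters context only after `search` selects it, which is where the token savings in a large tool ecosystem would come from.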

The trade-off is that GPT-5.4 is expensive to run at scale. At $2.50/$15.00 per million tokens, it sits between Composer 2 and Opus 4.6 on price, but its greater token efficiency per task partially offsets the higher per-token cost. Still, for teams with heavy daily usage, GPT-5.4 can become a budget line item that warrants careful monitoring.

Which model should you choose in March 2026?

The honest answer for most teams is “use more than one.” Roughly 70% of developers now use two to four AI tools simultaneously, and the smartest approach in 2026 is a multi-model strategy that plays to each model’s strengths.

- Default to Composer 2 for everyday IDE work. If your coding happens inside Cursor, Composer 2 handles routine file edits, refactoring, and multi-file changes at a fraction of the cost. Its self-summarization makes it reliable for long-running agentic sessions. Use it as your workhorse and save more expensive models for harder problems.

- Reach for Opus 4.6 when you need architectural planning and multi-agent coordination. Its Agent Teams feature, long-context reasoning, and strength at navigating unfamiliar codebases make it the best choice for complex planning tasks, large-scale migrations, and debugging sessions that require deep cross-file analysis.

- Use GPT-5.4 for the hardest coding tasks and computer-use workflows. When you need top benchmark performance, native computer control, or agent pipelines that operate across dozens of tools, GPT-5.4 is the strongest single model available. Its tool search feature makes it particularly efficient for tool-heavy agentic workflows.
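Taken together, the three recommendations amount to a routing table. The minimal Python sketch below assumes you already classify each request into one of a few task types. The `Task` categories and the fallback used outside Cursor are hypothetical choices, not something the vendors prescribe.

```python
from enum import Enum, auto


class Task(Enum):
    ROUTINE_EDIT = auto()
    REFACTOR = auto()
    MULTI_FILE_CHANGE = auto()
    ARCHITECTURE_PLAN = auto()
    LARGE_MIGRATION = auto()
    CROSS_FILE_DEBUG = auto()
    HARDEST_CODING = auto()
    COMPUTER_USE = auto()
    TOOL_HEAVY_AGENT = auto()


# Default routing taken from the recommendations in this article.
ROUTES = {
    Task.ROUTINE_EDIT: "Composer 2",
    Task.REFACTOR: "Composer 2",
    Task.MULTI_FILE_CHANGE: "Composer 2",
    Task.ARCHITECTURE_PLAN: "Claude Opus 4.6",
    Task.LARGE_MIGRATION: "Claude Opus 4.6",
    Task.CROSS_FILE_DEBUG: "Claude Opus 4.6",
    Task.HARDEST_CODING: "GPT-5.4",
    Task.COMPUTER_USE: "GPT-5.4",
    Task.TOOL_HEAVY_AGENT: "GPT-5.4",
}

# Assumption: outside Cursor, fall back to the cheaper (per token) of the two API-accessible models.
NON_CURSOR_FALLBACK = "GPT-5.4"


def pick_model(task: Task, in_cursor: bool = True) -> str:
    """Return the model to use for a task; Composer 2 is only reachable inside Cursor."""
    model = ROUTES[task]
    if model == "Composer 2" and not in_cursor:
        return NON_CURSOR_FALLBACK
    return model
```

Keeping the routes in a table rather than scattered conditionals makes it a one-line change to re-point a task type when the next model release arrives.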

Key takeaways

- Composer 2 beats Opus 4.6 on CursorBench and on the Claude Code-harness Terminal-Bench 2.0 score at roughly 90% lower cost, making it the best value proposition for Cursor-native workflows as of March 2026.

- GPT-5.4 leads on the hardest coding benchmarks (75.1% Terminal-Bench 2.0) and posts the top OSWorld-Verified score (75.0%) as OpenAI’s first model with native computer use, but costs 5x more than Composer 2 per input token.

- Opus 4.6 excels at multi-agent orchestration and architectural reasoning, with Agent Teams and a 1M context beta, but is the most expensive model per token at $5/$25.

- The market is moving toward multi-model workflows. Roughly 70% of developers now use two to four AI tools, selecting models based on task complexity and cost.

- Benchmark transparency matters. CursorBench is proprietary and not independently reproducible, and Terminal-Bench scores vary significantly depending on which agent harness is used. Always validate benchmarks against your own workload.

The AI coding model space is evolving faster than ever. Cursor shipped three generations of Composer in five months. Anthropic and OpenAI are releasing major updates on a similar cadence. The model that tops benchmarks today will likely be challenged within weeks. Rather than betting on a single model, the practical move is to build workflows that can swap between them as new releases arrive.
