Nano Banana 2: How to get Pro quality at Flash speeds

On February 26, 2026, Google introduced Nano Banana 2, officially named Gemini 3.1 Flash Image. Google positions it for creators and product teams who need production-ready visuals up to 4K without the latency and cost profile of heavier “Pro” image models. Instead of choosing between fast drafts and slow, high-fidelity renders, Nano Banana 2 aims to make “fast” the default and “Pro” the exception. The two features that most change day-to-day workflows are (1) search grounding, including Google Image Search grounding unique to 3.1 Flash Image, and (2) multi-turn editing designed for iterative, chat-based refinement.

This article explains what Nano Banana 2 is, what actually changed versus Nano Banana Pro (Gemini 3 Pro Image), and how to start using it immediately in the Gemini app and via the Gemini API for high-throughput visual workflows.

What Nano Banana 2 is (and why it matters for 4K workflows)

Nano Banana is Google’s “native” Gemini image generation and editing capability. As of February 26, 2026, the Gemini API documentation distinguishes three relevant options:

- Nano Banana 2: gemini-3.1-flash-image-preview (Gemini 3.1 Flash Image Preview)

- Nano Banana Pro: gemini-3-pro-image-preview (Gemini 3 Pro Image Preview)

- Nano Banana: gemini-2.5-flash-image (Gemini 2.5 Flash Image)

The practical shift with Nano Banana 2 is that it targets the “creator’s middle”: fast enough to stay interactive while still supporting 4K output, precise instruction following, and consistent subjects suitable for production iteration. Google also frames it as bringing “Pro” capabilities to “Flash” speed and rolling it out broadly across products like Gemini and Search.

For teams shipping content daily (marketing, ecommerce, social, design systems), the bottleneck is rarely “can the model do it?” It’s “can we do it ten times before the meeting ends?” That’s why Nano Banana 2’s speed matters: it reduces the cost (time, compute, human attention) of iteration, which is where most visual work actually happens.

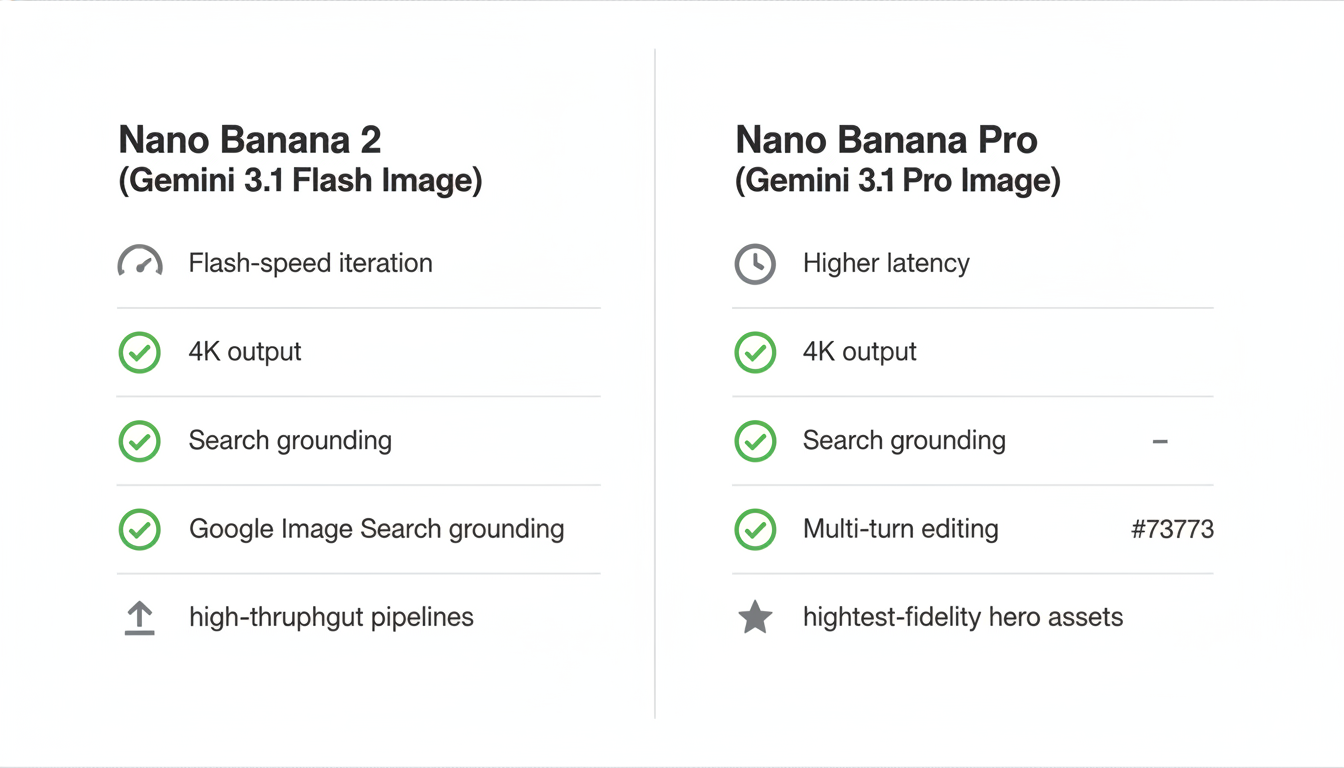

Nano Banana 2 vs Nano Banana Pro: what’s new, what’s different

Think of Nano Banana Pro as your “hero asset” model (maximum fidelity, heavier reasoning) and Nano Banana 2 as your “production line” model (high-volume, low-latency, still high quality). Both are “Gemini 3 image models” and both support multi-turn creation and edits, but Nano Banana 2 adds a key capability: Google Image Search grounding as a first-class tool inside grounded generation.

Feature comparison (creator and developer view)

| Capability | Nano Banana 2 (Gemini 3.1 Flash Image) | Nano Banana Pro (Gemini 3 Pro Image) |
|---|---|---|
| API model name | gemini-3.1-flash-image-preview | gemini-3-pro-image-preview |
| Release date (Preview) | Feb 26, 2026 | Referenced by Google as released Nov 2025 |
| Max output resolution | Up to 4K | Up to 4K |
| New ultra-fast iteration tier | 512px output option (lower latency) | No 512px tier mentioned |
| Grounding with Google Search (web) | Supported | Supported |
| Grounding with Google Image Search | Supported (3.1 Flash Image only) | Not listed as available the same way |
| Multi-turn editing (chat) | Supported | Supported |
| Reference images (total) | Up to 14 (with limits per character/object category) | Up to 14 (with different character/object limits) |
| Best fit | High-throughput, interactive design and editing pipelines | Maximum-fidelity assets and complex instruction-heavy “finals” |

One more practical difference: Google’s product rollout notes that Nano Banana 2 is becoming the default in the Gemini app’s modes, while Pro remains accessible for specialized tasks (for example, via regeneration options). That changes how most users experience image generation day to day: Nano Banana 2 becomes the “first click.”

How Nano Banana 2 gets “Pro quality” faster: search grounding + multi-turn editing

The speed story isn’t only about faster inference. It’s also about reducing rework. When the model has better context (grounding) and you can iterate more precisely (multi-turn editing), you spend fewer cycles correcting basics like “that’s the wrong bird,” “the sign text is misspelled,” or “the layout drifted.”

1) Search grounding that’s actually useful for visuals

Grounding with Google Search lets Gemini retrieve real-time information for time-sensitive or factual tasks (weather, stock charts, recent events) and then generate imagery based on that context. The Gemini API documentation explicitly describes this as using Google Search as a tool for verification and real-time data, and it returns groundingMetadata so you can trace sources and show search suggestion UI where required.

What’s new and especially relevant for creators: Nano Banana 2 adds Google Image Search grounding. Instead of only grounding on text snippets from the web, the model can use retrieved web images as visual context for generation. This is a big deal for “render this specific subject accurately” prompts, where textual descriptions alone often fail (rare species, niche products, architecture styles, obscure locations).
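As a rough illustration of web-grounded generation, here is a minimal sketch that assumes the google-genai Python SDK and its dict-style config. The helper names build_grounded_request and generate_grounded are illustrative, not part of the SDK. The sketch enables only the standard Google Search tool; Image Search grounding has its own configuration that is not shown here, so check the current API reference before relying on it.

```python
MODEL = "gemini-3.1-flash-image-preview"


def build_grounded_request(prompt: str, aspect_ratio: str = "16:9") -> dict:
    """Return keyword arguments for client.models.generate_content()."""
    return {
        "model": MODEL,
        "contents": prompt,
        "config": {
            "response_modalities": ["TEXT", "IMAGE"],
            "image_config": {"aspect_ratio": aspect_ratio},
            # Enables Grounding with Google Search (web results).
            "tools": [{"google_search": {}}],
        },
    }


def generate_grounded(prompt: str):
    """Call the API. Needs the google-genai package and an API key."""
    # Imported lazily so build_grounded_request() can be used offline.
    from google import genai

    client = genai.Client()
    return client.models.generate_content(**build_grounded_request(prompt))
```

Calling generate_grounded("Visualize this week's weather forecast for San Francisco as a clean infographic") would return a response whose parts can be saved the same way as any other image output. Keeping the request builder separate from the network call makes it easy to log or review exactly which tools were enabled for a given asset.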

2) Multi-turn editing: turning image generation into a real design loop

Multi-turn editing is where Nano Banana 2 becomes a workflow tool rather than a one-shot generator. In practice, you generate once, then keep a chat open to refine:

- Fix or localize text without changing layout

- Adjust lighting and color grading while preserving identity

- Swap props, backgrounds, or wardrobe while keeping the same subject

- Iterate aspect ratios for different placements (ads, stories, banners)

The Gemini API docs recommend chat for iteration and show how to keep editing in the same conversation. Under the hood, Gemini 3 image models use a “thinking” process for complex prompts, and the docs note that thought signatures are included and should be passed forward across turns (the official SDK handles this automatically if you use the chat feature).
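The generate-then-refine loop can be sketched as follows, assuming the google-genai SDK's chat interface (client.chats.create() and send_message()). The helpers run_edit_session and open_image_chat are hypothetical names for this article, and the prompts in the usage note are only examples.

```python
from typing import Iterable, Protocol


class ChatLike(Protocol):
    """Anything with send_message(), such as an SDK chat session."""

    def send_message(self, message: str): ...


def run_edit_session(chat: ChatLike, first_prompt: str, edits: Iterable[str]) -> list:
    """Generate once, then apply each edit as a follow-up turn in the same chat."""
    responses = [chat.send_message(first_prompt)]
    for instruction in edits:
        # Each turn builds on the conversation history kept by the chat object.
        responses.append(chat.send_message(instruction))
    return responses


def open_image_chat():
    """Create a real chat session. Needs google-genai and an API key."""
    from google import genai
    from google.genai import types

    client = genai.Client()
    return client.chats.create(
        model="gemini-3.1-flash-image-preview",
        config=types.GenerateContentConfig(response_modalities=["TEXT", "IMAGE"]),
    )
```

A typical call might be run_edit_session(open_image_chat(), "Create a poster for a coffee festival", ["Translate the headline to Spanish without changing the layout", "Make the lighting warmer"]). Because every turn goes through the same chat object, the SDK carries the conversation state forward, which is what the docs rely on for passing thought signatures across turns.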

How to access Nano Banana 2 right now (Gemini app, AI Studio, and API)

As of February 26, 2026, Google says Nano Banana 2 is rolling out across Google products including the Gemini app, Search, AI Studio + Gemini API, and Vertex AI. For creators, the easiest entry points are the Gemini app (no code) and Google AI Studio (interactive prototyping). For teams, the Gemini API is the path to automation and scale.

Option A: Gemini app (fastest way to try Nano Banana 2)

In the Gemini app, Nano Banana 2 is positioned as the default image model in “Fast” workflows as it rolls out. If you’re a subscriber and need Nano Banana Pro for a specialized task, Google notes you can regenerate images via menu options to access the Pro model when needed.

Option B: Google AI Studio (interactive prompts + developer features)

Google AI Studio exposes Nano Banana 2 as gemini-3.1-flash-image-preview and is ideal for quickly testing prompts, aspect ratios, and grounded generation before you wire anything into your app. Google’s developer announcement also states a paid API key is required to use the model in AI Studio.

Option C: Gemini API (production integration)

For programmatic image generation and editing, use the Gemini API with the model name gemini-3.1-flash-image-preview. The official examples show calling client.models.generate_content() for single-shot generation, or using client.chats.create() for multi-turn editing and iterative refinement.

```python
# Python (Gemini API): Nano Banana 2 (Gemini 3.1 Flash Image Preview)
# Requires: google-genai SDK and an API key configured in your environment.
from google import genai
from google.genai import types

client = genai.Client()

prompt = (
    "Create a 4K, studio-lit product photo of a matte-black reusable water bottle "
    "on a light gray seamless background. Add crisp, legible label text: "
    "\"NANO BANANA 2\" in a modern sans-serif. 16:9."
)

response = client.models.generate_content(
    model="gemini-3.1-flash-image-preview",
    contents=prompt,
    config=types.GenerateContentConfig(
        response_modalities=["TEXT", "IMAGE"],
        image_config=types.ImageConfig(
            aspect_ratio="16:9",
            image_size="4K",
        ),
    ),
)

# Save the image output
for part in response.parts:
    if part.inline_data is not None:
        img = part.as_image()
        img.save("nano_banana_2_product_4k.png")
```

Using search grounding safely (and compliantly) in real products

Search grounding is powerful, but it comes with product requirements that matter if you’re shipping a creator tool or an internal design system. The Gemini API documentation calls out that when using Image Search grounding, you must provide clear attribution by linking to the webpage that contains the source image (the “containing page”), and if you display source images you must provide a direct single-click path to that containing page.

In practical terms, plan for a UI pattern that always includes:

- A “Sources” module that lists the top retrieved pages used for grounding

- A clear, recognizable link treatment (not hidden behind icons)

- Optional thumbnails that click straight through to the source page

This is also where Nano Banana 2 becomes especially attractive for brand and marketing teams: you can build workflows that are faster and more auditable, with metadata attached to how an asset was produced.
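To make the "Sources" module concrete, here is a minimal sketch that pulls page titles and links out of a grounded response. It assumes the response exposes candidates[].grounding_metadata.grounding_chunks[].web with uri and title fields, as the SDK does for web grounding. How Image Search results appear in the metadata is not assumed here, and collect_sources is a hypothetical helper name.

```python
from types import SimpleNamespace


def collect_sources(response) -> list:
    """Return de-duplicated {"title", "uri"} entries from web grounding chunks."""
    sources, seen = [], set()
    for candidate in getattr(response, "candidates", None) or []:
        metadata = getattr(candidate, "grounding_metadata", None)
        for chunk in getattr(metadata, "grounding_chunks", None) or []:
            web = getattr(chunk, "web", None)
            uri = getattr(web, "uri", None)
            if uri and uri not in seen:
                seen.add(uri)
                title = getattr(web, "title", None) or uri
                sources.append({"title": title, "uri": uri})
    return sources


# Offline demo with a stand-in object shaped like a grounded response (no API call).
_page = SimpleNamespace(web=SimpleNamespace(uri="https://example.com/a", title="Page A"))
_untitled = SimpleNamespace(web=SimpleNamespace(uri="https://example.com/b", title=None))
_demo_response = SimpleNamespace(
    candidates=[
        SimpleNamespace(
            grounding_metadata=SimpleNamespace(grounding_chunks=[_page, _page, _untitled])
        )
    ]
)
demo_sources = collect_sources(_demo_response)
```

Each returned entry maps directly onto one visible link in the UI. Storing the list alongside the generated asset gives reviewers a record of which pages informed it.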

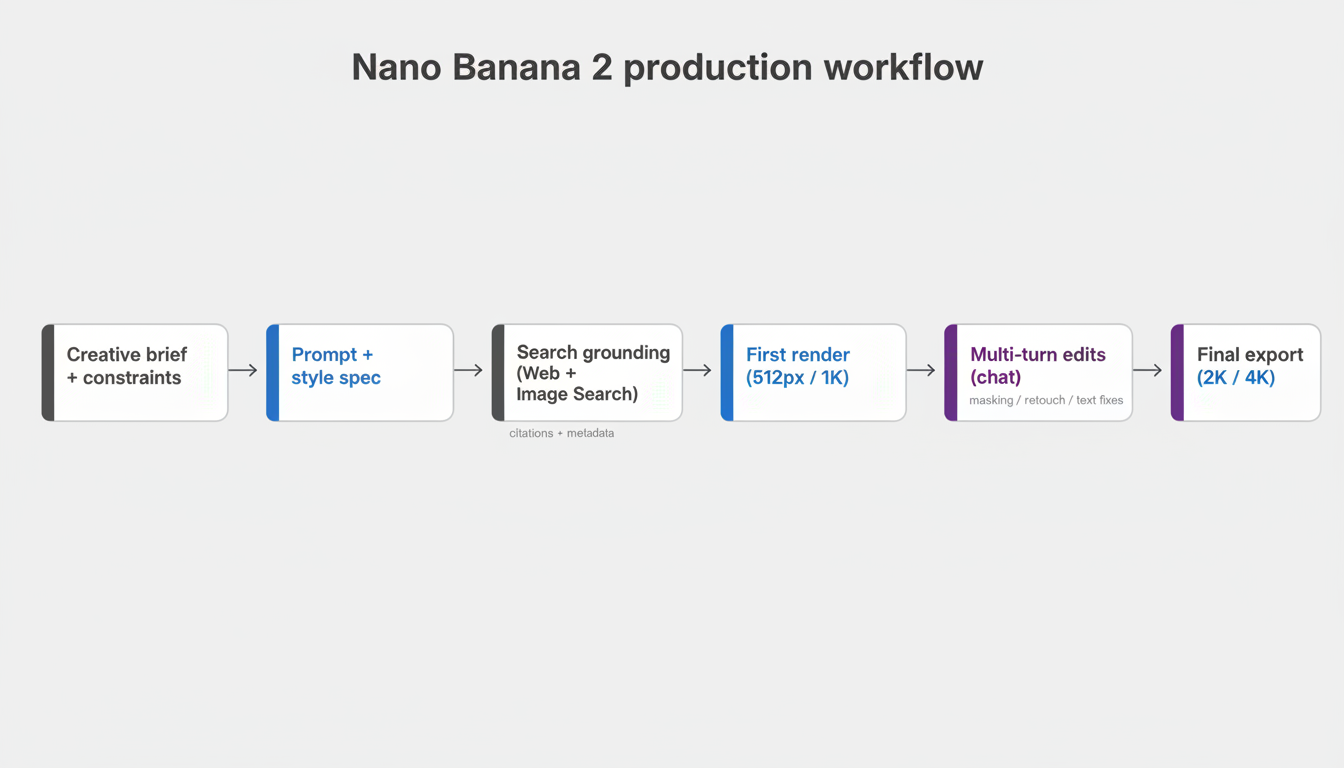

Speed tactics: how to get 4K deliverables without paying the “4K tax” every time

Even with a Flash model, 4K is more expensive than smaller outputs. Nano Banana 2 supports 512px, 1K, 2K, and 4K outputs, so the best production approach is to iterate cheaply at low resolution and render at high resolution only when the design is locked.

- Start at 512px or 1K for composition, subject placement, and rough typography.

- Use multi-turn edits to correct specifics (text, lighting, background, props) while preserving identity.

- Switch aspect ratios once the design is stable (e.g., 9:16 for stories, 16:9 for hero banners).

- Export at 2K or 4K only for final delivery or when you need crisp text/texture for print or high-resolution placements.
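The draft-then-final steps above can be sketched as a per-turn config switch inside one chat session. This assumes that the SDK's chat send_message() accepts a config argument and that a per-turn config replaces the chat-level one, which is why response_modalities is repeated. stage_config and send_at_stage are hypothetical helper names.

```python
# Sizes per stage. "4K" matches the size string used elsewhere in this article;
# "1K" is assumed to follow the same naming. The exact string for the 512px
# tier is not assumed here, so check the API reference before adding it.
STAGE_SIZES = {"draft": "1K", "final": "4K"}


def stage_config(stage: str, aspect_ratio: str = "16:9") -> dict:
    """Build a per-turn config dict for a draft or final render."""
    if stage not in STAGE_SIZES:
        raise ValueError(f"unknown stage: {stage!r}")
    return {
        "response_modalities": ["TEXT", "IMAGE"],
        "image_config": {
            "aspect_ratio": aspect_ratio,
            "image_size": STAGE_SIZES[stage],
        },
    }


def send_at_stage(chat, message: str, stage: str, aspect_ratio: str = "16:9"):
    """Send one turn in an existing chat at the resolution for this stage."""
    return chat.send_message(message, config=stage_config(stage, aspect_ratio))
```

In use, every exploratory edit would go through send_at_stage(chat, instruction, "draft"), and only the last turn, for example "Re-render the approved design at final quality", would use "final". Staying in the same chat matters because a fresh request at 4K would produce a new image rather than a higher-resolution version of the approved one.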

On the pricing side, Google’s Gemini API pricing page lists Nano Banana 2 image output as token-priced with explicit approximate per-image equivalents by resolution (including 0.5K/512px through 4K), which helps teams estimate budgets for batch generation and A/B creative testing.
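For budgeting, the arithmetic is simple enough to sketch. The function below is a hypothetical helper, and the prices in the example are placeholders in arbitrary units, not Google's published rates; substitute the per-image equivalents from the pricing page.

```python
def estimate_batch_cost(
    price_per_image: dict,
    concepts: int,
    drafts_per_concept: int,
    finals: int,
    draft_size: str = "1K",
    final_size: str = "4K",
) -> float:
    """Total cost = all draft renders at draft_size + final renders at final_size."""
    draft_cost = concepts * drafts_per_concept * price_per_image[draft_size]
    final_cost = finals * price_per_image[final_size]
    return draft_cost + final_cost


# Placeholder prices in arbitrary units (NOT real pricing).
placeholder_prices = {"1K": 1.0, "4K": 3.0}

# 20 concepts x 5 drafts each at "1K", then 4 winners exported at "4K".
staged_total = estimate_batch_cost(placeholder_prices, concepts=20, drafts_per_concept=5, finals=4)

# The same 100 drafts rendered at "4K" from the start, for comparison.
all_4k_total = 20 * 5 * placeholder_prices["4K"]
```

With these placeholder numbers, the staged approach totals 112.0 units against 300.0 units for rendering every draft at 4K. The real ratio depends on the published per-image prices, but the structure of the saving is the same: most renders happen before the design is locked.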

Conclusion: when to use Nano Banana 2 vs Nano Banana Pro

Nano Banana 2 (Gemini 3.1 Flash Image) is a meaningful shift because it treats “fast” as a production requirement, not a compromise. As of February 26, 2026, it brings three workflow upgrades that matter most to creators: 4K-ready output with Flash iteration, search grounding that can include Google Image Search, and multi-turn editing that turns image generation into a repeatable design loop.

If you’re building high-throughput visual pipelines, Nano Banana 2 should be your default: iterate at 512px/1K, ground when accuracy matters, then export at 2K/4K. Keep Nano Banana Pro in your toolkit for the cases where you need the absolute highest-fidelity hero assets or the most complex instruction-heavy compositions. The fastest way to start is to try Nano Banana 2 in the Gemini app or Google AI Studio today, then move your best prompts into the Gemini API for automated workflows.
