Engineering teams face a persistent bottleneck: routine work such as boilerplate coding, testing, and debugging can consume up to 70% of developers’ time and slow the software development lifecycle (SDLC). As of November 2025, OpenAI Codex, powered by the GPT-5.1-Codex-Max model (released November 19, 2025), targets this bottleneck by enabling AI-native engineering teams. This guide walks through building such a team step by step, from setup to full SDLC integration. The Codex CLI, IDE extensions, and cloud agents automate low-value work so engineers can focus on architecture and innovation. OpenAI reports that 95% of its own engineers use Codex weekly and that they ship about 70% more pull requests.

What is an AI-native engineering team?

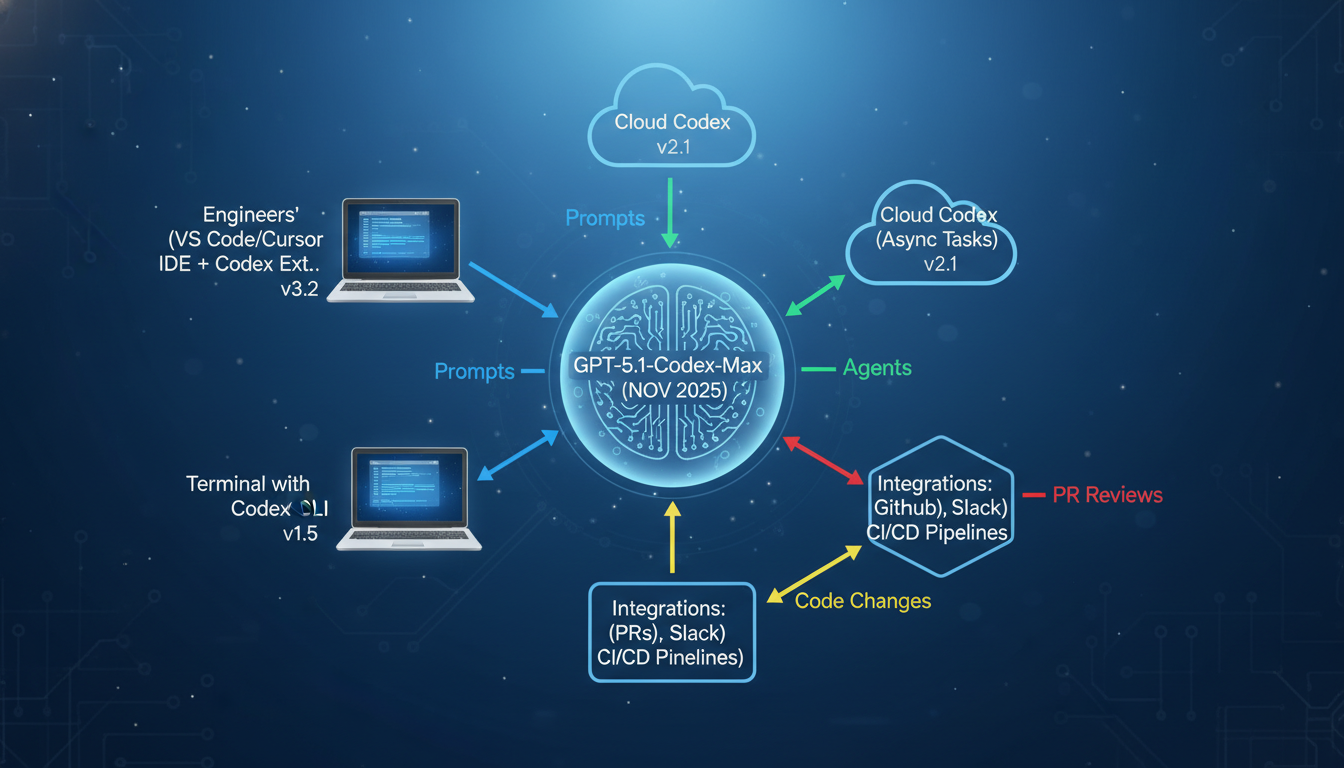

An AI-native engineering team treats AI coding agents like Codex as first-class collaborators rather than passive tools. Instead of humans grinding through prototypes or tests, agents handle them autonomously in sandboxes and propose changes via PRs for review. Codex, relaunched in May 2025 and upgraded with GPT-5-Codex (September 2025) and GPT-5.1-Codex-Max (November 2025), fits this model. It runs locally via the CLI (npm i -g @openai/codex@0.63.0, the latest release as of November 2025), in IDEs such as VS Code and Cursor, or in the cloud for asynchronous tasks. The key to trust is that agents operate in isolated environments and cite logs and test results as evidence for their changes.

Benefits include roughly 30% better token efficiency in GPT-5.1-Codex-Max compared with earlier Codex models, which makes long-running tasks such as project-wide refactors practical. According to the official docs, Codex is available on ChatGPT Plus, Pro, and Business plans, with API access through models such as gpt-5.1-codex-max.

| Aspect | Traditional Team | AI-Native with Codex |
|---|---|---|
| SDLC Speed | Weeks for prototypes | Minutes via agents |
| Test Coverage | Manual, inconsistent | Auto-generated, 90%+ pass rates |
| PR Reviews | Human bottlenecks | Codex + human, 70% faster |
| Model (Nov 2025) | N/A | GPT-5.1-Codex-Max |

Step 1: Setting up OpenAI Codex infrastructure

Start with the prerequisites: ChatGPT Plus ($20/mo) or higher, which includes Codex usage (roughly 5 to 50 hours per month depending on plan). Install the Codex CLI:

npm install -g @openai/codex@0.63.0 # Latest as of Nov 21, 2025

# Or: brew install --cask codex

Sign in: run codex, then choose “Sign in with ChatGPT”. For IDEs, install the Codex extension in VS Code or Cursor (v0.5.46, Nov 2025). Configure ~/.codex/config.toml:

model = "gpt-5.1-codex-max" # Frontier model, Nov 19, 2025 release

approval_policy = "on-request" # Routine sandboxed commands run unprompted; the agent asks before escalating

Test: run codex in a repo and prompt “Refactor this function for efficiency.” Review the diffs and logs before applying.

Step 2: Automating SDLC planning and prototyping

In planning, Codex can generate user stories or Jira tickets from requirements. Prompt: “From this spec, create epics/stories in Markdown.” It reads the repository’s AGENTS.md for context (e.g., “Run tests with npm test”).

- Connect GitHub: Authorize in Codex web/CLI.

- Prompt Codex: “Plan Q4 features for our e-commerce app.”

- Review/iterate: Agent proposes branches/PRs.
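The planning prompt can be assembled programmatically so that every request carries the same repository conventions. The following is a minimal sketch, not part of Codex itself: `PlanningInput` and `buildPlanningPrompt` are hypothetical names, and the sample spec is invented for illustration.

```typescript
// Hypothetical helper: none of these names come from Codex itself.
interface PlanningInput {
  spec: string;          // raw requirements text
  repoNotes?: string[];  // conventions, e.g. lines copied from AGENTS.md
}

function buildPlanningPrompt(input: PlanningInput): string {
  const notes = (input.repoNotes ?? []).map((n) => `- ${n}`).join("\n");
  const sections = [
    "From this spec, create epics/stories in Markdown.",
    notes ? `Repository conventions:\n${notes}` : "",
    `Spec:\n${input.spec.trim()}`,
  ];
  // Drop empty sections so the prompt has no blank placeholders.
  return sections.filter((s) => s.length > 0).join("\n\n");
}

// Invented sample input, for illustration only.
const planningPrompt = buildPlanningPrompt({
  spec: "Users can toggle dark mode from the settings page.",
  repoNotes: ["Run tests with npm test"],
});
console.log(planningPrompt);
```

The resulting string can be pasted into the Codex CLI or web interface. Keeping the template in code makes it easy to review and version alongside AGENTS.md.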

Prototyping: Delegate a task such as “Prototype dark mode toggle using React 18.3.” The Codex CLI scaffolds the code, runs the prototype in a sandbox, and cites test results. Humans merge via GitHub PRs, where Codex can also auto-review.

Example prototyping workflow

# In repo root

codex "Build a REST API endpoint for user auth with JWT"

# Codex: scaffolds /api/auth.js, runs tests, proposes a PR

Step 3: AI-driven testing and deployment

Codex writes and runs unit and integration tests from prompts such as “Add tests for this function, aim for 90% coverage.” It uses pytest or Jest (detected via AGENTS.md) and fixes failures iteratively. For deployment, integrate the SDK in CI/CD:

import { Codex } from "@openai/codex-sdk"; // Latest SDK

const codex = new Codex();

const thread = codex.startThread(); // A thread holds the conversation state

const turn = await thread.run("Review this PR and deploy if tests pass");

console.log(turn.finalResponse); // The agent's final message

GitHub: Tag @codex on PRs or issues for auto-reviews (since Oct 2025). Slack: Assign work with “@codex fix prod bug #123” (GA Oct 2025).
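One way to keep the “deploy if tests pass” decision auditable is to let the agent produce the review while ordinary pipeline code makes the deploy decision. The sketch below assumes that split. `AgentRunner`, `TestReport`, `shouldDeploy`, and `reviewAndGate` are hypothetical names, and the runner is a stub rather than a real SDK call.

```typescript
// Hypothetical stand-in for whatever client actually calls the agent.
type AgentRunner = (prompt: string) => Promise<string>;

interface TestReport {
  passed: number;
  failed: number;
}

interface GateResult {
  review: string;
  deploy: boolean;
}

// Deploy only when at least one test ran and none failed.
function shouldDeploy(tests: TestReport): boolean {
  return tests.failed === 0 && tests.passed > 0;
}

async function reviewAndGate(run: AgentRunner, tests: TestReport): Promise<GateResult> {
  // The agent only produces review text; it never decides the deploy.
  const review = await run("Review this PR and summarize any risks");
  return { review, deploy: shouldDeploy(tests) };
}

// Usage with a stub runner, so the sketch runs without network access.
const stubRunner: AgentRunner = async (prompt) => `stub review for: ${prompt}`;

reviewAndGate(stubRunner, { passed: 42, failed: 0 }).then((result) => {
  console.log(result.deploy ? "deploy" : "hold", "|", result.review);
});
```

In a real pipeline the stub would be replaced by a function that forwards the prompt to Codex, and the test counts would come from the CI test step.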

| SDLC Stage | Codex Prompt Example | Output |
|---|---|---|
| Planning | “Generate stories from RFP” | Markdown epics/PRs |
| Prototyping | “Prototype feature X” | Code diffs/tests |
| Testing | “Write tests + fix fails” | 90% coverage PR |
| Deployment | “Automate CI/CD” | Pipeline YAML |

Step 4: Team processes and scaling

Train the team with weekly Codex workshops. Define guidelines in AGENTS.md, for example “Always run npm test; prefer TypeScript.” Use Execpolicy to define which commands are safe to run (docs Nov 2025). Monitor usage via ChatGPT analytics (Enterprise).
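The idea behind a safe-command policy can be illustrated with a small allowlist check. This is only a sketch of the concept and does not reproduce the actual Execpolicy rule syntax. `SAFE_PREFIXES` and `isPreapproved` are hypothetical names, and the listed commands are examples, not recommendations.

```typescript
// Illustrative only: this is NOT the Execpolicy rule syntax, just the idea behind it.
const SAFE_PREFIXES: string[][] = [
  ["npm", "test"],
  ["git", "status"],
  ["git", "diff"],
];

function isPreapproved(argv: string[]): boolean {
  // A command is pre-approved when its leading tokens match a safe prefix exactly.
  return SAFE_PREFIXES.some(
    (prefix) =>
      prefix.length <= argv.length &&
      prefix.every((token, i) => argv[i] === token),
  );
}

console.log(isPreapproved(["npm", "test", "--", "--watch=false"])); // true
console.log(isPreapproved(["npm", "publish"]));                      // false
console.log(isPreapproved(["git"]));                                 // false
```

Matching on tokenized arguments rather than raw shell strings avoids accidentally approving a command that merely contains a safe substring.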

- Pair programming: Codex CLI for real-time.

- Async: Cloud for overnight refactors.

- Reviews: Codex + juniors (70% faster).

- Scale: SDK in GitHub Actions.

Real-world examples: Cisco and Temporal, both early testers in May 2025, use Codex for feature work and debugging. At OpenAI, 95% of engineers use Codex weekly and ship 70% more PRs.

“Codex accelerates feature development and keeps engineers in flow.” – Temporal, early tester

OpenAI Codex announcement, May 2025

Best practices and pitfalls

Always review agent code: Codex cites evidence but can err on edge cases. Start small with prototypes and tests. Customize prompts and the execpolicy. The latest changelog (Nov 24, 2025) lists improvements to credits and usage. Pitfalls: over-reliance (keep humans in charge of architecture) and sandbox escapes (network access is off by default, which limits the risk).

Measure: Track PR velocity and bug rates before and after adopting Codex. Benchmarks: GPT-5.1-Codex-Max scores 77.9% on SWE-bench Verified (xhigh reasoning effort).
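A before/after comparison comes down to normalizing counts per week and computing a percentage change. The sketch below shows that arithmetic. `PeriodStats`, `perWeek`, and `percentChange` are hypothetical names, and the sample numbers are made up for illustration, not measured results.

```typescript
interface PeriodStats {
  weeks: number;
  mergedPrs: number;
  bugsReported: number;
}

function perWeek(count: number, weeks: number): number {
  if (weeks <= 0) throw new RangeError("weeks must be positive");
  return count / weeks;
}

// Percentage change from `before` to `after`; positive means an increase.
function percentChange(before: number, after: number): number {
  if (before === 0) throw new RangeError("baseline must be non-zero");
  return ((after - before) / before) * 100;
}

// Made-up sample data, for illustration only.
const baseline: PeriodStats = { weeks: 4, mergedPrs: 40, bugsReported: 12 };
const pilot: PeriodStats = { weeks: 4, mergedPrs: 50, bugsReported: 9 };

const prDelta = percentChange(
  perWeek(baseline.mergedPrs, baseline.weeks),
  perWeek(pilot.mergedPrs, pilot.weeks),
);
const bugDelta = percentChange(
  perWeek(baseline.bugsReported, baseline.weeks),
  perWeek(pilot.bugsReported, pilot.weeks),
);

console.log(`PRs per week: ${prDelta.toFixed(1)}%`);   // 25.0%
console.log(`Bugs per week: ${bugDelta.toFixed(1)}%`); // -25.0%
```

Normalizing per week keeps the comparison fair when the baseline and pilot periods differ in length.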

Conclusion

Building an AI-native team with OpenAI Codex transforms the SDLC: agents automate routine tasks, and OpenAI reports roughly 70% more PRs shipped. Key takeaways: install the CLI (v0.63.0) and the IDE extension, default to GPT-5.1-Codex-Max, integrate GitHub and Slack, and always review agent output. Next steps: run “codex” in a repo today, pilot it on one sprint, then scale with the SDK. As Codex evolves (compaction for million-token tasks), expect even greater gains and position your team at the forefront of 2025 engineering.