Struggling with high latency and privacy concerns from cloud-based AI agents for web automation? Microsoft’s Fara-7B, released on November 24, 2025, offers a compelling alternative. This 7-billion-parameter agentic small language model (SLM) runs entirely on-device. It reads screenshots and executes browser actions such as clicks, typing, and scrolling. As of November 2025, Fara-7B achieves state-of-the-art results for its size class on WebVoyager (73.5% task success) and the new WebTailBench (38.4%), rivaling larger models such as GPT-4o while keeping data local. This guide walks through setup, usage, and secure web-task automation with Fara-7B, with an emphasis on how its visual perception works in practice.

What is Fara-7B?

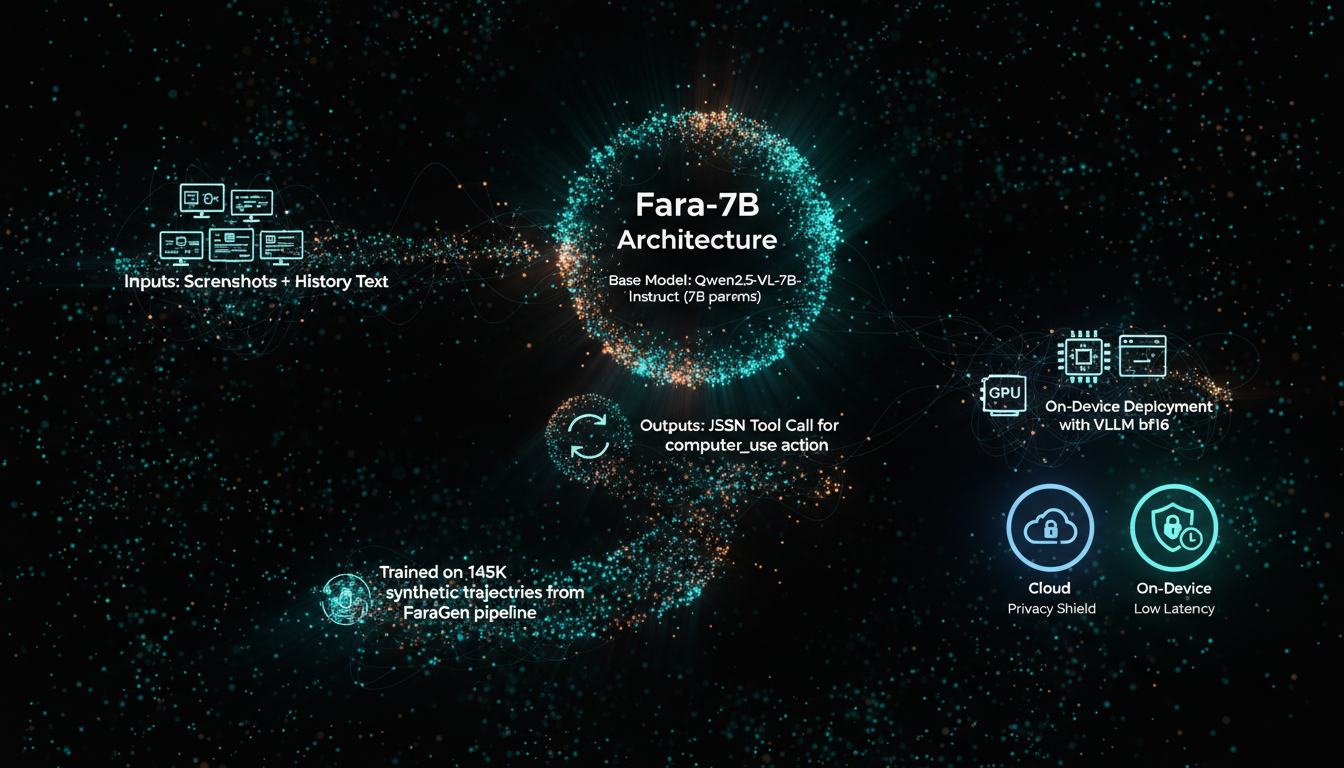

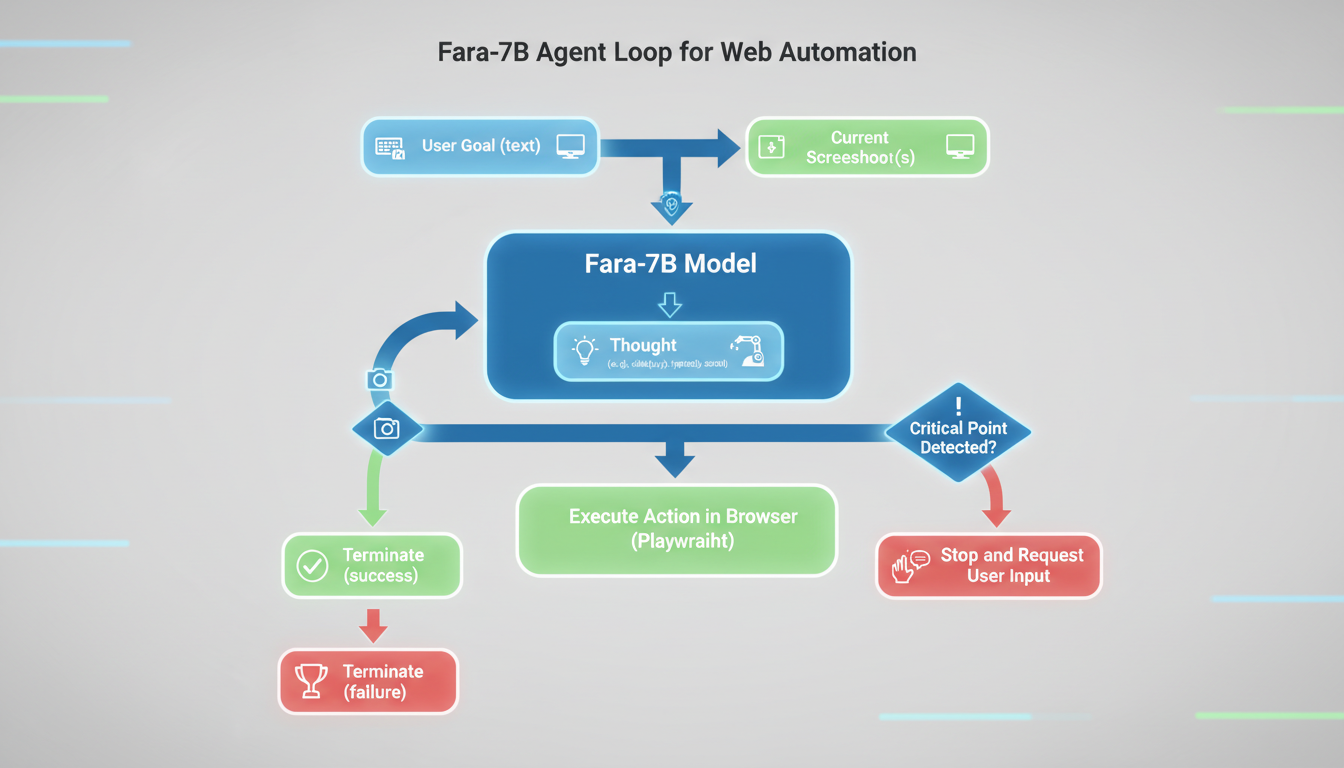

Fara-7B is Microsoft’s first native computer-use agent (CUA), fine-tuned from Qwen2.5-VL-7B-Instruct for multimodal web interaction. At each step it takes the user's goal, the current screenshot, and the action history as input. It then outputs chain-of-thought reasoning followed by a grounded action expressed as a JSON tool call. Set-of-marks (SoM) agents rely on accessibility trees. Fara-7B instead maps pixels directly to actions, browsing much as a person would without extra page parsing.
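To make the input side concrete, here is a minimal sketch of assembling one step's request. It assumes the model sits behind an OpenAI-compatible chat endpoint, as vLLM provides, that accepts images as base64 data URLs. The function name `build_messages` and the way history is flattened into text are hypothetical and are not Fara-7B's official prompt layout. The real system prompt should come from the model card.

```python
import base64
from typing import Any


def build_messages(
    system_prompt: str,
    goal: str,
    history: list[str],
    screenshot_png: bytes,
) -> list[dict[str, Any]]:
    """Assemble one step's input: system prompt, goal, prior actions, screenshot.

    Hypothetical layout: prior actions are flattened into plain text and the
    screenshot is attached as a base64 PNG data URL, the image format accepted
    by OpenAI-compatible chat APIs such as vLLM's.
    """
    history_text = "\n".join(
        f"Step {i}: {action}" for i, action in enumerate(history, start=1)
    ) or "(no previous actions)"
    image_b64 = base64.b64encode(screenshot_png).decode("ascii")
    return [
        {"role": "system", "content": system_prompt},
        {
            "role": "user",
            "content": [
                {"type": "text", "text": f"Goal: {goal}\nPrevious actions:\n{history_text}"},
                {"type": "image_url", "image_url": {"url": f"data:image/png;base64,{image_b64}"}},
            ],
        },
    ]
```

Call this once per round with a fresh screenshot. The growing history is what lets the model avoid repeating actions it has already taken.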

Key specs at release: 7B parameters, a 128k-token context length, and an MIT license. The model is available on Hugging Face (microsoft/Fara-7B) and Azure AI Foundry. It was trained on 145k synthetic trajectories generated by the FaraGen pipeline, spanning shopping, bookings, and information-seeking tasks. It is built for on-device deployment via vLLM (bf16 precision) and averages about 16 steps per task, roughly half as many as peers such as UI-TARS-1.5-7B.
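A quick way to size hardware is to multiply parameter count by bytes per parameter. The sketch below does only that. It ignores the KV cache, activations, and framework overhead that vLLM also needs, so treat the result as a lower bound. The dtype table and function name are illustrative assumptions.

```python
# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str = "bf16") -> float:
    """Approximate memory for model weights alone, in GB (1e9 bytes).

    Excludes KV cache, activations, and runtime overhead, so real GPU
    usage under vLLM will be higher than this number.
    """
    return num_params * BYTES_PER_PARAM[dtype] / 1e9
```

For example, `weight_memory_gb(7e9)` gives 14.0 GB in bf16. A nominal "7B" label can understate a checkpoint's true size, though: `weight_memory_gb(8.3e9)` gives 16.6, which matches the download size listed in the setup steps.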

Safety features include refusing harmful tasks (94% on AgentHarm-Chat) and halting at critical points, such as payments or logins, so that a human can decide whether to proceed.
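The model's own halting behavior can be backed up by a check in your own agent loop. The following is a hypothetical client-side sketch, not part of Fara-7B or its repo. It flags typing on, or navigating to, URLs that look like checkout or login pages. The marker list, the function names, and the `arguments`/`action`/`url` field layout are assumptions modeled on the JSON action format shown later in this guide.

```python
from urllib.parse import urlparse

# Hypothetical markers of a critical point; adjust for the sites you automate.
CRITICAL_MARKERS = ("checkout", "payment", "login", "signin", "password")


def looks_critical(url: str) -> bool:
    """True if the URL's host or path contains a critical-point marker."""
    parsed = urlparse(url)
    text = (parsed.netloc + parsed.path).lower()
    return any(marker in text for marker in CRITICAL_MARKERS)


def needs_human_approval(action: dict, current_url: str) -> bool:
    """Client-side backstop: pause before typing on, or navigating to, a critical page."""
    args = action.get("arguments", {})
    kind = args.get("action")
    if kind == "type" and looks_critical(current_url):
        return True
    if kind == "visit_url" and looks_critical(args.get("url", "")):
        return True
    return False
```

In a loop, call `needs_human_approval(action, page.url)` before executing each action and wait for explicit confirmation when it returns True. Simple keyword matching will miss some flows, so it complements the model's refusals rather than replacing them.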

Installing Fara-7B locally

Setup requires Python 3.10+, Git, and an NVIDIA GPU (an A6000 or better is recommended for hosting with vLLM). These steps were tested on Ubuntu 24.04.

- Clone the repo:

  ```bash
  git clone https://github.com/microsoft/fara
  ```

- Install dependencies:

  ```bash
  cd fara && pip install -e . && playwright install
  ```

- Download the model (16.6 GB). Replace YOUR_HF_TOKEN with your Hugging Face access token (you can log in with `huggingface-cli login`):

  ```bash
  python scripts/download_model.py --output-dir ./model_checkpoints --token YOUR_HF_TOKEN
  ```

- Host the vLLM server (it listens on port 5000):

  ```bash
  cd src/fara/vllm && pip install -r requirements.txt
  python az_vllm.py --model_url /path/to/model_checkpoints/fara-7b --device_id 0,1
  ```

Required versions: torch>=2.7.1, transformers>=4.53.3, and vllm>=0.10.0.
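Before launching the server, it can help to confirm these minimums in the active environment. The sketch below uses only the standard library (`importlib.metadata`). Its version comparison is deliberately simplified: it keeps the leading numeric release segment, so local tags like `+cu126` are ignored, and pre-releases such as `0.10.0rc1` count as `0.10.0`. For full PEP 440 semantics, use `packaging.version` instead. The function names are illustrative.

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Minimum versions from the setup instructions.
REQUIREMENTS = {"torch": "2.7.1", "transformers": "4.53.3", "vllm": "0.10.0"}


def release_tuple(v: str) -> tuple[int, ...]:
    """Leading numeric release segment, e.g. '2.7.1+cu126' -> (2, 7, 1)."""
    match = re.match(r"\d+(?:\.\d+)*", v)
    return tuple(int(p) for p in match.group(0).split(".")) if match else ()


def meets_minimum(installed: str, minimum: str) -> bool:
    a, b = release_tuple(installed), release_tuple(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so "2.8" compares correctly against "2.7.1".
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def check_requirements(reqs: dict[str, str] = REQUIREMENTS) -> dict[str, str]:
    """Map each package to 'missing' or '<installed> (ok | needs >= X)'."""
    report = {}
    for pkg, minimum in reqs.items():
        try:
            installed = version(pkg)
        except PackageNotFoundError:
            report[pkg] = "missing"
            continue
        status = "ok" if meets_minimum(installed, minimum) else f"needs >= {minimum}"
        report[pkg] = f"{installed} ({status})"
    return report


if __name__ == "__main__":
    for pkg, status in check_requirements().items():
        print(f"{pkg}: {status}")
```

Comparing integer tuples rather than raw strings avoids the classic trap where "4.9.0" sorts after "4.53.3".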

Copilot+ PCs can run a quantized version through the AI Toolkit for VS Code. Without a GPU, Azure AI Foundry offers hosted deployment: deploy the model from https://ai.azure.com/explore/models/Fara-7B, then copy the endpoint URL and API key.
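Whichever host you choose, the client side looks much the same. This stdlib-only sketch builds a POST to an OpenAI-compatible `/chat/completions` route. It assumes the local `az_vllm.py` server exposes vLLM's standard OpenAI-compatible API under something like `http://localhost:5000/v1`, which this guide does not confirm. It also assumes bearer-token auth for the Azure key; check your deployment's documentation for the exact header. The `model` value depends on how the server was launched, and `temperature=0.0` is an illustrative choice.

```python
import json
import urllib.request
from typing import Any, Optional


def make_chat_request(
    base_url: str,
    model: str,
    messages: list[dict[str, Any]],
    api_key: Optional[str] = None,
    max_tokens: int = 512,
) -> urllib.request.Request:
    """Build a POST to an OpenAI-compatible /chat/completions route."""
    body = {"model": model, "messages": messages, "max_tokens": max_tokens, "temperature": 0.0}
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Assumes bearer-token auth; some Azure endpoints use a different header.
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Building the request separately from sending it keeps the payload easy to inspect and test without a running server.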

Running your first web automation task

Test the agent with a Playwright-controlled browser. If you call the model directly, use the system prompt from the model card in ChatML format.

```bash
python test_fara_agent.py --task "Search Wikipedia page count" \
  --start_page "https://bing.com" \
  --endpoint_config endpoint_configs/vllm_config.json \
  --headful --max_rounds 100
```

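Each round, the model emits a thought followed by an action, for example `{"name":"computer_use","arguments":{"action":"web_search","query":"wikipedia pages"}}`. The agent executes the action and captures a new screenshot. This loop repeats until the model calls `terminate` with a success status.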
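If you drive the model yourself, each response must be split into its thought and its action. Here is a minimal sketch of that parsing step. It assumes the action JSON is either wrapped in `<tool_call>...</tool_call>` tags, as in Qwen2.5's chat template, or appears as a bare JSON object after the thought. Both formats are assumptions, so check real outputs from your deployment. `parse_step` is a hypothetical name.

```python
import json
import re
from typing import Optional

_TOOL_CALL = re.compile(r"<tool_call>(.*?)</tool_call>", re.DOTALL)


def parse_step(output: str) -> tuple[str, Optional[dict]]:
    """Split one model response into (thought, action); action is None if absent."""
    match = _TOOL_CALL.search(output)
    if match:
        return output[: match.start()].strip(), json.loads(match.group(1).strip())
    # Fallback: take the first JSON object in the text that has a "name" key.
    decoder = json.JSONDecoder()
    for start, ch in enumerate(output):
        if ch != "{":
            continue
        try:
            action, _ = decoder.raw_decode(output, start)
        except json.JSONDecodeError:
            continue
        if isinstance(action, dict) and "name" in action:
            return output[:start].strip(), action
    return output.strip(), None
```

`json.JSONDecoder.raw_decode` handles the nested `arguments` object correctly, which a naive regex on braces would not. Returning `None` lets the caller stop cleanly instead of guessing.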

The action space includes key(), type(), mouse_move(x, y), left_click(x, y), scroll(pixels), visit_url(), web_search(), and others. The project also integrates with the Magentic-UI Docker sandbox for safe testing.
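To show how these actions could map onto a real browser, here is a hypothetical dispatcher for Playwright's synchronous Python API. It uses `page.mouse.click`, `page.mouse.move`, `page.mouse.wheel`, `page.keyboard.type`, `page.keyboard.press`, and `page.goto`. Several details are assumptions rather than the repo's implementation:

- The argument names (`coordinate`, `text`, `keys`, `pixels`, `url`, `query`) follow the Qwen2.5-VL computer_use tool convention.
- Positive `pixels` is taken to mean scroll up. Playwright's wheel treats positive `delta_y` as down, so the sign is flipped.
- `web_search` is sent to Bing.

```python
from typing import Any, Optional
from urllib.parse import quote_plus


def plan_action(action: dict) -> Optional[tuple[str, tuple]]:
    """Translate a computer_use action into (Playwright method path, args).

    Returns None for terminate. Argument names are assumptions.
    """
    args = action["arguments"]
    kind = args["action"]
    if kind == "left_click":
        return "mouse.click", tuple(args["coordinate"])
    if kind == "mouse_move":
        return "mouse.move", tuple(args["coordinate"])
    if kind == "type":
        return "keyboard.type", (args["text"],)
    if kind == "key":
        # Playwright expects combos such as "Control+a".
        return "keyboard.press", ("+".join(args["keys"]),)
    if kind == "scroll":
        # Assumes positive pixels = scroll up; Playwright's positive delta_y scrolls down.
        return "mouse.wheel", (0, -args["pixels"])
    if kind == "visit_url":
        return "goto", (args["url"],)
    if kind == "web_search":
        # Hypothetical choice of search engine.
        return "goto", ("https://www.bing.com/search?q=" + quote_plus(args["query"]),)
    if kind == "terminate":
        return None
    raise ValueError(f"unsupported action: {kind}")


def execute_action(page: Any, action: dict) -> bool:
    """Run one action on a Playwright sync-API Page; return False on terminate."""
    plan = plan_action(action)
    if plan is None:
        return False
    method_path, call_args = plan
    target = page
    for attr in method_path.split("."):
        target = getattr(target, attr)  # e.g. page -> page.mouse -> page.mouse.click
    target(*call_args)
    return True
```

Separating planning from execution keeps the action mapping testable without launching a browser.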

Performance and benchmarks

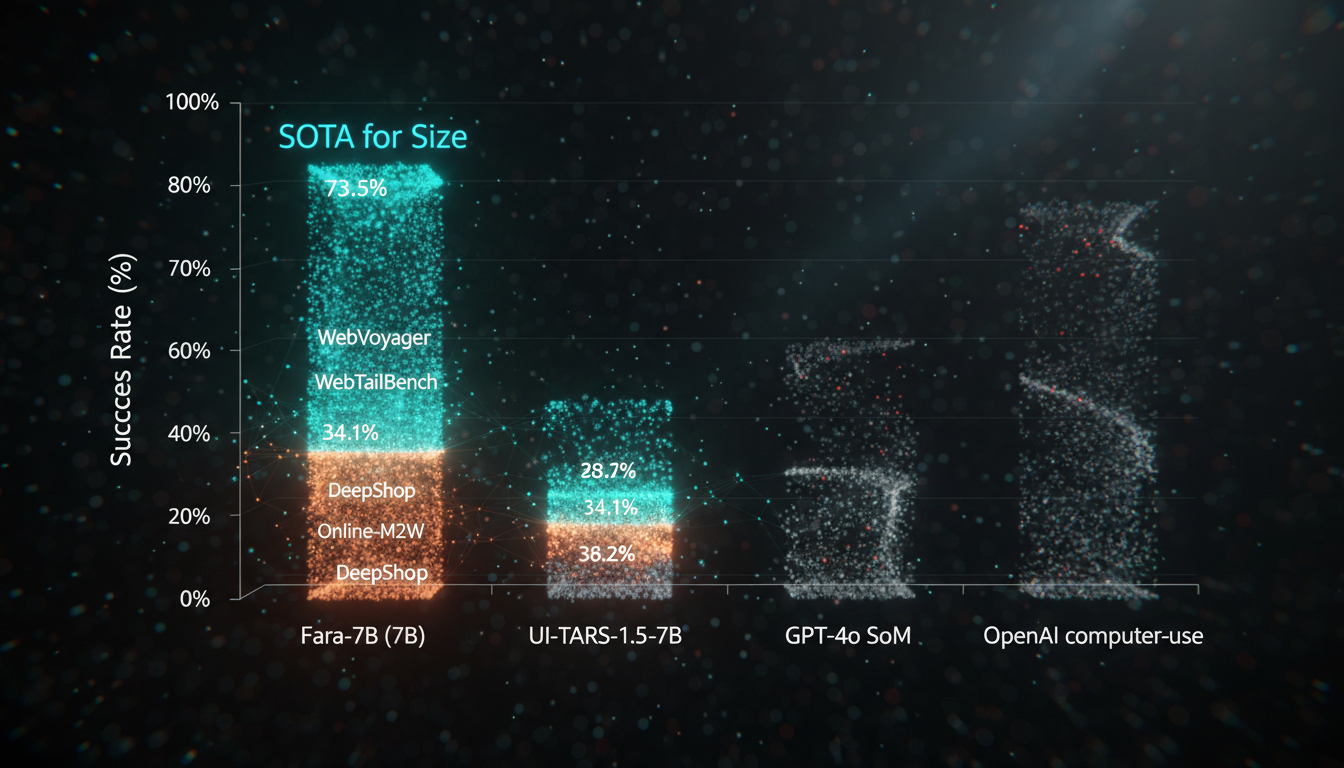

Task success rates by benchmark:

| Model | Params | WebVoyager | Online-Mind2Web | DeepShop | WebTailBench |
|---|---|---|---|---|---|
| Fara-7B | 7B | 73.5% | 34.1% | 26.2% | 38.4% |
| UI-TARS-1.5-7B | 7B | 66.4% | 31.3% | 11.6% | 19.5% |
| GPT-4o (SoM) | – | 65.1% | 34.6% | 16.0% | 30.8% |
| OpenAI computer-use-preview | – | 70.9% | 42.9% | 24.7% | 25.7% |

WebTailBench, a new benchmark from Microsoft, targets task types that existing benchmarks underrepresent, such as multi-item shopping (where Fara-7B scores 49.0%). Fara-7B costs about $0.025 per task, versus $0.30 or more for GPT-4o.
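The per-task figures compound quickly at volume. This small sketch projects batch cost under the simplifying assumption of a flat per-task price. Actual cost varies with step count, and the GPT-4o figure is a floor.

```python
def batch_cost(num_tasks: int, cost_per_task: float) -> float:
    """Total dollars for num_tasks runs at a flat per-task price."""
    return num_tasks * cost_per_task


# Approximate per-task figures quoted above; $0.30 is a floor for GPT-4o.
FARA_7B_PER_TASK = 0.025
GPT_4O_PER_TASK = 0.30

fara_total = batch_cost(1_000, FARA_7B_PER_TASK)   # about $25
gpt4o_total = batch_cost(1_000, GPT_4O_PER_TASK)   # about $300 or more
ratio = GPT_4O_PER_TASK / FARA_7B_PER_TASK         # about 12x
```

At 1,000 tasks, that is roughly $25 versus at least $300, about a 12x gap.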

Secure on-device usage best practices

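Running on-device keeps screenshots and task data away from cloud model providers, which suits sensitive automation. Run the agent sandboxed: use Browserbase for isolated browser sessions and restrict navigation with allow-lists. Keep a human in the loop at critical points such as payments, and monitor runs with the `--save_screenshots` flag.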
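An allow-list can be as simple as a host check run before every `visit_url` action. The sketch below is framework-neutral and hypothetical; it does not show how Browserbase or the repo implement restrictions. It accepts only http(s) URLs whose host equals an allowed domain or is a subdomain of one. Matching on a leading dot rejects look-alikes such as `notbing.com` or `wikipedia.org.evil.example`.

```python
from urllib.parse import urlparse


def is_allowed(url: str, allowed_domains: set[str]) -> bool:
    """True if url is http(s) and its host is an allowed domain or a subdomain of one."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https"):
        return False
    host = (parsed.hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed_domains)
```

In practice, reject or escalate any navigation action whose target fails this check, and check `page.url` after redirects too.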

- Sandbox with Docker/Magentic-UI.

- Verify outputs: the model can hallucinate on complex sites.

- Scope: English-only; regulated domains such as medical and legal are out of scope.

- Scale: serve with multi-GPU vLLM for production.

Advanced: integrate the Fara-Agent class into your own agent loops, and evaluate on WebTailBench, which is available on Hugging Face.
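A custom loop follows the observe, propose, act cycle described earlier. This sketch does not reproduce the repo's Fara-Agent class. It is a hypothetical, framework-neutral skeleton in which you inject three callables: one that captures a screenshot, one that calls the model and returns a parsed action (`propose`), and one that executes the action and returns False on termination (`execute`). The names `run_agent` and `AgentRun` are illustrative.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class AgentRun:
    rounds: int = 0
    history: list[str] = field(default_factory=list)
    finished: bool = False


def run_agent(
    goal: str,
    screenshot: Callable[[], bytes],
    propose: Callable[[str, list[str], bytes], Optional[dict]],
    execute: Callable[[dict], bool],
    max_rounds: int = 100,
) -> AgentRun:
    """Observe, propose, act until the model terminates or max_rounds is hit.

    propose wraps the model call and returns a parsed action, or None if the
    output could not be parsed; execute returns False when the task ends.
    """
    run = AgentRun()
    while run.rounds < max_rounds:
        run.rounds += 1
        action = propose(goal, run.history, screenshot())
        if action is None:
            break  # unparseable output: stop rather than guess
        run.history.append(str(action.get("arguments", {})))
        if not execute(action):
            run.finished = True
            break
    return run
```

Injecting the three callables keeps the loop testable with fakes. It also gives you one place to add safety hooks, such as an allow-list or a human-approval check, before `execute`.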

Conclusion

Fara-7B delivers secure, low-latency web automation: install it from GitHub, host it locally, and automate tasks such as bookings or research. Key takeaways: state-of-the-art performance for a 7B model (73.5% on WebVoyager), on-device privacy, and safeguards at critical points. As a next step, experiment with test_fara_agent.py from the microsoft/fara repository on GitHub. A quantized build for Copilot+ PCs is coming soon. As agentic SLMs evolve, Fara-7B points toward efficient, private alternatives to large cloud models. Try it in your own workflows today.