In the rapidly evolving landscape of AI development tools, a CTO’s primary challenge is separating marketing hype from measurable impact on the bottom line. With OpenAI’s launch of GPT-5.1 on November 12, 2025, and Anthropic’s release of Claude Opus 4.5 on November 24, 2025, engineering leaders face a critical decision. Anthropic has significantly lowered its Opus pricing, but the true calculation of coding ROI goes far beyond per-token costs. Making the right choice requires a data-driven framework that weighs API expenses against tangible gains in developer productivity.

This guide provides a CTO-focused methodology for evaluating Claude Opus 4.5 against GPT-5.1. We’ll break down the nuanced differences in pricing, performance, and efficiency to help you determine which model offers the superior return on investment for your specific engineering workflows.

The new contenders: a high-level overview

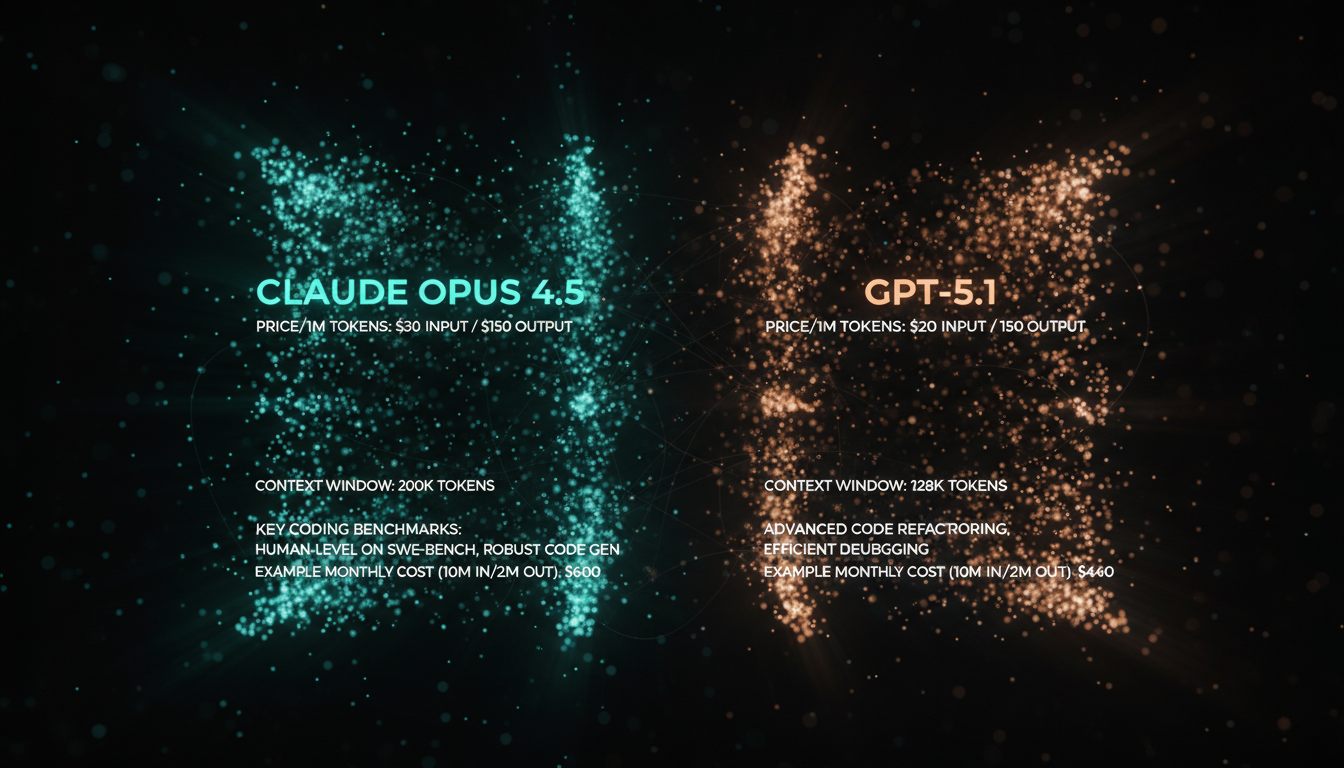

The final quarter of 2025 has been pivotal for frontier AI models. OpenAI launched GPT-5.1 on November 12, 2025, positioning it as its premier model for complex reasoning and agentic tasks. Anthropic followed shortly after, releasing Claude Opus 4.5 on November 24, 2025, with a clear focus on setting a new standard in coding, enterprise workflows, and token efficiency.

- GPT-5.1 (OpenAI): Released November 12, 2025, GPT-5.1 is an evolution of the GPT-5 series, optimized for high-performance reasoning and instruction adherence. It boasts a large 400,000-token context window and is positioned as a powerful general-purpose model for a wide range of tasks, including sophisticated code generation.

- Claude Opus 4.5 (Anthropic): Released November 24, 2025, this is the model Anthropic describes as its most intelligent. The company highlights its state-of-the-art performance on software engineering benchmarks, its ability to handle long-horizon tasks, and a significant reduction in token usage compared to previous versions, while still delivering better results.

The core decision-driver: API cost analysis

At first glance, a direct price comparison seems to favor OpenAI. However, the total cost of ownership depends heavily on token efficiency: how many tokens a model needs to achieve the desired outcome. A model with a higher per-token price can still be cheaper overall if it solves problems with fewer tokens. Below is a direct comparison based on standard API list pricing as of November 2025.

| Feature | Claude Opus 4.5 | GPT-5.1 |
|---|---|---|
| Release Date | November 24, 2025 | November 12, 2025 |
| Input Price (per 1M tokens) | $5.00 | $1.25 |
| Output Price (per 1M tokens) | $25.00 | $10.00 |
| Context Window | 200K tokens | 400K tokens |
| Monthly Cost Scenario* | $100.00 | $32.50 |

*Assumes 10M input and 2M output tokens per month at the list prices above.

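For raw token-for-token usage, GPT-5.1 is significantly cheaper: its input tokens cost a quarter as much as Claude Opus 4.5’s, and its output tokens 40% as much. A development team using 10M input and 2M output tokens monthly would see an API bill of $32.50 with GPT-5.1 versus $100.00 with Claude Opus 4.5, roughly a threefold difference. Unless Claude Opus 4.5 needs substantially fewer tokens to finish the same work, that gap stands, which is why token efficiency is the central pillar of the ROI calculation.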
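To rerun this comparison with your own traffic, the monthly bill is just token volume times the per-million-token list price, summed over input and output. The sketch below hard-codes the list prices from the table; the dictionary layout and the `monthly_api_cost` name are our own, and it deliberately ignores any discounts (for example, batch or cached-input pricing) you may negotiate or use.

```python
# Per-million-token list prices (USD) from the table above, as of November 2025.
# Tuple layout is (input_price, output_price).
PRICES = {
    "Claude Opus 4.5": (5.00, 25.00),
    "GPT-5.1": (1.25, 10.00),
}


def monthly_api_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Monthly API bill in USD for the given token volumes at list price."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # The table's scenario: 10M input and 2M output tokens per month.
    for model in PRICES:
        print(f"{model}: ${monthly_api_cost(model, 10_000_000, 2_000_000):,.2f}")
```

Swap in your own monthly token counts (most providers’ usage dashboards report input and output separately) to see how the gap scales with your input/output mix.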

Beyond price: a framework for benchmarking coding performance

API costs are only one part of the equation; the real value comes from accelerating developer velocity. Anthropic claims Claude Opus 4.5 can solve complex coding problems using up to 65% fewer tokens than competitors. If that holds for your workloads, it would close most of the cost gap and would suggest a model that needs less prompt engineering and reaches correct results faster. To validate these claims for your team, establish an internal benchmarking process.
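A quick way to test whether a token-efficiency claim actually closes the price gap is to compute the break-even reduction: how much less the pricier model must consume before its bill matches the cheaper one. This sketch assumes the reduction is measured against GPT-5.1’s token count on the same work and applies equally to input and output tokens, so the bill scales linearly; both function names are illustrative, and the $100.00 and $32.50 inputs are the monthly figures from the cost table.

```python
def break_even_reduction(candidate_bill: float, baseline_bill: float) -> float:
    """Fraction by which the pricier model must cut its token usage to match the
    cheaper model's bill, assuming the cut applies equally to input and output
    tokens (so the bill shrinks in direct proportion)."""
    if candidate_bill <= baseline_bill:
        return 0.0  # already no more expensive; no reduction needed
    return 1 - baseline_bill / candidate_bill


def bill_after_reduction(bill: float, reduction: float) -> float:
    """Bill after cutting token usage by `reduction` (0.65 means 65% fewer tokens)."""
    return bill * (1 - reduction)


if __name__ == "__main__":
    opus_bill, gpt_bill = 100.00, 32.50  # monthly scenario from the cost table
    print(f"Break-even reduction: {break_even_reduction(opus_bill, gpt_bill):.1%}")
    print(f"Opus 4.5 bill at 65% fewer tokens: ${bill_after_reduction(opus_bill, 0.65):.2f}")
```

Under these assumptions the break-even point is a 67.5% reduction, and the claimed 65% would bring the Opus 4.5 bill to about $35, within a few dollars of GPT-5.1 but not below it. A real workload will rarely shrink input and output tokens by the same fraction, which is exactly what your internal benchmark should measure.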

Step 1: Define your core coding use cases

Identify the most common and time-consuming tasks for your developers where an AI assistant provides value. These typically fall into four categories:

- Complex Code Generation: Generating boilerplate for a new microservice, creating a complex SQL query with multiple joins, or scaffolding a new component in your frontend framework.

- Bug Remediation: Pasting a code snippet and an error log, then asking the model to identify the root cause and suggest a fix.

- Code Refactoring & Optimization: Providing a working piece of code and asking the model to improve its performance, readability, or adherence to style guides.

- Documentation & Unit Tests: Generating comprehensive documentation or creating a suite of unit tests for an existing function or class.
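Keeping the prompt suite in code makes it easy to check that every category above is exercised before you spend money on trials. The sketch below is one hypothetical way to structure it; the `UseCase` and `BenchmarkPrompt` names are our own, and the example prompts are placeholders to replace with tasks from your codebase.

```python
from dataclasses import dataclass
from enum import Enum


class UseCase(Enum):
    """The four task categories described in Step 1."""
    CODE_GENERATION = "Complex Code Generation"
    BUG_REMEDIATION = "Bug Remediation"
    REFACTORING = "Code Refactoring & Optimization"
    DOCS_AND_TESTS = "Documentation & Unit Tests"


@dataclass(frozen=True)
class BenchmarkPrompt:
    prompt_id: str
    use_case: UseCase
    text: str


def missing_use_cases(suite: list[BenchmarkPrompt]) -> list[UseCase]:
    """Return the categories that no prompt in the suite exercises."""
    covered = {p.use_case for p in suite}
    return [uc for uc in UseCase if uc not in covered]


# Hypothetical prompts; replace them with real tasks from your own codebase.
SUITE = [
    BenchmarkPrompt("gen-01", UseCase.CODE_GENERATION,
                    "Scaffold a REST microservice for the orders domain."),
    BenchmarkPrompt("bug-01", UseCase.BUG_REMEDIATION,
                    "Here is a stack trace and the failing function; find the root cause."),
    BenchmarkPrompt("ref-01", UseCase.REFACTORING,
                    "Refactor this function for readability without changing behavior."),
]

if __name__ == "__main__":
    for uc in missing_use_cases(SUITE):
        print(f"No prompt covers: {uc.value}")
```

With the sample suite, the check flags that no prompt yet covers documentation and unit tests.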

Step 2: Run head-to-head trials

Create a standardized set of 5-10 prompts based on your core use cases. Run these same prompts through both Claude Opus 4.5 and GPT-5.1. For each trial, measure the following:

- Quality of First Output: How close was the initial response to a production-ready solution? (Score 1-5)

- Number of Follow-up Prompts: How many additional interactions were needed to get a final, working solution?

- Total Tokens Used: Log the input and output tokens for the entire interaction, from initial prompt to final answer. This is crucial for calculating real-world costs.

- Developer Time Saved (Estimated): Ask the developer running the test to estimate how much time this interaction saved them compared to doing it manually.
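Logging each trial as a structured record keeps the two models comparable and makes the ROI math later mechanical. The sketch below captures the four metrics listed above per model and prompt, then averages them; the `Trial` fields and `summarize` function are hypothetical names, and the 1-5 range check simply enforces the scoring scale suggested here.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Trial:
    """One head-to-head run of a benchmark prompt against one model."""
    model: str
    prompt_id: str
    first_output_quality: int  # 1 (unusable) to 5 (production-ready)
    follow_up_prompts: int     # extra turns needed to reach a working solution
    input_tokens: int          # summed over the whole interaction
    output_tokens: int         # summed over the whole interaction
    minutes_saved: float       # the developer's own estimate

    def __post_init__(self) -> None:
        if not 1 <= self.first_output_quality <= 5:
            raise ValueError("first_output_quality must be between 1 and 5")


def summarize(trials: list[Trial], model: str) -> dict[str, float]:
    """Aggregate the Step 2 metrics for one model."""
    rows = [t for t in trials if t.model == model]
    if not rows:
        raise ValueError(f"no trials recorded for {model!r}")
    return {
        "trials": len(rows),
        "avg_quality": mean(t.first_output_quality for t in rows),
        "avg_follow_ups": mean(t.follow_up_prompts for t in rows),
        "total_input_tokens": sum(t.input_tokens for t in rows),
        "total_output_tokens": sum(t.output_tokens for t in rows),
        "avg_minutes_saved": mean(t.minutes_saved for t in rows),
    }


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not measured results.
    trials = [
        Trial("GPT-5.1", "gen-01", 4, 1, 3_000, 6_000, 20),
        Trial("GPT-5.1", "bug-01", 2, 3, 5_000, 9_000, 10),
    ]
    print(summarize(trials, "GPT-5.1"))
```

Summing tokens across the whole interaction, rather than just the first turn, is what lets follow-up prompts show up in the cost comparison.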

Anthropic’s published benchmarks show Claude Opus 4.5 outperforming the other frontier models it was compared against on SWE-bench Verified, which evaluates models on real-world software engineering tasks drawn from GitHub issues. Your internal benchmark will determine whether that result translates to your specific codebase and challenges.

The final ROI calculation: blending cost and productivity

Once you have your benchmark data, you can calculate the true ROI. The goal is to determine the “effective cost per task,” which combines API fees and developer time.
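One way to formalize this, assuming developer time is valued at a fully loaded hourly rate $r$ (USD per hour) and that the developer’s time-saved estimate is reasonably reliable, is to net the API fee against the value of the time saved. The notation is our own, not a standard metric: $\Delta t$ is minutes saved, $n_{\text{in}}$ and $n_{\text{out}}$ are tokens summed over the whole interaction, and $p_{\text{in}}$ and $p_{\text{out}}$ are list prices per 1M tokens.

$$
V = r \cdot \frac{\Delta t}{60} \;-\; \frac{n_{\text{in}}\,p_{\text{in}} + n_{\text{out}}\,p_{\text{out}}}{10^{6}}
$$

Under this convention the effective cost per task is $-V$, so the better model for a given task is the one with the higher $V$.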

Consider this hypothetical result from such a benchmark:

- Task: Refactor a complex 200-line Python function.

- GPT-5.1 Result: Requires 3 prompts, uses 4,000 input and 10,000 output tokens. Total token cost: $0.105. Saves the developer 25 minutes.

- Claude Opus 4.5 Result: Solves it in 1 prompt, using 2,000 input and 4,000 output tokens (60% fewer output tokens). Total token cost: $0.11. Saves the developer 35 minutes because the first answer was correct and more insightful.

In this scenario, Claude Opus 4.5 cost half a cent more in API fees for this single task but saved an additional 10 minutes of developer time. At a fully loaded cost of $100/hour, those 10 minutes are worth about $16.67, more than 3,000 times the API price difference. This is where the ROI shifts dramatically: the model that reaches the correct answer faster, with less back-and-forth, is usually the more cost-effective choice, even at a higher API price.
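The arithmetic behind this scenario can be checked with a few lines of code. The sketch below nets the value of developer time saved against the API fee for each model; the $100/hour rate is the assumption stated in the text, the prices are the list prices from the cost table, and the `net_value` name is our own.

```python
# Per-million-token list prices (USD) from the cost table, as of November 2025.
# Tuple layout is (input_price, output_price).
PRICES = {
    "Claude Opus 4.5": (5.00, 25.00),
    "GPT-5.1": (1.25, 10.00),
}


def api_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """API fee in USD for one task, with tokens summed across all turns."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def net_value(model: str, input_tokens: int, output_tokens: int,
              minutes_saved: float, hourly_rate: float = 100.0) -> float:
    """Value of developer time saved minus the API fee, in USD.
    hourly_rate is an assumed fully loaded cost per developer hour."""
    time_value = hourly_rate * minutes_saved / 60
    return time_value - api_cost(model, input_tokens, output_tokens)


if __name__ == "__main__":
    # The hypothetical refactoring task described above.
    gpt = net_value("GPT-5.1", 4_000, 10_000, minutes_saved=25)
    opus = net_value("Claude Opus 4.5", 2_000, 4_000, minutes_saved=35)
    print(f"GPT-5.1 net value:         ${gpt:.2f}")
    print(f"Claude Opus 4.5 net value: ${opus:.2f}")
    print(f"Difference:                ${opus - gpt:.2f}")
```

This prints about $41.56 for GPT-5.1 and $58.22 for Claude Opus 4.5. The gap comes out at $16.66 rather than $16.67 because the half-cent API difference is netted out, which shows how little the token bill moves the result once developer time is priced in.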

The true cost of an AI model isn’t the API bill; it’s the cost of a developer’s time spent iterating on a wrong answer. Prioritize the model that maximizes developer velocity and minimizes rework.

Conclusion: a data-driven path forward

As of late 2025, the choice between Claude Opus 4.5 and GPT-5.1 is not a simple matter of price. While GPT-5.1 currently holds a significant advantage in raw per-token cost, Anthropic’s claims of superior intelligence and token efficiency for coding tasks cannot be ignored. For CTOs, the decision must be rooted in empirical evidence drawn from their own team’s workflows.

The winner is the model that performs best on your codebase, with your developers, on your most critical tasks. By implementing the benchmarking framework outlined above (defining use cases, running head-to-head trials, and analyzing both API cost and developer time saved), you can move beyond speculation. This data-driven approach lets you calculate your true coding ROI and select the AI partner that best accelerates your team’s productivity.