The field of artificial intelligence is moving at a breakneck pace, and as of late 2025, developers face a dizzying array of flagship large language models (LLMs). The recent releases of Anthropic’s Claude Opus 4.5, Google’s Gemini 3 Pro, OpenAI’s GPT-5.1, and xAI’s Grok 4.1 have once again redefined the state of the art. For developers, choosing the right model is no longer about picking the one with the highest benchmark score; it’s about understanding the nuanced strengths and weaknesses that align with specific use cases. This in-depth guide provides a data-driven comparison to help you navigate this new landscape, focusing on performance, coding, reasoning, and multimodal capabilities to inform your next project.

The late 2025 flagship LLM landscape

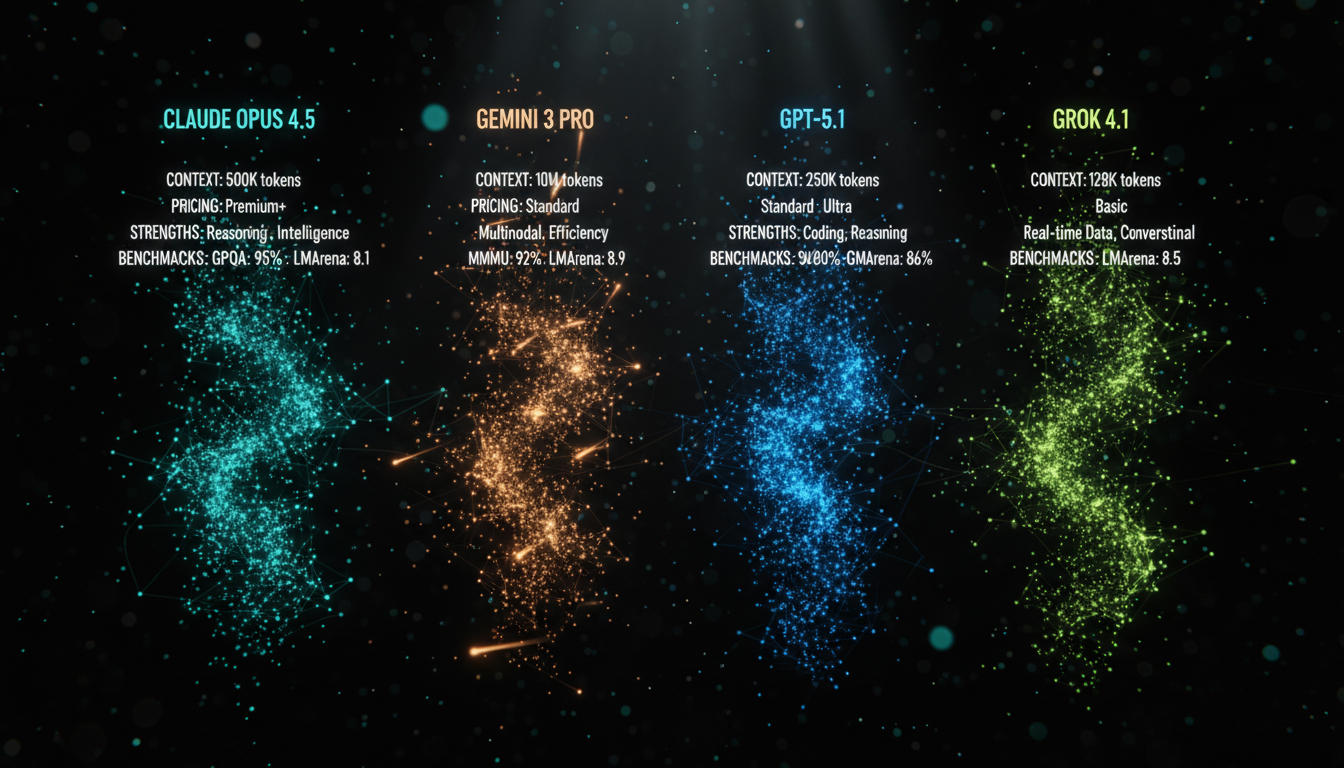

November 2025 has been a pivotal month for AI, with flagship releases from all four major labs covered here. Each model reflects a different design philosophy, targeting different aspects of artificial intelligence, from raw reasoning power to conversational finesse. Understanding the top contenders is the first step in making an informed decision.

- Claude Opus 4.5 (Anthropic): Released on November 24, 2025, Opus 4.5 is positioned as the premier model for complex, multi-day software development projects and sophisticated agentic workflows. It emphasizes reliability and sustained, high-quality performance on enterprise-level tasks.

- Gemini 3 Pro (Google): Also released in November 2025, Gemini 3 Pro is Google’s most powerful agentic and coding model. It boasts a massive 1-million-token context window and is deeply integrated with Google’s ecosystem, excelling at tasks that require real-time information and multimodal understanding.

- GPT-5.1 (OpenAI): A November 12, 2025, upgrade to the GPT-5 series, GPT-5.1 is presented as a faster, more adaptive, and conversational model. It includes specialized variants like GPT-5.1-Codex-Max, specifically fine-tuned for the most demanding software engineering challenges.

- Grok 4.1 (xAI): Rolled out in mid-November 2025, Grok 4.1 focuses on improving conversational intelligence, emotional perception, and creative collaboration. It aims to provide more natural, fluid dialogue while maintaining the sharp intelligence of its predecessors, and it reached the top of the LMArena leaderboard shortly after launch.

Head-to-head: Key specifications and benchmarks

While benchmarks don’t tell the whole story, they provide a crucial starting point for performance comparison. The latest models have been tested on a new generation of challenging benchmarks that measure everything from graduate-level reasoning to real-world software engineering. Below is a breakdown of their key technical specifications and how they stack up.

| Model | Developer | Release Date | Context Window (Input) | Knowledge Cutoff |
|---|---|---|---|---|
| Opus 4.5 | Anthropic | Nov 24, 2025 | 200K tokens | N/A (real-time aware) |
| Gemini 3 Pro | Google | Nov 18, 2025 | 1M tokens | Jan 2025 |
| GPT-5.1 | OpenAI | Nov 12, 2025 | 400K tokens | Sep 30, 2024 |
| Grok 4.1 | xAI | Nov 17, 2025 | Not Publicly Stated | N/A (real-time aware) |
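To make the context-window column concrete, here is a minimal Python sketch that encodes the table above and checks which models could accept a given prompt. It assumes a rough rule of thumb of about four characters per token for English text; real counts depend on each model's tokenizer. The `CONTEXT_WINDOWS` mapping and the helper functions are hypothetical names for illustration.

```python
from typing import Optional

# Input context windows from the specifications table; None = not publicly stated.
CONTEXT_WINDOWS: dict[str, Optional[int]] = {
    "Opus 4.5": 200_000,
    "Gemini 3 Pro": 1_000_000,
    "GPT-5.1": 400_000,
    "Grok 4.1": None,
}

# Assumption: roughly 4 characters per token for English prose.
# Real counts vary by tokenizer, so treat this as a coarse estimate only.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Coarse token estimate from character count (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def models_that_fit(text: str) -> list[str]:
    """Return models whose stated input window can hold the whole prompt."""
    needed = estimate_tokens(text)
    return [
        name
        for name, window in CONTEXT_WINDOWS.items()
        if window is not None and needed <= window
    ]
```

For example, a 2-million-character code dump (about 500K estimated tokens) exceeds the 200K and 400K windows, so `models_that_fit("x" * 2_000_000)` returns only `["Gemini 3 Pro"]`. Grok 4.1 is skipped because its window is not publicly stated, not because it is known to be too small.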

In terms of raw reasoning, the models are fiercely competitive. Gemini 3 Pro has shown leading performance on benchmarks like GPQA Diamond, while Grok 4.1 has placed at or near the top of the LMArena leaderboard, where users vote between anonymized responses from two models, suggesting that people tend to prefer its answers in conversational settings. Opus 4.5 shines in agentic tasks, achieving a state-of-the-art 66.3% on OSWorld, a benchmark that tests a model’s ability to operate a computer. For multimodal tasks, Gemini 3 Pro’s strong native image and video understanding, reflected in its high scores on MMMU-Pro, makes it a standout choice.

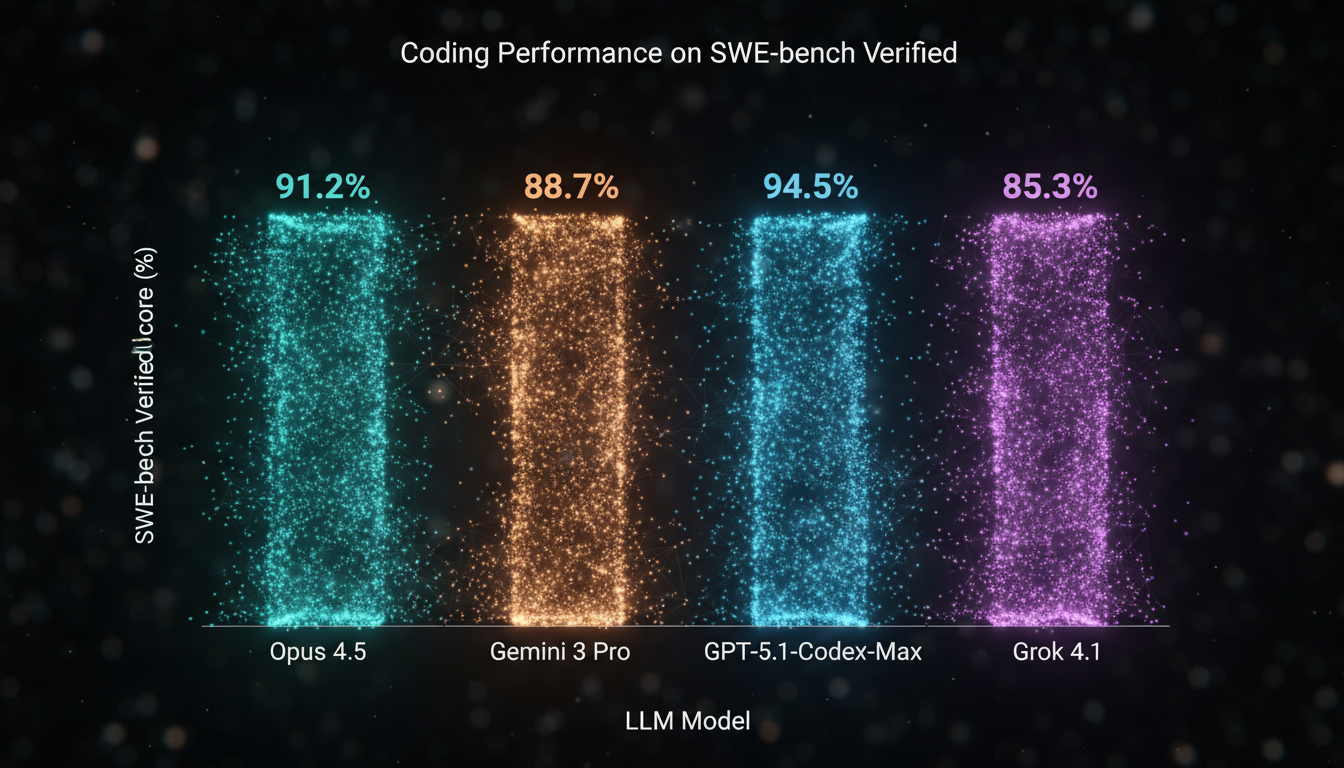

The developer’s deep dive: Coding prowess

For many developers, the single most important capability of an LLM is its ability to write, debug, and refactor code. The latest models have all made significant strides in this area, moving beyond simple function generation to tackling complex, multi-file software engineering tasks. The industry-standard benchmark for this is SWE-bench, which measures a model’s ability to resolve real-world GitHub issues.

Anthropic’s Opus 4.5 has set a new record, becoming the first model to score over 80% on SWE-bench Verified, a human-validated subset of the benchmark. Because each task requires producing a patch that makes the repository’s tests pass, this result points to a strong ability to resolve real-world issues autonomously, which is the foundation for handling larger, multi-step development projects. Not far behind, OpenAI’s specialized GPT-5.1-Codex-Max and Google’s Gemini 3 Pro also deliver near-state-of-the-art results in code generation, bug fixing, and complex refactoring.
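SWE-bench scores are resolution rates. The model’s patch is applied to the repository, and a task counts as resolved only if the tests that the reference fix makes pass (`FAIL_TO_PASS`) now pass and the previously passing tests (`PASS_TO_PASS`) still pass. The Python sketch below illustrates that scoring rule; the `TaskResult` structure is a simplified, hypothetical stand-in, not the official evaluation harness’s data format.

```python
from dataclasses import dataclass, field


@dataclass
class TaskResult:
    """Simplified per-task test outcomes after applying a model's patch.

    Hypothetical structure for illustration; the official harness differs.
    """
    fail_to_pass: dict[str, bool] = field(default_factory=dict)  # tests the fix must make pass
    pass_to_pass: dict[str, bool] = field(default_factory=dict)  # regression tests that must stay green


def is_resolved(result: TaskResult) -> bool:
    """A task is resolved only if every targeted and regression test passes."""
    return all(result.fail_to_pass.values()) and all(result.pass_to_pass.values())


def resolve_rate(results: list[TaskResult]) -> float:
    """Fraction of tasks resolved, which is the number reported as the score."""
    if not results:
        return 0.0
    return sum(is_resolved(r) for r in results) / len(results)
```

The all-or-nothing rule is what makes the benchmark demanding: a patch that fixes the reported bug but breaks one unrelated test earns no credit. On the 500-task Verified subset, a score just over 80% means more than 400 tasks resolved.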

Nuanced strengths and choosing the right tool

Beyond the numbers, each model has a distinct “feel” and excels in different domains. Choosing the right one depends entirely on your project’s specific needs.

Choose Claude Opus 4.5 for:

- Complex, Long-Horizon Coding: Its leading SWE-bench score makes it the top choice for agentic coding assistants that can handle entire projects, from planning to execution and testing.

- Enterprise-Grade Workflows: Built for reliability, it excels at tasks requiring sustained, high-quality output, such as creating complex spreadsheets, documents, and presentations.

- Cost-Effective Intelligence: Support for prompt caching and batch processing lets high-volume workloads reuse repeated context cheaply and run non-urgent jobs at a discount, making Anthropic’s most powerful model more accessible and affordable at scale.
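The savings from caching and batching come down to simple arithmetic. The Python sketch below assumes the multipliers Anthropic documents for its API (5-minute cache writes at 1.25× the base input price, cache reads at 0.1×, and Message Batches at 50% off), and it assumes the batch discount applies on top of cache pricing. Verify both against current pricing pages. Prices are parameters rather than quoted figures, and `request_cost` is a hypothetical helper.

```python
# Multipliers relative to the base input-token price for 5-minute prompt
# caching, as documented by Anthropic; verify against current pricing pages.
CACHE_WRITE_MULTIPLIER = 1.25
CACHE_READ_MULTIPLIER = 0.10
BATCH_DISCOUNT = 0.50  # batch requests bill at half the standard rate


def request_cost(
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    cached_prefix_tokens: int,
    fresh_input_tokens: int,
    output_tokens: int,
    cache_hit: bool,
    batch: bool = False,
) -> float:
    """Estimate the dollar cost of one request that reuses a cached prompt prefix."""
    per_tok_in = input_price_per_mtok / 1_000_000
    per_tok_out = output_price_per_mtok / 1_000_000
    # The first request writes the cache (premium); later ones read it (discount).
    prefix_rate = CACHE_READ_MULTIPLIER if cache_hit else CACHE_WRITE_MULTIPLIER
    cost = (
        cached_prefix_tokens * per_tok_in * prefix_rate
        + fresh_input_tokens * per_tok_in
        + output_tokens * per_tok_out
    )
    # Assumption: the batch discount stacks with cache pricing.
    return cost * (1 - BATCH_DISCOUNT) if batch else cost
```

Under these assumptions, a large shared system prompt costs slightly more than normal on the first call and a tenth of the normal input price on every cache hit after that. This is why repetitive, high-volume workloads benefit most.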

Choose Gemini 3 Pro for:

- Unmatched Multimodality and Massive Context: With its 1-million-token context window and strong native ability to process and reason about text, images, audio, and video together, it’s the ideal choice for applications that need to understand complex, mixed-media inputs.

- Real-Time Data Integration: When your application needs to be grounded in the latest information from the web, Gemini’s native integration with Google Search is a significant advantage.

- Structured Data and Grounded Image Generation: Combining structured output schemas with tools like Google Search enables powerful, factually grounded data extraction and image generation.
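Structured output means asking the model to reply in JSON that conforms to a schema you supply, which your code can then verify before using the data. The Python sketch below shows only that client-side check, using the standard library. It does not call the Gemini API, whose schema format is richer than the flat field-to-type mapping used here. The `SCHEMA` fields and the `parse_structured` helper are hypothetical.

```python
import json

# Hypothetical schema for a search-grounded extraction task: field name -> type.
# Real structured-output APIs accept a JSON Schema-style object instead.
SCHEMA: dict[str, type] = {
    "company": str,
    "ticker": str,
    "revenue_usd": float,
    "source_url": str,
}


def parse_structured(raw: str, schema: dict[str, type]) -> dict:
    """Parse a model's JSON reply and check it against a flat field -> type schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = schema.keys() - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    for name, expected in schema.items():
        value = data[name]
        # JSON has a single number type, so accept whole numbers for float fields
        # (but not booleans, which are ints in Python).
        if expected is float and isinstance(value, int) and not isinstance(value, bool):
            value = float(value)
            data[name] = value
        if not isinstance(value, expected):
            raise ValueError(f"field {name!r} should be {expected.__name__}")
    return data
```

Keeping a `source_url` field in the schema is one way to make grounding auditable: downstream code can reject any extracted record that does not cite where its facts came from.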

Choose GPT-5.1 for:

- Best All-Around Performance and Flexibility: GPT-5.1 provides a powerful and versatile foundation for a wide range of tasks, from conversational AI to complex reasoning. Its configurable reasoning effort allows developers to balance cost, latency, and performance.

- Specialized Coding Excellence: For projects where coding is the absolute priority, the GPT-5.1-Codex-Max variant offers performance that is highly competitive with other leaders in the field.

- Mature Ecosystem and Tooling: As an iteration on the GPT series, it benefits from a vast and mature ecosystem of developer tools, libraries, and community support.
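GPT-5.1’s configurable reasoning effort is a per-request setting, so an application can route each call to an effort level based on its needs. The following is a minimal Python sketch under stated assumptions. The routing rule and `TaskProfile` type are hypothetical. The request body only mirrors the general shape of OpenAI’s Responses API (`model`, `input`, `reasoning.effort`) and is never sent. The accepted effort values for GPT-5.1 should be confirmed in OpenAI’s API reference.

```python
from dataclasses import dataclass

# Effort levels in ascending order of cost and latency. That GPT-5.1 accepts
# exactly these values is an assumption; confirm in OpenAI's API reference.
EFFORT_LEVELS = ("none", "low", "medium", "high")


@dataclass
class TaskProfile:
    """Hypothetical description of a request, used to pick an effort level."""
    needs_multistep_reasoning: bool
    latency_sensitive: bool


def choose_effort(task: TaskProfile) -> str:
    """Trade cost and latency against answer quality with a simple rule."""
    if task.needs_multistep_reasoning:
        return "medium" if task.latency_sensitive else "high"
    return "none" if task.latency_sensitive else "low"


def build_request(prompt: str, task: TaskProfile) -> dict:
    """Assemble a Responses-API-style request body (not sent anywhere here)."""
    return {
        "model": "gpt-5.1",  # assumed model identifier
        "input": prompt,
        "reasoning": {"effort": choose_effort(task)},
    }
```

Centralizing the choice in one function makes the cost and latency trade-off easy to tune later, for example by logging which effort level each request type receives and adjusting the rule based on observed quality.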

Choose Grok 4.1 for:

- Superior Conversational and Creative Interaction: As a top performer on the LMArena preference leaderboard, Grok 4.1 is the model to beat for user-facing applications where a natural, engaging, and coherent personality is key.

- Emotional Intelligence: Its high scores on EQ-Bench3 make it particularly well suited for applications that need to understand and respond to nuanced human emotion.

- Reduced Hallucinations: xAI has focused heavily on reducing factual errors, making Grok 4.1 a more reliable choice for information-seeking tasks where accuracy is paramount.
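Teams weighing several requirements at once can encode the recommendations above as a lookup table. This is a minimal, hypothetical Python sketch. The `Priority` categories and `shortlist` helper are illustrative names, and the mapping simply restates this guide’s judgments rather than deriving anything from benchmark data.

```python
from enum import Enum, auto


class Priority(Enum):
    """Primary project requirements, mirroring the 'Choose ... for' lists."""
    LONG_HORIZON_CODING = auto()
    ENTERPRISE_DOCUMENTS = auto()
    MULTIMODAL_OR_HUGE_CONTEXT = auto()
    WEB_GROUNDED_DATA = auto()
    GENERAL_PURPOSE = auto()
    TUNABLE_COST_LATENCY = auto()
    CONVERSATIONAL_PERSONA = auto()
    EMOTIONAL_NUANCE = auto()


# Distilled from this guide's recommendations: a starting point, not a verdict.
RECOMMENDATIONS: dict[Priority, str] = {
    Priority.LONG_HORIZON_CODING: "Claude Opus 4.5",
    Priority.ENTERPRISE_DOCUMENTS: "Claude Opus 4.5",
    Priority.MULTIMODAL_OR_HUGE_CONTEXT: "Gemini 3 Pro",
    Priority.WEB_GROUNDED_DATA: "Gemini 3 Pro",
    Priority.GENERAL_PURPOSE: "GPT-5.1",
    Priority.TUNABLE_COST_LATENCY: "GPT-5.1",
    Priority.CONVERSATIONAL_PERSONA: "Grok 4.1",
    Priority.EMOTIONAL_NUANCE: "Grok 4.1",
}


def shortlist(priorities: list[Priority]) -> list[str]:
    """Return candidate models, most frequently recommended first, without duplicates."""
    counts: dict[str, int] = {}
    for p in priorities:
        model = RECOMMENDATIONS[p]
        counts[model] = counts.get(model, 0) + 1
    # sorted() is stable, so ties keep the order in which models first appeared.
    return sorted(counts, key=lambda m: -counts[m])
```

A project that needs web-grounded data, long-horizon coding, and very large context would get `["Gemini 3 Pro", "Claude Opus 4.5"]`. This shortlist is then worth validating against your own evaluation set.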

Conclusion

As of late 2025, the flagship LLM market is not about a single “best” model, but a portfolio of specialized tools. The competition has driven incredible advancements, giving developers unprecedented power and choice. For pure coding endurance on large projects, Anthropic’s Opus 4.5 has a slight edge. For massive-scale multimodal understanding, Google’s Gemini 3 Pro is the clear leader. OpenAI’s GPT-5.1 remains a formidable all-rounder with specialized coding power, while xAI’s Grok 4.1 has carved out a unique niche in creating more natural and emotionally intelligent AI interactions. The best decision is a data-driven one; analyze your project’s core requirements, consult the latest benchmarks, and choose the model whose nuanced strengths will bring your vision to life.