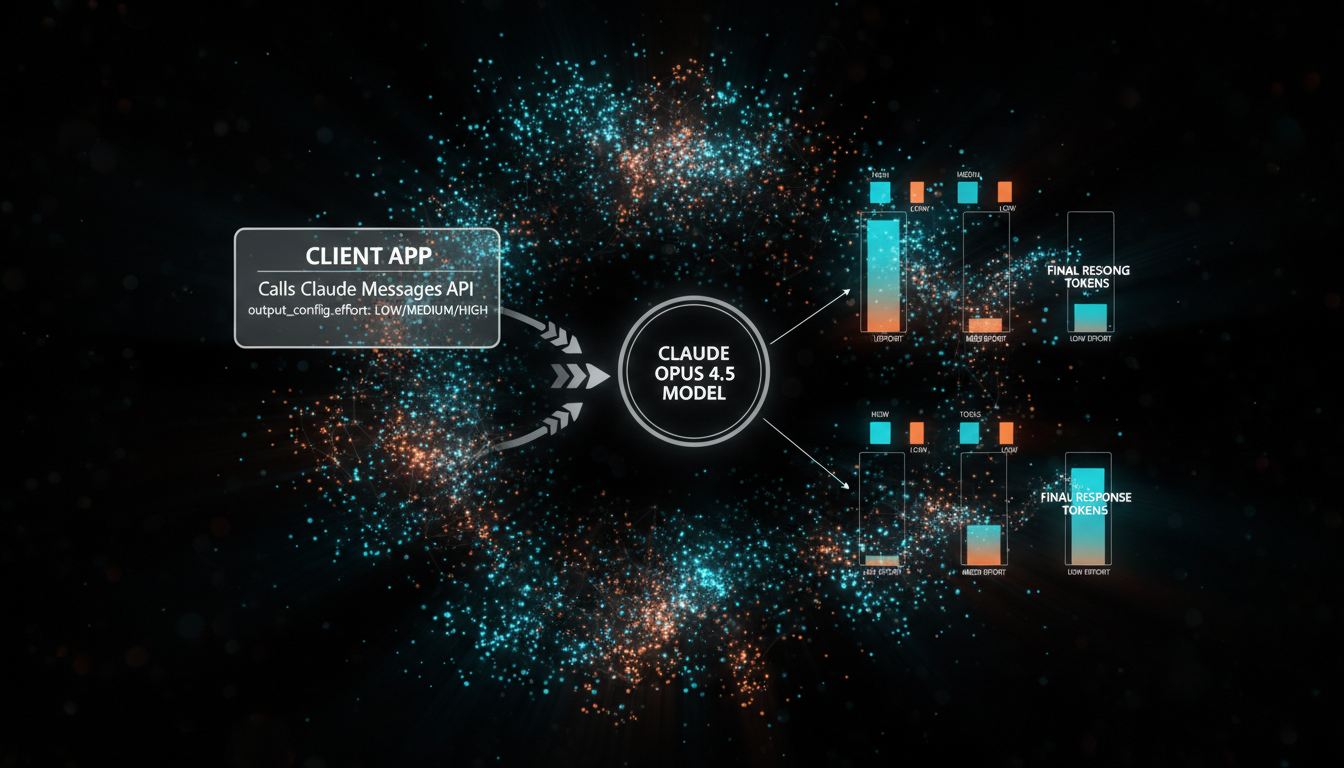

The release of Anthropic’s Claude Opus 4.5 in November 2025 marked a significant leap forward in AI-driven software development, promising unparalleled performance in coding, reasoning, and agentic tasks. But with great power comes great cost, and managing token consumption is a critical challenge for developers building scalable applications. Anthropic has introduced a novel solution: the ‘effort’ parameter, a powerful new lever in the Messages API that allows for precise control over the cost-performance balance. This guide provides a comprehensive deep-dive into this new feature, showing you how to cut costs and optimize your workflows by strategically tuning token usage for any task.

What is the Claude Opus 4.5 effort parameter?

The effort parameter is a new configuration option available in the Claude Opus 4.5 API that directly controls how liberally the model expends tokens to generate a response. It is a beta feature, exclusively available for the claude-opus-4-5-20251101 model, that provides a simple yet effective way to manage the trade-off between response thoroughness, latency, and cost.

By default, Claude Opus 4.5 operates at high effort, ensuring it uses as many tokens as necessary—across text generation, tool calls, and internal reasoning—to produce the highest quality output possible. While ideal for complex, mission-critical tasks, this maximum-capability setting isn’t always necessary or cost-effective. By adjusting the effort level, developers can instruct the model to be more conservative with token usage, which can lead to significant cost savings and faster response times for simpler or high-volume tasks.

The effort parameter affects all tokens in a response, including text, tool use, and even the internal ‘thinking’ tokens if that feature is enabled. This provides a holistic way to control token expenditure without complex prompt engineering.

Understanding the three effort levels

The effort parameter can be set to one of three distinct levels, each designed for different scenarios. Understanding the characteristics of each level is key to applying them effectively in your software development lifecycle. The default is high, which is equivalent to not setting the parameter at all.

| Effort Level | Description | Best For |
|---|---|---|
| high | Maximum Capability: The model uses as many tokens as needed to achieve the best possible outcome. This is the default setting. | Complex reasoning, critical code generation, nuanced analysis, and multi-step agentic tasks where quality is the absolute priority. |
| medium | Balanced Approach: Provides a middle ground, achieving moderate token savings while maintaining strong performance. | General-purpose agentic tasks, content summarization, or workflows that require a balance of speed, cost, and high-quality output. |
| low | Maximum Efficiency: Delivers significant token savings and the lowest latency, with a potential reduction in response nuance and thoroughness. | Simple, high-volume tasks like data classification, intent routing, or quick API lookups where speed and cost are the primary concerns. |

Choosing the right level depends entirely on the specific requirements of the task at hand. For a customer-facing chatbot that needs to respond instantly, low or medium effort might be ideal. For a backend process that analyzes complex legal documents, high effort is the more prudent choice.
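One way to make that choice explicit is a small lookup table in application code. The sketch below is illustrative only: the task category names and the `effort_for` and `output_config_for` helpers are hypothetical, loosely following the "Best For" column above, and unknown tasks fall back to high because that matches the API default.

```python
from typing import Literal

Effort = Literal["low", "medium", "high"]

# Hypothetical task categories, loosely following the "Best For" column.
EFFORT_BY_TASK: dict[str, Effort] = {
    "classification": "low",
    "intent_routing": "low",
    "summarization": "medium",
    "general_agent": "medium",
    "code_generation": "high",
    "legal_analysis": "high",
}


def effort_for(task: str) -> Effort:
    """Return the effort level for a task category."""
    # Unknown categories fall back to "high", the API default.
    return EFFORT_BY_TASK.get(task, "high")


def output_config_for(task: str) -> dict[str, str]:
    """Build the output_config object that goes into the request body."""
    return {"effort": effort_for(task)}
```

Keeping the mapping in one place makes it easy to review and adjust as you learn which tasks tolerate lower effort.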

How to implement the effort parameter

Using the effort parameter requires two small but crucial additions to your API call. First, because the feature is in beta, you must send the beta flag effort-2025-11-24 in the anthropic-beta header of your request (the Python SDK exposes this through the betas argument). Second, you specify the desired effort level within an output_config object in the request body.

Python API example

Here is a practical example using the official Anthropic Python SDK. The code sends a request to the Claude Opus 4.5 model with the effort level set to medium for a balanced response.

    import anthropic

    client = anthropic.Anthropic()

    # As of November 2025, the effort parameter is in beta and requires a specific header.
    response = client.beta.messages.create(
        model="claude-opus-4-5-20251101",
        betas=["effort-2025-11-24"],  # Mandatory beta header
        max_tokens=4096,
        messages=[{
            "role": "user",
            "content": "Generate a Python function to calculate the Fibonacci sequence up to n, and include docstrings and type hints."
        }],
        output_config={
            "effort": "medium"  # Can be "low", "medium", or "high"
        }
    )

    print(response.content[0].text)

cURL API example

For those working in other languages or environments, a cURL request demonstrates the raw API structure. Note the inclusion of the anthropic-beta header and the output_config JSON object.

    curl https://api.anthropic.com/v1/messages \
      --header "x-api-key: $ANTHROPIC_API_KEY" \
      --header "anthropic-version: 2023-06-01" \
      --header "anthropic-beta: effort-2025-11-24" \
      --header "content-type: application/json" \
      --data '{
        "model": "claude-opus-4-5-20251101",
        "messages": [
          {"role": "user", "content": "Explain the difference between SQL and NoSQL databases."}
        ],
        "max_tokens": 2048,
        "output_config": {
          "effort": "low"
        }
      }'

Strategic use cases for AI cost optimization

The true power of the effort parameter lies in applying it dynamically based on the context of the task. A one-size-fits-all approach is rarely optimal. Instead, developers should build logic into their applications to select the most appropriate effort level on a per-request basis.

- Dynamic routing based on complexity: Implement a preliminary analysis step (perhaps using a much cheaper model like Claude Haiku or a simple keyword analysis) to gauge the complexity of a user’s request. Simple requests like “What time is it?” can be routed to a call with low effort, while complex prompts like “Refactor this entire legacy codebase” should use high effort.

- Tiered user experiences: For SaaS products, you can offer different performance tiers. A “standard” plan could use medium effort for all calls, while a “premium” or “enterprise” plan could unlock high effort for more demanding tasks, creating a clear value proposition for upselling.

- Optimizing agentic sub-tasks: In a multi-step agentic workflow, not every step requires maximum intelligence. A planning step might benefit from high effort, but subsequent, simpler steps like reading a file or performing a basic data extraction could be executed with low effort to save tokens without compromising the final outcome.
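The first two ideas can be combined in a few lines of routing logic. This is a minimal sketch under stated assumptions, not a production router: the keyword list, the 40-word threshold, the plan names, and the `estimate_effort` and `choose_effort` helpers are all hypothetical. It also treats the plan tier as a ceiling rather than a fixed level, which is a slight variation on the tiering described above.

```python
from typing import Literal

Effort = Literal["low", "medium", "high"]

# Hypothetical keywords that hint at a complex request. A real router might
# call a cheaper model for this classification step instead.
COMPLEX_HINTS = ("refactor", "architecture", "migrate", "debug", "prove")

# Hypothetical plan names and the highest effort level each plan may use.
PLAN_CEILING: dict[str, Effort] = {
    "standard": "medium",
    "premium": "high",
    "enterprise": "high",
}

_ORDER = {"low": 0, "medium": 1, "high": 2}


def estimate_effort(prompt: str) -> Effort:
    """Guess an effort level from simple surface features of the prompt."""
    text = prompt.lower()
    if any(hint in text for hint in COMPLEX_HINTS):
        return "high"
    if len(text.split()) > 40:  # long prompts get the middle setting
        return "medium"
    return "low"


def choose_effort(prompt: str, plan: str = "standard") -> Effort:
    """Pick the estimated effort, capped by the caller's plan tier."""
    wanted = estimate_effort(prompt)
    ceiling = PLAN_CEILING.get(plan, "medium")
    return wanted if _ORDER[wanted] <= _ORDER[ceiling] else ceiling
```

The returned value can be passed straight into output_config. The same pattern extends to agentic workflows by keying the decision on the step type (planning, file read, extraction) rather than on the prompt text.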

Impact on token consumption, cost, and latency

The financial and performance implications of using the effort parameter are direct and significant. By instructing the model to be more concise, you reduce the number of output tokens generated, which lowers both the direct cost of the API call and the time it takes to receive the full response (latency).

Let’s consider a hypothetical scenario. A task to summarize a 5,000-token document might consume the following output tokens at different effort levels:

- High Effort: 1,500 output tokens (detailed, nuanced summary).

- Medium Effort: 900 output tokens (balanced summary).

- Low Effort: 400 output tokens (brief, high-level summary).

As of November 2025, Claude Opus 4.5 is priced at approximately $5.00 per million input tokens and $25.00 per million output tokens. For 1,000 summary operations, the cost difference would be substantial:

| Effort Level | Total Output Tokens (1k calls) | Estimated Output Cost | Potential Savings (vs. High) |
|---|---|---|---|
| high | 1,500,000 | $37.50 | – |
| medium | 900,000 | $22.50 | 40% |
| low | 400,000 | $10.00 | 73% |

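While this is a simplified example, it illustrates the cost-cutting potential. Note that the table counts output tokens only: in this scenario the 5,000-token input costs the same at every effort level (about $25.00 per 1,000 calls at $5.00 per million input tokens), so the saving on the total bill is smaller than the output-only percentages suggest. Generating 400 tokens is also significantly faster than generating 1,500, which makes low effort a good fit for applications where response time matters to the user experience.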
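The arithmetic behind the table can be reproduced with a short script. The prices are the ones quoted above, the token counts are the hypothetical scenario figures rather than measurements, and `batch_cost` is an illustrative helper name.

```python
# Prices quoted in the article (USD per million tokens, as of November 2025).
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def batch_cost(input_tokens: int, output_tokens: int, calls: int) -> float:
    """Cost in USD of `calls` requests with the given per-call token counts."""
    input_cost = input_tokens * calls / 1_000_000 * INPUT_PRICE_PER_MTOK
    output_cost = output_tokens * calls / 1_000_000 * OUTPUT_PRICE_PER_MTOK
    return round(input_cost + output_cost, 2)


# Hypothetical per-call output sizes from the summarization scenario.
scenario = {"high": 1_500, "medium": 900, "low": 400}

for level, out_tokens in scenario.items():
    output_only = batch_cost(0, out_tokens, 1_000)   # matches the table
    total = batch_cost(5_000, out_tokens, 1_000)     # adds the 5,000-token input
    print(f"{level:<6} output=${output_only:.2f} total=${total:.2f}")
```

With input tokens included, the totals come to $62.50, $47.50, and $35.00, so low effort saves roughly 44% of the full bill in this scenario rather than 73%.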

Conclusion

The introduction of the effort parameter for Claude Opus 4.5 is a game-changer for developers seeking to build sophisticated AI applications that are both powerful and economically viable. It moves beyond the one-size-fits-all model, offering granular control over the delicate balance between capability and cost. By understanding and strategically implementing the three effort levels—high, medium, and low—you can ensure you are only paying for the intelligence you need, when you need it.

The key takeaway is to embrace dynamic configuration. Analyze your application’s workflows, identify tasks of varying complexity, and adjust the effort parameter accordingly. Start with the default high setting as a benchmark, then test lower levels to quantify the savings and ensure the quality remains acceptable for your use case. This thoughtful approach to AI resource management will be a defining factor in building the next generation of scalable, efficient, and cost-effective software.
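The benchmarking step can be sketched as a small harness that measures output tokens per effort level against a baseline. Everything here is an assumption for illustration: `compare_effort_levels` is a hypothetical helper, and `fake_run` stands in for a real API call, reusing the scenario numbers from earlier rather than real measurements.

```python
from typing import Callable


def compare_effort_levels(
    run: Callable[[str], int],
    levels: tuple[str, ...] = ("high", "medium", "low"),
) -> dict[str, float]:
    """Return output-token savings per level, relative to the first level.

    `run` takes an effort level and returns the output tokens it consumed.
    """
    baseline = run(levels[0])
    savings: dict[str, float] = {}
    for level in levels:
        tokens = baseline if level == levels[0] else run(level)
        savings[level] = round(1 - tokens / baseline, 3)
    return savings


def fake_run(effort: str) -> int:
    """Stand-in for an API call; returns the article's hypothetical counts."""
    return {"high": 1500, "medium": 900, "low": 400}[effort]


print(compare_effort_levels(fake_run))
```

In a real benchmark, `run` would wrap the `client.beta.messages.create` call shown earlier with the given effort level and return `response.usage.output_tokens`. Token savings are only half of the picture, so the outputs still need a separate quality check before a lower level goes into production.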