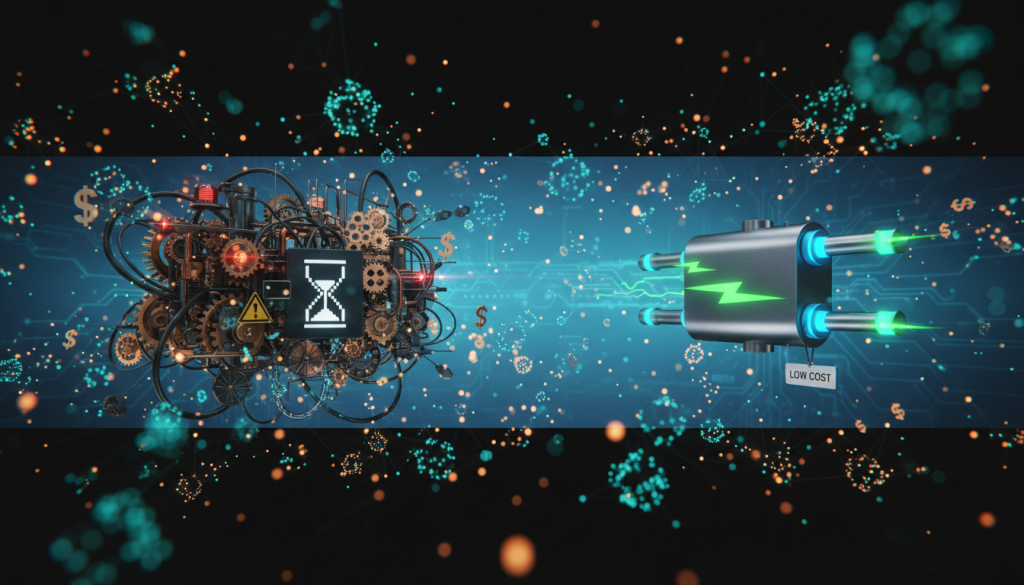

Your basic Retrieval-Augmented Generation (RAG) system is up and running. It’s pulling context from your knowledge base and reducing hallucinations, but a new set of problems is emerging. As of November 2025, many teams are discovering that their once-simple RAG pipelines have become slow and expensive. The culprit? A creeping complexity often born from adding every new, advanced component without a clear understanding of the trade-offs. This is the hallmark of an over-engineered RAG.

This article dives deep into the architecture of retrieval systems, breaking down the cost and latency implications of advanced components like query transformers and re-rankers. We’ll explore when these powerful tools are a sound investment and when they are just over-engineering, helping you build a RAG system that is both powerful and cost-efficient.

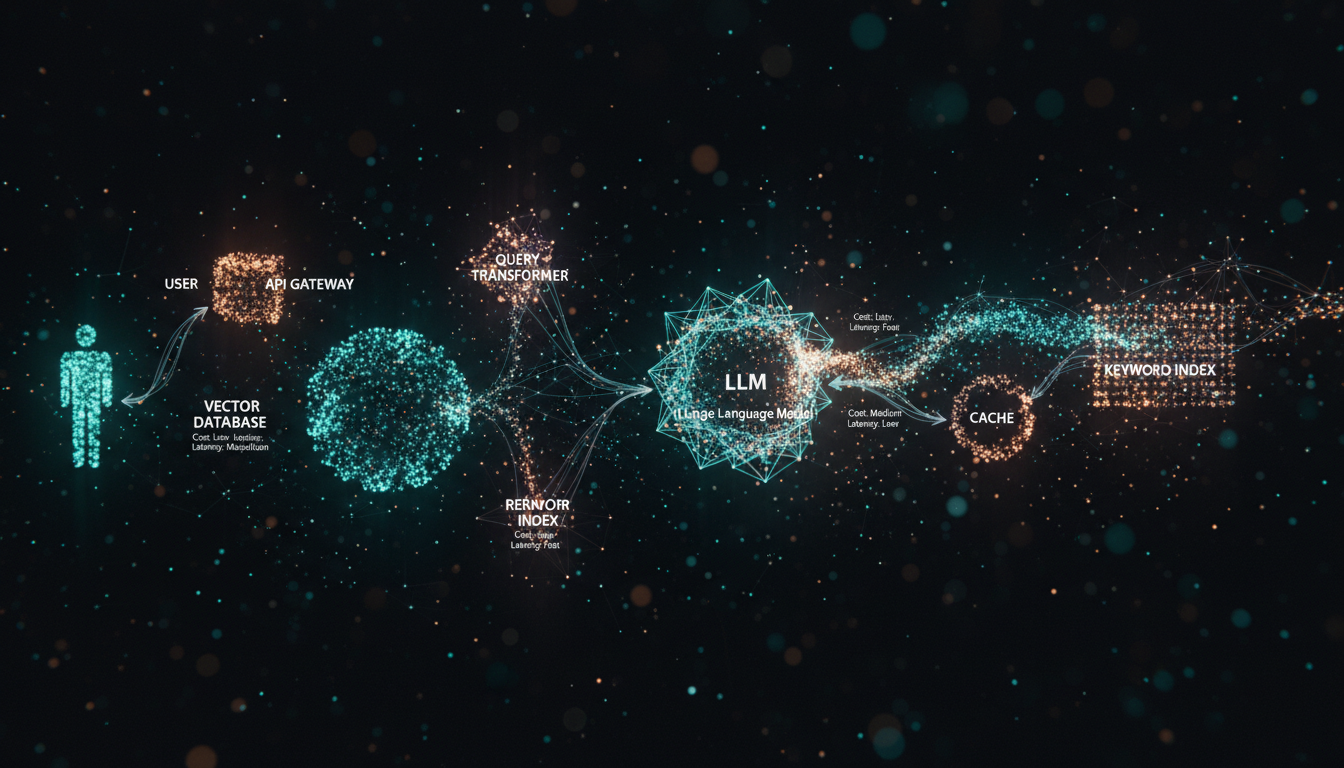

Anatomy of RAG: Where cost and latency hide

Every component you add to your RAG pipeline contributes to the total cost and latency. In a basic setup, the process is straightforward: a user query is converted into an embedding, used to find similar documents in a vector database, and the results are fed to a Large Language Model (LLM) to generate an answer. The costs are predictable: embedding model API calls, vector database hosting, and the final LLM generation call. Latency is the sum of these sequential steps.

However, an advanced, or potentially “over-engineered,” RAG system introduces multiple new stages, each with its own performance tax. Query transformers, multiple retrieval strategies, and post-retrieval re-rankers can dramatically improve accuracy but at a steep price. Understanding this trade-off is the key to building a sustainable system.

| Component | Simple RAG Impact | Advanced RAG Impact |
|---|---|---|
| Query Processing | Low (single embedding call) | High (adds an extra LLM call for transformation) |
| Retrieval | Medium (one vector DB query) | High (multiple queries to vector DB, keyword indexes) |
| Ranking | None (relies on vector score) | Very High (adds a separate model inference step) |
| Generation | High (single LLM call with context) | High (same as simple RAG) |
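
To make the comparison concrete, here is a rough latency-budget sketch. Every number in it is an illustrative placeholder rather than a measurement; the query-transformation and re-ranking values were simply picked from inside the ranges quoted later in this article, and the `Stage` class and stage names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # illustrative placeholder, not a benchmark result


def total_latency_ms(stages: list[Stage]) -> float:
    """Stages run sequentially, so total latency is the sum of the steps."""
    return sum(stage.latency_ms for stage in stages)


# Assumed numbers for a basic pipeline.
SIMPLE = [
    Stage("embed query", 50),
    Stage("vector search", 50),
    Stage("generate answer", 1500),
]

# Same pipeline with a query transformer, hybrid retrieval, and a re-ranker.
ADVANCED = [
    Stage("query transformation (extra LLM call)", 500),
    Stage("embed query", 50),
    Stage("hybrid retrieval", 100),
    Stage("re-rank candidates", 400),
    Stage("generate answer", 1500),
]

overhead_ms = total_latency_ms(ADVANCED) - total_latency_ms(SIMPLE)
print(f"simple:   {total_latency_ms(SIMPLE):.0f} ms")
print(f"advanced: {total_latency_ms(ADVANCED):.0f} ms (+{overhead_ms:.0f} ms)")
```

Swapping in your own measured timings turns this into a quick check of how much each added stage actually costs per request.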

The retrieval foundation: Is hybrid search necessary?

The core of any RAG system is its ability to retrieve relevant documents. For years, vector search has been the default, using dense embeddings to find semantically similar content. This works well for conceptual queries but often fails when specific keywords or identifiers (like product codes or legal terms) are critical.

This is where hybrid search comes in. As of late 2024, most production-grade systems combine dense retrieval (vector search) with a sparse retrieval method like BM25, which excels at keyword matching. According to 2024 benchmarks, hybrid approaches can improve retrieval accuracy by over 25% in domains where term matching is important.

- Vector Search (Dense Retrieval): Uses models like OpenAI’s `text-embedding-3-small` to find conceptually related text. Fast and great for general topics.

- Keyword Search (Sparse Retrieval): Uses algorithms like BM25 to match exact words. Essential for codes, names, and specific jargon.

- Hybrid Search: Runs both queries and merges the results using a method like Reciprocal Rank Fusion (RRF) to get the best of both worlds.
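
As a minimal sketch of the merging step, the function below implements Reciprocal Rank Fusion over any number of ranked lists of document IDs. The constant `k=60` is a commonly used default and an assumption here, as are the document IDs; in a real system the two input lists would come from your dense and sparse retrievers.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists: each document scores sum(1 / (k + rank)) over the lists."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a vector index and a BM25 index.
dense_results = ["doc_a", "doc_b", "doc_c"]
sparse_results = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([dense_results, sparse_results])
print(fused)
```

Because RRF only looks at ranks, it sidesteps the problem that vector similarity scores and BM25 scores live on different scales.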

The Verdict: Implementing hybrid search is often worth the complexity. The cost increase is manageable—it involves maintaining a second index (e.g., in Elasticsearch or natively in vector databases like Weaviate or Qdrant)—but the gains in retrieval relevance are substantial. For most applications beyond simple chatbots, hybrid search should be considered a baseline, not an over-engineering step.

Pre-retrieval optimization: The query transformer tax

A common failure point in RAG is a poorly phrased user query. If the user’s question is vague or uses different terminology than the source documents, the retrieval step will fail to find relevant context. Query transformers aim to solve this by rewriting the user’s input into a form better suited to the retrieval system.

Popular techniques include:

- HyDE (Hypothetical Document Embeddings): An LLM generates a hypothetical answer to the user’s query. The embedding of this *hypothetical answer* is then used for retrieval, which can be more effective than embedding the vague query itself.

- Multi-Query Retriever: An LLM generates several variations of the user’s query from different perspectives. Each variant is run against the vector database, broadening the search net.

The catch is obvious: this adds an entire LLM call *before* you even begin searching for documents. This can easily add 300-800ms of latency and the associated API cost. The key to making this viable is to use a very small, fast, and cheap model for this task. Using your main, powerful generation model (like GPT-4-Turbo) is almost certainly over-engineering. However, using a smaller, optimized model can be a cost-effective way to significantly improve retrieval on ambiguous queries.
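
Both techniques can be sketched without committing to a specific provider. In the example below, `generate_variants`, `generate_hypothetical_answer`, and `retrieve` are hypothetical callables: the first two stand in for a call to a small, cheap LLM, and `retrieve` stands in for whatever embeds a text and queries your index. The stub data exists only to make the sketch runnable.

```python
from typing import Callable


def multi_query_retrieve(
    query: str,
    generate_variants: Callable[[str], list[str]],
    retrieve: Callable[[str], list[str]],
    top_k: int = 5,
) -> list[str]:
    """Run the original query plus its variants; merge results in first-seen order."""
    seen: set[str] = set()
    merged: list[str] = []
    for candidate in [query] + generate_variants(query):
        for doc_id in retrieve(candidate):
            if doc_id not in seen:
                seen.add(doc_id)
                merged.append(doc_id)
    return merged[:top_k]


def hyde_retrieve(
    query: str,
    generate_hypothetical_answer: Callable[[str], str],
    retrieve: Callable[[str], list[str]],
) -> list[str]:
    """HyDE: search with a generated hypothetical answer instead of the raw query."""
    return retrieve(generate_hypothetical_answer(query))


# Stubs standing in for a small LLM and a retriever (assumptions for the demo).
FAKE_INDEX = {
    "api plan": ["d1", "d2"],
    "api plan pricing": ["d2", "d3"],
    "api plan limits": ["d4"],
}


def fake_variants(query: str) -> list[str]:
    return [f"{query} pricing", f"{query} limits"]


def fake_retrieve(text: str) -> list[str]:
    return FAKE_INDEX.get(text, [])


multi_query_hits = multi_query_retrieve("api plan", fake_variants, fake_retrieve)
hyde_hits = hyde_retrieve("api plan", lambda q: f"{q} pricing", fake_retrieve)
print(multi_query_hits, hyde_hits)
```

Passing the LLM in as a callable keeps the choice of model swappable, which makes it easy to test whether a smaller model is good enough for this step.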

Post-retrieval optimization: The re-ranker dilemma

Your retriever (simple or hybrid) returns a list of candidate documents, typically 10 to 50 of them. The problem is that the most relevant document might be ranked #5, not #1. A re-ranker is a specialized model that takes this initial list of documents and re-orders them based on a more sophisticated understanding of relevance, pushing the best matches to the top.

Models from providers like Cohere or Voyage AI, as well as open-source alternatives, are highly effective at this. However, re-ranking is often the single most significant source of added latency in an advanced RAG pipeline. You must process each of the top N documents through the re-ranker model, which can add anywhere from 200ms to over a second to your response time, depending on the number of documents and the model size.

The Verdict: A re-ranker is a classic case of “it depends.” If your base retrieval is already highly accurate (for example, the best document lands in the top 3 for the large majority of queries, meaning a high hit rate at k=3), a re-ranker might be expensive overkill. But if you are struggling with relevance and would otherwise compensate by passing more documents to the LLM, a re-ranker can be cheaper than filling a larger context window on every call. The best approach is to benchmark: measure your retrieval accuracy without a re-ranker, and add one only if you can show it measurably improves the final response quality.
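
One way to run that benchmark is to compute hit rate at k on a small labeled set, once on the raw retriever output and once on the re-ranked output. The sketch below assumes one known relevant document per query; the function name and the sample data are made up for illustration and are not real results.

```python
def hit_rate_at_k(results: list[list[str]], relevant: list[str], k: int = 3) -> float:
    """Fraction of queries whose relevant document appears in the top k results."""
    if len(results) != len(relevant):
        raise ValueError("results and relevant must have one entry per query")
    if not results:
        return 0.0
    hits = sum(1 for ranked, gold in zip(results, relevant) if gold in ranked[:k])
    return hits / len(results)


# Hypothetical evaluation set: the known-relevant document for each of four queries.
gold_docs = ["a", "w", "s", "n"]

# Ranked output of the retriever alone, and the same candidates after re-ranking.
baseline_results = [["a", "b", "c", "d"], ["x", "y", "z", "w"],
                    ["p", "q", "r", "s"], ["m", "n", "o", "t"]]
reranked_results = [["a", "b", "c", "d"], ["w", "x", "y", "z"],
                    ["p", "s", "q", "r"], ["n", "m", "o", "t"]]

baseline_hit_rate = hit_rate_at_k(baseline_results, gold_docs, k=3)
reranked_hit_rate = hit_rate_at_k(reranked_results, gold_docs, k=3)
print(f"baseline: {baseline_hit_rate:.2f}, with re-ranker: {reranked_hit_rate:.2f}")
```

If the two numbers are close on your own evaluation set, the extra latency of the re-ranker is hard to justify.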

Strategies for a lean and effective RAG system

Avoiding an over-engineered system doesn’t mean sticking to a simplistic design. It means applying complexity judiciously and optimizing at every layer.

- Start with a Strong Baseline: Use a high-quality, modern embedding model (e.g., OpenAI `text-embedding-3-small` or an open-source alternative) and implement hybrid search. This solid foundation often solves many of the problems that teams try to fix with more complex components.

- Cache Everything: Implement semantic caching. If a new user query is highly similar to a previous one, you can serve the cached response directly, bypassing the entire RAG pipeline. This is the single most effective way to slash both cost and latency for common queries.

- Use Tiered Models: Don’t use your most powerful LLM for every task. Use small, fast models for query transformation and a larger, more capable model for the final generation. This balances cost and performance effectively.

- Optimize Your Infrastructure: For self-hosted systems, techniques like continuous batching for embedding and re-ranking can dramatically increase GPU utilization and throughput, reducing the per-query cost and latency.
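
The semantic caching idea from the list above can be sketched in a few lines. This is a deliberately naive in-memory version that scans every cached entry; the `SemanticCache` class, the 0.95 similarity threshold, and the toy two-dimensional embeddings are all assumptions, and a production cache would more likely store embeddings in a vector index with an eviction policy.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Serve a stored response when a new query embedding is close enough to an old one."""

    def __init__(self, threshold: float = 0.95) -> None:
        self.threshold = threshold
        self._entries: list[tuple[list[float], str]] = []

    def put(self, query_embedding: list[float], response: str) -> None:
        self._entries.append((list(query_embedding), response))

    def get(self, query_embedding: list[float]) -> Optional[str]:
        best_score, best_response = -1.0, None
        # Linear scan over cached entries; fine for a sketch, slow at scale.
        for cached_embedding, response in self._entries:
            score = cosine_similarity(query_embedding, cached_embedding)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.95)
cache.put([1.0, 0.0], "cached answer")

near_hit = cache.get([0.99, 0.05])  # nearly the same direction as the cached query
miss = cache.get([0.0, 1.0])        # orthogonal, so it falls through to the pipeline
print(near_hit, miss)
```

The threshold is the main tuning knob: too low and users get answers to a different question, too high and the cache rarely hits.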

Conclusion: From over-engineered to optimized

The journey from a basic RAG to a production-ready system is paved with tempting, complex components. An over-engineered RAG isn’t one that uses advanced techniques; it’s one that uses them without measuring their impact on performance and cost. The guiding principle should be incremental, data-driven optimization. Before adding a query transformer or a re-ranker, establish a baseline and ask a simple question: “Does this component provide enough value to justify its latency and cost?”

As of late 2025, the most effective RAG systems are not the most complex ones, but the smartest. They rely on a strong retrieval foundation, aggressive caching, and a tiered approach to model selection. By focusing on targeted, measurable improvements, you can build a sophisticated RAG architecture that is both highly performant and economically viable. The next step is to go back to your pipeline, identify your biggest bottleneck, and test one change at a time.