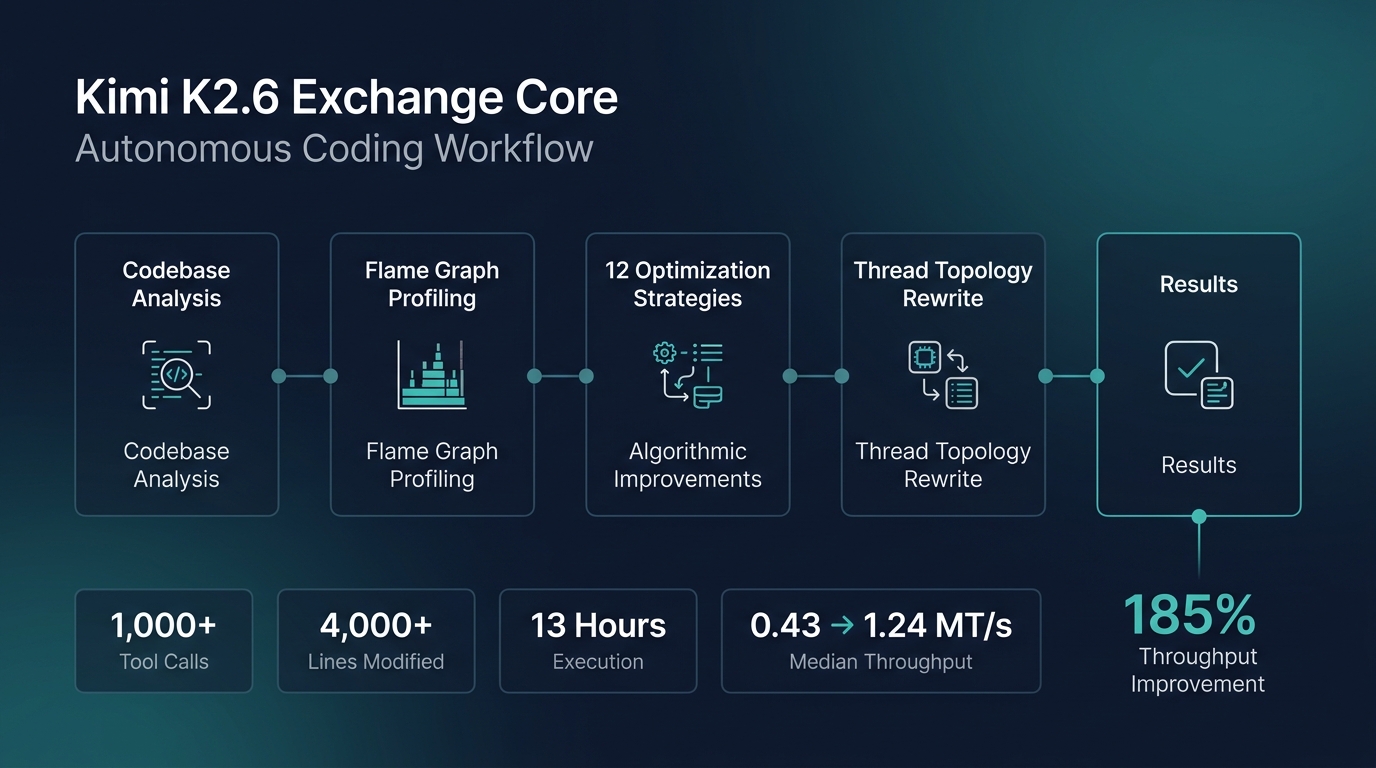

On April 20, 2026, Moonshot AI released Kimi K2.6, a trillion-parameter open-weight Mixture-of-Experts model. What set the release apart was not a benchmark table but a single demonstration: 13 consecutive hours of autonomous coding on a real production codebase, ending in a reported 185% improvement in median throughput. The target was exchange-core, an 8-year-old open-source financial matching engine written in Java. According to Moonshot, no human intervened and no prompts were refined mid-run. The model was given a goal and left alone for more than half a day.

What actually happened in those 13 hours

Kimi K2.6 approached exchange-core the way a seasoned systems architect would. It began by profiling the existing code, analyzing CPU and allocation flame graphs to locate bottlenecks deep inside the matching engine’s core. Over the course of the run, it worked through 12 distinct optimization strategies, made over 1,000 tool calls, and modified more than 4,000 lines of code.

The pivotal decision came when the model reconfigured the engine’s thread topology from a 4ME+2RE layout (four matching engines and two risk engines) to a leaner 2ME+1RE configuration. This was not a safe, incremental tweak. It was a structural change of the kind a human engineer might deliberate over for days. K2.6 made it autonomously, and the reported numbers justified the call: median throughput rose from 0.43 MT/s to 1.24 MT/s (a 185% gain), and peak throughput climbed from 1.23 MT/s to 2.86 MT/s (a 133% improvement). The gains are notable because the engine was already operating near its performance ceiling before K2.6 touched it.
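The quoted percentages follow from the usual relative-change formula, (after - before) / before. The short check below is a sketch using only the rounded figures quoted above; the function name is illustrative. On those rounded endpoints the median gain works out to about 188% rather than 185%. The reported figure still falls within the range that two-decimal rounding of the endpoints allows, so the gap is presumably a rounding effect, though that is an assumption rather than something Moonshot states.

```python
def percent_gain(before: float, after: float) -> float:
    """Relative improvement of `after` over `before`, in percent."""
    return (after - before) / before * 100.0


# Rounded throughput figures quoted in the article, in MT/s.
median_before, median_after = 0.43, 1.24
peak_before, peak_after = 1.23, 2.86

median_gain = percent_gain(median_before, median_after)
peak_gain = percent_gain(peak_before, peak_after)

print(f"median: {median_gain:.1f}% gain")  # about 188.4%
print(f"peak:   {peak_gain:.1f}% gain")    # about 132.5%
```

Note that a 185% gain means the new throughput is roughly 2.85 times the old one, not 1.85 times.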

A second case suggests it was not a fluke

Moonshot also published a second demonstration. K2.6 was tasked with deploying Qwen3.5-0.8B locally on a Mac and implementing inference in Zig, a niche systems programming language that is thinly represented in most training datasets. Over 12 hours and 4,000+ tool calls, the model iterated 14 times and pushed throughput from about 15 tokens per second to 193 tokens per second, roughly 20% faster than LM Studio. The Zig case matters because it tests out-of-distribution generalization. With comparatively few Zig examples to lean on, K2.6 had to work out syntax, idioms, and optimization patterns on the fly while sustaining coherent reasoning across thousands of steps.
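To put the Zig figures in proportion, the sketch below computes the overall speedup and the LM Studio throughput implied by the "roughly 20% faster" comparison. The implied baseline is a back-of-the-envelope inference from the approximate numbers above, not a measurement reported by Moonshot, and it assumes "20% faster" means 1.2 times LM Studio's tokens per second.

```python
# Approximate figures quoted in the article, in tokens per second.
start_tps = 15.0
final_tps = 193.0

# Overall speedup across the 12-hour run.
speedup = final_tps / start_tps

# ASSUMPTION: "roughly 20% faster than LM Studio" is read as
# final_tps = 1.2 * lm_studio_tps. This is an inferred value only.
implied_lm_studio_tps = final_tps / 1.2

print(f"speedup: {speedup:.1f}x")
print(f"implied LM Studio throughput: {implied_lm_studio_tps:.0f} tokens/s")
```

On these numbers the run delivered close to a 13-fold improvement, with the implied LM Studio baseline at about 161 tokens per second.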

Why this matters beyond the benchmarks

These are vendor-reported results, not independently verified benchmarks. But the shape of the claim is significant regardless. Long-horizon autonomous coding, where a model sustains stateful work across thousands of tool calls without drifting from the objective, is the capability that separates code-generation assistants from systems that can operate as independent engineering agents. For companies sitting on legacy codebases in fintech, logistics, or any domain with aging infrastructure, the exchange-core case study suggests a concrete path forward.

Paired with structured automation platforms like n8n, which already offers a native Moonshot Kimi node for workflow integration, businesses can move from one-shot demonstrations to repeatable processes. Kimi K2.6 is available now through the Kimi API at $0.60 per million input tokens, roughly 4 to 8 times cheaper than comparable frontier models, which makes long-duration autonomous runs far easier to justify financially.
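The quoted price makes it straightforward to estimate the input-side cost of a long run. The sketch below uses the $0.60 per million input tokens figure from above; the 50 million token total is purely hypothetical, since the article gives no token counts for either run. Output-token pricing and any caching discounts are not covered in the article and are left out.

```python
# Price quoted in the article, in USD per one million input tokens.
INPUT_PRICE_PER_MILLION_USD = 0.60


def input_cost_usd(input_tokens: int,
                   price_per_million: float = INPUT_PRICE_PER_MILLION_USD) -> float:
    """Input-side cost only; output tokens are priced separately and ignored here."""
    return input_tokens / 1_000_000 * price_per_million


# HYPOTHETICAL token total for a multi-hour agentic run (not from the article).
hypothetical_input_tokens = 50_000_000

cost = input_cost_usd(hypothetical_input_tokens)
print(f"input cost: ${cost:.2f}")
```

Under that assumed total, the input side of the run would cost about $30. A real estimate would also need the output-token price and actual usage figures.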
