Security vulnerabilities in critical open-source software are now being discovered at a pace that renders manual code review obsolete. With Claude Mythos Preview—Anthropic’s most capable cybersecurity model to date—defenders can autonomously identify zero-day flaws that conventional tooling misses entirely. Yet for small and mid-sized businesses (SMBs), access to frontier AI capabilities has traditionally required dedicated security engineering teams that few can afford.

This is where n8n changes the equation. By building a scheduled n8n workflow that calls Claude Mythos Preview via API and automatically raises tickets for discovered vulnerabilities, SMBs gain enterprise-grade security scanning without the enterprise headcount. This guide walks through building that pipeline step by step, based on the details in the April 2026 Project Glasswing announcement.

Understanding Claude Mythos Preview and Project Glasswing

Anthropic announced Project Glasswing on April 7, 2026, as a defensive cybersecurity initiative providing select organizations early access to Claude Mythos Preview. This frontier model has demonstrated a 16.5 percentage point improvement on the CyberGym vulnerability research benchmark over Claude Opus 4.6, autonomously discovering vulnerabilities that survived decades of human review—including a 27-year-old OpenBSD bug and a 16-year-old FFmpeg vulnerability that survived five million automated test runs.

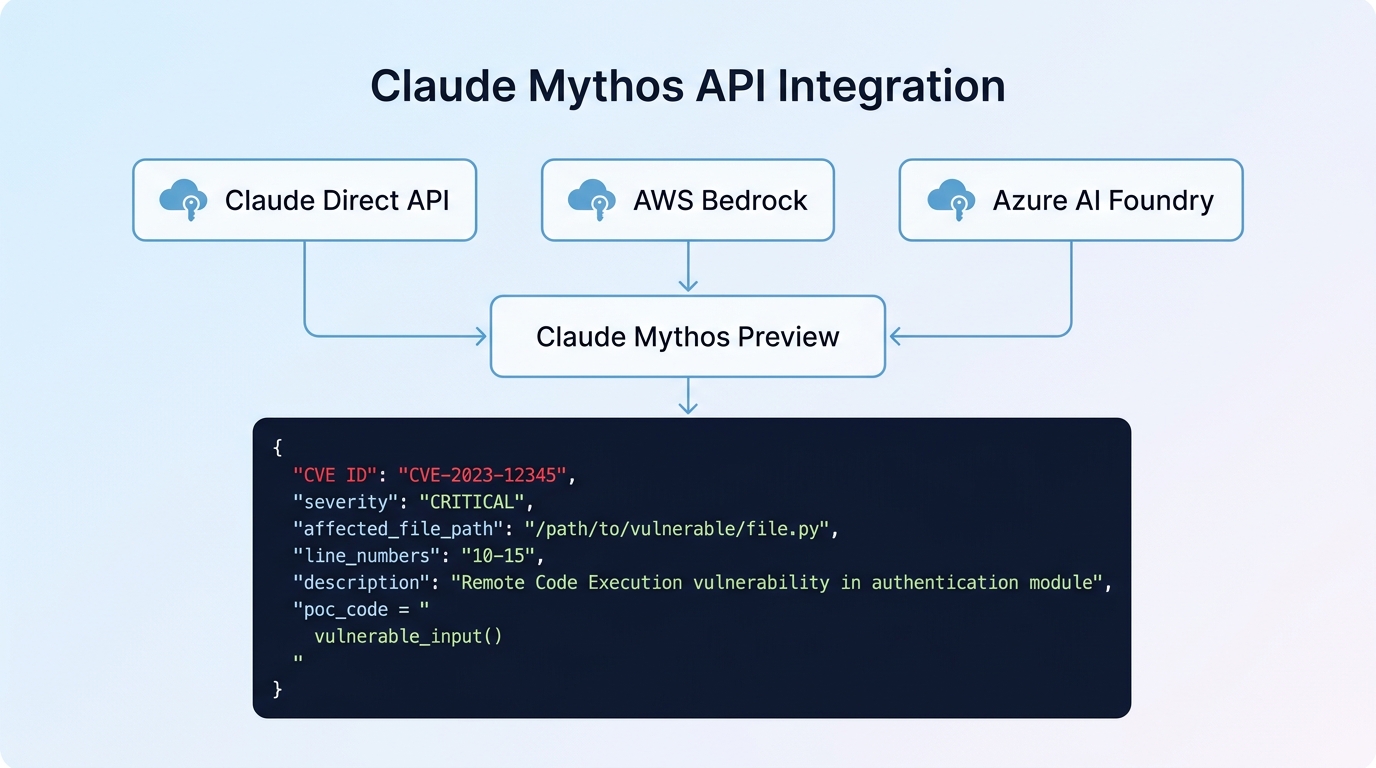

Crucially for n8n automation, Mythos Preview is accessible via API through the Claude API directly, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. As of April 2026, pricing for Project Glasswing participants is $25 per million input tokens and $125 per million output tokens, approximately five times Opus 4.6 pricing, reflecting the specialized capability depth.
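To make the pricing concrete, here is a minimal sketch of the per-call arithmetic using the two rates quoted above. The file count and token counts in the example are hypothetical and only illustrate the calculation.

```javascript
// Rates quoted above for Glasswing participants (USD per million tokens).
const INPUT_USD_PER_MTOK = 25;
const OUTPUT_USD_PER_MTOK = 125;

// Estimate the cost of one API call from its input and output token counts.
function estimateCostUsd(inputTokens, outputTokens) {
  return (
    (inputTokens * INPUT_USD_PER_MTOK + outputTokens * OUTPUT_USD_PER_MTOK) /
    1_000_000
  );
}

// Hypothetical nightly scan: 200 files, roughly 6,000 input and
// 1,500 output tokens per file.
const perFile = estimateCostUsd(6000, 1500);
const perScan = perFile * 200;
console.log(`per file: $${perFile.toFixed(4)}, per scan: $${perScan.toFixed(2)}`);
```

In a live workflow the token counts would come from the `usage.input_tokens` and `usage.output_tokens` fields of each Messages API response rather than from estimates.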

Prerequisites and API setup

Before constructing the workflow, ensure you have the following components configured:

- n8n instance (self-hosted or cloud) version 1.85.0 or later

- Claude Mythos Preview API credentials via one of: the Claude API, Amazon Bedrock, Google Cloud Vertex AI, or Microsoft Foundry

- Code repository access to the target codebase (GitHub, GitLab, or local Git repository)

- Ticketing system credentials for Jira, GitHub Issues, Linear, or similar

For API authentication, the Claude API takes your key in an x-api-key header rather than as a Bearer token, alongside an anthropic-version header. The version value cited with the Glasswing announcement is 2026-04-01; confirm it against Anthropic's API versioning documentation before relying on it. In Amazon Bedrock, you will use IAM credentials with appropriate Bedrock permissions.

Building the vulnerability scanning workflow

The complete workflow architecture follows this data flow: Scheduled trigger → Code extraction → Mythos API analysis → Finding parsing → Severity filtering → Ticket creation. Below is the node-by-node implementation.

Step 1: Schedule trigger and code extraction

Begin with a Schedule trigger node configured to run daily or weekly. Connect this to a GitHub/GitLab node (or HTTP Request node for generic Git access) to fetch source code files. The key is extracting files that Mythos can analyze—typically Python, JavaScript, C/C++, Rust, or Go source files within a readable size limit.
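The selection rule described above (supported languages, readable size) can be sketched as a small filter. This is an illustration under assumptions: the extension list is one reading of the languages named in the text, and the 100 KB cap is a placeholder, since no concrete limit is given here.

```javascript
// Extensions for the languages named above; adjust to your codebase.
const SCANNABLE_EXTENSIONS = new Set([
  ".py", ".js", ".c", ".h", ".cpp", ".hpp", ".rs", ".go",
]);

// Hypothetical size cap (assumption, not a documented Mythos limit).
const MAX_BYTES = 100 * 1024;

// Return the lowercase extension of a path, or "" for dotfiles and bare names.
function extensionOf(path) {
  const name = path.slice(path.lastIndexOf("/") + 1);
  const dot = name.lastIndexOf(".");
  return dot > 0 ? name.slice(dot).toLowerCase() : "";
}

// Keep only files with a supported extension whose content fits the cap.
function selectScannableFiles(files, maxBytes = MAX_BYTES) {
  return files.filter(
    (file) =>
      SCANNABLE_EXTENSIONS.has(extensionOf(file.path)) &&
      Buffer.byteLength(file.content, "utf8") <= maxBytes
  );
}
```

Inside an n8n Code node, the same function could be applied to the `files` array produced by the extraction step before the items are passed on to the API call.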

// Example code extraction output structure
{
  "repository": "my-org/critical-app",
  "files": [
    {"path": "src/auth.js", "content": "...", "checksum": "abc123"},
    {"path": "src/database.py", "content": "...", "checksum": "def456"}
  ]
}

Step 2: HTTP Request to Claude Mythos API

The HTTP Request node configures the API call to Claude Mythos Preview. Use the following JSON body structure for vulnerability analysis:

{
  "model": "claude-mythos-preview-20260407",
  "max_tokens": 8192,
  "system": "You are an expert security researcher. Analyze the provided source code for security vulnerabilities including buffer overflows, injection flaws, authentication bypasses, and privilege escalation vectors. Respond with only a JSON array. Each element must be an object with the keys severity (CRITICAL, HIGH, MEDIUM, or LOW, following CVSS-style ratings), file_path, line_numbers, vulnerability_type, description, and proof_of_concept. Return an empty array if no vulnerabilities are found.",
  "messages": [
    {
      "role": "user",
      "content": {{ JSON.stringify("Analyze the following source code for security vulnerabilities:\n\nFILE: " + $json.path + "\n\n" + $json.content) }}
    }
  ]
}

The content value is wrapped in JSON.stringify so that quotes and newlines in the source file cannot break the request body, and the system prompt asks for JSON so that the later parsing steps have a predictable structure to read. Configure authentication as Header Auth with the header name x-api-key and your Mythos API key as the value. For Bedrock deployments, use AWS credentials with Signature Version 4 authentication instead.
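For readers who prefer to assemble the call in a Code node, the sketch below builds the same request as a plain object. The helper name `buildMythosRequest` is hypothetical, the header names follow Anthropic's published Messages API reference, and the API version is deliberately left as a parameter rather than assumed.

```javascript
// Public Messages API endpoint for the Claude API.
const API_URL = "https://api.anthropic.com/v1/messages";

// Build the request for one file. Nothing is sent here; the returned
// object can be handed to an HTTP client or mapped onto an n8n node.
function buildMythosRequest(file, { apiKey, apiVersion, systemPrompt }) {
  return {
    method: "POST",
    url: API_URL,
    headers: {
      "x-api-key": apiKey,
      "anthropic-version": apiVersion, // take the value from the versioning docs
      "content-type": "application/json",
    },
    body: {
      model: "claude-mythos-preview-20260407", // model ID as given in this guide
      max_tokens: 8192,
      system: systemPrompt,
      messages: [
        {
          role: "user",
          content:
            "Analyze the following source code for security vulnerabilities:" +
            `\n\nFILE: ${file.path}\n\n${file.content}`,
        },
      ],
    },
  };
}
```

Because the body is built as an object and serialized once by the HTTP client, file contents containing quotes or newlines need no manual escaping.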

Step 3: Parse and filter findings

Add an IF node immediately after the HTTP Request to filter by severity. Mythos Preview outputs severity classifications with 89% agreement to human expert review. Route only CRITICAL and HIGH findings to automatic ticket creation, while MEDIUM and LOW severities log to a monitoring dashboard for manual review.
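The routing rule above can also be expressed as a plain function, which is easier to test than a string match. This is a sketch that assumes the findings have already been parsed into objects with a `severity` field; the function name and the two bucket names are hypothetical.

```javascript
// Severities that go straight to automatic ticket creation.
const AUTO_TICKET_SEVERITIES = new Set(["CRITICAL", "HIGH"]);

// Split findings into two buckets. Anything that is not CRITICAL or HIGH,
// including missing or unrecognized severities, goes to the dashboard
// bucket so a human looks at it.
function routeFindings(findings) {
  const routed = { ticket: [], dashboard: [] };
  for (const finding of findings) {
    const severity = String(finding.severity || "").trim().toUpperCase();
    const target = AUTO_TICKET_SEVERITIES.has(severity) ? "ticket" : "dashboard";
    routed[target].push({ ...finding, severity });
  }
  return routed;
}
```

Sending unrecognized severities to manual review is a conservative design choice: a malformed model response should never silently disappear or silently open a ticket.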

// IF node condition for severity filtering (tolerates whitespace differences in the JSON)
{{ /"severity"\s*:\s*"(CRITICAL|HIGH)"/.test($json.content[0].text) }}

Step 4: Automated ticket creation

The final step routes findings to your ticketing system. Use a Code node to extract structured vulnerability data from the Claude response, then pass to a Jira or GitHub Issues node:

// Code node: parse Mythos findings into structured tickets
// Assumes the response text is a JSON array of findings (a single object is also accepted)
const text = $input.first().json.content[0].text;
let parsed;
try {
  parsed = JSON.parse(text);
} catch (err) {
  throw new Error(`Mythos response was not valid JSON: ${err.message}`);
}
const items = Array.isArray(parsed) ? parsed : [parsed];

// n8n expects each returned item to wrap its data in a "json" key
return items.map((f) => ({
  json: {
    title: `[Mythos] ${f.vulnerability_type} in ${f.file_path}`,
    description: `**Severity:** ${f.severity}\n**File:** ${f.file_path}:${f.line_numbers}\n\n**Description:**\n${f.description}\n\n**Proof of Concept:**\n\`\`\`\n${f.proof_of_concept}\n\`\`\`\n\n*Automatically detected by Claude Mythos Preview via n8n automation*`,
    labels: ["security", "mythos", `severity-${String(f.severity).toLowerCase()}`],
  },
}));

Workflow optimization patterns

Production deployments require several optimizations to handle Mythos’s token costs and runtime characteristics:

- File pre-filtering: Compare each file's checksum (or the commit diff) against the previous scan to select only files modified since then, and use a regex on the file extension to skip non-source files, reducing API token consumption significantly.

- Rate limiting: Implement Split in Batches nodes with delays between API calls, because Mythos sustained throughput is lower than standard Claude models due to its depth of analysis.

- Error handling: Enable Retry On Fail on the HTTP Request node and add exponential backoff around it (the built-in retry waits a fixed interval between tries); Mythos Preview may occasionally time out on deeply complex codebases.

- Cost tracking: Log approximate token usage per file via an Airtable or database node to monitor spend against your Glasswing usage credits.
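As an illustration of the pre-filtering idea in the list above, the sketch below uses the `checksum` field from the extraction output to skip unchanged files. The function names are hypothetical, and where the previous checksums are stored is left open.

```javascript
// Return only files whose checksum differs from the one recorded at the
// previous scan. New files have no recorded checksum and are included.
function changedFiles(files, previousChecksums) {
  return files.filter((file) => previousChecksums[file.path] !== file.checksum);
}

// Build the path -> checksum map to persist for the next run.
function snapshotChecksums(files) {
  return Object.fromEntries(files.map((file) => [file.path, file.checksum]));
}

// Example with the file structure shown in Step 1.
const lastScan = { "src/auth.js": "abc123", "src/database.py": "old000" };
const current = [
  { path: "src/auth.js", content: "...", checksum: "abc123" },
  { path: "src/database.py", content: "...", checksum: "def456" },
];
console.log(changedFiles(current, lastScan).map((file) => file.path));
```

In n8n, one option for persisting the map between scheduled runs is workflow static data (`$getWorkflowStaticData('global')` in a Code node); an external database works equally well.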

| Component | Configuration | Purpose |
|---|---|---|
| Schedule Trigger | Daily at 02:00 UTC | Off-hours scanning |
| GitHub Node | Main branch only | Latest stable code |
| HTTP Request | Timeout: 300s | Mythos analysis time |
| IF Node | Severity ≥ HIGH | Auto-tickets for CRITICAL and HIGH only |
| Jira Node | Security project type | Proper triage routing |
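The error-handling advice in the optimization list calls for exponential backoff. A minimal sketch of that pattern follows; the base delay, cap, and retry count are illustrative defaults, not values taken from Anthropic or n8n documentation.

```javascript
// Delay before retry number `attempt` (0-based): base * 2^attempt, capped.
function backoffDelayMs(attempt, baseMs = 1000, maxMs = 60000) {
  return Math.min(maxMs, baseMs * 2 ** attempt);
}

// Run an async task, retrying on failure with exponential backoff.
// `sleep` can be injected so the wrapper is testable without real waiting.
async function withRetry(task, { retries = 4, baseMs = 1000, maxMs = 60000, sleep } = {}) {
  const wait = sleep || ((ms) => new Promise((resolve) => setTimeout(resolve, ms)));
  for (let attempt = 0; ; attempt++) {
    try {
      return await task(attempt);
    } catch (err) {
      // Give up after the configured number of retries.
      if (attempt >= retries) throw err;
      await wait(backoffDelayMs(attempt, baseMs, maxMs));
    }
  }
}
```

With the defaults, a call that keeps failing is attempted five times in total, waiting 1, 2, 4, and 8 seconds between attempts. Each attempt can still run up to the 300-second timeout from the table, so the worst case for one file is long; that is one reason to keep the scan in an off-hours schedule.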

Managing false positives and severity calibration

Mythos Preview demonstrates 89% exact severity agreement with human security professionals on the 198-sample validation dataset, and 98% within one severity level. However, automation still requires human validation for exploitation feasibility. Configure your workflow to include estimated severity confidence metrics in ticket descriptions, and route chains of vulnerabilities (which Mythos identifies through autonomous multi-step analysis) for manual verification before production patching.

Consider implementing a manual review queue using an n8n Wait node or approval data store for findings involving privilege escalation chains or remote code execution vectors—categories Mythos excels at identifying but which carry high deployment risk. For a deeper dive into how structured AI pipelines suppress false positives while maximizing true vulnerability discovery, see our analysis of GitHub Security Lab’s three-stage audit architecture.
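One way to decide which findings enter the manual review queue is a simple category check, sketched below. The patterns and the function name are assumptions for illustration, and the field names match the finding structure used in Step 4.

```javascript
// Categories that should wait for human approval before any patching.
// No "g" flag, so RegExp.test() stays stateless across calls.
const MANUAL_REVIEW_PATTERNS = [
  /privilege[\s_-]*escalation/i,
  /remote[\s_-]*code[\s_-]*execution/i,
  /\bRCE\b/i,
];

// True when the finding's type or description mentions a high-risk category.
function needsManualReview(finding) {
  const haystack = `${finding.vulnerability_type || ""} ${finding.description || ""}`;
  return MANUAL_REVIEW_PATTERNS.some((pattern) => pattern.test(haystack));
}
```

Keyword matching is deliberately crude. It errs toward sending more findings to a human, which suits categories where a wrong automated patch is costly.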

Conclusion

The April 2026 Project Glasswing announcement democratizes access to frontier AI security capabilities through standardized APIs. For resource-constrained organizations, an n8n workflow automating Claude Mythos Preview vulnerability scanning eliminates the traditional tradeoff between security coverage and engineering headcount.

The workflow described above—scheduled code extraction, Mythos API analysis via Bedrock or direct Claude API, severity-filtered parsing, and automatic ticket creation—provides a foundation that scales from a single repository to enterprise-wide dependencies. With Anthropic’s $100M in Glasswing usage credits available to qualifying organizations, the cost barrier has shifted from “whether you can afford security engineers” to “whether you can afford to ignore zero-day discovery automation.” The window between vulnerability discovery and exploitation has collapsed from months to hours. Your patching velocity must keep pace.
