Multimodal AI is changing how people work with AI models. As of November 2025, integrating and interpreting text, images, and video within a single model has moved from theoretical discussion into practical use. Getting good results now depends on multimodal prompts: instead of text alone, users can combine visual and temporal cues with natural language for both creative and analytical work. This article covers the art and science of crafting effective multimodal prompts for image and video generation and analysis, as a guide for developers, content creators, and AI enthusiasts working with the latest models.

The evolution of multimodal AI

Historically, AI models specialized in a single modality: understanding text, recognizing images, or processing audio. The last few years, particularly leading up to 2025, have witnessed a rapid convergence of these capabilities. Breakthroughs in neural network architectures and massive, diverse datasets have enabled truly multimodal models that can interpret complex relationships across different forms of data simultaneously. This evolution is a move towards AI that more closely mimics human perception and reasoning.

Leading the charge in this multimodal revolution are models from tech giants and innovative AI labs. OpenAI’s ecosystem, including GPT-5 (launched August 7, 2025) and its predecessors GPT-4o (first released May 2024) and GPT-4.1 (April 2025), offers sophisticated text and image understanding. Google’s Gemini 2.5 Pro (March 25, 2025) and the newer Gemini 3.0 Pro stand out for their advanced reasoning over complex multimodal inputs, including the ability to process up to three hours of video content. Anthropic’s Claude Opus 4.1 (an August 2025 update focused on agentic improvements) and Claude Haiku 4.5 (October 15, 2025) demonstrate robust vision capabilities and excel at interpreting charts and diagrams. Meta’s Llama 4 Scout and Llama 4 Maverick (April 5, 2025) further expand the open-source landscape with natively multimodal architectures. These advancements point toward AI that draws context from every available input.

Understanding multimodal prompts

A multimodal prompt is an instruction given to an AI model that combines different types of input data. Instead of solely relying on text descriptions, you might provide an image, a video clip, or even audio, alongside textual commands. The AI then processes these diverse inputs to generate a coherent and contextually relevant output, which could be anything from a detailed text description to a new image or video.

The key differentiator from traditional text-only prompting is the AI’s ability to establish connections and derive insights from the interplay between modalities. For example, you might provide an image of a bustling city street and ask the AI to “Describe the mood of this scene and suggest a short story premise based on the central figure’s expression.” The AI must not only identify elements in the image but also infer emotions and creative narratives—a task significantly enhanced by multimodal understanding.
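To make the idea of "combining inputs" concrete, here is a minimal Python sketch of how a text instruction and an image might be bundled into a single message. The schema (the `role`, `content`, `type`, `mime_type`, and `data` keys) and the function name `build_multimodal_message` are assumptions made for illustration; each provider defines its own request format, so check the relevant API documentation before relying on any of these names.

```python
import base64
import json


def build_multimodal_message(text: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Bundle a text instruction and an image into one message.

    The dictionary layout is a hypothetical schema, not any vendor's API.
    """
    # Binary image data is commonly base64-encoded so it can travel inside JSON.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "image", "mime_type": mime_type, "data": encoded},
            {"type": "text", "text": text},
        ],
    }


# Placeholder bytes stand in for a real photo of a city street.
fake_image = b"\x89PNG placeholder bytes"
message = build_multimodal_message(
    "Describe the mood of this scene and suggest a short story premise "
    "based on the central figure's expression.",
    fake_image,
)
print(json.dumps(message, indent=2)[:200])
```

The point of the structure is that the image and the instruction arrive together, so the model can relate the question to what it sees rather than handling each input in isolation.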

Core principles for effective multimodal prompting

Crafting effective multimodal prompts requires a blend of clarity, context, and creativity. Here are fundamental principles that apply across image and video tasks:

- Specificity and detail: Vague prompts lead to generic outputs. Be as precise as possible about what you want the AI to perceive and generate. Describe objects, actions, styles, colors, lighting, and mood.

- Provide clear context: Explain the purpose of your prompt. Is it for creative writing, technical analysis, or summarization? The more context the AI has, the better it can tailor its response.

- Iterative refinement: Start with a simpler prompt and gradually add complexity. If the initial output isn’t quite right, adjust your prompt by adding more details, constraints, or rephrasing unclear instructions.

- Chain-of-thought prompting: For complex tasks, break them down into smaller, logical steps. Guide the AI through a thought process. For instance, “First, identify all objects in the image. Second, analyze their spatial relationship. Third, based on this, generate a caption.”

- Leverage few-shot examples: If you have a desired style or format, provide one or two examples within your prompt. This helps the AI understand the pattern you’re looking for, especially useful for specialized outputs.

- Role assignment: Give the AI a persona. “Act as a professional photographer” or “You are a film critic” can significantly influence the tone and depth of the AI’s response.
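The principles above can be combined in a small prompt template. The following Python sketch is one possible way to do that; the `PromptSpec` class and its field names are hypothetical, and the wording it emits is only an example of how role, context, step-by-step guidance, and few-shot examples might be laid out in the text portion of a multimodal prompt.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the text portion of a multimodal prompt."""

    task: str
    role: str = ""
    context: str = ""
    steps: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)

    def render(self) -> str:
        parts = []
        if self.role:
            parts.append(f"You are {self.role}.")  # role assignment
        if self.context:
            parts.append(f"Context: {self.context}")  # purpose of the request
        parts.append(f"Task: {self.task}")
        if self.steps:
            # Chain-of-thought style guidance: numbered, ordered steps.
            numbered = [f"{i}. {step}" for i, step in enumerate(self.steps, start=1)]
            parts.append("Work through these steps in order:\n" + "\n".join(numbered))
        if self.examples:
            # Few-shot examples showing the desired style or format.
            shots = [f"Example {i}: {ex}" for i, ex in enumerate(self.examples, start=1)]
            parts.append("Match the style of these examples:\n" + "\n".join(shots))
        return "\n\n".join(parts)


spec = PromptSpec(
    task="Write a one-sentence caption for the attached image.",
    role="a professional photographer",
    context="The caption will appear in a travel magazine.",
    steps=[
        "Identify all objects in the image.",
        "Analyze their spatial relationship.",
        "Based on this, generate a caption.",
    ],
)
prompt = spec.render()
print(prompt)
```

Keeping each principle in its own field also supports iterative refinement: start with only `task`, inspect the output, then fill in `context`, `steps`, or `examples` one at a time to see which addition actually helps.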

Advanced multimodal prompting techniques for image

Image-based multimodal prompting combines visual input with textual instructions to generate new images, analyze existing ones, or derive insights. Models like OpenAI’s DALL-E 3 (for generation) and the vision-capable GPT-5, Gemini 2.5 Pro, and Claude Opus 4.1 (for analysis) excel here. DALL-E 3, in particular, was trained on synthetic, highly descriptive captions, which lets it follow textual descriptions much more closely than its predecessors.
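One way to keep generation prompts consistently detailed is to assemble them from named fields such as subject, action, setting, lighting, and style. The Python sketch below is illustrative only: `compose_image_prompt` and `missing_fields` are hypothetical helpers, not part of any model's API, and the choice of fields is an assumption about what usually matters in an image prompt.

```python
def compose_image_prompt(subject, action="", setting="", lighting="", style="", extras=()):
    """Join the non-empty descriptive fields into a single prompt string."""
    lead = f"{subject}, {action}" if action else subject
    fields = [lead, setting, lighting, style, *extras]
    # Drop empty fields and trailing periods so the pieces join cleanly.
    sentences = [f.strip().rstrip(".") for f in fields if f and f.strip()]
    return ". ".join(sentences) + "."


def missing_fields(**fields):
    """List the descriptive fields left empty, as a reminder to add detail."""
    return sorted(name for name, value in fields.items() if not value)


prompt = compose_image_prompt(
    subject="A golden retriever",
    action="mid-leap, catching a frisbee in a sun-drenched park",
    setting="Lush green grass and a clear blue sky in the background",
    lighting="Golden hour lighting",
    style="Cinematic, high detail",
)
print(prompt)

# A bare prompt such as "A dog" leaves every optional field empty.
print(missing_fields(subject="A dog", action="", setting="", lighting="", style=""))
```

A checklist like `missing_fields` does not make a prompt good on its own, but it makes it obvious when a prompt names only a subject and leaves the rest to the model.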

Descriptive richness

When generating images, a highly descriptive prompt is crucial. Think like a director or a painter:

// Poor prompt

"A dog."

// Effective prompt for DALL-E 3 (as of November 2025)

"A golden retriever, mid-leap, catching a frisbee in a sun-drenched park. The dog's fur is glistening, and motion blur is subtly visible on its paws. Lush green grass, dappled sunlight, a clear blue sky in the background. Shot with a prime lens, golden hour lighting, cinematic, high detail."

Style and artistic direction

Specify artistic styles, famous artists, or photographic techniques:

"An ancient samurai warrior meditating under a cherry blossom tree, painted in the style of traditional Japanese ukiyo-e woodblock prints, with soft pastel colors and intricate details."

"A futuristic cityscape at dusk, inspired by Syd Mead's concept art, with neon lights reflecting on wet streets, flying vehicles, and towering skyscrapers. Volumetric lighting, highly detailed, cyberpunk aesthetic."

Object relationships and actions

Clearly define how objects interact and what actions are taking place:

"A curious cat perched on a bookshelf, playfully swatting at a string dangling from a model hot air balloon. The room is cozy with warm lighting, books neatly arranged, and a window showing a snowy landscape."

Using reference images for analysis

When analyzing images with models like Gemini 2.5 Pro or Claude Opus 4.1, upload the image and ask specific questions:

// Prompt with image upload

"IMAGE_UPLOADED"

"Analyze this architectural drawing. Identify any structural inconsistencies or potential design flaws related to load-bearing walls. Provide a bulleted list of concerns and suggest improvements for each, referencing specific sections of the drawing."

Advanced multimodal prompting techniques for video

Video generation and analysis present unique challenges due to the temporal dimension. AI models like Midjourney V1 Video (released June 18, 2025), Stable Video 4D 2.0 (May 20, 2025) from Stability AI, and Google’s Gemini 2.5 Pro (with its 3-hour video processing capability) are at the forefront. As of November 2025, video generation is rapidly advancing but still typically yields shorter clips (e.g., Midjourney’s 5-second videos).
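Because generated clips are short, a longer sequence usually has to be planned as a series of segments that are prompted one at a time. The Python sketch below shows the arithmetic under stated assumptions: the 5-second default mirrors the clip length mentioned above, the 16-second target is an arbitrary example, and `plan_clips` is a hypothetical helper that does not call any video tool.

```python
import math


def plan_clips(total_seconds: float, max_clip_seconds: float = 5.0) -> list[tuple[float, float]]:
    """Split a target duration into consecutive (start, end) segments.

    No segment exceeds max_clip_seconds (an assumed per-clip limit).
    """
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_seconds / max_clip_seconds)
    length = total_seconds / count  # equal-length segments avoid a tiny final clip
    return [(round(i * length, 2), round((i + 1) * length, 2)) for i in range(count)]


segments = plan_clips(16, max_clip_seconds=5)
for index, (start, end) in enumerate(segments, start=1):
    print(f"Clip {index}: {start:.1f}s to {end:.1f}s")
```

Each planned segment then gets its own prompt, and repeating the same character and environment description in every one is a simple way to encourage consistency across the cuts.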

Describing motion and narrative

For video generation, focus on dynamics and a progression of events:

// For Midjourney V1 Video (input: a still image of a serene forest)

"ANIMATE: The serene forest. Subtle wind rustling leaves, gentle sunlight filtering through the canopy, a deer slowly walks into frame from the right and pauses. Dreamy, cinematic, slow camera pan."

Ensuring consistency

Maintaining character, object, and environmental consistency across frames is critical:

// For advanced video models (future-proofing)

"Generate a 10-second video. A single astronaut, consistently depicted with a white suit and reflective gold visor, floats gracefully through a vibrant nebula. The nebula's colors shift and swirl organically. Maintain a slow, continuous forward motion of the astronaut relative to the background. Smooth camera movement, cosmic, awe-inspiring."

Temporal understanding for video analysis

When analyzing video, models like Gemini 2.5 Pro excel at temporal reasoning. Upload the video and provide detailed queries:

// Prompt with video upload

"VIDEO_UPLOADED"

"This is security camera footage. Identify all instances where an unknown individual enters the building. For each instance, log the timestamp, a brief description of their appearance, and the duration of their stay within the visible frame. Summarize any unusual activities observed."

Scene transitions and continuity

For more complex video generation, consider how scenes transition and maintain narrative flow:

// Example of a multi-part video prompt (conceptual for future models)

"Generate a three-part video sequence.

Part 1: A serene forest, camera slowly pans upwards to reveal a hidden waterfall. (5 seconds)

Part 2: Transition via a dissolve to an ancient, moss-covered temple ruins nestled in dense jungle. A beam of sunlight illuminates a forgotten artifact. (7 seconds)

Part 3: A swift, energetic cut to a modern archaeologist examining the artifact in a high-tech lab. Zoom in on their determined expression. (4 seconds)

Maintain a consistent adventurous tone and high visual fidelity throughout."

Practical applications and future outlook

The mastery of multimodal prompting opens up a vast array of practical applications. In content creation, it allows for rapid prototyping of visual concepts, scene generation for films, and dynamic advertisements. Educators can use it to create interactive learning materials that adapt to visual cues. Designers can iterate on product concepts by feeding sketches and textual specifications. In complex analytical fields, such as medical imaging or autonomous driving, multimodal AI provides enhanced diagnostic and environmental understanding by correlating visual data with expert text prompts.

Looking ahead from late 2025, the trend is towards even greater integration and autonomy. Future models will likely handle longer video sequences with improved consistency, support real-time interaction across modalities, and exhibit more sophisticated reasoning capabilities. The development of agentic AI, where models can plan and execute complex, multi-step tasks involving various modalities, promises to further change how we interact with and leverage artificial intelligence. The emphasis will shift from mere generation to intelligent co-creation and problem-solving, with multimodal prompts serving as the primary interface for these advanced systems.

Conclusion

Mastering multimodal prompts for image and video is no longer a niche skill; as of November 2025, it is a core skill for anyone working with advanced AI. The ability to articulate intentions across text, images, and video lets users get the most out of sophisticated models like GPT-5, Gemini 3.0 Pro, and Claude Opus 4.1.

- Specificity is paramount: Detailed instructions yield superior results.

- Context and iteration are key: Guide the AI with clear goals and refine your prompts based on initial outputs.

- Understand model strengths: Different models excel at different multimodal tasks; tailor your approach accordingly.

- Embrace the multimodal future: As AI continues to evolve, the seamless integration of visual and textual inputs will become even more critical for groundbreaking applications.

By diligently applying these principles and staying abreast of the latest model advancements, you can transform your interactions with AI, moving beyond simple commands to orchestrate truly intelligent and creative outcomes across both image and video domains. The journey into multimodal prompting is an ongoing one, promising exciting new frontiers for human-AI collaboration.

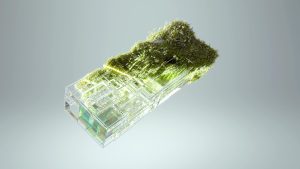

Image by: Google DeepMind https://www.pexels.com/@googledeepmind