For small and medium businesses wrestling with escalating AI API costs, Google’s Gemma 4 (released April 2, 2026) represents a paradigm shift. While cloud-based AI providers charge by the token—a pricing model that can quickly spiral out of control for high-volume operations—Gemma 4 enables businesses to deploy state-of-the-art language models locally, eliminating the dreaded “token tax” entirely. Purpose-built for agentic workflows and multi-step planning, Gemma 4 lets SMBs move far beyond simple chatbots to build autonomous voice AI agents that handle complex customer interactions without the overhead of per-call API fees.

Understanding the token tax burden on SMBs

Most businesses rely on cloud API services like GPT-4o or Claude for AI-powered customer interactions, typically paying between $2.50 and $15 per million input tokens and between $10 and $75 per million output tokens. For a customer service operation handling 10,000 conversations monthly, each averaging 2,000 tokens, that is 20 million tokens a month, or roughly $50 to $150+ just for basic text processing. Add voice transcription (via Whisper API at $0.006/minute) and speech synthesis, and the costs compound rapidly.
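To make that arithmetic concrete, here is a minimal sketch of the monthly token bill. The 50/50 split between input and output tokens is an assumption for illustration (the figures above do not specify one), and the prices are the low end of the ranges quoted.

```python
def monthly_token_cost(conversations, tokens_per_conversation, input_share,
                       input_price_per_m, output_price_per_m):
    """Estimate a monthly token bill in dollars.

    input_share is the fraction of tokens billed as input (an assumption;
    real traffic varies by use case).
    """
    total_tokens = conversations * tokens_per_conversation
    input_tokens = total_tokens * input_share
    output_tokens = total_tokens - input_tokens
    dollars = input_tokens * input_price_per_m + output_tokens * output_price_per_m
    return dollars / 1_000_000


# 10,000 conversations x 2,000 tokens, assumed 50% input, $2.50 in / $10 out
cost = monthly_token_cost(10_000, 2_000, 0.5, 2.50, 10.00)
print(f"${cost:,.2f} per month")  # $125.00 per month
```

Shifting the share toward output tokens, or moving to the higher price tiers, pushes the figure up quickly, which is the budgeting problem described here.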

The real pain point emerges with agentic workflows. Unlike simple Q&A chatbots, agents require multi-step reasoning, function calling to external systems, and memory management across extended conversations. Each reasoning step, each tool invocation, each context retrieval adds tokens to your bill. For an SMB scaling from hundreds to thousands of customer interactions monthly, this token-based pricing creates a variable cost structure that makes budgeting nearly impossible.

Gemma 4: purpose-built for local agentic deployment

Gemma 4 distinguishes itself from previous open models by being explicitly designed for agentic workflows from the ground up. Released under the permissive Apache 2.0 license, it’s not just an open-weight model—it’s a complete toolkit for building autonomous AI agents that can plan, reason, and execute complex multi-step tasks.

| Model | Size | Architecture | Context Window | Best Use Case |
|---|---|---|---|---|
| E2B | 2.3B effective (5.1B total) | Dense | 128K tokens | Edge devices, mobile apps |
| E4B | 4.5B effective (8B total) | Dense | 128K tokens | IoT, Raspberry Pi, Jetson Nano |
| 26B A4B | 25.2B total (3.8B active) | MoE | 256K tokens | Fast inference with large context |
| 31B Dense* | 30.7B | Dense | 256K tokens | Maximum capability, workstations |

What sets Gemma 4 apart for SMBs is its native function‑calling support and multi‑step reasoning: capabilities that were bolted onto earlier models are trained natively into Gemma 4. This means the 31B model can tackle complex agentic tasks that previously required cloud APIs, while the smaller E2B and E4B variants can run offline on affordable hardware like a $200 Jetson Nano or a mid‑range laptop.
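When matching a variant to hardware, a rough first check is how much memory the weights alone occupy. The sketch below uses the total parameter counts from the table and assumes a given number of bits per weight (4-bit and 16-bit are illustrative choices, not official recommendations). It ignores the KV cache and runtime overhead, so treat the results as a lower bound.

```python
def weights_gb(total_params_billion, bits_per_weight):
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return total_params_billion * bits_per_weight / 8


# Total parameter counts (in billions) taken from the table above.
MODELS = {"E2B": 5.1, "E4B": 8.0, "26B A4B": 25.2, "31B Dense": 30.7}

for name, params in MODELS.items():
    print(f"{name}: ~{weights_gb(params, 4):.1f} GB at 4-bit, "
          f"~{weights_gb(params, 16):.1f} GB at 16-bit")
```

Note that the MoE model is sized by its total parameters here: only 3.8B are active per token, but all experts generally need to be resident in memory.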

The model’s context window, up to 256K tokens for the larger variants, enables sophisticated memory management in agentic workflows. Your voice AI agent can reference entire conversation histories, knowledge bases, and customer records without the context truncation that plagues models with smaller windows.
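Even with a large window, an agent still needs a policy for what to keep once a conversation outgrows its budget. The following is a minimal sketch of one such policy, keeping the most recent messages that fit. The four-characters-per-token estimate is a crude assumption for illustration; a real deployment would count tokens with the model's own tokenizer.

```python
def estimate_tokens(text):
    """Very rough token estimate (assumes ~4 characters per token)."""
    return max(1, len(text) // 4)


def trim_history(messages, budget_tokens):
    """Keep the newest messages whose estimated tokens fit within the budget."""
    kept = []
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(msg["content"])
        if used + cost > budget_tokens:
            break
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "a" * 40},
    {"role": "assistant", "content": "b" * 40},
    {"role": "user", "content": "c" * 40},
]
recent = trim_history(history, budget_tokens=25)  # keeps the last two messages
```

With a 256K-token budget this trimming rarely triggers for a single call, but the same function protects the smaller 128K variants and long-running sessions.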

Building voice AI agents with n8n and Gemma 4

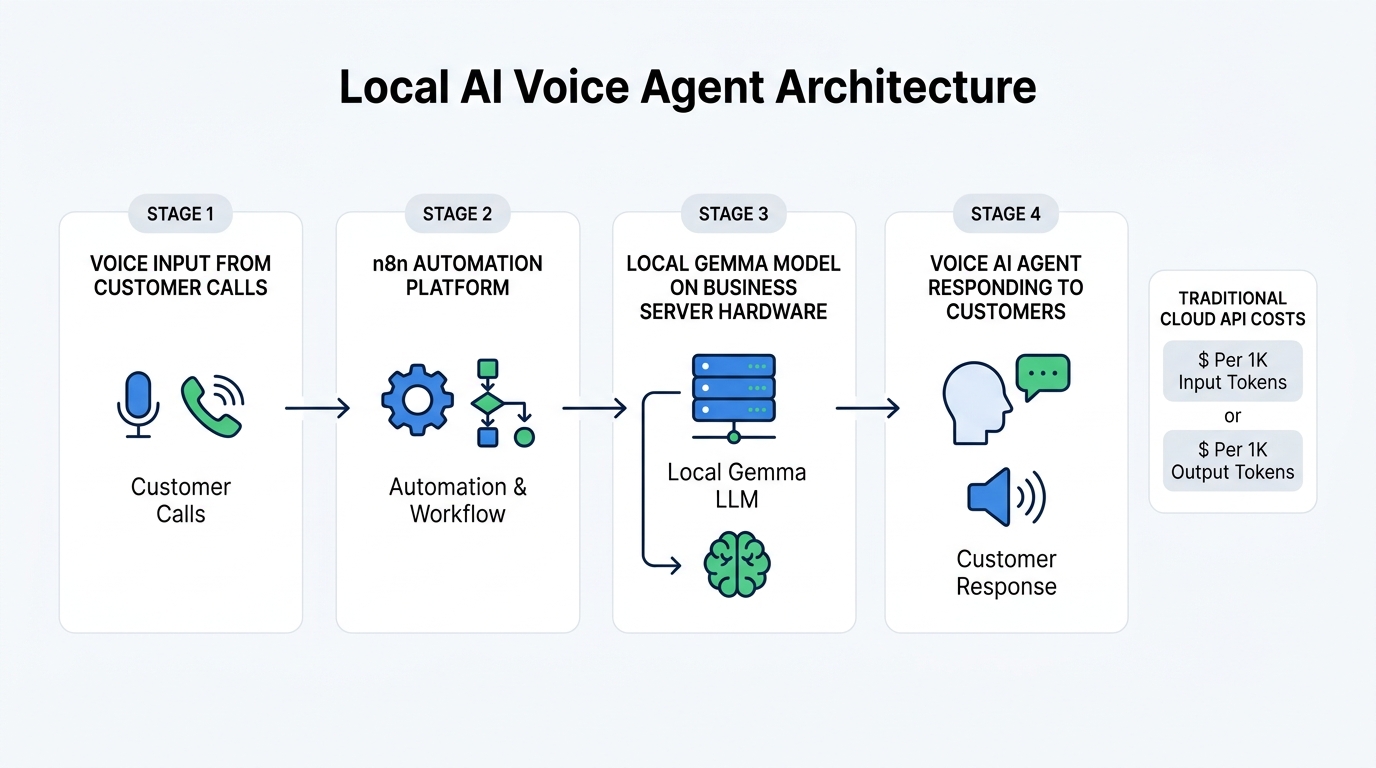

The combination of Gemma 4 for local intelligence and n8n for workflow automation creates a powerful, cost-effective stack for SMBs building voice AI agents. n8n is an open-source workflow automation tool that excels at orchestrating AI agents, connecting to APIs, and managing complex business logic—all without writing extensive code.

Here’s how a typical agentic voice AI workflow comes together:

- Voice input capture: Phone systems (Twilio, Vapi, or Retell) receive customer calls and stream audio to your infrastructure.

- Speech-to-text: Local Whisper or Gemma 4 E2B/E4B’s native audio processing converts speech to text.

- n8n orchestration: The workflow triggers, maintaining conversation context across multiple steps.

- Agentic reasoning: Gemma 4 26B or 31B runs locally to interpret intent, plan actions, and generate responses using function calling.

- Tool execution: n8n executes function calls—checking calendars, querying order databases, updating CRM records, scheduling follow-ups.

- Multi-step planning: For complex requests, the agent chains multiple reasoning steps and tool calls before responding.

- Text-to-speech: Local TTS models or integrated services convert the response back to natural speech.
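The reasoning and tool-execution steps above can be sketched as a small loop. This is an illustration rather than n8n's or Gemma 4's actual implementation: `scripted_model` is a hypothetical stand-in for a locally hosted model, `lookup_order` is a made-up tool, and the message shape (an assistant message carrying `tool_calls`) is assumed to follow the common chat-with-tools convention.

```python
import json


def run_agent(user_text, model, tools, max_steps=5):
    """Alternate between model turns and tool calls until the model answers."""
    messages = [{"role": "user", "content": user_text}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append(reply)
        calls = reply.get("tool_calls") or []
        if not calls:
            return reply["content"]  # no tool requested: this is the final answer
        for call in calls:
            name = call["function"]["name"]
            args = call["function"]["arguments"]
            handler = tools.get(name)
            if handler is None:
                result = {"error": f"unknown tool: {name}"}
            else:
                try:
                    result = handler(**args)
                except Exception as exc:  # report failures back to the model
                    result = {"error": str(exc)}
            messages.append({"role": "tool", "content": json.dumps(result)})
    return "Sorry, I could not complete that request."


def scripted_model(messages):
    """Hypothetical stand-in for a local model: one tool call, then an answer."""
    if not any(m["role"] == "tool" for m in messages):
        return {"role": "assistant", "content": "", "tool_calls": [
            {"function": {"name": "lookup_order", "arguments": {"order_id": "A100"}}}
        ]}
    status = json.loads(messages[-1]["content"])["status"]
    return {"role": "assistant", "content": f"Your order is {status}."}


def lookup_order(order_id):
    """Made-up tool; a real one would query an order database or CRM."""
    return {"order_id": order_id, "status": "shipped"}


answer = run_agent("Where is my order A100?", scripted_model,
                   {"lookup_order": lookup_order})
print(answer)  # Your order is shipped.
```

The `max_steps` cap is a deliberate design choice: on a live phone call, a bounded loop that apologizes is preferable to an agent that keeps calling tools indefinitely.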

The critical advantage is zero per‑call AI costs. Once you’ve invested in the hardware (a $2,000‑4,000 workstation or local server), every conversation costs only electricity, typically pennies per hour of operation. Compare this to cloud voice agents costing $0.05‑0.15 per minute, and the economics become compelling: a business handling 500 hours (30,000 minutes) of calls monthly could save $1,500‑4,500 per month in API costs alone.

Calculating real ROI: local deployment vs. cloud APIs

Let’s break down the actual costs for an SMB handling 10,000 customer interactions monthly with agentic capabilities:

| Cost Factor | Cloud API (GPT-4o) | Gemma 4 Local |
|---|---|---|
| Initial setup | $0 | $2,500‑4,500 (workstation/server) |
| Monthly tokens (40M input, 20M output) | $200‑350 | $0 |
| Voice transcription + synthesis | $500‑800 | $0 (local processing) |
| 12‑month TCO | $8,400‑13,800 | $2,700‑4,800 |
| 24‑month TCO | $16,800‑27,600 | $3,100‑5,400 |
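A quick way to sanity-check figures like these for your own business is to compute the break-even month. The sketch below takes the cloud monthly spend from the table (tokens plus voice, $700 to $1,150). The $25 monthly running cost for local hardware is an assumption for illustration, since the table does not itemize electricity or maintenance.

```python
import math


def tco(upfront, monthly, months):
    """Total cost of ownership: one-time spend plus recurring spend."""
    return upfront + monthly * months


def break_even_month(upfront, cloud_monthly, local_monthly):
    """First month in which cumulative local cost is at or below cloud cost.

    Returns None when local running costs are not lower than the cloud bill.
    """
    monthly_saving = cloud_monthly - local_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(upfront / monthly_saving)


# Worst case from the table: priciest hardware, cheapest cloud bill.
print(break_even_month(4_500, 700, 25))    # 7
# Best case: cheapest hardware, priciest cloud bill.
print(break_even_month(2_500, 1_150, 25))  # 3
```

Under these assumptions the hardware pays for itself within three to seven months; plugging in your own call volume and power costs will move that window.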

Beyond raw cost savings, local deployment offers operational advantages cloud APIs cannot match: data privacy (sensitive customer conversations never leave your infrastructure), sub‑100ms latency for real‑time voice interactions, offline capability, and compliance with data sovereignty requirements. For regulated industries like healthcare, finance, or legal services, these benefits often outweigh cost considerations entirely.

Agentic workflows: the real value beyond cost savings

While slashing operating costs is compelling, the transformative potential lies in Gemma 4’s native support for agentic workflows. Unlike first‑generation chatbots that handle single‑turn Q&A, AI agents can:

- Plan multi‑step sequences: Break down complex requests like “reschedule my appointment and send a confirmation to my team” into actionable steps.

- Execute function calls: Interact with calendars, CRMs, inventory systems, and databases through structured API calls.

- Self‑correct: When a calendar is full or an item is out of stock, reason about alternatives and negotiate solutions.

- Maintain state: Remember context across extended conversations spanning multiple topics and tool interactions.

- Think before responding: Gemma 4’s configurable thinking mode lets the model work through reasoning steps before generating final output.
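Structured function calls depend on the agent and the tool agreeing on a schema. As a hedged illustration, here is a tool declaration in the JSON-schema style commonly used for chat function calling, plus a check that the model supplied every required argument before anything is executed. The tool name and fields are hypothetical.

```python
# Hypothetical tool declaration; the name and fields are illustrative only.
RESCHEDULE_TOOL = {
    "type": "function",
    "function": {
        "name": "reschedule_appointment",
        "description": "Move an existing appointment to a new start time.",
        "parameters": {
            "type": "object",
            "properties": {
                "appointment_id": {"type": "string"},
                "new_start": {"type": "string",
                              "description": "ISO 8601 start time"},
            },
            "required": ["appointment_id", "new_start"],
        },
    },
}


def missing_arguments(tool, arguments):
    """Return the required argument names the model failed to provide."""
    required = tool["function"]["parameters"].get("required", [])
    return [name for name in required if name not in arguments]


proposed = {"appointment_id": "apt-17"}  # model forgot the new time
gaps = missing_arguments(RESCHEDULE_TOOL, proposed)
if gaps:
    # Feeding this message back to the model lets it self-correct.
    feedback = f"Missing required arguments: {', '.join(gaps)}"
```

Returning the gap to the model as a tool result, rather than failing the call, is what gives the agent a chance to ask the customer for the missing detail.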

For an SMB, this means a voice AI agent can handle complete customer service workflows—taking a complaint, looking up order history, initiating a refund, scheduling a replacement delivery, and logging the interaction—all without human intervention. The 31B model’s 89.2% score on AIME 2026 reasoning benchmarks and 80% on LiveCodeBench v6 demonstrate capabilities that rival commercial cloud models costing 50× more per token.

Getting started: deployment pathways for SMBs

Deploying Gemma 4 locally has become remarkably straightforward thanks to tools like Ollama, LM Studio, and native integration with n8n. For most SMBs, the optimal path looks like this:

- Start with the 26B A4B MoE model: It delivers performance approaching the 31B model while using only 3.8B active parameters—running efficiently on consumer GPUs like the RTX 4090 or systems with 24GB+ VRAM.

- Use n8n’s AI agent templates: The platform offers pre‑built workflows for AI agents, function calling, and voice integrations that work out of the box with local LLMs.

- Connect to existing systems: Leverage n8n’s 500+ integrations to hook into your CRM, calendar, inventory, and other business tools.

- Test and iterate: Start with simpler use cases (appointment booking, FAQ handling) before graduating to complex agentic workflows.
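Before wiring anything into n8n, it can help to confirm that the local model responds at all. The sketch below targets Ollama's local chat endpoint using only the Python standard library. The model tag `gemma4:26b` is a placeholder assumption, so substitute whatever tag `ollama list` reports on your machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local address
MODEL_TAG = "gemma4:26b"  # assumed placeholder; use the tag you actually pulled


def build_chat_request(messages, tools=None, model=MODEL_TAG, url=OLLAMA_URL):
    """Build (but do not send) a non-streaming chat request."""
    payload = {"model": model, "messages": messages, "stream": False}
    if tools:
        payload["tools"] = tools  # optional function-calling declarations
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(messages, tools=None):
    """Send the request to the local server and return the assistant message."""
    request = build_chat_request(messages, tools)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["message"]


# Requires a running Ollama server with the model pulled:
# print(chat([{"role": "user", "content": "Say hello in five words."}]))
```

Keeping request construction separate from sending makes the payload easy to inspect and test without a GPU or a running server.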

For edge deployment—running agents on devices at distributed locations—the E2B and E4B models open possibilities previously impossible. A retail chain could deploy voice‑enabled kiosks running entirely offline, or a service business could equip field technicians with voice AI assistants that work without internet connectivity.

Conclusion: autonomy over dependency

The shift from cloud API dependency to local AI deployment isn’t merely about cost reduction—it’s about operational sovereignty. By running Gemma 4 locally, SMBs gain predictable costs, data privacy, offline capability, and the freedom to customize AI agents for their specific workflows without vendor lock‑in.

As of April 2026, Gemma 4 stands as the most capable open model family for agentic workflows, with benchmark results proving its ability to compete with proprietary cloud alternatives. For SMBs ready to move beyond simple chatbots and token‑based pricing, the combination of Gemma 4’s local deployment capabilities and n8n’s workflow automation represents the most compelling path to truly autonomous customer experiences—at a fraction of the ongoing cost.

The token tax doesn’t have to be a cost of doing business. With Gemma 4, SMBs can reclaim control over their AI infrastructure while delivering voice AI experiences that were previously the domain of enterprises with million‑dollar budgets.
