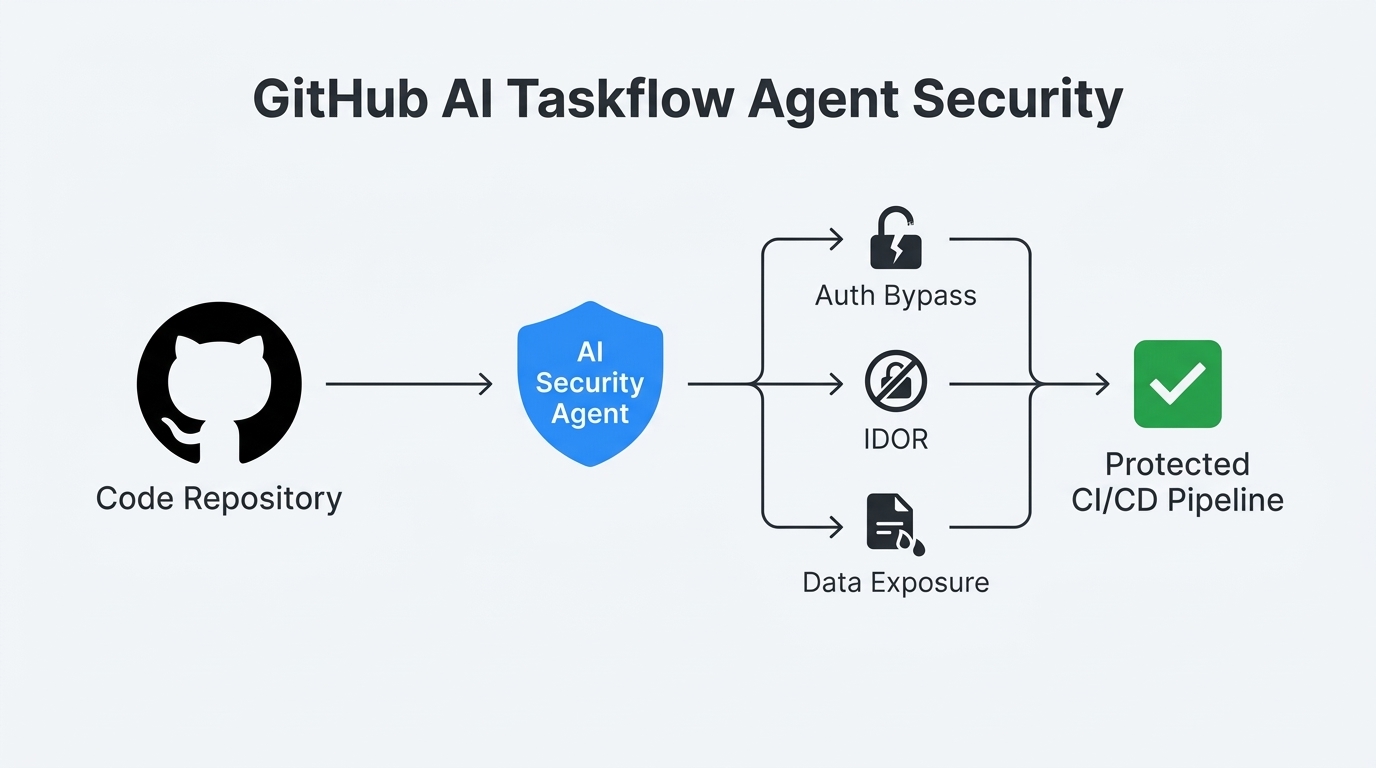

Imagine running an online store where any logged-in customer can browse through the names, addresses, and phone numbers of every guest order placed on your site. Or deploying a chat platform where anyone can sign in as any user using literally any password. These are not hypothetical scenarios. They are real vulnerabilities discovered in early 2026 by GitHub Security Lab’s AI-powered Taskflow Agent, an open source auditing framework that has already uncovered over 80 security flaws across more than 40 open source repositories. For small and midsize businesses that rely on platforms like WooCommerce, Rocket.Chat, and countless other open source tools, this is both a wake-up call and a roadmap for what AI-driven security can accomplish.

What GitHub’s Taskflow Agent actually found

On March 6, 2026, GitHub Security Lab researchers Man Yue Mo and Peter Stöckli published a detailed blog post documenting months of internal work using the SecLab Taskflow Agent. The framework uses large language models to perform structured security audits on code repositories. The results are striking: from 1,003 initially suggested issues across 40+ repositories, the audit stage marked 139 as genuine vulnerabilities, which came down to 91 after deduplication, and 19 of those were deemed high-impact enough to report to maintainers. Approximately 20 have been publicly disclosed so far.

The standout findings paint a clear picture of what business logic flaws look like in production software:

| CVE | Platform | Type | CVSS | What happened |
|---|---|---|---|---|
| CVE-2026-28514 | Rocket.Chat | Authentication bypass | 9.3 | Missing await let anyone log in with any password |
| CVE-2025-64487 | Outline | Privilege escalation | 7.6 | Low-privilege users could grant admin access to groups |
| CVE-2025-15033 | WooCommerce | IDOR / data exposure | Varies | Logged-in users could view all guest order PII |
| CVE-2026-25758 | Spree Commerce | IDOR / data exposure | Varies | Unauthenticated users could enumerate guest addresses |

Why these flaws evade traditional security tools

The vulnerabilities discovered by the Taskflow Agent share a common trait: they are business logic flaws, not bugs that match a known code pattern. A traditional Static Application Security Testing (SAST) tool like CodeQL detects known vulnerability patterns such as SQL injection, cross-site scripting, and buffer overflows. But it cannot determine whether a user should be allowed to call a particular endpoint, and it will not necessarily notice that a missing await keyword leaves a truthy Promise where a boolean was expected, bypassing password validation.
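To make the access control case concrete, here is a minimal Python sketch of an IDOR-style gap. The `Order` type, the `ORDERS` store, and both handler functions are hypothetical illustrations and are not taken from WooCommerce or Spree Commerce.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Order:
    order_id: int
    owner_id: Optional[int]  # None marks a guest order (hypothetical convention)
    shipping_address: str


# Hypothetical in-memory order store standing in for a database table.
ORDERS = {
    1: Order(1, owner_id=10, shipping_address="1 Main St"),
    2: Order(2, owner_id=None, shipping_address="9 Guest Ave"),
}


def get_order_vulnerable(current_user_id: Optional[int], order_id: int) -> Order:
    """Checks only that the caller is logged in, not that the order is theirs."""
    if current_user_id is None:
        raise PermissionError("login required")
    return ORDERS[order_id]  # any logged-in user can read any order


def get_order_checked(current_user_id: Optional[int], order_id: int) -> Order:
    """Adds the ownership check that the vulnerable handler is missing."""
    if current_user_id is None:
        raise PermissionError("login required")
    order = ORDERS[order_id]
    if order.owner_id != current_user_id:
        raise PermissionError("not your order")
    return order
```

Both handlers are syntactically clean and contain no injection sink, so nothing distinguishes them for a pattern-based scanner. The difference only shows up once you know who is supposed to see which order.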

Consider the Rocket.Chat flaw (CVE-2026-28514). The root cause was that bcrypt.compare returns a Promise<boolean>, but the calling code never awaited that Promise. In JavaScript, a Promise object is always truthy, so the authentication check passed for any user with a stored bcrypt hash, whatever password was supplied. Pattern-based SAST rules tend to miss this because the code is syntactically correct and uses standard library functions. Catching it requires reading the code, understanding the intended behavior, and tracing the data flow across multiple files, which is what the agent does.
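The same trap exists outside JavaScript. The sketch below is a Python analog, not Rocket.Chat's code: calling an `async` function without `await` returns a coroutine object, and that object is truthy just as a Promise is. The function names are hypothetical, and the plaintext comparison stands in for a real hash check purely to keep the example short.

```python
import asyncio
import hmac


async def compare_password(candidate: str, stored: str) -> bool:
    """Hypothetical async check standing in for an async hash comparison."""
    await asyncio.sleep(0)  # simulate asynchronous work
    return hmac.compare_digest(candidate.encode(), stored.encode())


def login_buggy(candidate: str, stored: str) -> bool:
    # Bug: the coroutine is never awaited, so `result` is a coroutine object.
    result = compare_password(candidate, stored)
    accepted = bool(result)  # objects are truthy by default, so always True
    result.close()  # close the unused coroutine to avoid a "never awaited" warning
    return accepted


async def login_fixed(candidate: str, stored: str) -> bool:
    # Fix: await the coroutine so the check uses the real boolean result.
    return await compare_password(candidate, stored)
```

Here `login_buggy("wrong", "secret")` accepts the login, while `asyncio.run(login_fixed("wrong", "secret"))` rejects it. The two functions differ by a single keyword.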

GitHub’s own data confirms this pattern. Of the vulnerabilities suggested by the agent, IDOR and access control issues had a 15.8% validation rate, authentication issues hit 16.5%, and business logic issues achieved the highest rate at 25%. These are precisely the categories where traditional tools are weakest.

How the Taskflow Agent audit pipeline works

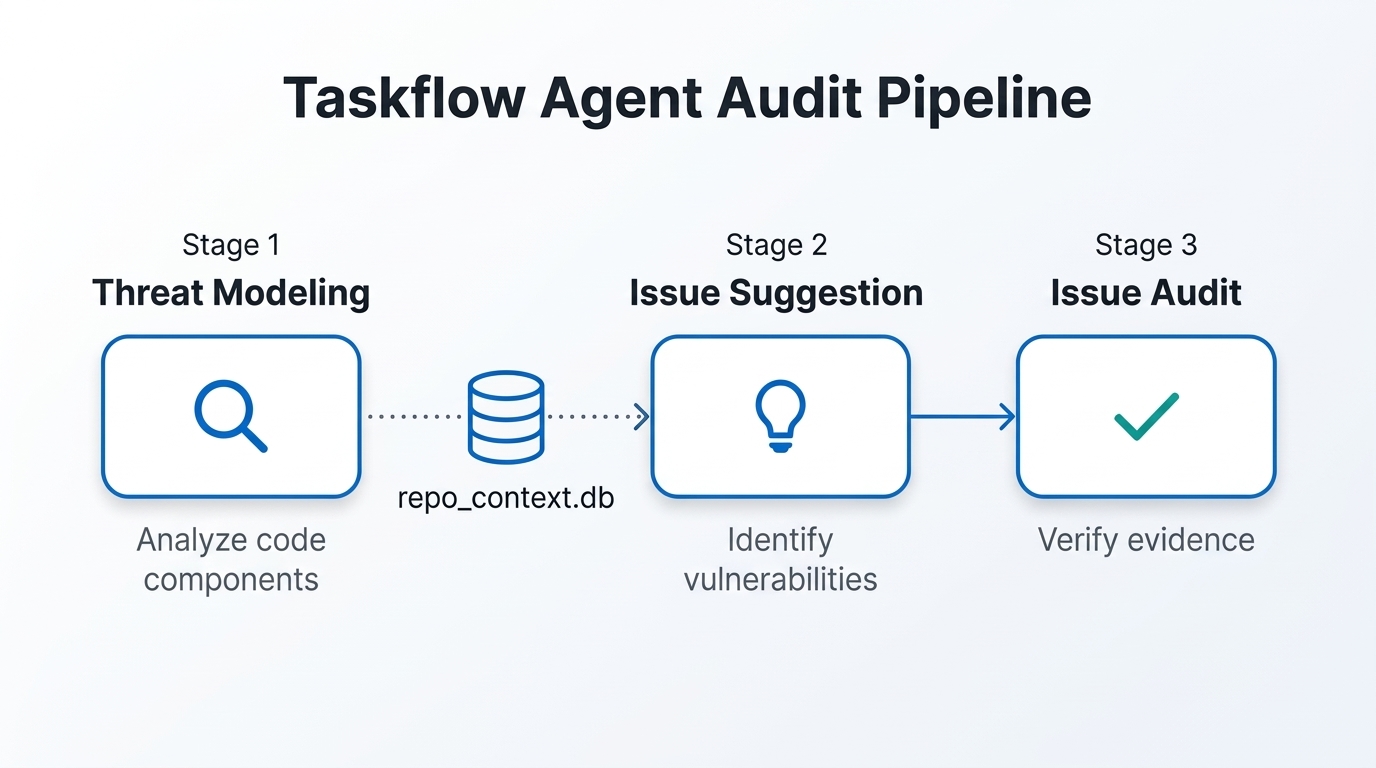

The framework uses a three-stage pipeline designed to maximize true positives while suppressing hallucinations. Each stage operates with fresh context and different prompts, preventing the LLM from validating its own assumptions.

Stage 1: Threat modeling

The agent divides the repository into functional components, identifies entry points exposed to untrusted input, catalogs web entry points with HTTP methods and paths, and maps user actions to baseline privileges. This information is stored in an SQLite database (repo_context.db) for use by later stages. The key insight is understanding what the application intends to do, which establishes whether a given behavior constitutes a vulnerability or is simply expected functionality.
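As a rough illustration of what such a context store could look like, here is a sketch using Python's standard `sqlite3` module. The table and column names are assumptions made for this example; the actual schema of repo_context.db is not described here and may differ.

```python
import sqlite3


def build_context_db(path: str = ":memory:") -> sqlite3.Connection:
    """Create a small threat-model store. The schema is illustrative only."""
    conn = sqlite3.connect(path)
    conn.executescript(
        """
        CREATE TABLE IF NOT EXISTS components (
            id INTEGER PRIMARY KEY,
            name TEXT NOT NULL,
            intended_purpose TEXT NOT NULL
        );
        CREATE TABLE IF NOT EXISTS entry_points (
            id INTEGER PRIMARY KEY,
            component_id INTEGER NOT NULL REFERENCES components(id),
            http_method TEXT NOT NULL,
            path TEXT NOT NULL,
            required_privilege TEXT NOT NULL
        );
        """
    )
    return conn


def add_entry_point(conn: sqlite3.Connection, component: str, purpose: str,
                    method: str, path: str, privilege: str) -> None:
    """Record one web entry point together with the component it belongs to."""
    cur = conn.execute(
        "INSERT INTO components (name, intended_purpose) VALUES (?, ?)",
        (component, purpose),
    )
    conn.execute(
        "INSERT INTO entry_points"
        " (component_id, http_method, path, required_privilege)"
        " VALUES (?, ?, ?, ?)",
        (cur.lastrowid, method, path, privilege),
    )
    conn.commit()


def entry_points_for(conn: sqlite3.Connection, privilege: str) -> list[tuple[str, str]]:
    """Return (method, path) pairs reachable at a given baseline privilege."""
    rows = conn.execute(
        "SELECT http_method, path FROM entry_points"
        " WHERE required_privilege = ? ORDER BY path",
        (privilege,),
    )
    return rows.fetchall()
```

Recording the intended purpose next to each entry point is the important part: later stages can only judge whether a behavior is a flaw if they know what the component was meant to allow.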

Stage 2: Issue suggestion

Using the threat model context, the agent proposes vulnerability types likely to appear in each component. This stage is deliberately permissive—the goal is to generate a broad set of candidates without auditing them. The agent is explicitly instructed not to verify suggestions at this point, keeping the brainstorming and verification phases cleanly separated.

Stage 3: Issue audit

Each suggested issue is examined with fresh context and strict criteria. The agent must provide concrete evidence with file paths and line numbers, a realistic attack scenario, and an assessment of what privilege an attacker gains. It is also told that there may be no vulnerability at all and that concluding “no issue found” is a valid outcome. This discipline keeps false positives low: only 22% of the agent’s verified findings were later rejected as false positives during manual review.
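The audit criteria above can be sketched as a simple acceptance gate. This is an illustration under assumed names (`Suggestion`, `AuditResult`, `audit_all`), not the framework's implementation; the `auditor` callable stands in for an LLM call that sees one suggestion at a time.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Suggestion:
    component: str
    issue_type: str


@dataclass
class AuditResult:
    suggestion: Suggestion
    evidence: list[str] = field(default_factory=list)  # e.g. "app/orders.py:42"
    attack_scenario: str = ""
    privilege_gained: str = ""

    def is_complete(self) -> bool:
        """A finding counts only if all three required elements are present."""
        return bool(
            self.evidence
            and self.attack_scenario.strip()
            and self.privilege_gained.strip()
        )


# An auditor inspects one suggestion in isolation and may return None,
# which models the valid "no issue found" outcome.
Auditor = Callable[[Suggestion], Optional[AuditResult]]


def audit_all(suggestions: list[Suggestion], auditor: Auditor) -> list[AuditResult]:
    """Audit each suggestion independently and keep only complete findings."""
    findings = []
    for suggestion in suggestions:
        result = auditor(suggestion)  # each call sees only this one suggestion
        if result is not None and result.is_complete():
            findings.append(result)
    return findings
```

Treating `None` as a first-class result mirrors the instruction that "no issue found" is acceptable, and the completeness check drops findings that arrive without evidence, a scenario, or a stated impact.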

What this means for SMB security

Small and midsize businesses face a particular dilemma with application security. They often run web applications built on open source platforms—WooCommerce for ecommerce, Rocket.Chat for internal communications, Outline for documentation—yet lack dedicated security engineers who can perform deep code audits. The vulnerabilities found by GitHub’s agent are exactly the type that sit undetected for years in production: the WooCommerce guest order data leak (CVE-2025-15033) affected versions 8.1 through 10.4.2, meaning it persisted across multiple major releases.

The Taskflow Agent is open source and can be run on any repository with a GitHub Copilot license. As of March 2026, Copilot plans start at $10/month for individuals, $19/user/month for Business, and $39/user/month for Enterprise. However, running the audit taskflows consumes premium model requests at a significant rate—the GitHub researchers note that “running the taskflows can result in many tool calls, which can easily consume a large amount of quota.” Multiple runs are recommended due to the non-deterministic nature of LLMs, and the team suggests using different models (e.g., GPT-5.x on one run, Claude Opus 4.6 on another) to maximize coverage.

Practical implementation for growing businesses

For an SMB that wants to integrate AI-powered vulnerability scanning into its development workflow, the path forward involves several concrete steps:

- Assess your dependency footprint. Catalog the open source platforms, libraries, and frameworks your business relies on. The Taskflow Agent works best on multi-user web applications with complex authorization logic—exactly the profile of ecommerce platforms, collaboration tools, and customer-facing portals.

- Secure the appropriate Copilot licensing. At minimum, you need a Copilot plan that includes premium model access. For teams, the Business tier at $19/user/month is the entry point. Budget for additional premium request capacity, as a single audit run on a medium-sized repository can take one to two hours and generate substantial token consumption.

- Run multiple audit passes. The GitHub team recommends running the taskflows multiple times on the same codebase, ideally with different LLMs. Create a recurring schedule—perhaps tied to major releases or quarterly reviews—rather than treating security scanning as a one-time event.

- Integrate into CI/CD pipelines. The taskflows can be adapted to run within GitHub Actions or other CI/CD systems. The seclab-taskflows repository includes scripts and configuration templates for automation.

- Consider external automation partners. For businesses without in-house security expertise, configuring and maintaining agentic security workflows within CI/CD pipelines can be a significant engineering overhead. This is where managed security automation providers add value—they handle the taskflow configuration, model selection, quota management, and result triage that would otherwise require dedicated security engineering talent.
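Running several passes with different models produces overlapping results, so some merging step is needed before triage. The sketch below shows one simple way to do that in Python. The `Finding` fields and the exact-match key of file, line, and issue type are assumptions for illustration; the deduplication used in GitHub's own workflow may be different.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    file_path: str
    line: int
    issue_type: str
    model: str  # which model's run reported it


def merge_runs(runs: list[list[Finding]]) -> dict[tuple[str, int, str], set[str]]:
    """Group findings by location and type; record which models reported each."""
    merged: dict[tuple[str, int, str], set[str]] = {}
    for run in runs:
        for finding in run:
            key = (finding.file_path, finding.line, finding.issue_type)
            merged.setdefault(key, set()).add(finding.model)
    return merged
```

Keeping the set of reporting models per finding gives reviewers a cheap prioritization signal: an issue flagged independently by two models is a reasonable place to start manual verification.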

The ROI of AI-powered security scanning

The economics are worth examining. GitHub’s data shows that the Taskflow Agent, using GPT-5.x as the primary analysis model, produced 1,003 suggested issues. After the audit stage, 139 were marked as genuine vulnerabilities, a 13.9% yield from suggestion to audited finding (91 remained after deduplication). After manual review, 19 were deemed reportable. That means roughly 1 in 53 suggested issues turned into a real, high-impact vulnerability report.
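The funnel arithmetic is easy to check. The short Python snippet below reproduces the two headline ratios from the figures quoted in this section; the variable names are just labels for this example.

```python
SUGGESTED = 1003     # issues proposed in the suggestion stage
AUDITED_TRUE = 139   # marked as genuine by the audit stage
REPORTED = 19        # judged reportable after manual review


def percent(part: int, whole: int) -> float:
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


audit_yield = percent(AUDITED_TRUE, SUGGESTED)         # 13.9
suggestions_per_report = round(SUGGESTED / REPORTED)   # 53
```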

Compare this to traditional SAST tools, which typically produce hundreds or thousands of alerts with false positive rates often exceeding 40%. The Taskflow Agent’s false positive rate after its own audit stage was only 22%—and none of those false positives were hallucinations in the traditional sense. Every report had sound evidence backing it, with incorrect findings stemming from genuine analytical mistakes similar to what a human auditor would make.

For an SMB, the practical ROI calculation looks like this: the cost of a Copilot Business license plus premium request tokens versus the cost of a single data breach. The average cost of a data breach for small businesses continues to climb, with incidents involving exposed PII carrying particularly heavy regulatory penalties. Finding and fixing an IDOR vulnerability before it reaches production is not just a technical win—it is a financial safeguard.
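A back-of-the-envelope version of that comparison can be written in a few lines of Python. Only the $19 per user per month Business price comes from this article. The breach cost is a placeholder you would replace with your own estimate, and premium request overage is deliberately left out because it depends on usage.

```python
def annual_license_cost(seats: int, price_per_seat_month: float = 19.0) -> float:
    """Yearly Copilot Business list cost; excludes premium request overage."""
    return seats * price_per_seat_month * 12


def breakeven_probability(annual_cost: float, breach_cost: float) -> float:
    """Yearly reduction in breach probability at which scanning pays for itself.

    `breach_cost` is a hypothetical input, not a figure from the article.
    """
    return annual_cost / breach_cost
```

For example, `annual_license_cost(5)` comes to 1140.0. Against a placeholder breach cost of 114,000, `breakeven_probability` returns 0.01, meaning the scanning would need to cut the yearly chance of such a breach by one percentage point to break even on licensing alone.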

Limitations and realistic expectations

The Taskflow Agent is not a silver bullet. It struggles most with desktop application threat modeling, where the trust boundary between processes on a user’s machine is ambiguous. It also shows limited effectiveness with memory safety issues—only three such issues were suggested during testing, and none were validated. This is expected, since most tested repositories were written in memory-safe languages, but it also suggests that tools like fuzzers remain better suited for memory corruption bugs.

The token consumption is a real constraint. Running multiple audit passes on a large codebase can exhaust premium request quotas quickly, and the non-deterministic nature of LLMs means you cannot guarantee comprehensive coverage from a single run. Businesses need to budget for this and plan their scanning schedule accordingly.

Finally, the agent’s findings still require human verification. The 22% false positive rate, while low, means that roughly one in five verified findings may not be exploitable in practice. Someone on your team, or a partner, needs the security expertise to reproduce and validate each result before escalating to maintainers or deploying patches.

Key takeaways

- GitHub’s Taskflow Agent has found 80+ real vulnerabilities in popular open source projects, including critical auth bypasses and PII exposure flaws that traditional SAST tools cannot detect.

- Business logic flaws—IDOR, authentication bypasses, privilege escalation—are the sweet spot for AI-powered security auditing, with a 25% validation rate for business logic issues specifically.

- The framework is open source and runnable today with a GitHub Copilot license, but practical deployment requires planning for token costs, multiple audit runs, and manual result verification.

- SMBs running web applications on open source platforms like WooCommerce, Rocket.Chat, or Spree Commerce should treat AI-powered security scanning as a complement to existing SAST tools, not a replacement.

- For teams without dedicated security engineers, managed automation partners can configure and maintain these agentic workflows within CI/CD pipelines, reducing the engineering overhead while preserving the security benefits.

The era of AI-powered vulnerability discovery is no longer theoretical. The flaws found by GitHub’s Taskflow Agent were real, exploitable, and in software used by millions of businesses. The question for SMBs is no longer whether AI security scanning works—it is how quickly they can put it to work in their own pipelines.
