Most AI-powered security scanners have a signal-to-noise problem. They either cast a wide net and drown teams in false positives, or they play it safe and miss critical bugs. GitHub Security Lab’s Taskflow Agent takes a different approach: it splits vulnerability detection into a three-stage pipeline that gives the LLM room to brainstorm while forcing it to back up every claim with hard evidence. More than 80 real vulnerabilities have been reported across open source projects as a result, including a critical authentication bypass in Rocket.Chat where a missing await let anyone sign in with any password (CVE-2026-28514, CVSS 9.3). Here’s how the architecture works and why it matters.

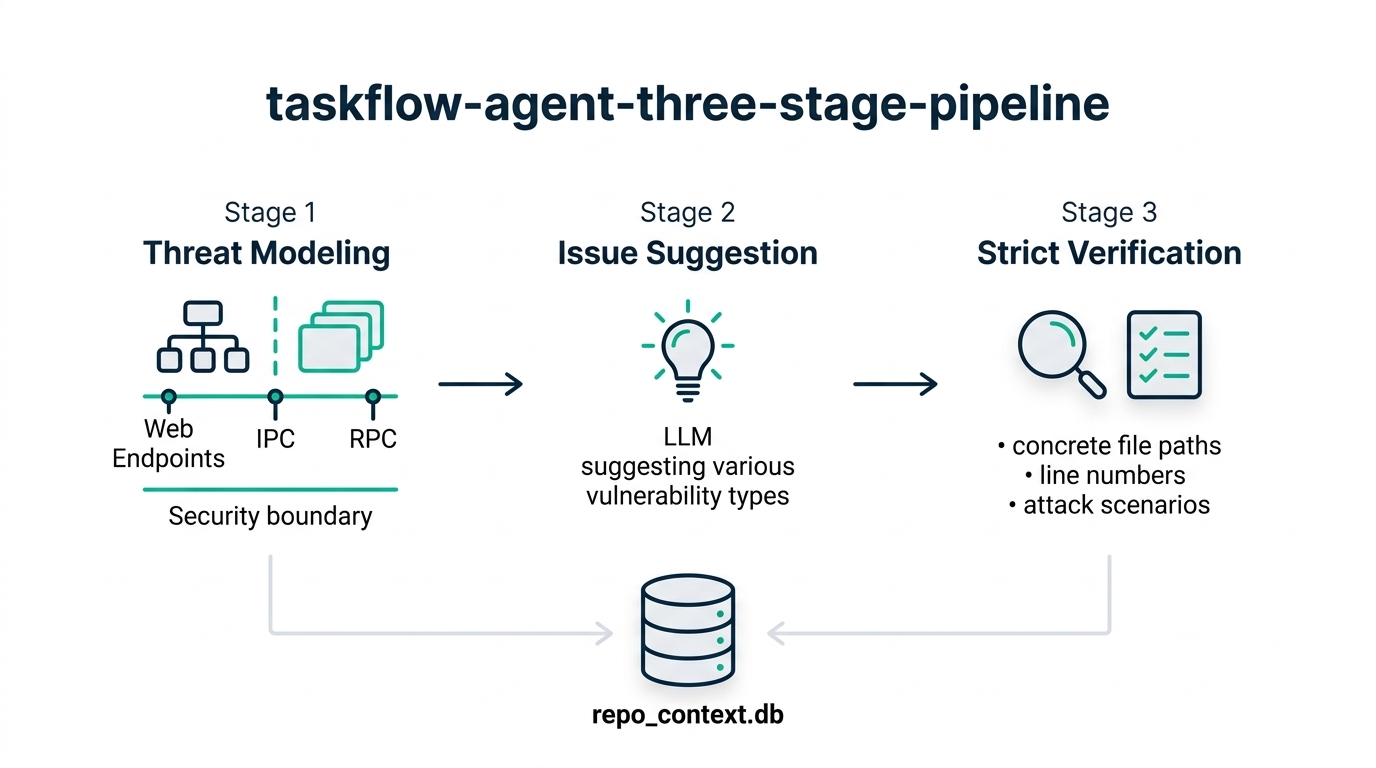

Stage 1: Threat modeling builds the security context

The pipeline begins not by hunting for bugs, but by understanding the codebase. This is a deliberate design choice rooted in a hard-won lesson from static analysis: a large proportion of false positives come from improper threat modeling. Most SAST tools don’t account for the intended usage and security boundaries of the code they scan, so they flag issues that have no real security implications. A reverse proxy application, for example, will naturally contain patterns that look like SSRF vulnerabilities but are actually intended behavior.

The Taskflow Agent’s threat modeling stage divides the repository into distinct components based on functionality, then systematically gathers intelligence about each one. It identifies applications within the repo (since a single repo may contain multiple components with different security boundaries), maps entry points where untrusted input arrives (web endpoints, IPC channels, RPC calls), catalogs web-specific entry points with HTTP methods and paths, and documents user actions to establish baseline privileges.

All of this information is stored in an SQLite database (repo_context.db) that subsequent stages query. This is not just bookkeeping. The threat model directly informs what counts as a vulnerability. As the team’s prompts explicitly state: “You need to take into account the intention and threat model of the component to determine if an issue is a valid security issue or if it is an intended functionality.” A command injection in a CLI tool designed to execute user scripts, for instance, may be a bug, but it is not a security vulnerability, because running user-supplied commands is the tool’s purpose.
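To make the idea of a queryable threat model concrete, here is a minimal sketch using Python's built-in sqlite3 module. The table names, column names, and sample rows are illustrative assumptions and do not reflect the actual layout of repo_context.db; the sketch only shows how a later stage could look up a component's entry points together with its intended purpose.

```python
import sqlite3

# Hypothetical schema: table and column names are assumptions for illustration,
# not the real structure of repo_context.db.
SCHEMA = """
CREATE TABLE component (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL,
    purpose TEXT NOT NULL          -- intended functionality of the component
);
CREATE TABLE entry_point (
    id INTEGER PRIMARY KEY,
    component_id INTEGER NOT NULL REFERENCES component(id),
    kind TEXT NOT NULL,            -- e.g. 'web', 'ipc', 'rpc'
    http_method TEXT,              -- NULL for non-web entry points
    path TEXT,                     -- NULL for non-web entry points
    location TEXT NOT NULL         -- source file where untrusted input arrives
);
"""


def build_context(conn):
    """Create the tables and store one made-up component with one web entry point."""
    conn.executescript(SCHEMA)
    conn.execute(
        "INSERT INTO component VALUES (1, 'proxy', "
        "'Forwards requests to user-chosen upstream URLs')"
    )
    conn.execute(
        "INSERT INTO entry_point VALUES "
        "(1, 1, 'web', 'GET', '/fetch', 'proxy/handler.py')"
    )
    conn.commit()


def web_entry_points(conn, component_name):
    """Return (method, path, purpose) rows for a component's web entry points."""
    rows = conn.execute(
        """SELECT e.http_method, e.path, c.purpose
           FROM entry_point e JOIN component c ON c.id = e.component_id
           WHERE c.name = ? AND e.kind = 'web'""",
        (component_name,),
    )
    return rows.fetchall()


conn = sqlite3.connect(":memory:")
build_context(conn)
print(web_entry_points(conn, "proxy"))
```

Returning the component's purpose alongside each entry point mirrors the reverse proxy example: a later stage that sees "forwards requests to user-chosen upstream URLs" has the context to treat an outbound fetch as intended behavior rather than SSRF.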

Stage 2: Unrestricted brainstorming with a critical constraint

Once the threat model is built, the pipeline enters its most intentionally permissive phase. Using the component-level context from stage 1, the LLM suggests types of vulnerabilities likely to appear in each component. The prompts ask the model to consider whether the component takes untrusted input, whether it involves complex access control logic, and what functionality it exposes to users.

What makes this stage effective is what it explicitly prohibits. The LLM is instructed not to audit the issues it suggests. This is a subtle but critical design decision. When left unchecked, the model tends to start verifying its own suggestions within the same context, which defeats the purpose of separating brainstorming from verification. The prompt directly tells the LLM: do not start auditing. Just suggest.

The stage also filters for severity upfront, instructing the model to exclude low-severity issues and scenarios requiring unrealistic conditions like misconfiguration or an already-compromised system. The goal is to produce a curated list of high-probability vulnerability candidates, not a comprehensive dump of every possible weakness. This brainstorming output then becomes the input for the most rigorous phase of the pipeline.

Stage 3: Strict verification with fresh context and hard evidence

The final stage is where the pipeline earns its low false-positive rate. Every suggestion from stage 2 is re-examined from scratch, with fresh context and different prompts. The suggestions are explicitly marked as unvalidated: “The issues suggested have not been properly verified and are only suggested because they are common issues in these types of application. Your task is to audit the source code to check if this type of issue is present.”

The verification demands are exacting. The LLM must provide concrete file paths and line numbers. It must describe a realistic attack scenario detailing every step an attacker would take. It must explain what the attacker gains. And it is explicitly told that there might be no vulnerability at all: “Remember, the issues suggested are only speculation and there may not be a vulnerability at all and it is okay to conclude that there is no security issue.”
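One way to picture these requirements is as a structured result that is rejected when evidence is missing. The sketch below is an assumption about how such a check could look; the `AuditResult` class and its field names are hypothetical and are not taken from the Taskflow Agent's code.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AuditResult:
    """Hypothetical record of one stage 3 audit (field names are assumptions)."""
    suggestion: str
    vulnerable: bool
    file_path: Optional[str] = None
    line: Optional[int] = None
    attack_steps: List[str] = field(default_factory=list)
    attacker_gain: str = ""


def missing_evidence(result: AuditResult) -> List[str]:
    """Return the names of required evidence fields that are absent."""
    if not result.vulnerable:
        # Concluding "no security issue" is acceptable and needs no evidence.
        return []
    missing = []
    if not result.file_path:
        missing.append("file_path")
    if result.line is None:
        missing.append("line")
    if not result.attack_steps:
        missing.append("attack_steps")
    if not result.attacker_gain.strip():
        missing.append("attacker_gain")
    return missing


vague = AuditResult(suggestion="IDOR on notes endpoint", vulnerable=True)
clean = AuditResult(suggestion="Stored XSS in comments", vulnerable=False)
print(missing_evidence(vague))
print(missing_evidence(clean))
```

A claim of vulnerability without a location, attack path, and impact fails the check, while a "no issue" conclusion passes, which matches the prompt's statement that finding nothing is an acceptable outcome.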

This design creates a deliberate tension. Stage 2 gives the LLM freedom to explore widely. Stage 3 forces it to defend every suggestion with evidence. The separation of contexts between stages is what prevents self-validation bias. The LLM cannot simply agree with itself because the second pass starts fresh, treating the suggestions as external claims that need independent verification.
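The context separation can be sketched in a few lines. This is an illustration under stated assumptions, not the framework's implementation: a "model" here is any function from a list of messages to a reply, the stub models stand in for real LLM calls, and the prompt wording is paraphrased.

```python
from typing import Callable, Dict, List

# Assumption: a model is any callable that maps a message list to a reply string.
Model = Callable[[List[str]], str]


def brainstorm(model: Model, component_context: str) -> List[str]:
    """Stage 2: ask for candidate issue types, one per line, without auditing."""
    messages = [
        "Suggest likely vulnerability types for this component. Do not audit them.",
        component_context,
    ]
    reply = model(messages)
    return [line.strip() for line in reply.splitlines() if line.strip()]


def verify(model: Model, suggestion: str, component_context: str) -> str:
    """Stage 3: audit one suggestion in a fresh message list."""
    # Nothing from the brainstorming exchange is carried over except the
    # single suggestion, which is presented as an unverified external claim.
    messages = [
        "This suggested issue has not been verified. Audit the source code, cite "
        "file paths and line numbers, or conclude there is no security issue.",
        component_context,
        "Suggested issue: " + suggestion,
    ]
    return model(messages)


def run_pipeline(suggester: Model, auditor: Model, component_context: str) -> Dict[str, str]:
    """Run one verification per suggestion, each with its own context."""
    return {
        suggestion: verify(auditor, suggestion, component_context)
        for suggestion in brainstorm(suggester, component_context)
    }


# Stub models so the sketch runs without any API access.
def stub_suggester(messages: List[str]) -> str:
    return "IDOR on document endpoints\nStored XSS in comments"


seen_by_auditor: List[List[str]] = []


def stub_auditor(messages: List[str]) -> str:
    seen_by_auditor.append(list(messages))
    return "no security issue found"


findings = run_pipeline(stub_suggester, stub_auditor, "component: notes API, multi-user")
print(findings)
```

Because `verify` builds its message list from scratch, the auditor never sees the suggester's reasoning or the other suggestions, so it has nothing of its own to agree with.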

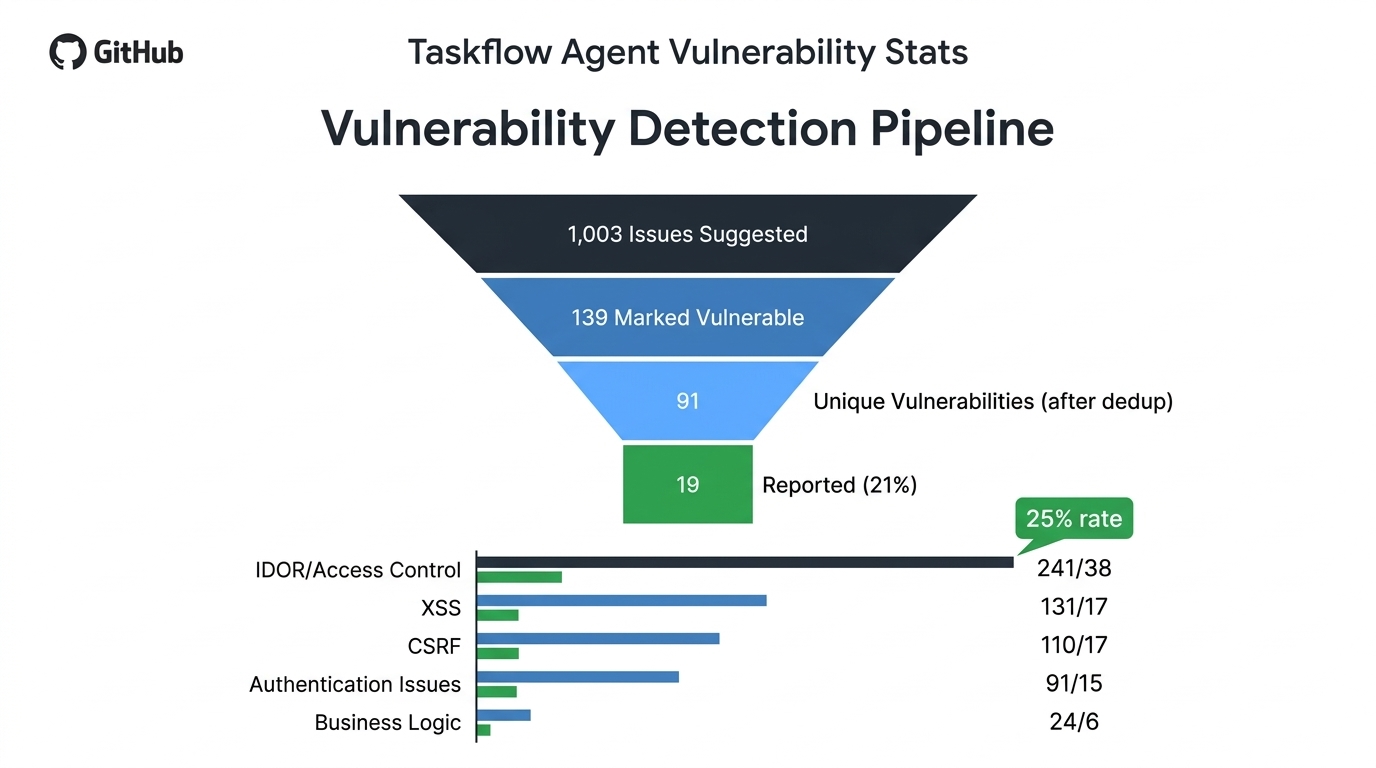

What the numbers reveal about AI vulnerability detection

GitHub Security Lab ran this pipeline across more than 40 repositories, primarily multi-user web applications. The results, documented in detail in their March 2026 blog post, make up one of the most detailed public datasets on LLM-driven security auditing so far.

| Metric | Count |
|---|---|
| Issues suggested | 1,003 |
| Marked as having vulnerabilities | 139 |
| Unique vulnerabilities after dedup | 91 |
| Manually rejected as false positives | 20 (22% of unique) |
| Rejected as low severity | 52 (57% of unique) |
| Reported as impactful vulnerabilities | 19 (21% of unique) |
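The percentages in the table are shares of the 91 unique findings, and the funnel can be checked with a few lines of arithmetic. The variable names below are only for illustration; the figures are the ones reported above.

```python
# Figures from the table above.
suggested = 1003
flagged = 139
unique = 91
outcomes = {"false positive": 20, "low severity": 52, "reported": 19}

# The three manual-review outcomes account for every unique finding.
assert sum(outcomes.values()) == unique


def pct(part, whole):
    """Percentage of `part` in `whole`, rounded to one decimal place."""
    return round(100 * part / whole, 1)


flag_rate = pct(flagged, suggested)                 # share of suggestions the auditor flagged
shares = {name: pct(count, unique) for name, count in outcomes.items()}
end_to_end = pct(outcomes["reported"], suggested)   # suggestions that became reports

print(f"flagged: {flag_rate}% of suggestions")
for name, share in shares.items():
    print(f"{name}: {share}% of unique findings")
print(f"reported: {end_to_end}% of all suggestions")
```

Read this way, the verification stage discarded roughly 86% of the brainstormed suggestions before a human saw them, and about one in five of the unique findings that reached manual review was reported as impactful.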

Several patterns stand out from the category-level data. Business logic issues had the highest confirmation rate at 25%, reinforcing that LLMs excel at understanding code semantics and access control models. IDOR and access control issues accounted for the most confirmed vulnerabilities (38), more than XSS and CSRF combined. The LLM’s ability to reason about authorization logic, including complex multi-layer permission checks, gives it a genuine advantage over traditional SAST tools in this domain.

Notably, the false positives were not hallucinations in the traditional sense. Every report, including the false positives, contained sound evidence with correct file paths and line numbers. The errors came from missing mitigating factors beyond what the source code revealed, such as browser-level XSS protections or additional authentication layers the model couldn’t see.

Practical considerations for running the framework

The Taskflow Agent is open source and can be run by anyone with a GitHub Copilot license. Getting started takes four steps: create a personal access token with model permissions, add it as a codespace secret, start a codespace from the seclab-taskflows repository, and run a single command. A medium-sized repository takes one to two hours to complete.

There are important caveats. The taskflows consume significant model quota due to the number of tool calls involved. Multiple runs are recommended because LLM non-determinism means a second run can surface entirely different findings. GitHub’s own researchers often use different models for sequential runs (for example, GPT-5.x for the first pass and Claude Opus 4.6 for the second) to maximize coverage.

The framework is built on the OpenAI Agents SDK and uses a YAML-based grammar that resembles GitHub Actions workflows. Each task starts with fresh context, and results are passed between tasks via a memcache key-value store or SQLite database. This architecture makes individual tasks debuggable and rerunnable without restarting the entire pipeline.
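The rerunnable-task idea can be illustrated with a small key-value store. This is a sketch under assumptions, not the framework's code: `ResultStore`, `run_task`, and the three toy tasks are hypothetical names, and SQLite stands in for whichever backend a real run would use.

```python
import json
import sqlite3


class ResultStore:
    """Hypothetical key-value store for task outputs, backed by SQLite."""

    def __init__(self, path=":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS kv (key TEXT PRIMARY KEY, value TEXT NOT NULL)"
        )

    def put(self, key, value):
        self.conn.execute(
            "INSERT OR REPLACE INTO kv VALUES (?, ?)", (key, json.dumps(value))
        )
        self.conn.commit()

    def get(self, key):
        row = self.conn.execute("SELECT value FROM kv WHERE key = ?", (key,)).fetchone()
        return None if row is None else json.loads(row[0])


def run_task(store, name, fn, inputs=()):
    """Run one task on the stored outputs of its named inputs, then store its result."""
    args = [store.get(key) for key in inputs]
    result = fn(*args)
    store.put(name, result)
    return result


calls = []  # records which tasks actually executed


def threat_model():
    calls.append("threat_model")
    return {"component": "notes API", "entry_points": ["GET /notes/{id}"]}


def suggest(context):
    calls.append("suggest")
    return ["IDOR on " + ep for ep in context["entry_points"]]


def audit(context, suggestions):
    calls.append("audit")
    return {s: "needs manual review" for s in suggestions}


store = ResultStore()
run_task(store, "threat_model", threat_model)
run_task(store, "suggest", suggest, inputs=["threat_model"])
run_task(store, "audit", audit, inputs=["threat_model", "suggest"])

# Rerun only the last task; upstream results are read back from the store.
run_task(store, "audit", audit, inputs=["threat_model", "suggest"])
print(calls)
```

Since each task reads its inputs from the store rather than from a shared conversation, a failed or suspicious audit can be repeated on its own, which is the property the paragraph above describes.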

What this means for security teams

The Taskflow Agent’s three-stage architecture illustrates a principle that extends beyond vulnerability scanning: structured AI workflows with clear guardrails can outperform monolithic prompts. By separating context gathering from brainstorming, and brainstorming from verification, the framework gives each stage clear objectives and constraints. The threat modeling stage ensures the LLM understands what it’s looking at. The suggestion stage gives it freedom to explore. The verification stage forces it to prove its claims.

The pipeline’s results also validate LLMs as particularly strong at finding logic bugs, the class of vulnerability that traditional tools struggle with most. Authorization bypasses, IDOR issues, and business logic flaws require understanding the intent behind code, not just its structure. That’s exactly where large language models, with their ability to reason about semantics, deliver the most value.

For teams considering AI-assisted security, the Taskflow Agent offers a ready-to-use starting point and a design template. The framework is open source at github.com/GitHubSecurityLab/seclab-taskflow-agent, with example taskflows available at github.com/GitHubSecurityLab/seclab-taskflows. The YAML-based taskflow grammar makes it straightforward to customize prompts for your own threat models and codebases, or to extend the pipeline with additional verification stages tailored to your security requirements.
