As of early 2026, the landscape of Application Security (AppSec) is undergoing a fundamental shift. For decades, Static Application Security Testing (SAST) was synonymous with “grep-on-steroids”—rule-based engines that matched code patterns against known vulnerability signatures. While these tools, such as Semgrep (v1.156) and SonarQube (v2026.2), are incredibly fast and effective at finding classic technical flaws like SQL injection or hard-coded secrets, they have historically struggled with the “logic layer.” Modern applications, particularly those utilizing complex orchestration platforms like n8n or AI-driven voice agents, rely on intricate authorization boundaries that traditional pattern matching simply cannot see. Enter GitHub Security Lab’s Taskflow Agent: an open-source, agentic AI framework that doesn’t just scan code, but reasons through it. This article explores the critical differences between AI-driven agentic scanning and traditional rule-based SAST in the 2026 security ecosystem.

The rule-based wall: Why traditional SAST misses logic flaws

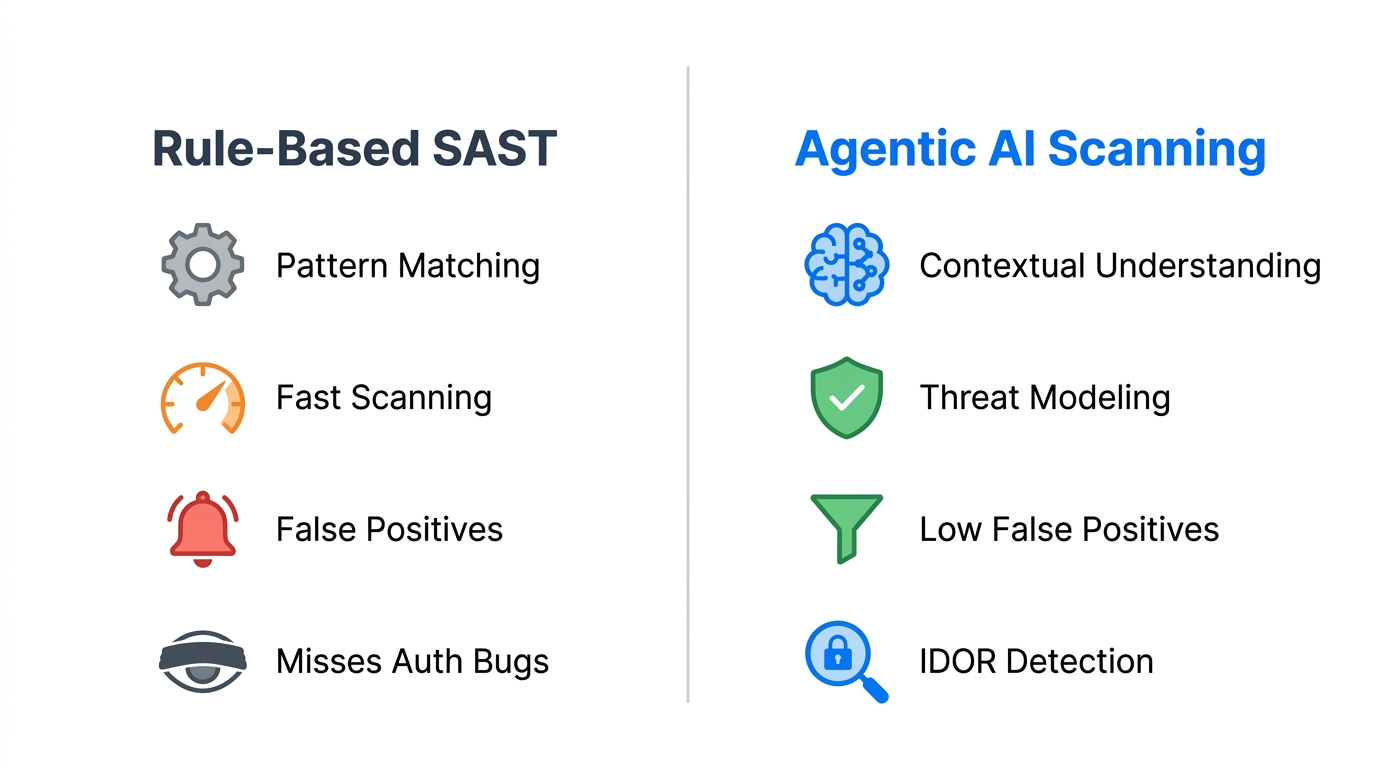

Traditional SAST tools are deterministic. They parse code into an Abstract Syntax Tree (AST) and apply taint analysis to track data from an untrusted source to a dangerous sink. This works well for an unescaped string that reaches a SQL query, but it fails when the “vulnerability” is perfectly valid code used in the wrong context. For example, an Insecure Direct Object Reference (IDOR) often looks like a standard database query: db.orders.find(id). To a rule-based engine, this is clean code. To a security researcher, it’s a critical flaw if there is no check to ensure the current_user owns that id.
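To make the IDOR case concrete, here is a minimal sketch using an in-memory store. The `orders` map, the `ownerId` field, and both function names are assumptions made for illustration; they are not taken from any real codebase. The two lookups differ by a single ownership check, which is exactly the kind of difference a signature cannot express.

```javascript
// Hypothetical in-memory order store; all names here are illustrative.
const orders = new Map([
  [1, { id: 1, ownerId: "alice", total: 40 }],
  [2, { id: 2, ownerId: "bob", total: 75 }],
]);

// Vulnerable: an ordinary lookup. There is no tainted sink for a rule to
// flag, yet any authenticated user can read any order by guessing its id.
function getOrderInsecure(currentUser, orderId) {
  return orders.get(orderId) ?? null;
}

// Fixed: the same lookup plus an ownership check.
function getOrderSecure(currentUser, orderId) {
  const order = orders.get(orderId);
  if (!order || order.ownerId !== currentUser.id) {
    return null; // same answer for "missing" and "not yours"
  }
  return order;
}

const alice = { id: "alice" };
console.log(getOrderInsecure(alice, 2)); // Bob's order is handed to Alice
console.log(getOrderSecure(alice, 2)); // null
```

Returning the same value for a missing order and a foreign order is a deliberate choice in this sketch: it avoids telling the caller which ids exist.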

In mid-2025, reports indicated that traditional SAST tools often produced false-positive rates above 90% in modern frameworks. This “alert fatigue” pushes developers to ignore mid-to-low severity findings, often burying high-impact authorization bypasses. Rule-based tools excel at speed and breadth, scanning millions of lines of code in seconds, but they lack the “semantic understanding” required to tell whether a specific business logic flow violates the intended security boundary of the application.
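A toy scanner shows both halves of this trade-off. The two regular expressions below are simplified assumptions written for this sketch, not real Semgrep or SonarQube rules, and real engines are considerably more sophisticated. Even so, the shape of the problem is visible: the scanner is fast, flags a harmless comment, and says nothing about the unguarded order lookup.

```javascript
// Two toy signature rules (illustrative only, not real product rules).
const rules = [
  { id: "hardcoded-secret", pattern: /(password|api_key)\s*=\s*["'][^"']+["']/i },
  { id: "sql-concat", pattern: /query\(\s*["'].*["']\s*\+/ },
];

// Apply every rule to every line and record which rule fired where.
function scan(source) {
  const findings = [];
  source.split("\n").forEach((line, index) => {
    for (const rule of rules) {
      if (rule.pattern.test(line)) {
        findings.push({ rule: rule.id, line: index + 1 });
      }
    }
  });
  return findings;
}

const sample = [
  'const password = "hunter2";', // true positive
  'db.query("SELECT * FROM users WHERE id = " + req.params.id);', // true positive
  '// example only: api_key = "not-a-real-key"', // noise: just a comment
  "const order = db.orders.find(req.params.id);", // missed: possible IDOR
].join("\n");

console.log(scan(sample));
```

Lines 1 to 3 are reported and line 4 is not, so one of the three findings is noise while the only authorization problem in the sample goes unreported.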

GitHub Security Lab Taskflow Agent: The agentic revolution

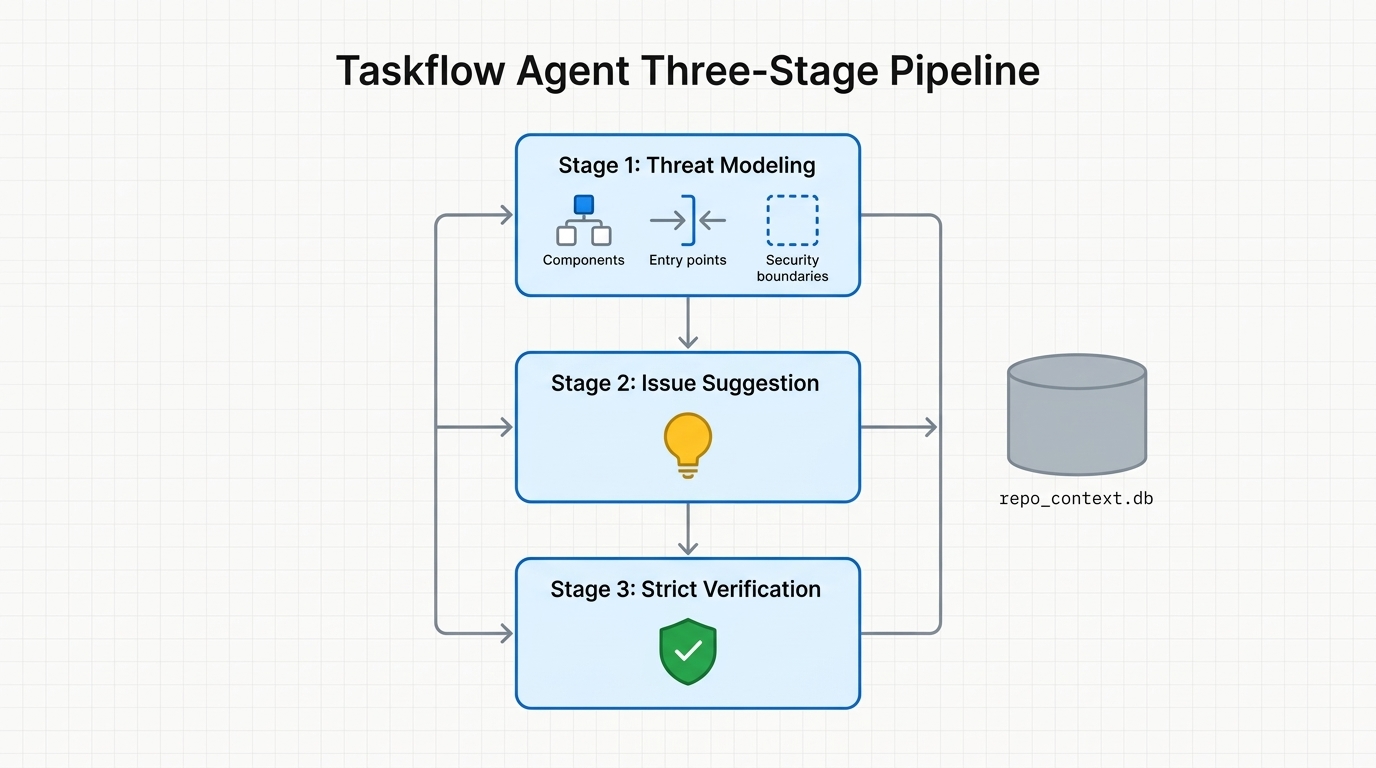

Released in late 2025 and matured by early 2026, the GitHub Security Lab Taskflow Agent represents a paradigm shift. Unlike a single-pass scanner, the Taskflow Agent is an “agentic” framework. It breaks the auditing process into a series of interconnected, autonomous tasks managed by Large Language Models (LLMs) like GPT-5.2 or Claude 4.6. Instead of looking for patterns, it performs a simulated security audit through a structured three-stage pipeline:

- Threat modeling: The agent first “reads” the entire repository to identify components, entry points, and intended user actions. It creates a repo_context.db that defines what the application is supposed to do.

- Issue suggestion: Using the context from stage one, a “brainstorming” agent suggests potential high-risk areas based on the component’s purpose (e.g., “This is a shopping cart; look for IDORs in order history”).

- Strict verification: A final agent attempts to “exploit” the suggested issues by tracing the code and generating a realistic attack scenario. If it cannot prove the flaw is reachable and impactful, the issue is discarded.
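The control flow of these three stages can be sketched in a few lines. This is not the Taskflow Agent's actual API: every function name, the `repo` object shape, and the `checksOwnership` flag are hypothetical stand-ins, and each stage that would be an LLM-backed task in practice is replaced here by a trivial deterministic function so the sketch runs on its own.

```javascript
// Hypothetical skeleton of a three-stage audit. None of these names come
// from the real framework; each stage stands in for an LLM-driven task.

// Stage 1: record what each component is supposed to do.
function buildContext(repo) {
  return repo.components.map((c) => ({
    name: c.name,
    purpose: c.purpose,
    entryPoints: c.entryPoints,
  }));
}

// Stage 2: propose issue classes worth checking, based on purpose.
function suggestIssues(context) {
  const suggestions = [];
  for (const component of context) {
    if (/order|cart|invoice/i.test(component.purpose)) {
      for (const entry of component.entryPoints) {
        suggestions.push({ component: component.name, entry, kind: "IDOR" });
      }
    }
  }
  return suggestions;
}

// Stage 3: keep a suggestion only if the evidence supports it.
// A boolean flag stands in for actually tracing the handler's code.
function verify(suggestion, repo) {
  const component = repo.components.find((c) => c.name === suggestion.component);
  return !component.handlers[suggestion.entry].checksOwnership;
}

function audit(repo) {
  const context = buildContext(repo);
  return suggestIssues(context).filter((s) => verify(s, repo));
}

const repo = {
  components: [
    {
      name: "orders",
      purpose: "Order history for a shopping cart",
      entryPoints: ["GET /orders/:id", "GET /orders"],
      handlers: {
        "GET /orders/:id": { checksOwnership: false },
        "GET /orders": { checksOwnership: true },
      },
    },
    {
      name: "health",
      purpose: "Liveness probe",
      entryPoints: ["GET /healthz"],
      handlers: { "GET /healthz": { checksOwnership: false } },
    },
  ],
};

console.log(audit(repo));
```

In this sketch only `GET /orders/:id` survives all three stages. The health endpoint is never suggested, and the list endpoint is suggested but discarded during verification, which mirrors how the final stage is meant to filter out unprovable issues.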

Performance benchmarks: Finding the “un-findable”

In the initial pilot across 40+ large-scale repositories, the Taskflow Agent demonstrated a unique ability to catch logical vulnerabilities that traditional tools missed for years. The most striking statistic from the 2026 data is that IDOR and Access Control issues accounted for 15.8% of all flagged vulnerabilities, the highest category by far. Traditional SAST tools typically catch almost none of these without heavy custom rule configuration.

| Feature | Traditional SAST (e.g., Semgrep OSS) | Agentic AI (Taskflow Agent) |
|---|---|---|
| Core Engine | Pattern Matching / Dataflow Rules | Multi-Agent LLM Reasoning |
| Scan Speed | Ludicrous (Seconds to Minutes) | Deliberate (1-2 Hours) |
| False Positive Rate | High (90%+) | Low (Filtered via Verification) |
| Logic Flaw Detection | Weak / Not Supported | Excellent (Context-Aware) |
| Primary Strength | Catching technical bugs early in CI | Catching complex authorization bypasses |

One notable success was the discovery of CVE-2026-28514 in Rocket.Chat. The Taskflow Agent identified a subtle bug where a validatePassword function returned a Promise that was never awaited. Because a Promise is an object, and every object is “truthy” in JavaScript whether or not the Promise has settled, the code allowed logins with any password. A rule-based tool would see valid function calls; the AI agent recognized the logical failure of the authentication gate.
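The bug class is easy to reproduce in isolation. The sketch below is not the Rocket.Chat code: `validatePassword`, `loginBuggy`, `loginFixed`, and the plaintext comparison are simplified assumptions chosen only to show why a missing `await` turns an authentication check into a no-op.

```javascript
// Hypothetical async check. A real system would compare password hashes;
// the plaintext comparison here only keeps the sketch short.
async function validatePassword(user, password) {
  return user.password === password;
}

// Buggy: the condition tests the Promise object itself, which is always
// truthy, so the branch is taken for every password.
function loginBuggy(user, password) {
  if (validatePassword(user, password)) {
    return "granted";
  }
  return "denied";
}

// Fixed: await the Promise so the condition tests the resolved boolean.
async function loginFixed(user, password) {
  if (await validatePassword(user, password)) {
    return "granted";
  }
  return "denied";
}

const user = { name: "alice", password: "correct horse" };
console.log(loginBuggy(user, "wrong")); // granted
loginFixed(user, "wrong").then((result) => console.log(result)); // denied
```

Both versions are syntactically valid and call the same functions, which is why the difference is hard to capture with a pattern that has no notion of what the check is for.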

Security for the modern SMB: n8n and Voice AI

For Small and Medium Businesses (SMBs) building with modern low-code tools like n8n or deploying custom voice AI agents, the security stakes have changed. These systems often connect disparate APIs, CRMs, and databases, creating a vast “glue code” surface area where authorization logic is the only defense. Traditional SAST tools are often too noisy for small teams to manage, requiring a dedicated security engineer just to triage the results.

The 2026 trend for SMBs is the integration of agentic scanners directly into the development workflow. By using the Taskflow Agent to audit n8n JSON exports or voice agent prompt-chains, SMBs can identify when an AI agent accidentally leaks PII or allows a user to “prompt-inject” their way into a higher privilege tier. Because the AI-powered scanner provides a “Summary Conclusion” and “Realistic Attack Scenario,” a non-security developer can immediately understand and fix the flaw without triaging thousands of pattern-match warnings.

Conclusion

The “AI vs. Rules” debate is not a zero-sum game; it is an evolution toward layered defense. Rule-based SAST tools like Semgrep remain indispensable for the “Inner Loop,” where they provide instantaneous feedback on technical coding standards and simple bugs. However, as applications become more complex and interconnected, the “Outer Loop” must be protected by agentic AI. The GitHub Security Lab Taskflow Agent has shown that by sacrificing raw speed for semantic reasoning, we can finally tackle the most dangerous and elusive category of web vulnerabilities: broken business logic. For organizations building the next generation of AI-integrated software, the move from pattern-matching to agentic reasoning is no longer optional; it is the new baseline for secure development.
