Small and midsize businesses running classification pipelines, content tagging workflows, or customer intent routing have always faced the same AI economics problem: the volume of requests is enormous, but each individual request is simple. Until recently, the cheapest models that could handle these tasks reliably still cost enough that running millions of them per month didn’t pencil out. OpenAI’s GPT-5.4 nano, released on March 17, 2026, alongside GPT-5.4 mini, changes that equation. At $0.20 per million input tokens and $1.25 per million output tokens, nano is 3.75 times cheaper than mini on input ($0.75) and 3.6 times cheaper on output ($4.50), opening the door to automation volumes that were previously impractical for budget-conscious organizations.

What GPT-5.4 nano is built for

GPT-5.4 nano is the smallest and fastest model in the GPT-5.4 family, explicitly designed for low-latency, high-throughput API workloads where extended multi-step reasoning isn’t required. According to Microsoft’s Azure AI Foundry guidance published the same day, nano is optimized for short-turn tasks: classification, intent detection, data extraction and normalization, ranking, and lightweight coding subagents. OpenAI’s own announcement recommends it for classification, data extraction, ranking, and coding subagents that handle simpler supporting tasks.

The model supports a 400,000-token context window and can handle up to 128,000 output tokens. It accepts both text and image inputs, making it viable for basic multimodal classification tasks like image-based content categorization. Crucially, nano also supports function and tool calling, which means it can participate in agentic workflows rather than operating as an isolated text processor.

The pricing breakdown: nano vs. the rest of the GPT-5.4 family

The economics of nano become clear when you compare it against the full GPT-5.4 lineup. Here’s where each model sits as of March 2026, based on OpenAI’s published API pricing:

| Model | Input ($/M tokens) | Cached input ($/M tokens) | Output ($/M tokens) | Best for |
|---|---|---|---|---|
| GPT-5.4 Pro | $30.00 | N/A | N/A | High-stakes reasoning |
| GPT-5.4 | $2.50 | $0.25 | $15.00 | Sustained multi-step reasoning |
| GPT-5.4 mini | $0.75 | $0.075 | $4.50 | Balanced reasoning + low latency |
| GPT-5.4 nano | $0.20 | $0.02 | $1.25 | Ultra-low latency, high throughput |

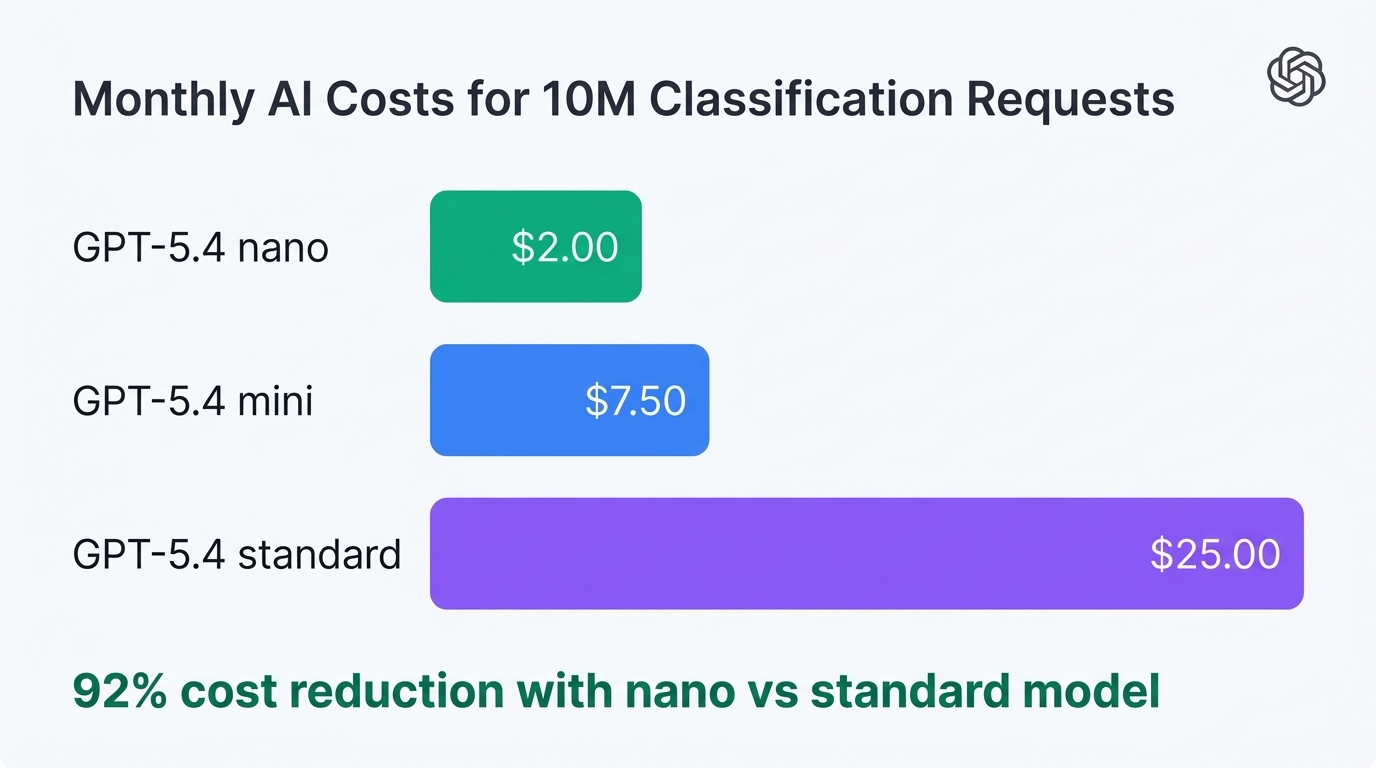

To put this in concrete terms: processing 10 million input tokens through nano costs $2.00, while the same volume through standard GPT-5.4 costs $25.00, and through mini costs $7.50. For an SMB running a customer support ticket classifier that processes 50 million tokens per month, the monthly bill drops from $125 (standard) to $37.50 (mini) to just $10 (nano) on input costs alone. Cached input pricing drops even further to $0.02 per million tokens, making repetitive classification patterns nearly free.
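The arithmetic behind these figures is easy to reproduce for your own volumes. Here is a minimal sketch, assuming the input prices from the table above; the dictionary keys are illustrative labels, not confirmed API model identifiers.

```python
# Input prices in USD per million tokens, copied from the pricing table.
# The keys are illustrative labels, not confirmed API model identifiers.
INPUT_PRICE_PER_M = {
    "gpt-5.4": 2.50,
    "gpt-5.4-mini": 0.75,
    "gpt-5.4-nano": 0.20,
}


def input_cost(model: str, tokens: int) -> float:
    """Return the uncached input cost in USD for `tokens` input tokens."""
    return INPUT_PRICE_PER_M[model] * tokens / 1_000_000


# Monthly input bill for the 50-million-token ticket classifier example.
for model in INPUT_PRICE_PER_M:
    print(f"{model}: ${input_cost(model, 50_000_000):.2f} per month")
```

Output tokens are left out on purpose: classification replies are usually a single label, so input volume tends to dominate the bill for this kind of workload.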

Real SMB automation use cases that finally make sense

At $0.20 per million input tokens, several automation patterns that were marginally viable become clearly profitable:

Customer support intent detection and routing

Every inbound support email, chat message, or social media mention can be classified by intent (billing question, technical issue, sales inquiry, complaint) and routed to the appropriate queue. At nano pricing, classifying 100,000 support interactions per day with an average of 200 input tokens each (20 million tokens per day) costs roughly $4 per day on input. Using mini at $0.75/M would run $15 per day for the same workload. Over a year, that $11 daily gap adds up to about $4,000 from a single pipeline.
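An intent classifier of this kind can be sketched in a few lines. The example below is an illustration under stated assumptions, not a reference implementation: `complete` is a hypothetical wrapper around whatever model client you use, the four intent labels come from the paragraph above, and `fake_complete` is a stand-in so the sketch runs offline.

```python
from typing import Callable

# Intent labels taken from the examples in the text.
INTENTS = ("billing", "technical", "sales", "complaint")

SYSTEM_PROMPT = (
    "Classify the customer message into exactly one intent. "
    "Reply with one word from: " + ", ".join(INTENTS) + "."
)


def parse_intent(reply: str) -> str:
    """Normalize a model reply to a known intent, or 'unknown'."""
    label = reply.strip().lower().rstrip(".")
    return label if label in INTENTS else "unknown"


def classify(message: str, complete: Callable[[str, str], str]) -> str:
    """Classify one message.

    `complete(system, user)` is a hypothetical wrapper that sends the two
    strings to a model and returns its text reply.
    """
    return parse_intent(complete(SYSTEM_PROMPT, message))


def fake_complete(system: str, user: str) -> str:
    # Stand-in for a real model call so the sketch runs without network access.
    return "Billing." if "invoice" in user.lower() else "not sure"


print(classify("Where is my invoice?", fake_complete))
```

Mapping anything outside the fixed label set to `unknown` keeps malformed replies out of the routing queues; those messages can fall back to a human or a larger model.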

E-commerce content tagging and categorization

Product catalogs with thousands of SKUs need consistent tagging for search, filtering, and recommendation engines. Nano can extract structured attributes (color, material, category, price range) from product descriptions at scale. A store with 50,000 products averaging 300 tokens per description pays about $3 in input costs to tag the entire catalog. Running the same process weekly to catch new additions and updates costs under $200 per year.

Lead scoring and prioritization

Inbound leads from web forms, email campaigns, and CRM entries can be ranked by urgency, deal size, or fit score. Nano handles this as a ranking task, consuming roughly 150 tokens per lead. A sales team processing 10,000 leads per month (about 1.5 million tokens) pays roughly $0.30 in input costs. The economics become a no-brainer when the alternative is manual triage or much more expensive model calls.

The multi-model routing strategy that maximizes nano

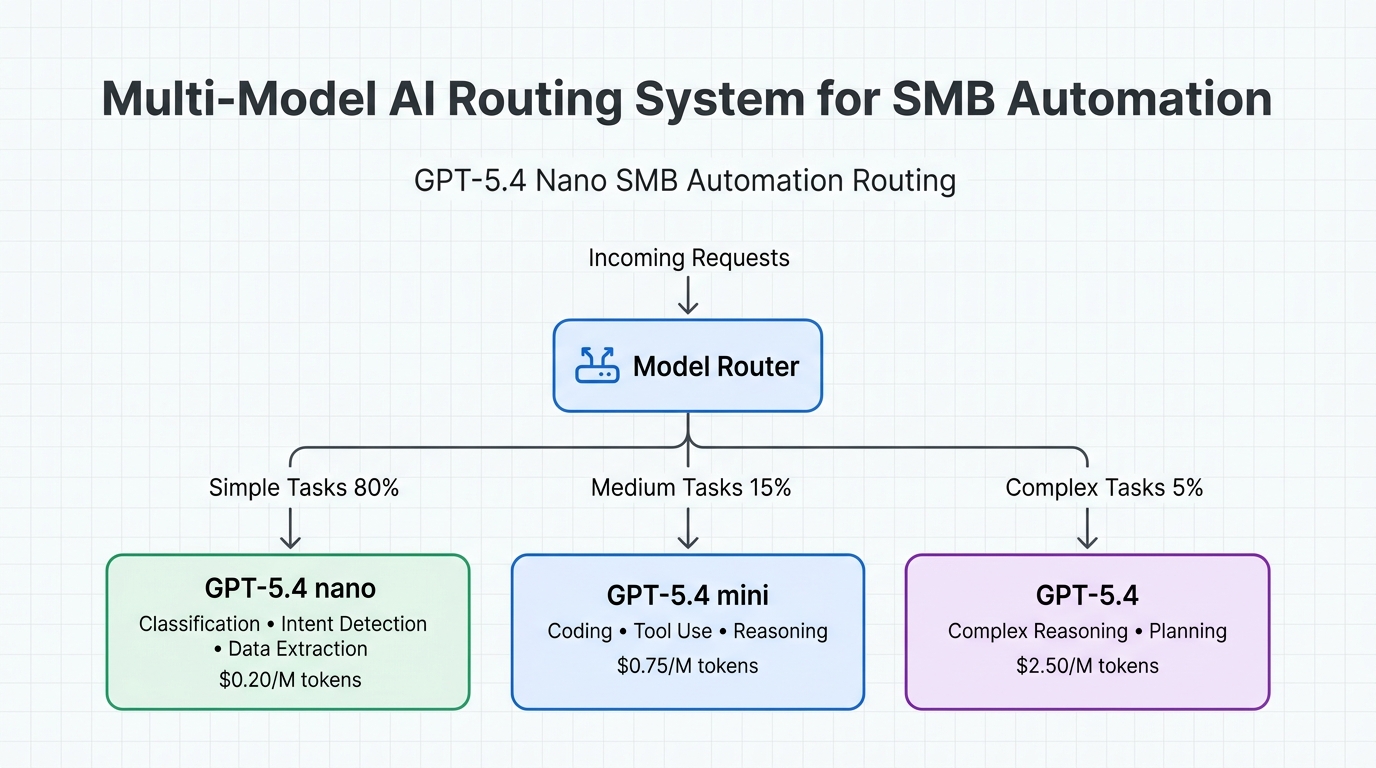

The real power of nano for SMBs isn’t using it for everything. It’s building a tiered routing architecture where nano handles the bulk of simple, repetitive tasks and escalates to more capable models only when needed. Microsoft’s Azure AI Foundry documentation explicitly recommends this approach, noting that teams can “route requests to the model that best fits each task” by deploying multiple GPT-5.4 variants side by side.

Here’s how this works in practice. A lightweight router (which can itself be nano) examines each incoming request and classifies its complexity. Simple tasks like intent detection, format validation, and field extraction go straight to nano. Requests requiring moderate reasoning, like drafting email responses or summarizing documents, route to mini. Only the most complex tasks, multi-step reasoning chains or nuanced judgment calls, escalate to the full GPT-5.4 model. In most SMB automation pipelines, 70 to 80 percent of requests fall into the simple category.
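The tiering described above can be sketched as a small dispatch function. This is a minimal illustration, assuming a complexity classifier that returns one of three labels; the model names are placeholder labels, and `keyword_classifier` is a toy stand-in for the nano-based classification call.

```python
from typing import Callable

# Tier -> model label. Labels are placeholders, not confirmed API identifiers.
MODEL_FOR_TIER = {
    "simple": "gpt-5.4-nano",
    "medium": "gpt-5.4-mini",
    "complex": "gpt-5.4",
}


def route(request: str, classify_complexity: Callable[[str], str]) -> str:
    """Return the model label that should handle `request`."""
    tier = classify_complexity(request).strip().lower()
    # Unrecognized labels escalate to the largest model rather than
    # risk under-serving the request.
    return MODEL_FOR_TIER.get(tier, MODEL_FOR_TIER["complex"])


def keyword_classifier(request: str) -> str:
    """Toy stand-in for a nano-based complexity classifier."""
    text = request.lower()
    if any(word in text for word in ("summarize", "draft")):
        return "medium"
    if any(word in text for word in ("analyze", "plan")):
        return "complex"
    return "simple"


print(route("Extract the order ID from this email", keyword_classifier))
print(route("Draft a reply to this customer", keyword_classifier))
```

Escalating on an unrecognized label is a deliberate choice in this sketch: a misrouted simple request costs a little extra, while a misrouted complex request produces a bad answer.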

Caching amplifies the savings further. OpenAI offers cached input pricing at $0.02 per million tokens for nano, a 90% discount off the standard $0.20 input rate. For repetitive prompts with shared system instructions (which describes most classification pipelines), the cached portion of each prompt is billed at a tenth of the list price, so the effective input cost falls toward $0.02 per million tokens as the shared prefix grows. Combined with intelligent routing, many organizations find their total API spend drops by 60 to 80 percent compared to running everything through a single larger model.
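How much caching saves depends on what share of your input tokens is actually served from cache. A rough way to estimate the blended rate, assuming the two nano prices quoted above and a cache-hit fraction that you measure yourself (the function name and parameter are illustrative):

```python
NANO_INPUT = 0.20         # USD per million uncached input tokens
NANO_CACHED_INPUT = 0.02  # USD per million cached input tokens


def effective_input_rate(cached_fraction: float) -> float:
    """Blended USD-per-million input rate for a given cached token share.

    `cached_fraction` is the share of input tokens billed at the cached
    rate, between 0.0 and 1.0. It is workload-specific and must be measured.
    """
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0.0 and 1.0")
    return (
        cached_fraction * NANO_CACHED_INPUT
        + (1.0 - cached_fraction) * NANO_INPUT
    )


for share in (0.0, 0.5, 0.8, 1.0):
    print(f"{share:.0%} cached: ${effective_input_rate(share):.3f} per million")
```

With 80% of input tokens cached, for example, the blended rate works out to $0.056 per million, a 72% reduction from the list price.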

Where nano falls short: know the boundaries

Nano is not a general-purpose replacement for larger models. OpenAI’s benchmarks illustrate the tradeoffs clearly. On SWE-Bench Pro (coding), nano scores 52.4% compared to mini’s 54.4% and the full model’s 57.7%. That gap is small. But on OSWorld-Verified (computer use), nano drops to 39.0% while mini holds at 72.1% and the full model reaches 75.0%. On long-context retrieval tasks (OpenAI MRCR v2 at 128K-256K), nano manages 33.1% accuracy compared to mini’s 33.6%, but both fall far short of the full model’s 79.3%.

The practical takeaway: nano excels at short-context, well-scoped tasks with predictable input patterns. It struggles with long-context reasoning, complex multi-step planning, and tasks requiring deep domain expertise. SMBs should treat nano as a high-volume workhorse, not a reasoning engine. Push classification, extraction, and routing to nano. Keep research, analysis, and complex decision-making on larger models.

Getting started: deployment options and practical steps

GPT-5.4 nano is available through the OpenAI API and Microsoft’s Azure AI Foundry. On Azure, it’s deployed under the Standard Global pricing tier, with Data Zone US availability and a rolling release to Data Zone EU. OpenRouter also lists nano with the same pricing. The model is API-only; unlike mini, it’s not available in ChatGPT or Codex.

For SMBs looking to implement nano in their automation stack, the typical path looks like this:

1. Audit existing AI workloads to identify high-volume, low-complexity tasks currently running on larger models.
2. Build a classification prompt for nano that labels request complexity (simple, medium, complex) with high confidence.
3. Implement a router that sends simple tasks to nano, medium tasks to mini, and complex tasks to the full model.
4. Enable prompt caching on shared system instructions to capture the $0.02/M cached input rate.
5. Monitor accuracy on nano-handled tasks and adjust the complexity threshold as needed.
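The monitoring step can start very simply: sample nano-handled requests, obtain a trusted label for each (from a human reviewer or a larger model), and track agreement. A minimal sketch, assuming a 95% target that is purely illustrative and should be set per task:

```python
def accuracy(predicted: list[str], reference: list[str]) -> float:
    """Share of sampled requests where nano's label matches the reference."""
    if len(predicted) != len(reference) or not reference:
        raise ValueError("need two non-empty lists of equal length")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return matches / len(reference)


def needs_escalation(
    predicted: list[str],
    reference: list[str],
    min_accuracy: float = 0.95,  # illustrative target, not a recommendation
) -> bool:
    """True when sampled accuracy falls below the target."""
    return accuracy(predicted, reference) < min_accuracy


nano_labels = ["billing", "sales", "technical", "billing"]
trusted_labels = ["billing", "sales", "technical", "complaint"]
print(accuracy(nano_labels, trusted_labels))
print(needs_escalation(nano_labels, trusted_labels))
```

When a task drops below its target, the usual response is to move that task type up a tier in the router rather than to rewrite the prompt first.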

Many organizations choose to work with automation specialists for steps 2 through 5, since the routing logic, caching configuration, and accuracy monitoring require careful tuning. The savings from proper model selection and caching strategies often pay for the implementation effort within the first month.

Bottom line

GPT-5.4 nano’s $0.20 per million input token price point removes the primary economic barrier that kept SMBs from deploying AI at scale for repetitive classification, extraction, and routing tasks. Combined with cached input pricing at $0.02/M and a tiered routing strategy that reserves larger models for genuinely complex work, organizations can realistically reduce their AI API spending by 60 to 80 percent while increasing throughput. The model is available now through OpenAI’s API and Azure AI Foundry. For any SMB running more than a million tokens per month through a single model without routing logic, building a nano-first pipeline is the single highest-ROI AI optimization available in 2026.
