On March 17, 2026, OpenAI released GPT-5.4 mini and GPT-5.4 nano, two smaller models engineered for the workloads that actually dominate production AI systems: high-volume API calls, sub-agent delegation, real-time coding assistance, and fast-turnaround classification. These models bring many of the capabilities of the flagship GPT-5.4 into faster, dramatically cheaper packages designed for latency-sensitive applications where the full model’s horsepower is simply overkill. For developers and organizations scaling AI automation, the release represents a meaningful shift toward cost-optimized, multi-model architectures where task complexity determines which model handles the request.

What GPT-5.4 mini and nano are designed for

OpenAI’s positioning is blunt and specific. GPT-5.4 mini is the near-flagship workhorse. It runs more than twice as fast as GPT-5 mini while delivering benchmark scores that approach the full GPT-5.4 on coding, reasoning, multimodal understanding, and tool use. GPT-5.4 nano is the budget specialist: the smallest, cheapest, fastest model in the GPT-5.4 family, built for classification, data extraction, ranking, and lightweight coding sub-agents that handle narrow supporting tasks inside larger systems.

Both models share a 400,000-token context window, 128,000-token max output, text and image input support, and an August 31, 2025 knowledge cutoff. The real difference between them is not context size or knowledge freshness. It is workflow depth. Mini supports computer use and tool search. Nano does not. Mini handles screenshot interpretation and complex tool-calling chains with near-flagship reliability. Nano is optimized for throughput and predictable cost on structurally simpler tasks.

Pricing and availability

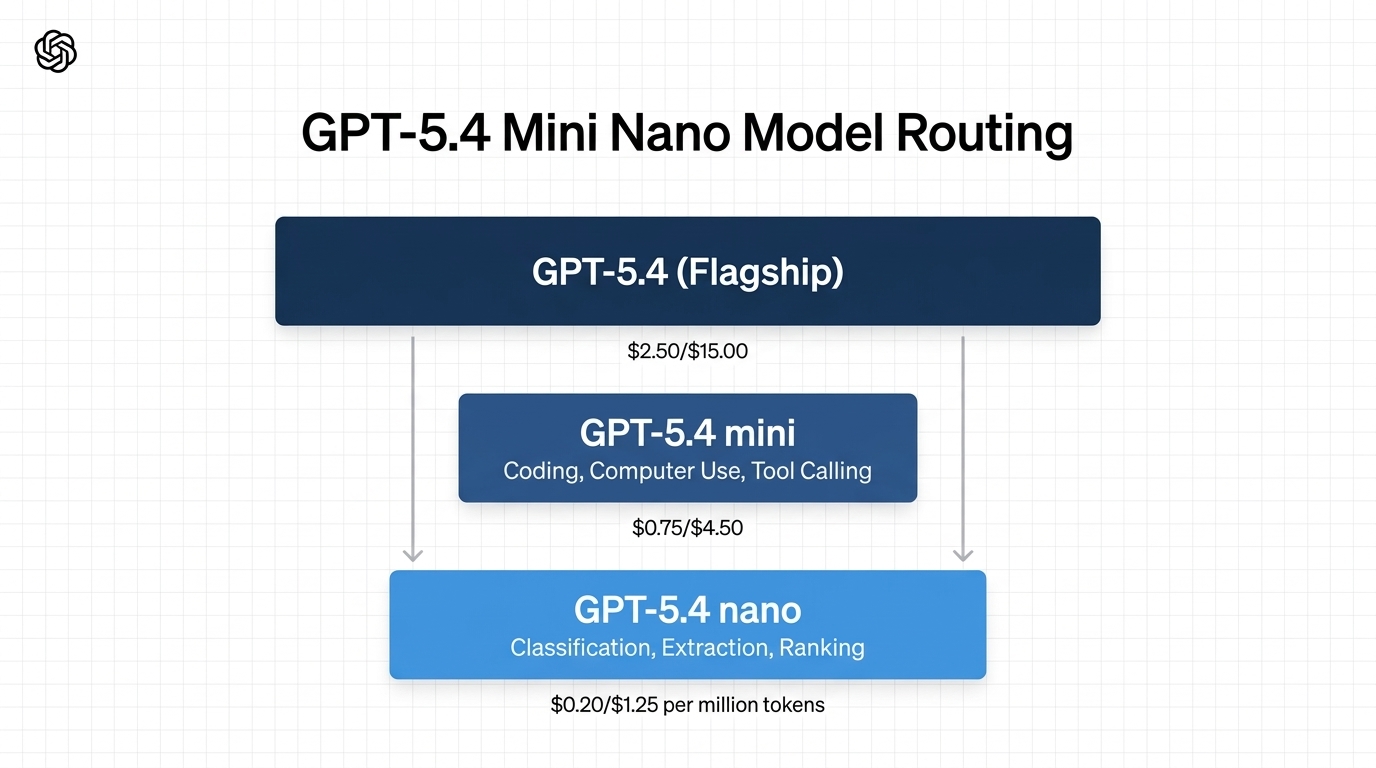

The cost gap between these models is wide enough to drive real architectural decisions. Here is the pricing breakdown as of March 2026:

| Specification | GPT-5.4 (flagship) | GPT-5.4 mini | GPT-5.4 nano |
|---|---|---|---|
| Input price (per 1M tokens) | $2.50 | $0.75 | $0.20 |
| Cached input (per 1M tokens) | — | $0.075 | $0.02 |
| Output price (per 1M tokens) | $15.00 | $4.50 | $1.25 |
| Context window | 400K | 400K | 400K |
| Availability | API, Codex, ChatGPT | API, Codex, ChatGPT | API only |
| Computer use | Yes | Yes | No |
| Tool search | Yes | Yes | No |

Mini costs roughly 3.75 times as much as nano on input and 3.6 times as much on output. Against the flagship, mini runs at roughly 30% of the per-token cost on both input and output. In OpenAI’s Codex platform specifically, GPT-5.4 mini consumes only 30% of the GPT-5.4 quota, which means developers can handle routine coding tasks at just under one-third of the quota cost while reserving the flagship model for heavier reasoning work.
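To make the ratios concrete, here is a minimal sketch that recomputes them from the list prices in the table above and estimates the cost of a single request. The tier labels (`flagship`, `mini`, `nano`) and the helper names are illustrative choices, not API model identifiers, and the calculation ignores cached-input discounts.

```python
# USD per 1M tokens, copied from the pricing table above (as of March 2026).
# The keys are illustrative tier labels, not official API model IDs.
PRICES = {
    "flagship": {"input": 2.50, "output": 15.00},
    "mini": {"input": 0.75, "output": 4.50},
    "nano": {"input": 0.20, "output": 1.25},
}


def price_ratio(tier_a: str, tier_b: str, kind: str) -> float:
    """Return how many times more tier_a costs than tier_b for 'input' or 'output'."""
    return PRICES[tier_a][kind] / PRICES[tier_b][kind]


def request_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request, ignoring cached-input discounts."""
    p = PRICES[tier]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


if __name__ == "__main__":
    for kind in ("input", "output"):
        print(kind, "mini vs nano:", round(price_ratio("mini", "nano", kind), 2))
        print(kind, "mini vs flagship:", round(price_ratio("mini", "flagship", kind), 2))
    # Hypothetical request size: 10,000 input tokens and 2,000 output tokens.
    for tier in PRICES:
        print(tier, request_cost(tier, 10_000, 2_000))
```

The request size in the example is an arbitrary assumption; substitute your own token counts to compare tiers for a real workload.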

Availability also signals intent. Mini is live across the API, Codex (app, CLI, IDE extension, and web), and ChatGPT. Free and Go tier users can access it through the Thinking feature in the plus menu. Nano remains API-only, a clear indication that OpenAI sees it as infrastructure for developers building production systems rather than a consumer-facing chat model.

Benchmark performance: how close is mini to the flagship?

The benchmark data from OpenAI’s official launch post tells a clear story. On coding and reasoning benchmarks, mini consistently outperforms the previous-generation GPT-5 mini by large margins and comes surprisingly close to the full GPT-5.4. Nano also outperforms GPT-5 mini on most coding tasks despite being a significantly smaller model.

| Benchmark | GPT-5.4 | GPT-5.4 mini | GPT-5.4 nano | GPT-5 mini |
|---|---|---|---|---|
| SWE-Bench Pro (Public) | 57.7% | 54.4% | 52.4% | 45.7% |
| Terminal-Bench 2.0 | 75.1% | 60.0% | 46.3% | 38.2% |
| GPQA Diamond | 93.0% | 88.0% | 82.8% | 81.6% |
| OSWorld-Verified | 75.0% | 72.1% | 39.0% | 42.0% |
| τ2-bench (telecom) | 98.9% | 93.4% | 92.5% | 74.1% |
| Toolathlon | 54.6% | 42.9% | 35.5% | 26.9% |

Several patterns stand out. On SWE-Bench Pro, which measures real software engineering task resolution, mini scores 54.4% compared to the flagship’s 57.7%, a gap of just 3.3 percentage points. On OSWorld-Verified, the computer-use benchmark, mini hits 72.1% versus the flagship’s 75.0%, nearly matching a model that costs more than three times as much. GPQA Diamond, a graduate-level reasoning benchmark, shows mini at 88.0% against the flagship’s 93.0%.
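The gaps quoted above can be reproduced directly from the benchmark table. The following sketch is only a convenience calculation over those published scores; the dictionary layout and function names are illustrative.

```python
# Scores in percent, copied from the benchmark table above: (GPT-5.4, GPT-5.4 mini).
SCORES = {
    "SWE-Bench Pro (Public)": (57.7, 54.4),
    "Terminal-Bench 2.0": (75.1, 60.0),
    "GPQA Diamond": (93.0, 88.0),
    "OSWorld-Verified": (75.0, 72.1),
    "τ2-bench (telecom)": (98.9, 93.4),
    "Toolathlon": (54.6, 42.9),
}


def gap_to_flagship(benchmark: str) -> float:
    """Return the flagship-minus-mini gap in percentage points, rounded to 0.1."""
    flagship, mini = SCORES[benchmark]
    return round(flagship - mini, 1)


def widest_gap() -> str:
    """Return the benchmark where mini trails the flagship by the most."""
    return max(SCORES, key=gap_to_flagship)


if __name__ == "__main__":
    for name in SCORES:
        print(f"{name}: {gap_to_flagship(name)} points")
    print("Widest gap:", widest_gap())
```

Run this way, the numbers show that mini's gap is smallest on SWE-Bench Pro and OSWorld-Verified and largest on the terminal and tool-heavy benchmarks.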

Nano’s profile is more specialized. It scores respectably on coding benchmarks—52.4% on SWE-Bench Pro is competitive for a model at this price point—but it falls off sharply on computer use (39.0% on OSWorld-Verified) and long-context retrieval tasks (33.1% on the 128K–256K needle retrieval test). This aligns with OpenAI’s positioning: nano is the right choice when the task is narrow, repetitive, and structurally simple, not when it requires deep reasoning or interface navigation.

The sub-agent architecture: why this release matters

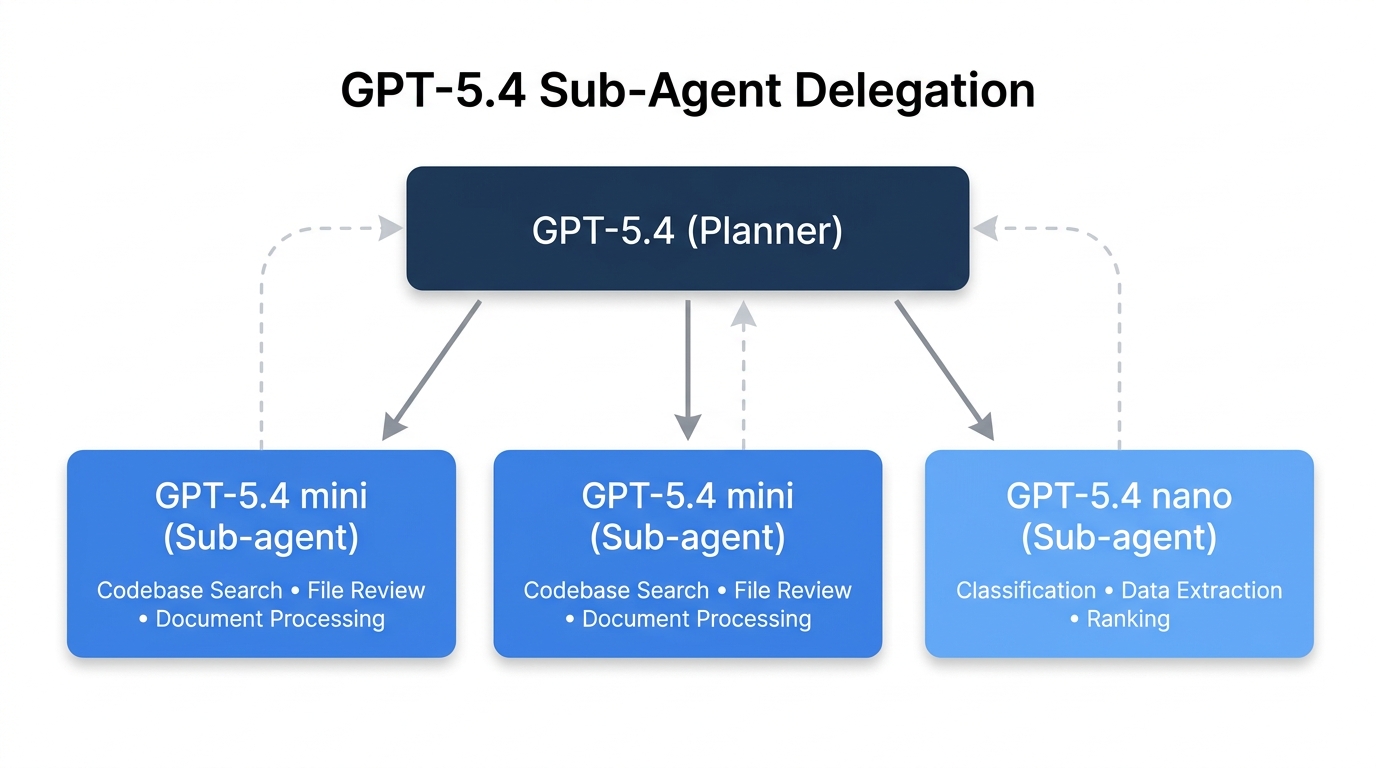

The most important context for these models is not any single benchmark score. It is the architectural pattern they enable. OpenAI is explicitly designing for a world where AI systems use multiple models of different sizes in the same workflow, with larger models handling planning, coordination, and complex judgment while smaller models execute narrower tasks in parallel.

In OpenAI’s Codex, this pattern is already operational. GPT-5.4 can handle the planning and final review of a coding task while delegating focused subtasks—searching a codebase, reviewing a large file, processing supporting documents—to GPT-5.4 mini sub-agents that run concurrently. This is not theoretical. It is how Codex works today, and it represents a significant shift in how production AI systems are built.

The practical math is straightforward. If you are running an agentic system where a flagship model delegates to sub-agents, the majority of your token spend goes to those sub-agents, not to the planner. Getting sub-agent performance that is close to flagship quality at 30% of the cost changes the unit economics of the entire system. That is why OpenAI’s competitors are pursuing the same approach: Anthropic’s Claude Haiku 4.5 serves a similar lightweight-agent role, and Google’s Gemini 3 Flash targets comparable high-throughput workloads.
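As a rough illustration of that math, the sketch below estimates what a planner-plus-sub-agents system costs relative to running everything on the flagship. It assumes the 30% price ratio from the pricing table applies uniformly to sub-agent work, and the 80% sub-agent share used in the example is a hypothetical figure, not a measured one.

```python
# Mini's list price relative to the flagship: $0.75 / $2.50 on input
# and $4.50 / $15.00 on output both come to 0.30.
MINI_TO_FLAGSHIP_PRICE_RATIO = 0.30


def relative_system_cost(
    sub_agent_share: float,
    price_ratio: float = MINI_TO_FLAGSHIP_PRICE_RATIO,
) -> float:
    """Cost of a flagship-planner + cheaper-sub-agent system, relative to all-flagship.

    sub_agent_share is the fraction of all-flagship spend attributable to
    sub-agent work (an assumption you supply; it is not given in the article).
    """
    if not 0.0 <= sub_agent_share <= 1.0:
        raise ValueError("sub_agent_share must be between 0 and 1")
    planner_share = 1.0 - sub_agent_share
    return planner_share + sub_agent_share * price_ratio


if __name__ == "__main__":
    # Hypothetical workload: 80% of spend goes to sub-agents.
    print(round(relative_system_cost(0.80), 2))
```

Under that hypothetical 80% share, the system would cost about 44% of the all-flagship baseline. The model deliberately ignores quality differences, retries, and caching, all of which would shift the real figure.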

When to use mini versus nano

The decision between mini and nano comes down to task complexity and the cost of failure, not raw capability. Here is a practical routing framework:

- Use GPT-5.4 mini when the model handles real coding work (codebase navigation, multi-file edits, diff reasoning), needs to interpret screenshots or navigate software interfaces, operates as a capable worker in a larger agent system with non-trivial subtasks, or works across large tool ecosystems where tool search matters. Pay for mini when a mistake, failure, or slow recovery in a tool workflow is expensive.

- Use GPT-5.4 nano when the task is classification, data extraction, ranking, or routing at very high volume. Use nano for intent detection, structured field extraction, candidate filtering, summarization before passing output to a planner, and lightweight validation or support tasks under a larger coordinating model.

- Use both in a dual-lane architecture where mini handles the harder agent work and nano handles the cheaper high-volume utility lane. Many production systems benefit from routing logic that assigns tasks to the appropriate model rather than standardizing on a single tier.
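The routing framework above can be sketched as a small decision function. This is a minimal illustration under stated assumptions: the `Task` fields, the task-kind strings, and the returned tier labels are all hypothetical names chosen for this example, not part of any OpenAI API.

```python
from dataclasses import dataclass

# Task kinds the article assigns to the high-volume utility lane.
# The string names are illustrative.
NANO_TASK_KINDS = frozenset({
    "classification",
    "extraction",
    "ranking",
    "routing",
    "intent_detection",
    "candidate_filtering",
    "summarization",
    "validation",
})


@dataclass(frozen=True)
class Task:
    kind: str
    needs_computer_use: bool = False    # screenshots, interface navigation
    needs_tool_search: bool = False     # large tool ecosystems
    failure_is_expensive: bool = False  # costly mistakes or slow recovery


def choose_tier(task: Task) -> str:
    """Return 'mini' or 'nano' following the routing rules described above."""
    # Nano supports neither computer use nor tool search, so these force mini.
    if task.needs_computer_use or task.needs_tool_search:
        return "mini"
    # Pay for mini when a failure in the workflow is expensive.
    if task.failure_is_expensive:
        return "mini"
    # Narrow, repetitive, structurally simple work goes to the cheap lane.
    if task.kind in NANO_TASK_KINDS:
        return "nano"
    # Default to the more capable tier for anything unrecognized.
    return "mini"


if __name__ == "__main__":
    print(choose_tier(Task("classification")))
    print(choose_tier(Task("multi_file_edit")))
    print(choose_tier(Task("extraction", failure_is_expensive=True)))
```

Defaulting unrecognized task kinds to mini is a conservative design choice for this sketch; a system that prioritizes cost over reliability could reverse it.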

Real-world validation from early adopters

Customer testing during the pre-release phase reinforced the benchmark narrative. Hebbia, which builds document-analysis tools for finance and legal professionals, found that GPT-5.4 mini matched or exceeded competitive models on output tasks and citation recall at much lower cost while achieving stronger source attribution than the larger GPT-5.4 model itself. Aabhas Sharma, Hebbia’s CTO, noted that mini delivers “strong end-to-end performance for a model in this class.”

Notion’s AI Engineering Lead, Abhisek Modi, reported that GPT-5.4 mini “matched and often exceeded GPT-5.2 on handling complex formatting at a fraction of the compute” for page editing tasks. He emphasized that smaller models like mini and nano can now reliably handle agentic tool calling, which previously required the most expensive models.

Key takeaways

- GPT-5.4 mini delivers near-flagship performance at roughly 30% of the per-token cost, with scores within 3.3 percentage points of GPT-5.4 on SWE-Bench Pro and within 2.9 points on OSWorld-Verified computer use.

- GPT-5.4 nano is the cheapest model in the GPT-5.4 family at $0.20 per million input tokens, purpose-built for classification, extraction, ranking, and lightweight coding sub-agents.

- Both models share a 400K context window and August 2025 knowledge cutoff. The meaningful differences are in supported capabilities (computer use, tool search) and benchmark performance on complex tool-heavy workflows.

- The sub-agent delegation pattern—where a flagship model plans and smaller models execute—is now a core design principle in production AI systems. These models are built for that pattern.

- For organizations scaling automation, the practical question is no longer whether to use smaller models but how to design routing logic that sends each task to the model that handles it most cost-effectively.
