The release of Gemini 3 has introduced a pivotal shift in how developers handle multimodal inputs. While earlier models often applied a “one-size-fits-all” approach to vision processing—frequently over-consuming tokens on simple images or struggling with fine text in complex documents—Gemini 3 provides surgical precision via the media_resolution parameter. This feature allows for granular control over the token budget allocated to every image, video frame, or PDF page within a single request. For businesses processing millions of documents or high-frequency video streams, mastering these settings is no longer optional; it is the primary lever for balancing state-of-the-art accuracy with sustainable operational costs. As of March 2026, this level of control has become the gold standard for high-volume multimodal automation.

Understanding the media_resolution parameter in Gemini 3

In previous iterations of the Gemini API, vision processing was largely opaque. The model would internally decide how to tile and scan an image, often resulting in unpredictable token usage that could quickly deplete context windows. Gemini 3 (including the 3.1 Pro and Flash variants released in early 2026) replaces this guesswork with the media_resolution parameter. This parameter explicitly sets the maximum number of tokens the model uses to represent a visual input, directly impacting both the level of detail the model can “see” and the latency of the response.

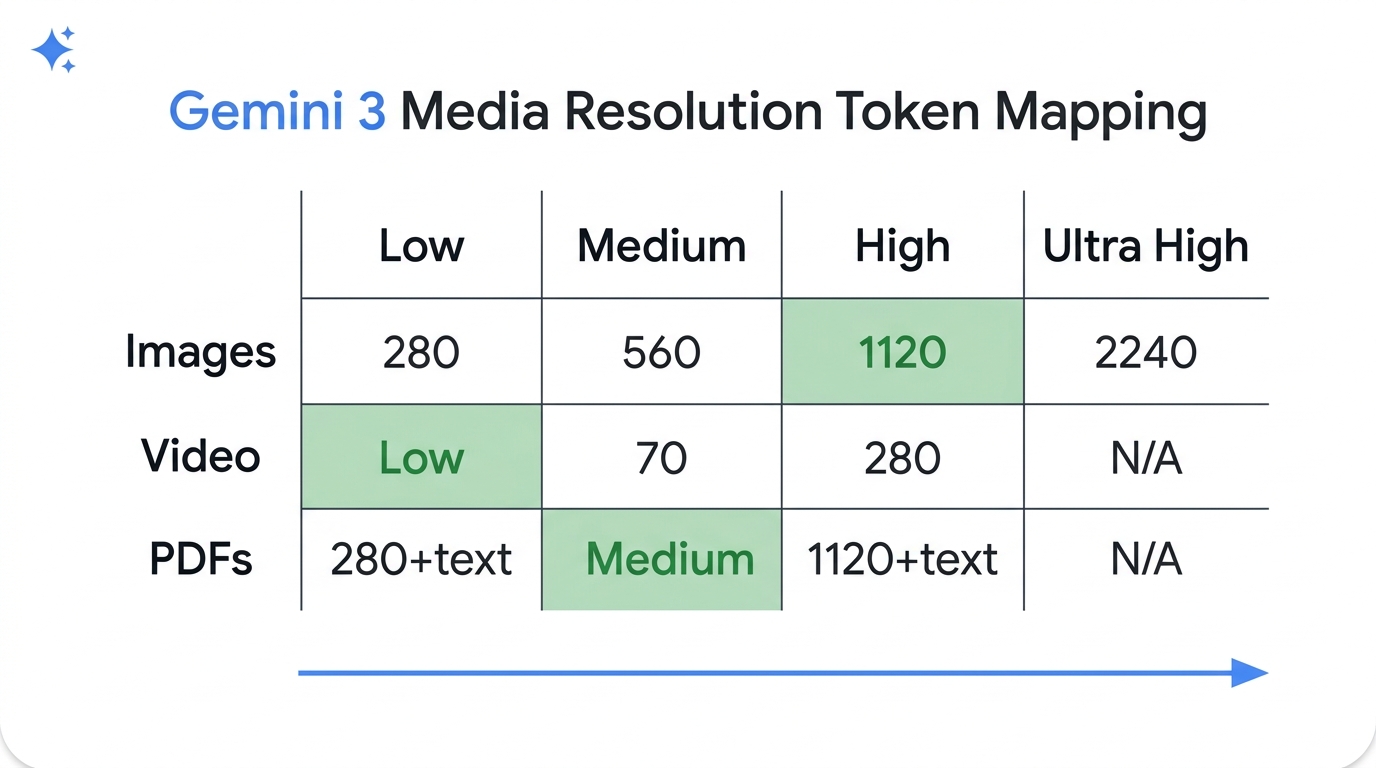

Developers can now choose from four primary resolution levels: Low, Medium, High, and Ultra High. These aren’t just arbitrary labels; they map to specific token counts that vary based on the media type. For example, setting an image to MEDIA_RESOLUTION_LOW caps it at 280 tokens, while MEDIA_RESOLUTION_HIGH permits up to 1120 tokens for capturing intricate details. This transparency allows for precise cost estimation and performance tuning before a single prompt is sent. For broader context on the model’s overall prompting behavior, see Gemini 3 Prompting: A Dev’s Guide to the Latest Techniques.
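Because each level maps to a fixed ceiling, you can estimate a request's visual budget with plain arithmetic before calling the API. The sketch below hard-codes the per-image caps quoted in this article (280, 560, 1120 and 2240 tokens). The `IMAGE_TOKEN_CAPS` dictionary and the `estimate_image_tokens` helper are illustrative names, not part of any SDK, and the results are upper bounds rather than exact billing figures.

```python
# Per-image token ceilings as quoted in this article (assumed values, not read from the SDK).
IMAGE_TOKEN_CAPS = {
    "low": 280,
    "medium": 560,
    "high": 1120,
    "ultra_high": 2240,
}


def estimate_image_tokens(levels: list[str]) -> int:
    """Return the worst-case visual token count for a list of per-image levels."""
    total = 0
    for level in levels:
        if level not in IMAGE_TOKEN_CAPS:
            raise ValueError(f"unknown resolution level: {level!r}")
        total += IMAGE_TOKEN_CAPS[level]
    return total


# Three images: two thumbnails at Low, one detailed photo at High.
print(estimate_image_tokens(["low", "low", "high"]))  # 1680
```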

Token mapping and recommended settings

Selecting the right resolution requires understanding how Gemini 3 “budgets” its visual attention. The model doesn’t just downscale the image; it adjusts the density of its internal visual embeddings. According to official Google AI documentation updated in March 2026, the optimal balance for most tasks follows a specific pattern of diminishing returns where “higher” isn’t always “better.”

| Media Type | Recommended Level | Max Tokens | Best Use Case |
|---|---|---|---|
| Static Images | High | 1120 per image | Reading small text, identifying tiny objects, chart analysis |
| PDF Documents | Medium | 560 per page (+ text) | Standard OCR, document classification, invoice processing |
| General Video | Low | 70 per frame | Action recognition, scene description, general object detection |
| Text-Heavy Video | High | 280 per frame | Reading overlays, OCR on specific frames, technical tutorials |
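The table can be encoded as a lookup so that a pipeline chooses a level per input instead of hard-coding one global setting. This is a minimal sketch: the media-type keys, the `Recommendation` tuple and the `recommend` function are hypothetical names, and the token figures are the per-unit ceilings from the table (per image, per PDF page excluding text, or per video frame).

```python
from typing import NamedTuple


class Recommendation(NamedTuple):
    level: str            # resolution level name, e.g. "high"
    tokens_per_unit: int  # per image, per PDF page, or per video frame


# Hypothetical encoding of the recommendation table above.
RECOMMENDED = {
    "image": Recommendation("high", 1120),
    "pdf": Recommendation("medium", 560),
    "video": Recommendation("low", 70),
    "text_heavy_video": Recommendation("high", 280),
}


def recommend(media_type: str) -> Recommendation:
    """Look up the suggested level for a media type, failing loudly on unknown types."""
    try:
        return RECOMMENDED[media_type]
    except KeyError:
        raise ValueError(f"no recommendation for media type {media_type!r}") from None


rec = recommend("pdf")
print(rec.level, rec.tokens_per_unit)  # medium 560
```

Failing loudly on an unknown type is a deliberate choice: silently falling back to High would quietly inflate costs for any media type the table does not cover.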

A critical insight for document processing is that Medium resolution is often the saturation point for PDFs. Google’s benchmarks indicate that increasing a standard PDF page from Medium (560 tokens) to High (1120 tokens) rarely yields a measurable improvement in OCR accuracy but doubles the cost. Conversely, for high-fidelity image analysis—such as identifying a specific serial number on a hardware component—the High or even Ultra High (2240 tokens) settings are essential to prevent the model from “glossing over” critical pixels.
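To make the "doubles the cost" point concrete, the arithmetic below compares visual tokens for a batch of PDF pages at Medium and High. It is a back-of-the-envelope sketch: the 560 and 1120 per-page figures come from the table, the 10,000-page batch size is an arbitrary example, and the extra text tokens noted in the table are left out of the calculation.

```python
# Per-page visual token ceilings for PDFs, taken from the table above.
PDF_TOKENS_PER_PAGE = {"medium": 560, "high": 1120}


def pdf_visual_tokens(pages: int, level: str) -> int:
    """Visual-token ceiling for a PDF batch; excludes any extracted text tokens."""
    return pages * PDF_TOKENS_PER_PAGE[level]


pages = 10_000  # arbitrary example batch
medium = pdf_visual_tokens(pages, "medium")
high = pdf_visual_tokens(pages, "high")
print(medium, high, high - medium)  # 5600000 11200000 5600000
```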

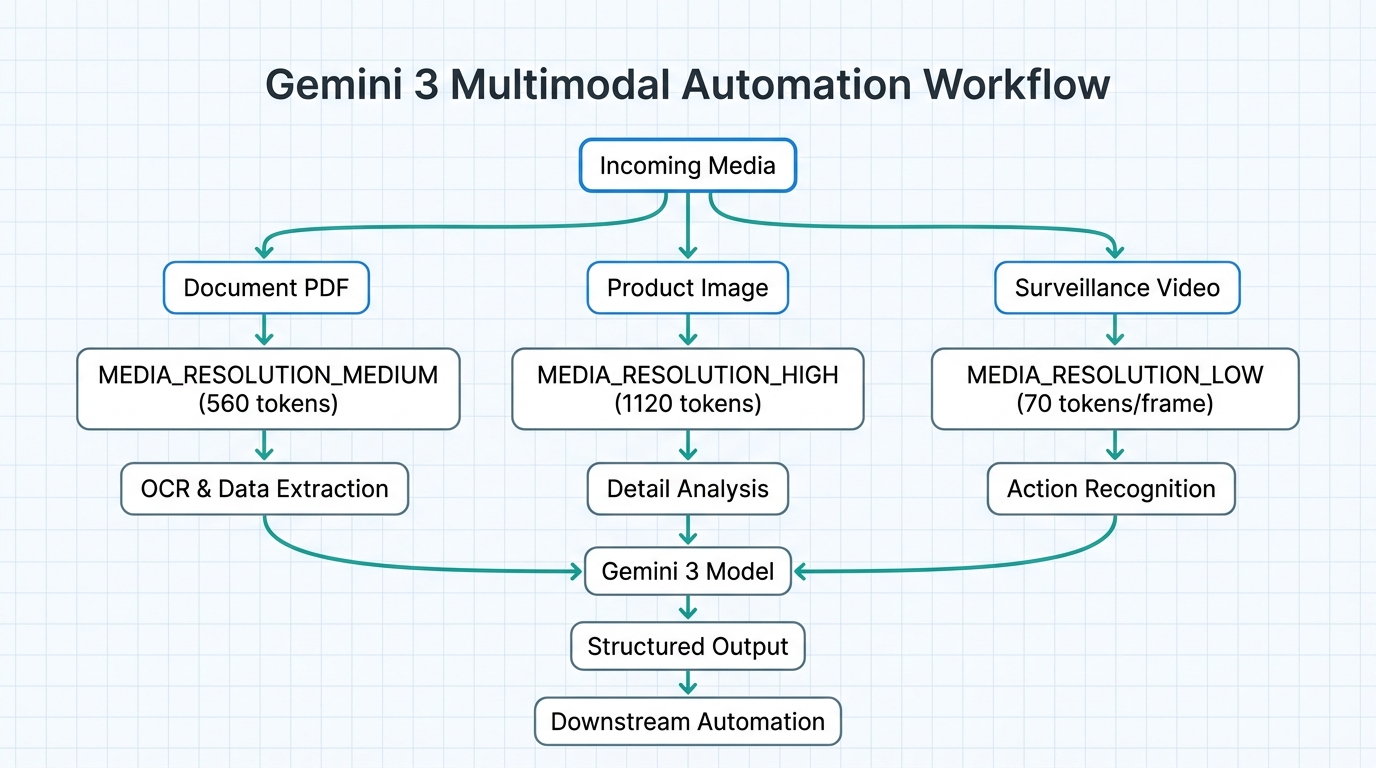

Implementing per-part resolution for mixed requests

One of the most powerful features exclusive to Gemini 3 is the ability to set resolution levels on a per-part basis. In a single multimodal prompt, you can combine multiple media objects with different resolution requirements. This is a game-changer for complex automation workflows. Imagine a customer support bot that receives a high-resolution photo of a broken product alongside a simple context image, such as the customer's profile avatar. In the past, both would be processed at the same quality level. With Gemini 3, you can save tokens on the context image while maximizing detail on the product photo.

```python
from pathlib import Path

from google import genai
from google.genai import types

# At launch, per-part media_resolution required the v1alpha API version.
client = genai.Client(http_options={'api_version': 'v1alpha'})

# High resolution for the complex visual evidence
product_photo = types.Part(
    inline_data=types.Blob(
        mime_type='image/jpeg',
        data=Path('product.jpg').read_bytes(),
    ),
    media_resolution={'level': 'media_resolution_high'},
)

# Low resolution for the simple context image (the user's avatar)
user_avatar = types.Part(
    inline_data=types.Blob(
        mime_type='image/jpeg',
        data=Path('avatar.jpg').read_bytes(),
    ),
    media_resolution={'level': 'media_resolution_low'},
)

response = client.models.generate_content(
    model='gemini-3.1-pro-preview',
    contents=["Analyze this support ticket:", product_photo, user_avatar],
)
print(response.text)
```

By leveraging per-part control, developers can construct “asymmetric” requests that allocate tokens where they provide the most value. This is particularly effective in n8n or LangGraph workflows, where different steps of a process may require different levels of visual scrutiny. Specialized n8n automation agencies often use this technique to build pipelines that process thousands of multi-image inputs while keeping per-request token usage, and therefore cost, low. A related enterprise use case is model routing, where orchestration platforms dynamically choose the right model for the task; see Gemini 3 vs GPT-5.2: Which Frontier Model Wins for Enterprise AI in 2026?
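The savings from an asymmetric request are easy to quantify. The sketch below compares the support-ticket example (product photo at High, avatar at Low) against sending both images at High, using the per-image ceilings quoted in this article. The `request_tokens` helper is a hypothetical name, and the figures are visual-token ceilings only; prompt text and output tokens are not counted.

```python
# Per-image ceilings quoted earlier in this article (assumed, not read from the SDK).
IMAGE_TOKEN_CAPS = {"low": 280, "medium": 560, "high": 1120, "ultra_high": 2240}


def request_tokens(part_levels: dict[str, str]) -> int:
    """Sum the visual-token ceilings for a mapping of part name -> resolution level."""
    return sum(IMAGE_TOKEN_CAPS[level] for level in part_levels.values())


asymmetric = {"product_photo": "high", "user_avatar": "low"}
uniform = {name: "high" for name in asymmetric}

saved = request_tokens(uniform) - request_tokens(asymmetric)
print(request_tokens(asymmetric), request_tokens(uniform), saved)  # 1400 2240 840
print(f"{saved / request_tokens(uniform):.1%} fewer visual tokens")  # 37.5% fewer visual tokens
```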

Strategic cost control and vision optimization

Beyond simple token savings, media_resolution acts as a governor for system-wide latency. Higher resolutions require the model to process more visual data before generating the first token, which increases time-to-first-token (TTFT). For real-time applications, such as a video-based AI assistant, keeping frames at MEDIA_RESOLUTION_LOW (70 tokens per frame) is usually necessary to maintain a responsive feel. For video, Gemini 3 allocates the same 70 tokens per frame at both Low and Medium, so choosing Medium buys no extra detail for this scenario.
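The per-frame figures make the video trade-off simple to compute. This sketch assumes frames are sampled at 1 frame per second (an assumption; pass a different `fps` if your pipeline samples differently) and uses the per-frame ceilings quoted in this article: 70 tokens at Low and Medium, 280 at High. The `video_tokens` helper is a hypothetical name.

```python
# Per-frame token ceilings for video as quoted in this article.
VIDEO_TOKENS_PER_FRAME = {"low": 70, "medium": 70, "high": 280}


def video_tokens(duration_s: float, level: str, fps: float = 1.0) -> int:
    """Approximate visual tokens for a clip: sampled frames x per-frame ceiling.

    fps=1.0 is an assumed sampling rate; partial frames are rounded down.
    """
    frames = int(duration_s * fps)
    return frames * VIDEO_TOKENS_PER_FRAME[level]


one_minute_low = video_tokens(60, "low")
one_minute_high = video_tokens(60, "high")
print(one_minute_low, one_minute_high)  # 4200 16800
```

A one-minute clip at High therefore carries four times the visual tokens of the same clip at Low, all of which must be processed before the first output token appears.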

To maximize cost-efficiency, organizations should implement a “Resolution Tiering” strategy. In this model, initial processing (like document classification) happens at Low or Medium resolution. Only if the model identifies a “High-Confidence Detail Requirement”—such as a complex table or a blurred signature—does the workflow trigger a second, targeted call at High resolution. This tiered approach, often implemented via automated platforms like n8n, ensures that expensive “High” resolution tokens are only consumed when absolutely necessary for the task’s success.
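The tiering logic itself is small. The sketch below is hypothetical end to end: `call_model` stands in for whatever function sends a request at a given resolution level (for example, a wrapper around `client.models.generate_content`), and the `needs_detail` flag stands in for however your workflow detects a detail requirement, such as a field in a structured response. A fake model is used in the usage example so the control flow can be checked without any API calls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class TierResult:
    answer: str
    needs_detail: bool  # hypothetical flag the first pass sets when it spots fine detail


def tiered_process(
    document: str,
    call_model: Callable[[str, str], TierResult],
) -> tuple[str, list[str]]:
    """Run a cheap first pass, escalating to High only when the model asks for it.

    Returns the final answer and the list of levels actually used.
    """
    levels_used = ["medium"]
    first = call_model(document, "medium")
    if not first.needs_detail:
        return first.answer, levels_used

    # Second, targeted call at High resolution, only for documents that need it.
    levels_used.append("high")
    second = call_model(document, "high")
    return second.answer, levels_used


# Fake model for illustration: flags anything mentioning "signature" as detail-heavy.
def fake_model(document: str, level: str) -> TierResult:
    needs = "signature" in document and level != "high"
    return TierResult(answer=f"{document} @ {level}", needs_detail=needs)


print(tiered_process("plain invoice", fake_model))
# ('plain invoice @ medium', ['medium'])
print(tiered_process("contract with signature", fake_model))
# ('contract with signature @ high', ['medium', 'high'])
```

Injecting `call_model` as a parameter keeps the escalation rule separate from the API client, so the same function can sit behind an n8n HTTP step or a LangGraph node.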

Conclusion

Mastering Gemini 3’s media resolution settings marks the transition from basic AI implementation to sophisticated, cost-aware engineering. By moving away from default configurations and adopting granular, per-part control, developers can significantly reduce token overhead without sacrificing the model’s industry-leading reasoning capabilities. The key takeaways for 2026 are clear: prioritize High resolution for intricate images, stick to Medium for document OCR, and leverage Low resolution for the majority of video processing tasks. For businesses looking to scale these multimodal workflows, the combination of Gemini 3’s precision and robust automation platforms provides a clear path to high-ROI AI operations. Implementing these optimizations today ensures that your vision-heavy applications remain both smart and sustainable in an increasingly token-conscious landscape.
