Every API call to Gemini 3 costs money, and on the Pro and Flash models the default reasoning depth is the maximum. For teams running thousands of automated requests per day through workflows and agents, that default adds up fast. The thinking_level parameter, introduced with the Gemini 3 model family in late 2025, gives developers a straightforward lever to control how deeply the model reasons before responding. Used correctly, it can cut costs dramatically without sacrificing output quality where it matters. Left at its default, it silently inflates bills on simple tasks that never needed deep reasoning in the first place.

What the thinking_level parameter actually controls

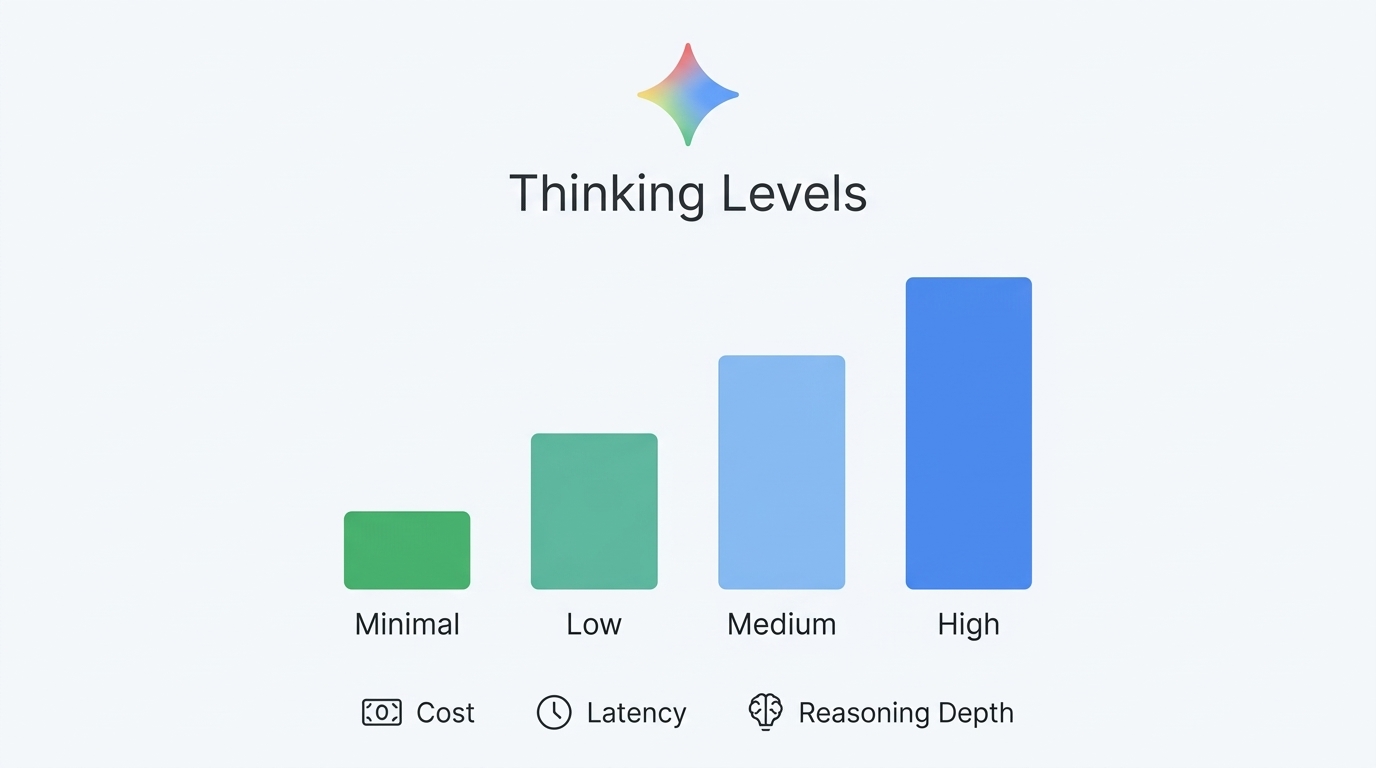

Gemini 3 models use an internal “thinking process” that runs before generating a visible response. This reasoning phase consumes tokens, and those tokens are billed at the same output rate as regular response tokens. The thinking_level parameter replaces the older thinking_budget (used by Gemini 2.5) and simplifies reasoning control into four discrete levels: minimal, low, medium, and high.

Google treats these levels as relative allowances for reasoning rather than strict token guarantees. The model still decides how much thinking to perform based on prompt complexity, but the level bounds how deep that reasoning can go. At low, the model is much less likely to spend thousands of tokens reasoning through a simple list request, as it might under the default high setting. For a deeper primer on model prompting behavior, see Gemini 3 Prompting: A Dev’s Guide to the Latest Techniques.

How each thinking level differs across models

Not every level is available on every Gemini 3 model, and defaults vary. The official Google documentation provides the following breakdown:

| Level | Gemini 3.1 Pro | Gemini 3 Flash | Gemini 3.1 Flash-Lite | Best for |
|---|---|---|---|---|
| minimal | Not supported | Supported | Supported (default) | Chat, high-throughput apps, near-zero reasoning needed |
| low | Supported | Supported | Supported | Simple instruction following, classification, extraction |
| medium | Supported | Supported | Supported | Balanced tasks: comparisons, moderate analysis |
| high | Supported (default) | Supported (default) | Supported (dynamic) | Complex math, coding, strategic analysis |

Two important details stand out. First, Gemini 3.1 Pro does not support the minimal level, so you cannot fully disable thinking on Pro models. Second, if you don’t specify a level, Pro and Flash default to high. That setting permits the deepest reasoning, and the highest potential thinking-token spend, on every request. For model selection and workflow routing ideas, it can help to compare this with Gemini 3 vs GPT-5.2: Which Frontier Model Wins for Enterprise AI in 2026?.
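Because level support differs by model, a shared config helper or routing layer may want to check a requested level before sending it. The sketch below transcribes the table above into a lookup. When a model lacks the requested level, it rounds up to the next deeper level that model does support, so minimal becomes low on Pro. The helper name resolve_thinking_level is hypothetical. Every model ID string except gemini-3-flash-preview is an assumption that you should verify against Google's current model list. None of this is part of the Gemini SDK.

```python
# Thinking levels each model accepts, transcribed from the table above.
# Model IDs other than gemini-3-flash-preview are assumptions; verify them.
SUPPORTED_LEVELS = {
    "gemini-3.1-pro-preview": {"low", "medium", "high"},
    "gemini-3-flash-preview": {"minimal", "low", "medium", "high"},
    "gemini-3.1-flash-lite-preview": {"minimal", "low", "medium", "high"},
}

# Ordered from shallowest (cheapest) to deepest (most expensive) reasoning
LEVEL_ORDER = ["minimal", "low", "medium", "high"]


def resolve_thinking_level(model: str, requested: str) -> str:
    """Hypothetical helper: return `requested` if `model` supports it, otherwise
    the next deeper level the model does support (e.g. 'minimal' -> 'low' on Pro)."""
    if requested not in LEVEL_ORDER:
        raise ValueError(f"Unknown thinking level: {requested!r}")
    supported = SUPPORTED_LEVELS[model]  # raises KeyError for models not in the table
    for level in LEVEL_ORDER[LEVEL_ORDER.index(requested):]:
        if level in supported:
            return level
    raise ValueError(f"{model} supports no level at or above {requested!r}")


print(resolve_thinking_level("gemini-3.1-pro-preview", "minimal"))  # low
print(resolve_thinking_level("gemini-3-flash-preview", "minimal"))  # minimal
```

Rounding up trades a little extra cost for never reasoning less than requested. If cost matters more than quality for a given workload, you could iterate toward cheaper levels instead.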

The cost impact in real numbers

The pricing structure makes this optimization matter. As of March 2026, Gemini 3 Flash (the model most teams use for high-volume work) charges $0.50 per million input tokens and $3.00 per million output tokens, with thinking tokens counted as output tokens. Gemini 3.1 Pro charges $2.00 per million input tokens and $12.00 per million output tokens.

When the model reasons at high for a simple prompt like “Summarize this email in one sentence,” it might generate hundreds or thousands of thinking tokens before producing a two-line answer. Those thinking tokens are billed at the same $3.00/1M (Flash) or $12.00/1M (Pro) rate as actual response text. Consider a workflow processing 10,000 emails per day. Every additional 1,000 thinking tokens per email adds 10 million output tokens a day. That works out to about $30 per day (roughly $900 per 30-day month) on Flash, or $120 per day (roughly $3,600 per month) on Pro.
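To estimate the gap for your own workload, a small calculator can help. This is a sketch under stated assumptions:

- The prices are the per-million-token rates quoted above.
- The dictionary keys are descriptive labels, not API model IDs.
- The per-email token counts (300 input, 40 output, 200 vs. 1,200 thinking) are hypothetical placeholders. Replace them with averages taken from your own usage_metadata.

```python
# Per-million-token prices quoted in this article (USD, as of March 2026).
# Keys are descriptive labels, not API model IDs.
PRICES_PER_MILLION = {
    "gemini-3-flash": {"input": 0.50, "output": 3.00},
    "gemini-3.1-pro": {"input": 2.00, "output": 12.00},
}


def monthly_cost(model: str, requests_per_day: int, input_tokens: int,
                 output_tokens: int, thinking_tokens: int, days: int = 30) -> float:
    """Estimate monthly spend in USD. Thinking tokens are billed at the output rate."""
    price = PRICES_PER_MILLION[model]
    per_request = (
        input_tokens * price["input"]
        + (output_tokens + thinking_tokens) * price["output"]
    ) / 1_000_000
    return per_request * requests_per_day * days


# Hypothetical per-email token counts for the 10,000-emails-per-day example
for model in PRICES_PER_MILLION:
    low = monthly_cost(model, 10_000, input_tokens=300, output_tokens=40, thinking_tokens=200)
    high = monthly_cost(model, 10_000, input_tokens=300, output_tokens=40, thinking_tokens=1_200)
    print(f"{model}: low ${low:,.2f}/month, high ${high:,.2f}/month, gap ${high - low:,.2f}")
```

Because thinking tokens share the output rate, the gap between levels scales with the output price. That is why the same extra reasoning costs four times as much on Pro as on Flash.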

Setting thinking_level in your code

The parameter is set through the ThinkingConfig object in the Gemini API. Here’s how to configure it in Python:

```python
from google import genai
from google.genai import types

client = genai.Client()

# Low thinking for simple tasks like summarization
response = client.models.generate_content(
    model="gemini-3-flash-preview",
    contents="Summarize this email: 'Hi team, the meeting has been moved to Thursday.'",
    config=types.GenerateContentConfig(
        thinking_config=types.ThinkingConfig(thinking_level="low")
    ),
)

print(response.text)

# Check token usage to verify savings
print("Thinking tokens:", response.usage_metadata.thoughts_token_count)
print("Output tokens:", response.usage_metadata.candidates_token_count)
```

For Node.js, the syntax uses the ThinkingLevel enum:

```javascript
import { GoogleGenAI, ThinkingLevel } from "@google/genai";

const ai = new GoogleGenAI({});

const response = await ai.models.generateContent({
  model: "gemini-3-flash-preview",
  contents: "Classify this feedback as positive or negative: 'Great product!'",
  config: {
    thinkingConfig: {
      thinkingLevel: ThinkingLevel.LOW,
    },
  },
});

console.log(response.text);
```

You can also set it directly via the REST API with curl:

```bash
curl "https://generativelanguage.googleapis.com/v1beta/models/gemini-3-flash-preview:generateContent" \
  -H "x-goog-api-key: $GEMINI_API_KEY" \
  -H 'Content-Type: application/json' \
  -X POST \
  -d '{
    "contents": [{"parts": [{"text": "Extract the date from: Meet me on March 28"}]}],
    "generationConfig": {
      "thinkingConfig": {
        "thinkingLevel": "low"
      }
    }
  }'
```

Building intelligent model routing for automated workflows

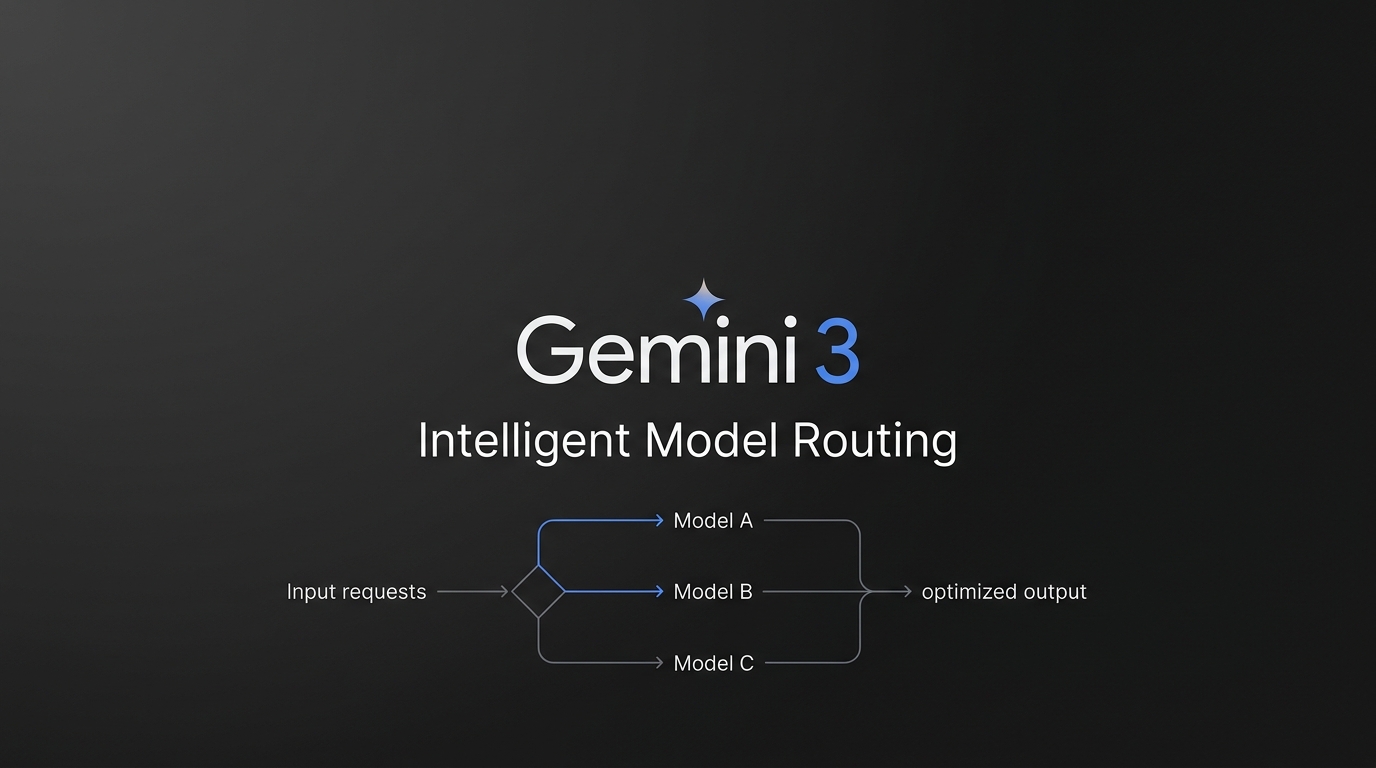

Static thinking_level settings work for simple applications. But for teams building production workflows at scale, the real savings come from dynamic routing, where each incoming request is evaluated and assigned the appropriate reasoning depth. This is where automation platforms like n8n become valuable.

The pattern works like this: a lightweight classifier (running at minimal or low) evaluates the incoming prompt, tags it by complexity, and then routes it to the appropriate thinking level. A customer support triage workflow might use low for “What are your business hours?”, medium for “Compare pricing plans for a team of 20,” and high for “Debug this Python error in our production pipeline.” Many SMBs partner with n8n automation experts to build these routing layers, since the orchestration involves multiple conditional branches, fallback logic, and monitoring that goes beyond a simple API call.

A basic routing function might look like this in Python:

```python
from google import genai
from google.genai import types

client = genai.Client()

def route_thinking_level(prompt: str) -> str:
    """Classify prompt complexity and return a thinking_level for the main request."""
    # The classification call itself runs at 'low' thinking to stay cheap
    classifier = client.models.generate_content(
        model="gemini-3-flash-preview",
        contents=(
            "Classify this task as 'simple', 'moderate', or 'complex'. "
            f"Answer with that single word only.\n\nTask: {prompt}"
        ),
        config=types.GenerateContentConfig(
            thinking_config=types.ThinkingConfig(thinking_level="low")
        ),
    )

    # response.text can be None, and the model may wrap the label in quotes
    # or add a trailing period, so normalize before the lookup
    classification = (classifier.text or "").strip().strip("'\".").lower()

    routing_map = {
        "simple": "low",       # Fact lookup, classification, extraction
        "moderate": "medium",  # Comparisons, multi-step analysis
        "complex": "high",     # Coding, math, strategic reasoning
    }
    # Unrecognized labels fall back to the balanced middle level
    return routing_map.get(classification, "medium")
```

Practical strategies to reduce costs right now

- Audit your current token usage. Check `response.usage_metadata.thoughts_token_count` on your existing calls. If thinking tokens consistently outnumber output tokens by 5x or more on simple tasks, you’re overpaying.
- Default to `low` for chat and instruction-following. Google’s own documentation recommends `low` for structured data extraction, summarization, and high-throughput applications. Reserve `high` for tasks that genuinely require multi-step reasoning.
- Use `medium` as your safe middle ground. If you’re unsure about task complexity, `medium` provides balanced reasoning without the full token cost of `high`. It’s often sufficient for comparison, moderate analysis, and structured generation.
- Consider Gemini 3 Flash over Pro for most workflows. Flash at $3.00/1M output tokens is 4x cheaper than Pro at $12.00/1M. For many tasks, Flash at `medium` thinking can deliver quality comparable to Pro at `high` for a fraction of the cost, but benchmark on your own tasks before switching.
- Monitor thought signatures in multi-turn conversations. Gemini 3 returns encrypted thought signatures that must be passed back in subsequent turns. The official SDKs handle this automatically when you use their chat interface or append the full previous response to the conversation history. Missing signatures degrade reasoning quality, which can push you toward higher thinking levels to compensate and raise costs.
- Leverage the free tier for prototyping. Gemini 3 Flash offers a free tier with lower rate limits. Use it to benchmark different thinking levels against your task types before committing to paid usage patterns.
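The audit in the first bullet can be automated once per-call token counts are logged. Here is a minimal sketch. It assumes you record the task type alongside each call's thinking and output token counts; the CallUsage record and flag_overthinking helper are hypothetical names, not SDK types. It sums tokens per task type and flags types whose aggregate thinking-to-output ratio reaches the 5x threshold mentioned above. Aggregating reflects the "consistently" criterion, so one unusually long call doesn't trigger a flag on its own.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass
class CallUsage:
    """Token counts recorded from response.usage_metadata for one API call."""
    task_type: str
    thoughts_token_count: int
    candidates_token_count: int


def flag_overthinking(calls: Iterable[CallUsage],
                      ratio_threshold: float = 5.0) -> Dict[str, float]:
    """Return task types whose aggregate thinking-to-output token ratio
    meets or exceeds ratio_threshold, mapped to that ratio (1 decimal)."""
    totals: Dict[str, Tuple[int, int]] = {}
    for call in calls:
        thoughts, outputs = totals.get(call.task_type, (0, 0))
        totals[call.task_type] = (
            thoughts + call.thoughts_token_count,
            outputs + call.candidates_token_count,
        )

    flagged: Dict[str, float] = {}
    for task_type, (thoughts, outputs) in totals.items():
        ratio = thoughts / max(outputs, 1)  # avoid dividing by zero output tokens
        if ratio >= ratio_threshold:
            flagged[task_type] = round(ratio, 1)
    return flagged


# Hypothetical logged usage for two task types
calls = [
    CallUsage("summarize_email", 900, 60),
    CallUsage("summarize_email", 700, 50),
    CallUsage("debug_code", 3_000, 1_200),
]
print(flag_overthinking(calls))  # {'summarize_email': 14.5}
```

When recording a real call, read `response.usage_metadata.thoughts_token_count` and `candidates_token_count`. Either field can come back as `None`, so guarding with `or 0` is a reasonable default.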

Key takeaways

The thinking_level parameter is one of the most impactful cost controls Google has added to the Gemini API. On Gemini 3.1 Pro and Gemini 3 Flash, every request defaults to high reasoning, which gives the model its largest thinking allowance on every prompt. For anything short of complex problem-solving, much of that thinking is wasted spend. Set low for straightforward tasks like classification and extraction, medium for balanced reasoning, and reserve high for the tasks that genuinely demand it. Track your thinking token counts with usage_metadata.thoughts_token_count to verify the savings. For production workflows processing large volumes of diverse requests, build or integrate a routing layer that dynamically assigns the right thinking level to each task. That single optimization, applied consistently, can reduce your Gemini API costs substantially without degrading output quality where it counts.
