Enterprise AI teams learned a hard lesson in 2025: bigger context windows do not automatically produce better economics. In customer support environments, AI agents often ingest CRM notes, policy documents, order history, conversation logs, and knowledge base content all at once. The result is bloated prompts, higher token costs, slower responses, and inconsistent outputs.

In 2026, the companies seeing the strongest AI agent ROI are not simply deploying larger models. They are redesigning how context reaches the model, and this is where context compression matters. This composite case study examines how a Fortune 500 company cut customer support costs by 40% by compressing, ranking, and caching support context before inference, and what your team can do to replicate those results.

Why context compression became a board-level issue in 2026

The core problem is simple. Enterprise support agents need context to answer accurately, but raw context is expensive. A single customer inquiry may require account metadata, shipping events, subscription details, past tickets, refund policy logic, compliance disclaimers, and product troubleshooting steps. When an AI system passes all of that into every request, each interaction pays for the full payload, so spend grows with both ticket volume and the amount of context attached to each ticket. Latency rises, retrieval quality degrades, and hallucination risk can actually increase because the model must sort through irrelevant material.

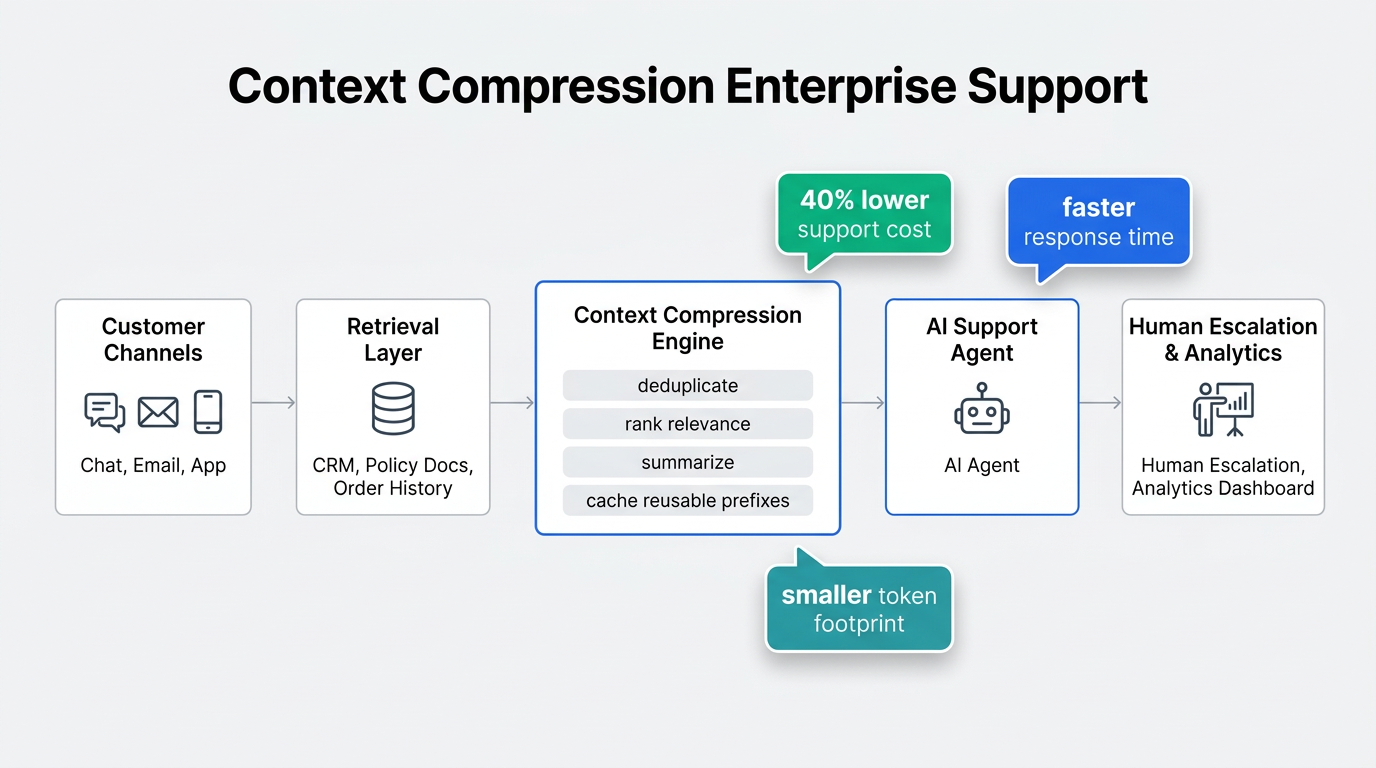

Context compression solves this by shrinking the information payload without removing the details the model truly needs. In practice, that usually means four things: deduplicating repeated content, ranking passages by task relevance, summarizing low-value text into structured notes, and caching reusable prompt prefixes such as policy instructions or brand guidelines. The result is a smaller, sharper context package that improves both economics and reliability.
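As a minimal sketch of the first of those four steps, the snippet below drops passages whose normalized text has already been seen. The names `normalize` and `dedupePassages` and the normalization rules are illustrative assumptions, and the sample passages are made up. Catching near-identical sections, as opposed to exact repeats, would likely need fuzzier matching than this.

```javascript
// Hypothetical helper: collapse case and whitespace so trivially
// different copies of the same passage compare as equal.
function normalize(text) {
  return text.toLowerCase().replace(/\s+/g, " ").trim();
}

// Keep the first occurrence of each passage and drop later repeats.
function dedupePassages(passages) {
  const seen = new Set();
  const unique = [];
  for (const passage of passages) {
    const key = normalize(passage);
    if (!seen.has(key)) {
      seen.add(key);
      unique.push(passage); // original formatting is preserved
    }
  }
  return unique;
}

// Made-up sample data for illustration.
const samplePassages = [
  "Refunds are issued within 5 business days.",
  "refunds are issued   within 5 business days.",
  "Returns require the original receipt.",
];
console.log(dedupePassages(samplePassages).length); // 2
```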

For customer support, the ROI case is unusually strong because support teams handle high volume, repetitive workflows, and measurable service-level targets. That makes context compression one of the most practical enterprise AI use cases in 2026. It affects not just model spend, but also containment rate, average handle time, escalation volume, and agent productivity.

The Fortune 500 case study: from promising pilot to cost problem

The company in this case study is a composite based on common enterprise support patterns in retail, financial services, and subscription commerce. It handled roughly 1.8 million customer support interactions per month across chat, email, and in-app messaging. In late 2025, the company launched a customer support AI agent to automate order status questions, return requests, billing disputes, and account changes. Early quality scores looked strong, but the economics were disappointing.

Each support request pulled too much context. The retrieval system attached long ticket histories, full policy pages, and multiple overlapping knowledge articles. Average prompt size was more than three times the company’s target. Escalation rates stayed higher than expected because the model sometimes missed the one paragraph that actually mattered. Leadership saw an uncomfortable pattern: the system answered many tickets, but token spend and operational complexity were eating away at the business case.

By January 2026, the AI platform team reset its architecture around a narrow question: what is the minimum context needed to resolve this support intent correctly? That shift led to a phased context compression program across the company’s support stack.

| Metric | Before context compression | After context compression |
|---|---|---|
| Average prompt token volume | High, with repeated documents and long histories | Reduced by roughly 55% |
| Average response latency | Slower and inconsistent at peak load | Reduced by roughly 28% |
| Human escalation rate | Elevated for policy-heavy cases | Reduced by roughly 18% |
| Support cost per resolved interaction | Baseline | Down 40% |
| Agent containment quality | Acceptable but uneven | More consistent across intents |
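To see how a prompt reduction of this size feeds into spend, the rough calculation below estimates monthly input-token cost before and after compression. The 1.8 million monthly interactions and the roughly 55% reduction come from the case study. The baseline prompt size and the per-token price are placeholder assumptions, so substitute your own figures.

```javascript
// Rough estimate of monthly input-token spend in USD.
function monthlyInputCost({ interactions, tokensPerPrompt, pricePerMillionTokens }) {
  return ((interactions * tokensPerPrompt) / 1_000_000) * pricePerMillionTokens;
}

const interactions = 1_800_000;   // from the case study
const reduction = 0.55;           // from the case study (approximate)
const baselineTokens = 8_000;     // ASSUMPTION: hypothetical average prompt size
const pricePerMillionTokens = 3;  // ASSUMPTION: hypothetical USD per 1M input tokens

const costBefore = monthlyInputCost({
  interactions,
  tokensPerPrompt: baselineTokens,
  pricePerMillionTokens,
});
const costAfter = monthlyInputCost({
  interactions,
  tokensPerPrompt: baselineTokens * (1 - reduction),
  pricePerMillionTokens,
});

console.log({
  costBefore: costBefore.toFixed(2),
  costAfter: costAfter.toFixed(2),
  saved: (costBefore - costAfter).toFixed(2),
});
```

Input tokens are only one part of the picture. The drop in cost per resolved interaction also reflects changes in escalation and handling time, which this calculation does not model.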

What changed in the architecture

The biggest win did not come from changing the model. It came from changing the pipeline before the model call. The company introduced a compression layer between retrieval and inference. Instead of sending raw documents directly into the prompt, the system created a compact task-specific brief for each interaction.

- Intent-first routing: The system classified the customer request before retrieval, which narrowed the search space and prevented irrelevant policy data from entering the context.

- Passage-level ranking: Retrieved documents were chunked and scored at the passage level, not the page level, so the model saw only the most relevant snippets.

- Structured summarization: Long CRM histories and prior conversations were converted into short factual summaries with fields such as issue type, prior resolution attempts, account risk, and current status.

- Deduplication logic: Repeated policy text, signature blocks, and near-identical knowledge base sections were removed automatically.

- Prefix caching: Stable instructions such as tone, compliance rules, and support workflows were cached and reused across requests.

This changed the prompt from a document dump into a decision packet. For example, a billing dispute no longer carried the full customer history, two full policy pages, and five past ticket threads. Instead, it carried the current dispute summary, the one applicable billing rule, the latest transaction facts, and the approved resolution workflow. That dramatically improved the signal-to-noise ratio.
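The passage-level ranking step can be sketched with a simple term-overlap score. This is a stand-in for illustration only: a production system would more likely use embedding similarity or a reranking model. The names `scorePassage` and `rankPassages`, along with the default threshold and limit, are assumptions.

```javascript
// Split text into a set of lowercase alphanumeric terms.
function tokenize(text) {
  return new Set(text.toLowerCase().match(/[a-z0-9]+/g) ?? []);
}

// Fraction of distinct query terms that also appear in the passage (0 to 1).
function scorePassage(query, passage) {
  const queryTerms = tokenize(query);
  if (queryTerms.size === 0) return 0;
  const passageTerms = tokenize(passage);
  let hits = 0;
  for (const term of queryTerms) {
    if (passageTerms.has(term)) hits += 1;
  }
  return hits / queryTerms.size;
}

// Score every passage, drop weak matches, and keep the top few.
function rankPassages(query, passages, { minScore = 0.3, limit = 3 } = {}) {
  return passages
    .map((text) => ({ text, score: scorePassage(query, text) }))
    .filter((p) => p.score >= minScore)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit);
}

// Made-up sample data for illustration.
const ranked = rankPassages("refund for damaged item", [
  "Shipping times vary by region.",
  "Damaged item refund policy applies within 30 days.",
]);
console.log(ranked.map((p) => p.text));
```

The scoring is crude (common words such as "for" count as query terms), but the shape is the point: score small passages, filter by threshold, then cap how many reach the prompt.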

A simplified implementation pattern

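```javascript
// Example support pipeline for compressed enterprise context.
// The helpers (classifyIntent, retrieveRelevantSources, chunkAndScore,
// summarizeCRMHistory, compressPolicies) are assumed to be defined elsewhere.
async function buildSupportContext(ticket) {
  // 1. Classify first so retrieval can be scoped to the intent.
  const intent = await classifyIntent(ticket.message);

  // 2. Retrieve a bounded set of candidate sources.
  const retrieved = await retrieveRelevantSources({
    intent,
    customerId: ticket.customerId,
    orderId: ticket.orderId,
    topK: 12
  });

  // 3. Score at the passage level and keep at most six strong matches.
  //    Sorting before slicing ensures the six kept are the highest scoring.
  const passages = chunkAndScore(retrieved, ticket.message)
    .filter(p => p.score > 0.72)
    .sort((a, b) => b.score - a.score)
    .slice(0, 6);

  // 4. Reduce long CRM history to a fixed set of structured fields.
  const crmSummary = summarizeCRMHistory(ticket.customerHistory, {
    fields: ["issue_type", "status", "last_resolution", "risk_flag"]
  });

  // 5. Strip duplicate policy text and keep only the decision rules.
  const policySummary = compressPolicies(passages, {
    removeDuplicates: true,
    keepDecisionRulesOnly: true
  });

  // 6. Return a key for the cached stable prefix plus the per-request context.
  return {
    cachedPrefixKey: `support-policy-v3-${intent}`,
    liveContext: {
      customer_issue: ticket.message,
      crm_summary: crmSummary,
      relevant_rules: policySummary,
      order_facts: ticket.orderFacts
    }
  };
}
```

The point of this pattern is not the exact code. It is the sequencing. Classification happens first, retrieval becomes narrower, summaries are structured rather than free-form, and the final prompt contains only what the model needs to act safely.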
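One way the object returned by `buildSupportContext` might be turned into a final prompt is sketched below. The in-memory `prefixCache`, the `assemblePrompt` function, the `billing_dispute` intent label, and the prefix text are all hypothetical. In practice, prefix caching is usually handled by the model provider or serving layer. The sketch only illustrates the principle that the stable prefix comes first and stays identical across requests, with the per-request context appended after it.

```javascript
// Hypothetical in-memory store of stable instruction prefixes, keyed by intent.
const prefixCache = new Map([
  [
    "support-policy-v3-billing_dispute",
    "You are a support agent. Follow the billing dispute workflow. Be concise.",
  ],
]);

function assemblePrompt({ cachedPrefixKey, liveContext }) {
  const prefix = prefixCache.get(cachedPrefixKey);
  if (prefix === undefined) {
    throw new Error(`No cached prefix for key: ${cachedPrefixKey}`);
  }
  // Render live context as labeled sections in sorted key order so the
  // layout is deterministic from request to request.
  const body = Object.keys(liveContext)
    .sort()
    .map((key) => `## ${key}\n${JSON.stringify(liveContext[key])}`)
    .join("\n\n");
  return `${prefix}\n\n${body}`;
}

// Made-up sample data for illustration.
console.log(
  assemblePrompt({
    cachedPrefixKey: "support-policy-v3-billing_dispute",
    liveContext: {
      customer_issue: "I was charged twice this month.",
      order_facts: { charges: 2, amount: 19.99 },
    },
  })
);
```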

How the 40% savings showed up in the P&L

The headline result was a 40% reduction in customer support cost for AI-assisted and AI-resolved interactions. That did not come from token savings alone. In fact, the company tracked four separate value levers.

- Lower inference spend: Smaller prompts meant fewer input tokens and less waste from redundant context.

- Higher automation rates: Better context quality improved first-pass resolution for routine cases such as refunds, address changes, and shipping exceptions.

- Reduced human rework: Agents receiving escalated tickets saw cleaner summaries and spent less time re-reading raw logs.

- Faster handling time: Lower latency and more focused answers shortened the full interaction cycle.
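These levers can be combined in a rough blended cost model. Every number in the usage example is a placeholder assumption, not a figure from the case study, and the single-formula model is a simplification. The aim is only to show that inference spend and escalation rate both move the cost per interaction.

```javascript
// Simplified model: every interaction incurs AI inference cost, and the
// escalated share additionally incurs human handling cost.
function costPerInteraction({ inferenceCost, escalationRate, humanCostPerEscalation }) {
  return inferenceCost + escalationRate * humanCostPerEscalation;
}

// ASSUMPTION: all values below are hypothetical placeholders.
const baseline = costPerInteraction({
  inferenceCost: 0.05,
  escalationRate: 0.3,
  humanCostPerEscalation: 6,
});
const compressed = costPerInteraction({
  inferenceCost: 0.02,
  escalationRate: 0.25,
  humanCostPerEscalation: 5,
});
console.log({ baseline: baseline.toFixed(2), compressed: compressed.toFixed(2) });
```

In a model like this, even a modest change in escalation rate can outweigh the inference line item, which is consistent with the observation that the savings did not come from tokens alone.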

One overlooked benefit was governance. Compressed prompts made the system easier to audit. Security and compliance teams could inspect exactly which rules and facts were passed into each response. That matters in regulated support environments where AI agents must show traceable decision logic.
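A sketch of what such an audit trail could look like follows: each response is paired with a record of which rules and facts entered the prompt, plus a hash of that context so later tampering or drift can be detected. The record shape, field names, and sample identifiers are assumptions for illustration.

```javascript
const crypto = require("crypto");

// Build an audit record for one model call.
function buildAuditRecord({ ticketId, intent, ruleIds, facts }) {
  // Hash the exact context that was sent so the record can be verified later.
  const payload = JSON.stringify({ intent, ruleIds, facts });
  return {
    ticketId,
    intent,
    ruleIds,
    factKeys: Object.keys(facts),
    contextHash: crypto.createHash("sha256").update(payload).digest("hex"),
    recordedAt: new Date().toISOString(),
  };
}

// Made-up sample data for illustration.
const auditRecord = buildAuditRecord({
  ticketId: "T-1001",
  intent: "billing_dispute",
  ruleIds: ["billing-rule-7"],
  facts: { lastChargeAmount: 19.99, chargeCount: 2 },
});
console.log(auditRecord);
```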

“The best enterprise AI cost reduction projects do not start with a cheaper model. They start with cleaner context.” (Practical lesson from the 2026 customer support AI playbook)

Actionable rollout plan for enterprise teams

If you want to reproduce this kind of AI agent ROI, start with one support workflow where context is expensive and success is measurable. Returns, billing disputes, renewals, and shipping issues are often ideal because they combine high volume with structured policies.

- Measure your current context footprint. Track average tokens per request, retrieval depth, duplicated passages, and latency by support intent.

- Define the minimum decision set. For each workflow, identify the exact facts, rules, and historical signals required for safe resolution.

- Introduce a compression layer. Add ranking, summarization, and deduplication before the model call rather than relying on the model to do that work itself.

- Cache what does not change. Policy instructions, tone rules, and workflow scaffolding should not be rebuilt on every request.

- Evaluate quality and economics together. A smaller prompt is only a win if resolution accuracy, containment, and compliance stay strong or improve.

- Roll out intent by intent. Do not compress every workflow at once. Tune high-volume categories first and expand from there.
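The first step in the list, measuring the context footprint, can start as a small aggregation over request logs. The log record shape (`intent`, `promptTokens`, `latencyMs`) is an assumption. Map it to whatever your gateway or observability stack actually records.

```javascript
// Aggregate request logs into per-intent averages, heaviest prompts first.
function footprintByIntent(logs) {
  const totals = new Map();
  for (const { intent, promptTokens, latencyMs } of logs) {
    const t = totals.get(intent) ?? { requests: 0, tokens: 0, latencyMs: 0 };
    t.requests += 1;
    t.tokens += promptTokens;
    t.latencyMs += latencyMs;
    totals.set(intent, t);
  }
  return [...totals]
    .map(([intent, t]) => ({
      intent,
      requests: t.requests,
      avgPromptTokens: t.tokens / t.requests,
      avgLatencyMs: t.latencyMs / t.requests,
    }))
    .sort((a, b) => b.avgPromptTokens - a.avgPromptTokens);
}

// Made-up sample data for illustration.
console.table(
  footprintByIntent([
    { intent: "returns", promptTokens: 1000, latencyMs: 200 },
    { intent: "returns", promptTokens: 3000, latencyMs: 400 },
    { intent: "billing", promptTokens: 5000, latencyMs: 900 },
  ])
);
```

Sorting by average prompt size puts the most expensive intents at the top, which is a reasonable starting order for the intent-by-intent rollout.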

Teams should also avoid a common mistake: over-compressing critical evidence. The goal is not the shortest possible prompt. The goal is the highest-value prompt. If a dispute decision depends on a very specific clause or transaction detail, that evidence should stay explicit in the context package.
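One way to guard against over-compression is to mark critical evidence as pinned and fill the remaining token budget with the best-scoring optional items. The `pinned` flag, the per-item `tokens` estimate, the `score` field, and the budget value are hypothetical.

```javascript
// Select context items within a token budget without ever dropping pinned evidence.
function selectWithinBudget(items, tokenBudget) {
  const pinned = items.filter((item) => item.pinned);
  const optional = items
    .filter((item) => !item.pinned)
    .sort((a, b) => b.score - a.score);

  let used = pinned.reduce((sum, item) => sum + item.tokens, 0);
  if (used > tokenBudget) {
    // Surface the conflict instead of silently dropping required evidence.
    throw new Error(`Pinned evidence needs ${used} tokens, budget is ${tokenBudget}`);
  }

  const selected = [...pinned];
  for (const item of optional) {
    if (used + item.tokens <= tokenBudget) {
      selected.push(item);
      used += item.tokens;
    }
  }
  return selected;
}

// Made-up sample data for illustration.
const chosen = selectWithinBudget(
  [
    { id: "dispute-clause", tokens: 300, score: 0.2, pinned: true },
    { id: "kb-article-1", tokens: 400, score: 0.9 },
    { id: "kb-article-2", tokens: 400, score: 0.8 },
    { id: "kb-article-3", tokens: 100, score: 0.5 },
  ],
  800
);
console.log(chosen.map((item) => item.id));
// [ 'dispute-clause', 'kb-article-1', 'kb-article-3' ]
```

The pinned clause survives even though its relevance score is the lowest in the set, which is the behavior the warning above calls for.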

What this means for customer support AI in 2026

Enterprise use cases for context compression are moving from optimization projects to core architecture decisions. As AI agents spread across support operations, finance teams are asking harder questions about marginal cost, scaling behavior, and measurable productivity gains. The organizations with the strongest answers are building systems that treat context as a scarce resource, not an unlimited input stream.

The lesson from this Fortune 500 case study is clear. A 40% cost reduction did not require a dramatic reinvention of support operations. It required better information discipline: retrieve less, compress intelligently, cache what repeats, and send the model only what matters. If your enterprise support AI program is struggling to prove value, context compression may be the fastest path to stronger AI agent ROI, better customer outcomes, and a support architecture that scales cleanly through 2026 and beyond.