As agentic AI systems grow more complex, the demand for models that balance reasoning depth with computational efficiency has intensified. NVIDIA’s Nemotron 3 Super represents a significant evolution in open model design, introducing architectural innovations that address the “thinking tax” and “context explosion” challenges plaguing multi-agent workflows. Released in March 2026, this 120-billion-parameter model builds upon its predecessors with breakthrough efficiency gains while maintaining strong accuracy across reasoning, coding, and agentic benchmarks. Understanding these upgrades is crucial for developers and businesses seeking to deploy scalable AI agents without prohibitive infrastructure costs.

Latent MoE: Redefining Expert Efficiency

The most fundamental advancement in Nemotron 3 Super is its Latent Mixture-of-Experts (MoE) architecture, which solves a critical bottleneck in traditional MoE designs. Standard MoE routes tokens directly from the model’s full hidden dimension to experts, creating computational overhead that limits practical expert scaling. Nemotron 3 Super’s Latent MoE compresses token embeddings into a lower-dimensional latent space before routing, enabling the model to consult 4x as many experts for the same inference cost. This compression-decompression approach reduces both parameter loading and all-to-all communication traffic by a factor of d/ℓ (where d is hidden dimension and ℓ is latent dimension). The resulting architecture supports 512 total experts per layer with top-22 activation, providing finer-grained specialization for diverse agentic tasks like code generation, mathematical reasoning, and tool use without proportional increases in latency or memory bandwidth requirements.
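The d/ℓ argument above can be checked with rough arithmetic. The sketch below is a simplified cost model, not NVIDIA's implementation: the dimensions, the expert width, and the baseline top-k are hypothetical values chosen so that d/ℓ = 4, and the cost of the compression and decompression projections and of the router is ignored.

```python
def routed_cost_per_token(width, experts_per_token, ffn_width):
    """Rough per-token cost of the routed experts at a given input width.

    Returns (expert weights read, activation values moved between devices).
    """
    # Each expert is modeled as two projections: width -> ffn_width -> width.
    weights_read = experts_per_token * 2 * width * ffn_width
    # All-to-all traffic: the token vector goes to each expert and comes back.
    values_moved = experts_per_token * 2 * width
    return weights_read, values_moved


# All four values below are hypothetical, picked only so that d / l = 4.
HIDDEN_DIM = 4096   # d: the model's full hidden dimension
LATENT_DIM = 1024   # l: the compressed latent dimension
FFN_WIDTH = 2048    # inner width of a single expert
BASE_TOP_K = 2      # experts consulted per token in the standard design

ratio = HIDDEN_DIM // LATENT_DIM

# Standard MoE: experts operate on the full hidden dimension.
standard = routed_cost_per_token(HIDDEN_DIM, BASE_TOP_K, FFN_WIDTH)
# Latent MoE with the same top-k: both costs shrink by d / l.
same_k_latent = routed_cost_per_token(LATENT_DIM, BASE_TOP_K, FFN_WIDTH)
# Latent MoE spending the savings on d / l times as many experts.
latent = routed_cost_per_token(LATENT_DIM, BASE_TOP_K * ratio, FFN_WIDTH)

print("standard MoE:         ", standard)
print("latent, same top-k:   ", same_k_latent)
print("latent, 4x the top-k: ", latent)
```

Under these assumptions, routing in the latent space with four times as many active experts costs the same as the standard design, which is the trade the paragraph describes.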

Hybrid Mamba-Transformer Backbone for Long-Context Reasoning

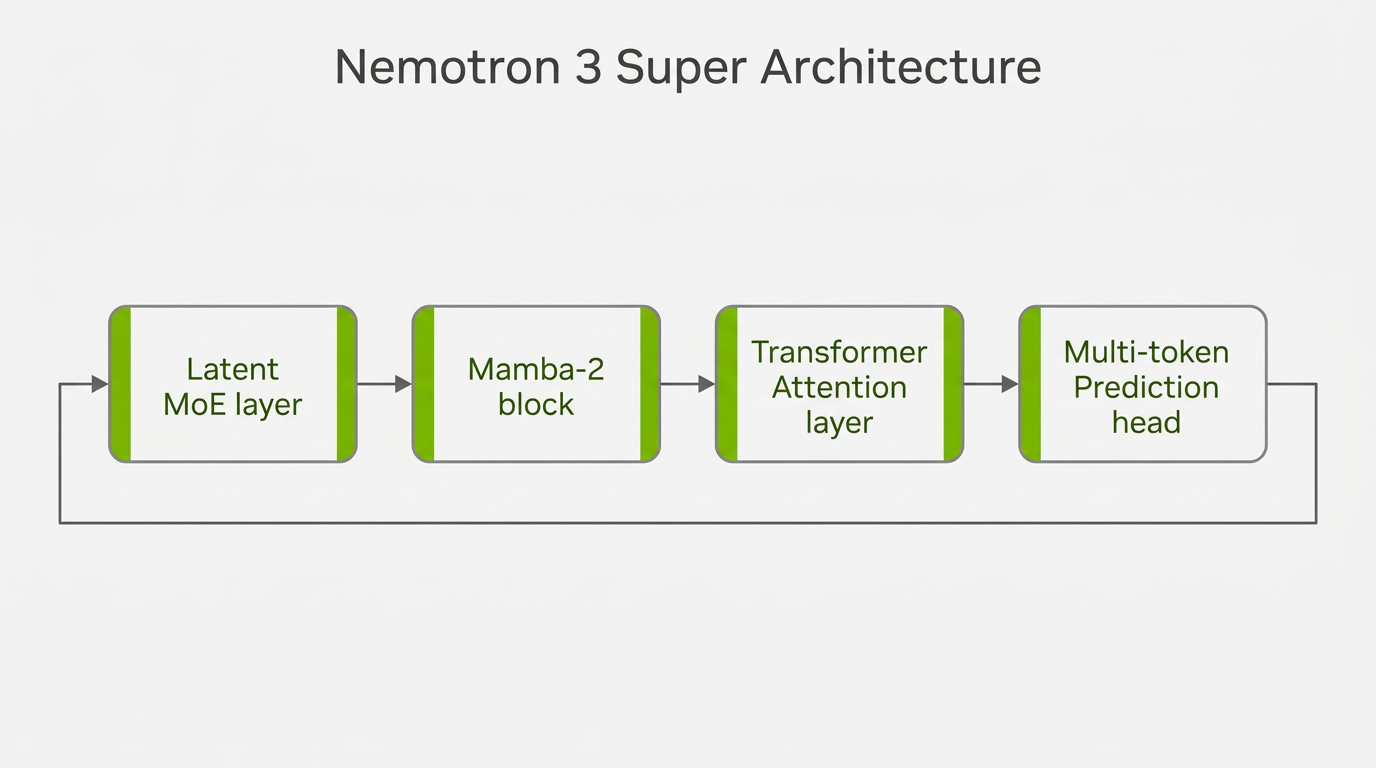

Nemotron 3 Super employs a hybrid Mamba-Transformer architecture that combines Mamba-2 state space models with Transformer attention layers to balance sequence modeling precision and inference throughput. The 88-layer backbone follows a repeating pattern of Mamba-2, Latent MoE, Mamba-2, Attention, Mamba-2, Latent MoE blocks. Mamba-2 layers handle the majority of sequence processing in time linear in sequence length, making the 1-million-token context window practical for real-world applications. Attention layers are interleaved at selected depths to preserve the precise associative recall needed for tasks that require exact information retrieval from long contexts. This hybrid approach delivers 4x better memory and compute efficiency than pure Transformer designs while maintaining the modeling capacity necessary for complex agentic reasoning workflows that span code analysis, document understanding, and multi-step planning.
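One reason the interleaving matters for long contexts is the key-value cache: each attention layer stores keys and values for every token, while a state space layer keeps a fixed-size state. The sketch below tiles the six-block pattern described above over a toy depth of 12 blocks and estimates the cache size with the standard KV-cache formula. The depth, head count, head dimension, and 2-byte values are hypothetical, and the resulting ratio reflects only this toy configuration, not the efficiency figures quoted in the article.

```python
from itertools import cycle, islice

# The repeating block pattern described in the text.
PATTERN = ["mamba2", "latent_moe", "mamba2", "attention", "mamba2", "latent_moe"]


def build_stack(num_blocks):
    """Tile the pattern until the stack has num_blocks blocks."""
    return list(islice(cycle(PATTERN), num_blocks))


def kv_cache_bytes(num_attention_layers, seq_len,
                   kv_heads=8, head_dim=128, bytes_per_value=2):
    """Standard KV-cache estimate; the default sizes are hypothetical."""
    # The leading 2 accounts for storing both keys and values per token.
    return 2 * num_attention_layers * seq_len * kv_heads * head_dim * bytes_per_value


SEQ_LEN = 1_000_000       # the context length quoted in the article
stack = build_stack(12)   # toy depth: exactly two repeats of the pattern

attention_layers = stack.count("attention")
sequence_mixers = sum(block in ("mamba2", "attention") for block in stack)

# Hybrid: only the attention blocks hold a cache that grows with seq_len.
hybrid_cache = kv_cache_bytes(attention_layers, SEQ_LEN)
# Counterfactual: every sequence-mixing block is an attention layer.
all_attention_cache = kv_cache_bytes(sequence_mixers, SEQ_LEN)

print(stack)
print(f"hybrid cache:        {hybrid_cache / 1e9:.2f} GB")
print(f"all-attention cache: {all_attention_cache / 1e9:.2f} GB")
```

The Mamba-2 blocks still hold state, but its size does not depend on sequence length, so it is left out of this comparison.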

Multi-Token Prediction and Native NVFP4 Training

Two complementary innovations further enhance Nemotron 3 Super’s efficiency: Multi-token Prediction (MTP) and native NVFP4 pretraining. MTP trains specialized prediction heads to forecast multiple future tokens simultaneously from each position, creating built-in speculative decoding capabilities that reduce generation time by up to 3x for structured tasks like code generation. Unlike traditional approaches, Nemotron 3 Super uses a shared-weight design across MTP heads, improving training stability and enabling effective longer draft lengths during inference. The model’s native NVFP4 pretraining represents another critical advancement—rather than quantizing a full-precision model after training, Nemotron 3 Super performs the majority of floating-point operations in NVIDIA’s 4-bit floating-point format from the first gradient update. This approach, optimized for Blackwell architecture, significantly cuts memory requirements and speeds up inference by 4x on B200 GPUs compared to FP8 on H100 while maintaining accuracy through early adaptation to 4-bit arithmetic constraints.
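The link between MTP and speculative decoding can be illustrated with a toy greedy loop: a cheap drafter proposes several tokens, the full model checks them in one verification pass, and the longest agreeing prefix is kept. Everything below is a hypothetical stand-in (the `target_next` and `draft_tokens` functions are arithmetic toys, not model calls), so this sketches the control flow only and says nothing about real speedups.

```python
def target_next(prefix):
    """Toy stand-in for the full model's greedy next token."""
    return (sum(prefix) + len(prefix)) % 7


def draft_tokens(prefix, k):
    """Toy stand-in for MTP heads: propose k tokens, with occasional errors."""
    context = list(prefix)
    proposed = []
    for _ in range(k):
        token = target_next(context)
        if len(context) % 4 == 3:
            token = (token + 1) % 7  # deliberately wrong at some positions
        proposed.append(token)
        context.append(token)
    return proposed


def greedy_generate(prompt, num_new):
    """Baseline: one full-model pass per generated token."""
    sequence = list(prompt)
    for _ in range(num_new):
        sequence.append(target_next(sequence))
    return sequence


def speculative_generate(prompt, num_new, k):
    """Draft k tokens, verify them, keep the agreeing prefix plus one fix."""
    sequence = list(prompt)
    verification_passes = 0
    while len(sequence) - len(prompt) < num_new:
        proposed = draft_tokens(sequence, k)
        verification_passes += 1  # one pass scores every drafted position
        for token in proposed:
            expected = target_next(sequence)
            sequence.append(expected)  # always keep the full model's token
            if token != expected:
                break  # first disagreement: discard the rest of the draft
    return sequence[:len(prompt) + num_new], verification_passes


prompt = [1, 2]
baseline = greedy_generate(prompt, 20)
output, passes = speculative_generate(prompt, 20, k=4)
print("identical to greedy decoding:", output == baseline)
print("verification passes used:", passes)
```

Because every kept token is the one the full model would have chosen, the output matches plain greedy decoding. The saving comes from needing fewer full-model passes than generated tokens whenever the drafts are mostly right.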

Comprehensive Post-Training for Agentic Capabilities

Beyond architectural innovations, Nemotron 3 Super’s post-training pipeline reflects a deep focus on agentic capabilities. The model undergoes supervised fine-tuning on 7 million samples covering reasoning, instruction following, coding, safety, and multi-step agent tasks, followed by multi-environment reinforcement learning across 21 diverse environments using NeMo Gym and NeMo RL. This includes specialized training for software engineering (SWE-RL) and reinforcement learning from human feedback (RLHF), with over 1.2 million environment rollouts collected during training. The post-training process incorporates novel techniques like PivotRL for efficient agentic reasoning and low-effort reasoning modes that provide explicit control over the accuracy-efficiency tradeoff. These improvements yield substantial gains over Nemotron 3 Nano on agentic benchmarks. In software engineering the model scores 60.5% on SWE-Bench OpenHands, against 41.9% for GPT-OSS-120B, and in terminal use 25.8% vs 14.9%, demonstrating its readiness for real-world autonomous agent deployment.

Practical Deployment and Accessibility

Nemotron 3 Super is designed for practical enterprise adoption with comprehensive deployment resources. The model is fully open—weights, datasets, and training recipes are available on Hugging Face under the NVIDIA Open Model License, which provides flexibility for data control and deployment across various infrastructures. NVIDIA provides ready-to-use cookbooks for major inference engines including vLLM, SGLang, and TensorRT-LLM, each with configuration templates and performance tuning guidance. The model is accessible through leading platforms such as Perplexity (with Pro subscription), OpenRouter, Baseten, Cloudflare Workers AI, Coreweave, DeepInfra, Fireworks AI, FriendliAI, Google Cloud Vertex AI, Inference.net, Lightning AI, Modal, Nebius Token Factory, and Together AI. With support for long-context inference up to 1 million tokens and OpenAI-compatible API options, teams can integrate Nemotron 3 Super into existing workflows with minimal friction while benefiting from its 5x throughput improvement over previous Nemotron Super models for agentic AI applications.
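Because the hosted and self-hosted options expose OpenAI-compatible APIs, a request can be assembled with nothing beyond the Python standard library. The sketch below follows the chat completions request shape. The base URL, the model identifier, and the `build_chat_request` and `send` helpers are placeholders introduced for illustration; the real values depend on the provider or inference server in use.

```python
import json
import urllib.request

# Both values are placeholders: substitute the provider's or server's own.
BASE_URL = "http://localhost:8000/v1"  # hypothetical self-hosted endpoint
MODEL_ID = "nemotron-3-super"          # placeholder, not a confirmed model id


def build_chat_request(prompt, api_key="EMPTY", max_tokens=256):
    """Build, without sending, an OpenAI-style chat completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


request = build_chat_request("Summarize the open issues in this repository.")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Calling `send(request)` requires a running endpoint, so the example stops at printing the request it would send.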

Conclusion

Nemotron 3 Super represents a meaningful evolution in open AI model design, introducing four key upgrades that collectively address the efficiency-accuracy tradeoff in agentic AI: Latent MoE for expert scaling at the same inference cost, a hybrid Mamba-Transformer backbone for practical long-context reasoning, Multi-token Prediction with native speculative decoding, and NVFP4 native pretraining for hardware-optimized efficiency. Together, these innovations deliver the 5x throughput improvement over previous Nemotron Super models while maintaining competitive accuracy across reasoning, coding, and agentic benchmarks. For developers and businesses building multi-agent systems, Nemotron 3 Super offers a compelling combination of open accessibility, architectural sophistication, and practical deployment resources that significantly reduce the “thinking tax” associated with complex agentic workflows. As agentic AI continues to evolve, these foundational upgrades provide a robust platform for both immediate deployment and future research into efficient, scalable AI reasoning systems.