Gemini 3.1 Flash-Lite: scaled reasoning and speed at $0.25 per 1M input tokens

Google has released Gemini 3.1 Flash-Lite in public preview, positioning it as a “scaled reasoning” model for teams that need fast responses, predictable latency, and very low per-request costs at high volume. Announced on March 3, 2026, the model is available to developers via the Gemini API in Google AI Studio and to enterprises via Vertex AI, both in preview. The headline numbers are simple: $0.25 per 1M input tokens and $1.50 per 1M output tokens, plus a major speed gain over Gemini 2.5 Flash aimed at real-time workloads like content moderation, UI generation, and large-scale tagging pipelines.

This update matters because LLM teams are increasingly bottlenecked by time-to-first-token latency, output throughput, and the cost of “reasoning tokens” when models think longer than they need to. Gemini 3.1 Flash-Lite’s answer combines ultra-low pricing, a 2.5x faster time to first answer token (a figure Google attributes to third-party benchmarking), and controllable thinking levels, so you can dial reasoning up or down per request.

What happened

On March 3, 2026, Google introduced Gemini 3.1 Flash-Lite as the fastest and most cost-efficient Gemini 3-series model to date, rolling it out in preview via the Gemini API (AI Studio) and Vertex AI (enterprise). Google’s announcement emphasizes that the model is designed for “high-volume developer workloads,” offering a price/performance profile targeted at production systems where requests are frequent and response time is critical.

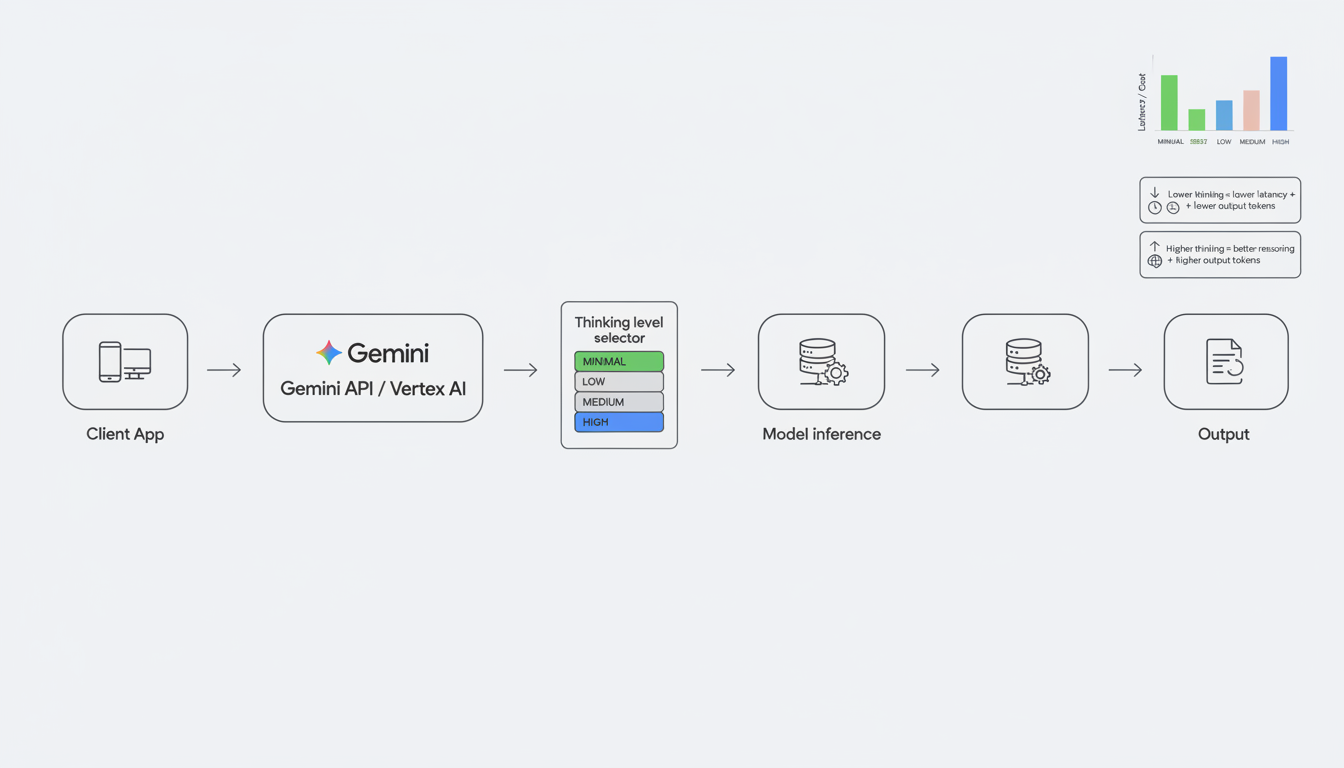

In Vertex AI, the preview model ID is gemini-3.1-flash-lite-preview. Google documents a 1,048,576-token maximum input and a 65,535-token maximum output (default) for this model, supporting multimodal inputs (text, code, images, audio, video, PDFs) with text output. The release also expands “thinking” controls from token budgets (used in Gemini 2.5 models) into simplified thinking levels for Gemini 3 and later: MINIMAL, LOW, MEDIUM, and HIGH.

Why it matters

Gemini 3.1 Flash-Lite’s biggest practical impact is that it makes “reasoning at scale” cheaper and easier to control. In many production apps, the model’s default reasoning behavior can inflate output tokens (and therefore cost) and can increase latency in ways that are unacceptable for real-time UX. Thinking levels give teams a direct, repeatable knob for cost and speed tuning, without having to maintain separate prompt variants or route traffic across multiple models for every small change in complexity.

Google is also positioning the model as a strong upgrade path for teams currently using Gemini 2.x/2.5 “Lite” variants. Vertex AI documentation notes that Gemini 3.1 Flash-Lite aims to match Gemini 2.5 Flash performance across key areas while improving instruction following and offering expanded thinking support. Combined with Google’s stated speed improvements, that changes the cost curve for latency-sensitive systems such as:

- Real-time content moderation (high throughput, strict latency budgets)

- Dynamic UI generation (rapid structured outputs for layouts, components, dashboards)

- Large-scale classification and tagging (product catalogs, media labeling, entity extraction)

- Translation at volume (cost-sensitive, repetitive tasks)

Pricing and performance highlights (Gemini 3.1 vs 2.5 Flash)

On the Gemini API, Google lists Gemini 3.1 Flash-Lite Preview at $0.25 per 1M input tokens (text/image/video) and $1.50 per 1M output tokens (including thinking tokens) on the paid tier. On Vertex AI, the same list pricing is shown for standard PayGo, with additional “Flex/Batch” pricing options available at discounted rates for eligible workloads.

For speed, Google’s announcement states that Gemini 3.1 Flash-Lite outperforms Gemini 2.5 Flash with a 2.5x faster time to first answer token and a 45% increase in output speed, citing a third-party benchmark source.

| Model | Availability | Input price (USD / 1M tokens) | Output price (USD / 1M tokens) | Notable speed claim |
|---|---|---|---|---|
| Gemini 3.1 Flash-Lite (preview) | Preview (AI Studio + Vertex AI) | $0.25 (text/image/video) | $1.50 (includes thinking tokens) | 2.5x faster time to first answer token vs Gemini 2.5 Flash (per Google citing third-party benchmarking) |
| Gemini 2.5 Flash | Available on Vertex AI / Gemini API pricing tables | $0.30 (text/image/video) | $2.50 (includes reasoning) | Baseline for the comparison |
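
To see how the two speed claims interact, a rough latency model helps: end-to-end time is approximately time to first token plus output tokens divided by output speed. The sketch below applies the quoted 2.5x and 45% figures to a baseline. The baseline values are placeholders chosen for illustration, not measured Gemini 2.5 Flash numbers, and the function names are hypothetical.

```python
def estimated_latency_s(ttft_s: float, tokens_per_s: float, output_tokens: int) -> float:
    """Rough end-to-end latency: time to first token plus streaming time."""
    return ttft_s + output_tokens / tokens_per_s


def apply_claimed_speedup(ttft_s: float, tokens_per_s: float) -> tuple[float, float]:
    """Apply the quoted claims: 2.5x faster TTFT, 45% higher output speed."""
    return ttft_s / 2.5, tokens_per_s * 1.45


# Placeholder baseline (assumption): NOT a measured Gemini 2.5 Flash figure.
baseline_ttft_s, baseline_tps = 1.0, 100.0
new_ttft_s, new_tps = apply_claimed_speedup(baseline_ttft_s, baseline_tps)

for output_tokens in (50, 500, 2000):
    before = estimated_latency_s(baseline_ttft_s, baseline_tps, output_tokens)
    after = estimated_latency_s(new_ttft_s, new_tps, output_tokens)
    print(f"{output_tokens:>5} tokens: {before:.2f}s -> {after:.2f}s ({before / after:.2f}x)")
```

Under this model, short responses are dominated by time to first token, so their overall speedup sits closer to 2.5x, while long responses are dominated by streaming time and trend toward 1.45x. Substitute your own measured baseline before drawing conclusions.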

Two cost notes that are easy to miss in “low-cost LLM API” discussions:

- Output tokens often dominate cost in moderation, UI generation, and agentic workflows, especially if the model “thinks” a lot. Gemini 3.1 Flash-Lite’s output price is the number to watch when requests are short but responses are long.

- Thinking tokens are billed as output on Gemini pricing tables, so choosing a lower thinking level can directly reduce both latency and cost for simpler tasks.
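
The effect of thinking tokens on the bill can be worked through with the list prices quoted above. This is a minimal sketch: the `Pricing` class and `request_cost` helper are illustrative names, and the token counts describe a hypothetical moderation request rather than measured traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens (thinking tokens included)


# List prices quoted in this article (paid tier, text/image/video input).
FLASH_LITE_31 = Pricing(input_per_million=0.25, output_per_million=1.50)
FLASH_25 = Pricing(input_per_million=0.30, output_per_million=2.50)


def request_cost(pricing: Pricing, input_tokens: int, answer_tokens: int,
                 thinking_tokens: int = 0) -> float:
    """Estimate the USD cost of one request; thinking tokens bill as output."""
    billed_output = answer_tokens + thinking_tokens
    return (input_tokens * pricing.input_per_million
            + billed_output * pricing.output_per_million) / 1_000_000


# Hypothetical moderation request: 400 input tokens, 60 answer tokens.
no_thinking = request_cost(FLASH_LITE_31, 400, 60, thinking_tokens=0)
with_thinking = request_cost(FLASH_LITE_31, 400, 60, thinking_tokens=500)
print(f"no thinking:  ${no_thinking * 1_000_000:,.2f} per 1M requests")
print(f"500 thinking: ${with_thinking * 1_000_000:,.2f} per 1M requests")
```

With these assumed token counts, the same request costs about $190 per million requests with no thinking tokens and about $940 per million with 500 thinking tokens, which is why the thinking level is worth tuning per task.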

Impact and implications for developers

The most meaningful change for developers is that Gemini 3.1 Flash-Lite turns reasoning into an operational control, not just a model selection decision. In Vertex AI, thinking is enabled by default on thinking models, and Gemini 3 introduces a thinking_level parameter that takes one of four levels: MINIMAL, LOW, MEDIUM, or HIGH. For Gemini 3.1 Flash-Lite specifically, Vertex AI documentation states that MINIMAL is the default, which is designed to keep throughput high for everyday production traffic.

For practical workloads, that means you can run a single model in production and still apply different reasoning behaviors by endpoint or request type. Examples:

- Content moderation: Use MINIMAL or LOW for straightforward policy classification and label extraction, and reserve MEDIUM/HIGH for appeals, ambiguous cases, or multi-policy decisions that require deeper analysis.

- Dynamic UI generation: Use LOW when generating predictable JSON for known component libraries, and MEDIUM when the model must reconcile constraints (accessibility rules, layout logic, responsive breakpoints) before emitting structured output.

- Agentic pipelines: Use LOW for tool selection and routing steps, and HIGH for the few steps that truly require planning (multi-hop reasoning, complex code changes, or careful function calling).
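
One way to encode the examples above is a small lookup table from request type to thinking level. The sketch below is only an illustration: the task names are hypothetical, the `ThinkingLevel` enum is a local stand-in for the four levels named in this article (not the SDK type), and the specific assignments are one reasonable reading of the guidance.

```python
from enum import Enum


class ThinkingLevel(str, Enum):
    # Local stand-in for the four levels described in the article.
    MINIMAL = "MINIMAL"
    LOW = "LOW"
    MEDIUM = "MEDIUM"
    HIGH = "HIGH"


# Hypothetical task names mapped to a default thinking level.
DEFAULT_LEVELS = {
    "moderation.classify": ThinkingLevel.MINIMAL,
    "moderation.appeal": ThinkingLevel.MEDIUM,
    "ui.known_components": ThinkingLevel.LOW,
    "ui.constraint_solving": ThinkingLevel.MEDIUM,
    "agent.tool_routing": ThinkingLevel.LOW,
    "agent.planning": ThinkingLevel.HIGH,
}


def thinking_level_for(task: str,
                       fallback: ThinkingLevel = ThinkingLevel.MINIMAL) -> ThinkingLevel:
    """Return the configured level for a task, or the cheap fallback."""
    return DEFAULT_LEVELS.get(task, fallback)


for task in ("moderation.classify", "agent.planning", "unknown.task"):
    print(f"{task}: {thinking_level_for(task).value}")
```

Keeping the mapping in one place means a level change is a configuration edit rather than a prompt or routing rewrite.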

Example: setting a thinking level in the Gen AI SDK (Vertex AI)

Vertex AI documentation shows that Gemini 3 models use the thinking_level parameter via a thinking config. The snippet below mirrors the documented pattern (you’ll adjust model ID and auth setup for your environment):

    from google import genai
    from google.genai import types

    # Client() picks up credentials and project settings from the environment.
    client = genai.Client()

    response = client.models.generate_content(
        model="gemini-3.1-flash-lite-preview",
        contents="Classify this user post as SAFE / REVIEW / BLOCK and return JSON only: ...",
        config=types.GenerateContentConfig(
            # Request a specific thinking level for this call.
            thinking_config=types.ThinkingConfig(
                thinking_level=types.ThinkingLevel.LOW
            )
        ),
    )

    print(response.text)

In production, the useful pattern is to treat thinking level like an SLO knob: default to MINIMAL/LOW for the 90–99% case, then escalate selectively based on confidence, policy ambiguity, user reputation signals, or the presence of multimodal evidence.
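
A minimal sketch of confidence-based escalation follows. It assumes your own code can derive a confidence score from each response (the model does not supply one in this sketch), and it takes the model call as a plain callable so the control flow can be tested without network access. `Verdict`, `classify_with_escalation`, and `fake_classify` are hypothetical names.

```python
from typing import Callable, NamedTuple


class Verdict(NamedTuple):
    label: str
    confidence: float  # 0.0 to 1.0, produced by your own scoring logic


LEVELS = ("MINIMAL", "LOW", "MEDIUM", "HIGH")


def classify_with_escalation(
    post: str,
    classify: Callable[[str, str], Verdict],
    start: str = "MINIMAL",
    min_confidence: float = 0.8,
) -> tuple[str, Verdict]:
    """Retry at higher thinking levels until confidence is acceptable."""
    for level in LEVELS[LEVELS.index(start):]:
        verdict = classify(post, level)
        if verdict.confidence >= min_confidence:
            break
    # If no level was confident enough, this is the HIGH-level result.
    return level, verdict


def fake_classify(post: str, level: str) -> Verdict:
    # Stand-in for a real model call; pretends deeper thinking raises confidence.
    confidence = {"MINIMAL": 0.55, "LOW": 0.70, "MEDIUM": 0.90, "HIGH": 0.95}[level]
    return Verdict("REVIEW", confidence)


level_used, verdict = classify_with_escalation("ambiguous post text", fake_classify)
print(level_used, verdict)
```

Each escalation step is an extra request, so the threshold and starting level are worth tuning against real traffic: too strict a threshold can cost more than starting one level higher.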

Bottom line

Gemini 3.1 Flash-Lite (preview) is a clear signal that Google is optimizing Gemini for production-scale workloads where latency, throughput, and cost per decision matter as much as raw model quality. With $0.25/1M input tokens, $1.50/1M output tokens, and the ability to tune thinking levels per request, it’s designed for teams building high-volume systems like real-time moderation and dynamic UI generation that can’t afford “always-on deep reasoning.”

If you’re currently on Gemini 2.5 Flash for low-latency workflows, the preview is worth evaluating now: measure time-to-first-token, output speed, and total output tokens across your actual traffic mix, then use thinking levels to find the lowest-cost setting that still meets your quality thresholds. As this model moves from preview toward stability, that “scaled reasoning” control may become the default way teams manage LLM cost and performance without constantly re-architecting routing logic.
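
The measurement step of that evaluation can be sketched as a small timing harness over any streamed response. This is an assumption-laden illustration: it works on a generic iterable of text chunks rather than a specific SDK stream, it counts characters as a rough proxy for tokens, and `measure_stream` and `fake_stream` are hypothetical names. Real token counts should come from the usage data your API responses report.

```python
import time
from typing import Callable, Iterable, Iterator, Optional


def measure_stream(chunks: Iterable[str],
                   clock: Callable[[], float] = time.perf_counter) -> dict:
    """Time a stream of text chunks: first-chunk latency and output rate."""
    start = clock()
    first_chunk_at: Optional[float] = None
    chars = 0
    for chunk in chunks:
        if first_chunk_at is None:
            first_chunk_at = clock()  # first visible output
        chars += len(chunk)
    end = clock()

    ttft = None if first_chunk_at is None else first_chunk_at - start
    stream_s = None if first_chunk_at is None else end - first_chunk_at
    rate = chars / stream_s if stream_s else None  # None if no streaming time
    return {"ttft_s": ttft, "total_s": end - start, "chars": chars, "chars_per_s": rate}


def fake_stream() -> Iterator[str]:
    # Stand-in for a streamed model response.
    time.sleep(0.05)
    yield '{"label": '
    time.sleep(0.01)
    yield '"SAFE"}'


print(measure_stream(fake_stream()))
```

Running the same harness per thinking level over a representative sample of requests gives the latency side of the comparison; pair it with billed output tokens to get the cost side.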