Struggling with LLM context window limits and skyrocketing token costs in your LangChain agents? As of November 2025, with LangChain 1.0 (released October 22, 2025) and deepagents 0.2.8 (November 23, 2025), you can use a filesystem as persistent memory or as a scratchpad. This tutorial goes beyond LangChain’s blog post on context engineering with a hands-on guide to building a LangChain agent with the Deep Agents framework. You will offload large tool results to files, let the agent search for and retrieve only the context it needs, and scale to complex tasks without bloating your prompt.

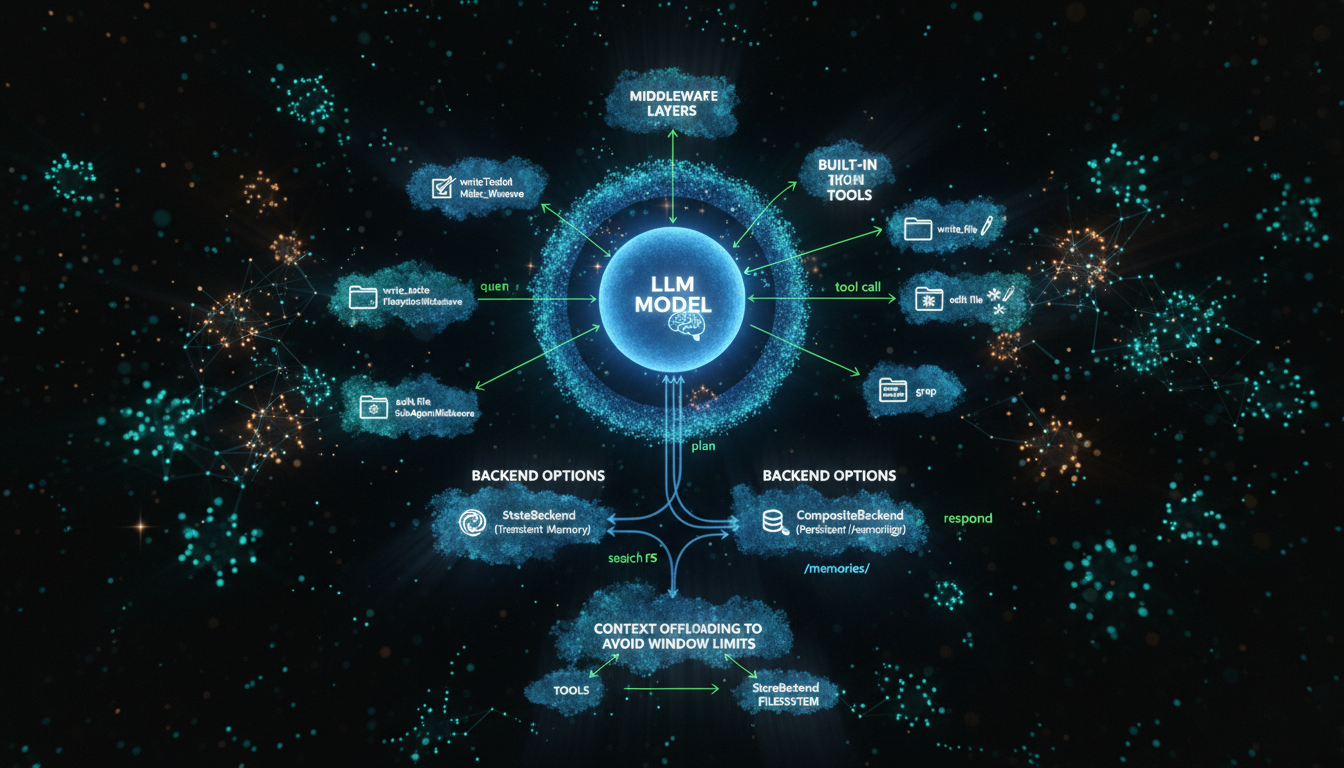

Understanding filesystem memory in LangChain Deep Agents

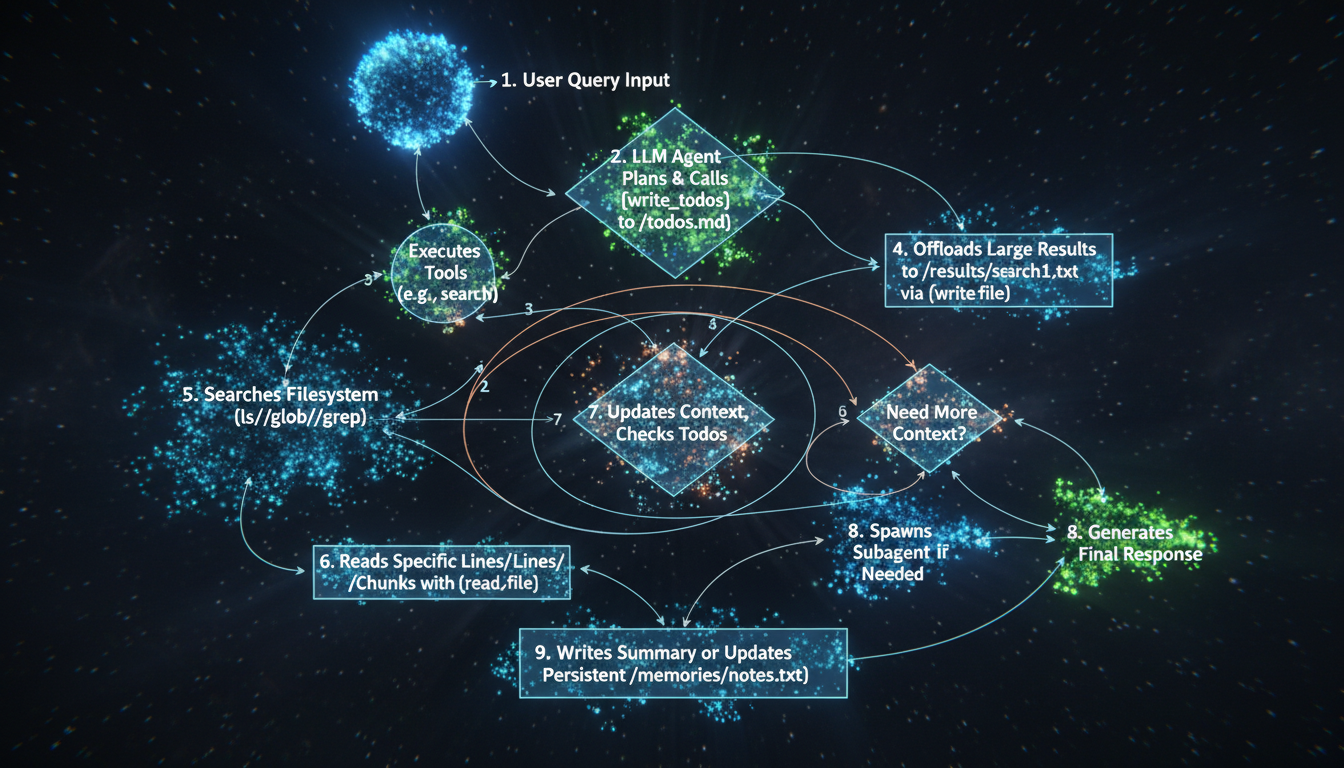

Traditional LangChain agents cram tool outputs and history into the LLM context window, leading to high costs and failures on long tasks. Deep Agents, built on LangGraph 1.0, solve this with a virtual filesystem backend. Agents use tools like write_file, read_file, ls, glob, and grep to manage context externally.
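To make the idea concrete, here is a minimal sketch of what a virtual filesystem with these five tools could look like. It is a toy, stdlib-only illustration, not the Deep Agents implementation: the VirtualFS class, its method signatures, and the choice to count offset and limit in lines are all assumptions made for this example.

```python
import fnmatch
import re


class VirtualFS:
    """Toy in-memory filesystem keyed by absolute path (illustrative only)."""

    def __init__(self):
        self.files = {}  # path -> full text content

    def write_file(self, path, content):
        self.files[path] = content

    def read_file(self, path, offset=0, limit=2000):
        """Return up to `limit` lines, starting at line index `offset`."""
        lines = self.files[path].splitlines()
        return "\n".join(lines[offset:offset + limit])

    def ls(self, path="/"):
        """List every stored path under a directory prefix."""
        prefix = path if path.endswith("/") else path + "/"
        return sorted(p for p in self.files if p.startswith(prefix))

    def glob(self, pattern):
        """Return stored paths matching a shell-style pattern."""
        return sorted(p for p in self.files if fnmatch.fnmatchcase(p, pattern))

    def grep(self, pattern, glob="*"):
        """Return (path, line_number, line) for every line matching the regex."""
        regex = re.compile(pattern)
        hits = []
        for path in self.glob(glob):
            for number, line in enumerate(self.files[path].splitlines(), start=1):
                if regex.search(line):
                    hits.append((path, number, line))
        return hits


fs = VirtualFS()
fs.write_file(
    "/results/search.txt",
    "LangGraph 1.0 released\nUnrelated line\nDeep Agents use LangGraph 1.0",
)
fs.write_file("/notes.txt", "todo: summarize")

# Only the matching lines need to enter the prompt, not the whole file.
matches = fs.grep(r"LangGraph 1\.0", glob="/results/*")
for path, number, line in matches:
    print(f"{path}:{number}: {line}")
```

The point of the sketch is the access pattern: the full text sits outside the prompt, and the agent pulls back individual lines or chunks on demand.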

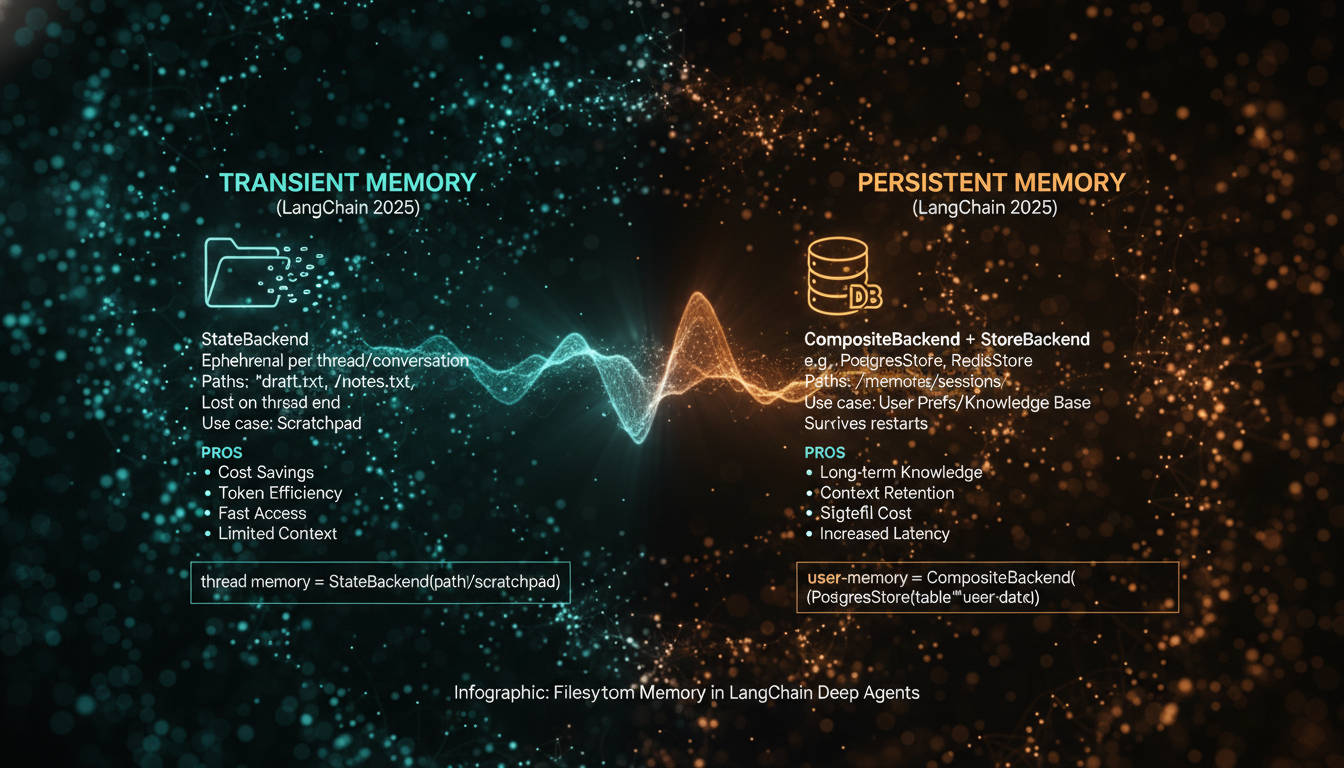

Key benefits include handling massive tool responses (e.g., web searches), persisting knowledge across sessions via CompositeBackend, and delegating work to subagents. Per the official docs, the transient StateBackend suits scratchpads (/notes.txt), while StoreBackend (backed by a store such as PostgresStore) enables long-term /memories/ paths.

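This approach mirrors Deep Research-style agents, and it matters more as tasks get longer: per METR's measurements, the length of tasks that AI agents can complete has been doubling roughly every 7 months.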

Setting up your environment

Install latest packages

Start with Python 3.10+ (required for LangChain 1.0). Install via pip:

```bash
pip install langchain==1.0.0 langgraph==1.0.0 deepagents==0.2.8 langchain-anthropic tavily-python langgraph-checkpoint-postgres
```

- Set API keys (e.g., Anthropic for Claude Sonnet 4, Tavily for search):

```bash
export ANTHROPIC_API_KEY=sk-ant-...
export TAVILY_API_KEY=tvly-...
```

- Verify: pip show langchain deepagents confirms versions.
- Create a Postgres DB for the persistent store (optional, for production use).
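If you prefer to run the same checks from Python, a small script along these lines can report installed versions and flag missing API keys before you build the agent. It uses only the standard library; the helper names installed_versions and missing_env_vars are made up for this sketch.

```python
import os
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages):
    """Map each package name to its installed version, or None if missing."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


def missing_env_vars(names, env=None):
    """Return the names that are unset or empty in the environment."""
    env = os.environ if env is None else env
    return [name for name in names if not env.get(name)]


versions = installed_versions(["langchain", "langgraph", "deepagents"])
missing = missing_env_vars(["ANTHROPIC_API_KEY", "TAVILY_API_KEY"])
print("Installed versions:", versions)
print("Missing API keys:", missing)
```

A None version or a non-empty list of missing keys means the setup steps above are not complete yet.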

Building a basic Deep Agent with filesystem tools

Use create_deep_agent for instant setup with built-in middleware: TodoList for planning, Filesystem for memory, SubAgent for delegation.

```python
import os

from deepagents import create_deep_agent
from langchain_anthropic import ChatAnthropic
from tavily import TavilyClient

model = ChatAnthropic(model="claude-sonnet-4-20250514")
tavily = TavilyClient(api_key=os.environ["TAVILY_API_KEY"])


def search_web(query: str):
    """Web search tool."""
    return tavily.search(query=query, max_results=3)


agent = create_deep_agent(
    model=model,
    tools=[search_web],
    system_prompt="""You are a researcher. Use filesystem to offload search results. Plan with write_todos, summarize findings.""",
)
```

Invoke:

```python
result = agent.invoke({
    "messages": [{"role": "user", "content": "Research LangChain Deep Agents updates 2025."}]
})
print(result["messages"][-1].content)
```

In a typical run, the agent tracks its plan with write_todos, offloads search results to a file such as /results/search.txt, and greps that file for the relevant information.
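To see what the agent actually wrote during a run, it can help to list the files in the returned state. The sketch below assumes the result dict exposes a "files" key that maps each path to either a plain string or a dict with a "content" field (a string or a list of lines). That shape is an assumption, so check it against your installed deepagents version. summarize_files is a hypothetical helper, and example_state stands in for a real agent.invoke(...) result.

```python
def summarize_files(state):
    """Return (path, character_count) pairs for files in an agent result.

    Assumes state["files"] maps each path to either a plain string or a
    dict whose "content" is a string or a list of lines.
    """
    summary = []
    for path, data in sorted(state.get("files", {}).items()):
        content = data.get("content", "") if isinstance(data, dict) else data
        if isinstance(content, list):
            content = "\n".join(content)
        summary.append((path, len(content)))
    return summary


# Stand-in for the dict returned by agent.invoke(...); the shape is assumed.
example_state = {
    "messages": [],
    "files": {
        "/results/search.txt": {"content": ["line one", "line two"]},
        "/notes.txt": "draft summary",
    },
}

for path, size in summarize_files(example_state):
    print(f"{path}: {size} chars")
```

Large files alongside a short final message are a good sign that the bulk of the tool output stayed out of the prompt.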

Offloading tool results to filesystem scratchpad

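Tools like web search can return 10k+ tokens in a single call. When a tool result is oversized, the filesystem middleware saves it to a file automatically instead of returning it inline. The agent then uses glob and grep to find what it needs: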

| Tool | Purpose | Example |
|---|---|---|
| write_file | Offload results | write_file(path="/search_results.txt", content=raw_output) |
| grep | Search files | grep(pattern="LangGraph 1.0", path="**/*.txt") |
| read_file | Read chunks | read_file(path="/search_results.txt", offset=0, limit=2000) |
| ls / glob | List/navigate | ls(path="/"); glob("**/results*") |

This is "context engineering" in practice: the agent retrieves only the slice of context it needs for the current step, rather than carrying the full body of available context in the prompt.
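The offloading step itself can be sketched in a few lines. This is an illustration of the general technique, not the middleware's actual code: the function names, the /results/ path scheme, the 2,000-token threshold, and the rough 4-characters-per-token estimate are all assumptions chosen for the example.

```python
def estimate_tokens(text):
    """Rough heuristic: about 4 characters per token for English text."""
    return len(text) // 4


def offload_if_large(tool_name, call_id, output, files, max_tokens=2000):
    """Store oversized output in `files` and return a short pointer instead."""
    if estimate_tokens(output) <= max_tokens:
        return output  # small enough to keep inline
    path = f"/results/{tool_name}_{call_id}.txt"
    files[path] = output
    preview = output[:200]
    return (
        f"Output too large ({estimate_tokens(output)} est. tokens); "
        f"saved to {path}. Preview: {preview}"
    )


files = {}
small = offload_if_large("search_web", "a1", "short answer", files)
large = offload_if_large("search_web", "b2", "x" * 40_000, files)

print(small)
print(large[:90])
print("Stored files:", list(files))
```

The model sees a short pointer plus a preview, and the full result stays in the file until the agent reads or greps it.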

Adding persistent memory across sessions

Default StateBackend is thread-transient. For persistence:

```python
from deepagents import create_deep_agent
from deepagents.backends import CompositeBackend, StateBackend, StoreBackend
from langgraph.store.memory import InMemoryStore

store = InMemoryStore()  # In production, use a persistent store such as PostgresStore


def backend_factory(runtime):
    return CompositeBackend(
        default=StateBackend(runtime),  # Transient scratch space for all other paths
        routes={"/memories/": StoreBackend(runtime)},  # Persistent; uses the store passed below
    )


agent = create_deep_agent(
    store=store,
    backend=backend_factory,
    system_prompt="Save user prefs to /memories/preferences.txt. Read on start.",
)
```

Cross-thread example:

```python
import uuid

# First thread: the user states a preference.
config1 = {"configurable": {"thread_id": str(uuid.uuid4())}}
agent.invoke({"messages": [{"role": "user", "content": "My pref: Use bullet points."}]}, config=config1)

# Second thread: a fresh conversation with a new thread_id.
config2 = {"configurable": {"thread_id": str(uuid.uuid4())}}
result = agent.invoke({"messages": [{"role": "user", "content": "Summarize Deep Agents."}]}, config=config2)
# Agent reads /memories/preferences.txt
```
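The routing idea behind this setup can be illustrated without any library. In the toy sketch below, paths under /memories/ go to a shared backend while everything else lands in per-thread scratch space. DictBackend, PrefixRouter, and new_thread are hypothetical names invented for the example, and the longest-prefix rule is an assumption about how such routing would reasonably behave, not a description of CompositeBackend's internals.

```python
class DictBackend:
    """Toy backend: a dict of path -> content."""

    def __init__(self):
        self.data = {}

    def write(self, path, content):
        self.data[path] = content

    def read(self, path):
        return self.data[path]


class PrefixRouter:
    """Send each path to the backend with the longest matching prefix."""

    def __init__(self, default, routes):
        self.default = default
        # Longest prefixes first so a more specific route wins.
        self.routes = sorted(routes.items(), key=lambda item: len(item[0]), reverse=True)

    def _pick(self, path):
        for prefix, backend in self.routes:
            if path.startswith(prefix):
                return backend
        return self.default

    def write(self, path, content):
        self._pick(path).write(path, content)

    def read(self, path):
        return self._pick(path).read(path)


persistent = DictBackend()  # shared across threads, playing the role of a store


def new_thread():
    """Each thread gets fresh scratch space but the same persistent backend."""
    return PrefixRouter(default=DictBackend(), routes={"/memories/": persistent})


thread_1 = new_thread()
thread_1.write("/memories/preferences.txt", "Use bullet points.")
thread_1.write("/scratch/draft.txt", "temporary notes")

thread_2 = new_thread()
remembered = thread_2.read("/memories/preferences.txt")
scratch_visible = "/scratch/draft.txt" in thread_2.default.data

print("Remembered preference:", remembered)
print("Scratch file visible in new thread:", scratch_visible)
```

The second thread can read the saved preference but never sees the first thread's scratch file, which is the behavior the cross-thread example relies on.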

Advanced tips: Subagents, HITL, and production

Spawn subagents for isolation:

```python
subagent = {
    "name": "analyzer",
    "description": "Analyze data files",
    "system_prompt": "Expert data analyst. Use grep on /results/",
}

agent = create_deep_agent(subagents=[subagent])
```

- Human-in-the-loop: Set interrupt_on for sensitive tools (this requires a checkpointer).
- Deploy: Use LangGraph Cloud or LangSmith.
- Best practices: Use descriptive paths (/memories/research/sources.txt), prune old files, and combine with RAG.
- Known limits: The built-in backends are virtual, so the agent has no real shell or disk access by default; for local files, use FilesystemBackend(root_dir="/project").
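As an example of the pruning practice mentioned above, here is one way a cleanup pass could look. It is a sketch under stated assumptions: it treats the memory store as a plain dict of path to metadata with a "modified_at" timestamp in epoch seconds, and prune_old_files is a hypothetical helper rather than anything Deep Agents provides.

```python
import time


def prune_old_files(files, max_age_seconds, now=None):
    """Delete entries older than `max_age_seconds`; return the removed paths.

    `files` maps path -> {"content": str, "modified_at": float epoch seconds}.
    """
    now = time.time() if now is None else now
    stale = [
        path
        for path, meta in files.items()
        if now - meta["modified_at"] > max_age_seconds
    ]
    for path in stale:
        del files[path]
    return sorted(stale)


DAY = 24 * 60 * 60
now = 100 * DAY  # fixed clock so the example is deterministic
files = {
    "/memories/research/sources.txt": {"content": "source list", "modified_at": now - 2 * DAY},
    "/memories/research/old_notes.txt": {"content": "stale notes", "modified_at": now - 45 * DAY},
}

removed = prune_old_files(files, max_age_seconds=30 * DAY, now=now)
print("Removed:", removed)
print("Kept:", sorted(files))
```

Running a pass like this on a schedule keeps ls and grep output short, which in turn keeps retrieval cheap.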

Conclusion

Key takeaways: (1) Use Deep Agents for built-in filesystem memory; (2) Offload with write_file/grep to slash tokens; (3) Persist via CompositeBackend; (4) Scale with subagents/planning; (5) Latest LangChain 1.0/LangGraph 1.0 ensure stability.

Next: Experiment with Tavily search on your data. Deploy to LangSmith for monitoring. Build deeper agents that learn over time, potentially reducing costs 5-10x while handling real-world complexity.
