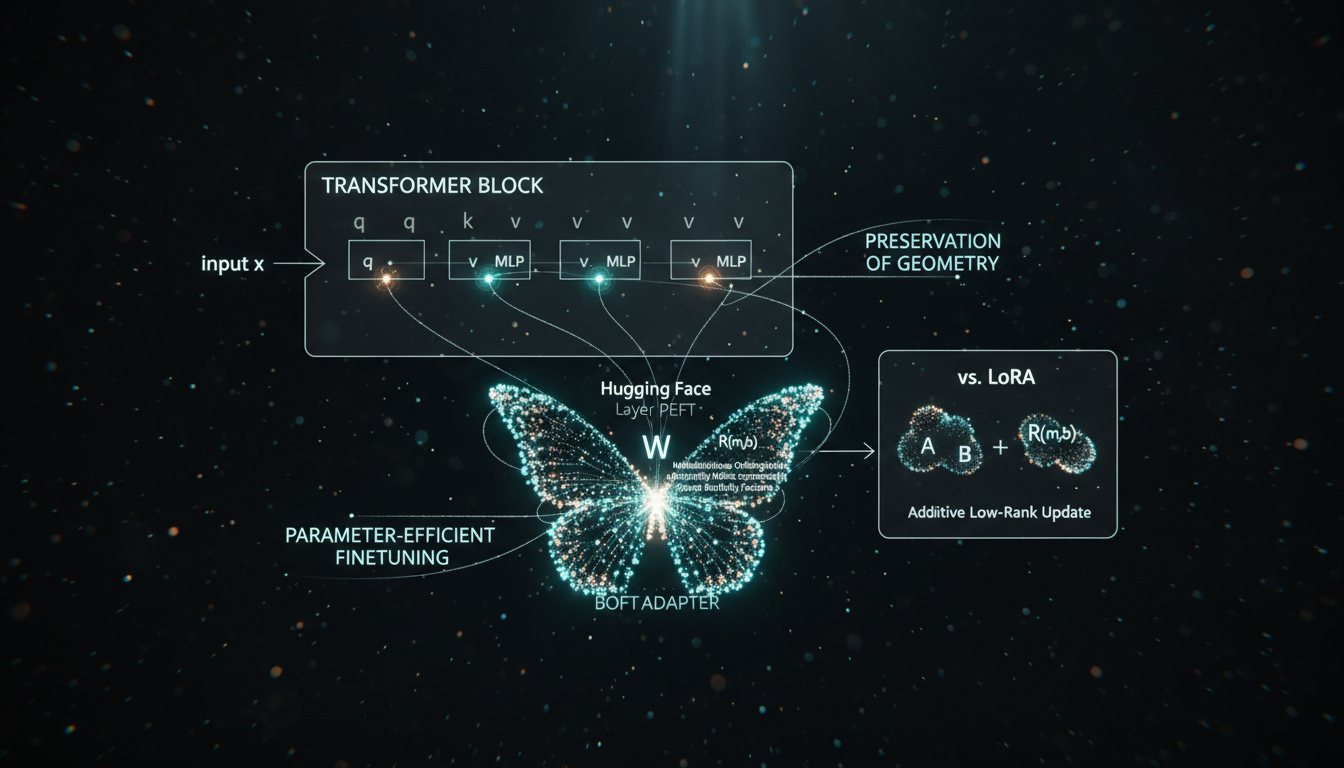

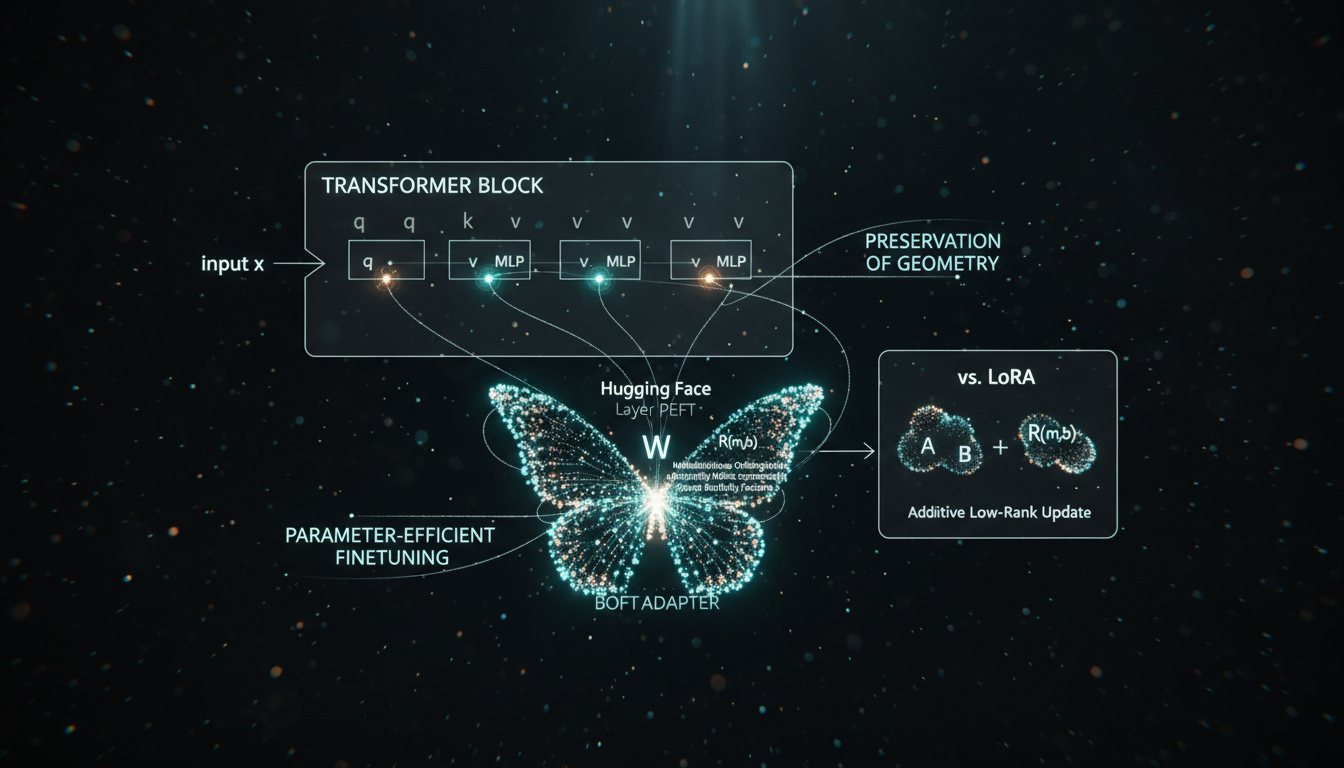

Fine-tuning large models with LoRA can be surprisingly brittle: small hyperparameter shifts or harder reasoning and image tasks often lead to overfitting, geometry drift, or catastrophic forgetting. As of November 2025, Butterfly Orthogonal Finetuning (BOFT) has emerged as a compelling LoRA alternative, now natively supported in the Hugging Face PEFT library (PEFT v0.18.0, released November 2025). This guide explains what BOFT is, why orthogonal finetuning helps preserve a model’s core knowledge, and how to implement BOFT step-by-step with PEFT for LLM reasoning and subject-driven image generation.

You’ll learn the conceptual foundations of Orthogonal Finetuning (OFT), how BOFT improves its parameter-efficiency using butterfly matrices, how it compares to LoRA in practice, and how to wire it into a modern Transformers/PEFT stack for language and diffusion models.

From LoRA to BOFT: why orthogonal finetuning?

LoRA (Low-Rank Adaptation) updates a frozen weight matrix W by adding a low-rank delta: W' = W + BA, with A, B having rank r ≪ d. This works well in many settings, but several 2024–2025 studies highlight recurring issues:

- Geometry drift: low-rank additive updates can distort the spectral norm and neuron angles, harming stability on complex reasoning tasks and long sequences.

- Overfitting under small data: without strong structural constraints, LoRA can easily memorize, especially in subject-driven image generation.

- Sensitivity to rank & placement: the right rank and target modules are task-specific; wrong choices often cause degradation vs full finetuning.

Orthogonal Finetuning (OFT), introduced earlier and extended in “Parameter-Efficient Orthogonal Finetuning via Butterfly Factorization” (ICLR 2024), takes a different approach. Instead of adding a delta, it rotates the weight space:

```
// Pretrained weight W₀ ∈ ℝ^{d×n}
// OFT / BOFT update:
W' = R · W₀
// R is orthogonal: Rᵀ R = I
```

This rotation preserves the pairwise angles and spectral norm of W₀, so much of the pretrained geometry (and thus knowledge) is retained. Empirically, the BOFT paper reports:

- Consistent improvements over LoRA and AdaLoRA on GLUE and MMLU using DeBERTaV3 and Llama-2.

- Better math reasoning on GSM8K and MATH with fewer trainable parameters.

- Superior subject-identity preservation and controllability in Stable Diffusion vs LoRA, while maintaining prompt-following ability.
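To make the geometry argument concrete, here is a small dependency-free sketch that compares an orthogonal update with an additive rank-1 update on a toy weight matrix. The matrices, the rotation angle, and the helper names are illustrative assumptions, not values from the paper; the columns of W0 stand in for neurons.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def column(m, j):
    """Extract column j of a matrix as a flat list."""
    return [row[j] for row in m]

def cosine(u, v):
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

# Toy pretrained weight W0 (d=3, n=2); each column plays the role of one neuron.
W0 = [[1.0, 0.5],
      [0.0, 1.0],
      [2.0, -1.0]]

# Orthogonal update: a rotation by 0.7 rad about the z-axis, so R^T R = I.
t = 0.7
R = [[math.cos(t), -math.sin(t), 0.0],
     [math.sin(t), math.cos(t), 0.0],
     [0.0, 0.0, 1.0]]
W_rot = matmul(R, W0)

# Additive rank-1 update in the LoRA style (r = 1): W0 + b a^T.
b_vec, a_vec = [0.5, -0.3, 0.2], [1.0, -1.0]
W_add = [[W0[i][j] + b_vec[i] * a_vec[j] for j in range(2)] for i in range(3)]

cos_before = cosine(column(W0, 0), column(W0, 1))
cos_rot = cosine(column(W_rot, 0), column(W_rot, 1))
cos_add = cosine(column(W_add, 0), column(W_add, 1))

print(f"cosine between neurons, pretrained: {cos_before:.4f}")
print(f"cosine after orthogonal update:     {cos_rot:.4f}")
print(f"cosine after additive update:       {cos_add:.4f}")
```

The rotated weights keep the same angle between neurons (and the same column norms), while the additive update is free to change both. Whether that change helps or hurts depends on the task; the point is only that the orthogonal update cannot make it.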

The challenge: a dense orthogonal matrix R ∈ ℝ^{d×d} normally has O(d²) parameters. OFT’s original block-diagonal trick reduces parameters but hurts expressiveness. BOFT solves this with butterfly factorization.

Butterfly factorization in BOFT

BOFT represents R as a product of sparse, structured orthogonal factors inspired by the Cooley–Tukey FFT butterfly network:

```
// BOFT orthogonal update
R(m, b) = Πᵢ B̃_b(d, kᵢ)
// Each B̃_b is a block-butterfly orthogonal component
// m = number of butterfly factors
// b = block size
```

- Parameter count: O(d log d) instead of O(d²) for a dense R.

- Dense effect: although each factor is sparse, their product is effectively dense and highly expressive.

- Identity init: factors are initialized as block identities (via Cayley transform), so BOFT is a true “no-op at start” adapter, like zero-initialized LoRA.
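The identity-initialization point can be checked by hand in the smallest case. The sketch below applies one common convention of the Cayley transform, Q = (I + S)(I − S)⁻¹, to a 2×2 skew-symmetric matrix S with a single trainable entry `a`. The function name `cayley_2x2` is hypothetical, and library implementations may use the mirrored convention (I − S)(I + S)⁻¹, which is equally orthogonal.

```python
def cayley_2x2(a):
    """Cayley transform Q = (I + S)(I - S)^-1 for S = [[0, a], [-a, 0]]."""
    # (I - S) = [[1, -a], [a, 1]] has determinant 1 + a^2, so it is always invertible.
    det = 1.0 + a * a
    inv = [[1.0 / det, a / det],
           [-a / det, 1.0 / det]]
    i_plus_s = [[1.0, a],
                [-a, 1.0]]
    return [[sum(i_plus_s[r][k] * inv[k][c] for k in range(2)) for c in range(2)]
            for r in range(2)]

def gram(q):
    """Q^T Q, which equals the identity when Q is orthogonal."""
    return [[sum(q[k][r] * q[k][c] for k in range(2)) for c in range(2)]
            for r in range(2)]

q_init = cayley_2x2(0.0)      # trainable entry at zero
q_trained = cayley_2x2(0.3)   # some nonzero value after training

print("Q at init:", q_init)
print("Q^T Q after training:", gram(q_trained))
```

With `a = 0` the transform returns the identity, so the adapted layer starts out identical to the pretrained one. Any other value of `a` still yields an orthogonal matrix, which is why the optimizer can move freely without leaving the set of rotations.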

Conceptually, LoRA moves you along a few learned directions in weight space; BOFT reorients the space with constrained rotations, keeping the backbone’s geometry intact while still allowing powerful adaptation.
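The "sparse factors, dense product" claim is easy to verify on sparsity patterns alone. The sketch below assumes the classic radix-2 butterfly layout from the FFT (row j touches columns j and j XOR stride), which corresponds to block size 2; it tracks only which entries can be nonzero and does not reproduce PEFT's exact permutation scheme.

```python
def butterfly_support(d, stride):
    """0/1 pattern of a radix-2 butterfly factor: row j touches columns j and j XOR stride."""
    return [[1 if c in (j, j ^ stride) else 0 for c in range(d)] for j in range(d)]

def pattern_product(a, b):
    """Support of a matrix product: which entries can be nonzero."""
    d = len(a)
    return [[1 if any(a[i][k] and b[k][j] for k in range(d)) else 0 for j in range(d)]
            for i in range(d)]

d = 8
factors = [butterfly_support(d, 2 ** i) for i in range(3)]  # strides 1, 2, 4

product = factors[0]
for f in factors[1:]:
    product = pattern_product(product, f)

nonzeros_per_factor = [sum(map(sum, f)) for f in factors]
nonzeros_in_product = sum(map(sum, product))

print("nonzeros per factor:", nonzeros_per_factor, "of", d * d)
print("nonzeros in product:", nonzeros_in_product, "of", d * d)
```

Each factor fills only two entries per row, yet after log₂(8) = 3 factors every input coordinate can reach every output coordinate. That is the mechanism behind the O(d log d) parameter count with dense connectivity.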

BOFT in Hugging Face PEFT (2025 status)

Hugging Face’s PEFT library (latest stable v0.18.0, released November 2025) ships BOFT as a first-class tuner. The official docs describe:

“Orthogonal Butterfly (BOFT) is a generic method designed for finetuning foundation models. It improves the parameter efficiency of the finetuning paradigm (OFT) by taking inspiration from Cooley–Tukey FFT, and shows favorable results across large vision transformers, large language models and text-to-image diffusion models.”

Hugging Face PEFT BOFT docs (updated 2024+)

Key PEFT components you’ll use:

- `peft.BOFTConfig`: defines butterfly depth, block size, target modules, dropout, etc.

- `peft.BOFTModel` / `peft.get_peft_model`: wraps a `transformers.PreTrainedModel` with BOFT adapters.

- Full compatibility with Transformers v5 (PEFT ≥ 0.18.0 is required).

| Method | Update form | Params (per d×n layer) | Geometry preservation | Typical use |
|---|---|---|---|---|
| Full finetuning | W' free | O(d·n) | No | Max performance, high cost |
| LoRA | W' = W + BA | O(d·r + n·r) | No | General PEFT baseline |
| OFT | W' = R·W, block diag | O(b·d) | Yes (per block) | Stable, moderate parameter count |
| BOFT | W' = R(m,b)·W, butterfly | O(d·m·(b−1)) | Yes (global) | Reasoning, structured vision, diffusion |
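To put numbers on the table, the following sketch counts free parameters for a single square layer. It assumes each orthogonal block is generated by a b×b skew-symmetric matrix with b(b−1)/2 free entries; the function names are hypothetical, and a real implementation may store more values than this (for example full b×b blocks or extra scaling vectors).

```python
def lora_params(d, n, r):
    """Low-rank factors B (d x r) and A (r x n)."""
    return r * (d + n)

def dense_orthogonal_params(d):
    """A d x d skew-symmetric generator has d(d-1)/2 free entries."""
    return d * (d - 1) // 2

def oft_params(d, b):
    """d/b diagonal blocks, each generated by a b x b skew-symmetric matrix."""
    return (d // b) * (b * (b - 1) // 2)

def boft_params(d, b, m):
    """m butterfly factors, each with the same block structure as one OFT factor."""
    return m * oft_params(d, b)

d = n = 4096
print("LoRA, r=16:        ", lora_params(d, n, 16))
print("dense orthogonal:  ", dense_orthogonal_params(d))
print("OFT, b=8:          ", oft_params(d, 8))
print("BOFT, b=8, m=2:    ", boft_params(d, 8, 2))
```

Under these assumptions, BOFT with b=8 and m=2 uses far fewer free parameters than a rank-16 LoRA on the same layer, and orders of magnitude fewer than a dense orthogonal matrix.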

As of late 2025, BOFT coexists with newer PEFT tuners (e.g., OSF, WaveFT, MiSS), but remains particularly attractive when you care about stability and geometry preservation more than raw adaptation capacity.

Core BOFT hyperparameters: what matters

The main BOFT configuration knobs in PEFT are:

- `boft_block_size` (int): block size `b` of the block-butterfly factors. Larger `b` → more capacity, more params.

- `boft_block_num` (int): alternative way to express blocks; often you only set `boft_block_size` and let BOFT derive the block count.

- `boft_n_butterfly_factor` (int): number of butterfly factors `m` multiplied per layer. Higher `m` → denser effective `R`, more expressivity and compute.

- `target_modules` (list[str]): names of `nn.Linear` modules to wrap (e.g., `"q_proj"`, `"k_proj"`, `"v_proj"`, `"o_proj"`, `"mlp.up_proj"`).

- `boft_dropout` (float): multiplicative dropout probability on butterfly blocks (a BOFT-specific regularizer).

- `bias` (str): usually `"boft_only"` or `"none"`; similar to LoRA’s bias handling.

- `layers_to_transform`, `layers_pattern`: restrict BOFT to specific layers (e.g., last N transformer blocks).

Empirical guidance (from the BOFT paper across LLMs, ViTs, SAM, and Stable Diffusion):

- LLMs (7–8B parameters): start with `boft_block_size=8`, `boft_n_butterfly_factor=2`, apply to `q/k/v` and MLP projections.

- Vision transformers (DINOv2-L, SAM decoder): similar `b=8`, `m=2–4`, across attention and MLP layers.

- Stable Diffusion UNet: use more depth for dense control (`m=4–6`) when you need strong controllability or subject fidelity.

The safe pattern is: start with small m (2) and moderate boft_block_size (8 or 16), then scale up m if underfitting.
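Before scaling up m, it is worth checking that the layer width can actually host that many factors. In the standard butterfly construction, the i-th factor mixes groups of b·2^(i−1) coordinates, so the width d that R acts on should be divisible by b·2^(m−1). The helper below is a hypothetical sanity check built on that assumption; it is not a PEFT function, and PEFT's own validation may be stricter or phrased differently.

```python
def butterfly_fits(d, block_size, n_factors):
    """True if width d splits evenly into groups of block_size * 2**(n_factors - 1)."""
    span = block_size * 2 ** (n_factors - 1)
    return d % span == 0

# (width, block size b, butterfly factors m); 320 is a width found in Stable Diffusion UNets.
candidates = [(4096, 8, 2), (320, 8, 4), (320, 8, 6)]
for d, b, m in candidates:
    status = "ok" if butterfly_fits(d, b, m) else "does not divide evenly"
    print(f"d={d}, b={b}, m={m}: {status}")
```

If a combination fails this check for any targeted layer, either lower m, pick a smaller block size, or exclude that layer from `target_modules`.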

Implementing BOFT on an LLM with PEFT

This section walks through a practical BOFT finetuning setup for a causal LLM using Transformers v5 and PEFT ≥ 0.18.0.

Environment and installation

```bash
pip install "transformers>=5.0.0" "peft>=0.18.0" accelerate datasets bitsandbytes
```

Use Python 3.10+ (PEFT dropped 3.9 support in v0.18.0).

Step 1: Load base model and tokenizer

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

base_model_name = "meta-llama/Llama-3.1-8B-Instruct"  # example, check HF for latest

tokenizer = AutoTokenizer.from_pretrained(base_model_name, use_fast=True)
tokenizer.pad_token = tokenizer.eos_token

model = AutoModelForCausalLM.from_pretrained(
    base_model_name,
    device_map="auto",
    torch_dtype="auto",
)
model.config.use_cache = False  # recommended for training
```

Step 2: Define BOFTConfig

We’ll target attention and MLP projections, a common pattern for reasoning tasks.

```python
from peft import BOFTConfig, get_peft_model, TaskType

boft_config = BOFTConfig(
    task_type=TaskType.CAUSAL_LM,
    boft_block_size=8,           # block size b
    boft_n_butterfly_factor=2,   # factors m
    target_modules=[
        "q_proj", "k_proj", "v_proj",
        "o_proj",
        "up_proj", "down_proj", "gate_proj",
    ],
    boft_dropout=0.05,
    bias="boft_only",
    modules_to_save=["lm_head"],  # keeps the full output head trainable and saved with the adapter
    # layers_to_transform / layers_pattern are left unset so every matching layer is adapted;
    # set both together if you want to restrict BOFT to specific layer indices
    inference_mode=False,
)
```

Step 3: Wrap model with BOFT

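```python
model = get_peft_model(model, boft_config)
model.print_trainable_parameters()
```

You should see only a small fraction of total parameters marked as trainable. The BOFT factors themselves account for roughly 0.1–0.3% in typical settings; because `modules_to_save` includes `lm_head` above, the full output head is also counted as trainable, which pushes the reported percentage noticeably higher.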
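If you want to see where the trainable parameters come from, a small helper can split the count into BOFT factors and everything else. This is a sketch that assumes BOFT parameter names contain the substring "boft" (as in `boft_R` and `boft_s`); `summarize_trainable` is a hypothetical helper, not part of PEFT, and it only relies on the standard `named_parameters()` interface.

```python
from collections import defaultdict

def summarize_trainable(model):
    """Group parameter counts into frozen, BOFT factors, and other trainable weights."""
    totals = defaultdict(int)
    for name, param in model.named_parameters():
        if not param.requires_grad:
            totals["frozen"] += param.numel()
        elif "boft" in name:
            # Assumption: adapter tensors carry "boft" in their parameter names.
            totals["boft"] += param.numel()
        else:
            # Anything else that trains, e.g. heads listed in modules_to_save or biases.
            totals["other_trainable"] += param.numel()
    return dict(totals)
```

Calling `summarize_trainable(model)` on the wrapped model from Step 3 makes it easy to tell whether the reported percentage is dominated by the adapter itself or by extra modules you chose to train in full.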

Step 4: Training loop (Trainer or Accelerate)

Using transformers.Trainer for simplicity:

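```python
from datasets import load_dataset
from transformers import DataCollatorForSeq2Seq, Trainer, TrainingArguments

dataset = load_dataset("openai/gsm8k", "main")  # reasoning example

def format_example(example):
    q = example["question"].strip()
    a = example["answer"].strip()
    prompt = f"Question: {q}\nAnswer:"
    text = prompt + " " + a
    tokens = tokenizer(text, truncation=True, max_length=1024)
    # Causal LM objective: labels mirror the inputs (the model shifts them internally)
    tokens["labels"] = tokens["input_ids"].copy()
    return tokens

train_ds = dataset["train"].map(format_example, remove_columns=dataset["train"].column_names)

# Examples differ in length, so pad each batch dynamically;
# padded label positions are set to -100 and ignored by the loss
data_collator = DataCollatorForSeq2Seq(tokenizer, padding=True, label_pad_token_id=-100)

training_args = TrainingArguments(
    output_dir="./boft-llm-gsm8k",
    per_device_train_batch_size=2,
    gradient_accumulation_steps=16,
    learning_rate=1e-4,
    num_train_epochs=3,
    lr_scheduler_type="cosine",
    warmup_ratio=0.03,
    bf16=True,  # Llama 3.1 weights ship in bfloat16; use fp16=True only on GPUs without bf16 support
    logging_steps=20,
    save_strategy="epoch",
    eval_strategy="no",  # called evaluation_strategy in older Transformers releases
)

trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=train_ds,
    data_collator=data_collator,
)

trainer.train()
```

BOFT tends to be numerically stable; you’ll usually see smoother loss curves than aggressive LoRA on small reasoning datasets.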

Step 5: Inference and merging

At inference time you can either keep BOFT as an adapter (fast to swap) or merge the orthogonal factors into the base weights to eliminate any runtime overhead:

```python
# Save adapter
model.save_pretrained("./boft_adapter")

# Load later
from peft import PeftModel

base_model = AutoModelForCausalLM.from_pretrained(base_model_name, device_map="auto")
boft_model = PeftModel.from_pretrained(base_model, "./boft_adapter")

# (Optional) merge BOFT weights into base for deployment
boft_model = boft_model.merge_and_unload()
```

Merging turns the multiplicative rotations into plain weights; inference speed matches the original base model.
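Why merging is lossless can be shown on a toy layer. The sketch below assumes the convention z = W'ᵀx with W' = R·W₀, so the adapter path rotates the input by Rᵀ before the frozen weights, while the merged path precomputes R·W₀ once. The matrices and helper names are illustrative only.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(m):
    return [list(row) for row in zip(*m)]

def matvec(m, x):
    return [sum(w * v for w, v in zip(row, x)) for row in m]

W0 = [[1.0, 2.0],
      [3.0, 4.0]]          # frozen pretrained weight
R = [[0.6, -0.8],
     [0.8, 0.6]]           # orthogonal: R^T R = I
x = [1.0, -1.0]            # one input vector

# Adapter path: rotate the input, then apply the frozen weights (two matrix products per call).
z_adapter = matvec(transpose(W0), matvec(transpose(R), x))

# Merged path: fold R into the weights once, then run a plain linear layer.
W_merged = matmul(R, W0)
z_merged = matvec(transpose(W_merged), x)

print("adapter path:", z_adapter)
print("merged path: ", z_merged)
```

Both paths produce the same output up to floating-point rounding, but the merged layer needs only one matrix product at inference time, exactly like the base model.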

BOFT for diffusion: subject-driven & controllable generation

BOFT is particularly compelling in two diffusion scenarios where LoRA often struggles:

- Subject-driven generation (DreamBooth-style): preserve a subject’s identity across new prompts.

- Controllable generation (ControlNet-style): enforce spatial controls (landmarks, segmentation maps) while maintaining style and prompt fidelity.

The BOFT paper shows that, on Stable Diffusion v2.1:

- BOFT achieves lower landmark error than LoRA for face-landmark control under similar parameter budgets.

- In subject-driven tasks, BOFT captures identity characteristics more robustly than LoRA, while OFT alone slightly lags in prompt fidelity.

- Increasing the number of butterfly factors (`m`) smooths and stabilizes training, leading to better controllability and interpolation-friendly weight spaces.

Practical BOFT diffusion pattern with PEFT

- Load a Stable Diffusion UNet via `diffusers` and freeze it.

- Use `BOFTConfig` targeting cross-attention and key/value/query projections in the UNet.

- Fine-tune on your DreamBooth or ControlNet-style dataset with a conservative LR (e.g. 1e-5–3e-5) and moderate `boft_block_size`, `boft_n_butterfly_factor=4–6` for strong control.

- Merge adapters into the UNet once trained; inference remains as fast as the original pipeline.

The PEFT BOFT docs include examples for image models (e.g., DINOv2 and SAM); adapting these patterns to diffusion UNets is straightforward once you identify the target nn.Linear layers.
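One way to identify those target layers is to check candidate name suffixes against the module names of your UNet before building the config. The sketch below assumes the attention projections follow the diffusers naming (`to_q`, `to_k`, `to_v`, `to_out.0`); `count_target_matches` is a hypothetical helper, and the sample names are illustrative, so verify them against your own checkpoint.

```python
def count_target_matches(module_names, suffixes=("to_q", "to_k", "to_v", "to_out.0")):
    """Count how many module names end with each candidate target suffix."""
    return {
        s: sum(1 for n in module_names if n == s or n.endswith("." + s))
        for s in suffixes
    }

# Illustrative names only; in practice use: [name for name, _ in unet.named_modules()]
sample_names = [
    "conv_in",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn1.to_q",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn2.to_k",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn2.to_v",
    "down_blocks.0.attentions.0.transformer_blocks.0.attn2.to_out.0",
    "down_blocks.0.attentions.0.transformer_blocks.0.ff.net.0.proj",
]

matches = count_target_matches(sample_names)
print(matches)
```

Suffixes with a nonzero count are reasonable candidates for `target_modules` in a `BOFTConfig`; a zero count usually means the name is spelled differently in your model and the adapter would silently skip it.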

Tuning strategy: when BOFT beats LoRA

Based on current literature and PEFT’s method-comparison benchmarks, BOFT is a strong LoRA alternative when:

- Your task stresses reasoning fidelity (GSM8K, MATH, multi-step CoT) rather than just style transfer.

- You care about preserving base capabilities (general chat, broad-domain knowledge) while specializing.

- You need robust subject identity or spatial control in diffusion without brittle overfitting.

Practical heuristics:

- If a LoRA run shows degraded general performance or unstable loss, try BOFT with similar parameter budget.

- Start with BOFT on the same target modules you would use for LoRA. If underfitting, increase `boft_n_butterfly_factor` before increasing `boft_block_size`.

- Use multiplicative dropout (`boft_dropout`) for small datasets; it tends to regularize without harming geometry.
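The reason multiplicative dropout leaves geometry intact can be sketched in a few lines. The version below assumes that a dropped block is replaced by the identity, so the overall factor stays orthogonal; each block is dropped independently with probability p. This is an illustration of the idea, not PEFT's implementation, which may select blocks differently.

```python
import random

def identity(b):
    """b x b identity matrix as a list of rows."""
    return [[1.0 if i == j else 0.0 for j in range(b)] for i in range(b)]

def multiplicative_dropout(blocks, p, rng, training=True):
    """Replace each orthogonal block with the identity with probability p (training only)."""
    if not training or p <= 0.0:
        return blocks
    return [identity(len(blk)) if rng.random() < p else blk for blk in blocks]

rng = random.Random(0)
rotation = [[0.6, -0.8], [0.8, 0.6]]      # a 2 x 2 orthogonal block
blocks = [rotation for _ in range(4)]     # block-diagonal factor with four blocks

dropped = multiplicative_dropout(blocks, p=0.5, rng=rng)
kept = sum(1 for blk in dropped if blk == rotation)
print(f"{kept} of {len(blocks)} blocks kept their learned rotation this step")
```

Every block is either a learned rotation or the identity, so the product remains orthogonal on every training step. That differs from additive dropout on a low-rank update, where the perturbed weights have no such guarantee.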

One caveat: training with BOFT is somewhat more compute-heavy than a similarly sized LoRA, because multiple orthogonal factors are multiplied on every forward pass. After merging, inference carries no extra cost.

Conclusion: going beyond LoRA with BOFT

Butterfly Orthogonal Finetuning offers a principled, geometry-preserving alternative to LoRA that is now production-ready via Hugging Face PEFT. By representing weight updates as structured orthogonal rotations instead of additive low-rank deltas, BOFT:

- Preserves spectral norms and neuron angles, stabilizing finetuning.

- Achieves strong performance on complex reasoning and vision tasks with O(d log d) parameters.

- Improves controllability and identity fidelity in diffusion models compared to LoRA.

To adopt BOFT today:

- Upgrade to PEFT ≥ 0.18.0 and Transformers v5+.

- Start from the provided `BOFTConfig` templates, targeting attention and MLP layers.

- Use BOFT for tasks where LoRA shows instability, forgetfulness, or geometry drift, especially in LLM reasoning and subject-driven image generation.

As PEFT continues to integrate advanced tuners (OSF, WaveFT, MiSS, and more), BOFT stands out as a robust, theoretically grounded option whenever preserving your model’s core knowledge and geometric structure is more important than raw adaptation freedom.